Antti Oulasvirta

Department of Communications and Networking, Aalto University, Finland

Modeling Trial-and-Error Navigation With a Sequential Decision Model of Information Scent

Mar 12, 2026Abstract:Users often struggle to locate an item within an information architecture, particularly when links are ambiguous or deeply nested in hierarchies. Information scent has been used to explain why users select incorrect links, but this concept assumes that users see all available links before deciding. In practice, users frequently select a link too quickly, overlook relevant cues, and then rely on backtracking when errors occur. We extend the concept of information scent by framing navigation as a sequential decision-making problem under memory constraints. Specifically, we assume that users do not scan entire pages but instead inspect strategically, looking "just enough" to find the target given their time budget. To choose which item to inspect next, they consider both local (this page) and global (site) scent; however, both are constrained by memory. Trying to avoid wasting time, they occasionally choose the wrong links without inspecting everything on a page. Comparisons with empirical data show that our model replicates key navigation behaviors: premature selections, wrong turns, and recovery from backtracking. We conclude that trial-and-error behavior is well explained by information scent when accounting for the sequential and bounded characteristics of the navigation problem.

Simulation-based Optimization for Augmented Reading

Feb 26, 2026Abstract:Augmented reading systems aim to adapt text presentation to improve comprehension and task performance, yet existing approaches rely heavily on heuristics, opaque data-driven models, or repeated human involvement in the design loop. We propose framing augmented reading as a simulation-based optimization problem grounded in resource-rational models of human reading. These models instantiate a simulated reader that allocates limited cognitive resources, such as attention, memory, and time under task demands, enabling systematic evaluation of text user interfaces. We introduce two complementary optimization pipelines: an offline approach that explores design alternatives using simulated readers, and an online approach that personalizes reading interfaces in real time using ongoing interaction data. Together, this perspective enables adaptive, explainable, and scalable augmented reading design without relying solely on human testing.

Adaptive Prompt Elicitation for Text-to-Image Generation

Feb 04, 2026Abstract:Aligning text-to-image generation with user intent remains challenging, for users who provide ambiguous inputs and struggle with model idiosyncrasies. We propose Adaptive Prompt Elicitation (APE), a technique that adaptively asks visual queries to help users refine prompts without extensive writing. Our technical contribution is a formulation of interactive intent inference under an information-theoretic framework. APE represents latent intent as interpretable feature requirements using language model priors, adaptively generates visual queries, and compiles elicited requirements into effective prompts. Evaluation on IDEA-Bench and DesignBench shows that APE achieves stronger alignment with improved efficiency. A user study with challenging user-defined tasks demonstrates 19.8% higher alignment without workload overhead. Our work contributes a principled approach to prompting that, for general users, offers an effective and efficient complement to the prevailing prompt-based interaction paradigm with text-to-image models.

Cost-Aware Bayesian Optimization for Prototyping Interactive Devices

Feb 02, 2026Abstract:Deciding which idea is worth prototyping is a central concern in iterative design. A prototype should be produced when the expected improvement is high and the cost is low. However, this is hard to decide, because costs can vary drastically: a simple parameter tweak may take seconds, while fabricating hardware consumes material and energy. Such asymmetries, can discourage a designer from exploring the design space. In this paper, we present an extension of cost-aware Bayesian optimization to account for diverse prototyping costs. The method builds on the power of Bayesian optimization and requires only a minimal modification to the acquisition function. The key idea is to use designer-estimated costs to guide sampling toward more cost-effective prototypes. In technical evaluations, the method achieved comparable utility to a cost-agnostic baseline while requiring only ${\approx}70\%$ of the cost; under strict budgets, it outperformed the baseline threefold. A within-subjects study with 12 participants in a realistic joystick design task demonstrated similar benefits. These results show that accounting for prototyping costs can make Bayesian optimization more compatible with real-world design projects.

Simulating Human Audiovisual Search Behavior

Feb 02, 2026Abstract:Locating a target based on auditory and visual cues$\unicode{x2013}$such as finding a car in a crowded parking lot or identifying a speaker in a virtual meeting$\unicode{x2013}$requires balancing effort, time, and accuracy under uncertainty. Existing models of audiovisual search often treat perception and action in isolation, overlooking how people adaptively coordinate movement and sensory strategies. We present Sensonaut, a computational model of embodied audiovisual search. The core assumption is that people deploy their body and sensory systems in ways they believe will most efficiently improve their chances of locating a target, trading off time and effort under perceptual constraints. Our model formulates this as a resource-rational decision-making problem under partial observability. We validate the model against newly collected human data, showing that it reproduces both adaptive scaling of search time and effort under task complexity, occlusion, and distraction, and characteristic human errors. Our simulation of human-like resource-rational search informs the design of audiovisual interfaces that minimize search cost and cognitive load.

Log2Motion: Biomechanical Motion Synthesis from Touch Logs

Jan 28, 2026Abstract:Touch data from mobile devices are collected at scale but reveal little about the interactions that produce them. While biomechanical simulations can illuminate motor control processes, they have not yet been developed for touch interactions. To close this gap, we propose a novel computational problem: synthesizing plausible motion directly from logs. Our key insight is a reinforcement learning-driven musculoskeletal forward simulation that generates biomechanically plausible motion sequences consistent with events recorded in touch logs. We achieve this by integrating a software emulator into a physics simulator, allowing biomechanical models to manipulate real applications in real-time. Log2Motion produces rich syntheses of user movements from touch logs, including estimates of motion, speed, accuracy, and effort. We assess the plausibility of generated movements by comparing against human data from a motion capture study and prior findings, and demonstrate Log2Motion in a large-scale dataset. Biomechanical motion synthesis provides a new way to understand log data, illuminating the ergonomics and motor control underlying touch interactions.

Controllable GUI Exploration

Feb 05, 2025

Abstract:During the early stages of interface design, designers need to produce multiple sketches to explore a design space. Design tools often fail to support this critical stage, because they insist on specifying more details than necessary. Although recent advances in generative AI have raised hopes of solving this issue, in practice they fail because expressing loose ideas in a prompt is impractical. In this paper, we propose a diffusion-based approach to the low-effort generation of interface sketches. It breaks new ground by allowing flexible control of the generation process via three types of inputs: A) prompts, B) wireframes, and C) visual flows. The designer can provide any combination of these as input at any level of detail, and will get a diverse gallery of low-fidelity solutions in response. The unique benefit is that large design spaces can be explored rapidly with very little effort in input-specification. We present qualitative results for various combinations of input specifications. Additionally, we demonstrate that our model aligns more accurately with these specifications than other models.

AgentForge: A Flexible Low-Code Platform for Reinforcement Learning Agent Design

Oct 25, 2024

Abstract:Developing a reinforcement learning (RL) agent often involves identifying effective values for a large number of parameters, covering the policy, reward function, environment, and the agent's internal architecture, such as parameters controlling how the peripheral vision and memory modules work. Critically, since these parameters are interrelated in complex ways, optimizing them can be viewed as a black box optimization problem, which is especially challenging for non-experts. Although existing optimization-as-a-service platforms (e.g., Vizier, Optuna) can handle such problems, they are impractical for RL systems, as users must manually map each parameter to different components, making the process cumbersome and error-prone. They also require deep understanding of the optimization process, limiting their application outside ML experts and restricting access for fields like cognitive science, which models human decision-making. To tackle these challenges, we present AgentForge, a flexible low-code framework to optimize any parameter set across an RL system. AgentForge allows the user to perform individual or joint optimization of parameter sets. An optimization problem can be defined in a few lines of code and handed to any of the interfaced optimizers. We evaluated its performance in a challenging vision-based RL problem. AgentForge enables practitioners to develop RL agents without requiring extensive coding or deep expertise in optimization.

Graph4GUI: Graph Neural Networks for Representing Graphical User Interfaces

Apr 21, 2024

Abstract:Present-day graphical user interfaces (GUIs) exhibit diverse arrangements of text, graphics, and interactive elements such as buttons and menus, but representations of GUIs have not kept up. They do not encapsulate both semantic and visuo-spatial relationships among elements. To seize machine learning's potential for GUIs more efficiently, Graph4GUI exploits graph neural networks to capture individual elements' properties and their semantic-visuo-spatial constraints in a layout. The learned representation demonstrated its effectiveness in multiple tasks, especially generating designs in a challenging GUI autocompletion task, which involved predicting the positions of remaining unplaced elements in a partially completed GUI. The new model's suggestions showed alignment and visual appeal superior to the baseline method and received higher subjective ratings for preference. Furthermore, we demonstrate the practical benefits and efficiency advantages designers perceive when utilizing our model as an autocompletion plug-in.

EyeFormer: Predicting Personalized Scanpaths with Transformer-Guided Reinforcement Learning

Apr 15, 2024

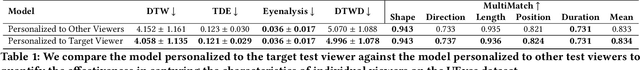

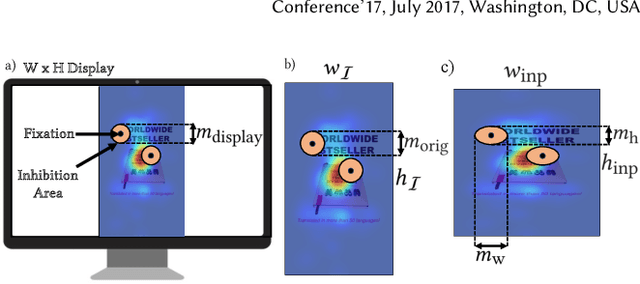

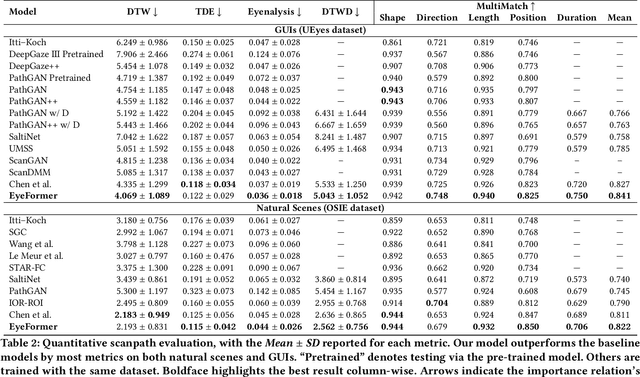

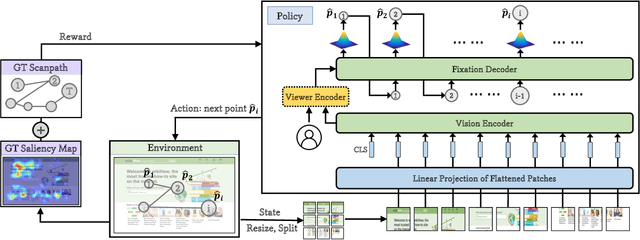

Abstract:From a visual perception perspective, modern graphical user interfaces (GUIs) comprise a complex graphics-rich two-dimensional visuospatial arrangement of text, images, and interactive objects such as buttons and menus. While existing models can accurately predict regions and objects that are likely to attract attention ``on average'', so far there is no scanpath model capable of predicting scanpaths for an individual. To close this gap, we introduce EyeFormer, which leverages a Transformer architecture as a policy network to guide a deep reinforcement learning algorithm that controls gaze locations. Our model has the unique capability of producing personalized predictions when given a few user scanpath samples. It can predict full scanpath information, including fixation positions and duration, across individuals and various stimulus types. Additionally, we demonstrate applications in GUI layout optimization driven by our model. Our software and models will be publicly available.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge