John J. Dudley

Swarm manipulation: An efficient and accurate technique for multi-object manipulation in virtual reality

Oct 24, 2024Abstract:The theory of swarm control shows promise for controlling multiple objects, however, scalability is hindered by cost constraints, such as hardware and infrastructure. Virtual Reality (VR) can overcome these limitations, but research on swarm interaction in VR is limited. This paper introduces a novel Swarm Manipulation interaction technique and compares it with two baseline techniques: Virtual Hand and Controller (ray-casting). We evaluated these techniques in a user study ($N$ = 12) in three tasks (selection, rotation, and resizing) across five conditions. Our results indicate that Swarm Manipulation yielded superior performance, with significantly faster speeds in most conditions across the three tasks. It notably reduced resizing size deviations but introduced a trade-off between speed and accuracy in the rotation task. Additionally, we conducted a follow-up user study ($N$ = 6) using Swarm Manipulation in two complex VR scenarios and obtained insights through semi-structured interviews, shedding light on optimized swarm control mechanisms and perceptual changes induced by this interaction paradigm. These results demonstrate the potential of the Swarm Manipulation technique to enhance the usability and user experience in VR compared to conventional manipulation techniques. In future studies, we aim to understand and improve swarm interaction via internal swarm particle cooperation.

Promptor: A Conversational and Autonomous Prompt Generation Agent for Intelligent Text Entry Techniques

Oct 15, 2023Abstract:Text entry is an essential task in our day-to-day digital interactions. Numerous intelligent features have been developed to streamline this process, making text entry more effective, efficient, and fluid. These improvements include sentence prediction and user personalization. However, as deep learning-based language models become the norm for these advanced features, the necessity for data collection and model fine-tuning increases. These challenges can be mitigated by harnessing the in-context learning capability of large language models such as GPT-3.5. This unique feature allows the language model to acquire new skills through prompts, eliminating the need for data collection and fine-tuning. Consequently, large language models can learn various text prediction techniques. We initially showed that, for a sentence prediction task, merely prompting GPT-3.5 surpassed a GPT-2 backed system and is comparable with a fine-tuned GPT-3.5 model, with the latter two methods requiring costly data collection, fine-tuning and post-processing. However, the task of prompting large language models to specialize in specific text prediction tasks can be challenging, particularly for designers without expertise in prompt engineering. To address this, we introduce Promptor, a conversational prompt generation agent designed to engage proactively with designers. Promptor can automatically generate complex prompts tailored to meet specific needs, thus offering a solution to this challenge. We conducted a user study involving 24 participants creating prompts for three intelligent text entry tasks, half of the participants used Promptor while the other half designed prompts themselves. The results show that Promptor-designed prompts result in a 35% increase in similarity and 22% in coherence over those by designers.

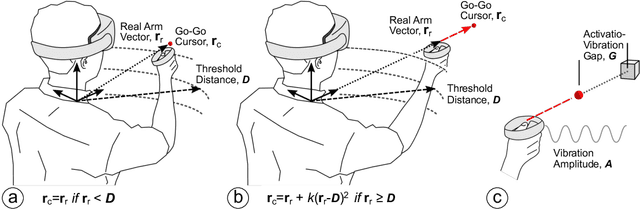

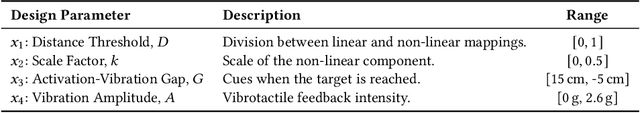

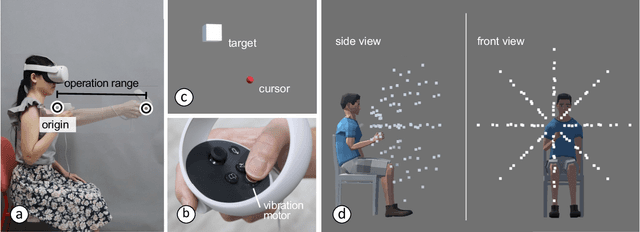

Investigating Positive and Negative Qualities of Human-in-the-Loop Optimization for Designing Interaction Techniques

Apr 15, 2022

Abstract:Designers reportedly struggle with design optimization tasks where they are asked to find a combination of design parameters that maximizes a given set of objectives. In HCI, design optimization problems are often exceedingly complex, involving multiple objectives and expensive empirical evaluations. Model-based computational design algorithms assist designers by generating design examples during design, however they assume a model of the interaction domain. Black box methods for assistance, on the other hand, can work with any design problem. However, virtually all empirical studies of this human-in-the-loop approach have been carried out by either researchers or end-users. The question stands out if such methods can help designers in realistic tasks. In this paper, we study Bayesian optimization as an algorithmic method to guide the design optimization process. It operates by proposing to a designer which design candidate to try next, given previous observations. We report observations from a comparative study with 40 novice designers who were tasked to optimize a complex 3D touch interaction technique. The optimizer helped designers explore larger proportions of the design space and arrive at a better solution, however they reported lower agency and expressiveness. Designers guided by an optimizer reported lower mental effort but also felt less creative and less in charge of the progress. We conclude that human-in-the-loop optimization can support novice designers in cases where agency is not critical.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge