Antonello Ceravola

HyperGraphOS: A Meta Operating System for Science and Engineering

Dec 06, 2024

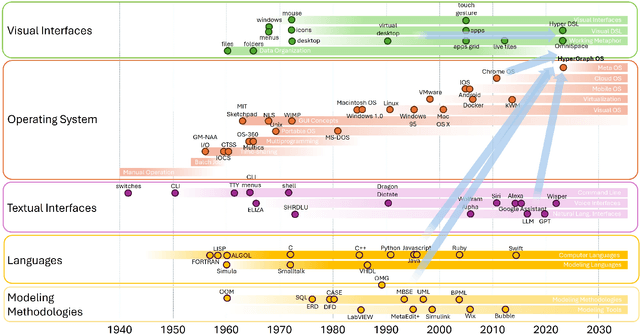

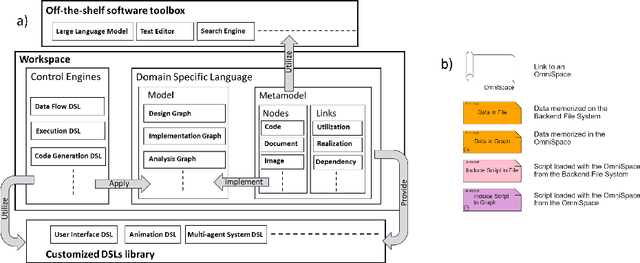

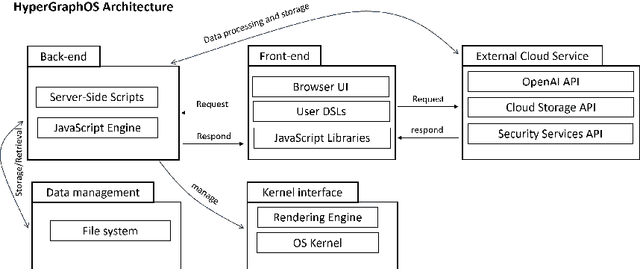

Abstract:This paper presents HyperGraphOS, an innovative Operating System designed for the scientific and engineering domains. It combines model based engineering, graph modeling, data containers, and computational tools, offering users a dynamic workspace for creating and managing complex models represented as customizable graphs. Using a web based architecture, HyperGraphOS requires only a modern browser to organize knowledge, documents, and content into interconnected models. Domain Specific Languages drive workspace navigation, code generation, AI integration, and process organization.The platform models function as both visual drawings and data structures, enabling dynamic modifications and inspection, both interactively and programmatically. HyperGraphOS was evaluated across various domains, including virtual avatars, robotic task planning using Large Language Models, and meta modeling for feature based code development. Results show significant improvements in flexibility, data management, computation, and document handling.

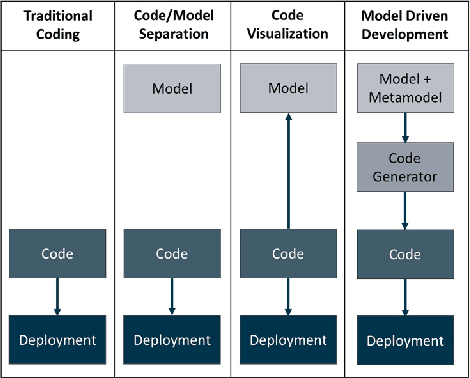

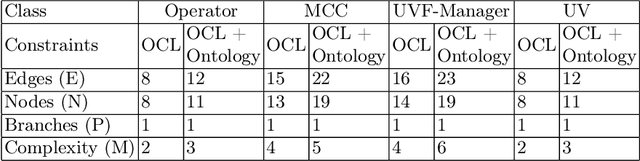

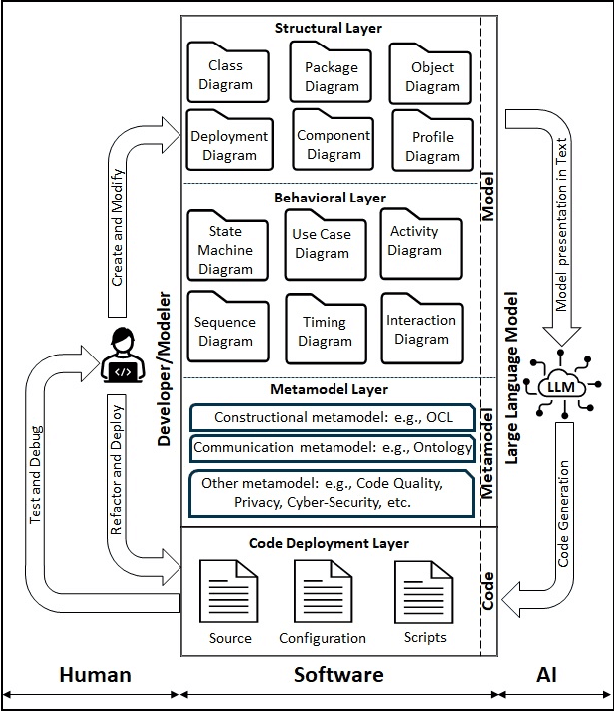

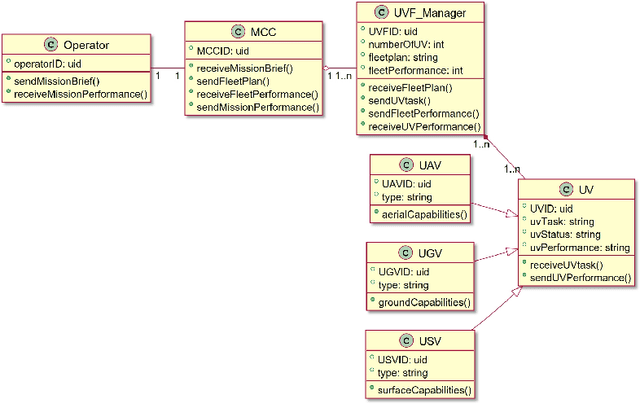

LLM as a code generator in Agile Model Driven Development

Oct 24, 2024

Abstract:Leveraging Large Language Models (LLM) like GPT4 in the auto generation of code represents a significant advancement, yet it is not without its challenges. The ambiguity inherent in natural language descriptions of software poses substantial obstacles to generating deployable, structured artifacts. This research champions Model Driven Development (MDD) as a viable strategy to overcome these challenges, proposing an Agile Model Driven Development (AMDD) approach that employs GPT4 as a code generator. This approach enhances the flexibility and scalability of the code auto generation process and offers agility that allows seamless adaptation to changes in models or deployment environments. We illustrate this by modeling a multi agent Unmanned Vehicle Fleet (UVF) system using the Unified Modeling Language (UML), significantly reducing model ambiguity by integrating the Object Constraint Language (OCL) for code structure meta modeling, and the FIPA ontology language for communication semantics meta modeling. Applying GPT4 auto generation capabilities yields Java and Python code that is compatible with the JADE and PADE frameworks, respectively. Our thorough evaluation of the auto generated code verifies its alignment with expected behaviors and identifies enhancements in agent interactions. Structurally, we assessed the complexity of code derived from a model constrained solely by OCL meta models, against that influenced by both OCL and FIPA ontology meta models. The results indicate that the ontology constrained meta model produces inherently more complex code, yet its cyclomatic complexity remains within manageable levels, suggesting that additional meta model constraints can be incorporated without exceeding the high risk threshold for complexity.

Dialogue You Can Trust: Human and AI Perspectives on Generated Conversations

Sep 03, 2024

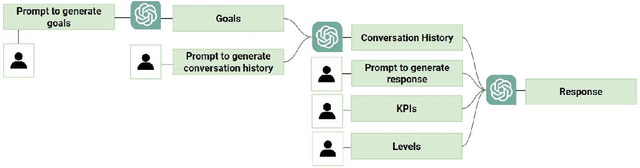

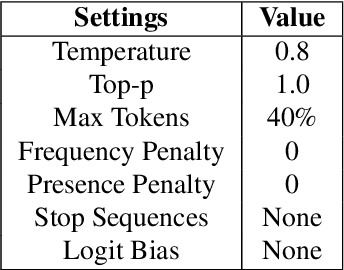

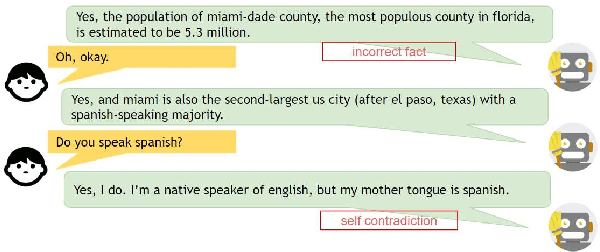

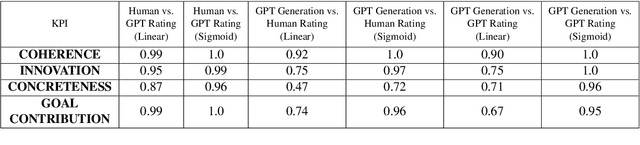

Abstract:As dialogue systems and chatbots increasingly integrate into everyday interactions, the need for efficient and accurate evaluation methods becomes paramount. This study explores the comparative performance of human and AI assessments across a range of dialogue scenarios, focusing on seven key performance indicators (KPIs): Coherence, Innovation, Concreteness, Goal Contribution, Commonsense Contradiction, Incorrect Fact, and Redundancy. Utilizing the GPT-4o API, we generated a diverse dataset of conversations and conducted a two-part experimental analysis. In Experiment 1, we evaluated multi-party conversations on Coherence, Innovation, Concreteness, and Goal Contribution, revealing that GPT models align closely with human judgments. Notably, both human and AI evaluators exhibited a tendency towards binary judgment rather than linear scaling, highlighting a shared challenge in these assessments. Experiment 2 extended the work of Finch et al. (2023) by focusing on dyadic dialogues and assessing Commonsense Contradiction, Incorrect Fact, and Redundancy. The results indicate that while GPT-4o demonstrates strong performance in maintaining factual accuracy and commonsense reasoning, it still struggles with reducing redundancy and self-contradiction. Our findings underscore the potential of GPT models to closely replicate human evaluation in dialogue systems, while also pointing to areas for improvement. This research offers valuable insights for advancing the development and implementation of more refined dialogue evaluation methodologies, contributing to the evolution of more effective and human-like AI communication tools.

Introducing Brain-like Concepts to Embodied Hand-crafted Dialog Management System

Jun 13, 2024Abstract:Along with the development of chatbot, language models and speech technologies, there is a growing possibility and interest of creating systems able to interface with humans seamlessly through natural language or directly via speech. In this paper, we want to demonstrate that placing the research on dialog system in the broader context of embodied intelligence allows to introduce concepts taken from neurobiology and neuropsychology to define behavior architecture that reconcile hand-crafted design and artificial neural network and open the gate to future new learning approaches like imitation or learning by instruction. To do so, this paper presents a neural behavior engine that allows creation of mixed initiative dialog and action generation based on hand-crafted models using a graphical language. A demonstration of the usability of such brain-like inspired architecture together with a graphical dialog model is described through a virtual receptionist application running on a semi-public space.

Generating consistent PDDL domains with Large Language Models

Apr 11, 2024Abstract:Large Language Models (LLMs) are capable of transforming natural language domain descriptions into plausibly looking PDDL markup. However, ensuring that actions are consistent within domains still remains a challenging task. In this paper we present a novel concept to significantly improve the quality of LLM-generated PDDL models by performing automated consistency checking during the generation process. Although the proposed consistency checking strategies still can't guarantee absolute correctness of generated models, they can serve as valuable source of feedback reducing the amount of correction efforts expected from a human in the loop. We demonstrate the capabilities of our error detection approach on a number of classical and custom planning domains (logistics, gripper, tyreworld, household, pizza).

Large Language Models for Multi-Modal Human-Robot Interaction

Jan 26, 2024

Abstract:This paper presents an innovative large language model (LLM)-based robotic system for enhancing multi-modal human-robot interaction (HRI). Traditional HRI systems relied on complex designs for intent estimation, reasoning, and behavior generation, which were resource-intensive. In contrast, our system empowers researchers and practitioners to regulate robot behavior through three key aspects: providing high-level linguistic guidance, creating "atomics" for actions and expressions the robot can use, and offering a set of examples. Implemented on a physical robot, it demonstrates proficiency in adapting to multi-modal inputs and determining the appropriate manner of action to assist humans with its arms, following researchers' defined guidelines. Simultaneously, it coordinates the robot's lid, neck, and ear movements with speech output to produce dynamic, multi-modal expressions. This showcases the system's potential to revolutionize HRI by shifting from conventional, manual state-and-flow design methods to an intuitive, guidance-based, and example-driven approach.

CoPAL: Corrective Planning of Robot Actions with Large Language Models

Oct 11, 2023Abstract:In the pursuit of fully autonomous robotic systems capable of taking over tasks traditionally performed by humans, the complexity of open-world environments poses a considerable challenge. Addressing this imperative, this study contributes to the field of Large Language Models (LLMs) applied to task and motion planning for robots. We propose a system architecture that orchestrates a seamless interplay between multiple cognitive levels, encompassing reasoning, planning, and motion generation. At its core lies a novel replanning strategy that handles physically grounded, logical, and semantic errors in the generated plans. We demonstrate the efficacy of the proposed feedback architecture, particularly its impact on executability, correctness, and time complexity via empirical evaluation in the context of a simulation and two intricate real-world scenarios: blocks world, barman and pizza preparation.

Analysis of ChatGPT on Source Code

Jun 06, 2023

Abstract:This paper explores the use of Large Language Models (LLMs) and in particular ChatGPT in programming, source code analysis, and code generation. LLMs and ChatGPT are built using machine learning and artificial intelligence techniques, and they offer several benefits to developers and programmers. While these models can save time and provide highly accurate results, they are not yet advanced enough to replace human programmers entirely. The paper investigates the potential applications of LLMs and ChatGPT in various areas, such as code creation, code documentation, bug detection, refactoring, and more. The paper also suggests that the usage of LLMs and ChatGPT is expected to increase in the future as they offer unparalleled benefits to the programming community.

A Glimpse in ChatGPT Capabilities and its impact for AI research

May 10, 2023Abstract:Large language models (LLMs) have recently become a popular topic in the field of Artificial Intelligence (AI) research, with companies such as Google, Amazon, Facebook, Amazon, Tesla, and Apple (GAFA) investing heavily in their development. These models are trained on massive amounts of data and can be used for a wide range of tasks, including language translation, text generation, and question answering. However, the computational resources required to train and run these models are substantial, and the cost of hardware and electricity can be prohibitive for research labs that do not have the funding and resources of the GAFA. In this paper, we will examine the impact of LLMs on AI research. The pace at which such models are generated as well as the range of domains covered is an indication of the trend which not only the public but also the scientific community is currently experiencing. We give some examples on how to use such models in research by focusing on GPT3.5/ChatGPT3.4 and ChatGPT4 at the current state and show that such a range of capabilities in a single system is a strong sign of approaching general intelligence. Innovations integrating such models will also expand along the maturation of such AI systems and exhibit unforeseeable applications that will have important impacts on several aspects of our societies.

Designing Interaction for Multi-agent Cooperative System in an Office Environment

Feb 15, 2020

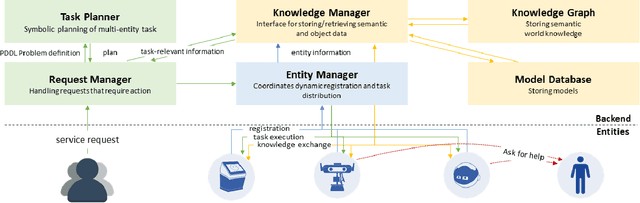

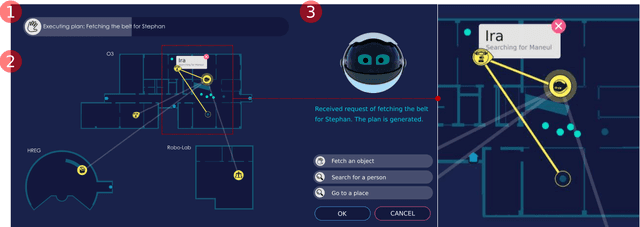

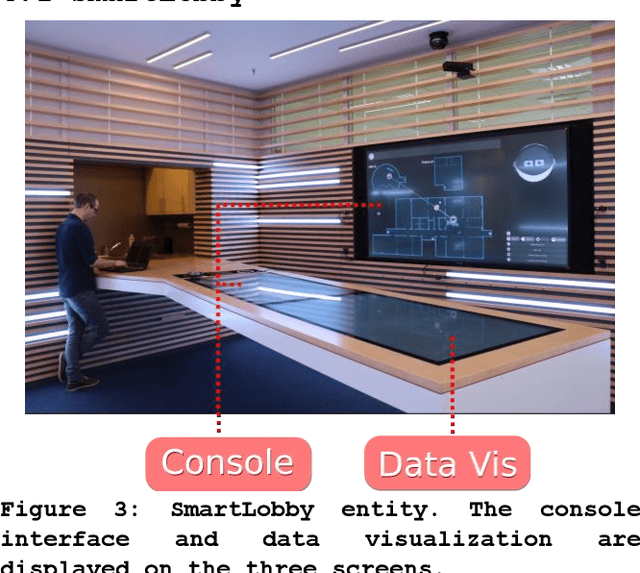

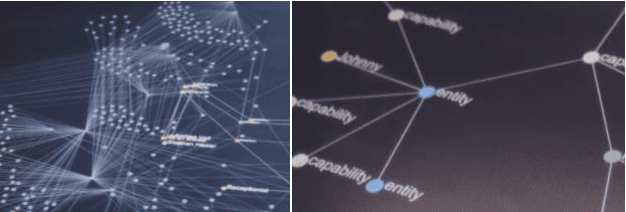

Abstract:Future intelligent system will involve very various types of artificial agents, such as mobile robots, smart home infrastructure or personal devices, which share data and collaborate with each other to execute certain tasks.Designing an efficient human-machine interface, which can support users to express needs to the system, supervise the collaboration progress of different entities and evaluate the result, will be challengeable. This paper presents the design and implementation of the human-machine interface of Intelligent Cyber-Physical system (ICPS),which is a multi-entity coordination system of robots and other smart devices in a working environment. ICPS gathers sensory data from entities and then receives users' command, then optimizes plans to utilize the capability of different entities to serve people. Using multi-model interaction methods, e.g. graphical interfaces, speech interaction, gestures and facial expressions, ICPS is able to receive inputs from users through different entities, keep users aware of the progress and accomplish the task efficiently

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge