Angela Doufexi

Analysis on Energy Efficiency of RIS-Assisted Multiuser Downlink Near-Field Communications

May 23, 2025

Abstract:In this paper, we focus on the energy efficiency (EE) optimization and analysis of reconfigurable intelligent surface (RIS)-assisted multiuser downlink near-field communications. Specifically, we conduct a comprehensive study on several key factors affecting EE performance, including the number of RIS elements, the types of reconfigurable elements, reconfiguration resolutions, and the maximum transmit power. To accurately capture the power characteristics of RISs, we adopt more practical power consumption models for three commonly used reconfigurable elements in RISs: PIN diodes, varactor diodes, and radio frequency (RF) switches. These different elements may result in RIS systems exhibiting significantly different energy efficiencies (EEs), even when their spectral efficiencies (SEs) are similar. Considering discrete phases implemented at most RISs in practice, which makes their optimization NP-hard, we develop a nested alternating optimization framework to maximize EE, consisting of an outer integer-based optimization for discrete RIS phase reconfigurations and a nested non-convex optimization for continuous transmit power allocation within each iteration. Extensive comparisons with multiple benchmark schemes validate the effectiveness and efficiency of the proposed framework. Furthermore, based on the proposed optimization method, we analyze the EE performance of RISs across different key factors and identify the optimal RIS architecture yielding the highest EE.
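As a rough illustration of why the element type matters, EE is commonly defined as the ratio of sum spectral efficiency to total consumed power. The sketch below assumes a simple linear power model; the function name, its parameters, and all numerical values are hypothetical placeholders and are not the power consumption models used in the paper.

```python
def energy_efficiency(sum_se_bps_hz, tx_power_w, pa_efficiency,
                      static_power_w, n_elements, per_element_power_w):
    """Return EE (bit/s/Hz per watt) under an assumed linear power model."""
    if not 0 < pa_efficiency <= 1:
        raise ValueError("pa_efficiency must be in (0, 1]")
    # Assumed model: amplifier-scaled transmit power + static circuitry
    # + a fixed power draw per RIS element.
    total_power_w = (tx_power_w / pa_efficiency
                     + static_power_w
                     + n_elements * per_element_power_w)
    return sum_se_bps_hz / total_power_w


# Two hypothetical RISs with the same SE but different per-element power draw.
ee_low = energy_efficiency(10.0, 1.0, 0.5, 3.0, 100, 0.001)
ee_high = energy_efficiency(10.0, 1.0, 0.5, 3.0, 100, 0.05)
print(ee_low > ee_high)
```

Under these assumed numbers the two surfaces deliver identical SE, yet the one with the larger per-element draw has a lower EE, which is the effect the abstract describes.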

A Heuristic-Integrated DRL Approach for Phase Optimization in Large-Scale RISs

May 07, 2025

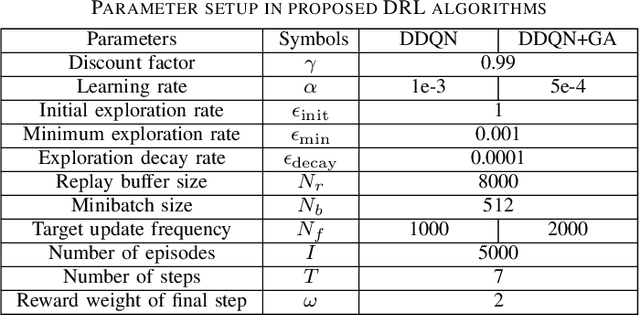

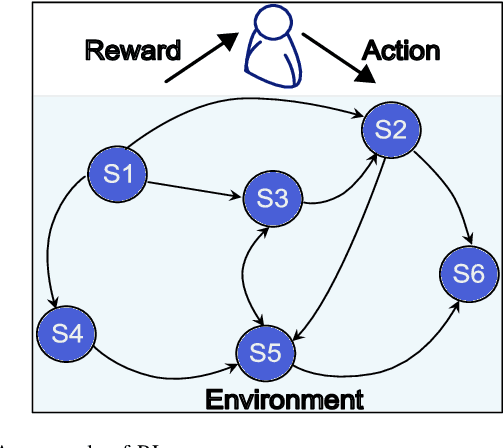

Abstract:Optimizing discrete phase shifts in large-scale reconfigurable intelligent surfaces (RISs) is challenging due to their non-convex and non-linear nature. In this letter, we propose a heuristic-integrated deep reinforcement learning (DRL) framework that (1) leverages accumulated actions over multiple steps in the double deep Q-network (DDQN) for RIS column-wise control and (2) integrates a greedy algorithm (GA) into each DRL step to refine the state via fine-grained, element-wise optimization of RIS configurations. By learning from GA-included states, the proposed approach effectively addresses RIS optimization within a small DRL action space, demonstrating its capability to optimize phase-shift configurations of large-scale RISs.
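The element-wise greedy refinement mentioned above can be sketched in isolation. This is a minimal example under stated assumptions, not the authors' algorithm: it assumes a single-user cascaded channel, uses the reflected power as the objective, and omits the DDQN loop entirely. The names `reflected_power` and `greedy_refine` are hypothetical.

```python
import cmath
import math
import random


def reflected_power(h, g, phases):
    """|sum_n h_n * g_n * exp(j*theta_n)|^2 for an assumed single-user channel."""
    return abs(sum(hn * gn * cmath.exp(1j * th)
                   for hn, gn, th in zip(h, g, phases))) ** 2


def greedy_refine(h, g, phases, bits=1, sweeps=3):
    """Element-wise greedy search over 2**bits discrete phase levels."""
    levels = [2 * math.pi * k / 2 ** bits for k in range(2 ** bits)]
    phases = list(phases)
    best = reflected_power(h, g, phases)
    for _ in range(sweeps):
        for n in range(len(phases)):
            current = phases[n]
            for cand in levels:
                phases[n] = cand
                p = reflected_power(h, g, phases)
                if p > best:  # keep a candidate only if it strictly improves
                    best, current = p, cand
            phases[n] = current  # restore the best level found for element n
    return phases, best


random.seed(0)
N = 16
h = [cmath.exp(1j * random.uniform(0, 2 * math.pi)) for _ in range(N)]
g = [cmath.exp(1j * random.uniform(0, 2 * math.pi)) for _ in range(N)]
start = [0.0] * N
refined, power = greedy_refine(h, g, start, bits=2)
print(reflected_power(h, g, start), "->", power)
```

Because a candidate is accepted only when it strictly improves the objective, the refined power can never fall below the starting value. The cost per sweep grows with the number of elements times the number of phase levels.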

Low-Complexity Resource Management for MC-RSMA in URLLC With Imperfect CSIT

Dec 29, 2023

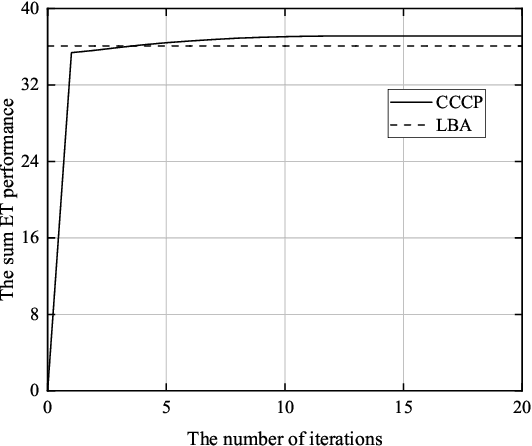

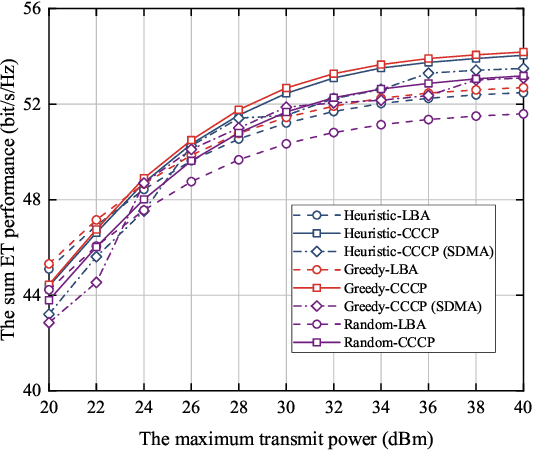

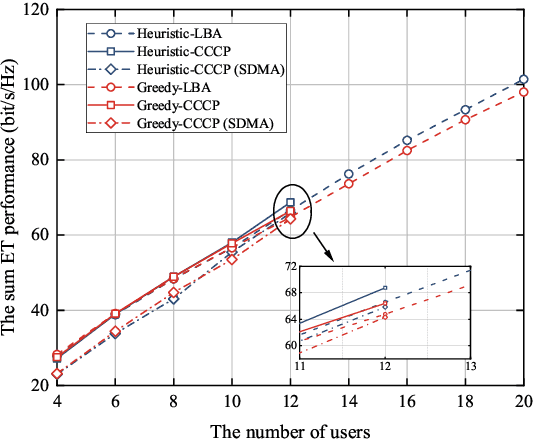

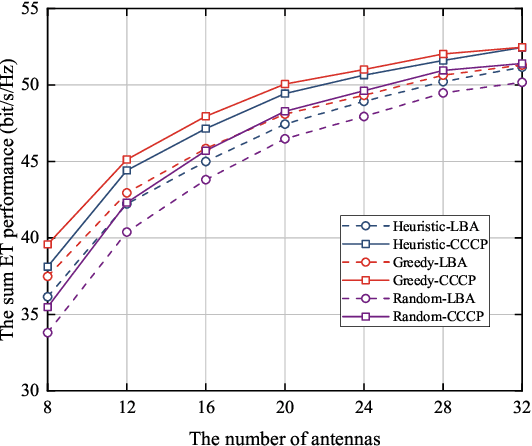

Abstract:This paper investigates low-complexity resource management design in multi-carrier rate-splitting multiple access (MC-RSMA) systems with imperfect channel state information (CSI) for ultra-reliable and low-latency communications (URLLC) applications. To explore the trade-off between the decoding error probability and achievable rate, effective throughput (ET) is adopted as the utility function in this study. Then, a mixed-integer non-convex problem is formulated, where power allocation, rate adaptation, and user grouping are jointly taken into consideration. To solve this problem, we first prove that ET is a monotonically increasing function of rate under the strict reliability constraint of URLLC. Based on this proposition, an iteration-based concave-convex programming (CCCP) method and an iteration-free lower-bound approximation (LBA) method are developed to optimize power allocation within a single subcarrier. Next, a dynamic programming (DP)-based method is proposed to determine near-optimal user grouping schemes. In addition, a CSI-based method is proposed to reduce the complexity and obtain important insights into user grouping for MC-RSMA systems. The simulation results verify the effectiveness of the CCCP and LBA methods in power allocation and of the DP-based and CSI-based methods in user grouping. Moreover, the superiority of RSMA over spatial division multiple access for URLLC services is demonstrated.
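The trade-off between decoding error probability and rate can be illustrated numerically. The sketch below assumes ET is defined as rate times the probability of successful decoding, and it uses the standard normal approximation for finite-blocklength coding over an AWGN channel with perfect CSI. Both are assumptions made for illustration; the paper's exact ET expression and its imperfect-CSIT model may differ, and the function names are hypothetical.

```python
import math


def q_function(x):
    """Gaussian tail probability Q(x)."""
    return 0.5 * math.erfc(x / math.sqrt(2))


def decoding_error(rate, snr, blocklength):
    """Normal approximation of the block error probability (rate in bit/channel use)."""
    capacity = math.log2(1 + snr)
    dispersion = (1 - (1 + snr) ** -2) * math.log2(math.e) ** 2
    return q_function((capacity - rate) * math.sqrt(blocklength / dispersion))


def effective_throughput(rate, snr, blocklength):
    """Assumed definition: ET = rate * (1 - decoding error probability)."""
    return rate * (1 - decoding_error(rate, snr, blocklength))


snr, n = 10.0, 200  # hypothetical linear SNR and blocklength
for rate in (2.0, 2.5, 3.0):
    print(rate, decoding_error(rate, snr, n), effective_throughput(rate, snr, n))
```

For these assumed values the error probability stays far below typical URLLC targets at all three rates, and ET grows with the rate, which is consistent with the monotonicity property stated in the abstract. Closer to capacity the error term dominates and ET eventually drops.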

Integer-Based Pattern Synthesis for Asymmetric Multi-Reflection RIS

Dec 28, 2023

Abstract:This study delves into the radiation pattern synthesis of reconfigurable intelligent surfaces (RISs) and reflection metasurfaces. Through superimposing multiple single-reflection profiles, which comprise the amplitude and/or phase settings of all constituent elements, a single incident wave can be effectively reflected in multiple asymmetric directions. However, this superposition may also cause some mismatch and interference between adjacent reflection beams. Additionally, it is constrained by the inherent limitation that achieving linear and continuous amplitude adjustments and phase shifts in real-world designs is challenging. Consequently, the reconfigurable amplitude and phase must be approximated by discrete values, so the reflection profile has to be expressed in integers both before and after optimization. Therefore, in this paper, we adapt the traditional particle swarm optimization (PSO) algorithm to a discretized integer-based PSO by proposing the concepts of 'discard rate' and 'knowledge.' With the enhancement of integer-based programming, multiple asymmetric reflection patterns can be synthesized with suppressed sidelobe levels within a limited number of iterations and limited time cost.

DRL-Based Sidelobe Suppression for Multi-focus Reconfigurable Intelligent Surface

Dec 24, 2023

Abstract:Reconfigurable intelligent surface (RIS) technology is receiving significant attention as a key enabling technology for 6G communications, with much attention given to coverage infill and wireless power transfer. However, relatively little attention has been paid to radiation pattern fidelity, for example, sidelobe suppression. When considering multi-user coverage infill, direct beam pattern synthesis using superposition can result in undesirable sidelobe levels. To address this issue, this paper introduces and applies deep reinforcement learning (DRL) as a means to optimize the far-field pattern, offering a 4 dB reduction in the unwanted sidelobe levels, thereby improving energy efficiency and decreasing co-channel interference levels.

A DRL-based Reflection Enhancement Method for RIS-assisted Multi-receiver Communications

Sep 11, 2023

Abstract:In reconfigurable intelligent surface (RIS)-assisted wireless communication systems, the pointing accuracy and intensity of reflections depend crucially on the 'profile,' representing the amplitude/phase state information of all elements in a RIS array. The superposition of multiple single-reflection profiles enables multi-reflection for distributed users. However, the optimization challenges from periodic element arrangements in single-reflection and multi-reflection profiles are understudied. The combination of periodical single-reflection profiles leads to amplitude/phase counteractions, affecting the performance of each reflection beam. This paper focuses on a dual-reflection optimization scenario and investigates the far-field performance deterioration caused by the misalignment of overlapped profiles. To address this issue, we introduce a novel deep reinforcement learning (DRL)-based optimization method. Comparative experiments against random and exhaustive searches demonstrate that our proposed DRL method outperforms both alternatives, achieving the shortest optimization time. Remarkably, our approach achieves a 1.2 dB gain in the reflection peak gain and a broader beam without any hardware modifications.

Variational Autoencoder Assisted Neural Network Likelihood RSRP Prediction Model

Jun 27, 2022

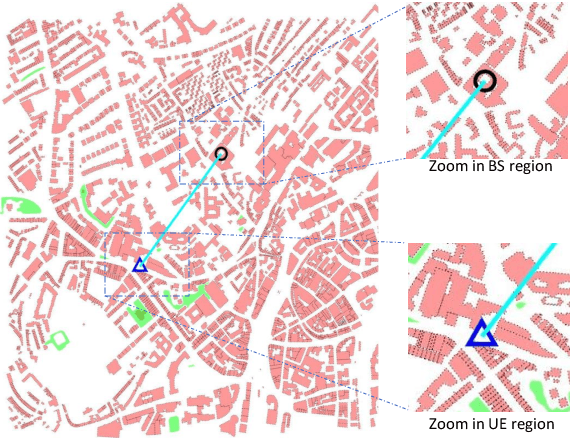

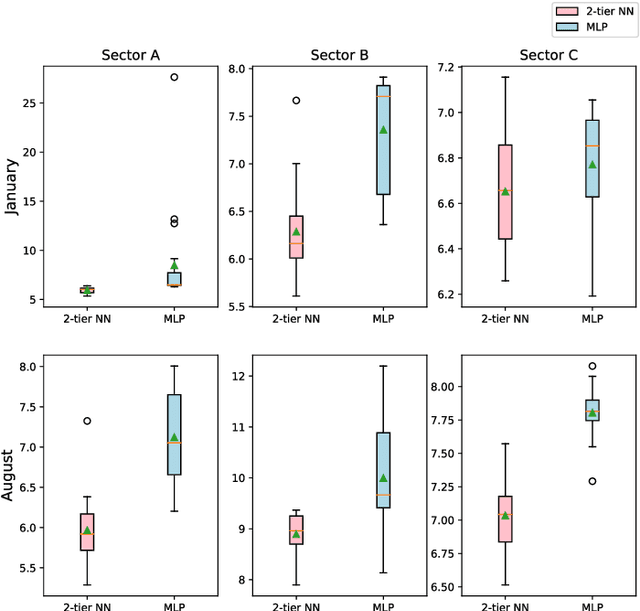

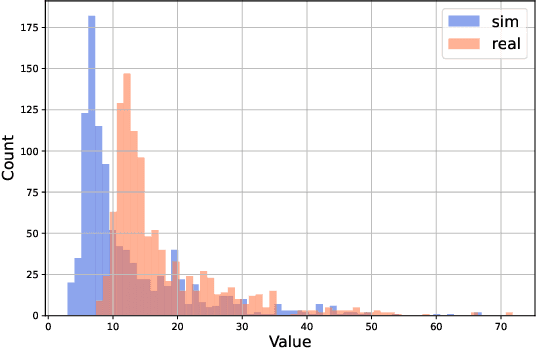

Abstract:Measuring customer experience on mobile data is of utmost importance for global mobile operators. The reference signal received power (RSRP) is one of the important indicators for current mobile network management, evaluation and monitoring. Radio data gathered through the minimization of drive tests (MDT), a 3GPP standard technique, is commonly used for radio network analysis. Collecting MDT data in different geographical areas is inefficient and constrained by the terrain conditions and user presence, and hence is not an adequate technique for dynamic radio environments. In this paper, we study a generative model for RSRP prediction, exploiting MDT data and a digital twin (DT), and propose a data-driven, two-tier neural network (NN) model. In the first tier, environmental information related to user equipment (UE), base stations (BS) and network key performance indicators (KPI) is extracted through a variational autoencoder (VAE). The second tier is designed as a likelihood model. Here, the environmental features and real MDT data features are adopted, formulating an integrated training process. In validation on real-world data, our proposed model demonstrates an accuracy improvement of about 20% or more compared with the empirical model and about 10% compared with a fully connected prediction network.

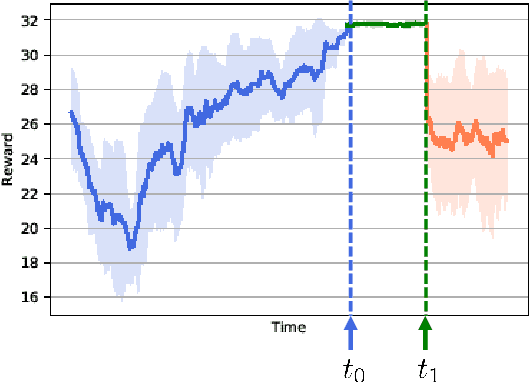

Sim2real for Reinforcement Learning Driven Next Generation Networks

Jun 08, 2022

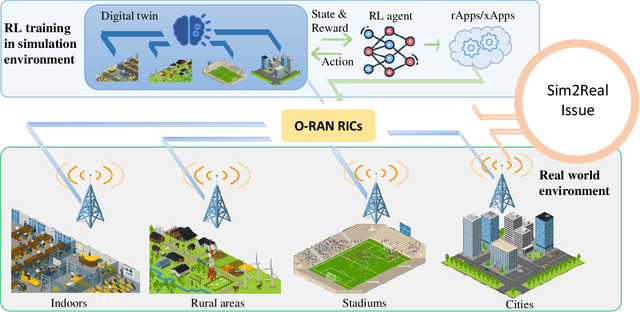

Abstract:The next generation of networks will actively embrace artificial intelligence (AI) and machine learning (ML) technologies for network automation and optimal network operation strategies. The emerging network structure represented by Open RAN (O-RAN) conforms to this trend, and the RAN intelligent controller (RIC) at the centre of its specification serves as a host for ML applications. Various ML models, especially Reinforcement Learning (RL) models, are regarded as the key to solving RAN-related multi-objective optimization problems. However, it should be recognized that most of the current RL successes are confined to abstract and simplified simulation environments, which may not directly translate to high performance in complex real environments. One of the main reasons is the modelling gap between the simulation and the real environment, which could make an RL agent trained in simulation ill-equipped for the real environment. This issue is termed the sim2real gap. This article brings to the fore the sim2real challenge within the context of O-RAN. Specifically, it emphasizes the characteristics and benefits that digital twins (DT) could have as a place for model development and verification. Several use cases are presented to exemplify and demonstrate failure modes of simulation-trained RL models in real environments. The effectiveness of DT in assisting the development of RL algorithms is discussed. Then, the current state-of-the-art learning-based methods commonly used to overcome the sim2real challenge are presented. Finally, the development and deployment concerns for realising RL applications in O-RAN are discussed from the viewpoint of potential issues such as data interaction, environment bottlenecks, and algorithm design.

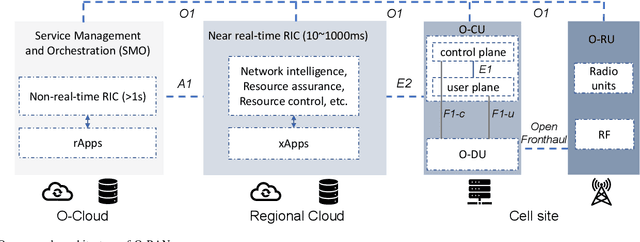

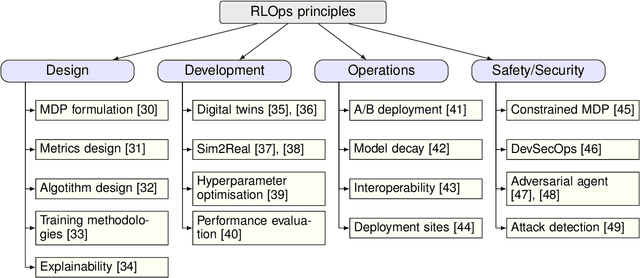

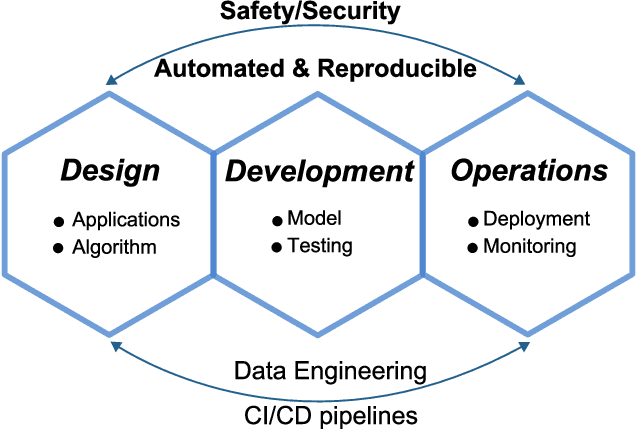

RLOps: Development Life-cycle of Reinforcement Learning Aided Open RAN

Nov 12, 2021

Abstract:Radio access network (RAN) technologies continue to witness massive growth, with Open RAN gaining the most recent momentum. In the O-RAN specifications, the RAN intelligent controller (RIC) serves as an automation host. This article introduces principles of machine learning (ML), in particular reinforcement learning (RL), relevant to the O-RAN stack. Furthermore, we review state-of-the-art research in wireless networks and cast it onto the RAN framework and the hierarchy of the O-RAN architecture. We provide a taxonomy of the challenges faced by ML/RL models throughout the development life-cycle: from the system specification to production deployment (data acquisition, model design, testing and management, etc.). To address the challenges, we integrate a set of existing MLOps principles with unique characteristics when RL agents are considered. This paper discusses a systematic life-cycle model development, testing and validation pipeline, termed RLOps. We discuss all fundamental parts of RLOps, which include: model specification, development and distillation, production environment serving, operations monitoring, safety/security and data engineering platform. Based on these principles, we propose best practices for RLOps to achieve an automated and reproducible model development process.

Deep Transfer Learning for WiFi Localization

Mar 08, 2021

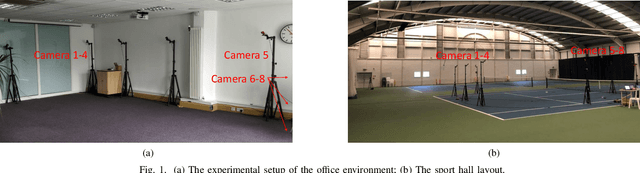

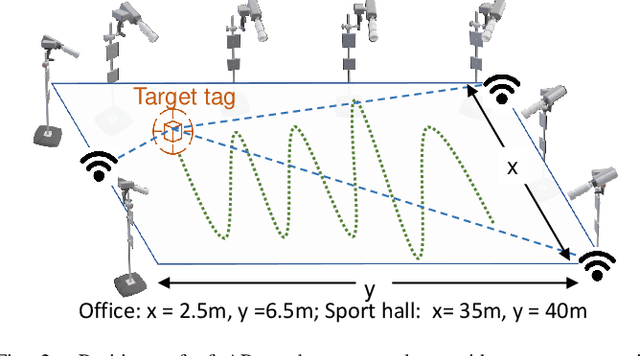

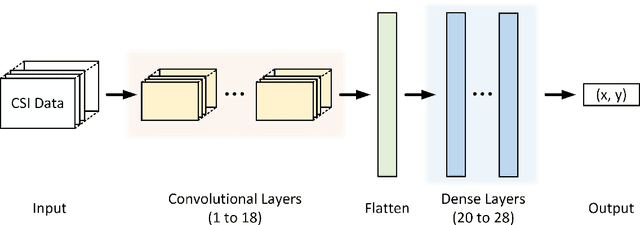

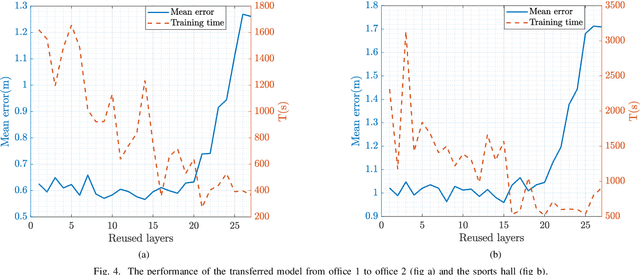

Abstract:This paper studies a WiFi indoor localisation technique based on a deep learning model and its transfer strategies. We take CSI packets collected via the WiFi standard channel sounding as the training dataset and verify the CNN model on the subsets collected in three experimental environments. We achieve a localisation accuracy of 46.55 cm in an ideal $(6.5m \times 2.5m)$ office with no obstacles, 58.30 cm in an office with obstacles, and 102.8 cm in a sports hall $(40m \times 35m)$. Then, we evaluate the transfer ability of the proposed model to different environments. The experimental results show that, for a trained localisation model, feature extraction layers can be directly transferred to other models and only the fully connected layers need to be retrained to achieve the same baseline accuracy as non-transferred base models. This can save 60% of the training parameters and reduce the training time by more than half. Finally, an ablation study of the training dataset shows that, in both office and sports hall scenarios, after reusing the feature extraction layers of the base model, only 55% of the training data is required to obtain accuracy similar to that of the base models.
