Shipra Kapoor

Federated Meta-Learning for Traffic Steering in O-RAN

Sep 13, 2022

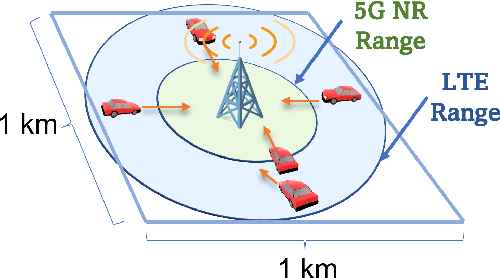

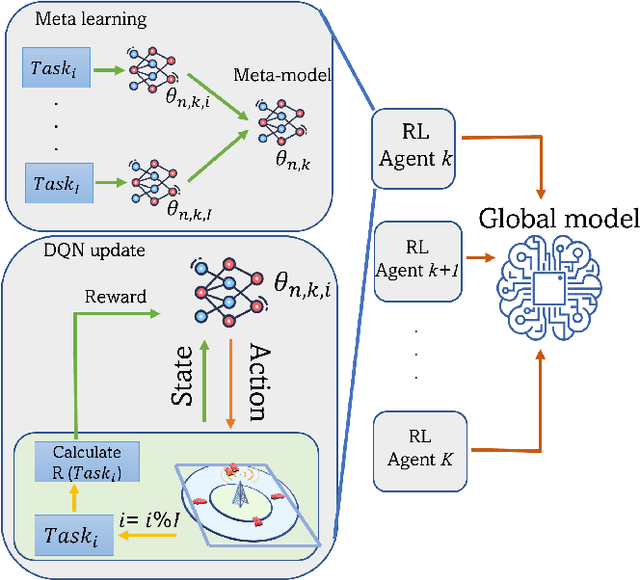

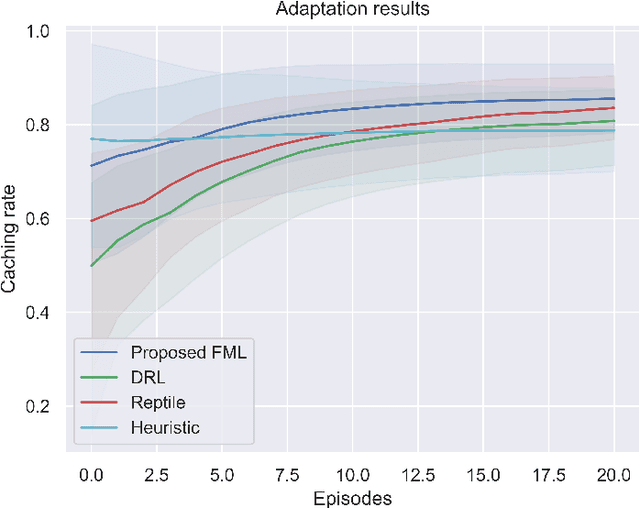

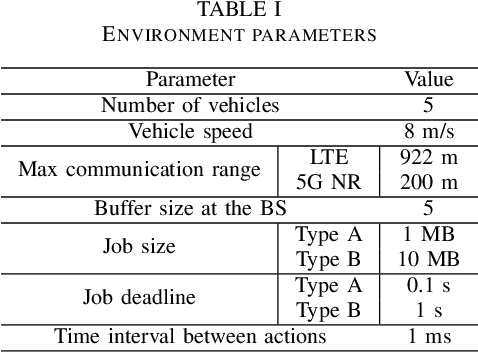

Abstract:The vision of 5G lies in providing high data rates, low latency (for the aim of near-real-time applications), significantly increased base station capacity, and near-perfect quality of service (QoS) for users, compared to LTE networks. In order to provide such services, 5G systems will support various combinations of access technologies such as LTE, NR, NR-U and Wi-Fi. Each radio access technology (RAT) provides different types of access, and these should be allocated and managed optimally among the users. Besides resource management, 5G systems will also support a dual connectivity service. The orchestration of the network therefore becomes a more difficult problem for system managers with respect to legacy access technologies. In this paper, we propose an algorithm for RAT allocation based on federated meta-learning (FML), which enables RAN intelligent controllers (RICs) to adapt more quickly to dynamically changing environments. We have designed a simulation environment which contains LTE and 5G NR service technologies. In the simulation, our objective is to fulfil UE demands within the deadline of transmission to provide higher QoS values. We compared our proposed algorithm with a single RL agent, the Reptile algorithm and a rule-based heuristic method. Simulation results show that the proposed FML method achieves higher caching rates at first deployment round 21% and 12% respectively. Moreover, proposed approach adapts to new tasks and environments most quickly amongst the compared methods.

Variational Autoencoder Assisted Neural Network Likelihood RSRP Prediction Model

Jun 27, 2022

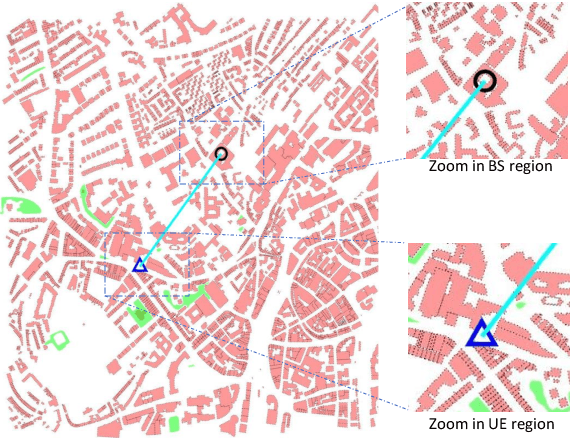

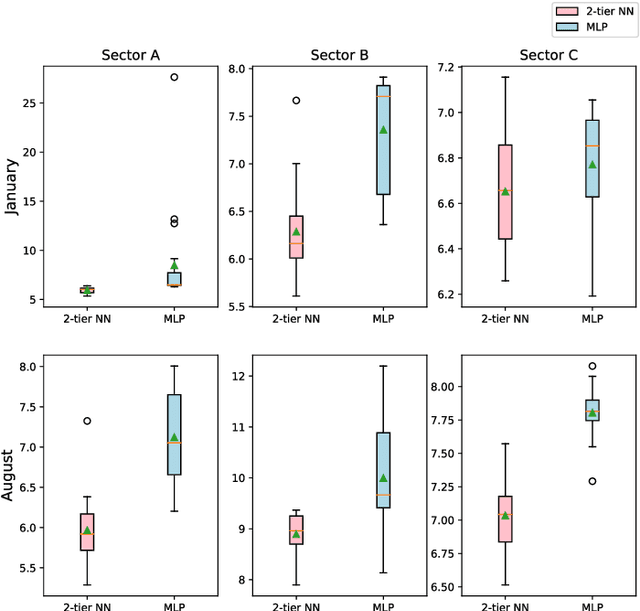

Abstract:Measuring customer experience on mobile data is of utmost importance for global mobile operators. The reference signal received power (RSRP) is one of the important indicators for current mobile network management, evaluation and monitoring. Radio data gathered through the minimization of drive test (MDT), a 3GPP standard technique, is commonly used for radio network analysis. Collecting MDT data in different geographical areas is inefficient and constrained by the terrain conditions and user presence, hence is not an adequate technique for dynamic radio environments. In this paper, we study a generative model for RSRP prediction, exploiting MDT data and a digital twin (DT), and propose a data-driven, two-tier neural network (NN) model. In the first tier, environmental information related to user equipment (UE), base stations (BS) and network key performance indicators (KPI) are extracted through a variational autoencoder (VAE). The second tier is designed as a likelihood model. Here, the environmental features and real MDT data features are adopted, formulating an integrated training process. On validation, our proposed model that uses real-world data demonstrates an accuracy improvement of about 20% or more compared with the empirical model and about 10% when compared with a fully connected prediction network.

Sim2real for Reinforcement Learning Driven Next Generation Networks

Jun 08, 2022

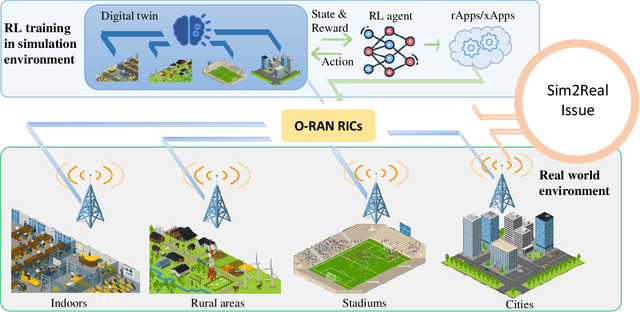

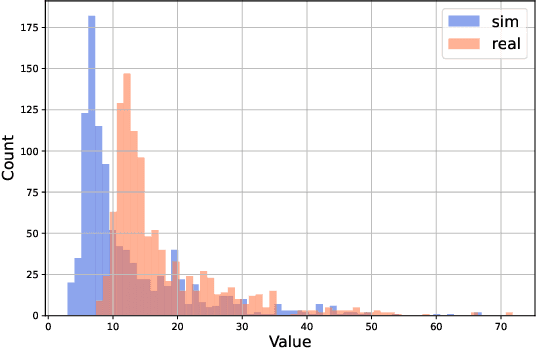

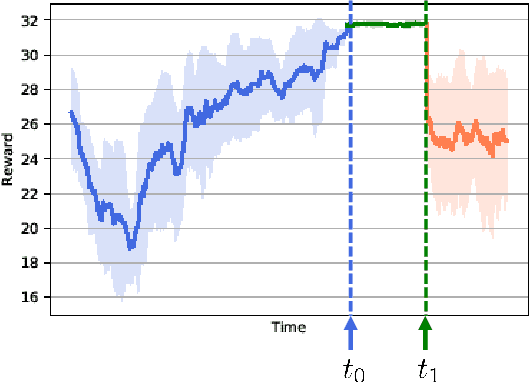

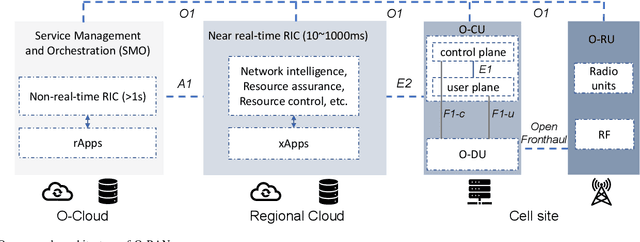

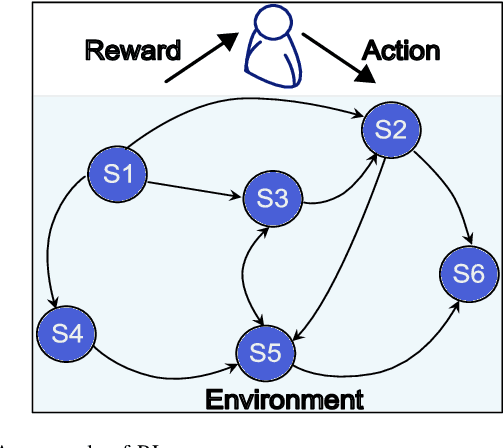

Abstract:The next generation of networks will actively embrace artificial intelligence (AI) and machine learning (ML) technologies for automation networks and optimal network operation strategies. The emerging network structure represented by Open RAN (O-RAN) conforms to this trend, and the radio intelligent controller (RIC) at the centre of its specification serves as an ML applications host. Various ML models, especially Reinforcement Learning (RL) models, are regarded as the key to solving RAN-related multi-objective optimization problems. However, it should be recognized that most of the current RL successes are confined to abstract and simplified simulation environments, which may not directly translate to high performance in complex real environments. One of the main reasons is the modelling gap between the simulation and the real environment, which could make the RL agent trained by simulation ill-equipped for the real environment. This issue is termed as the sim2real gap. This article brings to the fore the sim2real challenge within the context of O-RAN. Specifically, it emphasizes the characteristics, and benefits that the digital twins (DT) could have as a place for model development and verification. Several use cases are presented to exemplify and demonstrate failure modes of the simulations trained RL model in real environments. The effectiveness of DT in assisting the development of RL algorithms is discussed. Then the current state of the art learning-based methods commonly used to overcome the sim2real challenge are presented. Finally, the development and deployment concerns for the RL applications realisation in O-RAN are discussed from the view of the potential issues like data interaction, environment bottlenecks, and algorithm design.

Smart Interference Management xApp using Deep Reinforcement Learning

Apr 12, 2022

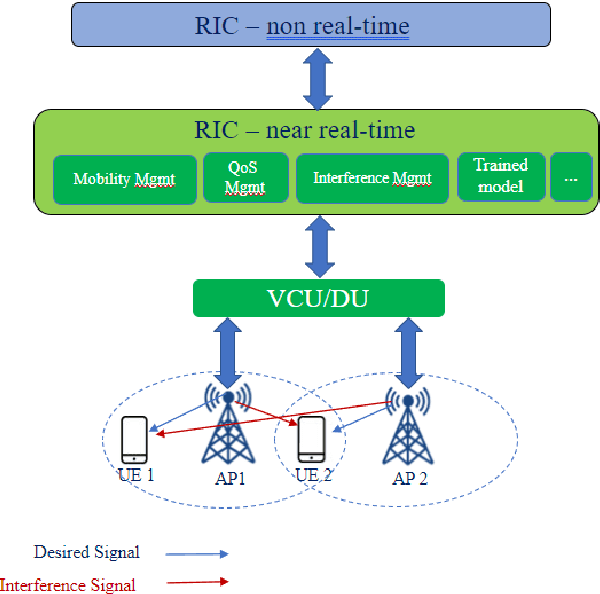

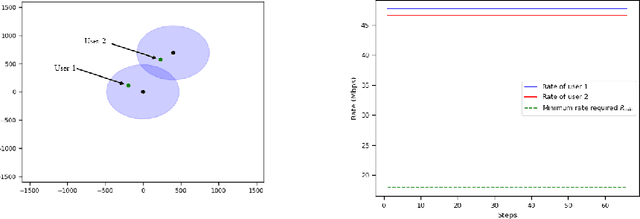

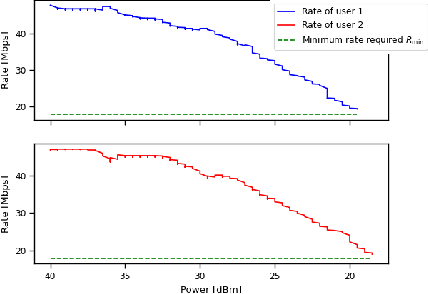

Abstract:Interference continues to be a key limiting factor in cellular radio access network (RAN) deployments. Effective, data-driven, self-adapting radio resource management (RRM) solutions are essential for tackling interference, and thus achieving the desired performance levels particularly at the cell-edge. In future network architecture, RAN intelligent controller (RIC) running with near-real-time applications, called xApps, is considered as a potential component to enable RRM. In this paper, based on deep reinforcement learning (RL) xApp, a joint sub-band masking and power management is proposed for smart interference management. The sub-band resource masking problem is formulated as a Markov Decision Process (MDP) that can be solved employing deep RL to approximate the policy functions as well as to avoid extremely high computational and storage costs of conventional tabular-based approaches. The developed xApp is scalable in both storage and computation. Simulation results demonstrate advantages of the proposed approach over decentralized baselines in terms of the trade-off between cell-centre and cell-edge user rates, energy efficiency and computational efficiency.

RLOps: Development Life-cycle of Reinforcement Learning Aided Open RAN

Nov 12, 2021

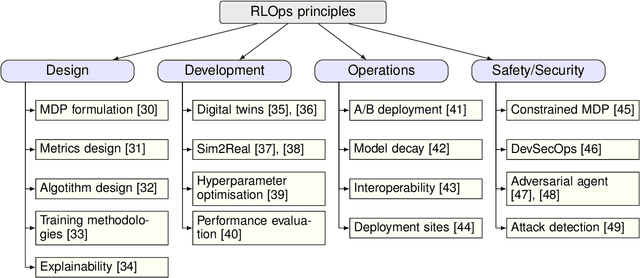

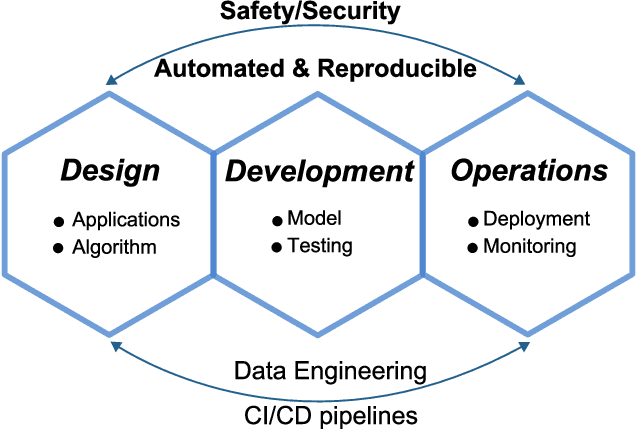

Abstract:Radio access network (RAN) technologies continue to witness massive growth, with Open RAN gaining the most recent momentum. In the O-RAN specifications, the RAN intelligent controller (RIC) serves as an automation host. This article introduces principles for machine learning (ML), in particular, reinforcement learning (RL) relevant for the O-RAN stack. Furthermore, we review state-of-the-art research in wireless networks and cast it onto the RAN framework and the hierarchy of the O-RAN architecture. We provide a taxonomy of the challenges faced by ML/RL models throughout the development life-cycle: from the system specification to production deployment (data acquisition, model design, testing and management, etc.). To address the challenges, we integrate a set of existing MLOps principles with unique characteristics when RL agents are considered. This paper discusses a systematic life-cycle model development, testing and validation pipeline, termed: RLOps. We discuss all fundamental parts of RLOps, which include: model specification, development and distillation, production environment serving, operations monitoring, safety/security and data engineering platform. Based on these principles, we propose the best practices for RLOps to achieve an automated and reproducible model development process.

Self-play Learning Strategies for Resource Assignment in Open-RAN Networks

Mar 03, 2021

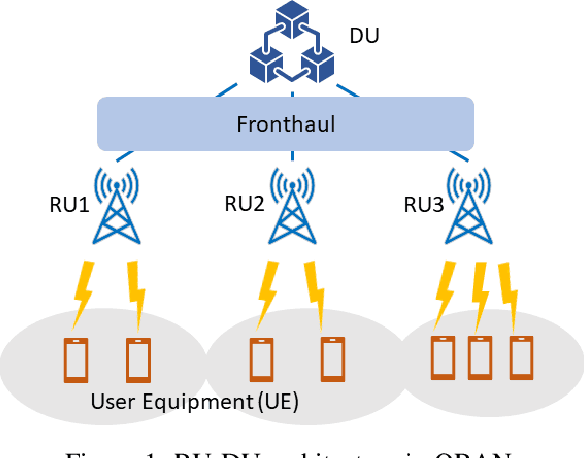

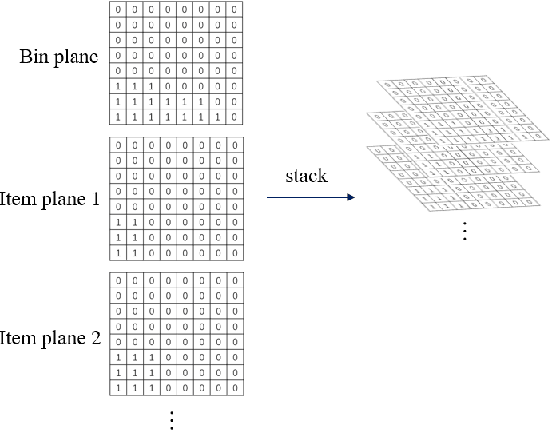

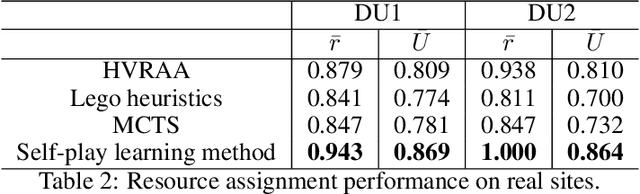

Abstract:Open Radio Access Network (ORAN) is being developed with an aim to democratise access and lower the cost of future mobile data networks, supporting network services with various QoS requirements, such as massive IoT and URLLC. In ORAN, network functionality is dis-aggregated into remote units (RUs), distributed units (DUs) and central units (CUs), which allows flexible software on Commercial-Off-The-Shelf (COTS) deployments. Furthermore, the mapping of variable RU requirements to local mobile edge computing centres for future centralized processing would significantly reduce the power consumption in cellular networks. In this paper, we study the RU-DU resource assignment problem in an ORAN system, modelled as a 2D bin packing problem. A deep reinforcement learning-based self-play approach is proposed to achieve efficient RU-DU resource management, with AlphaGo Zero inspired neural Monte-Carlo Tree Search (MCTS). Experiments on representative 2D bin packing environment and real sites data show that the self-play learning strategy achieves intelligent RU-DU resource assignment for different network conditions.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge