Andrew Cheng

GenAI for Systems: Recurring Challenges and Design Principles from Software to Silicon

Feb 16, 2026

Abstract: Generative AI is reshaping how computing systems are designed, optimized, and built, yet research remains fragmented across software, architecture, and chip design communities. This paper takes a cross-stack perspective, examining how generative models are being applied from code generation and distributed runtimes through hardware design space exploration to RTL synthesis, physical layout, and verification. Rather than reviewing each layer in isolation, we analyze how the same structural difficulties and effective responses recur across the stack. Our central finding is one of convergence. Despite the diversity of domains and tools, the field keeps encountering five recurring challenges (the feedback loop crisis, the tacit knowledge problem, trust and validation, co-design across boundaries, and the shift from determinism to dynamism) and keeps arriving at five design principles that independently emerge as effective responses (embracing hybrid approaches, designing for continuous feedback, separating concerns by role, matching methods to problem structure, and building on decades of systems knowledge). We organize these into a challenge-principle map that serves as a diagnostic and design aid, showing which principles have proven effective for which challenges across layers. Through concrete cross-stack examples, we show how systems navigate this map as they mature, and argue that the field needs shared engineering methodology, including common vocabularies, cross-layer benchmarks, and systematic design practices, so that progress compounds across communities rather than being rediscovered in each one. Our analysis covers more than 275 papers spanning eleven application areas across three layers of the computing stack, and distills open research questions that become visible only from a cross-layer vantage point.

Lifetime-Aware Design of Item-Level Intelligence

Sep 09, 2025

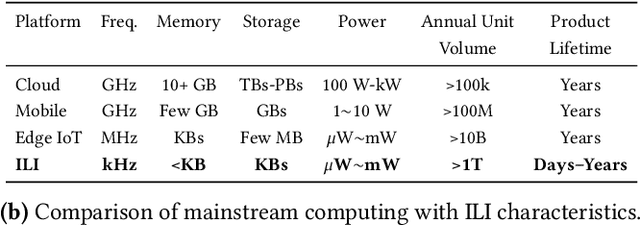

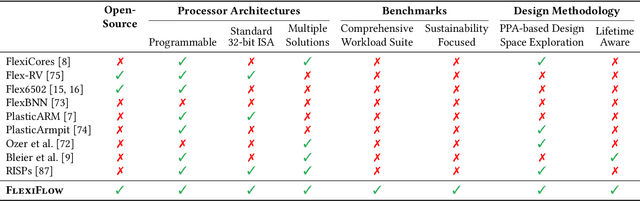

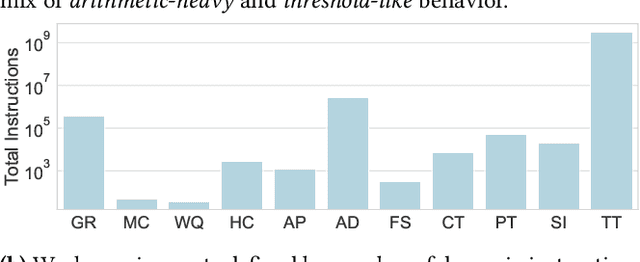

Abstract: We present FlexiFlow, a lifetime-aware design framework for item-level intelligence (ILI), where computation is integrated directly into disposable products like food packaging and medical patches. Our framework leverages natively flexible electronics, which offer significantly lower costs than silicon but are limited to kHz speeds and several thousand gates. Our insight is that, unlike traditional computing with more uniform deployment patterns, ILI applications exhibit 1000X variation in operational lifetime, fundamentally changing optimal architectural design decisions when considering trillion-item deployment scales. To enable holistic design and optimization, we model the trade-offs between embodied carbon footprint and operational carbon footprint based on application-specific lifetimes. The framework includes: (1) FlexiBench, a workload suite targeting sustainability applications from spoilage detection to health monitoring; (2) FlexiBits, area-optimized RISC-V cores with 1/4/8-bit datapaths achieving 2.65X to 3.50X better energy efficiency per workload execution; and (3) a carbon-aware model that selects optimal architectures based on deployment characteristics. We show that lifetime-aware microarchitectural design can reduce carbon footprint by 1.62X, while algorithmic decisions can reduce carbon footprint by 14.5X. We validate our approach through the first tape-out using a PDK for flexible electronics with fully open-source tools, achieving 30.9 kHz operation. FlexiFlow enables exploration of computing at the Extreme Edge, where conventional design methodologies must be reevaluated to account for new constraints and considerations.
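The embodied-versus-operational trade-off described in this abstract can be illustrated with a minimal sketch. It is not the FlexiFlow carbon model: the additive formula (total = embodied + energy per run × runs × grid carbon intensity), the two core options, and every number below are hypothetical assumptions chosen only to show why the preferred architecture can flip with deployment lifetime.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CoreOption:
    """A candidate core; all values are hypothetical, not from the paper."""
    name: str
    embodied_g: float        # grams CO2e to manufacture one core
    energy_per_run_j: float  # joules consumed per workload execution


def total_carbon_g(core, runs_per_day, lifetime_days, grid_g_per_j):
    """Embodied carbon plus operational carbon over the whole lifetime."""
    operational = (core.energy_per_run_j * runs_per_day
                   * lifetime_days * grid_g_per_j)
    return core.embodied_g + operational


def pick_core(cores, runs_per_day, lifetime_days, grid_g_per_j):
    """Return the option with the lowest lifetime carbon footprint."""
    return min(
        cores,
        key=lambda c: total_carbon_g(c, runs_per_day, lifetime_days,
                                     grid_g_per_j),
    )


# Hypothetical options: a narrow datapath is cheaper to make but spends
# more energy per execution; a wide datapath is the reverse.
CORES = [
    CoreOption("narrow-datapath", embodied_g=1.0, energy_per_run_j=0.02),
    CoreOption("wide-datapath", embodied_g=2.0, energy_per_run_j=0.01),
]
GRID_G_PER_J = 1e-4  # assumed grid intensity (roughly 360 g CO2e per kWh)

if __name__ == "__main__":
    for days in (7, 3650):
        best = pick_core(CORES, runs_per_day=1000, lifetime_days=days,
                         grid_g_per_j=GRID_G_PER_J)
        print(days, "days:", best.name)
```

Under these assumed numbers the two options break even at 1000 days, so a one-week item favors the narrow core and a ten-year item favors the wide one.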

QuArch: A Question-Answering Dataset for AI Agents in Computer Architecture

Jan 06, 2025

Abstract: We introduce QuArch, a dataset of 1500 human-validated question-answer pairs designed to evaluate and enhance language models' understanding of computer architecture. The dataset covers areas including processor design, memory systems, and performance optimization. Our analysis highlights a significant performance gap: the best closed-source model achieves 84% accuracy, while the top small open-source model reaches 72%. We observe notable struggles in memory systems, interconnection networks, and benchmarking. Fine-tuning with QuArch improves small model accuracy by up to 8%, establishing a foundation for advancing AI-driven computer architecture research. The dataset and leaderboard are at https://harvard-edge.github.io/QuArch/.

Structured Regularization for Constrained Optimization on the SPD Manifold

Oct 12, 2024

Abstract: Matrix-valued optimization tasks, including those involving symmetric positive definite (SPD) matrices, arise in a wide range of applications in machine learning, data science and statistics. Classically, such problems are solved via constrained Euclidean optimization, where the domain is viewed as a Euclidean space and the structure of the matrices (e.g., positive definiteness) enters as constraints. More recently, geometric approaches that leverage parametrizations of the problem as unconstrained tasks on the corresponding matrix manifold have been proposed. While they exhibit algorithmic benefits in many settings, they cannot directly handle additional constraints, such as inequality or sparsity constraints. A remedy comes in the form of constrained Riemannian optimization methods, notably, Riemannian Frank-Wolfe and Projected Gradient Descent. However, both algorithms require potentially expensive subroutines that can introduce computational bottlenecks in practice. To mitigate these shortcomings, we introduce a class of structured regularizers, based on symmetric gauge functions, which allow for solving constrained optimization on the SPD manifold with faster unconstrained methods. We show that our structured regularizers can be chosen to preserve or induce desirable structure, in particular convexity and "difference of convex" structure. We demonstrate the effectiveness of our approach in numerical experiments.

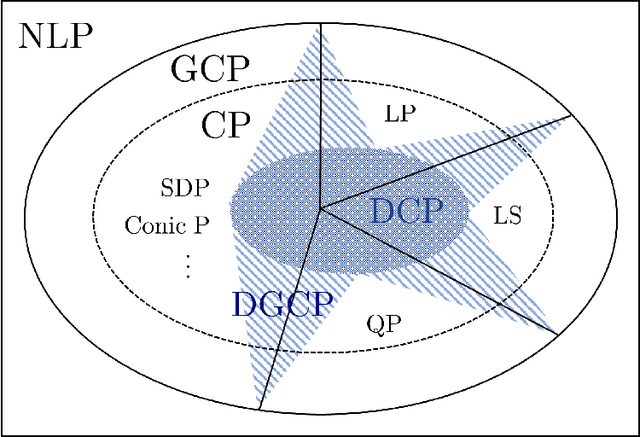

Disciplined Geodesically Convex Programming

Jul 07, 2024

Abstract: Convex programming plays a fundamental role in machine learning, data science, and engineering. Testing convexity structure in nonlinear programs relies on verifying the convexity of objectives and constraints. Grant et al. (2006) introduced a framework, Disciplined Convex Programming (DCP), for automating this verification task for a wide range of convex functions that can be decomposed into basic convex functions (atoms) using convexity-preserving compositions and transformations (rules). However, the restriction to Euclidean convexity concepts can limit the applicability of the framework. For instance, many notable instances of statistical estimators and matrix-valued (sub)routines in machine learning applications are Euclidean non-convex, but exhibit geodesic convexity through a more general Riemannian lens. In this work, we extend disciplined programming to this setting by introducing Disciplined Geodesically Convex Programming (DGCP). We determine convexity-preserving compositions and transformations for geodesically convex functions on general Cartan-Hadamard manifolds, as well as for the special case of symmetric positive definite matrices, a common setting in matrix-valued optimization. For the latter, we also define a basic set of atoms. Our paper is accompanied by a Julia package SymbolicAnalysis.jl, which provides functionality for testing and certifying DGCP-compliant expressions. Our library interfaces with manifold optimization software, which allows for directly solving verified geodesically convex programs.

Hyperspectral Image Denoising Using Non-convex Local Low-rank and Sparse Separation with Spatial-Spectral Total Variation Regularization

Jan 08, 2022

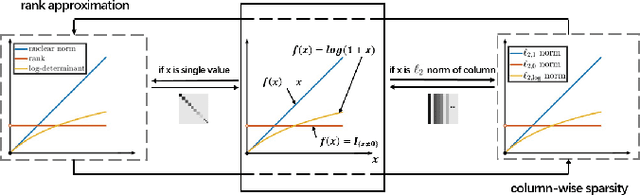

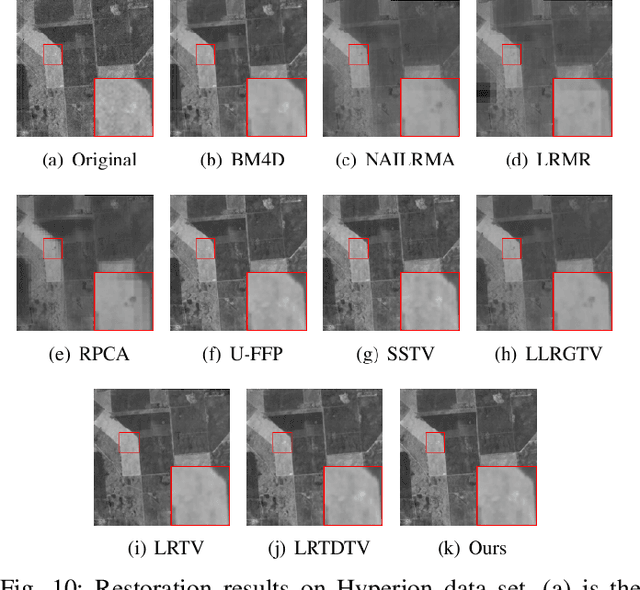

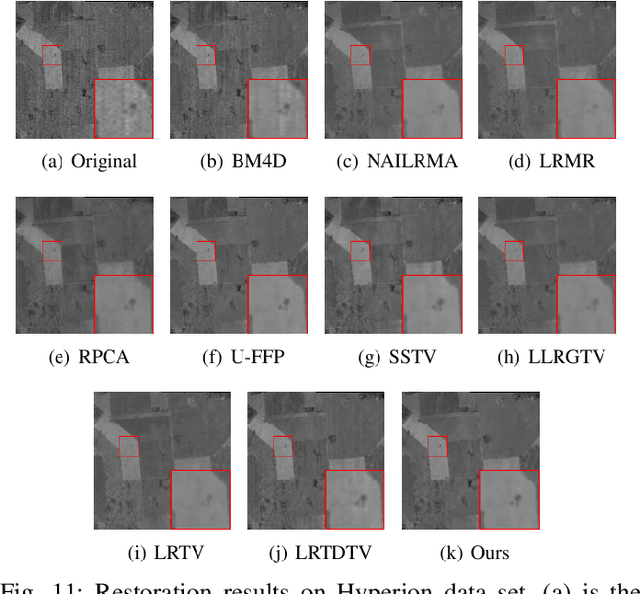

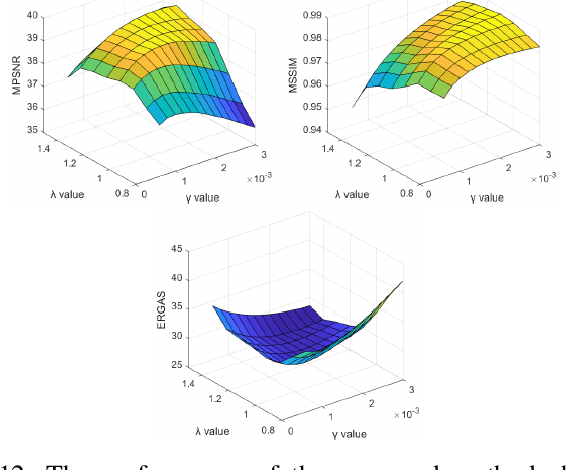

Abstract: In this paper, we propose a novel nonconvex approach to robust principal component analysis (RPCA) for hyperspectral image (HSI) denoising, which focuses on simultaneously developing more accurate approximations to both rank and column-wise sparsity for the low-rank and sparse components, respectively. In particular, the new method adopts the log-determinant rank approximation and a novel $\ell_{2,\log}$ norm to enforce the local low-rank and column-wise sparse properties of the component matrices, respectively. For the $\ell_{2,\log}$-regularized shrinkage problem, we develop an efficient, closed-form solution, which we call the $\ell_{2,\log}$-shrinkage operator. The new regularization and the corresponding operator can be used more generally in other problems that require column-wise sparsity. Moreover, we impose spatial-spectral total variation regularization in the log-based nonconvex RPCA model, which enhances the global piecewise smoothness and spectral consistency from the spatial and spectral views in the recovered HSI. Extensive experiments on both simulated and real HSIs demonstrate the effectiveness of the proposed method in denoising HSIs.
