Andrei Kapishnikov

Beyond Rewards: a Hierarchical Perspective on Offline Multiagent Behavioral Analysis

Jun 17, 2022

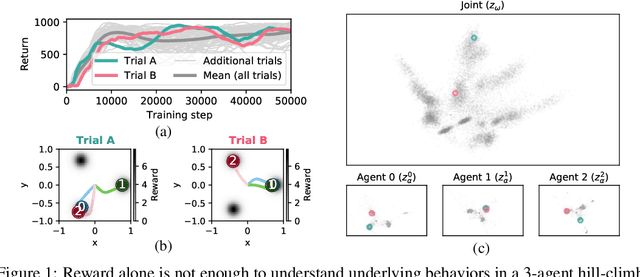

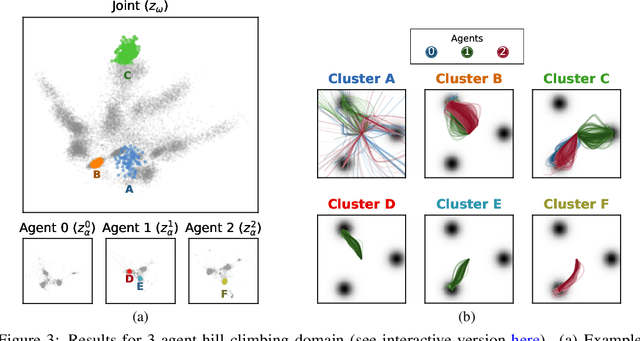

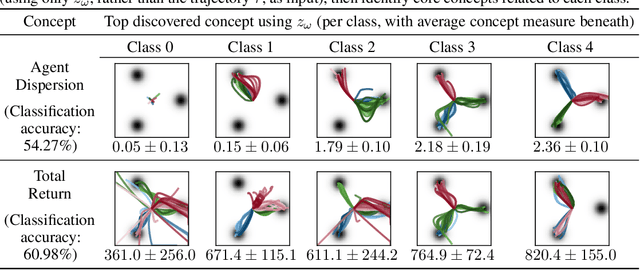

Abstract: Each year, expert-level performance is attained in increasingly complex multiagent domains, with notable examples including Go, Poker, and StarCraft II. This rapid progression is accompanied by a commensurate need to better understand how such agents attain this performance, to enable their safe deployment, identify limitations, and reveal potential means of improving them. In this paper we take a step back from performance-focused multiagent learning and instead turn our attention towards agent behavior analysis. We introduce a model-agnostic method for discovering behavior clusters in multiagent domains, using variational inference to learn a hierarchy of behaviors at the joint and local agent levels. Our framework makes no assumptions about the agents' underlying learning algorithms, does not require access to their latent states or models, and can be trained using entirely offline observational data. We illustrate the effectiveness of our method for enabling the coupled understanding of behaviors at the joint and local agent levels, detection of behavior changepoints throughout training, and discovery of core behavioral concepts (e.g., those that facilitate higher returns), and demonstrate the approach's scalability to a high-dimensional multiagent MuJoCo control domain.

IMACS: Image Model Attribution Comparison Summaries

Jan 26, 2022

Abstract: Developing a suitable Deep Neural Network (DNN) often requires significant iteration, where different model versions are evaluated and compared. While metrics such as accuracy are a powerful means to succinctly describe a model's performance across a dataset or to directly compare model versions, practitioners often wish to gain a deeper understanding of the factors that influence a model's predictions. Interpretability techniques such as gradient-based methods and local approximations can be used to examine small sets of inputs in fine detail, but it can be hard to determine whether results from small sets generalize across a dataset. We introduce IMACS, a method that combines gradient-based model attributions with aggregation and visualization techniques to summarize differences in attributions between two DNN image models. More specifically, IMACS extracts salient input features from an evaluation dataset, clusters them based on similarity, then visualizes differences in model attributions for similar input features. In this work, we introduce a framework for aggregating, summarizing, and comparing the attribution information for two models across a dataset; present visualizations that highlight differences between two image classification models; and show how our technique can uncover behavioral differences caused by domain shift between two models trained on satellite images.

Acquisition of Chess Knowledge in AlphaZero

Nov 27, 2021

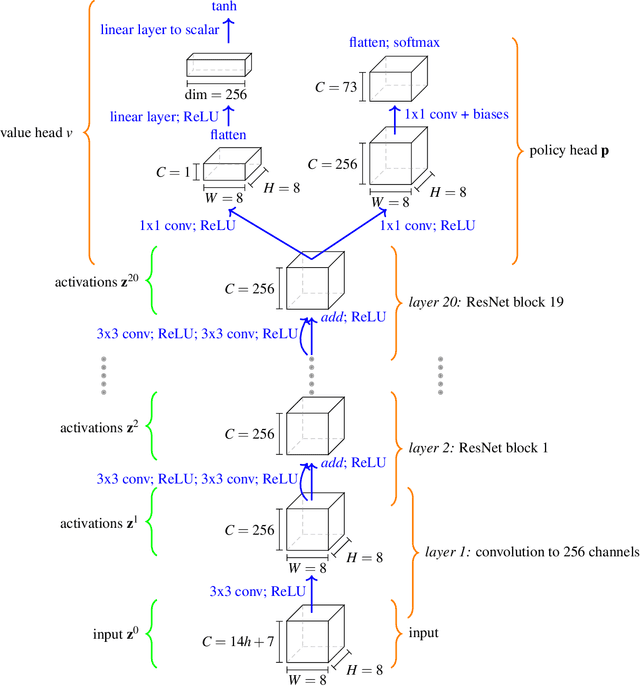

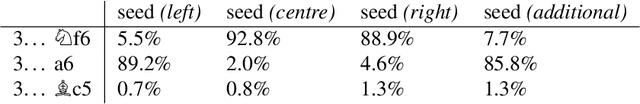

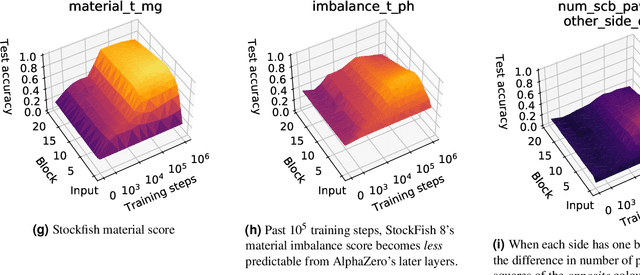

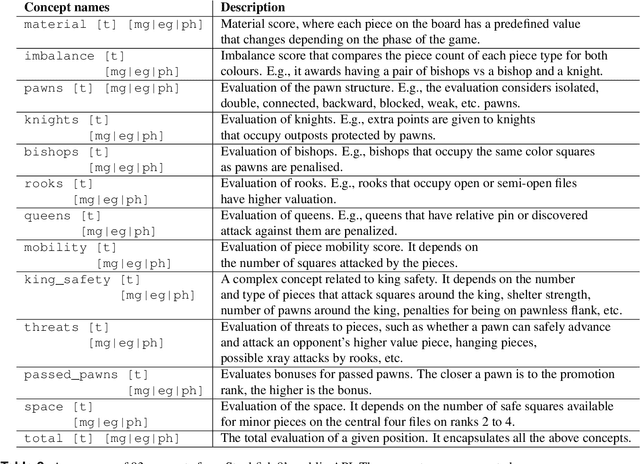

Abstract: What is learned by sophisticated neural network agents such as AlphaZero? This question is of both scientific and practical interest. If the representations of strong neural networks bear no resemblance to human concepts, our ability to understand faithful explanations of their decisions will be restricted, ultimately limiting what we can achieve with neural network interpretability. In this work we provide evidence that human knowledge is acquired by the AlphaZero neural network as it trains on the game of chess. By probing for a broad range of human chess concepts we show when and where these concepts are represented in the AlphaZero network. We also provide a behavioural analysis focusing on opening play, including qualitative analysis from chess Grandmaster Vladimir Kramnik. Finally, we carry out a preliminary investigation looking at the low-level details of AlphaZero's representations, and make the resulting behavioural and representational analyses available online.
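The abstract does not specify how the probes are built. As an assumption, one common way to ask whether a concept is linearly represented at a layer is to fit a regression probe from that layer's activations to the concept's value and score it on held-out inputs. The sketch below uses a ridge-regression probe in NumPy; `fit_linear_probe` and `probe_r2` are hypothetical names, and the activations are random synthetic data, not AlphaZero activations.

```python
import numpy as np

def fit_linear_probe(activations, targets, l2=1e-3):
    """Ridge-regression probe from layer activations to a scalar concept value."""
    n, d = activations.shape
    X = np.hstack([activations, np.ones((n, 1))])  # append a bias column
    reg = l2 * np.eye(d + 1)
    reg[-1, -1] = 0.0  # leave the bias unregularized
    return np.linalg.solve(X.T @ X + reg, X.T @ targets)

def probe_r2(activations, targets, weights):
    """Coefficient of determination (R^2) of the probe's predictions."""
    X = np.hstack([activations, np.ones((len(activations), 1))])
    residual = targets - X @ weights
    return 1.0 - np.sum(residual ** 2) / np.sum((targets - targets.mean()) ** 2)

# Synthetic stand-in for network activations (random, for illustration only).
rng = np.random.default_rng(0)
acts = rng.normal(size=(500, 16))
encoded = acts @ rng.normal(size=16) + 0.1 * rng.normal(size=500)  # linearly decodable
unrelated = rng.normal(size=500)  # independent of acts

train, held_out = slice(0, 400), slice(400, 500)
for name, y in [("encoded", encoded), ("unrelated", unrelated)]:
    w = fit_linear_probe(acts[train], y[train])
    print(name, round(probe_r2(acts[held_out], y[held_out], w), 3))
```

A high held-out R^2 suggests the concept is linearly decodable from that layer; repeating the fit per layer and per training checkpoint is one way to ask "when and where" a concept appears.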

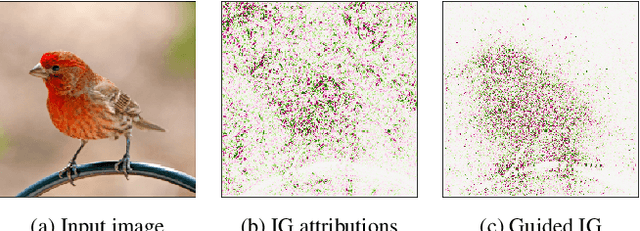

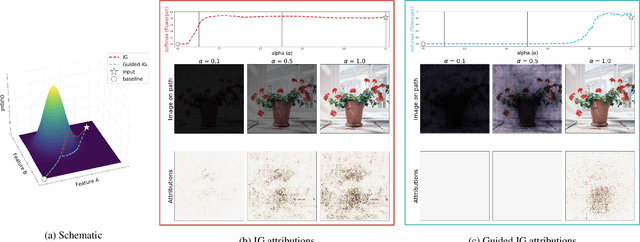

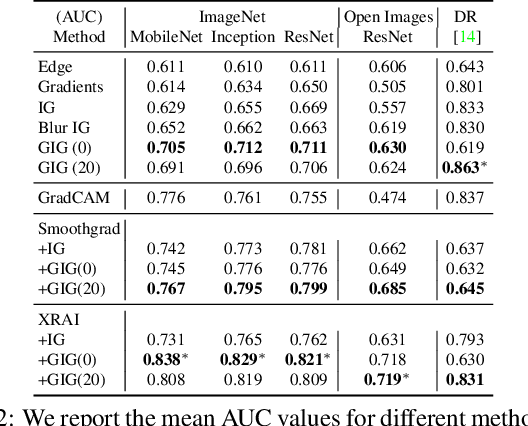

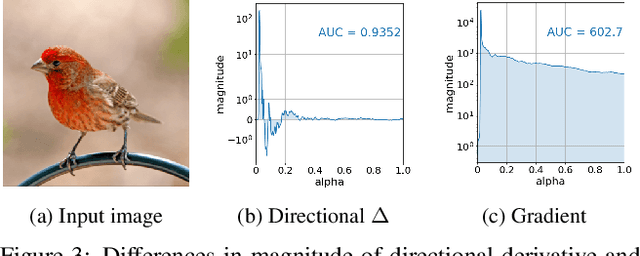

Guided Integrated Gradients: An Adaptive Path Method for Removing Noise

Jun 17, 2021

Abstract: Integrated Gradients (IG) is a commonly used feature attribution method for deep neural networks. While IG has many desirable properties, when applied to visual models the method often produces spurious or noisy pixel attributions in regions that are not related to the predicted class. While this has been noted previously, most existing solutions address the symptoms by explicitly reducing the noise in the resulting attributions. In this work, we show that one of the causes of the problem is the accumulation of noise along the IG path. To minimize the effect of this source of noise, we propose adapting the attribution path itself: conditioning the path not just on the image but also on the model being explained. We introduce Adaptive Path Methods (APMs) as a generalization of path methods, and Guided IG as a specific instance of an APM. Empirically, Guided IG creates saliency maps that are better aligned with the model's prediction and the input image being explained. We show through qualitative and quantitative experiments that Guided IG outperforms other related methods in nearly every experiment.

* 13 pages, 11 figures; for implementation sources, see https://github.com/PAIR-code/saliency
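The idea of adapting the path is easier to see with path methods written generically. The minimal NumPy sketch below approximates a path integral by summing gradient-times-step (midpoint rule) over a piecewise-linear path. The straight-line path gives standard Integrated Gradients, while `adaptive_path` is a hypothetical toy that advances, one small step at a time, the unfinished feature with the smallest gradient magnitude. It only gestures at conditioning the path on the model; it is not Guided IG as defined in the paper, nor the implementation in the repository listed above. The model `f`, its gradient, and all numbers are made up for illustration.

```python
import numpy as np

def path_attributions(grad_fn, path):
    """Sum grad(midpoint) * step over each segment of a piecewise-linear path."""
    path = np.asarray(path, dtype=float)
    attributions = np.zeros_like(path[0])
    for start, end in zip(path[:-1], path[1:]):
        attributions += grad_fn((start + end) / 2.0) * (end - start)
    return attributions

def straight_line_path(baseline, x, steps=200):
    """Integrated Gradients path: baseline + alpha * (x - baseline), alpha in [0, 1]."""
    alphas = np.linspace(0.0, 1.0, steps + 1)[:, None]
    return baseline + alphas * (x - baseline)

def adaptive_path(grad_fn, baseline, x, chunks=100):
    """Hypothetical toy adaptive path: at each step, advance the unfinished
    feature with the smallest |gradient| by 1/chunks of its distance to x."""
    baseline = np.asarray(baseline, dtype=float)
    x = np.asarray(x, dtype=float)
    progress = np.zeros(x.shape, dtype=int)  # chunks completed per feature
    path = [baseline.copy()]
    while np.any(progress < chunks):
        magnitude = np.abs(grad_fn(path[-1]))
        magnitude[progress >= chunks] = np.inf  # never pick finished features
        progress[np.argmin(magnitude)] += 1
        path.append(baseline + (progress / chunks) * (x - baseline))
    return np.array(path)

# Toy differentiable "model" with interacting features (illustrative only).
w = np.array([3.0, -1.0, 0.5, 2.0])
f = lambda v: float(np.tanh(w @ v))
grad_f = lambda v: w * (1.0 - np.tanh(w @ v) ** 2)
x = np.array([1.0, 2.0, -0.5, 0.3])
baseline = np.zeros_like(x)

ig = path_attributions(grad_f, straight_line_path(baseline, x))
apm = path_attributions(grad_f, adaptive_path(grad_f, baseline, x))
print(ig, apm)
print(ig.sum(), apm.sum(), f(x) - f(baseline))  # sums approximately agree
```

Because both are path methods, each set of attributions sums, up to discretization error, to `f(x) - f(baseline)`; what the choice of path changes is how that total is split across features.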

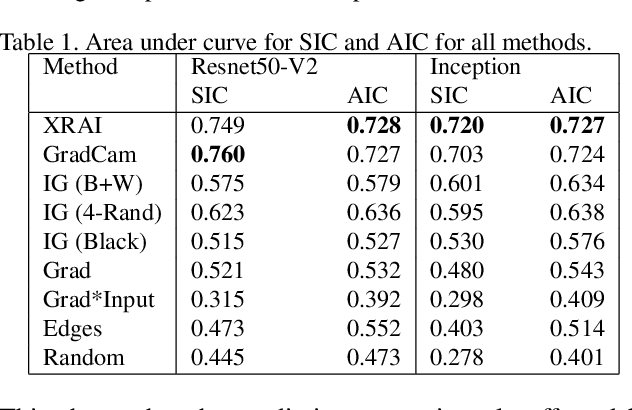

Segment Integrated Gradients: Better attributions through regions

Jun 06, 2019

Abstract: Saliency methods can aid understanding of deep neural networks. Recent years have witnessed many improvements to saliency methods, as well as new ways of evaluating them. In this paper, we 1) present a novel region-based attribution method, Segment-Integrated Gradients (SIG), that builds upon integrated gradients (Sundararajan et al. 2017), 2) introduce Performance Information Curves (PICs), an evaluation method for empirically assessing the quality of image-based saliency maps, and 3) contribute an axiom-based sanity check for attribution methods. Through empirical experiments and example results, we show that SIG produces better results than other saliency methods for common models on the ImageNet dataset.
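The abstract says only that SIG is region-based and builds on integrated gradients. One simple way to turn pixel-level attributions into region-level ones, assumed here for illustration and not necessarily how SIG does it, is to average the attribution inside each segment of an image over-segmentation and rank segments by that density. In the sketch below the segment map is assumed precomputed, and `rank_segments`, `top_region_mask`, and the toy arrays are hypothetical.

```python
import numpy as np

def rank_segments(attributions, segments):
    """Rank segment labels by mean per-pixel attribution (highest first)."""
    labels = segments.ravel()
    totals = np.bincount(labels, weights=attributions.ravel())
    areas = np.bincount(labels)
    # Mean attribution per segment; labels with no pixels get -inf and sort last.
    density = np.divide(totals, areas, out=np.full(totals.shape, -np.inf),
                        where=areas > 0)
    return np.argsort(-density, kind="stable"), density

def top_region_mask(segments, order, k):
    """Boolean mask covering the k highest-ranked segments."""
    return np.isin(segments, order[:k])

# Toy 4x4 example: four 2x2 segments and a made-up pixel attribution map.
segments = np.array([[0, 0, 1, 1],
                     [0, 0, 1, 1],
                     [2, 2, 3, 3],
                     [2, 2, 3, 3]])
attributions = np.array([[0.1, 0.2, 0.9, 0.8],
                         [0.0, 0.1, 0.7, 1.0],
                         [0.0, 0.0, 0.3, 0.2],
                         [-0.1, 0.0, 0.1, 0.2]])
order, density = rank_segments(attributions, segments)
print(order)  # → [1 3 0 2]
print(top_region_mask(segments, order, k=1).astype(int))
```

Growing the mask one ranked segment at a time yields a sequence of increasingly large salient regions, which is the kind of output a region-based saliency evaluation can score.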
