Alfie Roddan

Explainable Image Classification with Reduced Overconfidence for Tissue Characterisation

Jul 31, 2025

Abstract: The intraoperative deployment of Machine Learning models for tissue characterisation can assist decision making and guide safe tumour resections. For image classification models, pixel attribution (PA) methods are a popular way to provide explainability. However, overconfidence in a deep learning model's predictions translates to overconfidence in pixel attribution. In this paper, we propose the first approach which incorporates risk estimation into a pixel attribution method for improved image classification explainability. The proposed method iteratively applies a classification model with a pixel attribution method to create a volume of PA maps. This volume is used, for the first time, to generate a pixel-wise distribution of PA values. We introduce a method to generate an enhanced PA map by estimating the expectation values of the pixel-wise distributions. In addition, the coefficient of variation (CV) is used to estimate the pixel-wise risk of this enhanced PA map. Hence, the proposed method not only provides an improved PA map but also produces an estimate of risk on the output PA values. Performance evaluation on probe-based Confocal Laser Endomicroscopy (pCLE) data and ImageNet verifies that our improved explainability method outperforms the state-of-the-art.
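The pixel-wise statistics described in this abstract can be illustrated with a minimal NumPy sketch. It assumes the volume of PA maps has already been collected into an array of shape `(T, H, W)`. The abstract does not say how the repeated passes are made stochastic or which estimator is used for the expectation, so the sample mean, the function name `summarise_pa_volume`, and the `eps` stabiliser are illustrative assumptions rather than the authors' implementation.

```python
import numpy as np

def summarise_pa_volume(pa_volume, eps=1e-8):
    """Reduce a (T, H, W) stack of PA maps to a mean map and a CV map.

    Assumption: the sample mean stands in for the pixel-wise expectation,
    and CV is computed as std / |mean| with a small eps to avoid division
    by zero. Neither detail is taken from the paper.
    """
    pa_volume = np.asarray(pa_volume, dtype=np.float64)
    mean_map = pa_volume.mean(axis=0)            # enhanced PA map (H, W)
    std_map = pa_volume.std(axis=0)              # pixel-wise spread (H, W)
    cv_map = std_map / (np.abs(mean_map) + eps)  # pixel-wise risk (H, W)
    return mean_map, cv_map

# Toy volume: 20 noisy copies of one random 4x4 attribution map.
rng = np.random.default_rng(0)
base = rng.random((4, 4))
volume = base + 0.05 * rng.standard_normal((20, 4, 4))
enhanced, risk = summarise_pa_volume(volume)
```

Pixels with a high CV are those whose attribution value changes most across passes relative to its mean, which is one way to read "risk" on the enhanced map.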

SAMSA: Segment Anything Model Enhanced with Spectral Angles for Hyperspectral Interactive Medical Image Segmentation

Jul 31, 2025

Abstract: Hyperspectral imaging (HSI) provides rich spectral information for medical imaging, yet encounters significant challenges due to data limitations and hardware variations. We introduce SAMSA, a novel interactive segmentation framework that combines an RGB foundation model with spectral analysis. SAMSA efficiently utilizes user clicks to guide both RGB segmentation and spectral similarity computations. The method addresses key limitations in HSI segmentation through a unique spectral feature fusion strategy that operates independently of spectral band count and resolution. Performance evaluation on publicly available datasets has shown 81.0% 1-click and 93.4% 5-click DICE on a neurosurgical dataset and 81.1% 1-click and 89.2% 5-click DICE on an intraoperative porcine hyperspectral dataset. Experimental results demonstrate SAMSA's effectiveness in few-shot and zero-shot learning scenarios and when using minimal training examples. Our approach enables seamless integration of datasets with different spectral characteristics, providing a flexible framework for hyperspectral medical image analysis.
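To make the idea of click-guided spectral similarity concrete, the sketch below computes the standard spectral angle between the spectrum at a clicked pixel and every other pixel, then scores a thresholded mask with the Dice coefficient. It is not SAMSA's fusion strategy, which the abstract does not detail. The function names, the 0.1 rad threshold, and the toy cube are hypothetical.

```python
import numpy as np

def spectral_angle_map(cube, click_rc, eps=1e-12):
    """Angle (radians) between each pixel spectrum and the clicked one.

    cube: (H, W, B) hyperspectral image; click_rc: (row, col) of the click.
    Works for any band count B, since only a dot product and norms are used.
    """
    cube = np.asarray(cube, dtype=np.float64)
    ref = cube[click_rc[0], click_rc[1]]                   # (B,)
    dot = cube @ ref                                       # (H, W)
    norms = np.linalg.norm(cube, axis=-1) * np.linalg.norm(ref)
    cos = np.clip(dot / (norms + eps), -1.0, 1.0)
    return np.arccos(cos)

def dice(pred, target, eps=1e-12):
    """Dice coefficient between two boolean masks."""
    pred = np.asarray(pred, dtype=bool)
    target = np.asarray(target, dtype=bool)
    inter = np.logical_and(pred, target).sum()
    return (2.0 * inter + eps) / (pred.sum() + target.sum() + eps)

# Toy cube: left half has spectrum a, right half has spectrum b.
a = np.array([1.0, 2.0, 3.0, 4.0])
b = a[::-1]
cube = np.empty((4, 4, 4))
cube[:, :2] = a
cube[:, 2:] = b
cube[1] *= 2.5  # a brightness change leaves spectral angles unchanged

angles = spectral_angle_map(cube, (0, 0))
mask = angles < 0.1                      # hypothetical threshold
target = np.zeros((4, 4), dtype=bool)
target[:, :2] = True
score = dice(mask, target)
```

Because the angle depends only on the direction of a spectrum and not its magnitude, the brightened row is still matched to the clicked pixel.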

Confidence-Based Annotation Of Brain Tumours In Ultrasound

Feb 21, 2025

Abstract: Purpose: An investigation of the challenge of annotating discrete segmentations of brain tumours in ultrasound, with a focus on the issue of aleatoric uncertainty along the tumour margin, particularly for diffuse tumours. A segmentation protocol and method are proposed that incorporate this margin-related uncertainty while minimising the interobserver variance through reduced subjectivity, thereby diminishing annotator epistemic uncertainty. Approach: A sparse confidence method for annotation is proposed, based on a protocol designed using computer vision and radiology theory. Results: Annotations produced with the proposed method are compared with the interobserver variance of the corresponding professional discrete annotations. A linear relationship was measured within the tumour margin region, with a Pearson correlation of 0.8. The downstream application was explored by comparing training with confidence annotations as soft labels against training with the best discrete annotations as hard labels. In all evaluation folds, the Brier score was superior for the soft-label trained network. Conclusion: A formal framework was constructed to demonstrate the infeasibility of discrete annotation of brain tumours in B-mode ultrasound. Subsequently, a method for sparse confidence-based annotation is proposed and evaluated. Keywords: Brain tumours, ultrasound, confidence, annotation.
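The Brier score mentioned in the results is the mean squared difference between predicted probabilities and reference labels, so lower is better. The sketch below shows the computation on a toy one-dimensional profile across a tumour margin. The values, the 0.5 threshold used to derive hard labels, and the choice of reference are all illustrative assumptions; the abstract does not state which reference the reported scores were computed against.

```python
import numpy as np

def brier_score(probs, labels):
    """Mean squared error between predicted probabilities and labels.

    labels may be hard (0 or 1) or soft (confidence values in [0, 1]).
    """
    probs = np.asarray(probs, dtype=np.float64)
    labels = np.asarray(labels, dtype=np.float64)
    return float(np.mean((probs - labels) ** 2))

# Toy profile from tumour core (left) to healthy tissue (right).
soft = np.array([1.0, 0.8, 0.5, 0.2, 0.0])  # confidence annotation
hard = (soft >= 0.5).astype(float)          # hypothetical discretisation
pred = np.array([0.9, 0.7, 0.5, 0.3, 0.1])  # made-up network output

score_vs_soft = brier_score(pred, soft)
score_vs_hard = brier_score(pred, hard)
```

In this toy case the graded prediction sits closer to the soft reference than to the thresholded one, because thresholding discards the uncertainty in the margin.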

SurgRIPE challenge: Benchmark of Surgical Robot Instrument Pose Estimation

Jan 06, 2025

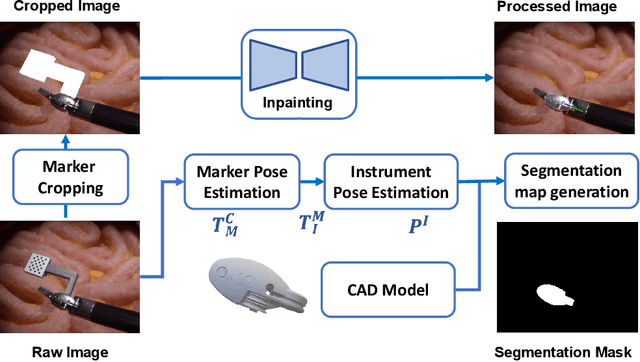

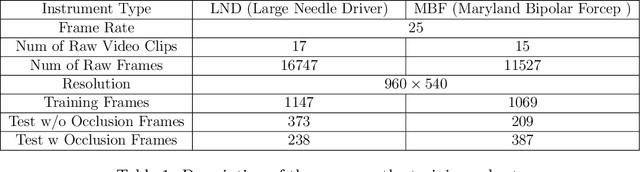

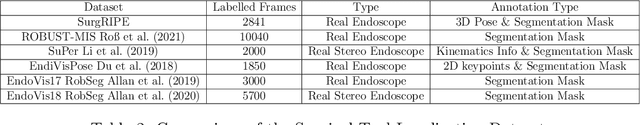

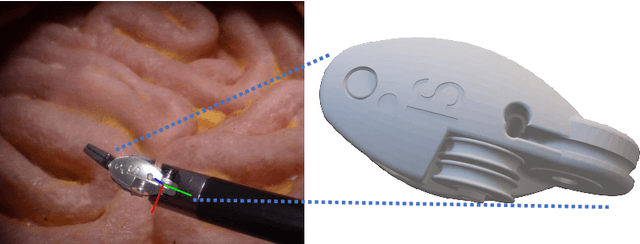

Abstract: Accurate instrument pose estimation is a crucial step towards the future of robotic surgery, enabling applications such as autonomous surgical task execution. Vision-based methods for surgical instrument pose estimation provide a practical approach to tool tracking, but they often require markers to be attached to the instruments. Recently, more research has focused on the development of markerless methods based on deep learning. However, acquiring realistic surgical data with the ground truth instrument poses required for deep learning training is challenging. To address the issues in surgical instrument pose estimation, we introduce the Surgical Robot Instrument Pose Estimation (SurgRIPE) challenge, hosted at the 26th International Conference on Medical Image Computing and Computer-Assisted Intervention (MICCAI) in 2023. The objectives of this challenge are: (1) to provide the surgical vision community with realistic surgical video data paired with ground truth instrument poses, and (2) to establish a benchmark for evaluating markerless pose estimation methods. The challenge led to the development of several novel algorithms that showcased improved accuracy and robustness over existing methods. The performance evaluation study on the SurgRIPE dataset highlights the potential of these advanced algorithms to be integrated into robotic surgery systems, paving the way for more precise and autonomous surgical procedures. The SurgRIPE challenge has successfully established a new benchmark for the field, encouraging further research and development in surgical robot instrument pose estimation.

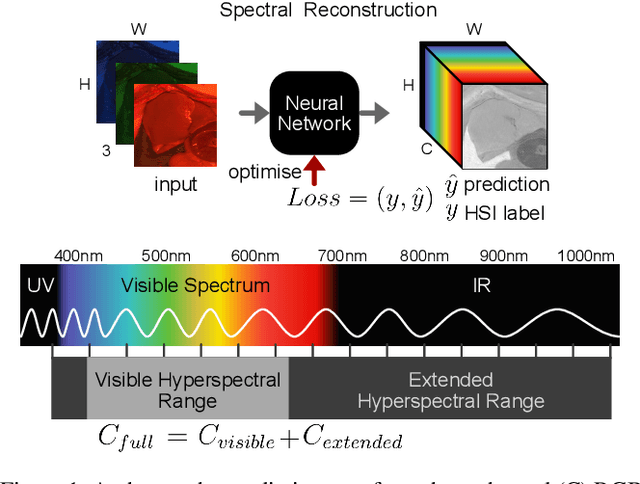

RGB to Hyperspectral: Spectral Reconstruction for Enhanced Surgical Imaging

Oct 17, 2024

Abstract: This study investigates the reconstruction of hyperspectral signatures from RGB data to enhance surgical imaging, utilizing the publicly available HeiPorSPECTRAL dataset from porcine surgery and an in-house neurosurgery dataset. Various architectures based on convolutional neural networks (CNNs) and transformer models are evaluated using comprehensive metrics. Transformer models exhibit superior performance in terms of RMSE, SAM, PSNR and SSIM by effectively integrating spatial information to predict accurate spectral profiles, encompassing both visible and extended spectral ranges. Qualitative assessments demonstrate the capability to predict spectral profiles critical for informed surgical decision-making during procedures. Challenges associated with capturing both the visible and extended hyperspectral ranges are highlighted using the MAE, emphasizing the complexities involved. The findings open up the new research direction of hyperspectral reconstruction for surgical applications and clinical use cases in real-time surgical environments.
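For readers unfamiliar with the metrics named in this abstract, the sketch below gives common NumPy definitions of RMSE, MAE, PSNR, and the mean spectral angle (SAM, the spectral angle mapper) for a reconstructed cube against a reference cube of shape `(H, W, B)`. SSIM is omitted because it is usually taken from an image-processing library. The `data_range=1.0` default and the toy data are assumptions, and the paper's exact evaluation settings may differ.

```python
import numpy as np

def rmse(pred, ref):
    """Root mean squared error over all pixels and bands."""
    return float(np.sqrt(np.mean((pred - ref) ** 2)))

def mae(pred, ref):
    """Mean absolute error over all pixels and bands."""
    return float(np.mean(np.abs(pred - ref)))

def psnr(pred, ref, data_range=1.0):
    """Peak signal-to-noise ratio in dB for data scaled to data_range."""
    mse = np.mean((pred - ref) ** 2)
    if mse == 0:
        return float("inf")
    return float(10.0 * np.log10(data_range ** 2 / mse))

def mean_sam(pred, ref, eps=1e-12):
    """Mean spectral angle (radians) between per-pixel spectra."""
    dot = np.sum(pred * ref, axis=-1)
    norms = np.linalg.norm(pred, axis=-1) * np.linalg.norm(ref, axis=-1)
    cos = np.clip(dot / (norms + eps), -1.0, 1.0)
    return float(np.mean(np.arccos(cos)))

# Toy reference cube and two synthetic "reconstructions".
rng = np.random.default_rng(0)
ref = rng.random((8, 8, 16))
shifted = ref + 0.1   # constant offset in every band
scaled = 0.5 * ref    # same spectral shape, half the intensity
```

The two toy reconstructions show why several metrics are reported together: `scaled` has a spectral angle near zero but a clearly non-zero RMSE, while `shifted` has a fixed RMSE of 0.1 and a small but non-zero spectral angle.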
