Giulio Anichini

Confidence-Based Annotation Of Brain Tumours In Ultrasound

Feb 21, 2025

Abstract:Purpose: An investigation of the challenge of annotating discrete segmentations of brain tumours in ultrasound, with a focus on the issue of aleatoric uncertainty along the tumour margin, particularly for diffuse tumours. A segmentation protocol and method is proposed that incorporates this margin-related uncertainty while minimising the interobserver variance through reduced subjectivity, thereby diminishing annotator epistemic uncertainty. Approach: A sparse confidence method for annotation is proposed, based on a protocol designed using computer vision and radiology theory. Results: Output annotations using the proposed method are compared with the corresponding professional discrete annotation variance between the observers. A linear relationship was measured within the tumour margin region, with a Pearson correlation of 0.8. The downstream application was explored, comparing training using confidence annotations as soft labels with using the best discrete annotations as hard labels. In all evaluation folds, the Brier score was superior for the soft-label trained network. Conclusion: A formal framework was constructed to demonstrate the infeasibility of discrete annotation of brain tumours in B-mode ultrasound. Subsequently, a method for sparse confidence-based annotation is proposed and evaluated. Keywords: Brain tumours, ultrasound, confidence, annotation.

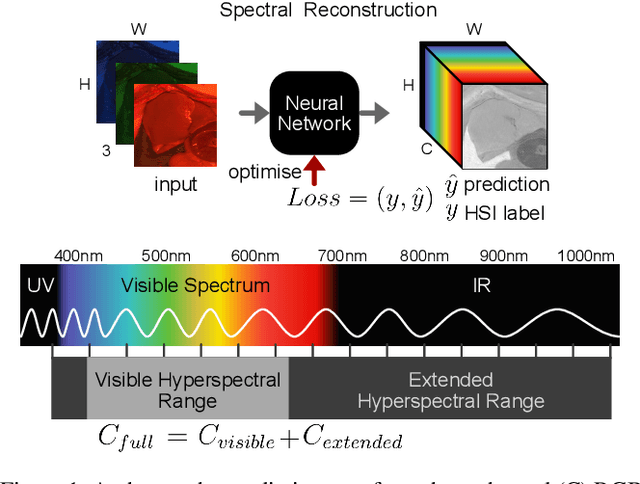

RGB to Hyperspectral: Spectral Reconstruction for Enhanced Surgical Imaging

Oct 17, 2024

Abstract:This study investigates the reconstruction of hyperspectral signatures from RGB data to enhance surgical imaging, utilizing the publicly available HeiPorSPECTRAL dataset from porcine surgery and an in-house neurosurgery dataset. Various architectures based on convolutional neural networks (CNNs) and transformer models are evaluated using comprehensive metrics. Transformer models exhibit superior performance in terms of RMSE, SAM, PSNR and SSIM by effectively integrating spatial information to predict accurate spectral profiles, encompassing both visible and extended spectral ranges. Qualitative assessments demonstrate the capability to predict spectral profiles critical for informed surgical decision-making during procedures. Challenges associated with capturing both the visible and extended hyperspectral ranges are highlighted using the MAE, emphasizing the complexities involved. The findings open up the new research direction of hyperspectral reconstruction for surgical applications and clinical use cases in real-time surgical environments.

Towards Safe and Collaborative Robotic Ultrasound Tissue Scanning in Neurosurgery

Jan 04, 2024

Abstract:Intraoperative ultrasound imaging is used to facilitate safe brain tumour resection. However, due to challenges with image interpretation and the physical scanning, this tool has yet to achieve widespread adoption in neurosurgery. In this paper, we introduce the components and workflow of a novel, versatile robotic platform for intraoperative ultrasound tissue scanning in neurosurgery. An RGB-D camera attached to the robotic arm allows for automatic object localisation with ArUco markers, and 3D surface reconstruction as a triangular mesh using the ImFusion Suite software solution. Impedance controlled guidance of the US probe along arbitrary surfaces, represented as a mesh, enables collaborative US scanning, i.e., autonomous, teleoperated and hands-on guided data acquisition. A preliminary experiment evaluates the suitability of the conceptual workflow and system components for probe landing on a custom-made soft-tissue phantom. Further assessment in future experiments will be necessary to prove the effectiveness of the presented platform.

Identifying Visible Tissue in Intraoperative Ultrasound Images during Brain Surgery: A Method and Application

Jun 01, 2023

Abstract:Intraoperative ultrasound scanning is a demanding visuotactile task. It requires operators to simultaneously localise the ultrasound perspective and manually perform slight adjustments to the pose of the probe, making sure not to apply excessive force or breaking contact with the tissue, whilst also characterising the visible tissue. In this paper, we propose a method for the identification of the visible tissue, which enables the analysis of ultrasound probe and tissue contact via the detection of acoustic shadow and construction of confidence maps of the perceptual salience. Detailed validation with both in vivo and phantom data is performed. First, we show that our technique is capable of achieving state of the art acoustic shadow scan line classification - with an average binary classification accuracy on unseen data of 0.87. Second, we show that our framework for constructing confidence maps is able to produce an ideal response to a probe's pose that is being oriented in and out of optimality - achieving an average RMSE across five scans of 0.174. The performance evaluation justifies the potential clinical value of the method which can be used both to assist clinical training and optimise robot-assisted ultrasound tissue scanning.

Automated robotic intraoperative ultrasound for brain surgery

Apr 03, 2023

Abstract:During brain tumour resection, localising cancerous tissue and delineating healthy and pathological borders is challenging, even for experienced neurosurgeons and neuroradiologists. Intraoperative imaging is commonly employed for determining and updating surgical plans in the operating room. Ultrasound (US) has presented itself a suitable tool for this task, owing to its ease of integration into the operating room and surgical procedure. However, widespread establishment of this tool has been limited because of the difficulty of anatomy localisation and data interpretation. In this work, we present a robotic framework designed and tested on a soft-tissue-mimicking brain phantom, simulating intraoperative US (iUS) scanning during brain tumour surgery.

Collaborative Robotic Ultrasound Tissue Scanning for Surgical Resection Guidance in Neurosurgery

Jan 19, 2023

Abstract:The aim of this paper is to introduce a robotic platform for autonomous iUS tissue scanning to optimise intraoperative diagnosis and improve surgical resection during robot-assisted operations. To guide anatomy specific robotic scanning and generate a representation of the robot task space, fast and accurate techniques for the recovery of 3D morphological structures of the surgical cavity are developed. The prototypic DLR MIRO surgical robotic arm is used to control the applied force and the in-plane motion of the US transducer. A key application of the proposed platform is the scanning of brain tissue to guide tumour resection.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge