Adam Carpenter

eLog analysis for accelerators: status and future outlook

Jun 15, 2025

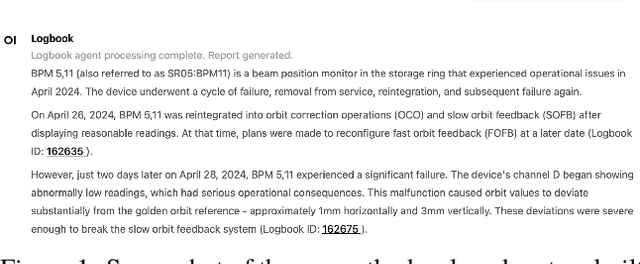

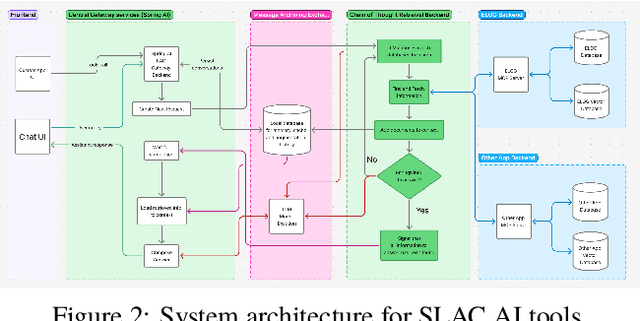

Abstract:This work demonstrates electronic logbook (eLog) systems leveraging modern AI-driven information retrieval capabilities at the accelerator facilities of Fermilab, Jefferson Lab, Lawrence Berkeley National Laboratory (LBNL), SLAC National Accelerator Laboratory. We evaluate contemporary tools and methodologies for information retrieval with Retrieval Augmented Generation (RAGs), focusing on operational insights and integration with existing accelerator control systems. The study addresses challenges and proposes solutions for state-of-the-art eLog analysis through practical implementations, demonstrating applications and limitations. We present a framework for enhancing accelerator facility operations through improved information accessibility and knowledge management, which could potentially lead to more efficient operations.

Data-Driven Gradient Optimization for Field Emission Management in a Superconducting Radio-Frequency Linac

Nov 11, 2024

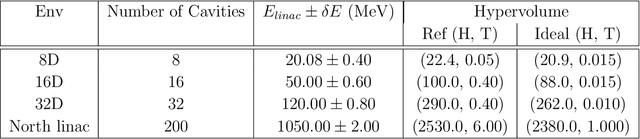

Abstract:Field emission can cause significant problems in superconducting radio-frequency linear accelerators (linacs). When cavity gradients are pushed higher, radiation levels within the linacs may rise exponentially, causing degradation of many nearby systems. This research aims to utilize machine learning with uncertainty quantification to predict radiation levels at multiple locations throughout the linacs and ultimately optimize cavity gradients to reduce field emission induced radiation while maintaining the total linac energy gain necessary for the experimental physics program. The optimized solutions show over 40% reductions for both neutron and gamma radiation from the standard operational settings.

Harnessing the Power of Gradient-Based Simulations for Multi-Objective Optimization in Particle Accelerators

Nov 07, 2024

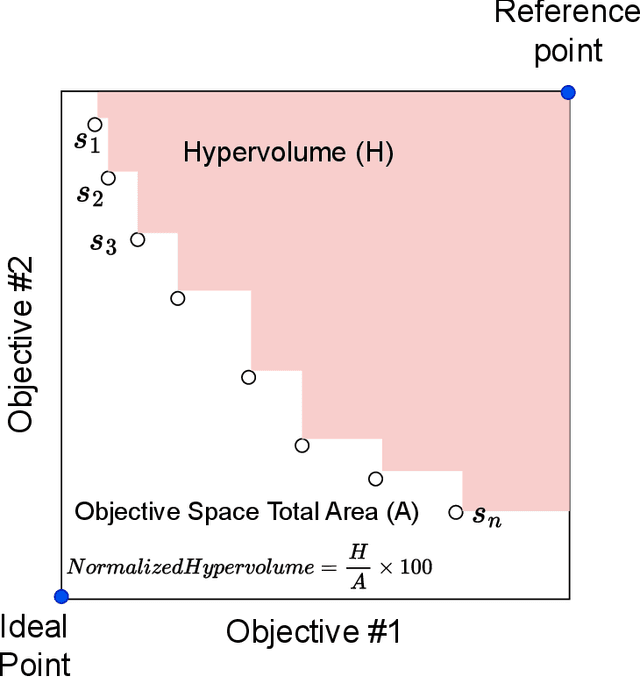

Abstract:Particle accelerator operation requires simultaneous optimization of multiple objectives. Multi-Objective Optimization (MOO) is particularly challenging due to trade-offs between the objectives. Evolutionary algorithms, such as genetic algorithm (GA), have been leveraged for many optimization problems, however, they do not apply to complex control problems by design. This paper demonstrates the power of differentiability for solving MOO problems using a Deep Differentiable Reinforcement Learning (DDRL) algorithm in particle accelerators. We compare DDRL algorithm with Model Free Reinforcement Learning (MFRL), GA and Bayesian Optimization (BO) for simultaneous optimization of heat load and trip rates in the Continuous Electron Beam Accelerator Facility (CEBAF). The underlying problem enforces strict constraints on both individual states and actions as well as cumulative (global) constraint for energy requirements of the beam. A physics-based surrogate model based on real data is developed. This surrogate model is differentiable and allows back-propagation of gradients. The results are evaluated in the form of a Pareto-front for two objectives. We show that the DDRL outperforms MFRL, BO, and GA on high dimensional problems.

Accelerating Cavity Fault Prediction Using Deep Learning at Jefferson Laboratory

Apr 24, 2024

Abstract:Accelerating cavities are an integral part of the Continuous Electron Beam Accelerator Facility (CEBAF) at Jefferson Laboratory. When any of the over 400 cavities in CEBAF experiences a fault, it disrupts beam delivery to experimental user halls. In this study, we propose the use of a deep learning model to predict slowly developing cavity faults. By utilizing pre-fault signals, we train a LSTM-CNN binary classifier to distinguish between radio-frequency (RF) signals during normal operation and RF signals indicative of impending faults. We optimize the model by adjusting the fault confidence threshold and implementing a multiple consecutive window criterion to identify fault events, ensuring a low false positive rate. Results obtained from analysis of a real dataset collected from the accelerating cavities simulating a deployed scenario demonstrate the model's ability to identify normal signals with 99.99% accuracy and correctly predict 80% of slowly developing faults. Notably, these achievements were achieved in the context of a highly imbalanced dataset, and fault predictions were made several hundred milliseconds before the onset of the fault. Anticipating faults enables preemptive measures to improve operational efficiency by preventing or mitigating their occurrence.

Anomaly Detection of Particle Orbit in Accelerator using LSTM Deep Learning Technology

Jan 28, 2024Abstract:A stable, reliable, and controllable orbit lock system is crucial to an electron (or ion) accelerator because the beam orbit and beam energy instability strongly affect the quality of the beam delivered to experimental halls. Currently, when the orbit lock system fails operators must manually intervene. This paper develops a Machine Learning based fault detection methodology to identify orbit lock anomalies and notify accelerator operations staff of the off-normal behavior. Our method is unsupervised, so it does not require labeled data. It uses Long-Short Memory Networks (LSTM) Auto Encoder to capture normal patterns and predict future values of monitoring sensors in the orbit lock system. Anomalies are detected when the prediction error exceeds a threshold. We conducted experiments using monitoring data from Jefferson Lab's Continuous Electron Beam Accelerator Facility (CEBAF). The results are promising: the percentage of real anomalies identified by our solution is 68.6%-89.3% using monitoring data of a single component in the orbit lock control system. The accuracy can be as high as 82%.

Superconducting radio-frequency cavity fault classification using machine learning at Jefferson Laboratory

Jun 11, 2020

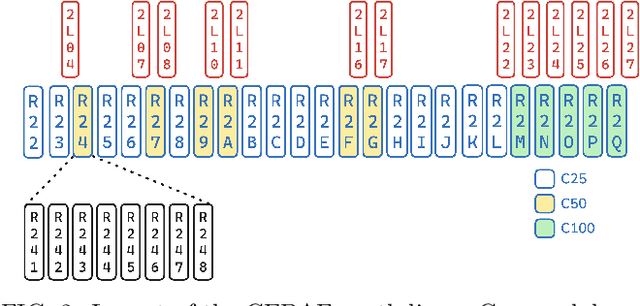

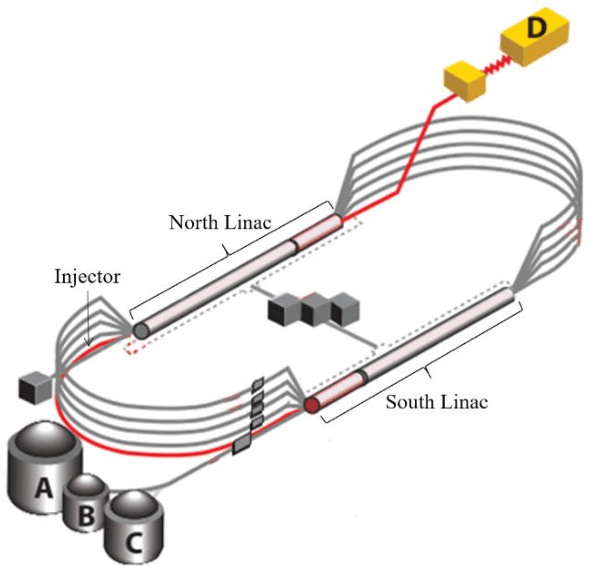

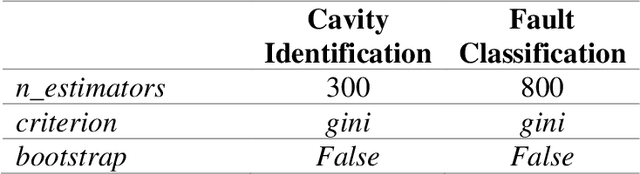

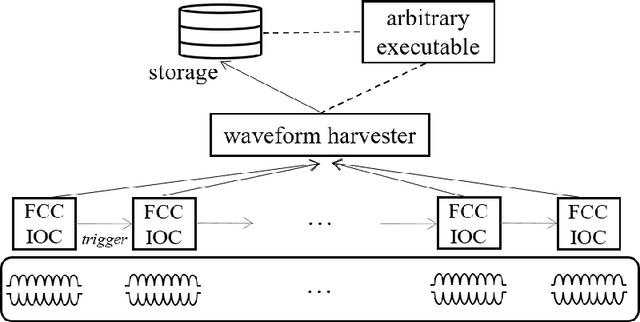

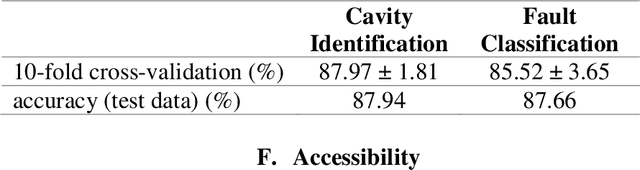

Abstract:We report on the development of machine learning models for classifying C100 superconducting radio-frequency (SRF) cavity faults in the Continuous Electron Beam Accelerator Facility (CEBAF) at Jefferson Lab. CEBAF is a continuous-wave recirculating linac utilizing 418 SRF cavities to accelerate electrons up to 12 GeV through 5-passes. Of these, 96 cavities (12 cryomodules) are designed with a digital low-level RF system configured such that a cavity fault triggers waveform recordings of 17 RF signals for each of the 8 cavities in the cryomodule. Subject matter experts (SME) are able to analyze the collected time-series data and identify which of the eight cavities faulted first and classify the type of fault. This information is used to find trends and strategically deploy mitigations to problematic cryomodules. However manually labeling the data is laborious and time-consuming. By leveraging machine learning, near real-time (rather than post-mortem) identification of the offending cavity and classification of the fault type has been implemented. We discuss performance of the ML models during a recent physics run. Results show the cavity identification and fault classification models have accuracies of 84.9% and 78.2%, respectively.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge