Ryan Roussel

A Start To End Machine Learning Approach To Maximize Scientific Throughput From The LCLS-II-HE

May 29, 2025

Abstract: With the increasing brightness of light sources, including the diffraction-limited brightness upgrade of APS and the high-repetition-rate upgrade of LCLS, the proposed experiments therein are becoming increasingly complex. For instance, experiments at LCLS-II-HE will require the X-ray beam to be within a fraction of a micron in diameter, with pointing stability of a few nanoradians, at the end of a kilometer-long electron accelerator, a hundred-meter-long undulator section, and tens of meters of X-ray optics. This enhancement of brightness will increase the data production rate to rival the largest data generators in the world. Without real-time active feedback control and an optimized pipeline to transform measurements into scientific information and insights, researchers will drown in a deluge of mostly useless data and fail to extract the highly sophisticated insights that the recent brightness upgrades promise. In this article, we outline the strategy we are developing at SLAC to implement machine-learning-driven optimization, automation, and real-time knowledge extraction, from the electron injector at the start of the electron accelerator, through the multidimensional X-ray optical systems, to the experimental endstations and the high-readout-rate, multi-megapixel detectors at LCLS, in order to deliver the design performance to users. This is illustrated via examples from accelerator, optics, and end-user applications.

Harnessing the Power of Gradient-Based Simulations for Multi-Objective Optimization in Particle Accelerators

Nov 07, 2024

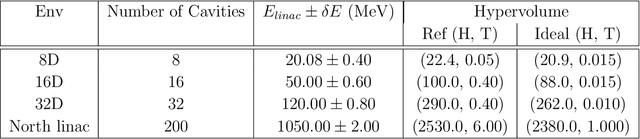

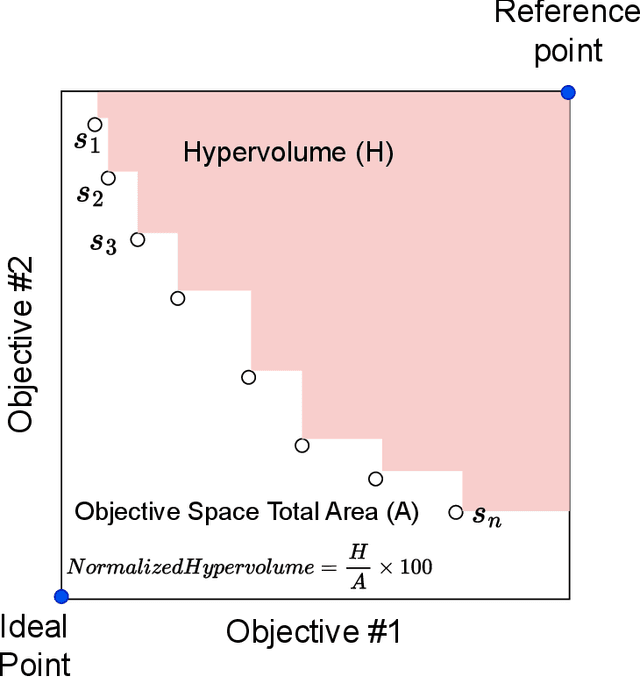

Abstract: Particle accelerator operation requires simultaneous optimization of multiple objectives. Multi-Objective Optimization (MOO) is particularly challenging due to trade-offs between the objectives. Evolutionary algorithms, such as the genetic algorithm (GA), have been leveraged for many optimization problems; however, they do not apply to complex control problems by design. This paper demonstrates the power of differentiability for solving MOO problems using a Deep Differentiable Reinforcement Learning (DDRL) algorithm in particle accelerators. We compare the DDRL algorithm with Model-Free Reinforcement Learning (MFRL), GA, and Bayesian Optimization (BO) for simultaneous optimization of heat load and trip rates in the Continuous Electron Beam Accelerator Facility (CEBAF). The underlying problem enforces strict constraints on both individual states and actions, as well as a cumulative (global) constraint on the energy requirements of the beam. A physics-based surrogate model based on real data is developed. This surrogate model is differentiable and allows back-propagation of gradients. The results are evaluated in the form of a Pareto front for the two objectives. We show that DDRL outperforms MFRL, BO, and GA on high-dimensional problems.
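
As a rough illustration of how a two-objective Pareto front can be extracted from candidate solutions, the sketch below filters out dominated points, assuming both objectives are minimized. It is not the paper's implementation: the function name `pareto_front` and the (heat load, trip rate) values are hypothetical and chosen only for illustration.

```python
import numpy as np

def pareto_front(points):
    """Return indices of non-dominated rows, assuming every objective is minimized."""
    pts = np.asarray(points, dtype=float)
    keep = []
    for i, p in enumerate(pts):
        # p is dominated if another point is <= p in all objectives
        # and strictly < p in at least one objective.
        dominated = np.any(np.all(pts <= p, axis=1) & np.any(pts < p, axis=1))
        if not dominated:
            keep.append(i)
    return keep

# Hypothetical (heat load, trip rate) pairs; the numbers are illustrative only.
candidates = [(1.0, 5.0), (2.0, 3.0), (3.0, 4.0), (4.0, 1.0), (2.5, 3.5)]
front = pareto_front(candidates)
print(front)  # indices of the candidates on the Pareto front
```

Each surviving point represents a trade-off in which one objective cannot be improved without worsening the other, which is the sense in which the competing optimizers can be compared on a common footing.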
