Zhuoran Wang

Action Draft and Verify: A Self-Verifying Framework for Vision-Language-Action Model

Mar 18, 2026

Abstract: Vision-Language-Action (VLA) models have recently demonstrated strong performance across embodied tasks. Modern VLAs commonly employ diffusion action experts to efficiently generate high-precision continuous action chunks, while auto-regressive generation can be slower and less accurate at low-level control. Yet auto-regressive paradigms still provide complementary priors that can improve robustness and generalization in out-of-distribution environments. To leverage both paradigms, we propose Action-Draft-and-Verify (ADV): a diffusion action expert drafts multiple candidate action chunks, and the VLM selects one by scoring all candidates in a single forward pass with a perplexity-style metric. Under matched backbones, training data, and action-chunk length, ADV improves success rate by 4.3 points in simulation and 19.7 points in the real world over a diffusion-based baseline, with the overhead of a single VLM reranking pass.
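The draft-then-select step described in this abstract can be sketched roughly as follows. This is a minimal illustration rather than the paper's implementation: it assumes each drafted action chunk has already been scored by the VLM, so that `candidate_logprobs` (a hypothetical name) holds one list of per-token natural-log probabilities per candidate, and it uses ordinary perplexity as a stand-in for the "perplexity-style metric", whose exact form the abstract does not give.

```python
import math
from typing import List, Sequence, Tuple


def perplexity(token_logprobs: Sequence[float]) -> float:
    """Perplexity = exp(-mean log-probability) over one candidate's tokens."""
    if not token_logprobs:
        raise ValueError("candidate has no tokens")
    return math.exp(-sum(token_logprobs) / len(token_logprobs))


def select_candidate(
    candidate_logprobs: List[Sequence[float]],
) -> Tuple[int, List[float]]:
    """Return the index of the lowest-perplexity candidate and all scores.

    Assumption: lower perplexity means the verifier finds the candidate
    more plausible. The real metric in the paper may differ.
    """
    if not candidate_logprobs:
        raise ValueError("no candidates to score")
    scores = [perplexity(lp) for lp in candidate_logprobs]
    best = min(range(len(scores)), key=scores.__getitem__)
    return best, scores
```

In a real system the per-token log-probabilities for all candidates would come from one batched forward pass of the VLM; here they are plain lists so the selection logic can be read in isolation.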

KV-CoRE: Benchmarking Data-Dependent Low-Rank Compressibility of KV-Caches in LLMs

Feb 05, 2026

Abstract: Large language models rely on KV-caches to avoid redundant computation during autoregressive decoding, but as context length grows, reading and writing the cache can quickly saturate GPU memory bandwidth. Recent work has explored KV-cache compression, yet most approaches neglect the data-dependent nature of KV-caches and their variation across layers. We introduce KV-CoRE (KV-cache Compressibility by Rank Evaluation), an SVD-based method for quantifying the data-dependent low-rank compressibility of KV-caches. KV-CoRE computes the optimal low-rank approximation under the Frobenius norm and, being gradient-free and incremental, enables efficient dataset-level, layer-wise evaluation. Using this method, we analyze multiple models and datasets spanning five English domains and sixteen languages, uncovering systematic patterns that link compressibility to model architecture, training data, and language coverage. As part of this analysis, we employ the Normalized Effective Rank as a metric of compressibility and show that it correlates strongly with performance degradation under compression. Our study establishes a principled evaluation framework and the first large-scale benchmark of KV-cache compressibility in LLMs, offering insights for dynamic, data-aware compression and data-centric model development.
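To make the two quantities in this abstract concrete, here is a small sketch that works directly on a list of singular values (assumed to come from an SVD of a key or value matrix computed elsewhere). Two assumptions are worth flagging: the effective rank is taken to be the exponential of the Shannon entropy of the normalized singular values, divided by the number of singular values to normalize it, which may not match the paper's exact definition; and the truncation error uses the standard result that keeping the top-k singular values gives the best rank-k approximation under the Frobenius norm. The function names are illustrative only.

```python
import math
from typing import Sequence


def normalized_effective_rank(singular_values: Sequence[float]) -> float:
    """exp(entropy of normalized singular values) / number of singular values.

    Values near 1 indicate a flat spectrum (hard to compress);
    values near 1/n indicate one dominant direction (easy to compress).
    """
    positive = [v for v in singular_values if v > 0.0]
    if not positive:
        raise ValueError("need at least one positive singular value")
    total = sum(positive)
    entropy = -sum((v / total) * math.log(v / total) for v in positive)
    return math.exp(entropy) / len(singular_values)


def relative_truncation_error(singular_values: Sequence[float], k: int) -> float:
    """Relative Frobenius error of the best rank-k approximation.

    Equals sqrt(sum of squared discarded singular values / sum of all squares).
    """
    squared = sorted((v * v for v in singular_values), reverse=True)
    if not 0 <= k <= len(squared):
        raise ValueError("k must be between 0 and the number of singular values")
    total = sum(squared)
    if total == 0.0:
        raise ValueError("all singular values are zero")
    return math.sqrt(sum(squared[k:]) / total)
```

For example, a spectrum of `[1, 1, 1, 1]` has a normalized effective rank of about 1.0, while `[1, 0, 0, 0]` gives 0.25, the smallest possible value for four singular values.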

Few-Shot-Based Modular Image-to-Video Adapter for Diffusion Models

Dec 23, 2025

Abstract: Diffusion models (DMs) have recently achieved impressive photorealism in image and video generation. However, their application to image animation remains limited, even when trained on large-scale datasets. Two primary challenges contribute to this. First, the high dimensionality of video signals leads to a scarcity of training data, causing DMs to favor memorization over prompt compliance when generating motion. Second, DMs struggle to generalize to novel motion patterns not present in the training set, and fine-tuning them to learn such patterns, especially with limited training data, is still under-explored. To address these limitations, we propose the Modular Image-to-Video Adapter (MIVA), a lightweight sub-network attachable to a pre-trained DM. Each MIVA is designed to capture a single motion pattern, and MIVAs are scalable via parallelization. MIVAs can be efficiently trained on approximately ten samples using a single consumer-grade GPU. At inference time, users can specify motion by selecting one or multiple MIVAs, eliminating the need for prompt engineering. Extensive experiments demonstrate that MIVA enables more precise motion control while maintaining, or even surpassing, the generation quality of models trained on significantly larger datasets.

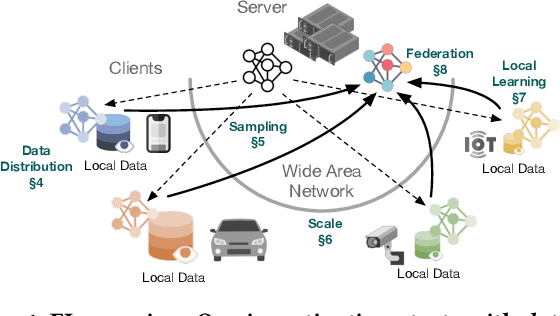

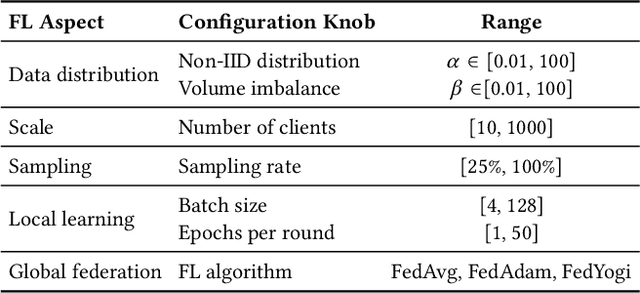

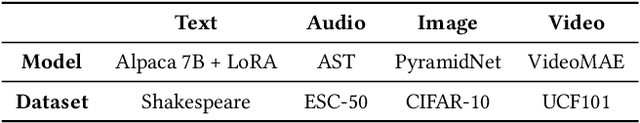

An Empirical Study of the Impact of Federated Learning on Machine Learning Model Accuracy

Mar 27, 2025

Abstract: Federated Learning (FL) enables distributed ML model training on private user data at global scale. Despite the potential of FL demonstrated in many domains, an in-depth view of its impact on model accuracy is still lacking. In this paper, we systematically investigate how this learning paradigm can affect the accuracy of state-of-the-art ML models for a variety of ML tasks. We present an empirical study that covers various data types (text, image, audio, and video) and FL configuration knobs (data distribution, FL scale, client sampling, and local and global computations). Our experiments are conducted in a unified FL framework to achieve high fidelity, with substantial human effort and resource investment. Based on the results, we perform a quantitative analysis of the impact of FL, highlight challenging scenarios where applying FL drastically degrades model accuracy, and identify cases where the impact is negligible. The detailed and extensive findings can benefit practical deployments and future development of FL.

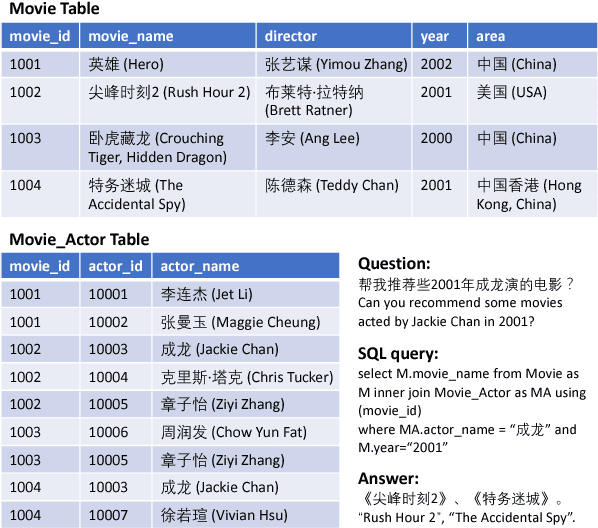

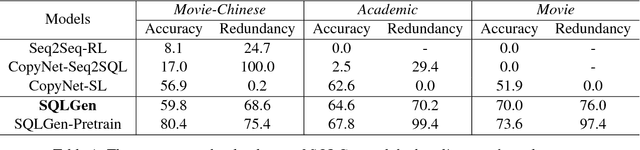

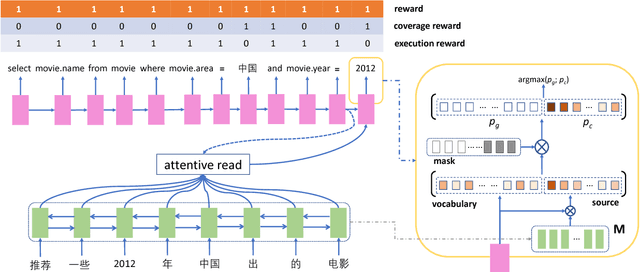

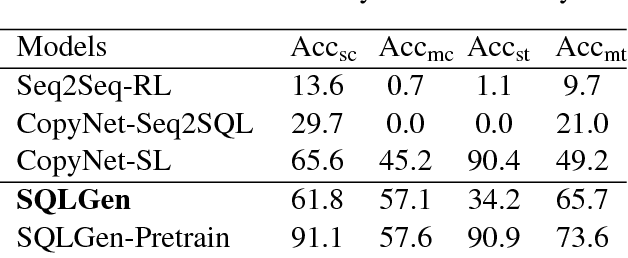

Learning to Generate Structured Queries from Natural Language with Indirect Supervision

Sep 10, 2018

Abstract: Generating structured query language (SQL) from natural language is an emerging research topic. This paper presents a new learning paradigm based on indirect supervision from the answers to natural language questions, instead of from SQL queries. This paradigm facilitates the acquisition of training data, because question-answer pairs for various domains are abundant on the Internet, and removes the need for difficult SQL annotation. An end-to-end neural model integrated with reinforcement learning is proposed to learn an SQL generation policy within the answer-driven learning paradigm. The model is evaluated on datasets from different domains, including movies and academic publications. Experimental results show that our model outperforms the baseline models.
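One way to picture answer-driven supervision is as a reward computed by executing a generated query and comparing its result with the known answer. The sketch below is an assumption-laden illustration, not the paper's model: the binary reward, the `answer_reward` and `make_demo_db` helpers, and the toy `movies` table are all hypothetical, and the standard-library `sqlite3` module stands in for whatever database the authors used.

```python
import sqlite3
from typing import Any, Iterable, Tuple


def answer_reward(
    conn: sqlite3.Connection,
    candidate_sql: str,
    gold_answer: Iterable[Tuple[Any, ...]],
) -> float:
    """Return 1.0 if the query's result set equals the gold answer, else 0.0.

    Queries that fail to execute earn zero reward (an assumption; a real
    system might penalize them differently).
    """
    try:
        rows = conn.execute(candidate_sql).fetchall()
    except sqlite3.Error:
        return 0.0
    return 1.0 if set(rows) == set(map(tuple, gold_answer)) else 0.0


def make_demo_db() -> sqlite3.Connection:
    """Build a tiny in-memory table used only for illustration."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE movies (title TEXT, year INTEGER)")
    conn.executemany(
        "INSERT INTO movies VALUES (?, ?)",
        [("Alien", 1979), ("Heat", 1995)],
    )
    return conn
```

With the demo table, `answer_reward(make_demo_db(), "SELECT title FROM movies WHERE year < 1990", [("Alien",)])` returns `1.0`, while a query that returns both titles, or one that fails to parse, returns `0.0`. A reinforcement learning loop could use such a signal to update the query generator without ever seeing annotated SQL.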

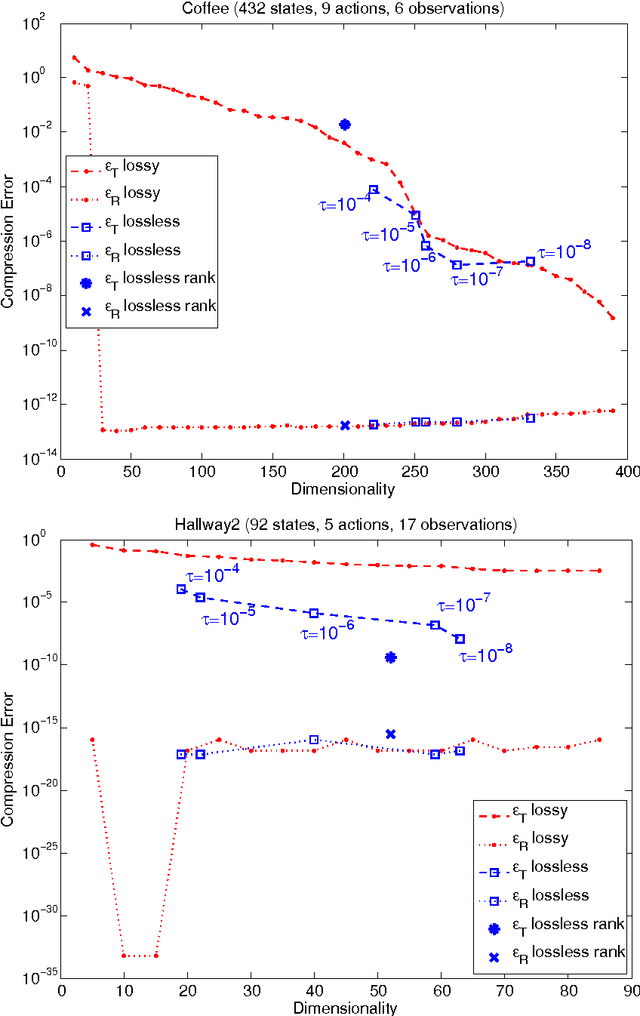

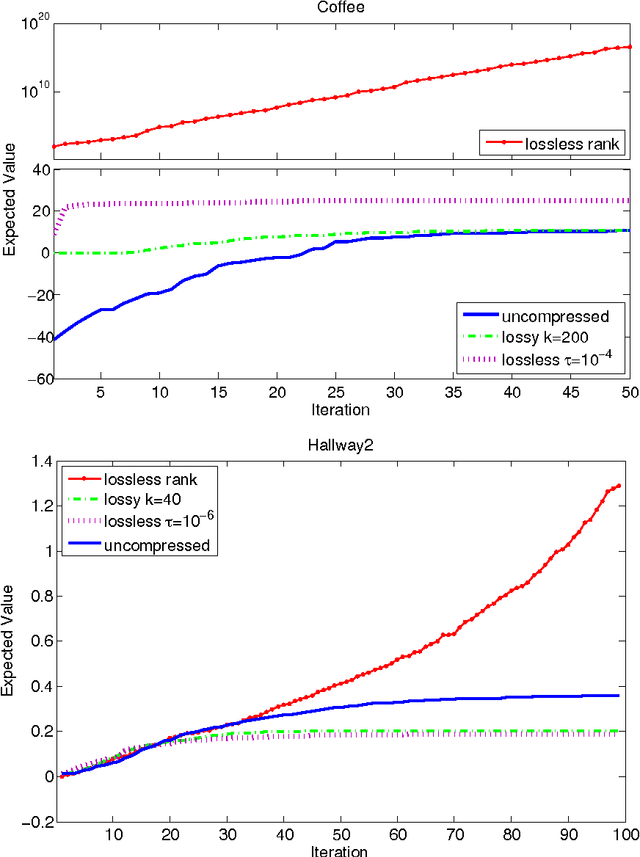

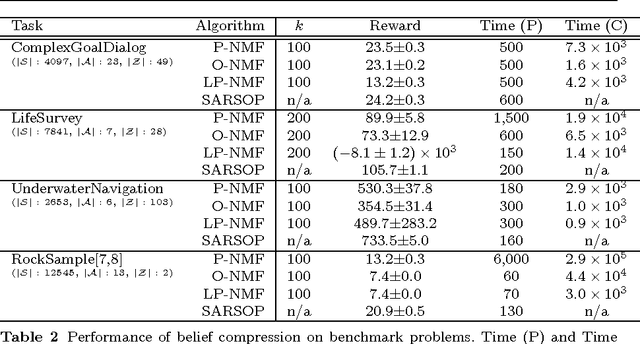

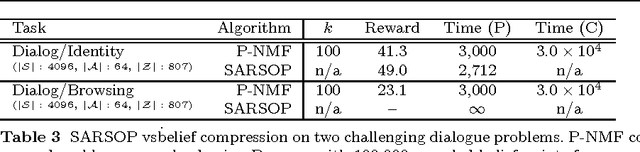

On the Linear Belief Compression of POMDPs: A re-examination of current methods

Aug 05, 2015

Abstract: Belief compression improves the tractability of large-scale partially observable Markov decision processes (POMDPs) by finding projections from high-dimensional belief space onto low-dimensional approximations, where solving for action selection policies requires fewer computations. This paper develops a unified theoretical framework to analyse three existing linear belief compression approaches: value-directed compression and two non-negative matrix factorisation (NMF) based algorithms. The results indicate that all three known belief compression methods have their own critical deficiencies. Therefore, projective NMF (P-NMF) belief compression is proposed, aiming to overcome the drawbacks of the existing techniques. The performance of the proposed algorithm is examined on four POMDP problems of reasonably large scale, in comparison with existing techniques. Additionally, belief compression is compared empirically with a state-of-the-art heuristic search based POMDP solver, and their relative merits in solving large-scale POMDPs are investigated.
