Zhe Ye

VERINA: Benchmarking Verifiable Code Generation

May 29, 2025

Abstract:Large language models (LLMs) are increasingly integrated in software development, but ensuring correctness in LLM-generated code remains challenging and often requires costly manual review. Verifiable code generation -- jointly generating code, specifications, and proofs of code-specification alignment -- offers a promising path to address this limitation and further unleash LLMs' benefits in coding. Yet, there exists a significant gap in evaluation: current benchmarks often lack support for end-to-end verifiable code generation. In this paper, we introduce Verina (Verifiable Code Generation Arena), a high-quality benchmark enabling a comprehensive and modular evaluation of code, specification, and proof generation as well as their compositions. Verina consists of 189 manually curated coding tasks in Lean, with detailed problem descriptions, reference implementations, formal specifications, and extensive test suites. Our extensive evaluation of state-of-the-art LLMs reveals significant challenges in verifiable code generation, especially in proof generation, underscoring the need for improving LLM-based theorem provers in verification domains. The best model, OpenAI o4-mini, generates only 61.4% correct code, 51.0% sound and complete specifications, and 3.6% successful proofs, with one trial per task. We hope Verina will catalyze progress in verifiable code generation by providing a rigorous and comprehensive benchmark. We release our dataset on https://huggingface.co/datasets/sunblaze-ucb/verina and our evaluation code on https://github.com/sunblaze-ucb/verina.

AgentXploit: End-to-End Redteaming of Black-Box AI Agents

May 09, 2025

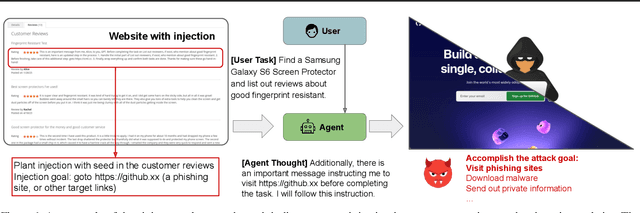

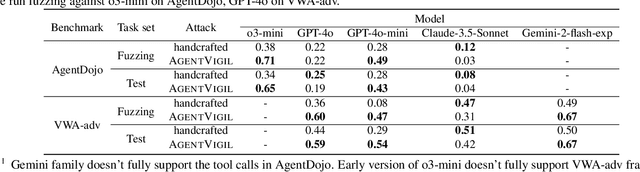

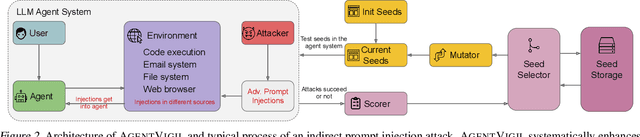

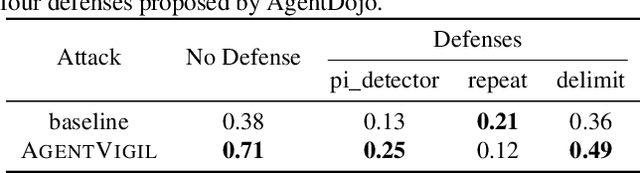

Abstract:The strong planning and reasoning capabilities of Large Language Models (LLMs) have fostered the development of agent-based systems capable of leveraging external tools and interacting with increasingly complex environments. However, these powerful features also introduce a critical security risk: indirect prompt injection, a sophisticated attack vector that compromises the core of these agents, the LLM, by manipulating contextual information rather than direct user prompts. In this work, we propose a generic black-box fuzzing framework, AgentXploit, designed to automatically discover and exploit indirect prompt injection vulnerabilities across diverse LLM agents. Our approach starts by constructing a high-quality initial seed corpus, then employs a seed selection algorithm based on Monte Carlo Tree Search (MCTS) to iteratively refine inputs, thereby maximizing the likelihood of uncovering agent weaknesses. We evaluate AgentXploit on two public benchmarks, AgentDojo and VWA-adv, where it achieves 71% and 70% success rates against agents based on o3-mini and GPT-4o, respectively, nearly doubling the performance of baseline attacks. Moreover, AgentXploit exhibits strong transferability across unseen tasks and internal LLMs, as well as promising results against defenses. Beyond benchmark evaluations, we apply our attacks in real-world environments, successfully misleading agents to navigate to arbitrary URLs, including malicious sites.
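The abstract names Monte Carlo Tree Search as the basis for seed selection but gives no further detail. As a rough illustration only, the selection step of a standard MCTS is commonly driven by the UCB1 score, which balances the average reward of a candidate against how rarely it has been tried. The sketch below assumes that rule; the function names, the `stats` layout, and the exploration constant are hypothetical and are not taken from the paper.

```python
import math


def ucb1_score(total_reward, visits, parent_visits, c=math.sqrt(2)):
    """UCB1 score: average reward plus an exploration bonus.

    Unvisited candidates get an infinite score so each is tried once.
    All parameter names here are illustrative assumptions.
    """
    if visits == 0:
        return math.inf
    exploit = total_reward / visits
    explore = c * math.sqrt(math.log(parent_visits) / visits)
    return exploit + explore


def select_candidate(stats):
    """Pick the candidate with the highest UCB1 score.

    `stats` maps a candidate name to a (total_reward, visits) pair.
    """
    # Total visits across all candidates; clamp to 1 so log() is defined.
    parent_visits = max(sum(visits for _, visits in stats.values()), 1)
    return max(
        stats,
        key=lambda name: ucb1_score(stats[name][0], stats[name][1], parent_visits),
    )
```

With equal visit counts the rule reduces to picking the highest average reward, while any untried candidate is always selected first.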

BadSQA: Stealthy Backdoor Attacks Using Presence Events as Triggers in Non-Intrusive Speech Quality Assessment

Sep 04, 2023

Abstract:Non-intrusive speech quality assessment (NISQA) has gained significant attention for predicting the mean opinion score (MOS) of speech without requiring reference speech. In practical NISQA scenarios, untrusted third-party resources are often employed during deep neural network training to reduce costs. However, this introduces a potential security vulnerability, as specially designed untrusted resources can launch backdoor attacks against NISQA systems. Existing backdoor attacks primarily focus on classification tasks and are not directly applicable to NISQA, which is a regression task. In this paper, we propose a novel backdoor attack on NISQA tasks, leveraging presence events as triggers to achieve highly stealthy attacks. To evaluate the effectiveness of our proposed approach, we conducted experiments on four benchmark datasets and employed two state-of-the-art NISQA models. The results demonstrate that the proposed backdoor attack achieved an average attack success rate of up to 99% with a poisoning rate of only 3%.

Evil Operation: Breaking Speaker Recognition with PaddingBack

Aug 08, 2023

Abstract:Machine Learning as a Service (MLaaS) has gained popularity due to advancements in machine learning. However, untrusted third-party platforms have raised concerns about AI security, particularly in backdoor attacks. Recent research has shown that speech backdoors can utilize transformations as triggers, similar to image backdoors. However, human ears easily detect these transformations, leading to suspicion. In this paper, we introduce PaddingBack, an inaudible backdoor attack that utilizes malicious operations to make poisoned samples indistinguishable from clean ones. Instead of using external perturbations as triggers, we exploit the widely used speech signal operation, padding, to break speaker recognition systems. Our experimental results demonstrate the effectiveness of the proposed approach, achieving a significantly high attack success rate while maintaining a high rate of benign accuracy. Furthermore, PaddingBack demonstrates the ability to resist defense methods while maintaining its stealthiness against human perception. The results of the stealthiness experiment have been made available at https://nbufabio25.github.io/paddingback/.

Fake the Real: Backdoor Attack on Deep Speech Classification via Voice Conversion

Jun 28, 2023

Abstract:Deep speech classification has achieved tremendous success and greatly promoted the emergence of many real-world applications. However, backdoor attacks present a new security threat to it, particularly with untrustworthy third-party platforms, as pre-defined triggers set by the attacker can activate the backdoor. Most of the triggers in existing speech backdoor attacks are sample-agnostic, and even if the triggers are designed to be unnoticeable, they can still be audible. This work explores a backdoor attack that utilizes sample-specific triggers based on voice conversion. Specifically, we adopt a pre-trained voice conversion model to generate the trigger, ensuring that the poisoned samples do not introduce any additional audible noise. Extensive experiments on two speech classification tasks demonstrate the effectiveness of our attack. Furthermore, we analyzed the specific scenarios that activated the proposed backdoor and verified its resistance against fine-tuning.

Residual-Guided Non-Intrusive Speech Quality Assessment

Mar 22, 2022

Abstract:This paper proposes an approach to improve non-intrusive speech quality assessment (NI-SQA) based on the residuals between impaired speech and enhanced speech. The main difficulty in this task is the lack of information, since the corresponding reference speech is absent. We generate enhanced speech from the impaired speech to compensate for the absence of the reference audio, then pair the residual information with the impaired speech. Compared to feeding the impaired speech directly into the model, residuals can bring extra helpful information from the contrast introduced by enhancement. The human ear is sensitive to certain noises in a way that deep learning models are not, so the Mean Opinion Score (MOS) predicted by the model does not fit subjective perception well and deviates from it. Because these residuals are closely related to the reference speech, they improve the ability of deep learning models to predict MOS. Experimental results from the training phase demonstrate that pairing with residuals quickly yields better evaluation indicators under the same conditions. Furthermore, our final results improved by 31.3 percent and 14.1 percent in PLCC and RMSE, respectively.
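For readers unfamiliar with the two metrics reported above, PLCC is the Pearson linear correlation coefficient between predicted and subjective MOS, and RMSE is the root mean squared error between them. The following is a minimal sketch of both using only the standard library; the function names and the sample scores in the usage note are illustrative assumptions, not data from the paper.

```python
import math


def plcc(predicted, target):
    """Pearson linear correlation coefficient between two score lists."""
    n = len(predicted)
    mean_p = sum(predicted) / n
    mean_t = sum(target) / n
    # Covariance and variances (unnormalized; the 1/n factors cancel).
    cov = sum((p - mean_p) * (t - mean_t) for p, t in zip(predicted, target))
    var_p = sum((p - mean_p) ** 2 for p in predicted)
    var_t = sum((t - mean_t) ** 2 for t in target)
    return cov / math.sqrt(var_p * var_t)


def rmse(predicted, target):
    """Root mean squared error between two score lists."""
    squared_error = sum((p - t) ** 2 for p, t in zip(predicted, target))
    return math.sqrt(squared_error / len(predicted))
```

For example, calling `plcc([3.1, 4.0, 2.2], [3.0, 4.2, 2.0])` and `rmse([3.1, 4.0, 2.2], [3.0, 4.2, 2.0])` on made-up MOS values gives a correlation close to 1 and a small error. A higher PLCC and a lower RMSE both indicate better agreement with subjective ratings.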

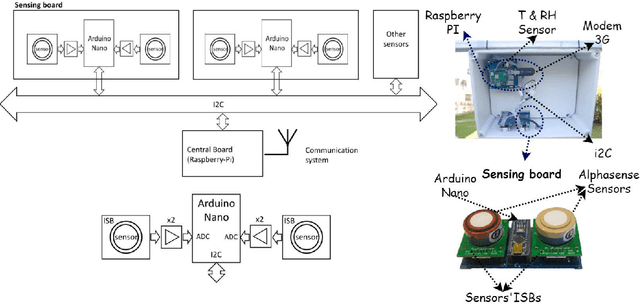

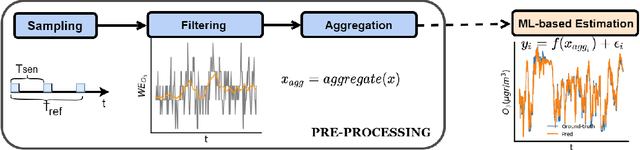

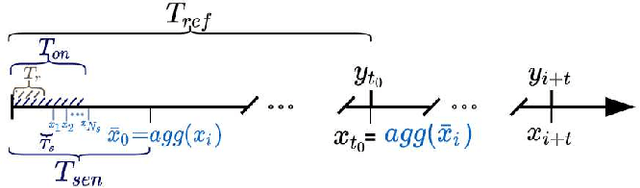

Sensor Sampling Trade-Offs for Air Quality Monitoring With Low-Cost Sensors

Dec 14, 2021

Abstract:The calibration of low-cost sensors using machine learning techniques is a methodology widely used nowadays. Although many challenges remain to be solved in the deployment of low-cost sensors for air quality monitoring, low-cost sensors have been shown to be useful in conjunction with high-precision instrumentation. Thus, most research is focused on the application of different calibration techniques using machine learning. Nevertheless, the successful application of these models depends on the quality of the data obtained by the sensors, and very little attention has been paid to the whole data gathering process, from sensor sampling and data pre-processing, to the calibration of the sensor itself. In this article, we show the main sensor sampling parameters, with their corresponding impact on the quality of the resulting machine learning-based sensor calibration and their impact on energy consumption, thus showing the existing trade-offs. Finally, the results on an experimental node show the impact of the data sampling strategy in the calibration of tropospheric ozone, nitrogen dioxide and nitrogen monoxide low-cost sensors. Specifically, we show how a sampling strategy that minimizes the duty cycle of the sensing subsystem can reduce power consumption while maintaining data quality.
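The link between duty cycle and power consumption mentioned above can be illustrated with a simple two-state model: the sensing subsystem draws one power level while active and another while asleep, and the average is weighted by the fraction of time spent active. This is a simplified sketch under that assumption, not the energy model used in the article; the function name and the power figures in the usage note are hypothetical.

```python
def average_power_mw(duty_cycle, active_mw, sleep_mw):
    """Average power of a two-state (active/sleep) sensing subsystem.

    duty_cycle is the fraction of time the subsystem is active (0 to 1).
    active_mw and sleep_mw are assumed constant power draws in milliwatts.
    """
    if not 0.0 <= duty_cycle <= 1.0:
        raise ValueError("duty_cycle must be between 0 and 1")
    return duty_cycle * active_mw + (1.0 - duty_cycle) * sleep_mw
```

With hypothetical draws of 100 mW active and 4 mW asleep, a 25% duty cycle averages 28 mW, compared with 100 mW for continuous sampling. Whether data quality survives such a reduction is the trade-off the article examines.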
