Yuxiao Luo

CREM: Compression-Driven Representation Enhancement for Multimodal Retrieval and Comprehension

Feb 22, 2026

Abstract:Multimodal Large Language Models (MLLMs) have shown remarkable success in comprehension tasks such as visual description and visual question answering. However, their direct application to embedding-based tasks like retrieval remains challenging due to the discrepancy between output formats and optimization objectives. Previous approaches often employ contrastive fine-tuning to adapt MLLMs for retrieval, but at the cost of losing their generative capabilities. We argue that both generative and embedding tasks fundamentally rely on shared cognitive mechanisms, specifically cross-modal representation alignment and contextual comprehension. To this end, we propose CREM (Compression-driven Representation Enhanced Model), with a unified framework that enhances multimodal representations for retrieval while preserving generative ability. Specifically, we introduce a compression-based prompt design with learnable chorus tokens to aggregate multimodal semantics and a compression-driven training strategy that integrates contrastive and generative objectives through compression-aware attention. Extensive experiments demonstrate that CREM achieves state-of-the-art retrieval performance on MMEB while maintaining strong generative performance on multiple comprehension benchmarks. Our findings highlight that generative supervision can further improve the representational quality of MLLMs under the proposed compression-driven paradigm.

M-STAR: Multi-Scale Spatiotemporal Autoregression for Human Mobility Modeling

Dec 08, 2025

Abstract:Modeling human mobility is vital for extensive applications such as transportation planning and epidemic modeling. With the rise of the Artificial Intelligence Generated Content (AIGC) paradigm, recent works explore synthetic trajectory generation using autoregressive and diffusion models. While these methods show promise for generating single-day trajectories, they remain limited by inefficiencies in long-term generation (e.g., weekly trajectories) and a lack of explicit spatiotemporal multi-scale modeling. This study proposes Multi-Scale Spatio-Temporal AutoRegression (M-STAR), a new framework that generates long-term trajectories through a coarse-to-fine spatiotemporal prediction process. M-STAR combines a Multi-scale Spatiotemporal Tokenizer that encodes hierarchical mobility patterns with a Transformer-based decoder for next-scale autoregressive prediction. Experiments on two real-world datasets show that M-STAR outperforms existing methods in fidelity and significantly improves generation speed. The data and code are available at https://github.com/YuxiaoLuo0013/M-STAR.

Compression then Matching: An Efficient Pre-training Paradigm for Multimodal Embedding

Nov 11, 2025

Abstract:Vision-language models advance multimodal representation learning by acquiring transferable semantic embeddings, thereby substantially enhancing performance across a range of vision-language tasks, including cross-modal retrieval, clustering, and classification. An effective embedding is expected to comprehensively preserve the semantic content of the input while simultaneously emphasizing features that are discriminative for downstream tasks. Recent approaches demonstrate that VLMs can be adapted into competitive embedding models via large-scale contrastive learning, enabling the simultaneous optimization of two complementary objectives. We argue that the two aforementioned objectives can be decoupled: a comprehensive understanding of the input facilitates the embedding model in achieving superior performance in downstream tasks via contrastive learning. In this paper, we propose CoMa, a compressed pre-training phase, which serves as a warm-up stage for contrastive learning. Experiments demonstrate that with only a small amount of pre-training data, we can transform a VLM into a competitive embedding model. CoMa achieves new state-of-the-art results among VLMs of comparable size on the MMEB, realizing optimization in both efficiency and effectiveness.

Can You Really Trust Code Copilots? Evaluating Large Language Models from a Code Security Perspective

May 15, 2025

Abstract:Code security and usability are both essential for various coding assistant applications driven by large language models (LLMs). Current code security benchmarks focus solely on a single evaluation task and paradigm, such as code completion and generation, and lack comprehensive assessment across dimensions like secure code generation, vulnerability repair and discrimination. In this paper, we first propose CoV-Eval, a multi-task benchmark covering various tasks such as code completion, vulnerability repair, vulnerability detection and classification, for comprehensive evaluation of LLM code security. Besides, we developed VC-Judge, an improved judgment model that aligns closely with human experts and can review LLM-generated programs for vulnerabilities in a more efficient and reliable way. We conduct a comprehensive evaluation of 20 proprietary and open-source LLMs. Overall, while most LLMs identify vulnerable code well, they still tend to generate insecure code and struggle with recognizing specific vulnerability types and performing repairs. Extensive experiments and qualitative analyses reveal key challenges and optimization directions, offering insights for future research in LLM code security.

SaRO: Enhancing LLM Safety through Reasoning-based Alignment

Apr 13, 2025

Abstract:Current safety alignment techniques for large language models (LLMs) face two key challenges: (1) under-generalization, which leaves models vulnerable to novel jailbreak attacks, and (2) over-alignment, which leads to the excessive refusal of benign instructions. Our preliminary investigation reveals semantic overlap between jailbreak/harmful queries and normal prompts in embedding space, suggesting that more effective safety alignment requires a deeper semantic understanding. This motivates us to incorporate safety-policy-driven reasoning into the alignment process. To this end, we propose the Safety-oriented Reasoning Optimization Framework (SaRO), which consists of two stages: (1) Reasoning-style Warmup (RW) that enables LLMs to internalize long-chain reasoning through supervised fine-tuning, and (2) Safety-oriented Reasoning Process Optimization (SRPO) that promotes safety reflection via direct preference optimization (DPO). Extensive experiments demonstrate the superiority of SaRO over traditional alignment methods.
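The SaRO abstract names direct preference optimization (DPO) as the objective of its second stage. As a rough illustration only, the sketch below computes the standard per-pair DPO loss from sequence log-probabilities. It is not taken from the SaRO paper: the function name `dpo_loss`, its argument names, and the default `beta=0.1` are assumptions made for this example, and how SaRO builds its preference pairs is not shown.

```python
import math


def dpo_loss(policy_chosen, policy_rejected, ref_chosen, ref_rejected, beta=0.1):
    """Standard DPO loss for one preference pair (illustrative sketch).

    Each argument is the summed log-probability of a full response:
    `policy_*` under the model being trained, `ref_*` under the frozen
    reference model. `beta` scales the implicit reward (assumed value).
    """
    # Implicit reward margin: how much more the policy prefers the chosen
    # response over the rejected one, relative to the reference model.
    margin = beta * ((policy_chosen - ref_chosen) - (policy_rejected - ref_rejected))
    # loss = -log(sigmoid(margin)), written in a numerically stable form.
    if margin > 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))
```

When the policy and the reference agree exactly, the margin is zero and the loss equals log 2; the loss falls below that value as the policy shifts probability toward the chosen response.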

H-MBA: Hierarchical MamBa Adaptation for Multi-Modal Video Understanding in Autonomous Driving

Jan 08, 2025

Abstract:With the prevalence of Multimodal Large Language Models (MLLMs), autonomous driving has encountered new opportunities and challenges. In particular, multi-modal video understanding is critical to interactively analyze what will happen in the procedure of autonomous driving. However, videos of such dynamic scenes often contain complex spatial-temporal movements, which restricts the generalization capacity of existing MLLMs in this field. To bridge the gap, we propose a novel Hierarchical Mamba Adaptation (H-MBA) framework to fit the complicated motion changes in autonomous driving videos. Specifically, our H-MBA consists of two distinct modules: Context Mamba (C-Mamba) and Query Mamba (Q-Mamba). First, C-Mamba contains various types of structured state space models, which can effectively capture multi-granularity video context for different temporal resolutions. Second, Q-Mamba flexibly transforms the current frame into a learnable query, and attentively selects multi-granularity video context into the query. Consequently, it can adaptively integrate all the video contexts of multi-scale temporal resolutions to enhance video understanding. Via a plug-and-play paradigm in MLLMs, our H-MBA shows remarkable performance on multi-modal video tasks in autonomous driving, e.g., for risk object detection, it outperforms the previous SOTA method with a 5.5% mIoU improvement.

Deciphering Human Mobility: Inferring Semantics of Trajectories with Large Language Models

May 30, 2024

Abstract:Understanding human mobility patterns is essential for various applications, from urban planning to public safety. Individual trajectory data, such as mobile phone location data, while rich in spatio-temporal information, often lacks semantic detail, limiting its utility for in-depth mobility analysis. Existing methods can infer basic routine activity sequences from this data, but lack depth in understanding complex human behaviors and users' characteristics. Additionally, they struggle with the dependency on hard-to-obtain auxiliary datasets like travel surveys. To address these limitations, this paper defines trajectory semantic inference through three key dimensions: user occupation category, activity sequence, and trajectory description, and proposes the Trajectory Semantic Inference with Large Language Models (TSI-LLM) framework to leverage LLMs to infer trajectory semantics comprehensively and deeply. We adopt spatio-temporal attributes enhanced data formatting (STFormat) and design a context-inclusive prompt, enabling LLMs to more effectively interpret and infer the semantics of trajectory data. Experimental validation on real-world trajectory datasets demonstrates the efficacy of TSI-LLM in deciphering complex human mobility patterns. This study explores the potential of LLMs in enhancing the semantic analysis of trajectory data, paving the way for more sophisticated and accessible human mobility research.

Are All Unseen Data Out-of-Distribution?

Jan 02, 2024

Abstract:Distributions of unseen data have all been treated as out-of-distribution (OOD), making their generalization a significant challenge. Much evidence suggests that increasing the size of the training data monotonically decreases generalization errors on test data. However, other observations and analyses contradict this. In particular, when the training data have multiple source domains and the test data contain distribution drifts, not all generalization errors on the test data decrease monotonically with the increasing size of training data. Such a non-decreasing phenomenon is formally investigated under a linear setting with empirical verification across varying visual benchmarks. Motivated by these results, we redefine OOD data as data outside the convex hull of the training domains and prove a new generalization bound based on this new definition. It implies that the effectiveness of a well-trained model can be guaranteed for unseen data that is within the convex hull of the training domains. However, for some data beyond the convex hull, a non-decreasing error trend can happen. Therefore, we investigate the performance of popular strategies such as data augmentation and pre-training to overcome this issue. Moreover, we propose a novel reinforcement learning selection algorithm in the source domains only that can deliver superior performance over the baseline methods.

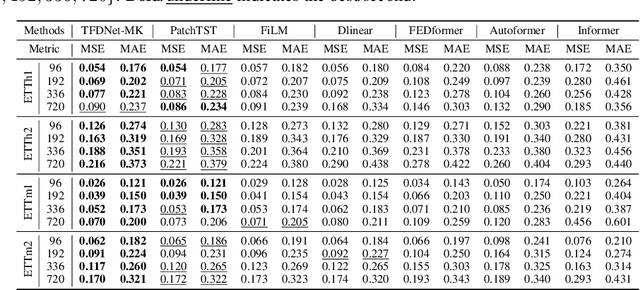

TFDNet: Time-Frequency Enhanced Decomposed Network for Long-term Time Series Forecasting

Aug 25, 2023

Abstract:Long-term time series forecasting is a vital task and has a wide range of real applications. Recent methods focus on capturing the underlying patterns from one single domain (e.g. the time domain or the frequency domain), and have not taken a holistic view to process long-term time series from the time-frequency domains. In this paper, we propose a Time-Frequency Enhanced Decomposed Network (TFDNet) to capture both the long-term underlying patterns and temporal periodicity from the time-frequency domain. In TFDNet, we devise a multi-scale time-frequency enhanced encoder backbone and develop two separate trend and seasonal time-frequency blocks to capture the distinct patterns within the decomposed trend and seasonal components at multiple resolutions. Diverse kernel learning strategies for the kernel operations in time-frequency blocks have been explored, by investigating and incorporating the potentially different channel-wise correlation patterns of multivariate time series. Experimental evaluation on eight datasets from five benchmark domains demonstrated that TFDNet is superior to state-of-the-art approaches in both effectiveness and efficiency.
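The TFDNet abstract refers to decomposed trend and seasonal components but does not say how the split is computed. As a minimal sketch, assuming a centered moving-average decomposition of the kind commonly used in decomposition-based forecasters (an assumption, not a detail confirmed by the abstract), the trend is a smoothed copy of the series and the seasonal part is the residual. The function names and the default `window=5` are hypothetical, and the time-frequency blocks themselves are not shown.

```python
def moving_average(series, window):
    """Centered moving average; the ends are padded by repeating edge values."""
    if window < 1 or window % 2 == 0:
        raise ValueError("window must be a positive odd integer")
    half = window // 2
    padded = [series[0]] * half + list(series) + [series[-1]] * half
    return [sum(padded[i:i + window]) / window for i in range(len(series))]


def decompose(series, window=5):
    """Split a series into (trend, seasonal) so that trend + seasonal == series."""
    trend = moving_average(series, window)          # slow-moving component
    seasonal = [x - t for x, t in zip(series, trend)]  # residual after smoothing
    return trend, seasonal
```

For example, `decompose([1.0, 2.0, 3.0, 4.0, 5.0], window=3)` returns a trend whose interior values match the input (a straight line is its own moving average) and a seasonal component that is nonzero only at the padded edges.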
