Yili Wang

HyperD: Hybrid Periodicity Decoupling Framework for Traffic Forecasting

Nov 16, 2025

Abstract:Accurate traffic forecasting plays a vital role in intelligent transportation systems, enabling applications such as congestion control, route planning, and urban mobility optimization. However, traffic forecasting remains challenging due to two key factors: (1) complex spatial dependencies arising from dynamic interactions between road segments and traffic sensors across the network, and (2) the coexistence of multi-scale periodic patterns (e.g., daily and weekly periodic patterns driven by human routines) with irregular fluctuations caused by unpredictable events (e.g., accidents, weather, or construction). To tackle these challenges, we propose HyperD (Hybrid Periodic Decoupling), a novel framework that decouples traffic data into periodic and residual components. The periodic component is handled by the Hybrid Periodic Representation Module, which extracts fine-grained daily and weekly patterns using learnable periodic embeddings and spatial-temporal attention. The residual component, which captures non-periodic, high-frequency fluctuations, is modeled by the Frequency-Aware Residual Representation Module, leveraging complex-valued MLP in frequency domain. To enforce semantic separation between the two components, we further introduce a Dual-View Alignment Loss, which aligns low-frequency information with the periodic branch and high-frequency information with the residual branch. Extensive experiments on four real-world traffic datasets demonstrate that HyperD achieves state-of-the-art prediction accuracy, while offering superior robustness under disturbances and improved computational efficiency compared to existing methods.

Dual Mamba for Node-Specific Representation Learning: Tackling Over-Smoothing with Selective State Space Modeling

Nov 11, 2025Abstract:Over-smoothing remains a fundamental challenge in deep Graph Neural Networks (GNNs), where repeated message passing causes node representations to become indistinguishable. While existing solutions, such as residual connections and skip layers, alleviate this issue to some extent, they fail to explicitly model how node representations evolve in a node-specific and progressive manner across layers. Moreover, these methods do not take global information into account, which is also crucial for mitigating the over-smoothing problem. To address the aforementioned issues, in this work, we propose a Dual Mamba-enhanced Graph Convolutional Network (DMbaGCN), which is a novel framework that integrates Mamba into GNNs to address over-smoothing from both local and global perspectives. DMbaGCN consists of two modules: the Local State-Evolution Mamba (LSEMba) for local neighborhood aggregation and utilizing Mamba's selective state space modeling to capture node-specific representation dynamics across layers, and the Global Context-Aware Mamba (GCAMba) that leverages Mamba's global attention capabilities to incorporate global context for each node. By combining these components, DMbaGCN enhances node discriminability in deep GNNs, thereby mitigating over-smoothing. Extensive experiments on multiple benchmarks demonstrate the effectiveness and efficiency of our method.

BlindGuard: Safeguarding LLM-based Multi-Agent Systems under Unknown Attacks

Aug 11, 2025Abstract:The security of LLM-based multi-agent systems (MAS) is critically threatened by propagation vulnerability, where malicious agents can distort collective decision-making through inter-agent message interactions. While existing supervised defense methods demonstrate promising performance, they may be impractical in real-world scenarios due to their heavy reliance on labeled malicious agents to train a supervised malicious detection model. To enable practical and generalizable MAS defenses, in this paper, we propose BlindGuard, an unsupervised defense method that learns without requiring any attack-specific labels or prior knowledge of malicious behaviors. To this end, we establish a hierarchical agent encoder to capture individual, neighborhood, and global interaction patterns of each agent, providing a comprehensive understanding for malicious agent detection. Meanwhile, we design a corruption-guided detector that consists of directional noise injection and contrastive learning, allowing effective detection model training solely on normal agent behaviors. Extensive experiments show that BlindGuard effectively detects diverse attack types (i.e., prompt injection, memory poisoning, and tool attack) across MAS with various communication patterns while maintaining superior generalizability compared to supervised baselines. The code is available at: https://github.com/MR9812/BlindGuard.

Can LLMs Find Fraudsters? Multi-level LLM Enhanced Graph Fraud Detection

Jul 16, 2025

Abstract:Graph fraud detection has garnered significant attention as Graph Neural Networks (GNNs) have proven effective in modeling complex relationships within multimodal data. However, existing graph fraud detection methods typically use preprocessed node embeddings and predefined graph structures to reveal fraudsters, which ignore the rich semantic cues contained in raw textual information. Although Large Language Models (LLMs) exhibit powerful capabilities in processing textual information, it remains a significant challenge to perform multimodal fusion of processed textual embeddings with graph structures. In this paper, we propose a \textbf{M}ulti-level \textbf{L}LM \textbf{E}nhanced Graph Fraud \textbf{D}etection framework called MLED. In MLED, we utilize LLMs to extract external knowledge from textual information to enhance graph fraud detection methods. To integrate LLMs with graph structure information and enhance the ability to distinguish fraudsters, we design a multi-level LLM enhanced framework including type-level enhancer and relation-level enhancer. One is to enhance the difference between the fraudsters and the benign entities, the other is to enhance the importance of the fraudsters in different relations. The experiments on four real-world datasets show that MLED achieves state-of-the-art performance in graph fraud detection as a generalized framework that can be applied to existing methods.

Understanding the Information Propagation Effects of Communication Topologies in LLM-based Multi-Agent Systems

May 29, 2025Abstract:The communication topology in large language model-based multi-agent systems fundamentally governs inter-agent collaboration patterns, critically shaping both the efficiency and effectiveness of collective decision-making. While recent studies for communication topology automated design tend to construct sparse structures for efficiency, they often overlook why and when sparse and dense topologies help or hinder collaboration. In this paper, we present a causal framework to analyze how agent outputs, whether correct or erroneous, propagate under topologies with varying sparsity. Our empirical studies reveal that moderately sparse topologies, which effectively suppress error propagation while preserving beneficial information diffusion, typically achieve optimal task performance. Guided by this insight, we propose a novel topology design approach, EIB-leanrner, that balances error suppression and beneficial information propagation by fusing connectivity patterns from both dense and sparse graphs. Extensive experiments show the superior effectiveness, communication cost, and robustness of EIB-leanrner.

A Comprehensive Survey of Synthetic Tabular Data Generation

Apr 23, 2025Abstract:Tabular data remains one of the most prevalent and critical data formats across diverse real-world applications. However, its effective use in machine learning (ML) is often constrained by challenges such as data scarcity, privacy concerns, and class imbalance. Synthetic data generation has emerged as a promising solution, leveraging generative models to learn the distribution of real datasets and produce high-fidelity, privacy-preserving samples. Various generative paradigms have been explored, including energy-based models (EBMs), variational autoencoders (VAEs), generative adversarial networks (GANs), large language models (LLMs), and diffusion models. While several surveys have investigated synthetic tabular data generation, most focus on narrow subdomains or specific generative methods, such as GANs, diffusion models, or privacy-preserving techniques. This limited scope often results in fragmented insights, lacking a comprehensive synthesis that bridges diverse approaches. In particular, recent advances driven by LLMs and diffusion-based models remain underexplored. This gap hinders a holistic understanding of the field`s evolution, methodological interplay, and open challenges. To address this, our survey provides a unified and systematic review of synthetic tabular data generation. Our contributions are threefold: (1) we propose a comprehensive taxonomy that organizes existing methods into traditional approaches, diffusion-based methods, and LLM-based models, and provide an in-depth comparative analysis; (2) we detail the complete pipeline for synthetic tabular data generation, including data synthesis, post-processing, and evaluation; (3) we identify major challenges, explore real-world applications, and outline open research questions and future directions to guide future work in this rapidly evolving area.

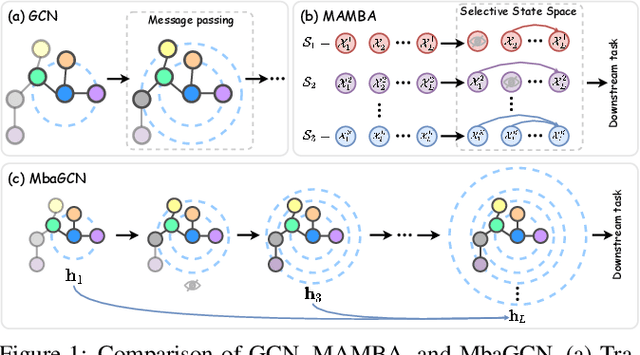

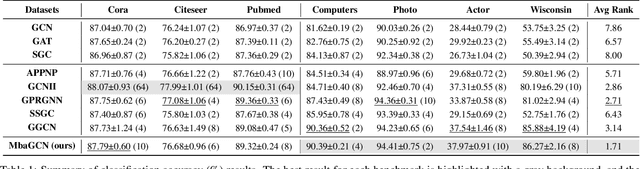

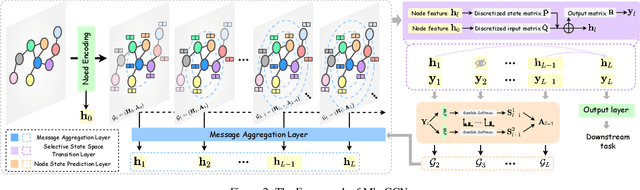

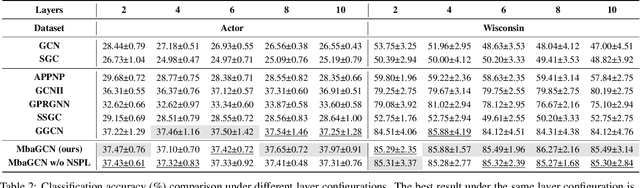

Mamba-Based Graph Convolutional Networks: Tackling Over-smoothing with Selective State Space

Jan 26, 2025

Abstract:Graph Neural Networks (GNNs) have shown great success in various graph-based learning tasks. However, it often faces the issue of over-smoothing as the model depth increases, which causes all node representations to converge to a single value and become indistinguishable. This issue stems from the inherent limitations of GNNs, which struggle to distinguish the importance of information from different neighborhoods. In this paper, we introduce MbaGCN, a novel graph convolutional architecture that draws inspiration from the Mamba paradigm-originally designed for sequence modeling. MbaGCN presents a new backbone for GNNs, consisting of three key components: the Message Aggregation Layer, the Selective State Space Transition Layer, and the Node State Prediction Layer. These components work in tandem to adaptively aggregate neighborhood information, providing greater flexibility and scalability for deep GNN models. While MbaGCN may not consistently outperform all existing methods on each dataset, it provides a foundational framework that demonstrates the effective integration of the Mamba paradigm into graph representation learning. Through extensive experiments on benchmark datasets, we demonstrate that MbaGCN paves the way for future advancements in graph neural network research.

Graph Defense Diffusion Model

Jan 20, 2025Abstract:Graph Neural Networks (GNNs) demonstrate significant potential in various applications but remain highly vulnerable to adversarial attacks, which can greatly degrade their performance. Existing graph purification methods attempt to address this issue by filtering attacked graphs; however, they struggle to effectively defend against multiple types of adversarial attacks simultaneously due to their limited flexibility, and they lack comprehensive modeling of graph data due to their heavy reliance on heuristic prior knowledge. To overcome these challenges, we propose a more versatile approach for defending against adversarial attacks on graphs. In this work, we introduce the Graph Defense Diffusion Model (GDDM), a flexible purification method that leverages the denoising and modeling capabilities of diffusion models. The iterative nature of diffusion models aligns well with the stepwise process of adversarial attacks, making them particularly suitable for defense. By iteratively adding and removing noise, GDDM effectively purifies attacked graphs, restoring their original structure and features. Our GDDM consists of two key components: (1) Graph Structure-Driven Refiner, which preserves the basic fidelity of the graph during the denoising process, and ensures that the generated graph remains consistent with the original scope; and (2) Node Feature-Constrained Regularizer, which removes residual impurities from the denoised graph, further enhances the purification effect. Additionally, we design tailored denoising strategies to handle different types of adversarial attacks, improving the model's adaptability to various attack scenarios. Extensive experiments conducted on three real-world datasets demonstrate that GDDM outperforms state-of-the-art methods in defending against a wide range of adversarial attacks, showcasing its robustness and effectiveness.

Pioneering Reliable Assessment in Text-to-Image Knowledge Editing: Leveraging a Fine-Grained Dataset and an Innovative Criterion

Sep 26, 2024Abstract:During pre-training, the Text-to-Image (T2I) diffusion models encode factual knowledge into their parameters. These parameterized facts enable realistic image generation, but they may become obsolete over time, thereby misrepresenting the current state of the world. Knowledge editing techniques aim to update model knowledge in a targeted way. However, facing the dual challenges posed by inadequate editing datasets and unreliable evaluation criterion, the development of T2I knowledge editing encounter difficulties in effectively generalizing injected knowledge. In this work, we design a T2I knowledge editing framework by comprehensively spanning on three phases: First, we curate a dataset \textbf{CAKE}, comprising paraphrase and multi-object test, to enable more fine-grained assessment on knowledge generalization. Second, we propose a novel criterion, \textbf{adaptive CLIP threshold}, to effectively filter out false successful images under the current criterion and achieve reliable editing evaluation. Finally, we introduce \textbf{MPE}, a simple but effective approach for T2I knowledge editing. Instead of tuning parameters, MPE precisely recognizes and edits the outdated part of the conditioning text-prompt to accommodate the up-to-date knowledge. A straightforward implementation of MPE (Based on in-context learning) exhibits better overall performance than previous model editors. We hope these efforts can further promote faithful evaluation of T2I knowledge editing methods.

Unifying Unsupervised Graph-Level Anomaly Detection and Out-of-Distribution Detection: A Benchmark

Jun 21, 2024Abstract:To build safe and reliable graph machine learning systems, unsupervised graph-level anomaly detection (GLAD) and unsupervised graph-level out-of-distribution (OOD) detection (GLOD) have received significant attention in recent years. Though those two lines of research indeed share the same objective, they have been studied independently in the community due to distinct evaluation setups, creating a gap that hinders the application and evaluation of methods from one to the other. To bridge the gap, in this work, we present a Unified Benchmark for unsupervised Graph-level OOD and anomaly Detection (our method), a comprehensive evaluation framework that unifies GLAD and GLOD under the concept of generalized graph-level OOD detection. Our benchmark encompasses 35 datasets spanning four practical anomaly and OOD detection scenarios, facilitating the comparison of 16 representative GLAD/GLOD methods. We conduct multi-dimensional analyses to explore the effectiveness, generalizability, robustness, and efficiency of existing methods, shedding light on their strengths and limitations. Furthermore, we provide an open-source codebase (https://github.com/UB-GOLD/UB-GOLD) of our method to foster reproducible research and outline potential directions for future investigations based on our insights.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge