Xueping Liu

Stable Time Series Prediction of Enterprise Carbon Emissions Based on Causal Inference

Jan 31, 2026

Abstract:Against the backdrop of ongoing carbon peaking and carbon neutrality goals, accurate prediction of enterprise carbon emission trends constitutes an essential foundation for energy structure optimization and low-carbon transformation decision-making. Nevertheless, significant heterogeneity persists across regions, industries and individual enterprises regarding energy structure, production scale, policy intensity and governance efficacy, resulting in pronounced distribution shifts and non-stationarity in carbon emission data across both temporal and spatial dimensions. Such cross-regional and cross-enterprise data drift not only compromises the accuracy of carbon emission reporting but substantially undermines the guidance value of predictive models for production planning and carbon quota trading decisions. To address this critical challenge, we integrate causal inference perspectives with stable learning methodologies and time-series modelling, proposing a stable temporal prediction mechanism tailored to distribution shift environments. This mechanism incorporates enterprise-level energy inputs, capital investment, labour deployment, carbon pricing, governmental interventions and policy implementation intensity, constructing a risk consistency-constrained stable learning framework that extracts causal stable features (robust against external perturbations yet demonstrating long-term stable effects on carbon dioxide emissions) from multi-environment samples across diverse policies, regions and industrial sectors. Furthermore, through adaptive normalization and sample reweighting strategies, the approach dynamically rectifies temporal non-stationarity induced by economic fluctuations and policy transitions, ultimately enhancing model generalization capability and explainability in complex environments.
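The abstract does not spell out the risk consistency constraint. As a rough illustration only, one common way to encourage consistent risk across environments is to add the variance of the per-environment risks to their mean. The sketch below assumes a linear predictor, squared error, and toy data; the function names, the `penalty` parameter, and the environment labels are all hypothetical and not taken from the paper.

```python
from statistics import mean, pvariance


def environment_risk(weights, samples):
    """Mean squared error of a linear predictor on one environment's samples."""
    errors = []
    for features, target in samples:
        prediction = sum(w * x for w, x in zip(weights, features))
        errors.append((prediction - target) ** 2)
    return mean(errors)


def risk_consistency_objective(weights, environments, penalty=1.0):
    """Average risk plus a penalty on how much the risk varies across environments."""
    risks = [environment_risk(weights, samples) for samples in environments.values()]
    return mean(risks) + penalty * pvariance(risks)


# Hypothetical toy data: (features, emissions) pairs grouped by environment.
# The first feature drives the target in both environments; the second does not.
environments = {
    "region_a": [((1.0, 0.0), 2.0), ((2.0, 1.0), 4.0)],
    "region_b": [((1.0, 5.0), 2.0), ((3.0, -2.0), 6.0)],
}

# Weights that rely only on the first feature fit every environment equally well.
stable = risk_consistency_objective((2.0, 0.0), environments)
# Weights that also use the second feature incur unequal risks across environments.
unstable = risk_consistency_objective((2.0, 0.5), environments)
```

In this toy setup the weights that ignore the environment-dependent second feature score lower, which is the behaviour a stable-feature objective is meant to reward.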

THUD++: Large-Scale Dynamic Indoor Scene Dataset and Benchmark for Mobile Robots

Dec 11, 2024

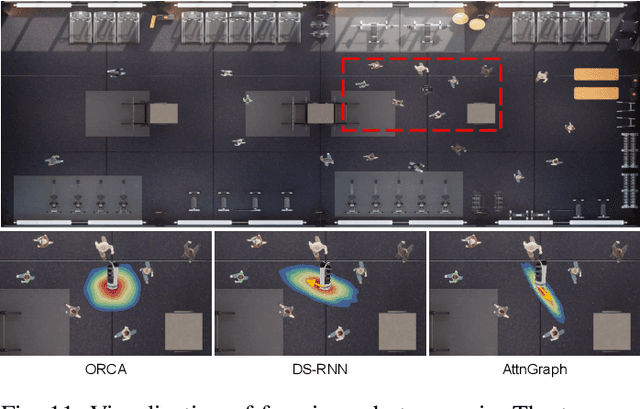

Abstract:Most existing mobile robotic datasets primarily capture static scenes, limiting their utility for evaluating robotic performance in dynamic environments. To address this, we present a mobile robot oriented large-scale indoor dataset, denoted as THUD++ (TsingHua University Dynamic) robotic dataset, for dynamic scene understanding. Our current dataset includes 13 large-scale dynamic scenarios, combining both real-world and synthetic data collected with a real robot platform and a physical simulation platform, respectively. The RGB-D dataset comprises over 90K image frames, 20M 2D/3D bounding boxes of static and dynamic objects, camera poses, and IMU. The trajectory dataset covers over 6,000 pedestrian trajectories in indoor scenes. Additionally, the dataset is augmented with a Unity3D-based simulation platform, allowing researchers to create custom scenes and test algorithms in a controlled environment. We evaluate state-of-the-art methods on THUD++ across mainstream indoor scene understanding tasks, e.g., 3D object detection, semantic segmentation, relocalization, pedestrian trajectory prediction, and navigation. Our experiments highlight the challenges mobile robots encounter in indoor environments, especially when navigating in complex, crowded, and dynamic scenes. By sharing this dataset, we aim to accelerate the development and testing of mobile robot algorithms, contributing to real-world robotic applications.

Mobile Robot Oriented Large-Scale Indoor Dataset for Dynamic Scene Understanding

Jun 28, 2024

Abstract:Most existing robotic datasets capture static scene data and thus are limited in evaluating robots' dynamic performance. To address this, we present a mobile robot oriented large-scale indoor dataset, denoted as THUD (Tsinghua University Dynamic) robotic dataset, for training and evaluating dynamic scene understanding algorithms. Specifically, the THUD dataset construction is first detailed, including organization, acquisition, and annotation methods. It comprises both real-world and synthetic data, collected with a real robot platform and a physical simulation platform, respectively. Our current dataset includes 13 large-scale dynamic scenarios, 90K image frames, 20M 2D/3D bounding boxes of static and dynamic objects, camera poses, and IMU. The dataset is still continuously expanding. Then, the performance of mainstream indoor scene understanding tasks, e.g., 3D object detection, semantic segmentation, and robot relocalization, is evaluated on our THUD dataset. These experiments reveal serious challenges for some robot scene understanding tasks in dynamic scenes. By sharing this dataset, we aim to foster rapid development and iteration of new mobile robot algorithms for the dynamic environments in which robots actually work, i.e., complex, crowded, dynamic scenes.

GaussianRoom: Improving 3D Gaussian Splatting with SDF Guidance and Monocular Cues for Indoor Scene Reconstruction

May 30, 2024

Abstract:Recently, 3D Gaussian Splatting (3DGS) has revolutionized neural rendering with its high-quality rendering and real-time speed. However, when it comes to indoor scenes with a significant number of textureless areas, 3DGS yields incomplete and noisy reconstruction results due to the poor initialization of the point cloud and under-constrained optimization. Inspired by the continuity of the signed distance field (SDF), which naturally has advantages in modeling surfaces, we present a unified optimization framework integrating neural SDF with 3DGS. This framework incorporates a learnable neural SDF field to guide the densification and pruning of Gaussians, enabling Gaussians to accurately model scenes even with poorly initialized point clouds. At the same time, the geometry represented by Gaussians improves the efficiency of the SDF field by piloting its point sampling. Additionally, we regularize the optimization with normal and edge priors to eliminate geometry ambiguity in textureless areas and improve the details. Extensive experiments on ScanNet and ScanNet++ show that our method achieves state-of-the-art performance in both surface reconstruction and novel view synthesis.
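To make the idea of SDF-guided pruning concrete, here is a minimal sketch under strong assumptions: an analytic sphere SDF stands in for the learnable neural SDF, each Gaussian is reduced to its center, and a Gaussian is kept only if it lies within a fixed distance of the surface. The abstract does not state the actual criterion, so `sphere_sdf`, `prune_by_sdf`, and `max_distance` are hypothetical names chosen for illustration.

```python
import math


def sphere_sdf(point, radius=1.0):
    """Signed distance to a sphere at the origin: negative inside, positive outside."""
    return math.sqrt(sum(c * c for c in point)) - radius


def prune_by_sdf(centers, sdf, max_distance=0.1):
    """Keep only the centers whose absolute signed distance is within max_distance."""
    return [c for c in centers if abs(sdf(c)) <= max_distance]


# Hypothetical Gaussian centers: two near the surface, one far outside, one deep inside.
centers = [(1.0, 0.0, 0.0), (0.0, 1.05, 0.0), (0.0, 0.0, 2.0), (0.2, 0.0, 0.0)]
kept = prune_by_sdf(centers, sphere_sdf)
```

Only the two centers near the sphere's surface survive; the ones floating far from it are discarded, which mirrors the intuition that the SDF tells the optimizer where geometry actually is.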
