Xinzheng Xu

Human-Corrected Labels Learning: Enhancing Labels Quality via Human Correction of VLMs Discrepancies

Nov 14, 2025Abstract:Vision-Language Models (VLMs), with their powerful content generation capabilities, have been successfully applied to data annotation processes. However, the VLM-generated labels exhibit dual limitations: low quality (i.e., label noise) and absence of error correction mechanisms. To enhance label quality, we propose Human-Corrected Labels (HCLs), a novel setting that efficient human correction for VLM-generated noisy labels. As shown in Figure 1(b), HCL strategically deploys human correction only for instances with VLM discrepancies, achieving both higher-quality annotations and reduced labor costs. Specifically, we theoretically derive a risk-consistent estimator that incorporates both human-corrected labels and VLM predictions to train classifiers. Besides, we further propose a conditional probability method to estimate the label distribution using a combination of VLM outputs and model predictions. Extensive experiments demonstrate that our approach achieves superior classification performance and is robust to label noise, validating the effectiveness of HCL in practical weak supervision scenarios. Code https://github.com/Lilianach24/HCL.git

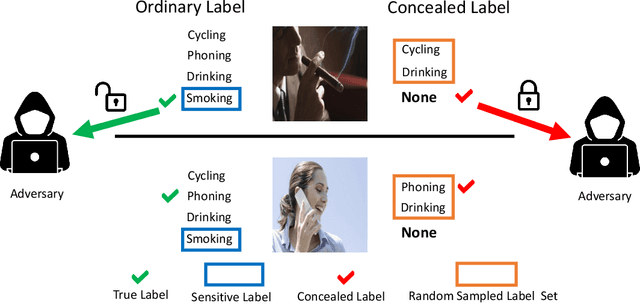

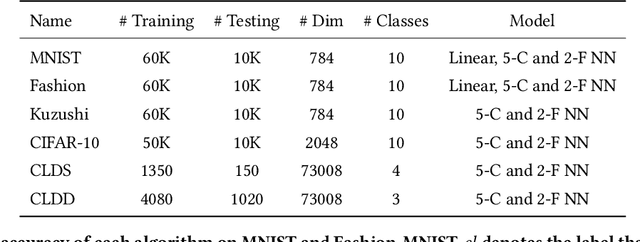

Learning from Concealed Labels

Dec 03, 2024

Abstract:Annotating data for sensitive labels (e.g., disease, smoking) poses a potential threats to individual privacy in many real-world scenarios. To cope with this problem, we propose a novel setting to protect privacy of each instance, namely learning from concealed labels for multi-class classification. Concealed labels prevent sensitive labels from appearing in the label set during the label collection stage, which specifies none and some random sampled insensitive labels as concealed labels set to annotate sensitive data. In this paper, an unbiased estimator can be established from concealed data under mild assumptions, and the learned multi-class classifier can not only classify the instance from insensitive labels accurately but also recognize the instance from the sensitive labels. Moreover, we bound the estimation error and show that the multi-class classifier achieves the optimal parametric convergence rate. Experiments demonstrate the significance and effectiveness of the proposed method for concealed labels in synthetic and real-world datasets.

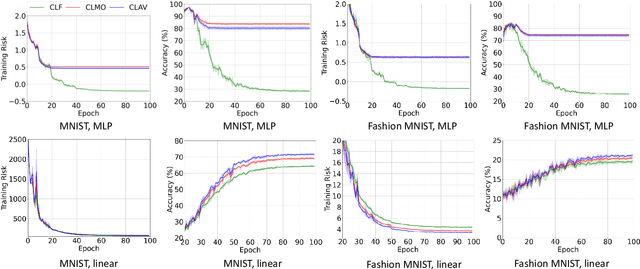

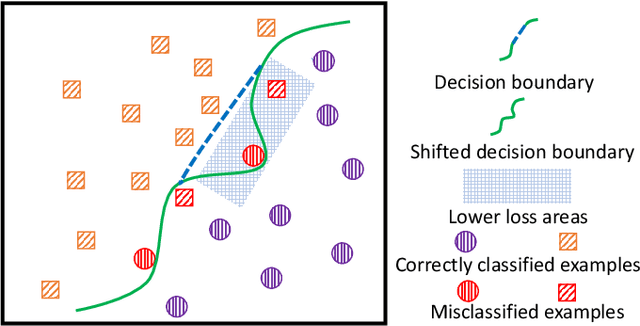

ESA: Example Sieve Approach for Multi-Positive and Unlabeled Learning

Dec 03, 2024

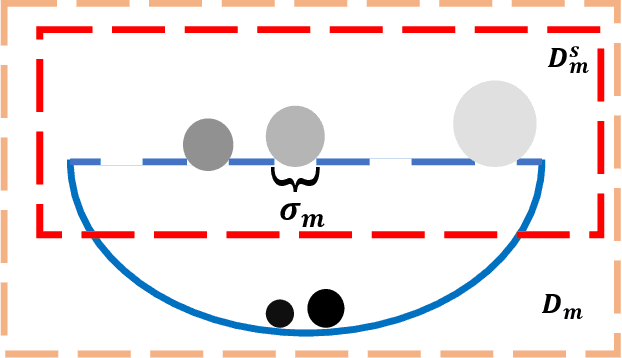

Abstract:Learning from Multi-Positive and Unlabeled (MPU) data has gradually attracted significant attention from practical applications. Unfortunately, the risk of MPU also suffer from the shift of minimum risk, particularly when the models are very flexible as shown in Fig.\ref{moti}. In this paper, to alleviate the shifting of minimum risk problem, we propose an Example Sieve Approach (ESA) to select examples for training a multi-class classifier. Specifically, we sieve out some examples by utilizing the Certain Loss (CL) value of each example in the training stage and analyze the consistency of the proposed risk estimator. Besides, we show that the estimation error of proposed ESA obtains the optimal parametric convergence rate. Extensive experiments on various real-world datasets show the proposed approach outperforms previous methods.

CoA: Chain-of-Action for Generative Semantic Labels

Nov 26, 2024

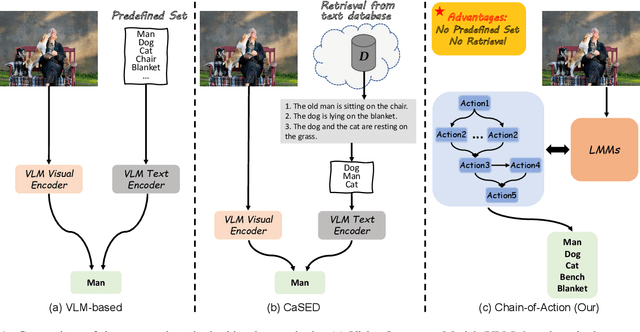

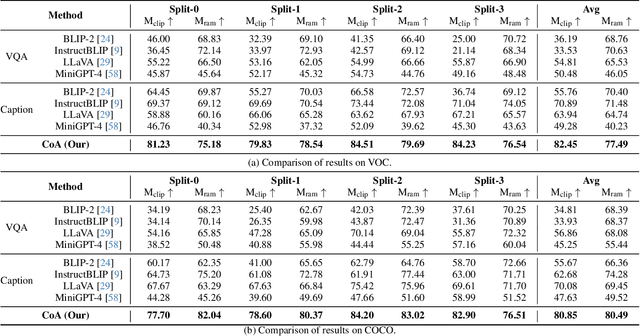

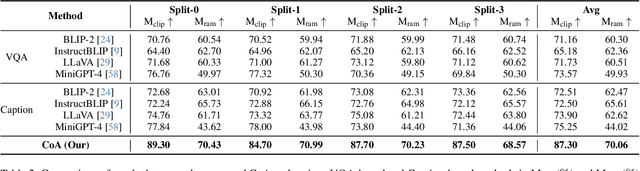

Abstract:Recent advances in vision-language models (VLM) have demonstrated remarkable capability in image classification. These VLMs leverage a predefined set of categories to construct text prompts for zero-shot reasoning. However, in more open-ended domains like autonomous driving, using a predefined set of labels becomes impractical, as the semantic label space is unknown and constantly evolving. Additionally, fixed embedding text prompts often tend to predict a single label (while in reality, multiple labels commonly exist per image). In this paper, we introduce CoA, an innovative Chain-of-Action (CoA) method that generates labels aligned with all contextually relevant features of an image. CoA is designed based on the observation that enriched and valuable contextual information improves generative performance during inference. Traditional vision-language models tend to output singular and redundant responses. Therefore, we employ a tailored CoA to alleviate this problem. We first break down the generative labeling task into detailed actions and construct an CoA leading to the final generative objective. Each action extracts and merges key information from the previous action and passes the enriched information as context to the next action, ultimately improving the VLM in generating comprehensive and accurate semantic labels. We assess the effectiveness of CoA through comprehensive evaluations on widely-used benchmark datasets and the results demonstrate significant improvements across key performance metrics.

Learning from True-False Labels via Multi-modal Prompt Retrieving

May 24, 2024

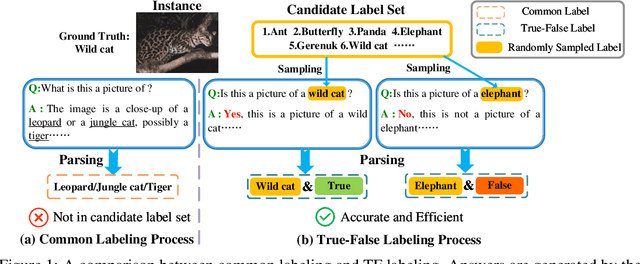

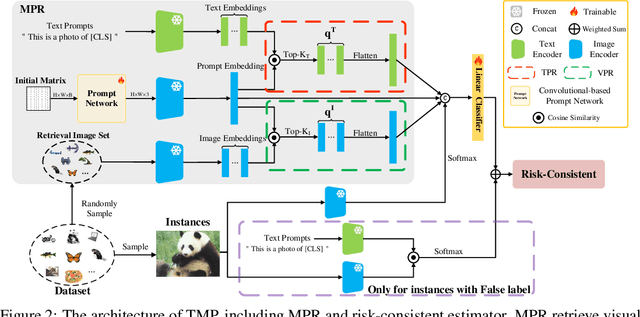

Abstract:Weakly supervised learning has recently achieved considerable success in reducing annotation costs and label noise. Unfortunately, existing weakly supervised learning methods are short of ability in generating reliable labels via pre-trained vision-language models (VLMs). In this paper, we propose a novel weakly supervised labeling setting, namely True-False Labels (TFLs) which can achieve high accuracy when generated by VLMs. The TFL indicates whether an instance belongs to the label, which is randomly and uniformly sampled from the candidate label set. Specifically, we theoretically derive a risk-consistent estimator to explore and utilize the conditional probability distribution information of TFLs. Besides, we propose a convolutional-based Multi-modal Prompt Retrieving (MRP) method to bridge the gap between the knowledge of VLMs and target learning tasks. Experimental results demonstrate the effectiveness of the proposed TFL setting and MRP learning method. The code to reproduce the experiments is at https://github.com/Tranquilxu/TMP.

Learning from Reduced Labels for Long-Tailed Data

Mar 25, 2024

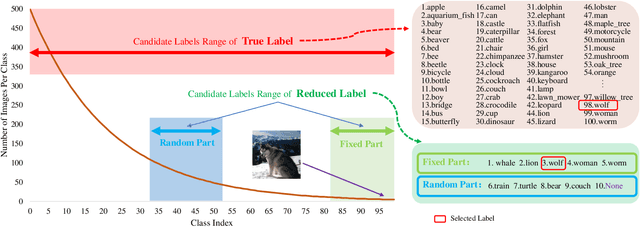

Abstract:Long-tailed data is prevalent in real-world classification tasks and heavily relies on supervised information, which makes the annotation process exceptionally labor-intensive and time-consuming. Unfortunately, despite being a common approach to mitigate labeling costs, existing weakly supervised learning methods struggle to adequately preserve supervised information for tail samples, resulting in a decline in accuracy for the tail classes. To alleviate this problem, we introduce a novel weakly supervised labeling setting called Reduced Label. The proposed labeling setting not only avoids the decline of supervised information for the tail samples, but also decreases the labeling costs associated with long-tailed data. Additionally, we propose an straightforward and highly efficient unbiased framework with strong theoretical guarantees to learn from these Reduced Labels. Extensive experiments conducted on benchmark datasets including ImageNet validate the effectiveness of our approach, surpassing the performance of state-of-the-art weakly supervised methods.

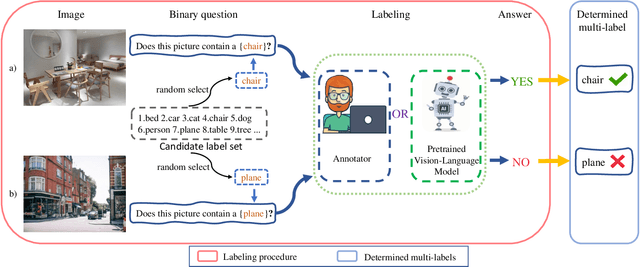

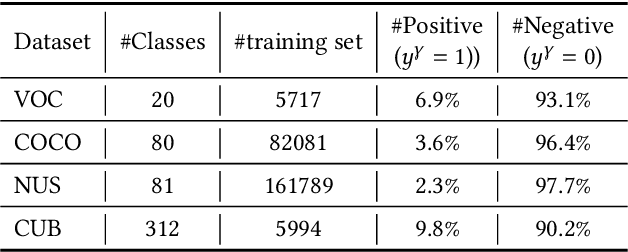

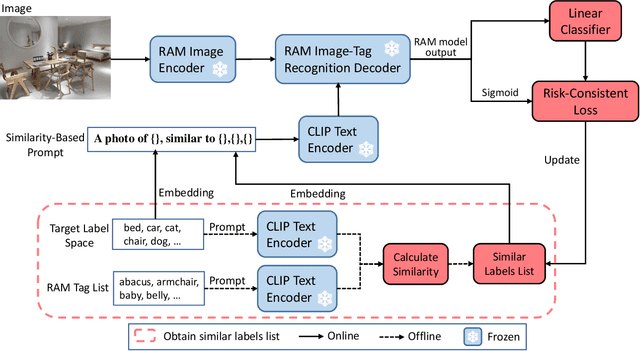

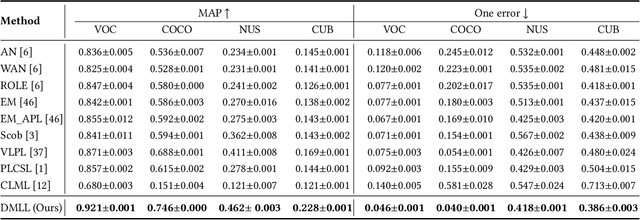

Determined Multi-Label Learning via Similarity-Based Prompt

Mar 25, 2024

Abstract:In multi-label classification, each training instance is associated with multiple class labels simultaneously. Unfortunately, collecting the fully precise class labels for each training instance is time- and labor-consuming for real-world applications. To alleviate this problem, a novel labeling setting termed \textit{Determined Multi-Label Learning} (DMLL) is proposed, aiming to effectively alleviate the labeling cost inherent in multi-label tasks. In this novel labeling setting, each training instance is associated with a \textit{determined label} (either "Yes" or "No"), which indicates whether the training instance contains the provided class label. The provided class label is randomly and uniformly selected from the whole candidate labels set. Besides, each training instance only need to be determined once, which significantly reduce the annotation cost of the labeling task for multi-label datasets. In this paper, we theoretically derive an risk-consistent estimator to learn a multi-label classifier from these determined-labeled training data. Additionally, we introduce a similarity-based prompt learning method for the first time, which minimizes the risk-consistent loss of large-scale pre-trained models to learn a supplemental prompt with richer semantic information. Extensive experimental validation underscores the efficacy of our approach, demonstrating superior performance compared to existing state-of-the-art methods.

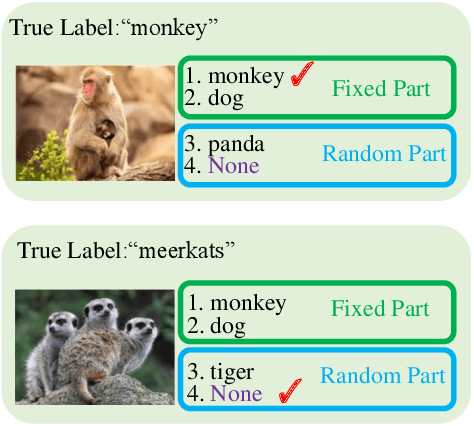

Learning from Stochastic Labels

Feb 01, 2023Abstract:Annotating multi-class instances is a crucial task in the field of machine learning. Unfortunately, identifying the correct class label from a long sequence of candidate labels is time-consuming and laborious. To alleviate this problem, we design a novel labeling mechanism called stochastic label. In this setting, stochastic label includes two cases: 1) identify a correct class label from a small number of randomly given labels; 2) annotate the instance with None label when given labels do not contain correct class label. In this paper, we propose a novel suitable approach to learn from these stochastic labels. We obtain an unbiased estimator that utilizes less supervised information in stochastic labels to train a multi-class classifier. Additionally, it is theoretically justifiable by deriving the estimation error bound of the proposed method. Finally, we conduct extensive experiments on widely-used benchmark datasets to validate the superiority of our method by comparing it with existing state-of-the-art methods.

Complementary Labels Learning with Augmented Classes

Nov 19, 2022

Abstract:Complementary Labels Learning (CLL) arises in many real-world tasks such as private questions classification and online learning, which aims to alleviate the annotation cost compared with standard supervised learning. Unfortunately, most previous CLL algorithms were in a stable environment rather than an open and dynamic scenarios, where data collected from unseen augmented classes in the training process might emerge in the testing phase. In this paper, we propose a novel problem setting called Complementary Labels Learning with Augmented Classes (CLLAC), which brings the challenge that classifiers trained by complementary labels should not only be able to classify the instances from observed classes accurately, but also recognize the instance from the Augmented Classes in the testing phase. Specifically, by using unlabeled data, we propose an unbiased estimator of classification risk for CLLAC, which is guaranteed to be provably consistent. Moreover, we provide generalization error bound for proposed method which shows that the optimal parametric convergence rate is achieved for estimation error. Finally, the experimental results on several benchmark datasets verify the effectiveness of the proposed method.

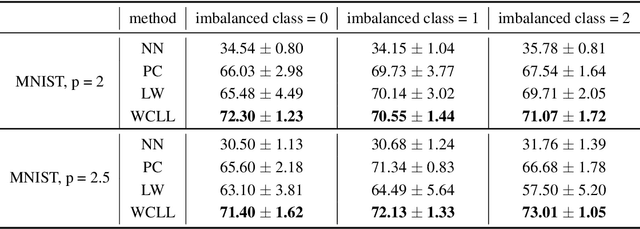

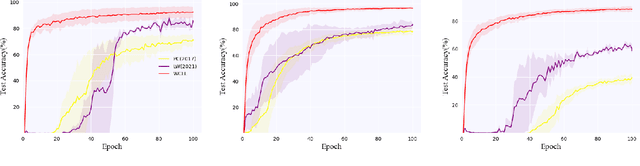

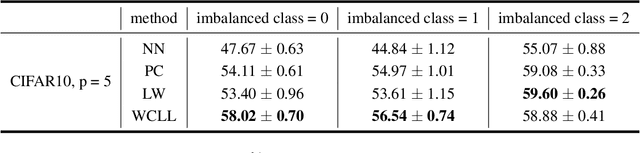

Class-Imbalanced Complementary-Label Learning via Weighted Loss

Sep 28, 2022

Abstract:Complementary-label learning (CLL) is a common application in the scenario of weak supervision. However, in real-world datasets, CLL encounters class-imbalanced training samples, where the quantity of samples of one class is significantly lower than those of other classes. Unfortunately, existing CLL approaches have yet to explore the problem of class-imbalanced samples, which reduces the prediction accuracy, especially in imbalanced classes. In this paper, we propose a novel problem setting to allow learning from class-imbalanced complementarily labeled samples for multi-class classification. Accordingly, to deal with this novel problem, we propose a new CLL approach, called Weighted Complementary-Label Learning (WCLL). The proposed method models a weighted empirical risk minimization loss by utilizing the class-imbalanced complementarily labeled information, which is also applicable to multi-class imbalanced training samples. Furthermore, the estimation error bound of the proposed method was derived to provide a theoretical guarantee. Finally, we do extensive experiments on widely-used benchmark datasets to validate the superiority of our method by comparing it with existing state-of-the-art methods.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge