Wenlong Mou

Continuous-time reinforcement learning: ellipticity enables model-free value function approximation

Feb 06, 2026Abstract:We study off-policy reinforcement learning for controlling continuous-time Markov diffusion processes with discrete-time observations and actions. We consider model-free algorithms with function approximation that learn value and advantage functions directly from data, without unrealistic structural assumptions on the dynamics. Leveraging the ellipticity of the diffusions, we establish a new class of Hilbert-space positive definiteness and boundedness properties for the Bellman operators. Based on these properties, we propose the Sobolev-prox fitted $q$-learning algorithm, which learns value and advantage functions by iteratively solving least-squares regression problems. We derive oracle inequalities for the estimation error, governed by (i) the best approximation error of the function classes, (ii) their localized complexity, (iii) exponentially decaying optimization error, and (iv) numerical discretization error. These results identify ellipticity as a key structural property that renders reinforcement learning with function approximation for Markov diffusions no harder than supervised learning.

Predicting and improving test-time scaling laws via reward tail-guided search

Feb 01, 2026Abstract:Test-time scaling has emerged as a critical avenue for enhancing the reasoning capabilities of Large Language Models (LLMs). Though the straight-forward ''best-of-$N$'' (BoN) strategy has already demonstrated significant improvements in performance, it lacks principled guidance on the choice of $N$, budget allocation, and multi-stage decision-making, thereby leaving substantial room for optimization. While many works have explored such optimization, rigorous theoretical guarantees remain limited. In this work, we propose new methodologies to predict and improve scaling properties via tail-guided search. By estimating the tail distribution of rewards, our method predicts the scaling law of LLMs without the need for exhaustive evaluations. Leveraging this prediction tool, we introduce Scaling-Law Guided (SLG) Search, a new test-time algorithm that dynamically allocates compute to identify and exploit intermediate states with the highest predicted potential. We theoretically prove that SLG achieves vanishing regret compared to perfect-information oracles, and achieves expected rewards that would otherwise require a polynomially larger compute budget required when using BoN. Empirically, we validate our framework across different LLMs and reward models, confirming that tail-guided allocation consistently achieves higher reward yields than Best-of-$N$ under identical compute budgets. Our code is available at https://github.com/PotatoJnny/Scaling-Law-Guided-search.

Reinforcement Learning with Action-Triggered Observations

Oct 02, 2025

Abstract:We study reinforcement learning problems where state observations are stochastically triggered by actions, a constraint common in many real-world applications. This framework is formulated as Action-Triggered Sporadically Traceable Markov Decision Processes (ATST-MDPs), where each action has a specified probability of triggering a state observation. We derive tailored Bellman optimality equations for this framework and introduce the action-sequence learning paradigm in which agents commit to executing a sequence of actions until the next observation arrives. Under the linear MDP assumption, value-functions are shown to admit linear representations in an induced action-sequence feature map. Leveraging this structure, we propose off-policy estimators with statistical error guarantees for such feature maps and introduce ST-LSVI-UCB, a variant of LSVI-UCB adapted for action-triggered settings. ST-LSVI-UCB achieves regret $\widetilde O(\sqrt{Kd^3(1-\gamma)^{-3}})$, where $K$ is the number of episodes, $d$ the feature dimension, and $\gamma$ the discount factor (per-step episode non-termination probability). Crucially, this work establishes the theoretical foundation for learning with sporadic, action-triggered observations while demonstrating that efficient learning remains feasible under such observation constraints.

Statistical guarantees for continuous-time policy evaluation: blessing of ellipticity and new tradeoffs

Feb 06, 2025Abstract:We study the estimation of the value function for continuous-time Markov diffusion processes using a single, discretely observed ergodic trajectory. Our work provides non-asymptotic statistical guarantees for the least-squares temporal-difference (LSTD) method, with performance measured in the first-order Sobolev norm. Specifically, the estimator attains an $O(1 / \sqrt{T})$ convergence rate when using a trajectory of length $T$; notably, this rate is achieved as long as $T$ scales nearly linearly with both the mixing time of the diffusion and the number of basis functions employed. A key insight of our approach is that the ellipticity inherent in the diffusion process ensures robust performance even as the effective horizon diverges to infinity. Moreover, we demonstrate that the Markovian component of the statistical error can be controlled by the approximation error, while the martingale component grows at a slower rate relative to the number of basis functions. By carefully balancing these two sources of error, our analysis reveals novel trade-offs between approximation and statistical errors.

To bootstrap or to rollout? An optimal and adaptive interpolation

Nov 14, 2024Abstract:Bootstrapping and rollout are two fundamental principles for value function estimation in reinforcement learning (RL). We introduce a novel class of Bellman operators, called subgraph Bellman operators, that interpolate between bootstrapping and rollout methods. Our estimator, derived by solving the fixed point of the empirical subgraph Bellman operator, combines the strengths of the bootstrapping-based temporal difference (TD) estimator and the rollout-based Monte Carlo (MC) methods. Specifically, the error upper bound of our estimator approaches the optimal variance achieved by TD, with an additional term depending on the exit probability of a selected subset of the state space. At the same time, the estimator exhibits the finite-sample adaptivity of MC, with sample complexity depending only on the occupancy measure of this subset. We complement the upper bound with an information-theoretic lower bound, showing that the additional term is unavoidable given a reasonable sample size. Together, these results establish subgraph Bellman estimators as an optimal and adaptive framework for reconciling TD and MC methods in policy evaluation.

On Bellman equations for continuous-time policy evaluation I: discretization and approximation

Jul 08, 2024Abstract:We study the problem of computing the value function from a discretely-observed trajectory of a continuous-time diffusion process. We develop a new class of algorithms based on easily implementable numerical schemes that are compatible with discrete-time reinforcement learning (RL) with function approximation. We establish high-order numerical accuracy as well as the approximation error guarantees for the proposed approach. In contrast to discrete-time RL problems where the approximation factor depends on the effective horizon, we obtain a bounded approximation factor using the underlying elliptic structures, even if the effective horizon diverges to infinity.

Kernel-based off-policy estimation without overlap: Instance optimality beyond semiparametric efficiency

Jan 16, 2023

Abstract:We study optimal procedures for estimating a linear functional based on observational data. In many problems of this kind, a widely used assumption is strict overlap, i.e., uniform boundedness of the importance ratio, which measures how well the observational data covers the directions of interest. When it is violated, the classical semi-parametric efficiency bound can easily become infinite, so that the instance-optimal risk depends on the function class used to model the regression function. For any convex and symmetric function class $\mathcal{F}$, we derive a non-asymptotic local minimax bound on the mean-squared error in estimating a broad class of linear functionals. This lower bound refines the classical semi-parametric one, and makes connections to moduli of continuity in functional estimation. When $\mathcal{F}$ is a reproducing kernel Hilbert space, we prove that this lower bound can be achieved up to a constant factor by analyzing a computationally simple regression estimator. We apply our general results to various families of examples, thereby uncovering a spectrum of rates that interpolate between the classical theories of semi-parametric efficiency (with $\sqrt{n}$-consistency) and the slower minimax rates associated with non-parametric function estimation.

Off-policy estimation of linear functionals: Non-asymptotic theory for semi-parametric efficiency

Sep 26, 2022

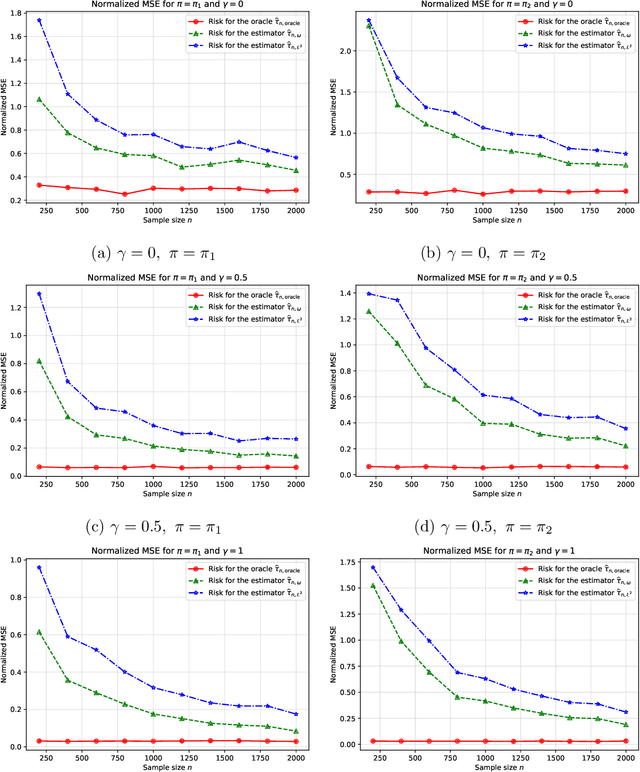

Abstract:The problem of estimating a linear functional based on observational data is canonical in both the causal inference and bandit literatures. We analyze a broad class of two-stage procedures that first estimate the treatment effect function, and then use this quantity to estimate the linear functional. We prove non-asymptotic upper bounds on the mean-squared error of such procedures: these bounds reveal that in order to obtain non-asymptotically optimal procedures, the error in estimating the treatment effect should be minimized in a certain weighted $L^2$-norm. We analyze a two-stage procedure based on constrained regression in this weighted norm, and establish its instance-dependent optimality in finite samples via matching non-asymptotic local minimax lower bounds. These results show that the optimal non-asymptotic risk, in addition to depending on the asymptotically efficient variance, depends on the weighted norm distance between the true outcome function and its approximation by the richest function class supported by the sample size.

Optimal variance-reduced stochastic approximation in Banach spaces

Jan 21, 2022Abstract:We study the problem of estimating the fixed point of a contractive operator defined on a separable Banach space. Focusing on a stochastic query model that provides noisy evaluations of the operator, we analyze a variance-reduced stochastic approximation scheme, and establish non-asymptotic bounds for both the operator defect and the estimation error, measured in an arbitrary semi-norm. In contrast to worst-case guarantees, our bounds are instance-dependent, and achieve the local asymptotic minimax risk non-asymptotically. For linear operators, contractivity can be relaxed to multi-step contractivity, so that the theory can be applied to problems like average reward policy evaluation problem in reinforcement learning. We illustrate the theory via applications to stochastic shortest path problems, two-player zero-sum Markov games, as well as policy evaluation and $Q$-learning for tabular Markov decision processes.

Optimal and instance-dependent guarantees for Markovian linear stochastic approximation

Dec 23, 2021Abstract:We study stochastic approximation procedures for approximately solving a $d$-dimensional linear fixed point equation based on observing a trajectory of length $n$ from an ergodic Markov chain. We first exhibit a non-asymptotic bound of the order $t_{\mathrm{mix}} \tfrac{d}{n}$ on the squared error of the last iterate of a standard scheme, where $t_{\mathrm{mix}}$ is a mixing time. We then prove a non-asymptotic instance-dependent bound on a suitably averaged sequence of iterates, with a leading term that matches the local asymptotic minimax limit, including sharp dependence on the parameters $(d, t_{\mathrm{mix}})$ in the higher order terms. We complement these upper bounds with a non-asymptotic minimax lower bound that establishes the instance-optimality of the averaged SA estimator. We derive corollaries of these results for policy evaluation with Markov noise -- covering the TD($\lambda$) family of algorithms for all $\lambda \in [0, 1)$ -- and linear autoregressive models. Our instance-dependent characterizations open the door to the design of fine-grained model selection procedures for hyperparameter tuning (e.g., choosing the value of $\lambda$ when running the TD($\lambda$) algorithm).

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge