Vittorio Fra

Natively neuromorphic LMU architecture for encoding-free SNN-based HAR on commercial edge devices

Jul 04, 2024

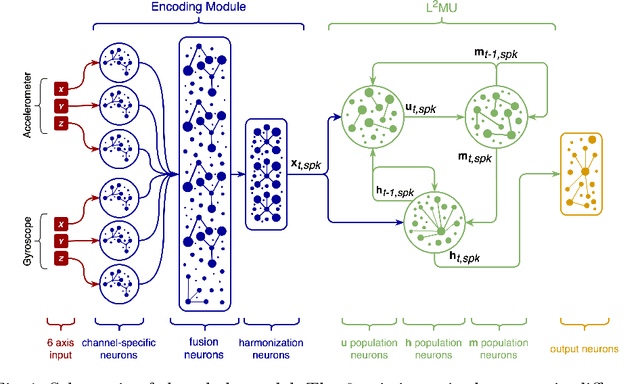

Abstract: Neuromorphic models take inspiration from the human brain by adopting bio-plausible neuron models to build alternatives to traditional Machine Learning (ML) and Deep Learning (DL) solutions. However, the scarce availability of dedicated hardware able to emulate brain-inspired computation, which is otherwise only simulated, still hinders the wide adoption of neuromorphic computing for edge devices and embedded systems. With this premise, we adopt the perspective of neuromorphic computing for conventional hardware and we present the L2MU, a natively neuromorphic Legendre Memory Unit (LMU) which relies entirely on Leaky Integrate-and-Fire (LIF) neurons. Specifically, the original recurrent architecture of the LMU has been redesigned by modelling every constituent element with neural populations made of LIF or Current-Based (CuBa) LIF neurons. To couple neuromorphic computing and off-the-shelf edge devices, we equipped the L2MU with an input module for the conversion of real values into spikes, which makes it an encoding-free implementation of a Recurrent Spiking Neural Network (RSNN) able to work directly with raw sensor signals on non-dedicated hardware. As a use case to validate our network, we selected the task of Human Activity Recognition (HAR). We benchmarked our L2MU on smartwatch signals from hand-oriented activities, deploying it, also in compressed versions, on three different commercial edge devices. The reported results highlight the possibility of considering neuromorphic models not only in an exclusive relationship with dedicated hardware but also as a suitable choice to work with common sensors and devices.
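To make the distinction between LIF and CuBa LIF neurons concrete, here is a minimal discrete-time sketch of a single CuBa LIF neuron. It is not the L2MU implementation: the function name `cuba_lif`, the decay factors `alpha` and `beta`, the threshold, and the reset-to-zero rule are all illustrative assumptions. The point is only that a CuBa neuron carries a second state variable, the synaptic current, which low-pass filters the input before it reaches the membrane.

```python
def cuba_lif(inputs, alpha=0.8, beta=0.9, threshold=1.0):
    """Simulate one current-based LIF neuron on a sequence of real inputs.

    alpha: per-step decay of the synaptic current (assumed value).
    beta:  per-step decay of the membrane potential (assumed value).
    Returns a list of 0/1 spikes, one per input sample.
    """
    syn = 0.0  # synaptic current state
    mem = 0.0  # membrane potential state
    spikes = []
    for x in inputs:
        syn = alpha * syn + x   # input is integrated into the current
        mem = beta * mem + syn  # current is integrated into the membrane
        if mem >= threshold:
            spikes.append(1)
            mem = 0.0           # hard reset after a spike (assumption)
        else:
            spikes.append(0)
    return spikes


if __name__ == "__main__":
    impulse = [0.5] + [0.0] * 9
    print(cuba_lif(impulse))             # synaptic filtering enabled
    print(cuba_lif(impulse, alpha=0.0))  # alpha=0 reduces to a plain LIF
```

With `alpha=0.0` the synaptic current has no memory and the neuron behaves as a plain LIF, so the same sub-threshold impulse that eventually triggers the CuBa neuron produces no spike.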

Neuromorphic Intermediate Representation: A Unified Instruction Set for Interoperable Brain-Inspired Computing

Nov 24, 2023

Abstract: Spiking neural networks and neuromorphic hardware platforms that emulate neural dynamics are slowly gaining momentum and entering mainstream usage. Despite a well-established mathematical foundation for neural dynamics, the implementation details vary greatly across different platforms. Correspondingly, there is a plethora of software and hardware implementations with their own unique technology stacks. Consequently, neuromorphic systems typically diverge from the expected computational model, which challenges the reproducibility and reliability across platforms. Additionally, most neuromorphic hardware is limited by its access via a single software framework with a limited set of training procedures. Here, we establish a common reference frame for computations in neuromorphic systems, dubbed the Neuromorphic Intermediate Representation (NIR). NIR defines a set of computational primitives as idealized continuous-time hybrid systems that can be composed into graphs and mapped to and from various neuromorphic technology stacks. By abstracting away assumptions around discretization and hardware constraints, NIR faithfully captures the fundamental computation, while simultaneously exposing the exact differences between the evaluated implementation and the idealized mathematical formalism. We reproduce three NIR graphs across 7 neuromorphic simulators and 4 hardware platforms, demonstrating support for an unprecedented number of neuromorphic systems. With NIR, we decouple the evolution of neuromorphic hardware and software, ultimately increasing the interoperability between platforms and improving accessibility to neuromorphic technologies. We believe that NIR is an important step towards the continued study of brain-inspired hardware and bottom-up approaches aimed at an improved understanding of the computational underpinnings of nervous systems.
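As an illustration of what a "continuous-time hybrid system" primitive can look like, a leaky integrate-and-fire neuron is commonly written as a continuous differential equation combined with a discrete reset event. The symbols below ($\tau$, $v_{\text{leak}}$, $R$, $v_{\text{thr}}$, $v_{\text{reset}}$) follow the textbook form and are assumptions for illustration, not a quotation of the NIR specification:

$$
\tau \frac{dv}{dt} = \bigl(v_{\text{leak}} - v(t)\bigr) + R\, I(t),
\qquad
v(t) \ge v_{\text{thr}} \;\Rightarrow\; \text{emit a spike and set } v(t) \leftarrow v_{\text{reset}} .
$$

The continuous part carries no time step, so any discretization (for example forward Euler with step $\Delta t$) is a choice made by the target platform rather than by the model itself.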

WaLiN-GUI: a graphical and auditory tool for neuron-based encoding

Oct 25, 2023

Abstract: Neuromorphic computing relies on spike-based, energy-efficient communication, inherently implying the need for conversion between real-valued (sensory) data and binary, sparse spiking representation. This is usually accomplished by using the real-valued data as current input to a spiking neuron model and tuning the neuron's parameters to match a desired, often biologically inspired, behaviour. We developed a tool, the WaLiN-GUI, that supports the investigation of neuron models and parameter combinations to identify suitable configurations for neuron-based encoding of sample-based data into spike trains. Thanks to the generalized LIF model implemented by default, alongside the LIF and Izhikevich neuron models, many spiking behaviours can be investigated out of the box, thus offering the possibility of tuning biologically plausible responses to the input data. The GUI is provided open source and with documentation, and it is easy to extend with further neuron models and to personalize with data analysis functions.
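The neuron-based encoding described above can be sketched with a plain LIF neuron driven by the samples as input current. This is a minimal sketch and not code from the WaLiN-GUI: the function name `lif_encode`, the forward-Euler update, and all parameter values (`dt`, `tau`, `threshold`, `v_reset`) are assumptions chosen for illustration.

```python
def lif_encode(samples, dt=1e-3, tau=20e-3, threshold=1.0, v_reset=0.0):
    """Encode real-valued samples into a binary spike train with one LIF neuron.

    Each sample is treated as a constant input current for one time step.
    All parameter values are illustrative assumptions.
    """
    v = 0.0
    spikes = []
    for current in samples:
        # Forward-Euler step of: tau * dv/dt = -v + current
        v += (dt / tau) * (current - v)
        if v >= threshold:
            spikes.append(1)
            v = v_reset  # reset the membrane after each spike
        else:
            spikes.append(0)
    return spikes


if __name__ == "__main__":
    for amplitude in (0.5, 2.0, 4.0):
        train = lif_encode([amplitude] * 200)
        print(amplitude, sum(train))  # spike count grows with input amplitude
```

With these values, an input that stays below the threshold produces no spikes, while larger inputs produce proportionally denser spike trains. Tuning `tau` and `threshold` changes this input-to-rate mapping, which is the kind of parameter exploration the abstract refers to.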

Braille Letter Reading: A Benchmark for Spatio-Temporal Pattern Recognition on Neuromorphic Hardware

May 30, 2022

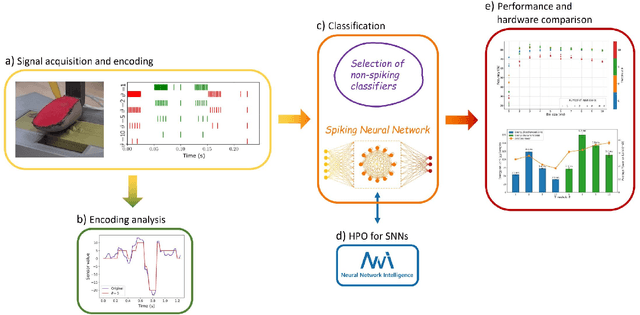

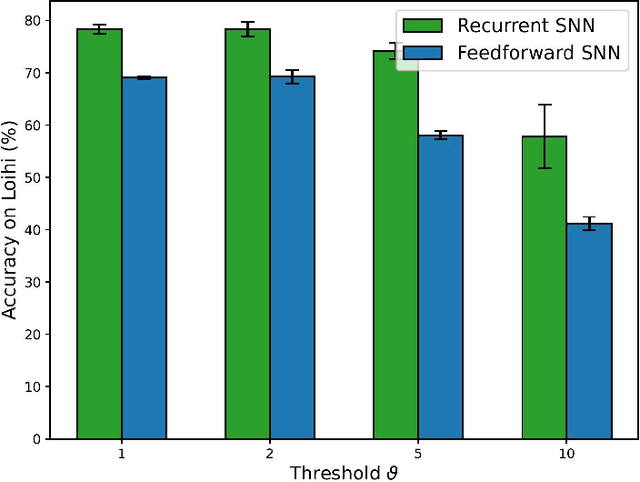

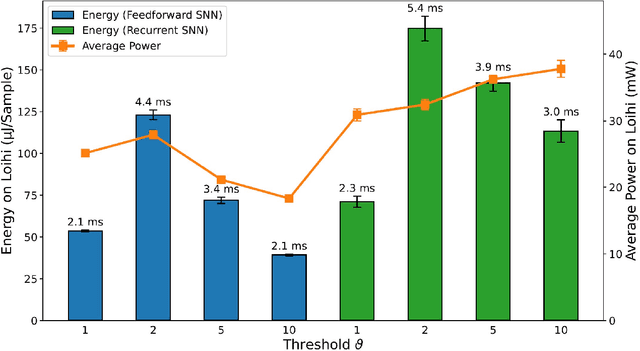

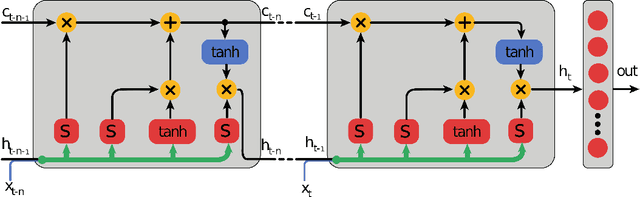

Abstract: Spatio-temporal pattern recognition is a fundamental ability of the brain which is required for numerous real-world applications. Recent deep learning approaches have reached outstanding accuracy in such tasks, but their implementation on conventional embedded solutions is still very computationally and energy expensive. Tactile sensing in robotic applications is a representative example where real-time processing and energy efficiency are required. Following a brain-inspired computing approach, we propose a new benchmark for spatio-temporal tactile pattern recognition at the edge through braille letter reading. We recorded a new braille letters dataset based on the capacitive tactile sensors/fingertip of the iCub robot, then we investigated the importance of temporal information and the impact of event-based encoding for spike-based/event-based computation. Afterwards, we trained and compared feed-forward and recurrent spiking neural networks (SNNs) offline using back-propagation through time with surrogate gradients, then we deployed them on the Intel Loihi neuromorphic chip for fast and efficient inference. We compared our approach with standard classifiers, in particular with a Long Short-Term Memory (LSTM) deployed on the embedded Nvidia Jetson GPU, in terms of classification accuracy, power/energy consumption and computational delay. Our results show that the LSTM outperforms the recurrent SNN in terms of accuracy by 14%. However, the recurrent SNN on Loihi is 237 times more energy-efficient than the LSTM on Jetson, requiring an average power of only 31 mW. This work proposes a new benchmark for tactile sensing and highlights the challenges and opportunities of event-based encoding, neuromorphic hardware and spike-based computing for spatio-temporal pattern recognition at the edge.
