Viswanath Pulabaigari

Hater-O-Genius Aggression Classification using Capsule Networks

May 24, 2021

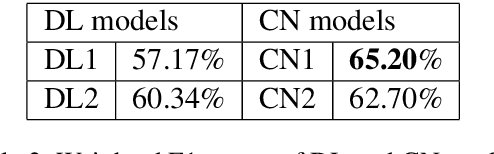

Abstract: Contending with hate speech in social media is one of the most challenging social problems of our time. There are various types of anti-social behavior in social media. Foremost among them is aggressive behavior, which causes many social issues, such as affecting the social lives and mental health of social media users. In this paper, we propose an end-to-end ensemble-based architecture to automatically identify and classify aggressive tweets. Tweets are classified into three categories: Covertly Aggressive, Overtly Aggressive, and Non-Aggressive. The proposed architecture is an ensemble of smaller subnetworks that are able to characterize the feature embeddings effectively. We demonstrate qualitatively that each of the smaller subnetworks is able to learn unique features. Our best model is an ensemble of Capsule Networks and achieves a 65.2% F1 score on the Facebook test set, a performance gain of 0.95% over the TRAC-2018 winners. The code and the model weights are publicly available at https://github.com/parthpatwa/Hater-O-Genius-Aggression-Classification-using-Capsule-Networks.

Deep Model Compression based on the Training History

Jan 30, 2021

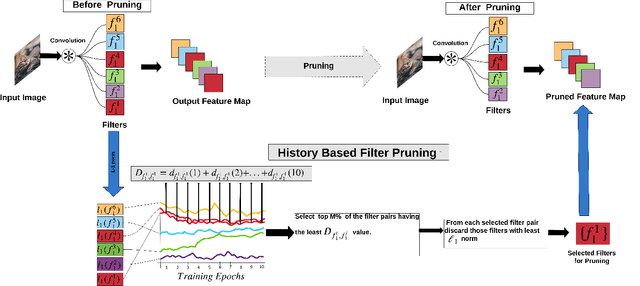

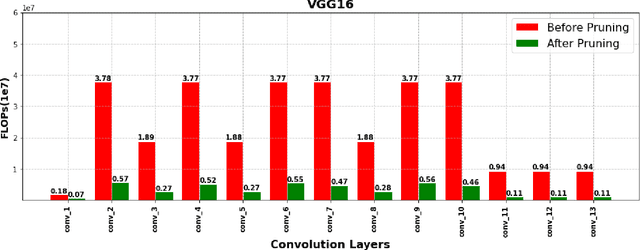

Abstract: Deep Convolutional Neural Networks (DCNNs) have shown promising results in several visual recognition problems, which motivated researchers to propose popular architectures such as LeNet, AlexNet, VGGNet, ResNet, and many more. These architectures come at the cost of high computational complexity and parameter storage. To reduce storage and computational complexity, deep model compression methods have evolved. We propose a novel History Based Filter Pruning (HBFP) method that utilizes network training history for filter pruning. Specifically, we prune the redundant filters by observing similar patterns in the L1-norms of filters (absolute sum of weights) over the training epochs. We iteratively prune the redundant filters of a CNN in three steps. First, we train the model and select the filter pairs with redundant filters in each pair. Next, we optimize the network to increase the similarity between the filters in a pair. This allows us to prune one filter from each pair based on its importance without much information loss. Finally, we retrain the network to regain the performance that is lost due to filter pruning. We test our approach on popular architectures: LeNet-5 on the MNIST dataset, and VGG-16, ResNet-56, and ResNet-110 on the CIFAR-10 dataset. The proposed pruning method outperforms the state of the art in terms of FLOPs (floating-point operations) reduction, reducing FLOPs by 97.98%, 83.42%, 78.43%, and 74.95% for the LeNet-5, VGG-16, ResNet-56, and ResNet-110 models, respectively, while maintaining a low error rate.
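The pair-selection idea in this abstract can be illustrated with a minimal sketch. It assumes that the "similar patterns" are measured as the summed absolute difference between two filters' L1-norm histories and that pairs are chosen greedily and disjointly; both choices, and the names `l1_norm` and `most_similar_pairs`, are hypothetical and not taken from the paper.

```python
from itertools import combinations


def l1_norm(filter_weights):
    """Absolute sum of a filter's weights (given as a flat list of floats)."""
    return sum(abs(w) for w in filter_weights)


def most_similar_pairs(norm_history, num_pairs):
    """Pick filter pairs whose L1-norm histories look most alike.

    norm_history[f] holds the L1-norm of filter f at each training epoch.
    Similarity is ASSUMED to be the summed absolute difference between two
    histories (smaller means more similar); the paper may use another measure.
    Returns up to num_pairs disjoint (i, j) index pairs.
    """
    scored = []
    for a, b in combinations(range(len(norm_history)), 2):
        diff = sum(abs(x - y) for x, y in zip(norm_history[a], norm_history[b]))
        scored.append((diff, a, b))
    scored.sort()

    used, pairs = set(), []
    for _, a, b in scored:
        if len(pairs) == num_pairs:
            break
        if a in used or b in used:
            continue  # each filter may belong to at most one pair
        pairs.append((a, b))
        used.update((a, b))
    return pairs


# Four filters tracked over three epochs (made-up numbers).
history = [
    [1.00, 1.20, 1.40],
    [1.00, 1.21, 1.39],
    [3.00, 2.00, 1.00],
    [0.50, 0.50, 0.50],
]
print(most_similar_pairs(history, 1))
```

In a real pipeline the histories would be recorded from the convolutional layers after every epoch, and one filter of each selected pair would then be pruned.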

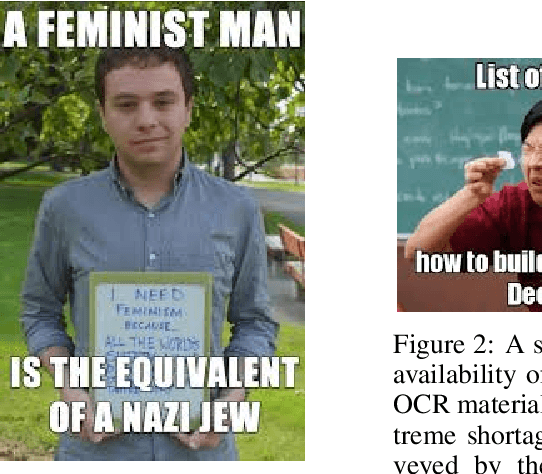

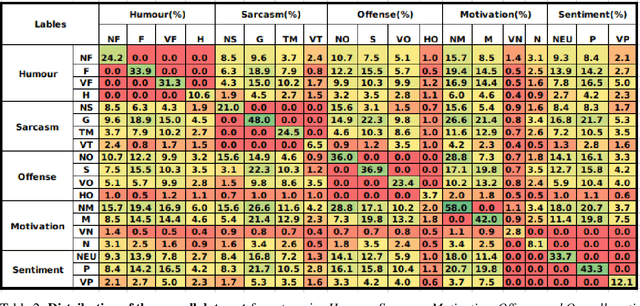

SemEval-2020 Task 8: Memotion Analysis -- The Visuo-Lingual Metaphor!

Aug 09, 2020

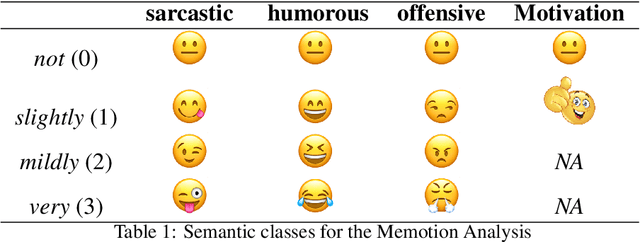

Abstract: Information on social media comprises various modalities such as textual, visual, and audio. NLP and Computer Vision communities often leverage only one prominent modality in isolation to study social media. However, the computational processing of Internet memes needs a hybrid approach. The growing ubiquity of Internet memes on social media platforms such as Facebook, Instagram, and Twitter further suggests that we cannot ignore such multimodal content anymore. To the best of our knowledge, meme emotion analysis has received little attention. The objective of this proposal is to bring the attention of the research community towards the automatic processing of Internet memes. The Memotion analysis task released approximately 10K annotated memes with human-annotated labels, namely sentiment (positive, negative, neutral), type of emotion (sarcastic, funny, offensive, motivation), and their corresponding intensity. The challenge consisted of three subtasks: sentiment (positive, negative, and neutral) analysis of memes, overall emotion (humour, sarcasm, offensive, and motivational) classification of memes, and classifying the intensity of meme emotion. The best performances achieved were F1 (macro average) scores of 0.35, 0.51, and 0.32 for the three subtasks, respectively.
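For readers unfamiliar with the metric, the macro-averaged F1 score reported above is the unweighted mean of the per-class F1 scores. The sketch below is a generic illustration of that definition, not the task's official scorer; the function name `macro_f1` and the toy labels are hypothetical.

```python
def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 over every label seen in either list."""
    labels = sorted(set(y_true) | set(y_pred))
    scores = []
    for label in labels:
        tp = sum(1 for t, p in zip(y_true, y_pred) if t == label and p == label)
        fp = sum(1 for t, p in zip(y_true, y_pred) if t != label and p == label)
        fn = sum(1 for t, p in zip(y_true, y_pred) if t == label and p != label)
        denom = 2 * tp + fp + fn
        # F1 = 2*TP / (2*TP + FP + FN); defined as 0 when the class never occurs.
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)


# Toy sentiment labels (made up for illustration).
truth = ["positive", "negative", "neutral", "positive"]
preds = ["positive", "negative", "positive", "positive"]
print(macro_f1(truth, preds))
```

Because every class contributes equally, a class that is never predicted correctly pulls the macro average down sharply.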

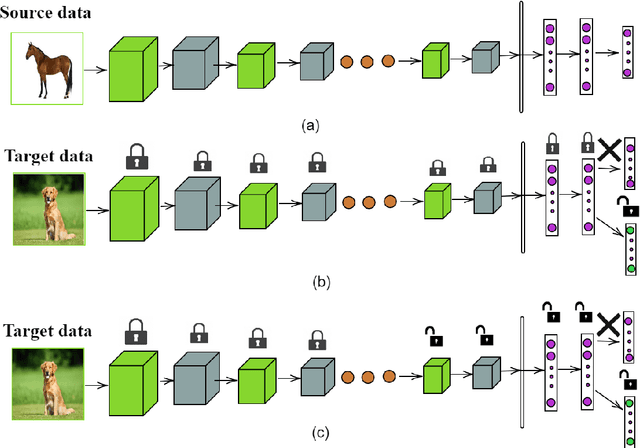

AutoTune: Automatically Tuning Convolutional Neural Networks for Improved Transfer Learning

Apr 25, 2020

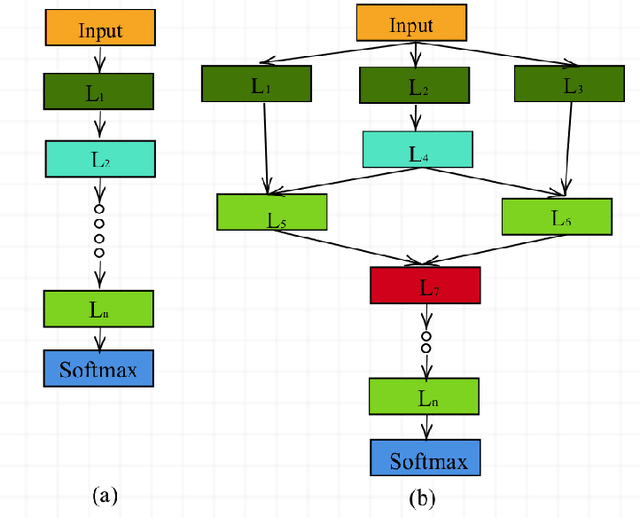

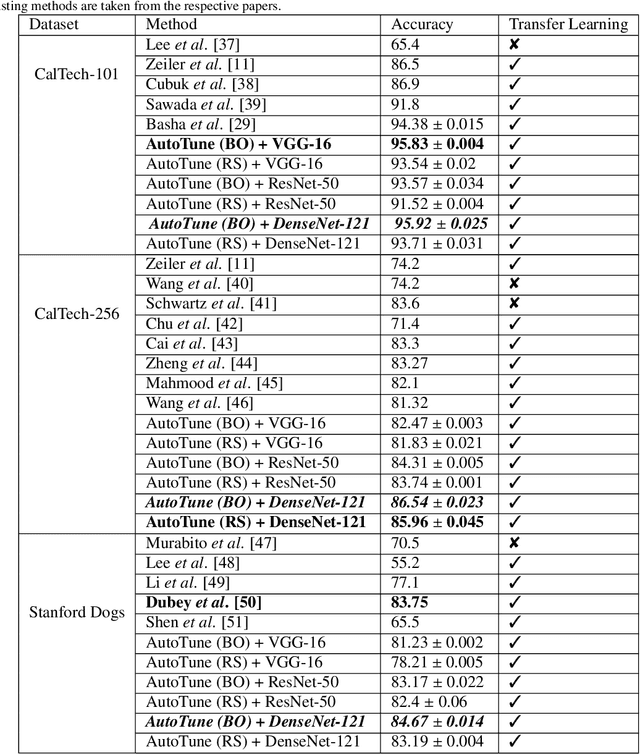

Abstract: Transfer learning enables solving a specific task with limited data by using pre-trained deep networks trained on large-scale datasets. Typically, while transferring the learned knowledge from the source task to the target task, the last few layers are fine-tuned (re-trained) over the target dataset. However, these layers were originally designed for the source task and might not be suitable for the target task. In this paper, we introduce a mechanism for automatically tuning Convolutional Neural Networks (CNNs) for improved transfer learning. The CNN layers are tuned with the knowledge from target data using Bayesian Optimization. Initially, we train the final layer of the base CNN model after replacing the number of neurons in the softmax layer with the number of classes involved in the target task. Next, the CNN is tuned automatically by observing the classification performance on the validation data (greedy criterion). To evaluate the performance of the proposed method, experiments are conducted on three benchmark datasets, namely CalTech-101, CalTech-256, and Stanford Dogs. The classification results obtained through the proposed AutoTune method outperform the standard baseline transfer learning methods over the three datasets, achieving $95.92\%$, $86.54\%$, and $84.67\%$ accuracy on CalTech-101, CalTech-256, and Stanford Dogs, respectively. The experimental results obtained in this study show that tuning the pre-trained CNN layers with the knowledge from the target dataset yields better transfer learning ability.

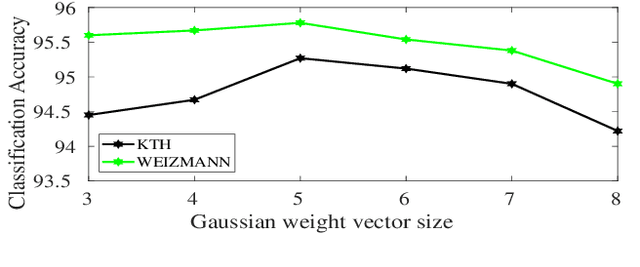

An Information-rich Sampling Technique over Spatio-Temporal CNN for Classification of Human Actions in Videos

Feb 07, 2020

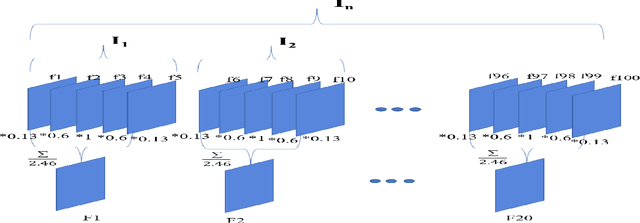

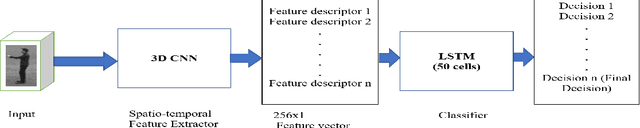

Abstract: We propose a novel scheme for human action recognition in videos, using a 3-dimensional Convolutional Neural Network (3D CNN) based classifier. Traditionally, in deep learning based human activity recognition approaches, either a few random frames or every $k^{th}$ frame of the video is considered for training the 3D CNN, where $k$ is a small positive integer, such as 4, 5, or 6. This kind of sampling reduces the volume of the input data, which speeds up training of the network and also avoids over-fitting to some extent, thus enhancing the performance of the 3D CNN model. In the proposed video sampling technique, $k$ consecutive frames of a video are aggregated into a single frame by computing a Gaussian-weighted summation of the $k$ frames. The resulting frame (aggregated frame) preserves the information better than the conventional approaches and is experimentally shown to perform better. In this paper, a 3D CNN architecture is proposed to extract the spatio-temporal features, followed by Long Short-Term Memory (LSTM) to recognize human actions. The proposed 3D CNN architecture is capable of handling videos where the camera is placed at a distance from the performer. Experiments are performed with the KTH and WEIZMANN human action datasets, on which the method is shown to produce results comparable with the state-of-the-art techniques.
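A minimal sketch of the Gaussian-weighted aggregation of $k$ consecutive frames follows. Several details are assumptions made for illustration and are not stated in the abstract: the weights are centred on the middle of each group, normalised to sum to 1, controlled by a hypothetical `sigma` parameter, and applied to non-overlapping groups of frames stored as flat lists of pixel intensities.

```python
import math


def gaussian_weights(k, sigma=1.0):
    """Gaussian weights over k positions, centred on the middle, summing to 1."""
    center = (k - 1) / 2
    raw = [math.exp(-((i - center) ** 2) / (2 * sigma ** 2)) for i in range(k)]
    total = sum(raw)
    return [w / total for w in raw]


def aggregate_frames(frames, k, sigma=1.0):
    """Collapse each run of k consecutive frames into one weighted-sum frame.

    frames: list of frames, each a flat list of pixel intensities.
    Groups are ASSUMED non-overlapping; a trailing incomplete group is dropped.
    """
    weights = gaussian_weights(k, sigma)
    aggregated = []
    for start in range(0, len(frames) - k + 1, k):
        group = frames[start:start + k]
        n_pixels = len(group[0])
        aggregated.append(
            [sum(w * frame[p] for w, frame in zip(weights, group))
             for p in range(n_pixels)]
        )
    return aggregated


# Eight tiny two-pixel frames aggregated with k = 4 give two output frames.
video = [[float(i), float(2 * i)] for i in range(8)]
print(len(aggregate_frames(video, k=4)))
```

Unlike keeping only every $k^{th}$ frame, each aggregated frame still receives a contribution from all $k$ inputs, with the middle frames weighted most heavily under these assumptions.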

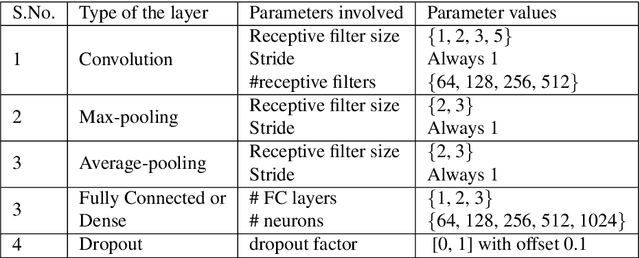

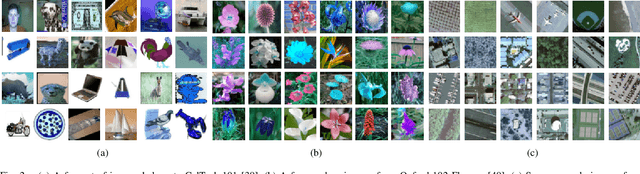

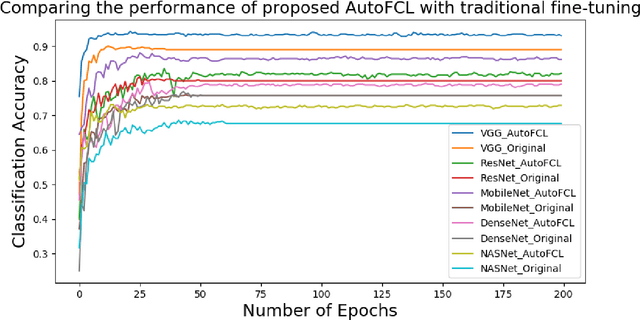

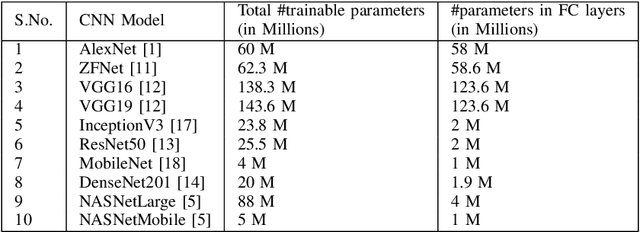

AutoFCL: Automatically Tuning Fully Connected Layers for Transfer Learning

Feb 05, 2020

Abstract: Deep Convolutional Neural Networks (CNNs) have evolved as popular machine learning models for image classification during the past few years, due to their ability to learn problem-specific features directly from the input images. The success of deep learning models calls for architecture engineering rather than hand-engineering the features. However, designing a state-of-the-art CNN for a given task remains non-trivial and challenging. While transferring the learned knowledge from one task to another, fine-tuning with target-dependent fully connected layers produces better results on the target task. In this paper, the proposed AutoFCL model attempts to learn the structure of the Fully Connected (FC) layers of a CNN automatically using Bayesian optimization. To evaluate the performance of the proposed AutoFCL, we utilize five popular CNN models, namely VGG-16, ResNet, DenseNet, MobileNet, and NASNetMobile. The experiments are conducted on three benchmark datasets, namely CalTech-101, Oxford-102 Flowers, and UC Merced Land Use. Fine-tuning the newly learned (target-dependent) FC layers leads to state-of-the-art performance, according to the experiments carried out in this research. The proposed AutoFCL method outperforms the existing methods on the CalTech-101 and Oxford-102 Flowers datasets, achieving accuracies of 94.38% and 98.89%, respectively. On the UC Merced Land Use dataset, our method achieves comparable performance with 96.83% accuracy.

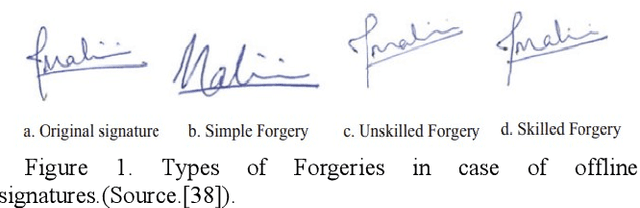

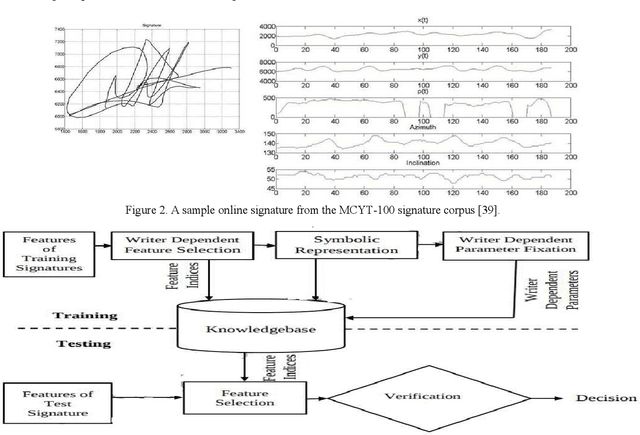

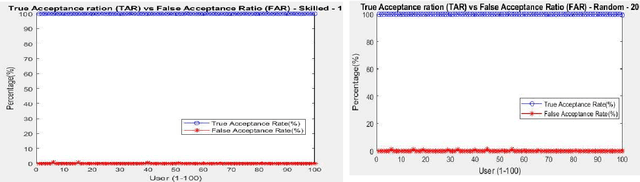

Online Signature Verification Based on Writer Specific Feature Selection and Fuzzy Similarity Measure

May 21, 2019

Abstract: Online Signature Verification (OSV) is a widely used biometric attribute for user behavioral characteristic verification in digital forensics. In this manuscript, owing to large intra-individual variability, we propose a novel method for OSV based on an interval symbolic representation and a fuzzy similarity measure grounded on writer specific parameter selection. The two parameters, namely the writer specific acceptance threshold and the optimal feature set to be used for authenticating the writer, are selected based on the minimum equal error rate (EER) attained during the parameter fixation phase using the training signature samples. This contrasts with current techniques for OSV, which are primarily writer independent and in which a common set of features and a common acceptance threshold are chosen. To prove the robustness of our system, we have exhaustively assessed it with four standard datasets, namely MCYT-100 (DB1), MCYT-330 (DB2), SUSIG-Visual corpus, and SVC-2004-Task2. Experimental outcomes confirm the effectiveness of fuzzy similarity metric-based writer dependent parameter selection for OSV by achieving a lower error rate compared to many recent and state-of-the-art OSV models.
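As a rough illustration of choosing an acceptance threshold by equal error rate, the sketch below sweeps candidate thresholds over one writer's similarity scores and keeps the one where the false acceptance rate (FAR) and false rejection rate (FRR) are closest. It assumes scores are similarities (higher means more likely genuine) and approximates the EER as the mean of FAR and FRR at that point; the function name and the scores are hypothetical, and the paper's parameter fixation procedure may differ.

```python
def equal_error_rate(genuine_scores, forgery_scores):
    """Return (eer, threshold) for similarity scores of one writer.

    A signature is accepted when its score >= threshold.
    FRR = fraction of genuine samples rejected,
    FAR = fraction of forgeries accepted.
    The EER is approximated at the threshold where |FAR - FRR| is smallest.
    """
    best = None
    for t in sorted(set(genuine_scores) | set(forgery_scores)):
        frr = sum(1 for s in genuine_scores if s < t) / len(genuine_scores)
        far = sum(1 for s in forgery_scores if s >= t) / len(forgery_scores)
        gap = abs(far - frr)
        if best is None or gap < best[0]:
            best = (gap, (far + frr) / 2, t)
    return best[1], best[2]


# Made-up training scores for a single writer.
genuine = [0.9, 0.8, 0.4, 0.7]
forgery = [0.5, 0.2, 0.3, 0.1]
eer, threshold = equal_error_rate(genuine, forgery)
print(eer, threshold)
```

Running this per writer, and per candidate feature subset, would give a writer specific threshold and feature set in the spirit of the abstract, under the assumptions stated above.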

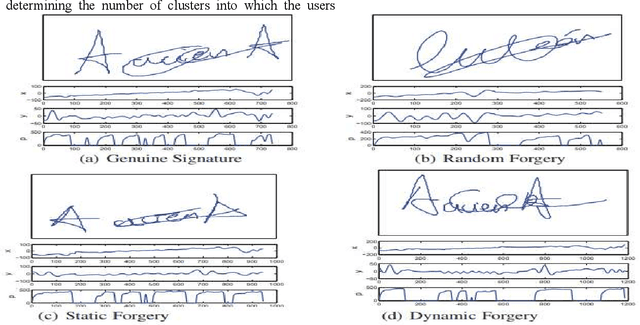

OSVNet: Convolutional Siamese Network for Writer Independent Online Signature Verification

May 21, 2019

Abstract: Online signature verification (OSV) is one of the most challenging tasks in writer identification and digital forensics. Owing to the large intra-individual variability, there is a critical requirement to accurately learn the intra-personal variations of the signature to achieve higher classification accuracy. To achieve this, we propose an OSV framework based on a deep convolutional Siamese network (DCSN). DCSN automatically extracts robust feature descriptions based on a metric-based loss function, which decreases intra-writer variability (Genuine-Genuine) and increases inter-individual variability (Genuine-Forgery). This directs the DCSN toward effective discriminative representation learning for online signatures, and we extend it to a one-shot learning framework. Comprehensive experimentation conducted on three widely accepted benchmark datasets, MCYT-100 (DB1), MCYT-330 (DB2), and SVC-2004-Task2, demonstrates the capability of our framework to distinguish genuine and forgery samples. Experimental results confirm the efficiency of deep convolutional Siamese network based OSV by achieving a lower error rate compared to many recent and state-of-the-art OSV techniques.
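The abstract does not name the metric-based loss. One common choice for Siamese networks is the contrastive loss, sketched below purely as an assumption: it pulls Genuine-Genuine embedding pairs together and pushes Genuine-Forgery pairs at least a margin apart. The function names, the `margin` parameter, and the toy embeddings are hypothetical.

```python
import math


def euclidean(a, b):
    """Euclidean distance between two embedding vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def contrastive_loss(emb_a, emb_b, genuine_pair, margin=1.0):
    """Contrastive loss for one pair of signature embeddings.

    genuine_pair=True  (Genuine-Genuine): loss grows with distance,
                       so training pulls the embeddings together.
    genuine_pair=False (Genuine-Forgery): loss is non-zero only while the
                       distance is below the margin, so training pushes
                       the embeddings apart.
    """
    d = euclidean(emb_a, emb_b)
    if genuine_pair:
        return d ** 2
    return max(0.0, margin - d) ** 2


# A distant genuine pair is penalised; a distant forgery pair is not.
print(contrastive_loss([0.0, 0.0], [3.0, 4.0], genuine_pair=True))
print(contrastive_loss([0.0, 0.0], [3.0, 4.0], genuine_pair=False))
```

In a Siamese setup both embeddings come from the same network with shared weights, so minimising this loss shapes a single embedding space for all signatures.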

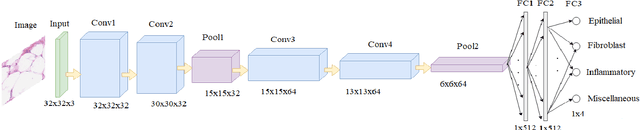

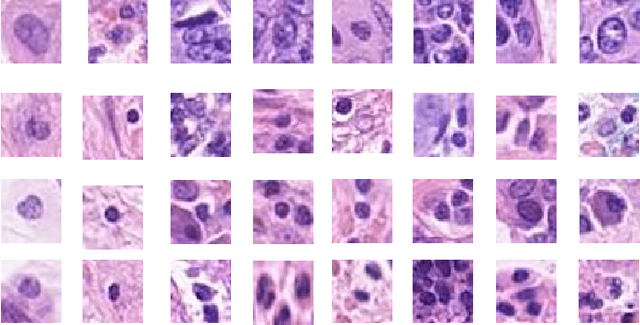

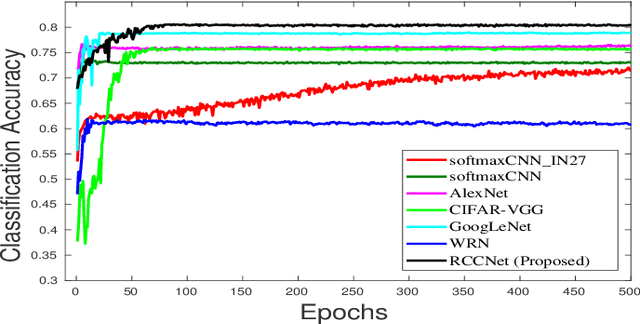

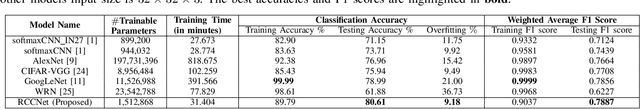

RCCNet: An Efficient Convolutional Neural Network for Histological Routine Colon Cancer Nuclei Classification

Oct 20, 2018

Abstract: Efficient and precise classification of histological cell nuclei is of utmost importance due to its potential applications in the field of medical image analysis. It would help medical practitioners to better understand and explore various factors for cancer treatment. The classification of histological cell nuclei is a challenging task due to cellular heterogeneity. This paper proposes an efficient Convolutional Neural Network (CNN) based architecture, named RCCNet, for the classification of histological routine colon cancer nuclei. The main objective of this network is to keep the CNN model as simple as possible. The proposed RCCNet model consists of only 1,512,868 learnable parameters, significantly fewer than popular CNN models such as AlexNet, CIFARVGG, GoogLeNet, and WRN. The experiments are conducted over the publicly available routine colon cancer histological dataset "CRCHistoPhenotypes". The results of the proposed RCCNet model are compared with five state-of-the-art CNN models in terms of accuracy, weighted average F1 score, and training time. The proposed method achieves a classification accuracy of 80.61% and a weighted average F1 score of 0.7887. The proposed RCCNet is more efficient and generalizes better in terms of training time and data over-fitting, respectively.
