Veronika Eyring

DLR, Institut für Physik der Atmosphäre, Oberpfaffenhofen, Germany, University of Bremen, Institute of Environmental Physics, Bremen, Germany

Fisher Information, Training and Bias in Fourier Regression Models

Oct 08, 2025Abstract:Motivated by the growing interest in quantum machine learning, in particular quantum neural networks (QNNs), we study how recently introduced evaluation metrics based on the Fisher information matrix (FIM) are effective for predicting their training and prediction performance. We exploit the equivalence between a broad class of QNNs and Fourier models, and study the interplay between the \emph{effective dimension} and the \emph{bias} of a model towards a given task, investigating how these affect the model's training and performance. We show that for a model that is completely agnostic, or unbiased, towards the function to be learned, a higher effective dimension likely results in a better trainability and performance. On the other hand, for models that are biased towards the function to be learned a lower effective dimension is likely beneficial during training. To obtain these results, we derive an analytical expression of the FIM for Fourier models and identify the features controlling a model's effective dimension. This allows us to construct models with tunable effective dimension and bias, and to compare their training. We furthermore introduce a tensor network representation of the considered Fourier models, which could be a tool of independent interest for the analysis of QNN models. Overall, these findings provide an explicit example of the interplay between geometrical properties, model-task alignment and training, which are relevant for the broader machine learning community.

Towards Physically Consistent Deep Learning For Climate Model Parameterizations

Jun 06, 2024Abstract:Climate models play a critical role in understanding and projecting climate change. Due to their complexity, their horizontal resolution of ~40-100 km remains too coarse to resolve processes such as clouds and convection, which need to be approximated via parameterizations. These parameterizations are a major source of systematic errors and large uncertainties in climate projections. Deep learning (DL)-based parameterizations, trained on computationally expensive, short high-resolution simulations, have shown great promise for improving climate models in that regard. However, their lack of interpretability and tendency to learn spurious non-physical correlations result in reduced trust in the climate simulation. We propose an efficient supervised learning framework for DL-based parameterizations that leads to physically consistent models with improved interpretability and negligible computational overhead compared to standard supervised training. First, key features determining the target physical processes are uncovered. Subsequently, the neural network is fine-tuned using only those relevant features. We show empirically that our method robustly identifies a small subset of the inputs as actual physical drivers, therefore, removing spurious non-physical relationships. This results in by design physically consistent and interpretable neural networks while maintaining the predictive performance of standard black-box DL-based parameterizations. Our framework represents a crucial step in addressing a major challenge in data-driven climate model parameterizations by respecting the underlying physical processes, and may also benefit physically consistent deep learning in other research fields.

Transferring climate change knowledge

Sep 26, 2023Abstract:Accurate climate projections are required for climate adaptation and mitigation. Earth system model simulations, used to project climate change, inherently make approximations in their representation of small-scale physical processes, such as clouds, that are at the root of the uncertainties in global mean temperature's response to increased greenhouse gas concentrations. Several approaches have been developed to use historical observations to constrain future projections and reduce uncertainties in climate projections and climate feedbacks. Yet those methods cannot capture the non-linear complexity inherent in the climate system. Using a Transfer Learning approach, we show that Machine Learning, in particular Deep Neural Networks, can be used to optimally leverage and merge the knowledge gained from Earth system model simulations and historical observations to more accurately project global surface temperature fields in the 21st century. For the Shared Socioeconomic Pathways (SSPs) 2-4.5, 3-7.0 and 5-8.5, we refine regional estimates and the global projection of the average global temperature in 2081-2098 (with respect to the period 1850-1900) to 2.73{\deg}C (2.44-3.11{\deg}C), 3.92{\deg}C (3.5-4.47{\deg}C) and 4.53{\deg}C (3.69-5.5{\deg}C), respectively, compared to the unconstrained 2.7{\deg}C (1.65-3.8{\deg}C), 3.71{\deg}C (2.56-4.97{\deg}C) and 4.47{\deg}C (2.95-6.02{\deg}C). Our findings show that the 1.5{\deg}C threshold of the Paris' agreement will be crossed in 2031 (2028-2034) for SSP2-4.5, in 2029 (2027-2031) for SSP3-7.0 and in 2028 (2025-2031) for SSP5-8.5. Similarly, the 2{\deg}C threshold will be exceeded in 2051 (2045-2059), 2044 (2040-2047) and 2042 (2038-2047) respectively. Our new method provides more accurate climate projections urgently required for climate adaptation.

ClimSim: An open large-scale dataset for training high-resolution physics emulators in hybrid multi-scale climate simulators

Jun 16, 2023Abstract:Modern climate projections lack adequate spatial and temporal resolution due to computational constraints. A consequence is inaccurate and imprecise prediction of critical processes such as storms. Hybrid methods that combine physics with machine learning (ML) have introduced a new generation of higher fidelity climate simulators that can sidestep Moore's Law by outsourcing compute-hungry, short, high-resolution simulations to ML emulators. However, this hybrid ML-physics simulation approach requires domain-specific treatment and has been inaccessible to ML experts because of lack of training data and relevant, easy-to-use workflows. We present ClimSim, the largest-ever dataset designed for hybrid ML-physics research. It comprises multi-scale climate simulations, developed by a consortium of climate scientists and ML researchers. It consists of 5.7 billion pairs of multivariate input and output vectors that isolate the influence of locally-nested, high-resolution, high-fidelity physics on a host climate simulator's macro-scale physical state. The dataset is global in coverage, spans multiple years at high sampling frequency, and is designed such that resulting emulators are compatible with downstream coupling into operational climate simulators. We implement a range of deterministic and stochastic regression baselines to highlight the ML challenges and their scoring. The data (https://huggingface.co/datasets/LEAP/ClimSim_high-res) and code (https://leap-stc.github.io/ClimSim) are released openly to support the development of hybrid ML-physics and high-fidelity climate simulations for the benefit of science and society.

Bootstrap aggregation and confidence measures to improve time series causal discovery

Jun 15, 2023Abstract:Causal discovery methods have demonstrated the ability to identify the time series graphs representing the causal temporal dependency structure of dynamical systems. However, they do not include a measure of the confidence of the estimated links. Here, we introduce a novel bootstrap aggregation (bagging) and confidence measure method that is combined with time series causal discovery. This new method allows measuring confidence for the links of the time series graphs calculated by causal discovery methods. This is done by bootstrapping the original times series data set while preserving temporal dependencies. Next to confidence measures, aggregating the bootstrapped graphs by majority voting yields a final aggregated output graph. In this work, we combine our approach with the state-of-the-art conditional-independence-based algorithm PCMCI+. With extensive numerical experiments we empirically demonstrate that, in addition to providing confidence measures for links, Bagged-PCMCI+ improves the precision and recall of its base algorithm PCMCI+. Specifically, Bagged-PCMCI+ has a higher detection power regarding adjacencies and a higher precision in orienting contemporaneous edges while at the same time showing a lower rate of false positives. These performance improvements are especially pronounced in the more challenging settings (short time sample size, large number of variables, high autocorrelation). Our bootstrap approach can also be combined with other time series causal discovery algorithms and can be of considerable use in many real-world applications, especially when confidence measures for the links are desired.

Deep Learning Based Cloud Cover Parameterization for ICON

Dec 21, 2021

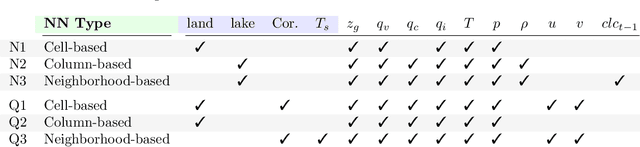

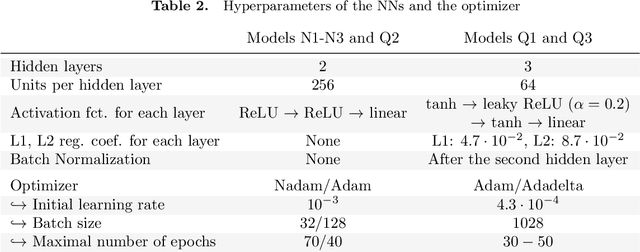

Abstract:A promising approach to improve cloud parameterizations within climate models and thus climate projections is to use deep learning in combination with training data from storm-resolving model (SRM) simulations. The Icosahedral Non-Hydrostatic (ICON) modeling framework permits simulations ranging from numerical weather prediction to climate projections, making it an ideal target to develop neural network (NN) based parameterizations for sub-grid scale processes. Within the ICON framework, we train NN based cloud cover parameterizations with coarse-grained data based on realistic regional and global ICON SRM simulations. We set up three different types of NNs that differ in the degree of vertical locality they assume for diagnosing cloud cover from coarse-grained atmospheric state variables. The NNs accurately estimate sub-grid scale cloud cover from coarse-grained data that has similar geographical characteristics as their training data. Additionally, globally trained NNs can reproduce sub-grid scale cloud cover of the regional SRM simulation. Using the game-theory based interpretability library SHapley Additive exPlanations, we identify an overemphasis on specific humidity and cloud ice as the reason why our column-based NN cannot perfectly generalize from the global to the regional coarse-grained SRM data. The interpretability tool also helps visualize similarities and differences in feature importance between regionally and globally trained column-based NNs, and reveals a local relationship between their cloud cover predictions and the thermodynamic environment. Our results show the potential of deep learning to derive accurate yet interpretable cloud cover parameterizations from global SRMs, and suggest that neighborhood-based models may be a good compromise between accuracy and generalizability.

On the Generalization of Agricultural Drought Classification from Climate Data

Nov 30, 2021

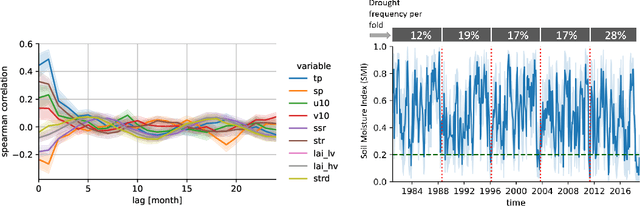

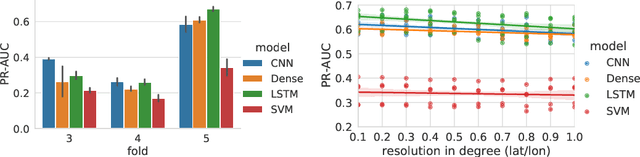

Abstract:Climate change is expected to increase the likelihood of drought events, with severe implications for food security. Unlike other natural disasters, droughts have a slow onset and depend on various external factors, making drought detection in climate data difficult. In contrast to existing works that rely on simple relative drought indices as ground-truth data, we build upon soil moisture index (SMI) obtained from a hydrological model. This index is directly related to insufficiently available water to vegetation. Given ERA5-Land climate input data of six months with land use information from MODIS satellite observation, we compare different models with and without sequential inductive bias in classifying droughts based on SMI. We use PR-AUC as the evaluation measure to account for the class imbalance and obtain promising results despite a challenging time-based split. We further show in an ablation study that the models retain their predictive capabilities given input data of coarser resolutions, as frequently encountered in climate models.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge