Vanessa Wirth

3D Face Reconstruction From Radar Images

Dec 03, 2024

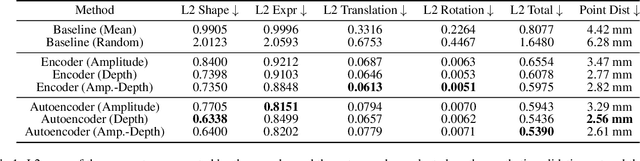

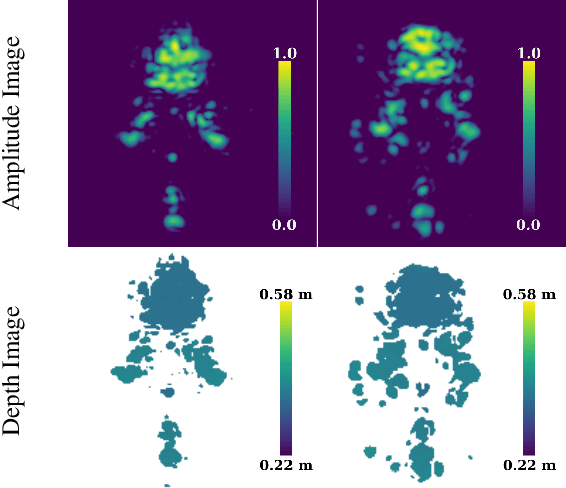

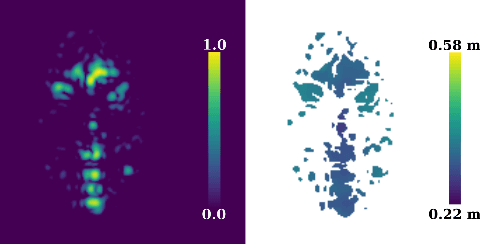

Abstract: The 3D reconstruction of faces attracts wide attention in computer vision and is used in many fields of application, for example, animation, virtual reality, and even forensics. This work is motivated by monitoring patients in sleep laboratories. Due to their unique characteristics, sensors from the radar domain have advantages compared to optical sensors, namely penetration of electrically non-conductive materials and independence of light. These advantages of radar signals unlock new applications and require adaptation of 3D reconstruction frameworks. We propose a novel model-based method for 3D reconstruction from radar images. We generate a dataset of synthetic radar images with a physics-based but non-differentiable radar renderer. This dataset is used to train a CNN-based encoder to estimate the parameters of a 3D morphable face model. Whilst the encoder alone already leads to strong reconstructions of synthetic data, we extend our reconstruction in an Analysis-by-Synthesis fashion to a model-based autoencoder. This is enabled by learning the rendering process in the decoder, which acts as an object-specific differentiable radar renderer. Subsequently, the combination of both network parts is trained to minimize both the loss of the parameters and the loss of the resulting reconstructed radar image. This has the additional benefit that, at test time, the parameters can be further optimized by fine-tuning the autoencoder in an unsupervised manner on the image loss. We evaluated our framework on generated synthetic face images as well as on real radar images with 3D ground truth of four individuals.
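The combined training objective described in this abstract could be written roughly as follows. This is only a sketch: the symbols $E$ (encoder), $D$ (learned decoder), $I$ (input radar image), $p$ (ground-truth morphable model parameters), the weights $\lambda_p$ and $\lambda_I$, and the choice of squared $\ell_2$ norms are all assumptions made for illustration and are not stated in the abstract.

```latex
\mathcal{L}_{\text{train}}
  = \lambda_p \,\bigl\lVert E(I) - p \bigr\rVert_2^2
  + \lambda_I \,\bigl\lVert D\bigl(E(I)\bigr) - I \bigr\rVert_2^2
\qquad
\mathcal{L}_{\text{test}}
  = \bigl\lVert D\bigl(E(I)\bigr) - I \bigr\rVert_2^2
```

Under these assumptions, the test-time objective drops the parameter term because no ground-truth parameters are available, which is what makes the fine-tuning unsupervised.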

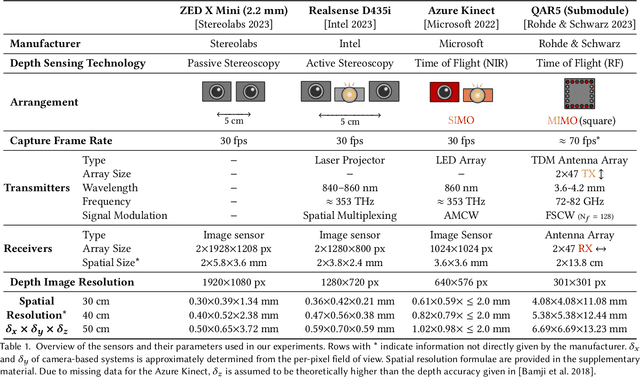

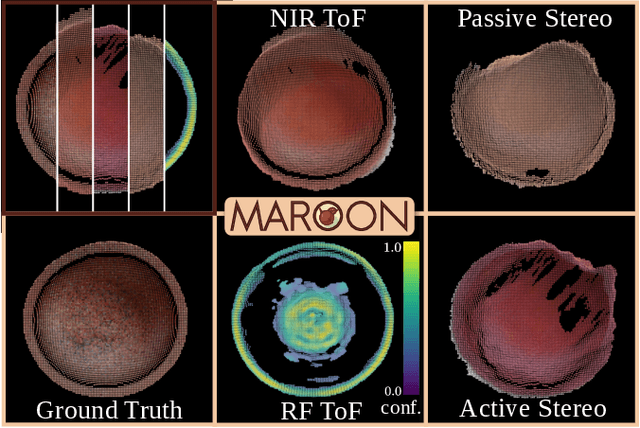

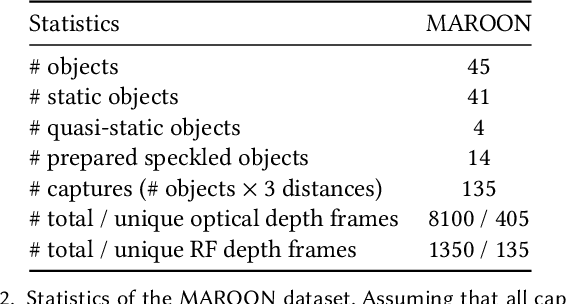

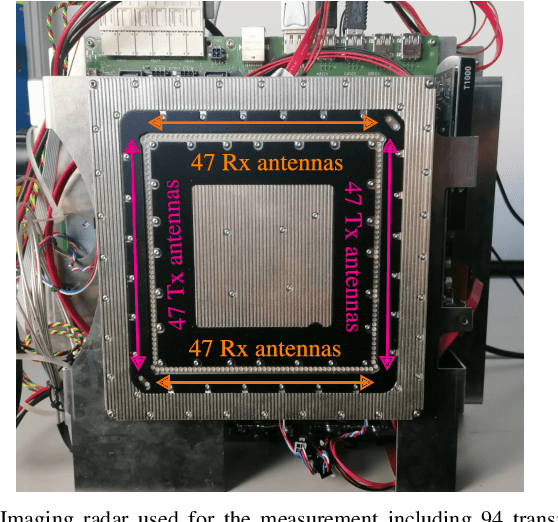

MAROON: A Framework for the Joint Characterization of Near-Field High-Resolution Radar and Optical Depth Imaging Techniques

Nov 01, 2024

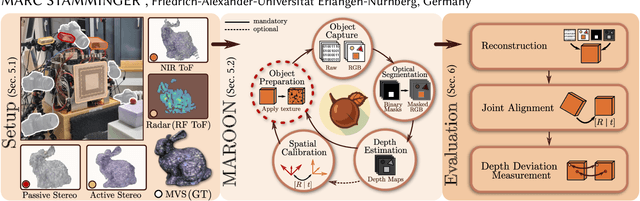

Abstract: Utilizing the complementary strengths of wavelength-specific range or depth sensors is crucial for robust computer-assisted tasks such as autonomous driving. Despite this, there is still little research at the intersection of optical depth sensors and radars operating at close range, where the target is decimeters away from the sensors. Together with a growing interest in high-resolution imaging radars operating in the near field, the question arises of how these sensors behave in comparison to their traditional optical counterparts. In this work, we take on the unique challenge of jointly characterizing depth imagers from both the optical and radio-frequency domains using a multimodal spatial calibration. We collect data from four depth imagers: three optical sensors of varying operation principle and an imaging radar. We provide a comprehensive evaluation of their depth measurements with respect to distinct object materials, geometries, and object-to-sensor distances. Specifically, we reveal scattering effects of partially transmissive materials and investigate the response of radio-frequency signals. All object measurements will be made public in the form of a multimodal dataset called MAROON.

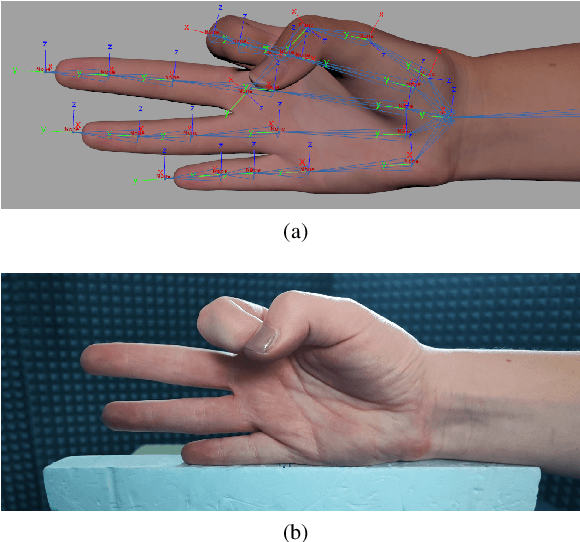

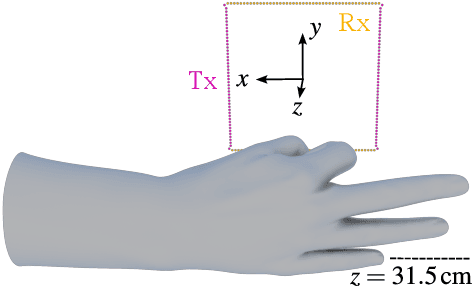

An Efficient yet High-Performance Method for Precise Radar-Based Imaging of Human Hand Poses

Jun 19, 2024

Abstract: Contactless hand pose estimation requires sensors that provide precise spatial information and low computational complexity for real-time processing. Unlike vision-based systems, radar offers lighting independence and direct motion assessments. Yet, there is limited research balancing real-time constraints, suitable frame rates for motion evaluations, and the need for precise 3D data. To address this, we extend the ultra-efficient two-tone hand imaging method from our prior work to a three-tone approach. Maintaining high frame rates and real-time constraints, this approach significantly enhances reconstruction accuracy and precision. We assess these measures by evaluating reconstruction results for different hand poses obtained by an imaging radar. Accuracy is assessed against ground truth from a spatially calibrated photogrammetry setup, while precision is measured using 3D-printed hand poses. The results emphasize the method's great potential for future radar-based hand sensing.

Automatic Spatial Calibration of Near-Field MIMO Radar With Respect to Optical Sensors

Mar 16, 2024

Abstract: Despite an emerging interest in MIMO radar, the utilization of its complementary strengths in combination with optical sensors has so far been limited to far-field applications, due to the challenges that arise from mutual sensor calibration in the near field. In fact, most related approaches in the autonomous industry propose target-based calibration methods using corner reflectors that have proven to be unsuitable for the near field. In contrast, we propose a novel, joint calibration approach for optical RGB-D sensors and MIMO radars that is designed to operate in the radar's near-field range, within decimeters from the sensors. Our pipeline consists of a bespoke calibration target, allowing for automatic target detection and localization, followed by the spatial calibration of the two sensor coordinate systems through target registration. We validate our approach using two different depth sensing technologies from the optical domain. The experiments show the efficiency and accuracy of our calibration for various target displacements, as well as the robustness of our localization with respect to signal ambiguities.

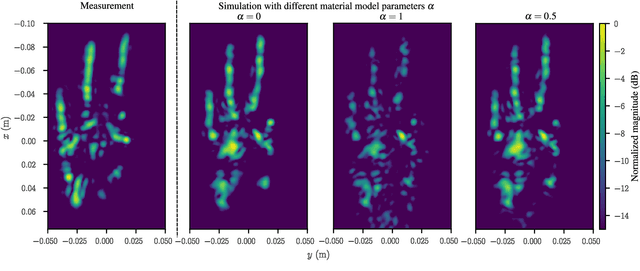

A Realistic Radar Ray Tracing Simulator for Hand Pose Imaging

Jul 28, 2023

Abstract: With the increasing popularity of human-computer interaction applications, there is also growing interest in generating sufficiently large and diverse data sets for automatic radar-based recognition of hand poses and gestures. Radar simulations are a vital approach to generating training data (e.g., for machine learning). Therefore, this work applies a ray tracing method to radar imaging of the hand. The performance of the proposed simulation approach is verified by a comparison of simulation and measurement data based on an imaging radar with a high lateral resolution. In addition, the surface material model incorporated into the ray tracer is highlighted in more detail and parameterized for radar hand imaging. Measurements and simulations show a very high similarity between synthetic and real radar image captures. The presented results demonstrate that it is possible to generate very realistic simulations of radar measurement data even for complex radar hand pose imaging systems.

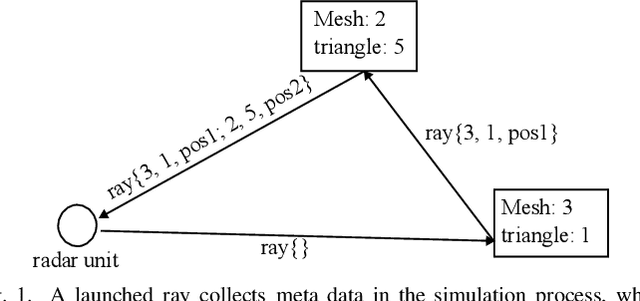

Achieving Efficient and Realistic Full-Radar Simulations and Automatic Data Annotation by exploiting Ray Meta Data of a Radar Ray Tracing Simulator

May 23, 2023

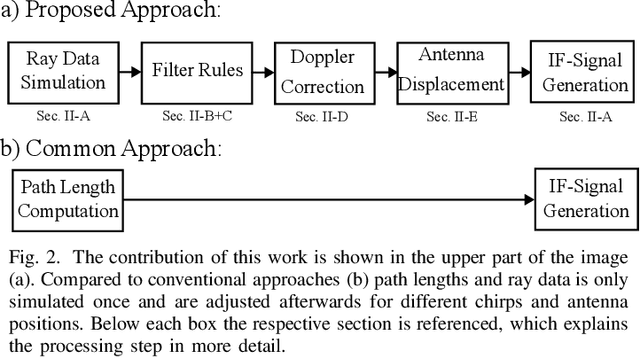

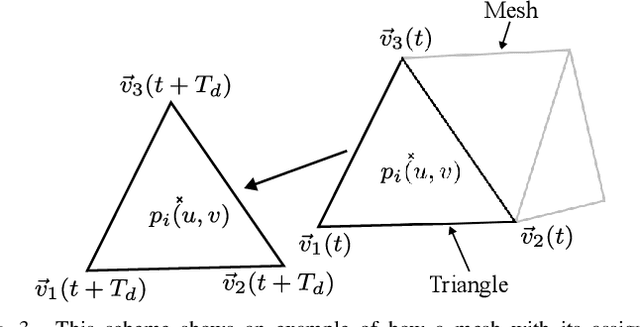

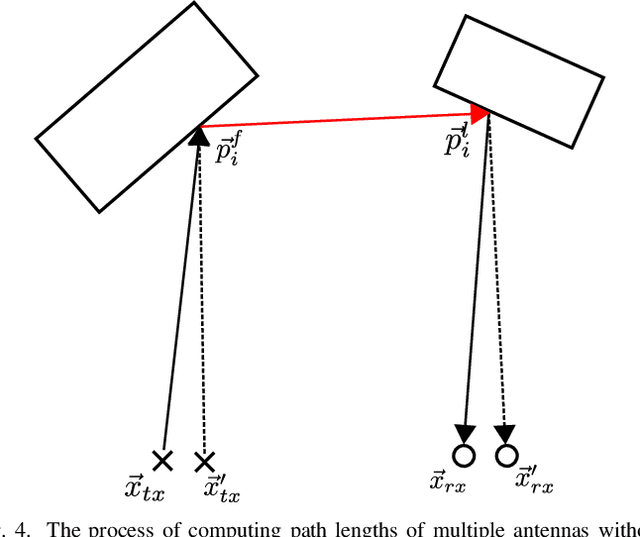

Abstract: In this work, a novel radar simulation concept is introduced that allows realistic radar data to be simulated efficiently for range, Doppler, and arbitrary antenna positions. Further, it makes it possible to automatically annotate the simulated radar signal by decomposing it into different parts. This approach not only makes almost perfect annotations possible, but also allows the annotation of exotic effects, such as multi-path effects, and the labeling of signal parts originating from different parts of an object. This is possible by adapting the computation process of a Monte Carlo shooting and bouncing rays (SBR) simulator. By considering the hits of each simulated ray, various meta data can be stored, such as hit position, mesh pointer, object IDs, and many more. This collected meta data can then be utilized to predict the change of path lengths introduced by object motion to obtain Doppler information, or to apply specific ray filter rules in order to obtain radar signals that only fulfil specific conditions, such as multiple bounces or containing specific object IDs. Using this approach, perfect and otherwise almost impossible annotation schemes can be realized.
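The two uses of ray meta data named in this abstract, Doppler from path-length change and rule-based ray filtering, can be illustrated with a small Python sketch. It is not the authors' simulator: the `Hit` and `Ray` types, the function names, and the simplification that each hit point moves rigidly with its object's velocity are all hypothetical choices made for illustration. The only physics used is the standard relation that a path length changing at rate dL/dt produces a Doppler shift of -(dL/dt)/λ.

```python
import math
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple

Vec3 = Tuple[float, float, float]


@dataclass(frozen=True)
class Hit:
    position: Vec3   # where the ray struck a surface (meters)
    object_id: int   # which scene object was struck


@dataclass(frozen=True)
class Ray:
    hits: Tuple[Hit, ...]  # bounces in the order they occurred


def path_length(ray: Ray, tx: Vec3, rx: Vec3) -> float:
    """Total length of the polyline tx -> hit_1 -> ... -> hit_n -> rx."""
    points = [tx] + [h.position for h in ray.hits] + [rx]
    return sum(math.dist(a, b) for a, b in zip(points, points[1:]))


def displaced(ray: Ray, velocities: Dict[int, Vec3], dt: float) -> Ray:
    """Move every hit point by its object's velocity (assumed rigid motion)."""
    zero = (0.0, 0.0, 0.0)
    new_hits = []
    for h in ray.hits:
        v = velocities.get(h.object_id, zero)  # unknown objects are static
        pos = tuple(p + vi * dt for p, vi in zip(h.position, v))
        new_hits.append(Hit(pos, h.object_id))
    return Ray(tuple(new_hits))


def doppler_shift_hz(ray: Ray, tx: Vec3, rx: Vec3,
                     velocities: Dict[int, Vec3],
                     wavelength: float, dt: float = 1e-4) -> float:
    """Finite-difference estimate of f_d = -(dL/dt) / wavelength."""
    before = path_length(ray, tx, rx)
    after = path_length(displaced(ray, velocities, dt), tx, rx)
    return -(after - before) / (wavelength * dt)


def filter_rays(rays: List[Ray], min_bounces: int = 1,
                required_object: Optional[int] = None) -> List[Ray]:
    """Keep rays with enough bounces that (optionally) touched a given object."""
    selected = []
    for ray in rays:
        if len(ray.hits) < min_bounces:
            continue
        if required_object is not None and all(
                h.object_id != required_object for h in ray.hits):
            continue
        selected.append(ray)
    return selected
```

For example, calling `filter_rays(rays, min_bounces=2)` would isolate the multi-path part of a signal, and summing only the rays returned by `filter_rays(rays, required_object=some_id)` would give a per-object annotation, in the spirit of the decomposition the abstract describes.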

ShaRPy: Shape Reconstruction and Hand Pose Estimation from RGB-D with Uncertainty

Mar 17, 2023

Abstract: Despite their potential, markerless hand tracking technologies are not yet applied in practice to the diagnosis or monitoring of the activity in inflammatory musculoskeletal diseases. One reason is that the focus of most methods lies in the reconstruction of coarse, plausible poses for gesture recognition or AR/VR applications, whereas in the clinical context, accurate, interpretable, and reliable results are required. Therefore, we propose ShaRPy, the first RGB-D Shape Reconstruction and hand Pose tracking system, which provides uncertainty estimates of the computed pose to guide clinical decision-making. Our method requires only a lightweight setup with a single consumer-level RGB-D camera, yet it is able to distinguish similar poses with only small joint angle deviations. This is achieved by combining a data-driven dense correspondence predictor with traditional energy minimization, optimizing for both pose and hand shape parameters. We evaluate ShaRPy on a keypoint detection benchmark and show qualitative results on recordings of a patient.
