Stephen McLaughlin

Graph Attention-Driven Bayesian Deep Unrolling for Dual-Peak Single-Photon Lidar Imaging

Apr 03, 2025

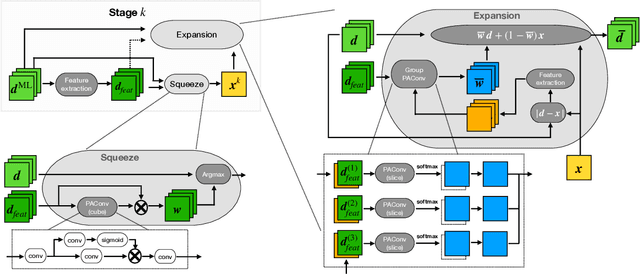

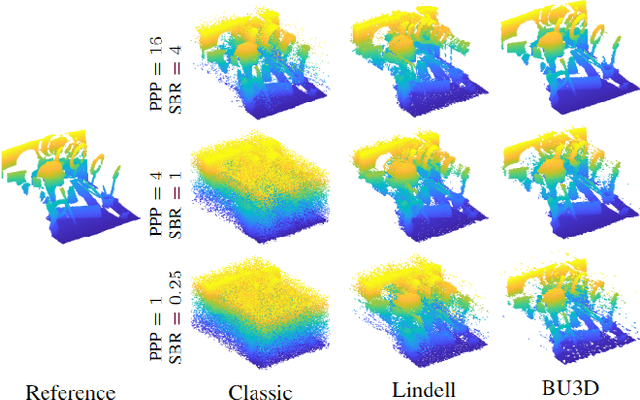

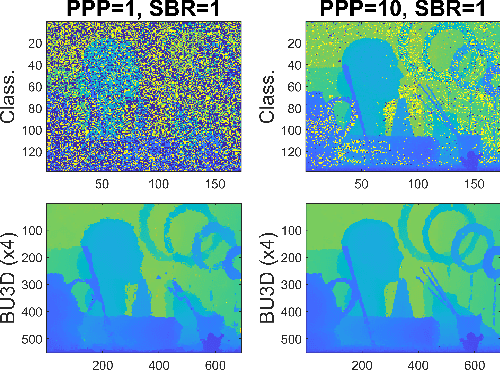

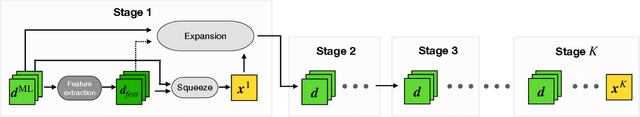

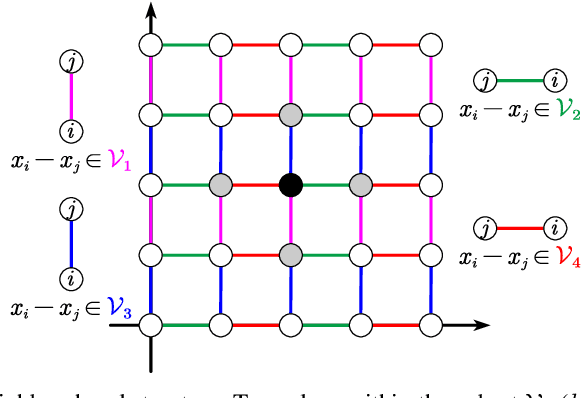

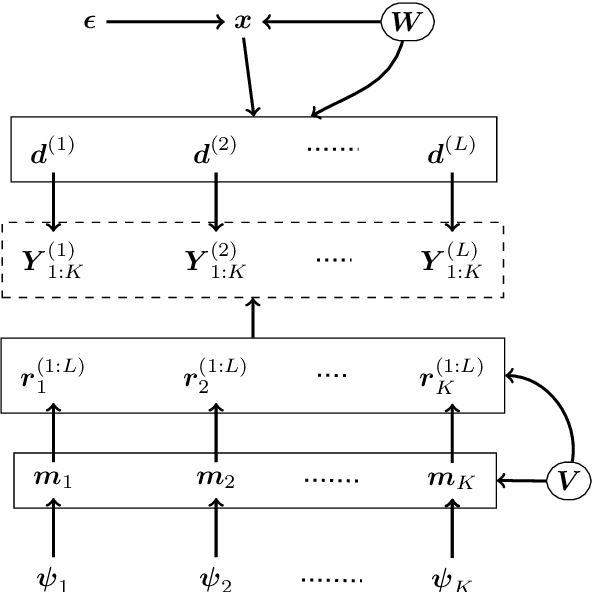

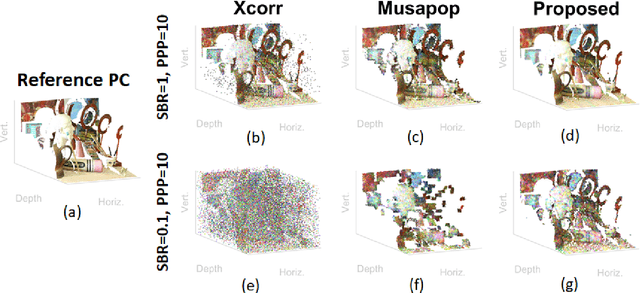

Abstract: Single-photon Lidar imaging offers a significant advantage in 3D imaging due to its high resolution and long-range capabilities; however, it is challenging to apply in noisy environments with multiple targets per pixel. To tackle these challenges, several methods have been proposed. Statistical methods offer interpretability of the inferred parameters, but they are often limited in their ability to handle complex scenes. Deep learning-based methods have shown superior performance in terms of accuracy and robustness, but they lack interpretability or are limited to a single peak per pixel. In this paper, we propose a deep unrolling algorithm for dual-peak single-photon Lidar imaging. We introduce a hierarchical Bayesian model for multiple targets and propose a neural network that unrolls the underlying statistical method. To support multiple targets, we adopt a dual depth map representation and exploit geometric deep learning to extract features from the point cloud. The proposed method combines the advantages of statistical and learning-based methods in terms of accuracy and uncertainty quantification. Experimental results on synthetic and real data demonstrate competitive performance compared to existing methods, while also providing uncertainty information.

Bayesian Based Unrolling for Reconstruction and Super-resolution of Single-Photon Lidar Systems

Jul 24, 2023

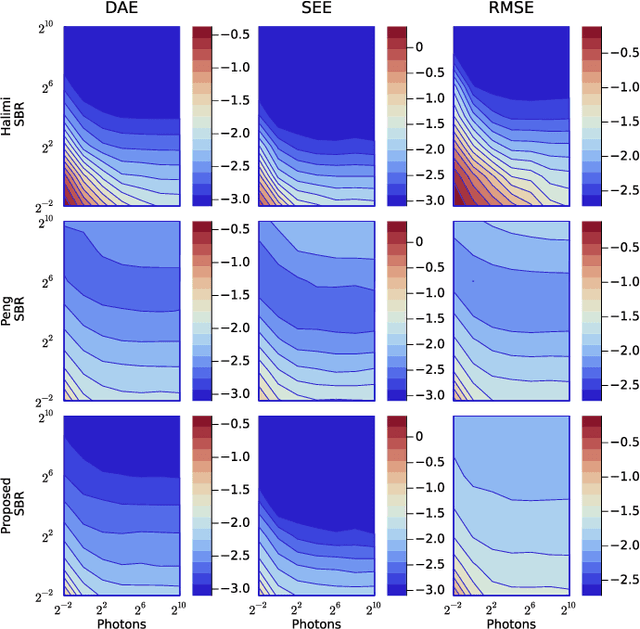

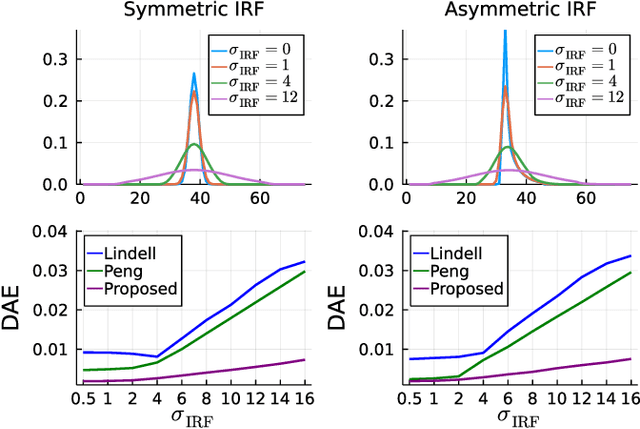

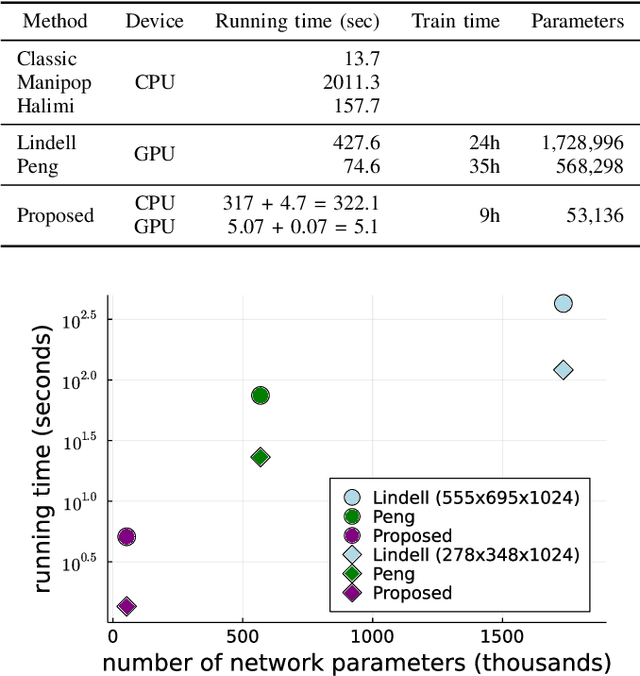

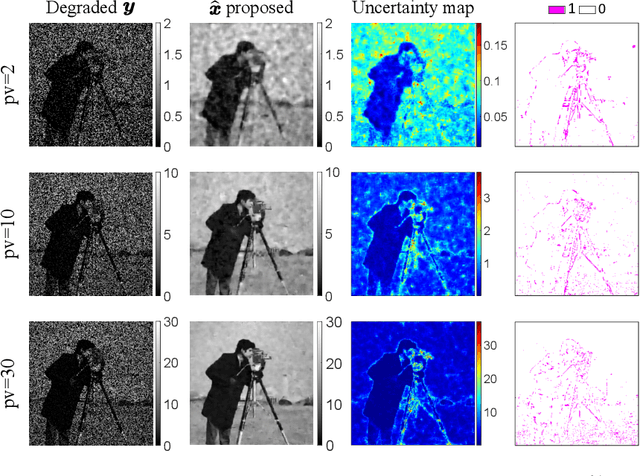

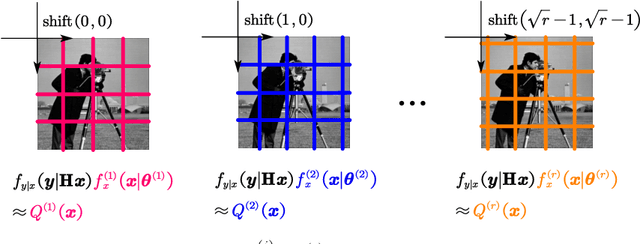

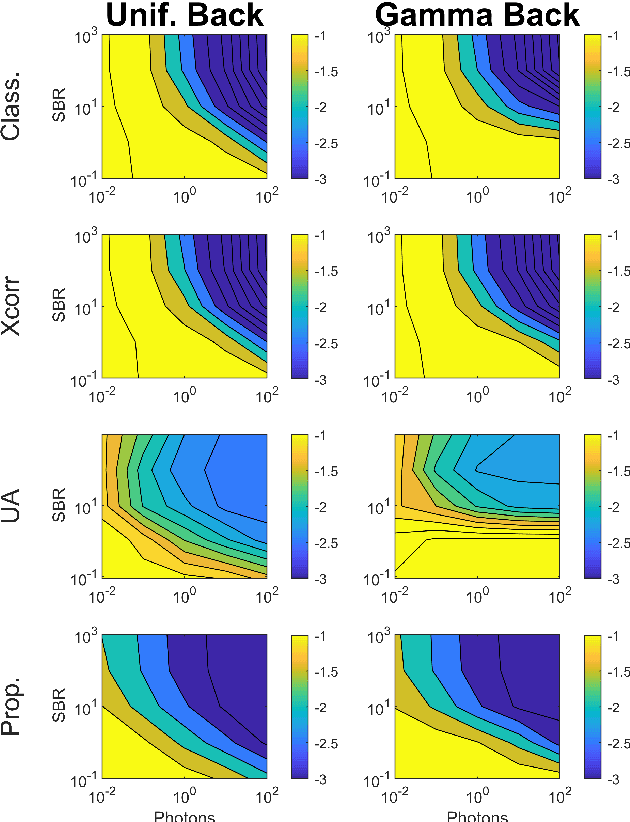

Abstract: Deploying 3D single-photon Lidar imaging in real-world applications faces several challenges due to imaging in high-noise environments and with sensors having limited resolution. This paper presents a deep learning algorithm based on unrolling a Bayesian model for the reconstruction and super-resolution of 3D single-photon Lidar. The resulting algorithm benefits from the advantages of both statistical and learning-based frameworks, providing best estimates with improved network interpretability. Compared to existing learning-based solutions, the proposed architecture requires a reduced number of trainable parameters, is more robust to noise and mismodelling of the system impulse response function, and provides richer information about the estimates, including uncertainty measures. Results on synthetic and real data are competitive in terms of inference quality and computational complexity when compared to state-of-the-art algorithms. This short paper is based on contributions published in [1] and [2].

Robust real-time imaging through flexible multimode fibers

Oct 25, 2022

Abstract: Conventional endoscopes comprise a bundle of optical fibers, with one fiber for each pixel in the image. In principle, this can be reduced to a single multimode optical fiber (MMF), the width of a human hair, with one fiber spatial mode per image pixel. However, images transmitted through an MMF emerge as unrecognisable speckle patterns due to dispersion and coupling between the spatial modes of the fiber. Furthermore, speckle patterns change as the fiber undergoes bending, making the use of MMFs in flexible imaging applications even more complicated. In this paper, we propose a real-time imaging system using flexible MMFs that is robust to bending. Our approach does not require access to, or a feedback signal from, the distal end of the fiber during imaging. We leverage a variational autoencoder (VAE) to reconstruct and classify images from the speckles and show that these images can still be recovered when the bend configuration of the fiber is changed to one that was not part of the training set. We use an MMF $300$ mm long with a $50$ $\mu$m core for imaging $10\times 10$ cm objects placed approximately $20$ cm from the fiber, and the system can deal with a change in fiber bend of $50^\circ$ and a range of movement of $8$ cm.

A Bayesian Based Deep Unrolling Algorithm for Single-Photon Lidar Systems

Jan 26, 2022

Abstract: Deploying 3D single-photon Lidar imaging in real-world applications faces multiple challenges, including imaging in high-noise environments. Several algorithms have been proposed to address these issues based on statistical or learning-based frameworks. Statistical methods provide rich information about the inferred parameters but are limited by the assumed model correlation structures, while deep learning methods show state-of-the-art performance but offer limited inference guarantees, preventing their extended use in critical applications. This paper unrolls a statistical Bayesian algorithm into a new deep learning architecture for robust image reconstruction from single-photon Lidar data, i.e., the algorithm's iterative steps are converted into neural network layers. The resulting algorithm benefits from the advantages of both statistical and learning-based frameworks, providing best estimates with improved network interpretability. Compared to existing learning-based solutions, the proposed architecture requires a reduced number of trainable parameters, is more robust to noise and mismodelling effects, and provides richer information about the estimates, including uncertainty measures. Results on synthetic and real data are competitive in terms of inference quality and computational complexity when compared to state-of-the-art algorithms.
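
The idea of converting iterative steps into layers can be illustrated with a toy sketch. This is not the paper's Bayesian algorithm: it assumes a simple 1D quadratic smoothing problem solved by gradient descent, and the names `layer`, `unrolled_network`, and the per-layer `(step, lam)` parameters are hypothetical. In a trained unrolled network, those per-layer parameters would be learned from data rather than fixed by hand.

```python
from typing import List, Sequence, Tuple


def smoothness_gradient(x: Sequence[float]) -> List[float]:
    """Gradient of 0.5 * sum((x[i+1] - x[i])**2) with respect to x."""
    g = [0.0] * len(x)
    for i in range(len(x) - 1):
        d = x[i + 1] - x[i]
        g[i] -= d
        g[i + 1] += d
    return g


def layer(x: Sequence[float], y: Sequence[float],
          step: float, lam: float) -> List[float]:
    """One 'layer' = one gradient step on
    0.5 * ||x - y||^2 + 0.5 * lam * sum((x[i+1] - x[i])**2)."""
    g = smoothness_gradient(x)
    return [xi - step * ((xi - yi) + lam * gi)
            for xi, yi, gi in zip(x, y, g)]


def unrolled_network(y: Sequence[float],
                     params: Sequence[Tuple[float, float]]) -> List[float]:
    """Stack a fixed number of layers, each with its own (step, lam).
    The number of layers replaces the iteration count of the solver."""
    x = list(y)
    for step, lam in params:
        x = layer(x, y, step, lam)
    return x


if __name__ == "__main__":
    noisy = [0, 1, 0, 1, 0, 1]
    # Hand-picked parameters (assumption); a real network would learn these.
    print(unrolled_network(noisy, [(0.2, 1.0)] * 5))
```

Because every layer mirrors one step of a known algorithm, each parameter keeps a clear meaning (a step size or a regularization weight), which is the interpretability argument made in the abstract.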

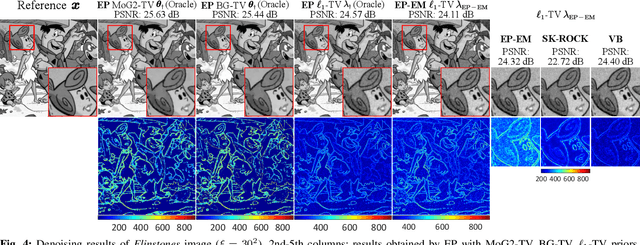

Fast Scalable Image Restoration using Total Variation Priors and Expectation Propagation

Oct 04, 2021

Abstract: This paper presents a scalable approximate Bayesian method for image restoration using total variation (TV) priors. In contrast to most optimization methods based on maximum a posteriori estimation, we use the expectation propagation (EP) framework to approximate minimum mean squared error (MMSE) estimators and marginal (pixel-wise) variances, without resorting to Monte Carlo sampling. For the classical anisotropic TV-based prior, we also propose an iterative scheme to automatically adjust the regularization parameter via expectation-maximization (EM). Using Gaussian approximating densities with diagonal covariance matrices, the resulting method allows highly parallelizable steps and can scale to large images for denoising, deconvolution and compressive sensing (CS) problems. The simulation results illustrate that such EP methods can provide posterior estimates on par with those obtained via sampling methods but at a fraction of the computational cost. Moreover, EP does not exhibit strong underestimation of posterior variances, in contrast to variational Bayes alternatives.
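
For readers unfamiliar with the anisotropic TV penalty mentioned above, the following minimal sketch computes it for a small image as the sum of absolute differences between horizontally and vertically adjacent pixels. It only evaluates the penalty; it does not implement the EP inference or the EM parameter update from the paper, and the function name `anisotropic_tv` is a hypothetical choice.

```python
from typing import Sequence


def anisotropic_tv(img: Sequence[Sequence[float]]) -> float:
    """Anisotropic total variation of a 2D image: the sum of absolute
    differences between each pixel and its lower and right neighbours."""
    rows, cols = len(img), len(img[0])
    total = 0.0
    for i in range(rows):
        for j in range(cols):
            if i + 1 < rows:  # vertical neighbour
                total += abs(img[i + 1][j] - img[i][j])
            if j + 1 < cols:  # horizontal neighbour
                total += abs(img[i][j + 1] - img[i][j])
    return total


if __name__ == "__main__":
    flat = [[3, 3], [3, 3]]   # constant image: no variation
    edge = [[0, 1], [0, 1]]   # one vertical edge crossing two rows
    print(anisotropic_tv(flat), anisotropic_tv(edge))
```

A TV prior assigns higher probability to images with a small value of this quantity, which favours piecewise-constant regions separated by sharp edges.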

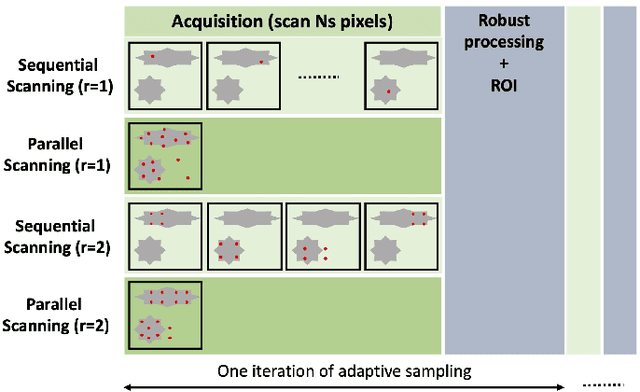

Fast Task-Based Adaptive Sampling for 3D Single-Photon Multispectral Lidar Data

Sep 03, 2021

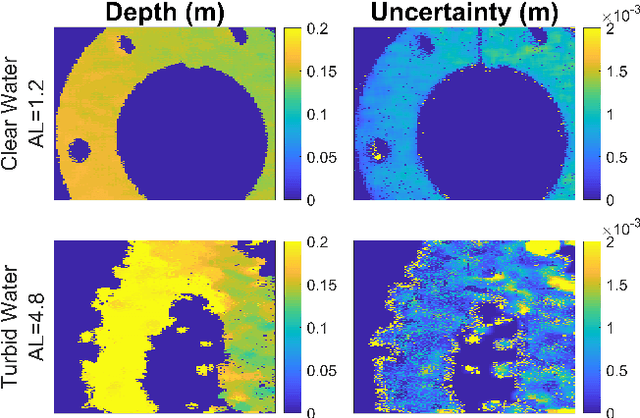

Abstract: 3D single-photon LiDAR imaging plays an important role in numerous applications. However, long acquisition times and significant data volumes present a challenge to LiDAR imaging. This paper proposes a task-optimized adaptive sampling framework that enables fast acquisition and processing of high-dimensional single-photon LiDAR data. Given a task of interest, the iterative sampling strategy targets the most informative regions of a scene, which are defined as those minimizing parameter uncertainties. The task is performed by considering a Bayesian model that is carefully built to allow fast per-pixel computations while delivering parameter estimates with quantified uncertainties. The framework is demonstrated on multispectral 3D single-photon LiDAR imaging when considering object classification and/or target detection as tasks. It is also analysed for both sequential and parallel scanning modes for different detector array sizes. Results on simulated and real data show the benefit of the proposed optimized sampling strategy when compared to fixed sampling strategies.

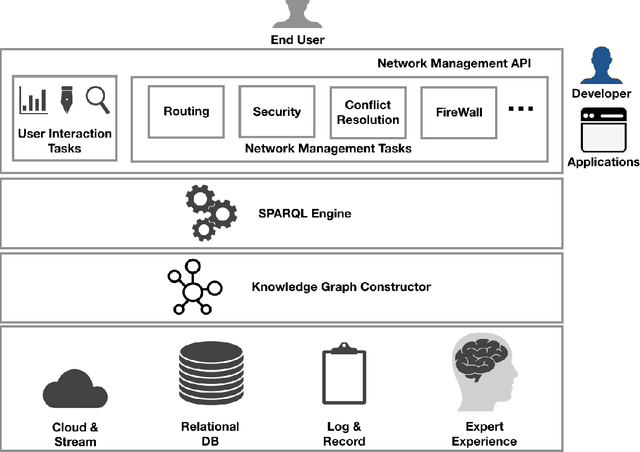

SeaNet -- Towards A Knowledge Graph Based Autonomic Management of Software Defined Networks

Jul 05, 2021

Abstract: Automatic network management driven by artificial intelligence technologies has been widely discussed for decades. However, existing work mainly focuses on theoretical proposals and architecture designs; practical implementations on real-life networks are yet to appear. This paper presents our effort toward implementing a knowledge graph-driven approach to autonomic network management in software defined networks (SDNs), termed SeaNet. Driven by the ToCo ontology, SeaNet is reprogrammed based on Mininet (an SDN emulator). It consists of three core components: a knowledge graph generator, a SPARQL engine, and a network management API. The knowledge graph generator represents the knowledge in telecommunication network management tasks as a formal, ontology-driven model. Expert experience and network management rules can be formalized in the knowledge graph and automatically inferred by the SPARQL engine. The network management API encapsulates technology-specific details and exposes technology-independent interfaces to users. Experiments are carried out to evaluate the proposed work by comparing it with Ryu, a commercial SDN controller implemented in the same language, Python. The evaluation results show that SeaNet is considerably faster than Ryu in most circumstances and that the SeaNet code is significantly more compact. Benefiting from RDF reasoning, SeaNet achieves O(1) time complexity across different scales of the knowledge graph, while a traditional database achieves O(n log n) at best. With the developed network management API, SeaNet enables researchers to develop semantic-intelligent applications on their own SDNs.

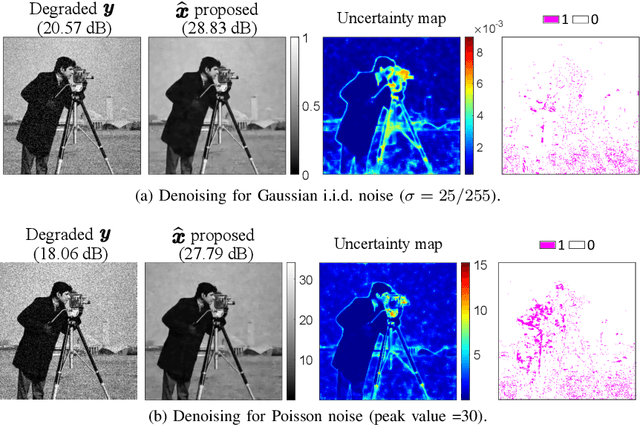

Patch-Based Image Restoration using Expectation Propagation

Jun 18, 2021

Abstract: This paper presents a new Expectation Propagation (EP) framework for image restoration using patch-based prior distributions. While Monte Carlo techniques are classically used to sample from intractable posterior distributions, they can suffer from scalability issues in high-dimensional inference problems such as image restoration. To address this issue, EP is used here to approximate the posterior distributions using products of multivariate Gaussian densities. Moreover, imposing structural constraints on the covariance matrices of these densities allows for greater scalability and distributed computation. While the method is naturally suited to handle additive Gaussian observation noise, it can also be extended to non-Gaussian noise. Experiments conducted for denoising, inpainting and deconvolution problems with Gaussian and Poisson noise illustrate the potential benefits of such a flexible approximate Bayesian method for uncertainty quantification in imaging problems, at a reduced computational cost compared to sampling techniques.

Robust and Guided Bayesian Reconstruction of Single-Photon 3D Lidar Data: Application to Multispectral and Underwater Imaging

Mar 18, 2021

Abstract: 3D Lidar imaging can be a challenging modality when using multiple wavelengths, or when imaging in high-noise environments (e.g., imaging through obscurants). This paper presents a hierarchical Bayesian algorithm for the robust reconstruction of multispectral single-photon Lidar data in such environments. The algorithm exploits multi-scale information to provide robust depth and reflectivity estimates together with their uncertainties to help with decision making. The proposed weight-based strategy allows the use of available guide information that can be obtained by using state-of-the-art learning-based algorithms. The proposed Bayesian model and its estimation algorithm are validated on both synthetic and real images, showing competitive results regarding the quality of the inferences and the computational complexity when compared to state-of-the-art algorithms.

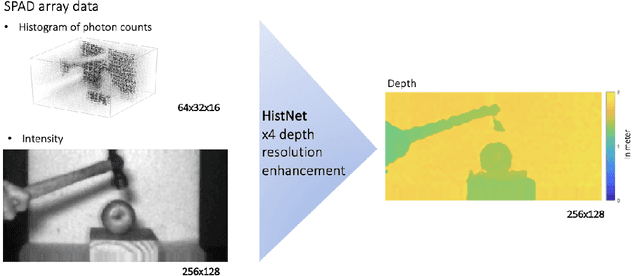

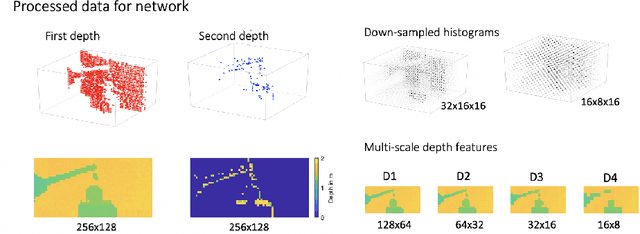

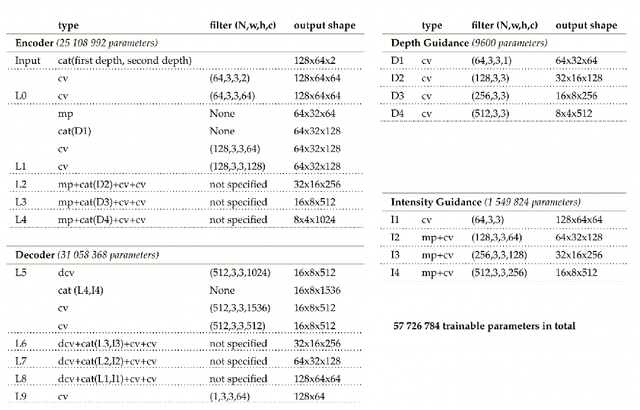

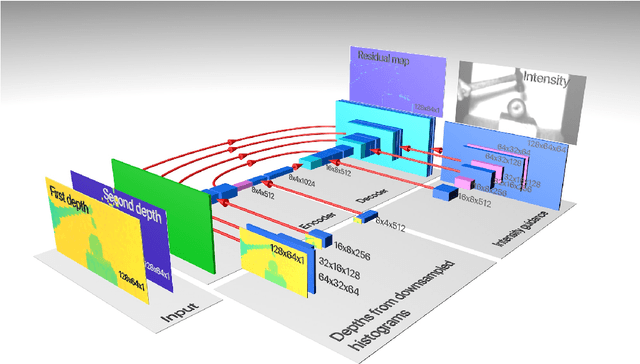

Robust super-resolution depth imaging via a multi-feature fusion deep network

Nov 20, 2020

Abstract: Three-dimensional imaging plays an important role in imaging applications where it is necessary to record depth. The number of applications that use depth imaging is increasing rapidly, and examples include self-driving autonomous vehicles and auto-focus assist on smartphone cameras. Light detection and ranging (LIDAR) via single-photon sensitive detector (SPAD) arrays is an emerging technology that enables the acquisition of depth images at high frame rates. However, the spatial resolution of this technology is typically low in comparison to the intensity images recorded by conventional cameras. To increase the native resolution of depth images from a SPAD camera, we develop a deep network built specifically to take advantage of the multiple features that can be extracted from a camera's histogram data. The network is designed for a SPAD camera operating in a dual mode such that it captures alternate low-resolution depth and high-resolution intensity images at high frame rates; thus, the system does not require any additional sensor to provide intensity images. The network then uses the intensity images and multiple features extracted from downsampled histograms to guide the upsampling of the depth. Our network provides significant image resolution enhancement and image denoising across a wide range of signal-to-noise ratios and photon levels. We apply the network to a range of 3D data, demonstrating denoising and a four-fold resolution enhancement of depth.
