Shuan Chen

Synthesizable by Design: A Retrosynthesis-Guided Framework for Molecular Analog Generation

Jul 03, 2025Abstract:The disconnect between AI-generated molecules with desirable properties and their synthetic feasibility remains a critical bottleneck in computational drug and material discovery. While generative AI has accelerated the proposal of candidate molecules, many of these structures prove challenging or impossible to synthesize using established chemical reactions. Here, we introduce SynTwins, a novel retrosynthesis-guided molecular analog design framework that designs synthetically accessible molecular analogs by emulating expert chemist strategies through a three-step process: retrosynthesis, similar building block searching, and virtual synthesis. In comparative evaluations, SynTwins demonstrates superior performance in generating synthetically accessible analogs compared to state-of-the-art machine learning models while maintaining high structural similarity to original target molecules. Furthermore, when integrated with existing molecule optimization frameworks, our hybrid approach produces synthetically feasible molecules with property profiles comparable to unconstrained molecule generators, yet its synthesizability ensured. Our comprehensive benchmarking across diverse molecular datasets demonstrates that SynTwins effectively bridges the gap between computational design and experimental synthesis, providing a practical solution for accelerating the discovery of synthesizable molecules with desired properties for a wide range of applications.

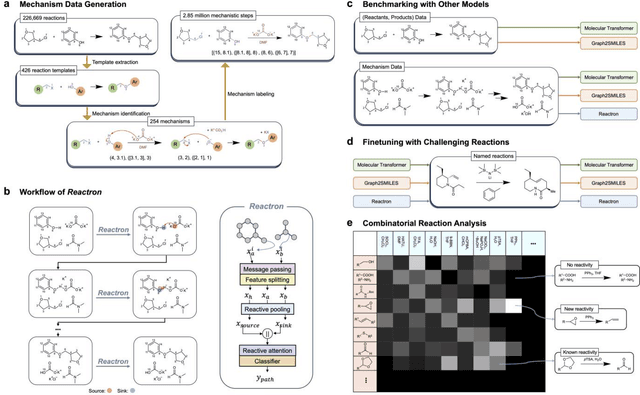

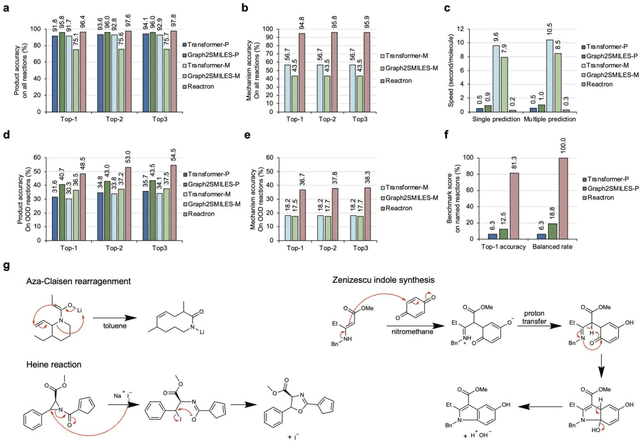

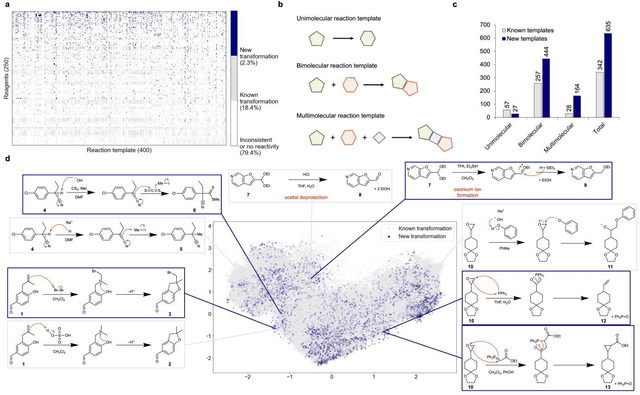

Predicting Chemical Reaction Outcomes Based on Electron Movements Using Machine Learning

Mar 13, 2025

Abstract:Accurately predicting chemical reaction outcomes and potential byproducts is a fundamental task of modern chemistry, enabling the efficient design of synthetic pathways and driving progress in chemical science. Reaction mechanism, which tracks electron movements during chemical reactions, is critical for understanding reaction kinetics and identifying unexpected products. Here, we present Reactron, the first electron-based machine learning model for general reaction prediction. Reactron integrates electron movement into its predictions, generating detailed arrow-pushing diagrams that elucidate each mechanistic step leading to product formation. We demonstrate the high predictive performance of Reactron over existing product-only models by a large-scale reaction outcome prediction benchmark, and the adaptability of the model to learn new reactivity upon providing a few examples. Furthermore, it explores combinatorial reaction spaces, uncovering novel reactivities beyond its training data. With robust performance in both in- and out-of-distribution predictions, Reactron embodies human-like reasoning in chemistry and opens new frontiers in reaction discovery and synthesis design.

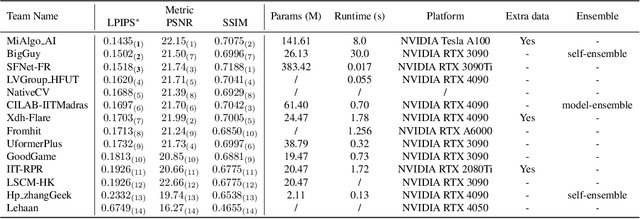

MIPI 2024 Challenge on Nighttime Flare Removal: Methods and Results

Apr 30, 2024

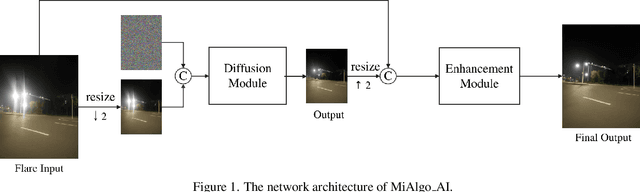

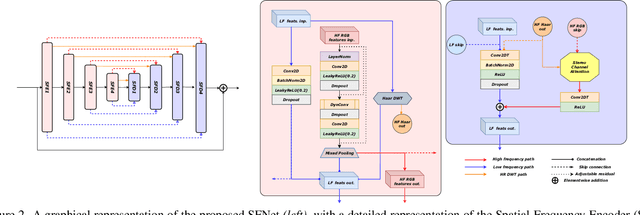

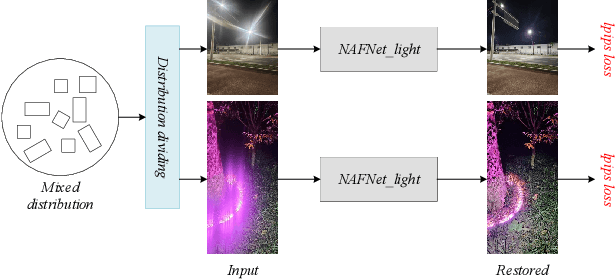

Abstract:The increasing demand for computational photography and imaging on mobile platforms has led to the widespread development and integration of advanced image sensors with novel algorithms in camera systems. However, the scarcity of high-quality data for research and the rare opportunity for in-depth exchange of views from industry and academia constrain the development of mobile intelligent photography and imaging (MIPI). Building on the achievements of the previous MIPI Workshops held at ECCV 2022 and CVPR 2023, we introduce our third MIPI challenge including three tracks focusing on novel image sensors and imaging algorithms. In this paper, we summarize and review the Nighttime Flare Removal track on MIPI 2024. In total, 170 participants were successfully registered, and 14 teams submitted results in the final testing phase. The developed solutions in this challenge achieved state-of-the-art performance on Nighttime Flare Removal. More details of this challenge and the link to the dataset can be found at https://mipi-challenge.org/MIPI2024/.

Assessing the Extrapolation Capability of Template-Free Retrosynthesis Models

Feb 29, 2024

Abstract:Despite the acknowledged capability of template-free models in exploring unseen reaction spaces compared to template-based models for retrosynthesis prediction, their ability to venture beyond established boundaries remains relatively uncharted. In this study, we empirically assess the extrapolation capability of state-of-the-art template-free models by meticulously assembling an extensive set of out-of-distribution (OOD) reactions. Our findings demonstrate that while template-free models exhibit potential in predicting precursors with novel synthesis rules, their top-10 exact-match accuracy in OOD reactions is strikingly modest (< 1%). Furthermore, despite the capability of generating novel reactions, our investigation highlights a recurring issue where more than half of the novel reactions predicted by template-free models are chemically implausible. Consequently, we advocate for the future development of template-free models that integrate considerations of chemical feasibility when navigating unexplored regions of reaction space.

Explaining How Deep Neural Networks Forget by Deep Visualization

May 03, 2020

Abstract:Explaining the behaviors of deep neural networks, usually considered as black boxes, is critical especially when they are now being adopted over diverse aspects of human life. Taking the advantages of interpretable machine learning (interpretable ML), this paper proposes a novel tool called Catastrophic Forgetting Dissector (or CFD) to explain catastrophic forgetting in continual learning settings. We also introduce a new method called Critical Freezing based on the observations of our tool. Experiments on ResNet articulate how catastrophic forgetting happens, particularly showing which components of this famous network are forgetting. Our new continual learning algorithm defeats various recent techniques by a significant margin, proving the capability of the investigation. Critical freezing not only attacks catastrophic forgetting but also exposes explainability.

Dissecting Catastrophic Forgetting in Continual Learning by Deep Visualization

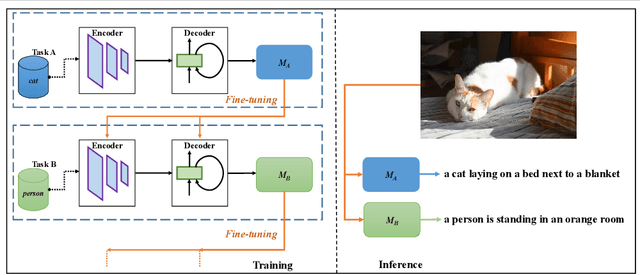

Jan 07, 2020

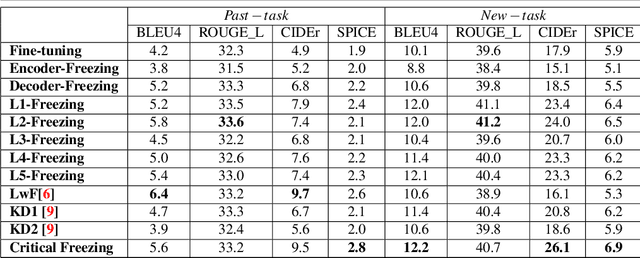

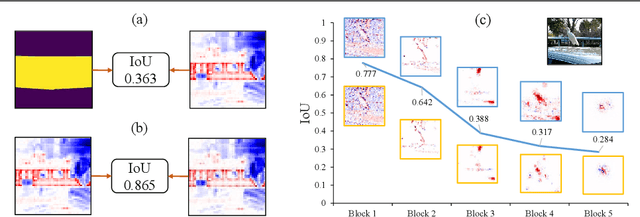

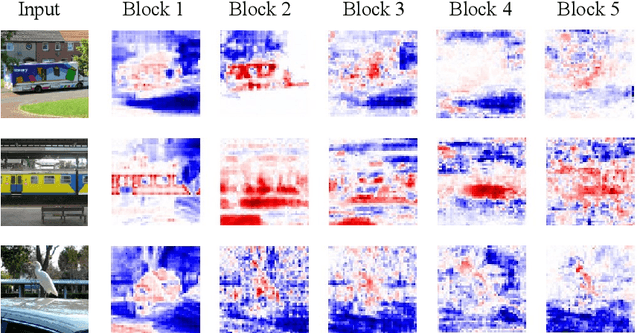

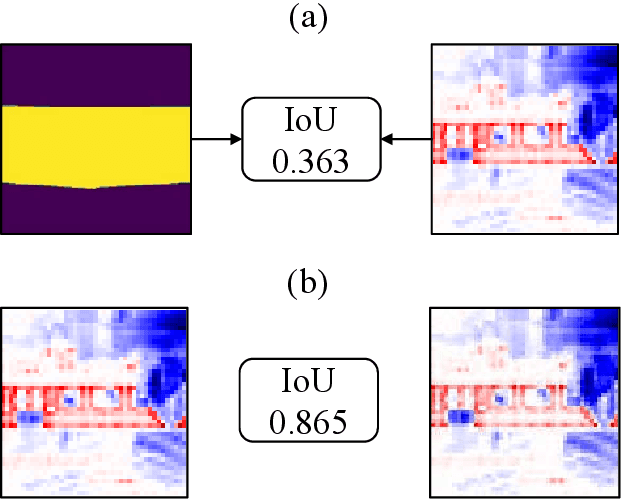

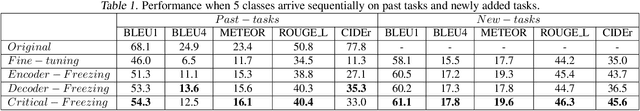

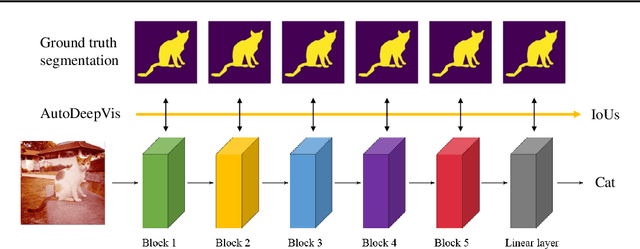

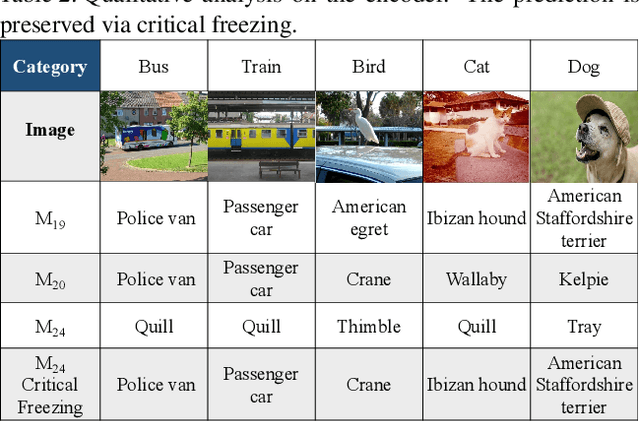

Abstract:Interpreting the behaviors of Deep Neural Networks (usually considered as a black box) is critical especially when they are now being widely adopted over diverse aspects of human life. Taking the advancements from Explainable Artificial Intelligent, this paper proposes a novel technique called Auto DeepVis to dissect catastrophic forgetting in continual learning. A new method to deal with catastrophic forgetting named critical freezing is also introduced upon investigating the dilemma by Auto DeepVis. Experiments on a captioning model meticulously present how catastrophic forgetting happens, particularly showing which components are forgetting or changing. The effectiveness of our technique is then assessed; and more precisely, critical freezing claims the best performance on both previous and coming tasks over baselines, proving the capability of the investigation. Our techniques could not only be supplementary to existing solutions for completely eradicating catastrophic forgetting for life-long learning but also explainable.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge