Shruthi Chari

MetaExplainer: A Framework to Generate Multi-Type User-Centered Explanations for AI Systems

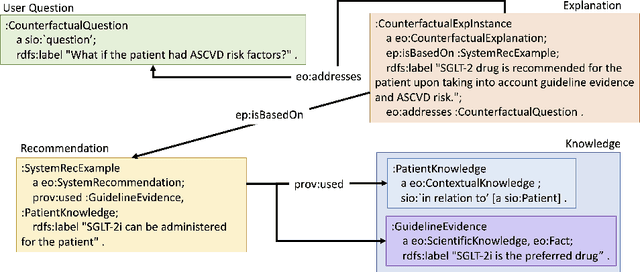

Aug 01, 2025

Abstract:Explanations are crucial for building trustworthy AI systems, but a gap often exists between the explanations provided by models and those needed by users. To address this gap, we introduce MetaExplainer, a neuro-symbolic framework designed to generate user-centered explanations. Our approach employs a three-stage process: first, we decompose user questions into machine-readable formats using state-of-the-art large language models (LLMs); second, we delegate the task of generating system recommendations to model explainer methods; and finally, we synthesize natural language explanations that summarize the explainer outputs. Throughout this process, we utilize an Explanation Ontology to guide the language models and explainer methods. By leveraging LLMs and a structured approach to explanation generation, MetaExplainer aims to enhance the interpretability and trustworthiness of AI systems across various applications, providing users with tailored, question-driven explanations that better meet their needs. Comprehensive evaluations of MetaExplainer demonstrate a step towards evaluating and utilizing current state-of-the-art explanation frameworks. Our results show high performance across all stages, with a 59.06% F1-score in question reframing, 70% faithfulness in model explanations, and 67% context-utilization in natural language synthesis. User studies corroborate these findings, highlighting the creativity and comprehensiveness of generated explanations. Tested on the Diabetes (PIMA Indian) tabular dataset, MetaExplainer supports diverse explanation types, including Contrastive, Counterfactual, Rationale, Case-Based, and Data explanations. The framework's versatility and traceability from using ontology to guide LLMs suggest broad applicability beyond the tested scenarios, positioning MetaExplainer as a promising tool for enhancing AI explainability across various domains.

An Ontology-Enabled Approach For User-Centered and Knowledge-Enabled Explanations of AI Systems

Oct 23, 2024

Abstract:Explainable Artificial Intelligence (AI) focuses on helping humans understand the working of AI systems or their decisions and has been a cornerstone of AI for decades. Recent research in explainability has focused on explaining the workings of AI models or model explainability. There have also been several position statements and review papers detailing the needs of end-users for user-centered explainability but fewer implementations. Hence, this thesis seeks to bridge some gaps between model and user-centered explainability. We create an explanation ontology (EO) to represent literature-derived explanation types via their supporting components. We implement a knowledge-augmented question-answering (QA) pipeline to support contextual explanations in a clinical setting. Finally, we are implementing a system to combine explanations from different AI methods and data modalities. Within the EO, we can represent fifteen different explanation types, and we have tested these representations in six exemplar use cases. We find that knowledge augmentations improve the performance of base large language models in the contextualized QA, and the performance is variable across disease groups. In the same setting, clinicians also indicated that they prefer to see actionability as one of the main foci in explanations. In our explanations combination method, we plan to use similarity metrics to determine the similarity of explanations in a chronic disease detection setting. Overall, through this thesis, we design methods that can support knowledge-enabled explanations across different use cases, accounting for the methods in today's AI era that can generate the supporting components of these explanations and domain knowledge sources that can enhance them.

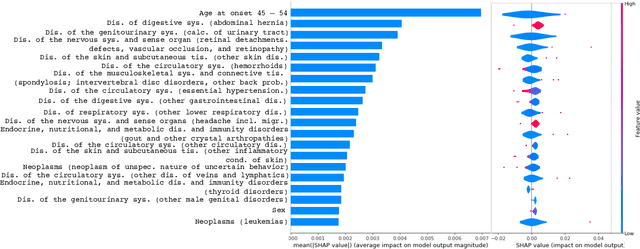

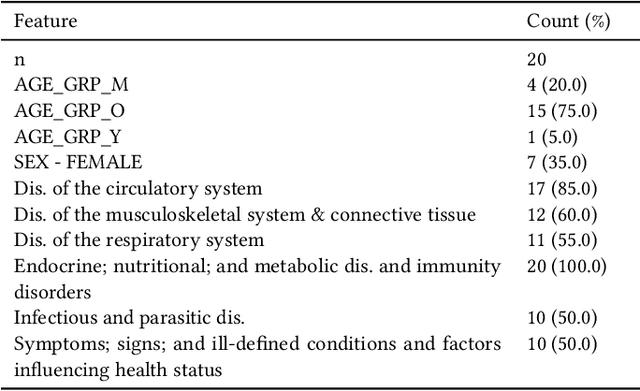

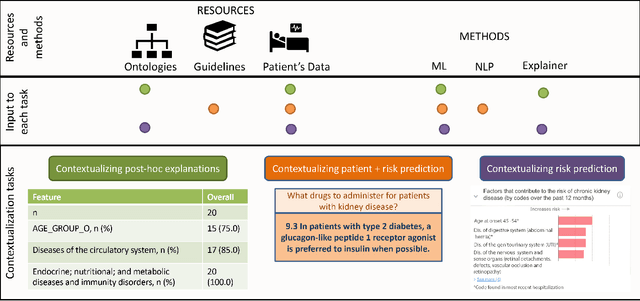

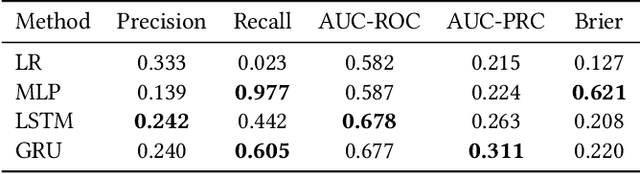

Informing clinical assessment by contextualizing post-hoc explanations of risk prediction models in type-2 diabetes

Feb 11, 2023

Abstract:Medical experts may use Artificial Intelligence (AI) systems with greater trust if these are supported by contextual explanations that let the practitioner connect system inferences to their context of use. However, their importance in improving model usage and understanding has not been extensively studied. Hence, we consider a comorbidity risk prediction scenario and focus on contexts regarding the patients' clinical state, AI predictions about their risk of complications, and algorithmic explanations supporting the predictions. We explore how relevant information for such dimensions can be extracted from medical guidelines to answer typical questions from clinical practitioners. We identify this as a question answering (QA) task and employ several state-of-the-art LLMs to present contexts around risk prediction model inferences and evaluate their acceptability. Finally, we study the benefits of contextual explanations by building an end-to-end AI pipeline, including data cohorting, AI risk modeling, and post-hoc model explanations, and by prototyping a visual dashboard to present the combined insights from different context dimensions and data sources, while predicting and identifying the drivers of risk of Chronic Kidney Disease - a common type-2 diabetes comorbidity. All of these steps were performed in engagement with medical experts, including a final evaluation of the dashboard results by an expert medical panel. We show that LLMs, in particular BERT and SciBERT, can be readily deployed to extract some relevant explanations to support clinical usage. To understand the value-add of the contextual explanations, the expert panel evaluated these regarding actionable insights in the relevant clinical setting. Overall, our paper is one of the first end-to-end analyses identifying the feasibility and benefits of contextual explanations in a real-world clinical use case.

Leveraging Clinical Context for User-Centered Explainability: A Diabetes Use Case

Jul 15, 2021

Abstract:Academic advances of AI models in high-precision domains, like healthcare, need to be made explainable in order to enhance real-world adoption. Our past studies and ongoing interactions indicate that medical experts can use AI systems with greater trust if there are ways to connect the model inferences about patients to explanations that are tied back to the context of use. Specifically, risk prediction is a complex problem of diagnostic and interventional importance to clinicians wherein they consult different sources to make decisions. To enable the adoption of the ever-improving AI risk prediction models in practice, we have begun to explore techniques to contextualize such models along three dimensions of interest: the patients' clinical state, AI predictions about their risk of complications, and algorithmic explanations supporting the predictions. We validate the importance of these dimensions by implementing a proof-of-concept (POC) in a type-2 diabetes (T2DM) use case where we assess the risk of chronic kidney disease (CKD) - a common T2DM comorbidity. Within the POC, we include risk prediction models for CKD, post-hoc explainers of the predictions, and other natural-language modules which operationalize domain knowledge and clinical practice guidelines (CPGs) to provide context. With primary care physicians (PCP) as our end-users, we present our initial results and clinician feedback in this paper. Our POC approach covers multiple knowledge sources and clinical scenarios, blends knowledge to explain data and predictions to PCPs, and received an enthusiastic response from our medical expert.

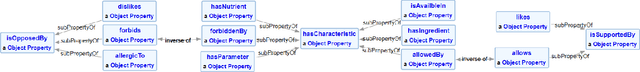

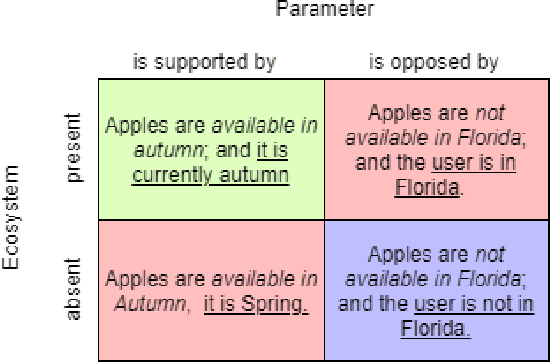

Semantic Modeling for Food Recommendation Explanations

May 04, 2021

Abstract:With the increased use of AI methods to provide recommendations in the health space, specifically for dietary recommendations, there is also an increased need for explainability of those recommendations. Such explanations would benefit users of recommendation systems by empowering them with justifications for following the system's suggestions. We present the Food Explanation Ontology (FEO) that provides a formalism for modeling explanations to users for food-related recommendations. FEO models food recommendations, using concepts from the explanation domain to create responses to user questions about food recommendations they receive from AI systems such as personalized knowledge base question answering systems. FEO uses a modular, extensible structure that lends itself to a variety of explanations while still preserving important semantic details to accurately represent explanations of food recommendations. In order to evaluate this system, we used a set of competency questions derived from explanation types present in literature that are relevant to food recommendations. Our motivation with the use of FEO is to empower users to make decisions about their health, fully equipped with an understanding of the AI recommender systems as they relate to user questions, by providing reasoning behind their recommendations in the form of explanations.

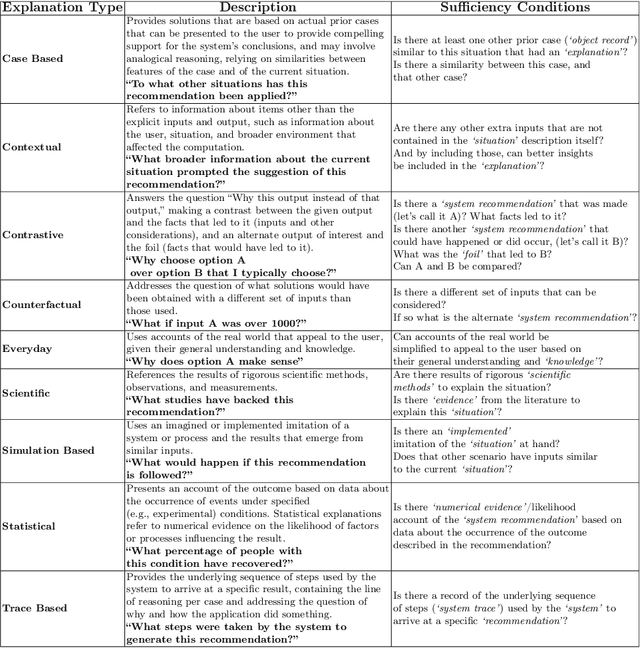

Explanation Ontology: A Model of Explanations for User-Centered AI

Oct 04, 2020

Abstract:Explainability has been a goal for Artificial Intelligence (AI) systems since their conception, with the need for explainability growing as more complex AI models are increasingly used in critical, high-stakes settings such as healthcare. Explanations have often been added to an AI system in a non-principled, post-hoc manner. With greater adoption of these systems and emphasis on user-centric explainability, there is a need for a structured representation that treats explainability as a primary consideration, mapping end user needs to specific explanation types and the system's AI capabilities. We design an explanation ontology to model both the role of explanations, accounting for the system and user attributes in the process, and the range of different literature-derived explanation types. We indicate how the ontology can support user requirements for explanations in the domain of healthcare. We evaluate our ontology with a set of competency questions geared towards a system designer who might use our ontology to decide which explanation types to include, given a combination of users' needs and a system's capabilities, both in system design settings and in real-time operations. Through the use of this ontology, system designers will be able to make informed choices on which explanations AI systems can and should provide.

* 16 pages (but 1 reference over on arxiv), 5 tables, 3 code listings, 1 figure

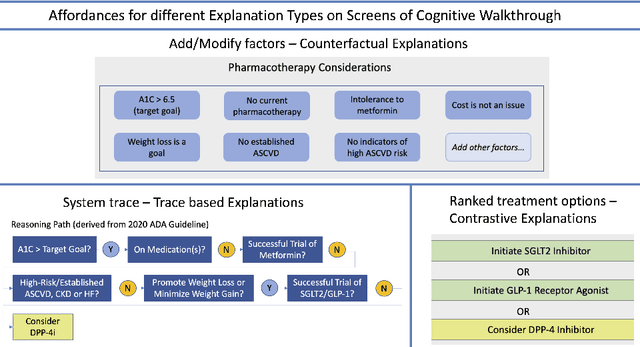

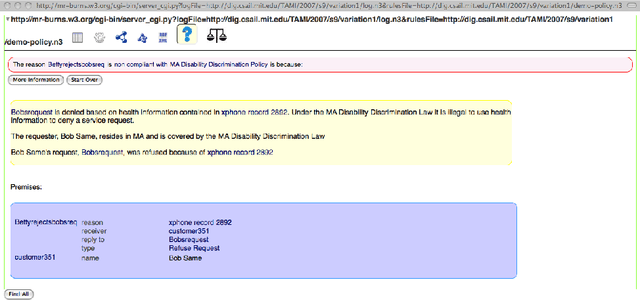

Explanation Ontology in Action: A Clinical Use-Case

Oct 04, 2020

Abstract:We addressed the problem of a lack of semantic representation for user-centric explanations and different explanation types in our Explanation Ontology (https://purl.org/heals/eo). Such a representation is increasingly necessary as explainability has become an important problem in Artificial Intelligence with the emergence of complex methods and an uptake in high-precision and user-facing settings. In this submission, we provide step-by-step guidance for system designers to utilize our ontology, introduced in our resource track paper, to plan and model for explanations during the design of their Artificial Intelligence systems. We also provide a detailed example with our utilization of this guidance in a clinical setting.

* 5 pages, 2 figures, 1 protocol

Directions for Explainable Knowledge-Enabled Systems

Mar 17, 2020

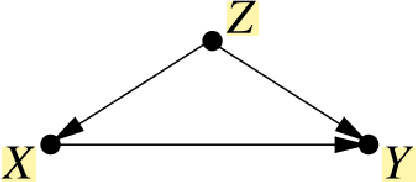

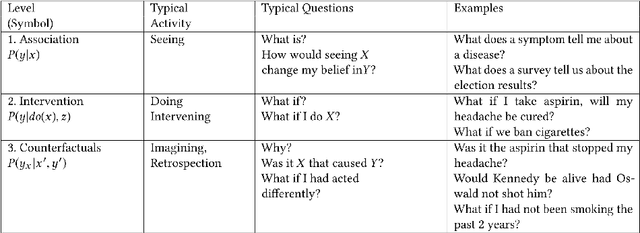

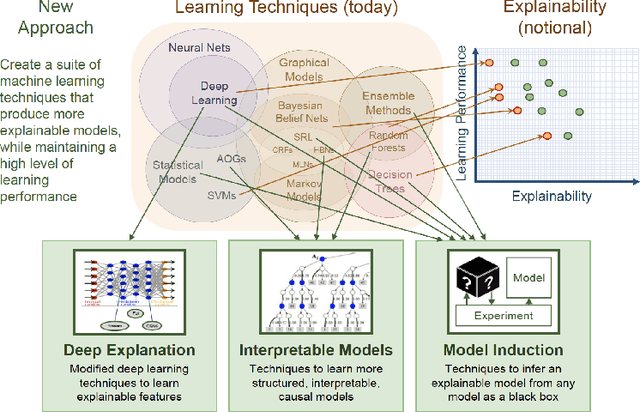

Abstract:Interest in the field of Explainable Artificial Intelligence has been growing for decades and has accelerated recently. As Artificial Intelligence models have become more complex, and often more opaque, with the incorporation of complex machine learning techniques, explainability has become more critical. Recently, researchers have been investigating and tackling explainability with a user-centric focus, looking for explanations to consider trustworthiness, comprehensibility, explicit provenance, and context-awareness. In this chapter, we leverage our survey of explanation literature in Artificial Intelligence and closely related fields and use these past efforts to generate a set of explanation types that we feel reflect the expanded needs of explanation for today's artificial intelligence applications. We define each type and provide an example question that would motivate the need for this style of explanation. We believe this set of explanation types will help future system designers in their generation and prioritization of requirements and further help generate explanations that are better aligned to users' and situational needs.

Foundations of Explainable Knowledge-Enabled Systems

Mar 17, 2020

Abstract:Explainability has been an important goal since the early days of Artificial Intelligence. Several approaches for producing explanations have been developed. However, many of these approaches were tightly coupled with the capabilities of the artificial intelligence systems at the time. With the proliferation of AI-enabled systems in sometimes critical settings, there is a need for them to be explainable to end-users and decision-makers. We present a historical overview of explainable artificial intelligence systems, with a focus on knowledge-enabled systems, spanning the expert systems, cognitive assistants, semantic applications, and machine learning domains. Additionally, borrowing from the strengths of past approaches and identifying gaps needed to make explanations user- and context-focused, we propose new definitions for explanations and explainable knowledge-enabled systems.

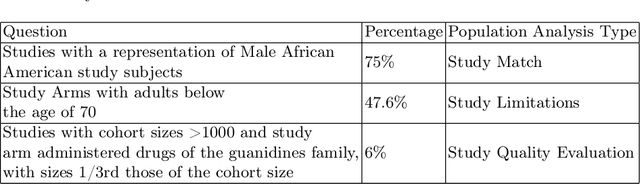

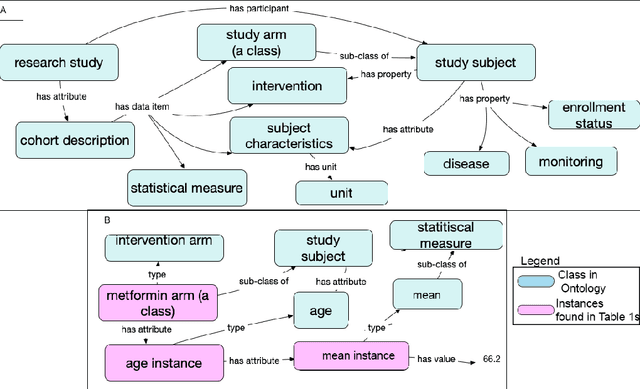

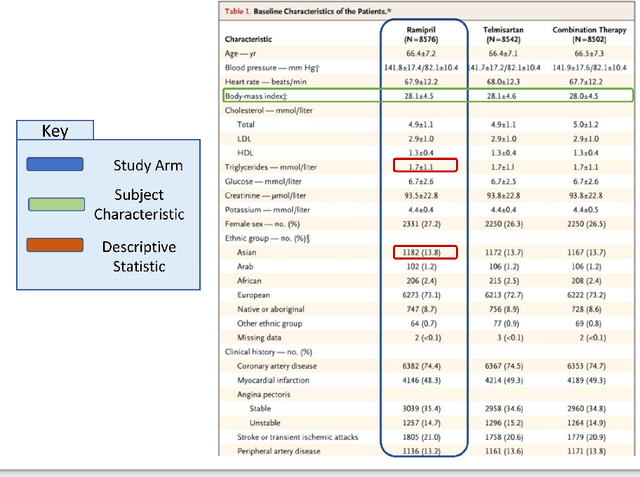

Making Study Populations Visible through Knowledge Graphs

Jul 09, 2019

Abstract:Treatment recommendations within Clinical Practice Guidelines (CPGs) are largely based on findings from clinical trials and case studies, referred to here as research studies, that are often based on highly selective clinical populations, referred to here as study cohorts. When medical practitioners apply CPG recommendations, they need to understand how well their patient population matches the characteristics of those in the study cohort, and thus are confronted with the challenges of locating the study cohort information and making an analytic comparison. To address these challenges, we develop an ontology-enabled prototype system, which exposes the population descriptions in research studies in a declarative manner, with the ultimate goal of allowing medical practitioners to better understand the applicability and generalizability of treatment recommendations. We build a Study Cohort Ontology (SCO) to encode the vocabulary of study population descriptions, which are often reported in the first table of the published work and are therefore commonly referred to as Table 1. We leverage the well-used Semanticscience Integrated Ontology (SIO) for defining property associations between classes. Further, we model the key components of Table 1s, i.e., collections of study subjects, subject characteristics, and statistical measures in RDF knowledge graphs. We design scenarios for medical practitioners to perform population analysis, and generate cohort similarity visualizations to determine the applicability of a study population to the clinical population of interest. Our semantic approach to making study populations visible, by standardized representations of Table 1s, allows users to quickly derive clinically relevant inferences about study populations.
