Shaoming Song

MAP: Model Aggregation and Personalization in Federated Learning with Incomplete Classes

Apr 14, 2024

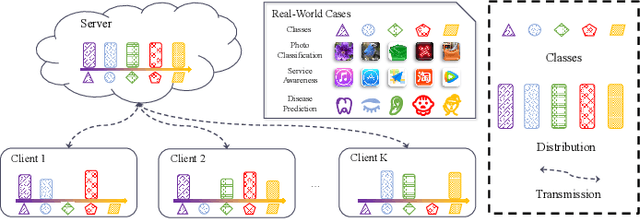

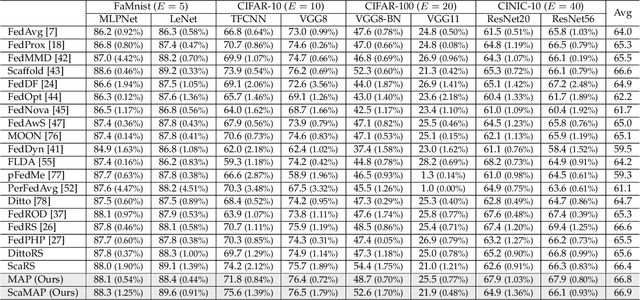

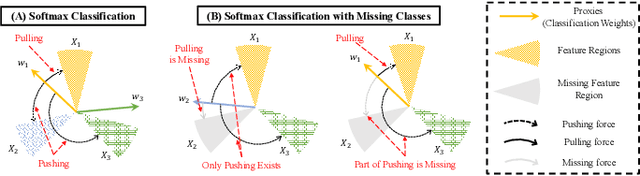

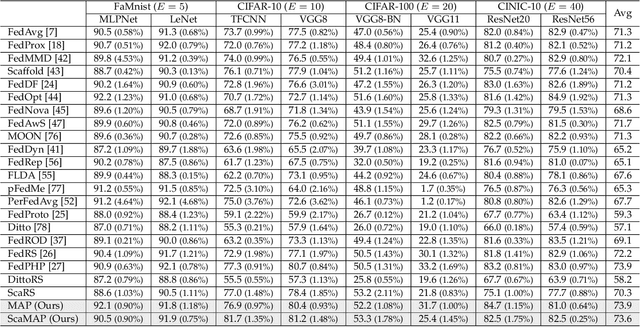

Abstract: In some real-world applications, data samples are distributed across local devices, and federated learning (FL) techniques have been proposed to coordinate decentralized clients without directly sharing users' private data. FL commonly follows the parameter server architecture and involves multiple personalization and aggregation procedures. The natural data heterogeneity across clients, i.e., non-I.I.D. data, challenges both the aggregation and personalization goals in FL. In this paper, we focus on a special kind of non-I.I.D. scene where clients own incomplete classes, i.e., each client can access only a subset of the whole class set. The server aims to aggregate a complete classification model that generalizes to all classes, while the clients are inclined to improve their performance on distinguishing the classes they observe. For better model aggregation, we point out that the standard softmax encounters several problems caused by missing classes and propose "restricted softmax" as an alternative. For better model personalization, we point out that the hard-won personalized models are not well exploited and propose the "inherited private model" to store the personalization experience. Our proposed algorithm, named MAP, simultaneously achieves the aggregation and personalization goals in FL. Extensive experimental studies verify the advantages of our algorithm.
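The abstract names "restricted softmax" but does not define it. As a rough illustration only, the sketch below assumes one plausible form: logits of classes the client has never observed are scaled by a factor `alpha` in [0, 1] before normalization. The function name, the `observed` set, and `alpha` are hypothetical and are not taken from the paper.

```python
import math

def restricted_softmax(logits, observed, alpha=0.5):
    """Softmax in which logits of unobserved classes are scaled by alpha.

    Assumption: this scaling rule is an illustrative guess at what a
    "restricted" softmax could look like, not the paper's definition.
    """
    scaled = [z if c in observed else alpha * z for c, z in enumerate(logits)]
    peak = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical client that only observes classes 0 and 1 out of 3.
logits = [2.0, 1.0, 3.0]
observed = {0, 1}
standard = restricted_softmax(logits, observed, alpha=1.0)   # plain softmax
restricted = restricted_softmax(logits, observed, alpha=0.5)
print(standard, restricted)
```

With `alpha=1.0` the function reduces to the standard softmax. In this particular example the missing class has a positive logit, so a smaller `alpha` lowers its probability mass.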

Asymmetric Temperature Scaling Makes Larger Networks Teach Well Again

Oct 11, 2022

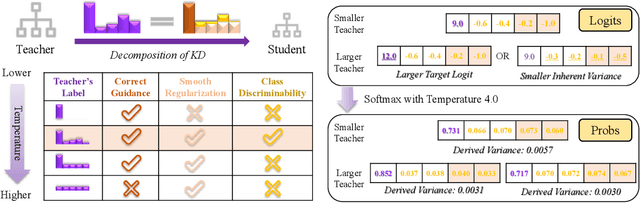

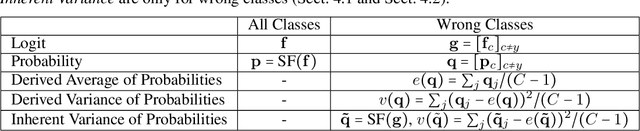

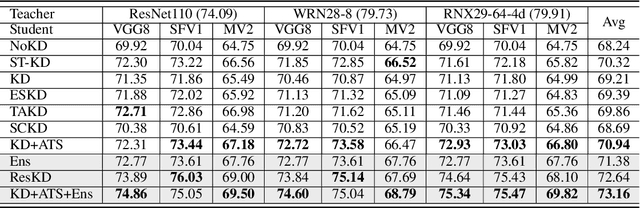

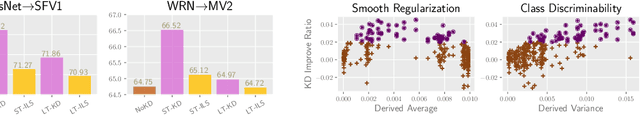

Abstract: Knowledge Distillation (KD) aims at transferring the knowledge of a well-performing neural network (the *teacher*) to a weaker one (the *student*). A peculiar phenomenon is that a more accurate model does not necessarily teach better, and temperature adjustment cannot alleviate the mismatched capacity either. To explain this, we decompose the efficacy of KD into three parts: *correct guidance*, *smooth regularization*, and *class discriminability*. The last term describes the distinctness of the *wrong class probabilities* that the teacher provides in KD. Complex teachers tend to be over-confident, and traditional temperature scaling limits the efficacy of *class discriminability*, resulting in less discriminative wrong class probabilities. Therefore, we propose *Asymmetric Temperature Scaling (ATS)*, which separately applies a higher/lower temperature to the correct/wrong class. ATS enlarges the variance of wrong class probabilities in the teacher's label and lets the students grasp the absolute affinities of wrong classes to the target class as discriminatively as possible. Both theoretical analysis and extensive experimental results demonstrate the effectiveness of ATS. The demo developed in MindSpore is available at https://gitee.com/lxcnju/ats-mindspore and will be available at https://gitee.com/mindspore/models/tree/master/research/cv/ats.
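The idea of applying a higher temperature to the correct class and a lower one to the wrong classes can be sketched as follows. This is a minimal illustration under stated assumptions, not the released implementation: the function name, the `correct` index argument, and the default temperatures are hypothetical.

```python
import math

def ats_softmax(logits, correct, tau_correct=4.0, tau_wrong=2.0):
    """Softmax with a separate temperature for the correct class.

    Assumption: each logit is divided by its own temperature before the
    usual normalization; the defaults are arbitrary illustrative values.
    """
    scaled = [
        z / (tau_correct if c == correct else tau_wrong)
        for c, z in enumerate(logits)
    ]
    peak = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# An over-confident hypothetical teacher: class 0 is the correct class.
teacher_logits = [8.0, 2.0, 1.0]
asymmetric = ats_softmax(teacher_logits, correct=0, tau_correct=4.0, tau_wrong=2.0)
uniform = ats_softmax(teacher_logits, correct=0, tau_correct=4.0, tau_wrong=4.0)
print(asymmetric, uniform)
```

In this sketch the ratio between two wrong-class probabilities depends only on `tau_wrong`, so lowering it while keeping `tau_correct` high separates the wrong classes more than a single shared temperature does.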

Avoid Overfitting User Specific Information in Federated Keyword Spotting

Jun 17, 2022

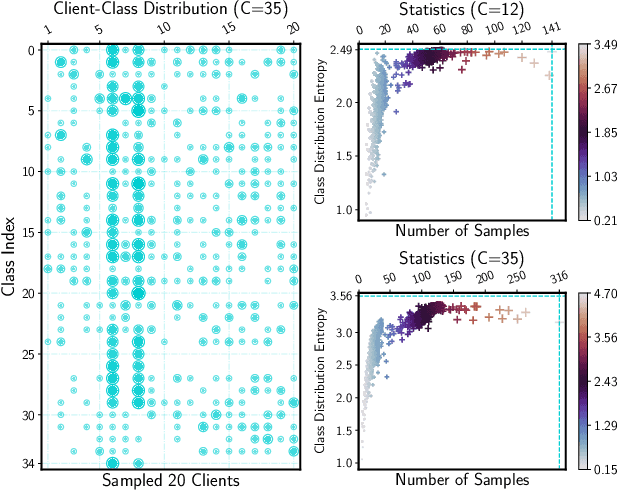

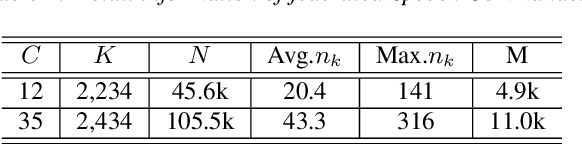

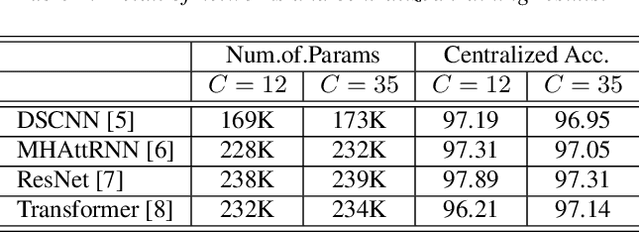

Abstract: Keyword spotting (KWS) aims to discriminate a specific wake-up word from other signals precisely and efficiently for different users. Recent works utilize various deep networks to train KWS models with all users' speech data centralized, without considering data privacy. Federated KWS (FedKWS) could serve as a solution without directly sharing users' data. However, the small amount of data, different user habits, and various accents could lead to fatal problems, e.g., overfitting or weight divergence. Hence, we propose several strategies to encourage the model not to overfit user-specific information in FedKWS. Specifically, we first propose an adversarial learning strategy, which updates the downloaded global model against an overfitted local model and explicitly encourages the global model to capture user-invariant information. Furthermore, we propose an adaptive local training strategy, letting clients with more training data and more uniform class distributions undertake more local update steps. Equivalently, this strategy weakens the negative impact of users whose data is of lower quality. Our proposed FedKWS-UI learns user-invariant information in FedKWS both explicitly and implicitly. Extensive experimental results on federated Google Speech Commands verify the effectiveness of FedKWS-UI.

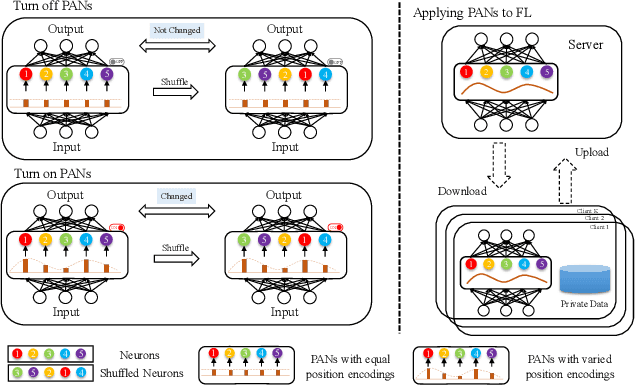

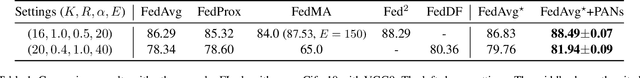

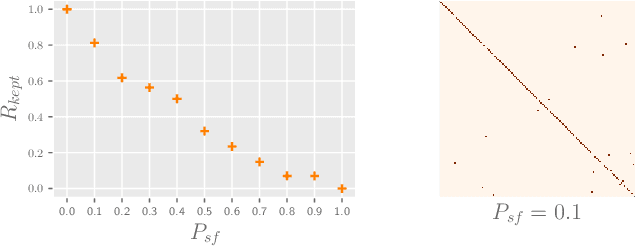

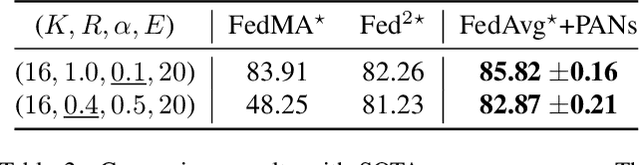

Federated Learning with Position-Aware Neurons

Apr 02, 2022

Abstract: Federated Learning (FL) fuses collaborative models from local nodes without centralizing users' data. The permutation invariance property of neural networks and the non-i.i.d. data across clients leave the locally updated parameters imprecisely aligned, which undermines coordinate-based parameter averaging. Traditional neurons do not explicitly consider position information. Hence, we propose Position-Aware Neurons (PANs) as an alternative, fusing position-related values (i.e., position encodings) into neuron outputs. PANs couple themselves to their positions and minimize the possibility of dislocation, even when updating on heterogeneous data. We turn PANs on/off to disable/enable the permutation invariance property of neural networks. PANs are tightly coupled with positions when applied to FL, making parameters across clients pre-aligned and facilitating coordinate-based parameter averaging. PANs are algorithm-agnostic and could universally improve existing FL algorithms. Furthermore, "FL with PANs" is simple to implement and computationally friendly.
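To make "fusing position encodings into neuron outputs" concrete, the sketch below adds a fixed, position-dependent offset to each neuron's pre-activation. The sinusoidal form, the `amplitude` and `periods` parameters, and all function names are assumptions made for illustration; the abstract does not specify them.

```python
import math

def position_encoding(num_neurons, amplitude=0.1, periods=1.0):
    """Fixed offset for each neuron index (assumed sinusoidal form)."""
    return [
        amplitude * math.sin(2.0 * math.pi * periods * j / num_neurons)
        for j in range(num_neurons)
    ]

def pan_layer(x, weights, bias, amplitude=0.1, periods=1.0):
    """Dense ReLU layer whose j-th neuron also receives the j-th offset."""
    offsets = position_encoding(len(weights), amplitude, periods)
    outputs = []
    for row, b, e in zip(weights, bias, offsets):
        pre_activation = sum(w * xi for w, xi in zip(row, x)) + b + e
        outputs.append(max(0.0, pre_activation))
    return outputs

# Hypothetical 4-neuron layer and its copy with neurons 0 and 1 swapped.
x = [1.0, 2.0]
weights = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, 0.5]]
swapped = [weights[1], weights[0], weights[2], weights[3]]
bias = [0.0, 0.0, 0.0, 0.0]

plain = pan_layer(x, weights, bias, amplitude=0.0)
plain_swapped = pan_layer(x, swapped, bias, amplitude=0.0)
pan = pan_layer(x, weights, bias, amplitude=0.1)
pan_swapped = pan_layer(x, swapped, bias, amplitude=0.1)
print(sorted(plain) == sorted(plain_swapped))
```

With `amplitude=0.0` the layer behaves like an ordinary one, so swapping two neurons merely permutes the outputs. With a non-zero amplitude the offsets stay attached to the positions, so the swapped layer no longer produces a permutation of the original outputs.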

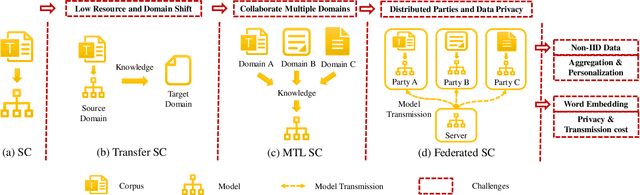

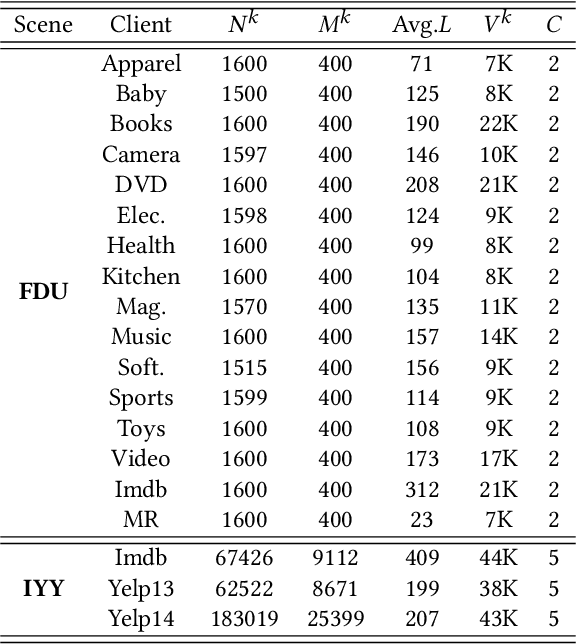

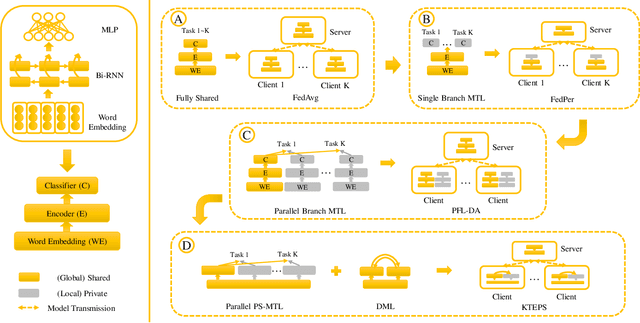

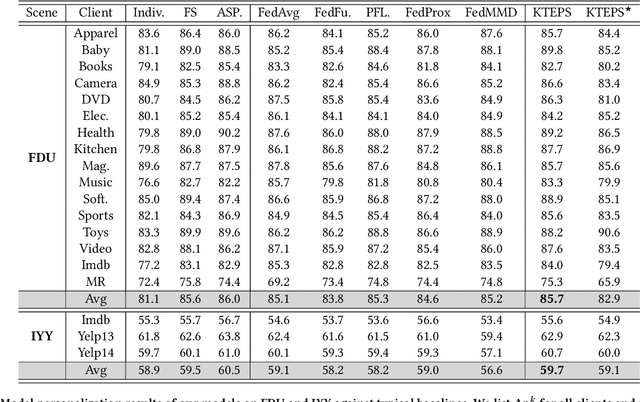

Preliminary Steps Towards Federated Sentiment Classification

Jul 26, 2021

Abstract: Automatically mining the sentiment tendency contained in natural language is a fundamental research problem for some artificial intelligence applications, where solutions alternate with challenges. Transfer learning and multi-task learning techniques have been leveraged to mitigate supervision sparsity and to coordinate multiple heterogeneous domains, respectively. In recent years, the sensitive nature of users' private data has raised another challenge for sentiment classification, i.e., data privacy protection. In this paper, we resort to federated learning for multiple-domain sentiment classification under the constraint that the corpora must be stored on decentralized devices. In view of the heterogeneous semantics across multiple parties and the peculiarities of word embeddings, we provide corresponding solutions. First, we propose a Knowledge Transfer Enhanced Private-Shared (KTEPS) framework for better model aggregation and personalization in federated sentiment classification. Second, we propose KTEPS$^\star$, which takes into account the rich semantics and large embedding size of word vectors and utilizes Projection-based Dimension Reduction (PDR) methods for privacy protection and efficient transmission simultaneously. We propose two federated sentiment classification scenes based on public benchmarks and verify the advantages of our proposed methods with extensive experimental investigations.

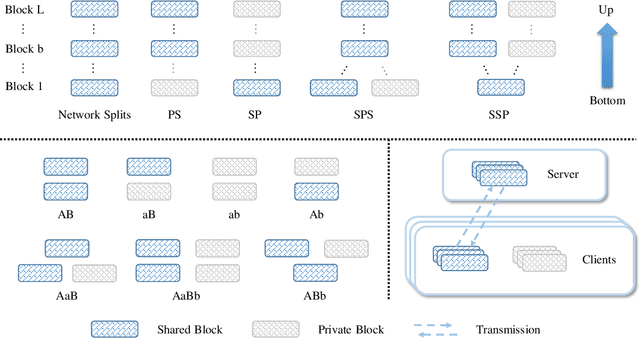

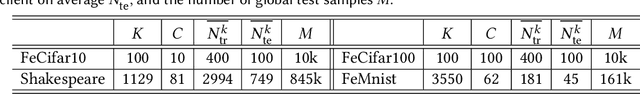

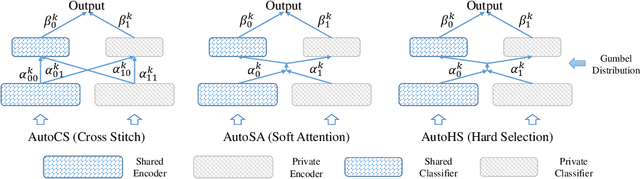

Aggregate or Not? Exploring Where to Privatize in DNN Based Federated Learning Under Different Non-IID Scenes

Jul 26, 2021

Abstract: Although federated learning (FL) has recently been proposed for efficient distributed training and data privacy protection, it still encounters many obstacles. One of these is the naturally existing statistical heterogeneity among clients, which makes local data distributions not independent and identically distributed (i.e., non-iid) and poses challenges for model aggregation and personalization. For FL with a deep neural network (DNN), privatizing some layers is a simple yet effective solution for non-iid problems. However, which layers should we privatize to facilitate the learning process? Do different categories of non-iid scenes have preferred privatization ways? Can we automatically learn the most appropriate privatization way during FL? In this paper, we answer these questions via extensive experimental studies on several FL benchmarks. First, we present the detailed statistics of these benchmarks and categorize them into covariate shift and label shift non-iid scenes. Then, we investigate both coarse-grained and fine-grained network splits and explore whether the preferred privatization ways have any potential relation to the specific category of a non-iid scene. Our findings are exciting, e.g., privatizing the base layers could boost performance even in label shift non-iid scenes, which is inconsistent with some natural conjectures. We also find that none of these privatization ways improves performance on the Shakespeare benchmark, and we guess that Shakespeare may not be a seriously non-iid scene. Finally, we propose several approaches to automatically learn where to aggregate via cross-stitch, soft attention, and hard selection. We suggest that the proposed methods could serve as a preliminary attempt to explore where to privatize for a novel non-iid scene.
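The notion of privatizing some layers can be illustrated with a small aggregation routine that averages only the shared layers and leaves the private ones on each client. This is a minimal sketch under simplifying assumptions: layers are flat lists of floats, clients are weighted equally, and the function and layer names are hypothetical.

```python
def aggregate_shared(client_models, private_layers):
    """Average shared layers across clients; keep private layers local.

    Assumption: every client holds the same layer names with equal-length
    parameter lists, and all clients contribute with equal weight.
    """
    layer_names = list(client_models[0].keys())
    shared_average = {}
    for name in layer_names:
        if name in private_layers:
            continue  # private layers are never sent to the server
        columns = zip(*(model[name] for model in client_models))
        shared_average[name] = [sum(col) / len(client_models) for col in columns]

    updated = []
    for model in client_models:
        updated.append({
            name: list(model[name]) if name in private_layers
            else list(shared_average[name])
            for name in layer_names
        })
    return updated

# Two hypothetical clients with a "base" layer and a "head" layer.
clients = [
    {"base": [1.0, 2.0], "head": [0.0]},
    {"base": [3.0, 4.0], "head": [10.0]},
]
private_head = aggregate_shared(clients, private_layers={"head"})
private_base = aggregate_shared(clients, private_layers={"base"})
print(private_head, private_base)
```

Changing `private_layers` switches between the coarse-grained choices the abstract discusses, such as keeping the head or the base layers private.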

Loosely Coupled Federated Learning Over Generative Models

Sep 28, 2020

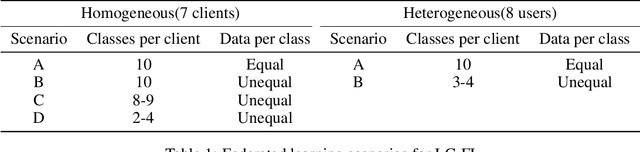

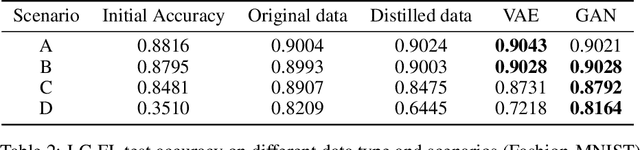

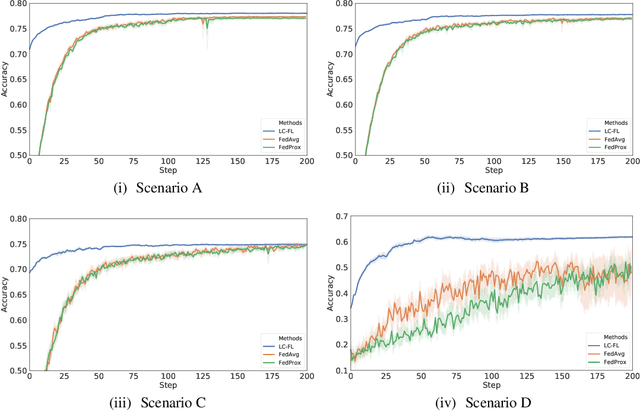

Abstract: Federated learning (FL) was proposed to achieve collaborative machine learning among various clients without uploading private data. However, due to their model aggregation strategies, existing frameworks require strict model homogeneity, limiting their application in more complicated scenarios. Besides, the communication cost of FL's model and gradient transmission is extremely high. This paper proposes Loosely Coupled Federated Learning (LC-FL), a framework that uses generative models as the transmission medium to achieve heterogeneous federated learning at low communication cost. LC-FL can be applied to scenarios where clients possess different kinds of machine learning models. Experiments on real-world datasets covering different multiparty scenarios demonstrate the effectiveness of our proposal.
