Santiago Estrada

FastSurfer-HypVINN: Automated sub-segmentation of the hypothalamus and adjacent structures on high-resolutional brain MRI

Aug 24, 2023Abstract:The hypothalamus plays a crucial role in the regulation of a broad range of physiological, behavioural, and cognitive functions. However, despite its importance, only a few small-scale neuroimaging studies have investigated its substructures, likely due to the lack of fully automated segmentation tools to address scalability and reproducibility issues of manual segmentation. While the only previous attempt to automatically sub-segment the hypothalamus with a neural network showed promise for 1.0 mm isotropic T1-weighted (T1w) MRI, there is a need for an automated tool to sub-segment also high-resolutional (HiRes) MR scans, as they are becoming widely available, and include structural detail also from multi-modal MRI. We, therefore, introduce a novel, fast, and fully automated deep learning method named HypVINN for sub-segmentation of the hypothalamus and adjacent structures on 0.8 mm isotropic T1w and T2w brain MR images that is robust to missing modalities. We extensively validate our model with respect to segmentation accuracy, generalizability, in-session test-retest reliability, and sensitivity to replicate hypothalamic volume effects (e.g. sex-differences). The proposed method exhibits high segmentation performance both for standalone T1w images as well as for T1w/T2w image pairs. Even with the additional capability to accept flexible inputs, our model matches or exceeds the performance of state-of-the-art methods with fixed inputs. We, further, demonstrate the generalizability of our method in experiments with 1.0 mm MR scans from both the Rhineland Study and the UK Biobank. Finally, HypVINN can perform the segmentation in less than a minute (GPU) and will be available in the open source FastSurfer neuroimaging software suite, offering a validated, efficient, and scalable solution for evaluating imaging-derived phenotypes of the hypothalamus.

Automated Olfactory Bulb Segmentation on High Resolutional T2-Weighted MRI

Aug 09, 2021

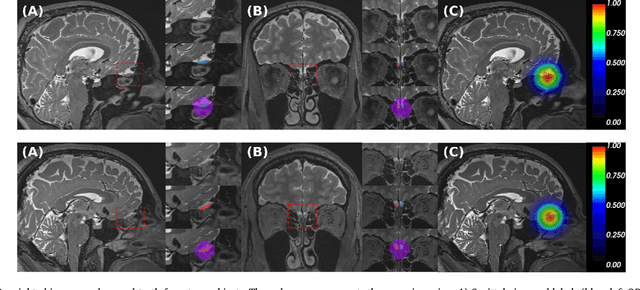

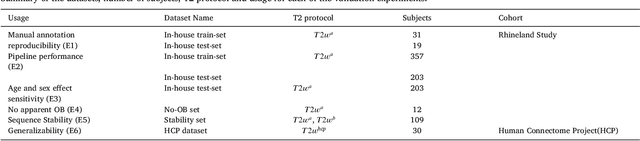

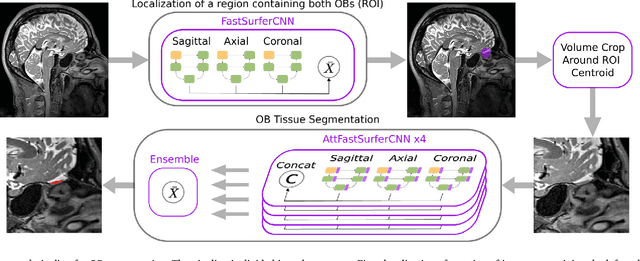

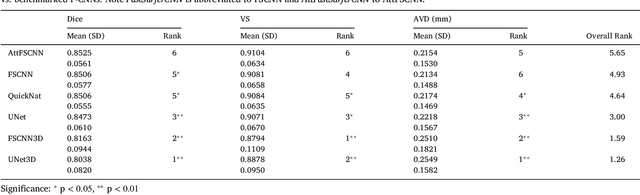

Abstract:The neuroimage analysis community has neglected the automated segmentation of the olfactory bulb (OB) despite its crucial role in olfactory function. The lack of an automatic processing method for the OB can be explained by its challenging properties. Nonetheless, recent advances in MRI acquisition techniques and resolution have allowed raters to generate more reliable manual annotations. Furthermore, the high accuracy of deep learning methods for solving semantic segmentation problems provides us with an option to reliably assess even small structures. In this work, we introduce a novel, fast, and fully automated deep learning pipeline to accurately segment OB tissue on sub-millimeter T2-weighted (T2w) whole-brain MR images. To this end, we designed a three-stage pipeline: (1) Localization of a region containing both OBs using FastSurferCNN, (2) Segmentation of OB tissue within the localized region through four independent AttFastSurferCNN - a novel deep learning architecture with a self-attention mechanism to improve modeling of contextual information, and (3) Ensemble of the predicted label maps. The OB pipeline exhibits high performance in terms of boundary delineation, OB localization, and volume estimation across a wide range of ages in 203 participants of the Rhineland Study. Moreover, it also generalizes to scans of an independent dataset never encountered during training, the Human Connectome Project (HCP), with different acquisition parameters and demographics, evaluated in 30 cases at the native 0.7mm HCP resolution, and the default 0.8mm pipeline resolution. We extensively validated our pipeline not only with respect to segmentation accuracy but also to known OB volume effects, where it can sensitively replicate age effects.

FastSurfer -- A fast and accurate deep learning based neuroimaging pipeline

Oct 09, 2019

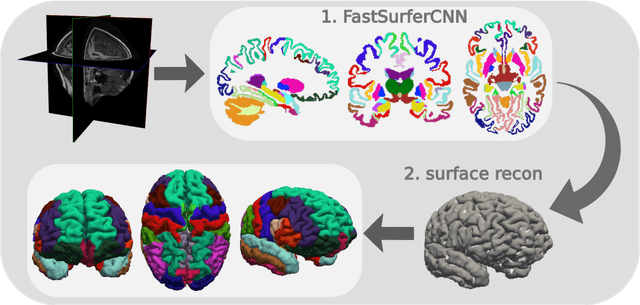

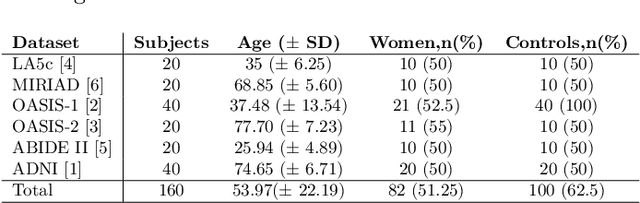

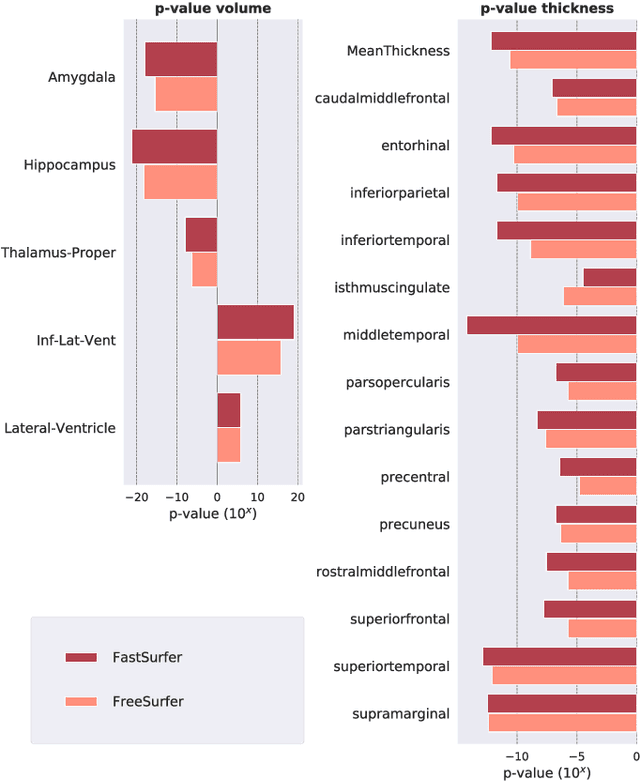

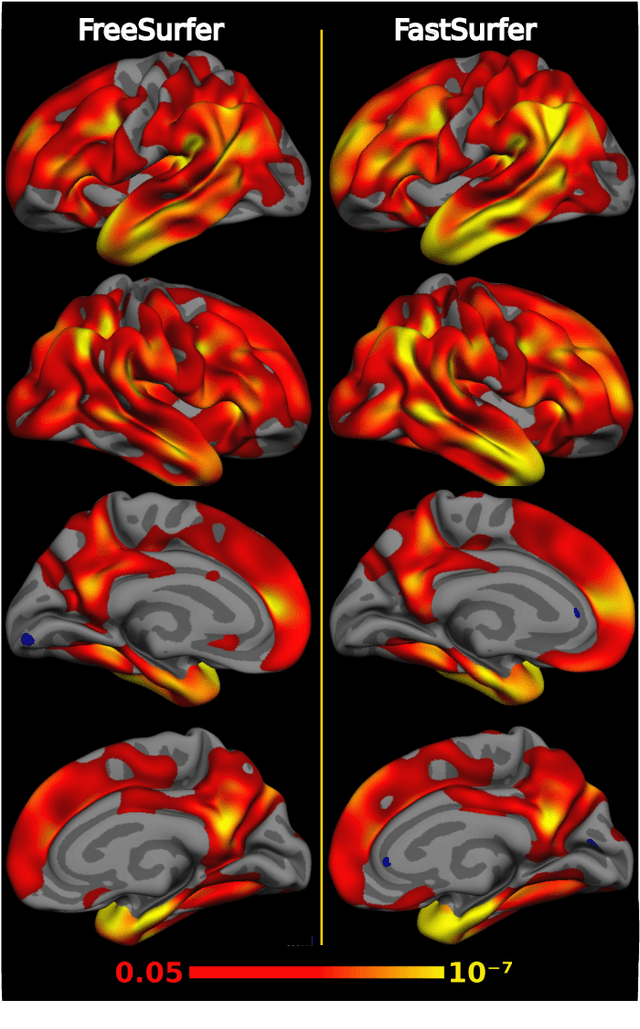

Abstract:Traditional neuroimage analysis pipelines involve computationally intensive, time-consuming optimization steps, and thus, do not scale well to large cohort studies with thousands or tens of thousands of individuals. In this work we propose a fast and accurate deep learning based neuroimaging pipeline for the automated processing of structural human brain MRI scans, including surface reconstruction and cortical parcellation. To this end, we introduce an advanced deep learning architecture capable of whole brain segmentation into 95 classes in under 1 minute, mimicking FreeSurfer's anatomical segmentation and cortical parcellation. The network architecture incorporates local and global competition via competitive dense blocks and competitive skip pathways, as well as multi-slice information aggregation that specifically tailor network performance towards accurate segmentation of both cortical and sub-cortical structures. Further, we perform fast cortical surface reconstruction and thickness analysis by introducing a spectral spherical embedding and by directly mapping the cortical labels from the image to the surface. This approach provides a full FreeSurfer alternative for volumetric analysis (within 1 minute) and surface-based thickness analysis (within only around 1h run time). For sustainability of this approach we perform extensive validation: we assert high segmentation accuracy on several unseen datasets, measure generalizability and demonstrate increased test-retest reliability, and increased sensitivity to disease effects relative to traditional FreeSurfer.

FatSegNet : A Fully Automated Deep Learning Pipeline for Adipose Tissue Segmentation on Abdominal Dixon MRI

Apr 03, 2019

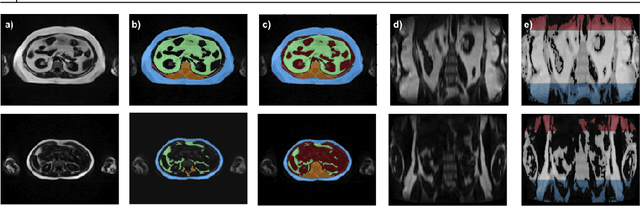

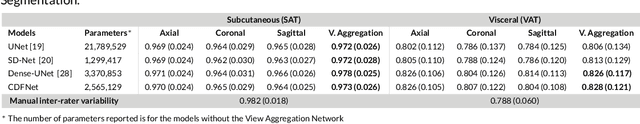

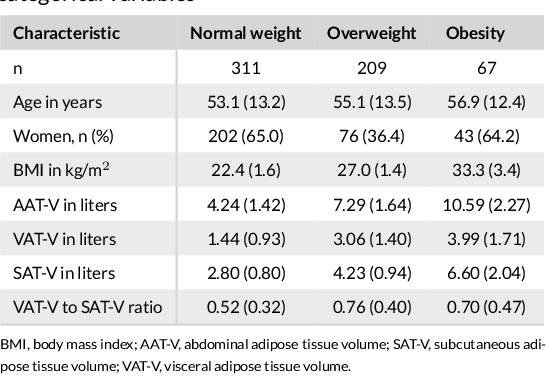

Abstract:Purpose: Development of a fast and fully automated deep learning pipeline (FatSegNet) to accurately identify, segment, and quantify abdominal adipose tissue on Dixon MRI from the Rhineland Study - a large prospective population-based study. Method: FatSegNet is composed of three stages: (i) consistent localization of the abdominal region using two 2D-Competitive Dense Fully Convolutional Networks (CDFNet), (ii) segmentation of adipose tissue on three views by independent CDFNets, and (iii) view aggregation. FatSegNet is trained with 33 manually annotated subjects, and validated by: 1) comparison of segmentation accuracy against a testingset covering a wide range of body mass index (BMI), 2) test-retest reliability, and 3) robustness in a large cohort study. Results: The CDFNet demonstrates increased robustness compared to traditional deep learning networks. FatSegNet dice score outperforms manual raters on the abdominal visceral adipose tissue (VAT, 0.828 vs. 0.788), and produces comparable results on subcutaneous adipose tissue (SAT, 0.973 vs. 0.982). The pipeline has very small test-retest absolute percentage difference and excellent agreement between scan sessions (VAT: APD = 2.957%, ICC=0.998 and SAT: APD= 3.254%, ICC=0.996). Conclusion: FatSegNet can reliably analyze a 3D Dixon MRI in1 min. It generalizes well to different body shapes, sensitively replicates known VAT and SAT volume effects in a large cohort study, and permits localized analysis of fat compartments.

Competition vs. Concatenation in Skip Connections of Fully Convolutional Networks

Jul 20, 2018

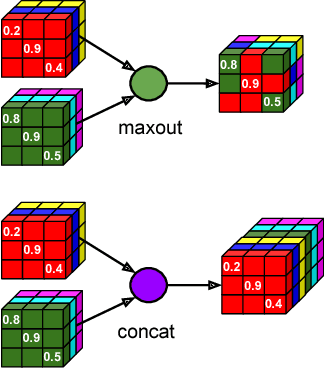

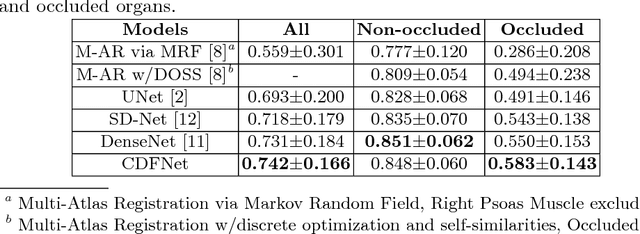

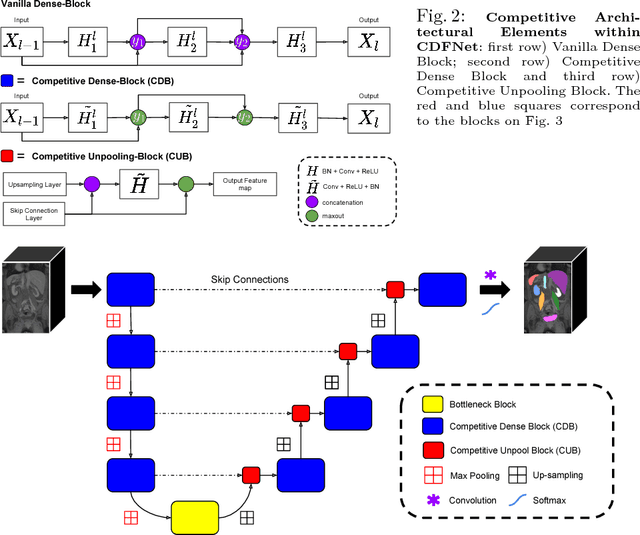

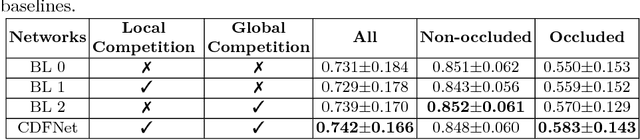

Abstract:Increased information sharing through short and long-range skip connections between layers in fully convolutional networks have demonstrated significant improvement in performance for semantic segmentation. In this paper, we propose Competitive Dense Fully Convolutional Networks (CDFNet) by introducing competitive maxout activations in place of naive feature concatenation for inducing competition amongst layers. Within CDFNet, we propose two architectural contributions, namely competitive dense block (CDB) and competitive unpooling block (CUB) to induce competition at local and global scales for short and long-range skip connections respectively. This extension is demonstrated to boost learning of specialized sub-networks targeted at segmenting specific anatomies, which in turn eases the training of complex tasks. We present the proof-of-concept on the challenging task of whole body segmentation in the publicly available VISCERAL benchmark and demonstrate improved performance over multiple learning and registration based state-of-the-art methods.

Complex Fully Convolutional Neural Networks for MR Image Reconstruction

Jul 09, 2018

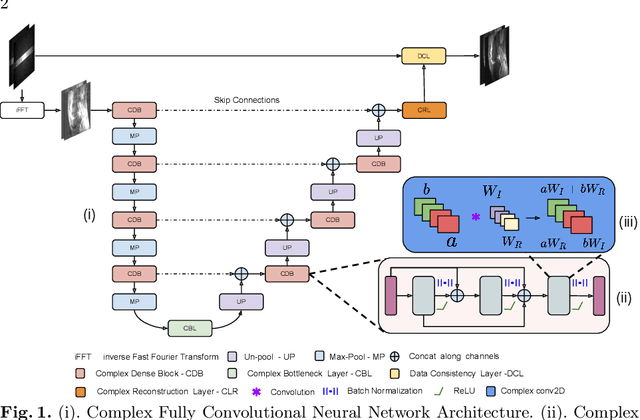

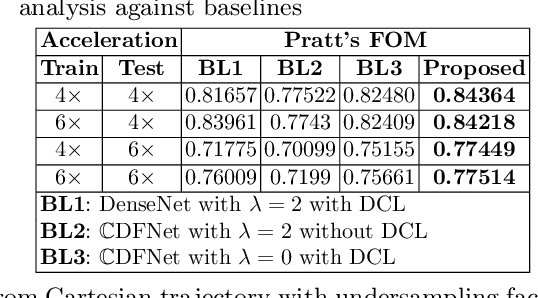

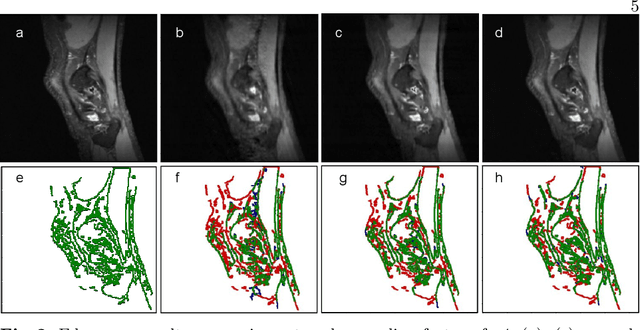

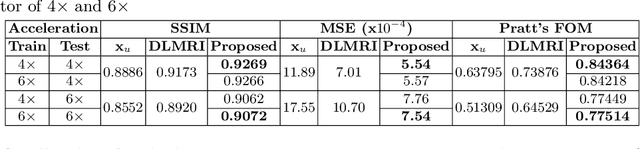

Abstract:Undersampling the k-space data is widely adopted for acceleration of Magnetic Resonance Imaging (MRI). Current deep learning based approaches for supervised learning of MRI image reconstruction employ real-valued operations and representations by treating complex valued k-space/spatial-space as real values. In this paper, we propose complex dense fully convolutional neural network ($\mathbb{C}$DFNet) for learning to de-alias the reconstruction artifacts within undersampled MRI images. We fashioned a densely-connected fully convolutional block tailored for complex-valued inputs by introducing dedicated layers such as complex convolution, batch normalization, non-linearities etc. $\mathbb{C}$DFNet leverages the inherently complex-valued nature of input k-space and learns richer representations. We demonstrate improved perceptual quality and recovery of anatomical structures through $\mathbb{C}$DFNet in contrast to its real-valued counterparts.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge