Roman Genov

Lumosaic: Hyperspectral Video via Active Illumination and Coded-Exposure Pixels

Feb 25, 2026Abstract:We present Lumosaic, a compact active hyperspectral video system designed for real-time capture of dynamic scenes. Our approach combines a narrowband LED array with a coded-exposure-pixel (CEP) camera capable of high-speed, per-pixel exposure control, enabling joint encoding of scene information across space, time, and wavelength within each video frame. Unlike passive snapshot systems that divide light across multiple spectral channels simultaneously and assume no motion during a frame's exposure, Lumosaic actively synchronizes illumination and pixel-wise exposure, improving photon utilization and preserving spectral fidelity under motion. A learning-based reconstruction pipeline then recovers 31-channel hyperspectral (400-700 nm) video at 30 fps and VGA resolution, producing temporally coherent and spectrally accurate reconstructions. Experiments on synthetic and real data demonstrate that Lumosaic significantly improves reconstruction fidelity and temporal stability over existing snapshot hyperspectral imaging systems, enabling robust hyperspectral video across diverse materials and motion conditions.

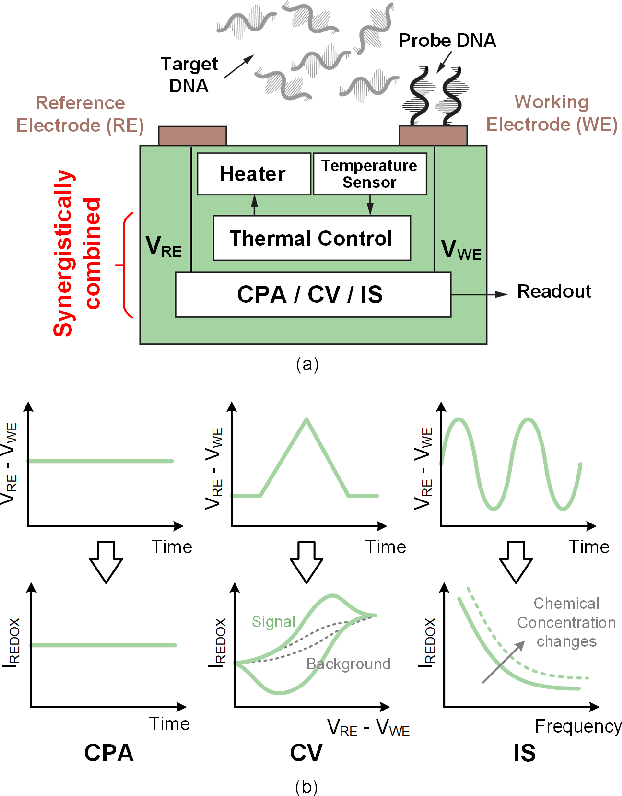

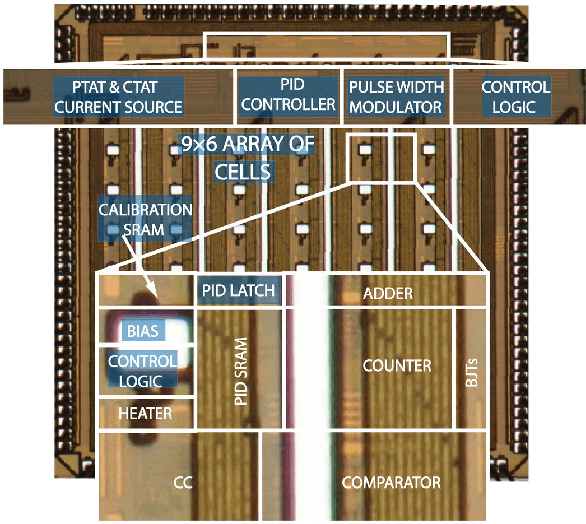

On-CMOS High-Throughput Multi-Modal Amperometric DNA Analysis with Distributed Thermal Regulation

Jul 30, 2022

Abstract:Accurate temperature regulation is critical for amperometric DNA analysis to achieve high fidelity, reliability, and throughput. In this work, a 9x6 cell array of mixed-signal CMOS distributed temperature regulators for on-CMOS multi-modal amperometric DNA analysis is presented. Three DNA analysis methods are supported, including constant potential amperometry (CPA), cyclic voltammetry (CV), and impedance spectroscopy (IS). In-cell heating and temperature sensing elements are implemented in standard CMOS technology without post-processing. Using proportional-integral-derivative (PID) control, the local temperature can be regulated to within +/-0.5C of any desired value between 20C and 90C. The two computationally intensive operations in the PID algorithm, multiplication, and subtraction, are performed by an in-cell dual-slope multiplying ADC in the mixed-signal domain, resulting in a small area and low power consumption. Over 95% of the circuit blocks are synergistically shared among the four operating modes, including CPA, CV, IS, and the proposed temperature regulation mode. A 3mmx3mm CMOS prototype fabricated in a 0.13um CMOS technology has been fully experimentally characterized. Each channel occupies an area of 0.06mm2 and consumes 42uW from a 1.2V supply. The proposed distributed temperature regulation design and the mixed-signal PID implementation can be applied to a wide range of sensory and other applications.

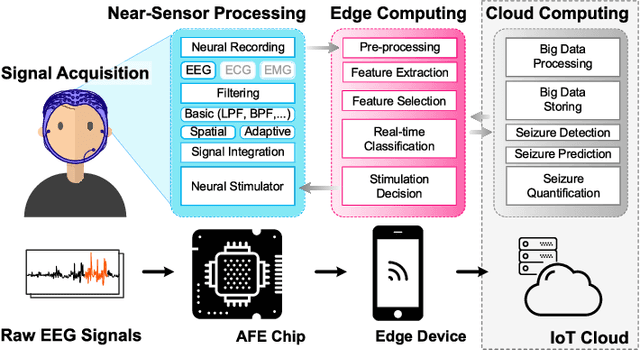

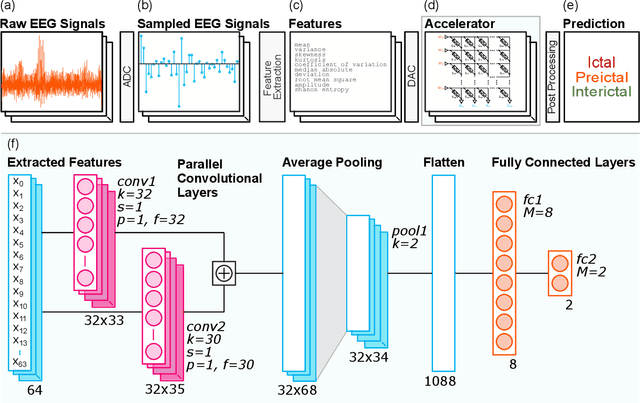

Seizure Detection and Prediction by Parallel Memristive Convolutional Neural Networks

Jun 20, 2022

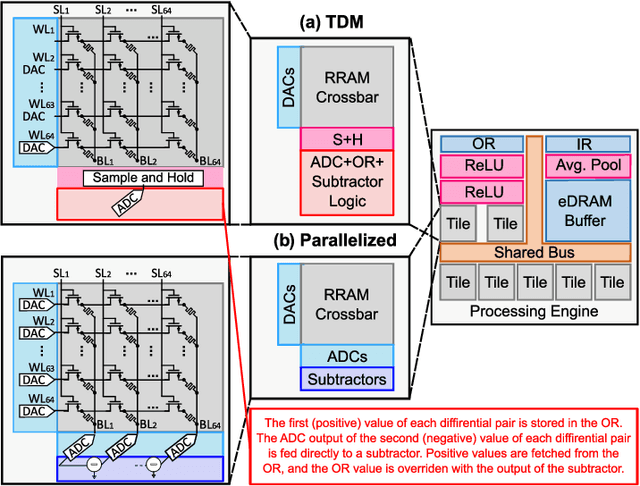

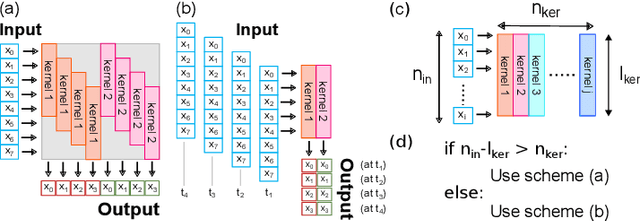

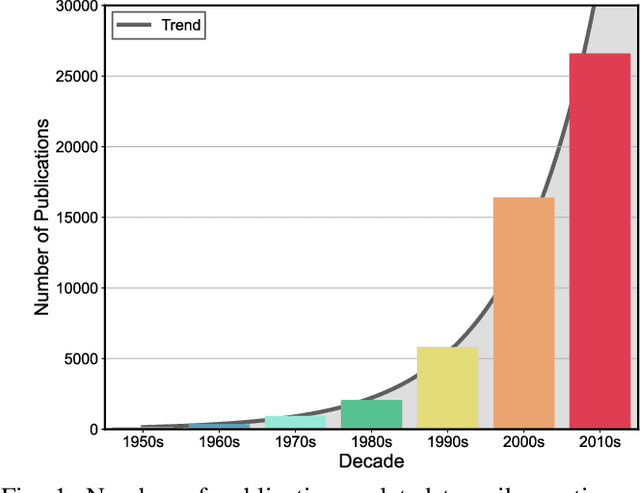

Abstract:During the past two decades, epileptic seizure detection and prediction algorithms have evolved rapidly. However, despite significant performance improvements, their hardware implementation using conventional technologies, such as Complementary Metal-Oxide-Semiconductor (CMOS), in power and area-constrained settings remains a challenging task; especially when many recording channels are used. In this paper, we propose a novel low-latency parallel Convolutional Neural Network (CNN) architecture that has between 2-2,800x fewer network parameters compared to SOTA CNN architectures and achieves 5-fold cross validation accuracy of 99.84% for epileptic seizure detection, and 99.01% and 97.54% for epileptic seizure prediction, when evaluated using the University of Bonn Electroencephalogram (EEG), CHB-MIT and SWEC-ETHZ seizure datasets, respectively. We subsequently implement our network onto analog crossbar arrays comprising Resistive Random-Access Memory (RRAM) devices, and provide a comprehensive benchmark by simulating, laying out, and determining hardware requirements of the CNN component of our system. To the best of our knowledge, we are the first to parallelize the execution of convolution layer kernels on separate analog crossbars to enable 2 orders of magnitude reduction in latency compared to SOTA hybrid Memristive-CMOS DL accelerators. Furthermore, we investigate the effects of non-idealities on our system and investigate Quantization Aware Training (QAT) to mitigate the performance degradation due to low ADC/DAC resolution. Finally, we propose a stuck weight offsetting methodology to mitigate performance degradation due to stuck RON/ROFF memristor weights, recovering up to 32% accuracy, without requiring retraining. The CNN component of our platform is estimated to consume approximately 2.791W of power while occupying an area of 31.255mm$^2$ in a 22nm FDSOI CMOS process.

* Accepted by IEEE Transactions on Biomedical Circuits and Systems

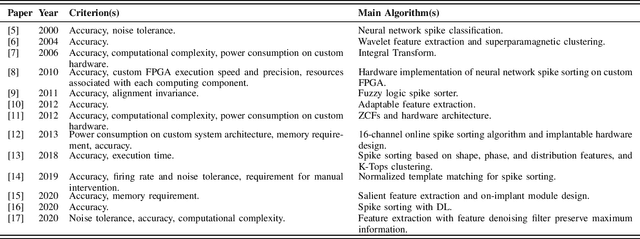

Toward A Formalized Approach for Spike Sorting Algorithms and Hardware Evaluation

May 13, 2022

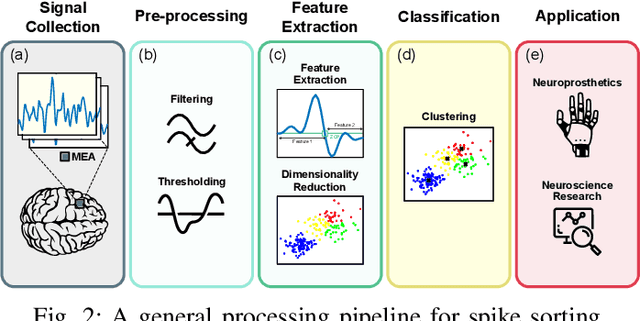

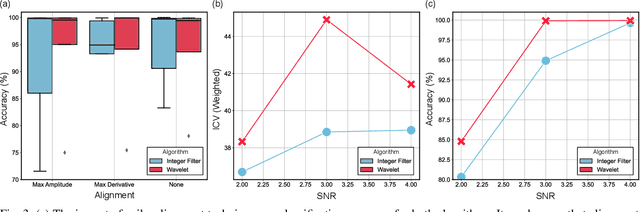

Abstract:Spike sorting algorithms are used to separate extracellular recordings of neuronal populations into single-unit spike activities. The development of customized hardware implementing spike sorting algorithms is burgeoning. However, there is a lack of a systematic approach and a set of standardized evaluation criteria to facilitate direct comparison of both software and hardware implementations. In this paper, we formalize a set of standardized criteria and a publicly available synthetic dataset entitled Synthetic Simulations Of Extracellular Recordings (SSOER), which was constructed by aggregating existing synthetic datasets with varying Signal-To-Noise Ratios (SNRs). Furthermore, we present a benchmark for future comparison, and use our criteria to evaluate a simulated Resistive Random-Access Memory (RRAM) In-Memory Computing (IMC) system using the Discrete Wavelet Transform (DWT) for feature extraction. Our system consumes approximately (per channel) 10.72mW and occupies an area of 0.66mm$^2$ in a 22nm FDSOI Complementary Metal-Oxide-Semiconductor (CMOS) process.

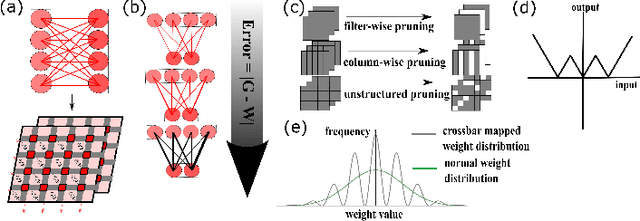

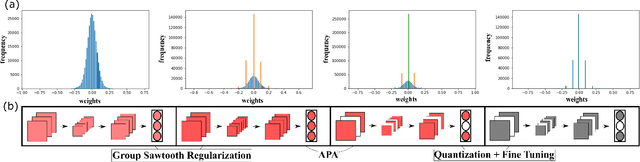

PRUNIX: Non-Ideality Aware Convolutional Neural Network Pruning for Memristive Accelerators

Feb 03, 2022

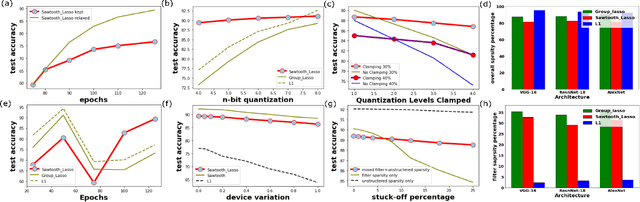

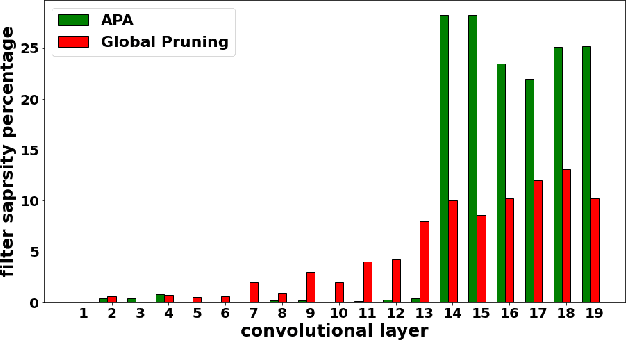

Abstract:In this work, PRUNIX, a framework for training and pruning convolutional neural networks is proposed for deployment on memristor crossbar based accelerators. PRUNIX takes into account the numerous non-ideal effects of memristor crossbars including weight quantization, state-drift, aging and stuck-at-faults. PRUNIX utilises a novel Group Sawtooth Regularization intended to improve non-ideality tolerance as well as sparsity, and a novel Adaptive Pruning Algorithm (APA) intended to minimise accuracy loss by considering the sensitivity of different layers of a CNN to pruning. We compare our regularization and pruning methods with other standards on multiple CNN architectures, and observe an improvement of 13% test accuracy when quantization and other non-ideal effects are accounted for with an overall sparsity of 85%, which is similar to other methods

HYPERLOCK: In-Memory Hyperdimensional Encryption in Memristor Crossbar Array

Jan 27, 2022

Abstract:We present a novel cryptography architecture based on memristor crossbar array, binary hypervectors, and neural network. Utilizing the stochastic and unclonable nature of memristor crossbar and error tolerance of binary hypervectors and neural network, implementation of the algorithm on memristor crossbar simulation is made possible. We demonstrate that with an increasing dimension of the binary hypervectors, the non-idealities in the memristor circuit can be effectively controlled. At the fine level of controlled crossbar non-ideality, noise from memristor circuit can be used to encrypt data while being sufficiently interpretable by neural network for decryption. We applied our algorithm on image cryptography for proof of concept, and to text en/decryption with 100% decryption accuracy despite crossbar noises. Our work shows the potential and feasibility of using memristor crossbars as an unclonable stochastic encoder unit of cryptography on top of their existing functionality as a vector-matrix multiplication acceleration device.

Design Space Exploration of Dense and Sparse Mapping Schemes for RRAM Architectures

Jan 25, 2022Abstract:The impact of device and circuit-level effects in mixed-signal Resistive Random Access Memory (RRAM) accelerators typically manifest as performance degradation of Deep Learning (DL) algorithms, but the degree of impact varies based on algorithmic features. These include network architecture, capacity, weight distribution, and the type of inter-layer connections. Techniques are continuously emerging to efficiently train sparse neural networks, which may have activation sparsity, quantization, and memristive noise. In this paper, we present an extended Design Space Exploration (DSE) methodology to quantify the benefits and limitations of dense and sparse mapping schemes for a variety of network architectures. While sparsity of connectivity promotes less power consumption and is often optimized for extracting localized features, its performance on tiled RRAM arrays may be more susceptible to noise due to under-parameterization, when compared to dense mapping schemes. Moreover, we present a case study quantifying and formalizing the trade-offs of typical non-idealities introduced into 1-Transistor-1-Resistor (1T1R) tiled memristive architectures and the size of modular crossbar tiles using the CIFAR-10 dataset.

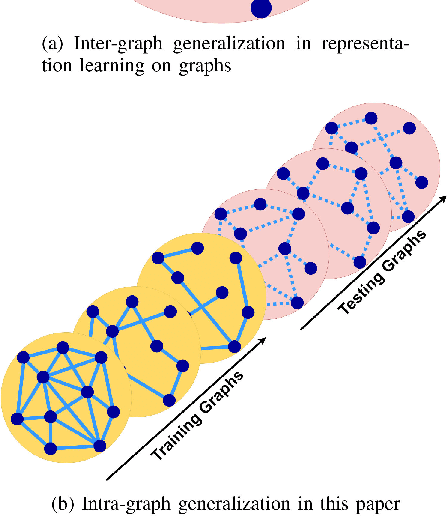

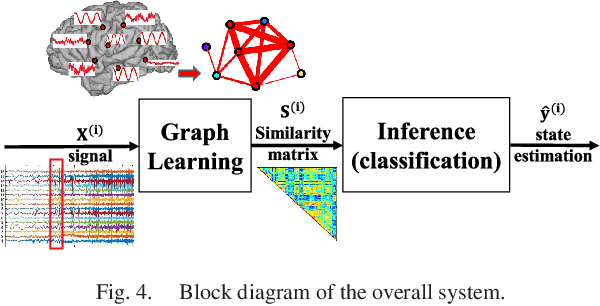

Node-Centric Graph Learning from Data for Brain State Identification

Nov 04, 2020

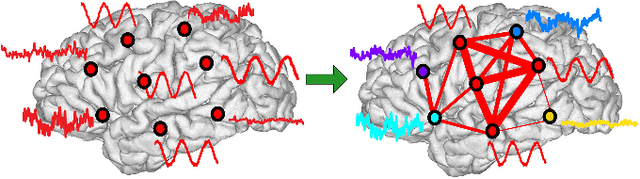

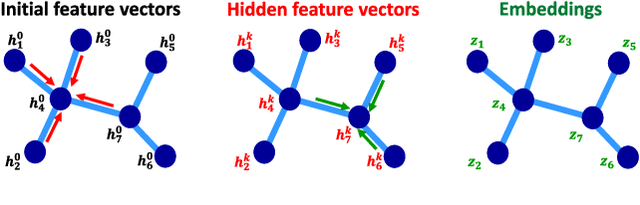

Abstract:Data-driven graph learning models a network by determining the strength of connections between its nodes. The data refers to a graph signal which associates a value with each graph node. Existing graph learning methods either use simplified models for the graph signal, or they are prohibitively expensive in terms of computational and memory requirements. This is particularly true when the number of nodes is high or there are temporal changes in the network. In order to consider richer models with a reasonable computational tractability, we introduce a graph learning method based on representation learning on graphs. Representation learning generates an embedding for each graph node, taking the information from neighbouring nodes into account. Our graph learning method further modifies the embeddings to compute the graph similarity matrix. In this work, graph learning is used to examine brain networks for brain state identification. We infer time-varying brain graphs from an extensive dataset of intracranial electroencephalographic (iEEG) signals from ten patients. We then apply the graphs as input to a classifier to distinguish seizure vs. non-seizure brain states. Using the binary classification metric of area under the receiver operating characteristic curve (AUC), this approach yields an average of 9.13 percent improvement when compared to two widely used brain network modeling methods.

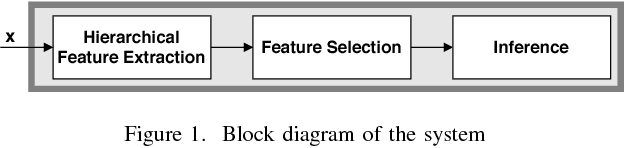

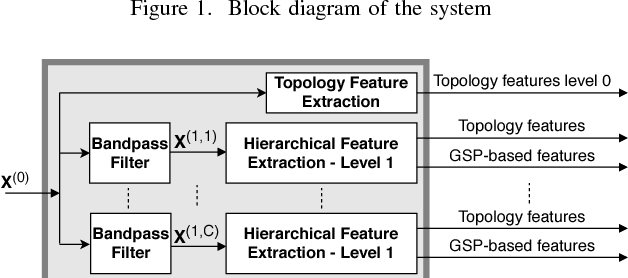

A Hierarchical Graph Signal Processing Approach to Inference from Spatiotemporal Signals

Oct 25, 2020

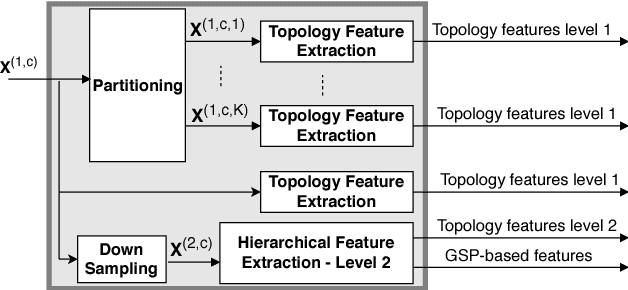

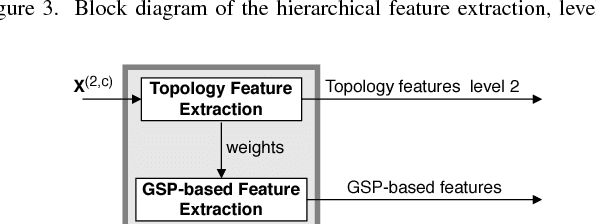

Abstract:Motivated by the emerging area of graph signal processing (GSP), we introduce a novel method to draw inference from spatiotemporal signals. Data acquisition in different locations over time is common in sensor networks, for diverse applications ranging from object tracking in wireless networks to medical uses such as electroencephalography (EEG) signal processing. In this paper we leverage novel techniques of GSP to develop a hierarchical feature extraction approach by mapping the data onto a series of spatiotemporal graphs. Such a model maps signals onto vertices of a graph and the time-space dependencies among signals are modeled by the edge weights. Signal components acquired from different locations and time often have complicated functional dependencies. Accordingly, their corresponding graph weights are learned from data and used in two ways. First, they are used as a part of the embedding related to the topology of graph, such as density. Second, they provide the connectivities of the base graph for extracting higher level GSP-based features. The latter include the energies of the signal's graph Fourier transform in different frequency bands. We test our approach on the intracranial EEG (iEEG) data set of the Kaggle epileptic seizure detection contest. In comparison to the winning code, the results show a slight net improvement and up to 6 percent improvement in per subject analysis, while the number of features are decreased by 75 percent on average.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge