Rishubh Parihar

MonoPlace3D: Learning 3D-Aware Object Placement for 3D Monocular Detection

Apr 10, 2025Abstract:Current monocular 3D detectors are held back by the limited diversity and scale of real-world datasets. While data augmentation certainly helps, it's particularly difficult to generate realistic scene-aware augmented data for outdoor settings. Most current approaches to synthetic data generation focus on realistic object appearance through improved rendering techniques. However, we show that where and how objects are positioned is just as crucial for training effective 3D monocular detectors. The key obstacle lies in automatically determining realistic object placement parameters - including position, dimensions, and directional alignment when introducing synthetic objects into actual scenes. To address this, we introduce MonoPlace3D, a novel system that considers the 3D scene content to create realistic augmentations. Specifically, given a background scene, MonoPlace3D learns a distribution over plausible 3D bounding boxes. Subsequently, we render realistic objects and place them according to the locations sampled from the learned distribution. Our comprehensive evaluation on two standard datasets KITTI and NuScenes, demonstrates that MonoPlace3D significantly improves the accuracy of multiple existing monocular 3D detectors while being highly data efficient.

Compass Control: Multi Object Orientation Control for Text-to-Image Generation

Apr 10, 2025Abstract:Existing approaches for controlling text-to-image diffusion models, while powerful, do not allow for explicit 3D object-centric control, such as precise control of object orientation. In this work, we address the problem of multi-object orientation control in text-to-image diffusion models. This enables the generation of diverse multi-object scenes with precise orientation control for each object. The key idea is to condition the diffusion model with a set of orientation-aware \textbf{compass} tokens, one for each object, along with text tokens. A light-weight encoder network predicts these compass tokens taking object orientation as the input. The model is trained on a synthetic dataset of procedurally generated scenes, each containing one or two 3D assets on a plain background. However, direct training this framework results in poor orientation control as well as leads to entanglement among objects. To mitigate this, we intervene in the generation process and constrain the cross-attention maps of each compass token to its corresponding object regions. The trained model is able to achieve precise orientation control for a) complex objects not seen during training and b) multi-object scenes with more than two objects, indicating strong generalization capabilities. Further, when combined with personalization methods, our method precisely controls the orientation of the new object in diverse contexts. Our method achieves state-of-the-art orientation control and text alignment, quantified with extensive evaluations and a user study.

PreciseControl: Enhancing Text-To-Image Diffusion Models with Fine-Grained Attribute Control

Jul 24, 2024Abstract:Recently, we have seen a surge of personalization methods for text-to-image (T2I) diffusion models to learn a concept using a few images. Existing approaches, when used for face personalization, suffer to achieve convincing inversion with identity preservation and rely on semantic text-based editing of the generated face. However, a more fine-grained control is desired for facial attribute editing, which is challenging to achieve solely with text prompts. In contrast, StyleGAN models learn a rich face prior and enable smooth control towards fine-grained attribute editing by latent manipulation. This work uses the disentangled $\mathcal{W+}$ space of StyleGANs to condition the T2I model. This approach allows us to precisely manipulate facial attributes, such as smoothly introducing a smile, while preserving the existing coarse text-based control inherent in T2I models. To enable conditioning of the T2I model on the $\mathcal{W+}$ space, we train a latent mapper to translate latent codes from $\mathcal{W+}$ to the token embedding space of the T2I model. The proposed approach excels in the precise inversion of face images with attribute preservation and facilitates continuous control for fine-grained attribute editing. Furthermore, our approach can be readily extended to generate compositions involving multiple individuals. We perform extensive experiments to validate our method for face personalization and fine-grained attribute editing.

Text2Place: Affordance-aware Text Guided Human Placement

Jul 22, 2024Abstract:For a given scene, humans can easily reason for the locations and pose to place objects. Designing a computational model to reason about these affordances poses a significant challenge, mirroring the intuitive reasoning abilities of humans. This work tackles the problem of realistic human insertion in a given background scene termed as \textbf{Semantic Human Placement}. This task is extremely challenging given the diverse backgrounds, scale, and pose of the generated person and, finally, the identity preservation of the person. We divide the problem into the following two stages \textbf{i)} learning \textit{semantic masks} using text guidance for localizing regions in the image to place humans and \textbf{ii)} subject-conditioned inpainting to place a given subject adhering to the scene affordance within the \textit{semantic masks}. For learning semantic masks, we leverage rich object-scene priors learned from the text-to-image generative models and optimize a novel parameterization of the semantic mask, eliminating the need for large-scale training. To the best of our knowledge, we are the first ones to provide an effective solution for realistic human placements in diverse real-world scenes. The proposed method can generate highly realistic scene compositions while preserving the background and subject identity. Further, we present results for several downstream tasks - scene hallucination from a single or multiple generated persons and text-based attribute editing. With extensive comparisons against strong baselines, we show the superiority of our method in realistic human placement.

Balancing Act: Distribution-Guided Debiasing in Diffusion Models

Feb 28, 2024Abstract:Diffusion Models (DMs) have emerged as powerful generative models with unprecedented image generation capability. These models are widely used for data augmentation and creative applications. However, DMs reflect the biases present in the training datasets. This is especially concerning in the context of faces, where the DM prefers one demographic subgroup vs others (eg. female vs male). In this work, we present a method for debiasing DMs without relying on additional data or model retraining. Specifically, we propose Distribution Guidance, which enforces the generated images to follow the prescribed attribute distribution. To realize this, we build on the key insight that the latent features of denoising UNet hold rich demographic semantics, and the same can be leveraged to guide debiased generation. We train Attribute Distribution Predictor (ADP) - a small mlp that maps the latent features to the distribution of attributes. ADP is trained with pseudo labels generated from existing attribute classifiers. The proposed Distribution Guidance with ADP enables us to do fair generation. Our method reduces bias across single/multiple attributes and outperforms the baseline by a significant margin for unconditional and text-conditional diffusion models. Further, we present a downstream task of training a fair attribute classifier by rebalancing the training set with our generated data.

Exploring Attribute Variations in Style-based GANs using Diffusion Models

Nov 27, 2023Abstract:Existing attribute editing methods treat semantic attributes as binary, resulting in a single edit per attribute. However, attributes such as eyeglasses, smiles, or hairstyles exhibit a vast range of diversity. In this work, we formulate the task of \textit{diverse attribute editing} by modeling the multidimensional nature of attribute edits. This enables users to generate multiple plausible edits per attribute. We capitalize on disentangled latent spaces of pretrained GANs and train a Denoising Diffusion Probabilistic Model (DDPM) to learn the latent distribution for diverse edits. Specifically, we train DDPM over a dataset of edit latent directions obtained by embedding image pairs with a single attribute change. This leads to latent subspaces that enable diverse attribute editing. Applying diffusion in the highly compressed latent space allows us to model rich distributions of edits within limited computational resources. Through extensive qualitative and quantitative experiments conducted across a range of datasets, we demonstrate the effectiveness of our approach for diverse attribute editing. We also showcase the results of our method applied for 3D editing of various face attributes.

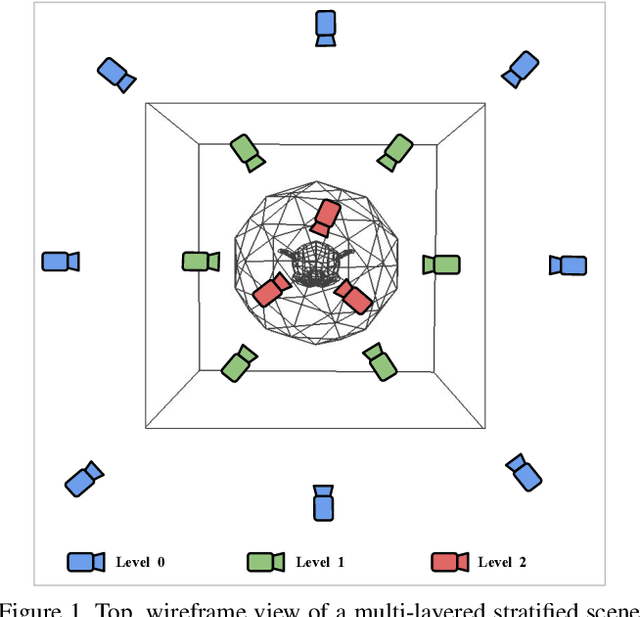

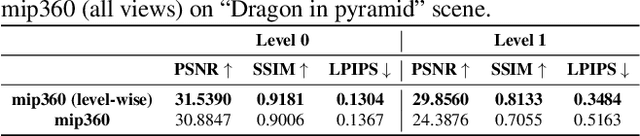

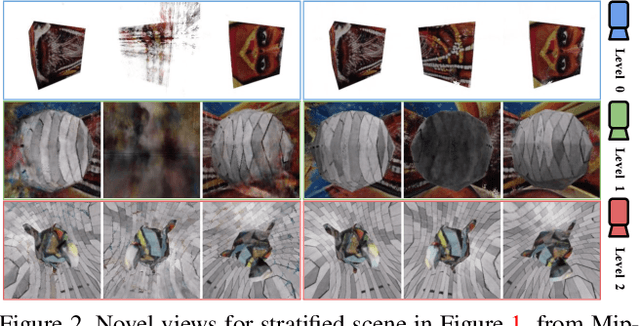

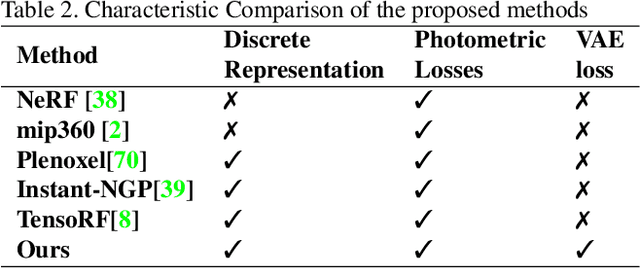

Strata-NeRF : Neural Radiance Fields for Stratified Scenes

Aug 20, 2023

Abstract:Neural Radiance Field (NeRF) approaches learn the underlying 3D representation of a scene and generate photo-realistic novel views with high fidelity. However, most proposed settings concentrate on modelling a single object or a single level of a scene. However, in the real world, we may capture a scene at multiple levels, resulting in a layered capture. For example, tourists usually capture a monument's exterior structure before capturing the inner structure. Modelling such scenes in 3D with seamless switching between levels can drastically improve immersive experiences. However, most existing techniques struggle in modelling such scenes. We propose Strata-NeRF, a single neural radiance field that implicitly captures a scene with multiple levels. Strata-NeRF achieves this by conditioning the NeRFs on Vector Quantized (VQ) latent representations which allow sudden changes in scene structure. We evaluate the effectiveness of our approach in multi-layered synthetic dataset comprising diverse scenes and then further validate its generalization on the real-world RealEstate10K dataset. We find that Strata-NeRF effectively captures stratified scenes, minimizes artifacts, and synthesizes high-fidelity views compared to existing approaches.

We never go out of Style: Motion Disentanglement by Subspace Decomposition of Latent Space

Jun 01, 2023

Abstract:Real-world objects perform complex motions that involve multiple independent motion components. For example, while talking, a person continuously changes their expressions, head, and body pose. In this work, we propose a novel method to decompose motion in videos by using a pretrained image GAN model. We discover disentangled motion subspaces in the latent space of widely used style-based GAN models that are semantically meaningful and control a single explainable motion component. The proposed method uses only a few $(\approx10)$ ground truth video sequences to obtain such subspaces. We extensively evaluate the disentanglement properties of motion subspaces on face and car datasets, quantitatively and qualitatively. Further, we present results for multiple downstream tasks such as motion editing, and selective motion transfer, e.g. transferring only facial expressions without training for it.

Hierarchical Semantic Regularization of Latent Spaces in StyleGANs

Aug 07, 2022

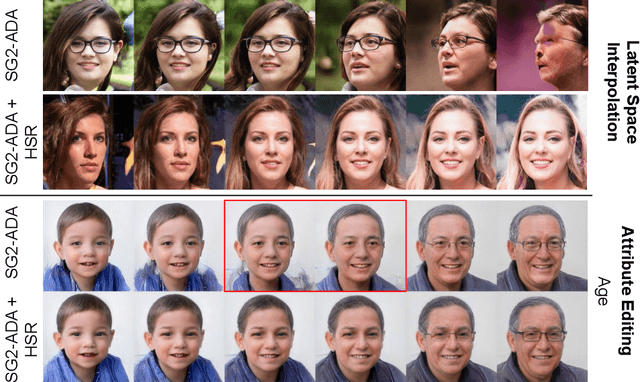

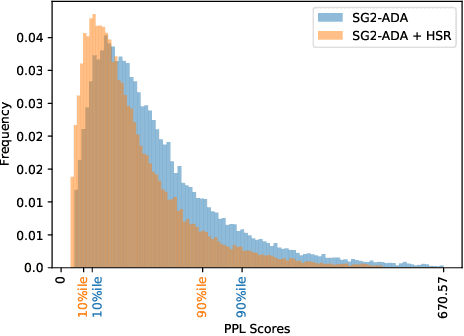

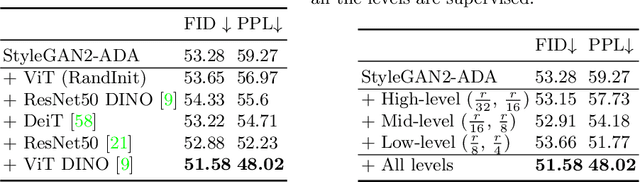

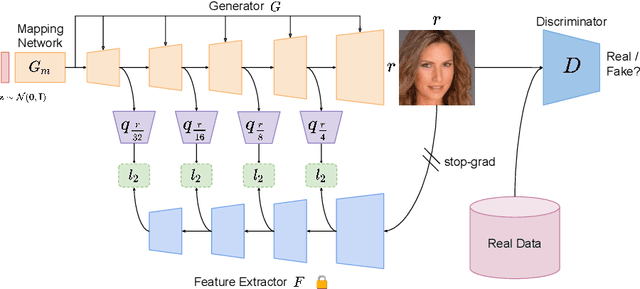

Abstract:Progress in GANs has enabled the generation of high-resolution photorealistic images of astonishing quality. StyleGANs allow for compelling attribute modification on such images via mathematical operations on the latent style vectors in the W/W+ space that effectively modulate the rich hierarchical representations of the generator. Such operations have recently been generalized beyond mere attribute swapping in the original StyleGAN paper to include interpolations. In spite of many significant improvements in StyleGANs, they are still seen to generate unnatural images. The quality of the generated images is predicated on two assumptions; (a) The richness of the hierarchical representations learnt by the generator, and, (b) The linearity and smoothness of the style spaces. In this work, we propose a Hierarchical Semantic Regularizer (HSR) which aligns the hierarchical representations learnt by the generator to corresponding powerful features learnt by pretrained networks on large amounts of data. HSR is shown to not only improve generator representations but also the linearity and smoothness of the latent style spaces, leading to the generation of more natural-looking style-edited images. To demonstrate improved linearity, we propose a novel metric - Attribute Linearity Score (ALS). A significant reduction in the generation of unnatural images is corroborated by improvement in the Perceptual Path Length (PPL) metric by 16.19% averaged across different standard datasets while simultaneously improving the linearity of attribute-change in the attribute editing tasks.

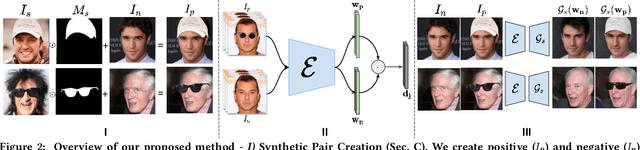

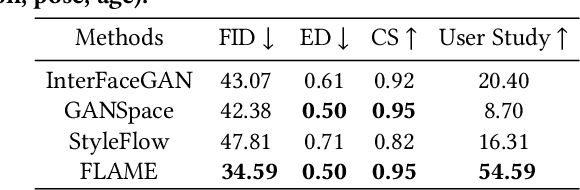

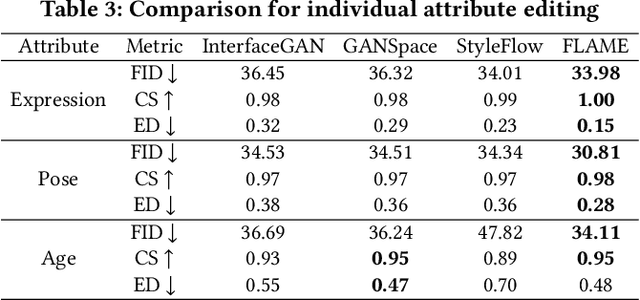

Everything is There in Latent Space: Attribute Editing and Attribute Style Manipulation by StyleGAN Latent Space Exploration

Jul 20, 2022

Abstract:Unconstrained Image generation with high realism is now possible using recent Generative Adversarial Networks (GANs). However, it is quite challenging to generate images with a given set of attributes. Recent methods use style-based GAN models to perform image editing by leveraging the semantic hierarchy present in the layers of the generator. We present Few-shot Latent-based Attribute Manipulation and Editing (FLAME), a simple yet effective framework to perform highly controlled image editing by latent space manipulation. Specifically, we estimate linear directions in the latent space (of a pre-trained StyleGAN) that controls semantic attributes in the generated image. In contrast to previous methods that either rely on large-scale attribute labeled datasets or attribute classifiers, FLAME uses minimal supervision of a few curated image pairs to estimate disentangled edit directions. FLAME can perform both individual and sequential edits with high precision on a diverse set of images while preserving identity. Further, we propose a novel task of Attribute Style Manipulation to generate diverse styles for attributes such as eyeglass and hair. We first encode a set of synthetic images of the same identity but having different attribute styles in the latent space to estimate an attribute style manifold. Sampling a new latent from this manifold will result in a new attribute style in the generated image. We propose a novel sampling method to sample latent from the manifold, enabling us to generate a diverse set of attribute styles beyond the styles present in the training set. FLAME can generate diverse attribute styles in a disentangled manner. We illustrate the superior performance of FLAME against previous image editing methods by extensive qualitative and quantitative comparisons. FLAME also generalizes well on multiple datasets such as cars and churches.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge