Richard E. Carson

Noise-aware Dynamic Image Denoising and Positron Range Correction for Rubidium-82 Cardiac PET Imaging via Self-supervision

Sep 17, 2024

Abstract: Rb-82 is a radioactive isotope widely used for cardiac PET imaging. Despite its numerous benefits, several factors limit the image quality and quantitative accuracy of Rb-82 PET. First, the short half-life of Rb-82 results in noisy dynamic frames. A low signal-to-noise ratio leads to inaccurate and biased image quantification, and noisy dynamic frames also produce highly noisy parametric images. Moreover, the noise level varies substantially across dynamic frames because of radiotracer decay and the short half-life. Existing denoising methods are not applicable to this task because paired training inputs/labels are unavailable and the methods do not generalize across varying noise levels. Second, Rb-82 emits high-energy positrons. Compared with the positrons of other tracers such as F-18, Rb-82 positrons travel a longer distance before annihilation, which degrades spatial resolution. The goal of this study is to propose a self-supervised method for simultaneous (1) noise-aware dynamic image denoising and (2) positron range correction for Rb-82 cardiac PET imaging. Tested on a series of PET scans from a cohort of normal volunteers, the proposed method produced images with superior visual quality. To demonstrate the improvement in image quantification, we compared image-derived input functions (IDIFs) with arterial input functions (AIFs) from continuous arterial blood samples. Compared with the original dynamic frames, the IDIF derived from the proposed method had a lower AUC difference, decreasing from 11.09% to 7.58% on average. The proposed method also improved the quantification of myocardial blood flow (MBF), as validated against O-15-water scans: compared with the original dynamic frames, the mean MBF difference decreased from 0.43 to 0.09. We also conducted a generalizability experiment on 37 patient scans obtained from a different country using a different scanner.
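The abstract attributes the frame-to-frame noise variation to decay and the short half-life, and reports quantification as an AUC difference between IDIF and AIF. The sketch below illustrates both ideas under stated assumptions; it is not the authors' code. It estimates relative Poisson noise per frame from physical decay alone (tracer kinetics, which also shape frame counts in practice, are ignored) using an assumed Rb-82 half-life of about 76 s, and it computes the AUC difference as the absolute relative difference of trapezoidal AUCs. The function names, the frame schedule, and the exact form of the AUC metric are hypothetical.

```python
import numpy as np


def relative_frame_noise(frame_starts_s, frame_durations_s, half_life_s=76.0):
    """Relative noise per dynamic frame from physical decay alone (assumption).

    Counts in a frame are taken to be proportional to the integral of
    exp(-lambda * t) over the frame; Poisson noise then scales as
    1 / sqrt(counts). Tracer kinetics are deliberately ignored.
    """
    lam = np.log(2.0) / half_life_s
    t0 = np.asarray(frame_starts_s, dtype=float)
    t1 = t0 + np.asarray(frame_durations_s, dtype=float)
    counts = (np.exp(-lam * t0) - np.exp(-lam * t1)) / lam
    noise = 1.0 / np.sqrt(counts)
    return noise / noise.min()  # least noisy frame normalised to 1.0


def trapezoid_auc(t, y):
    """Area under a sampled curve using the trapezoidal rule."""
    t = np.asarray(t, dtype=float)
    y = np.asarray(y, dtype=float)
    return float(np.sum(np.diff(t) * (y[1:] + y[:-1]) / 2.0))


def auc_percent_difference(t_idif, idif, t_aif, aif):
    """Absolute AUC difference of the IDIF relative to the AIF, in percent.

    The two curves may be sampled on different time grids (for example,
    frame mid-times for the IDIF and blood-sample times for the AIF).
    """
    auc_idif = trapezoid_auc(t_idif, idif)
    auc_aif = trapezoid_auc(t_aif, aif)
    return 100.0 * abs(auc_idif - auc_aif) / auc_aif


if __name__ == "__main__":
    # Hypothetical frame schedule in seconds, not the study's protocol.
    starts = [0, 10, 20, 30, 60, 90, 150, 210]
    durations = [10, 10, 10, 30, 30, 60, 60, 90]
    print(np.round(relative_frame_noise(starts, durations), 2))
```

With equal frame durations the helper shows later frames becoming monotonically noisier, which is the kind of variation a noise-aware denoiser has to handle with a single model.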

Synthesizing Multi-Tracer PET Images for Alzheimer's Disease Patients using a 3D Unified Anatomy-aware Cyclic Adversarial Network

Jul 12, 2021

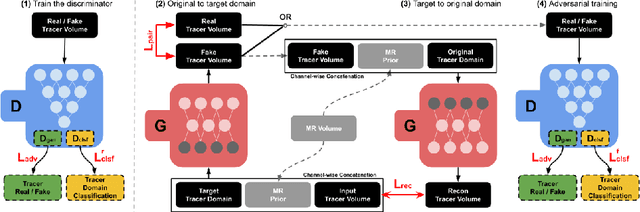

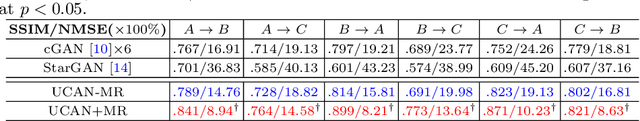

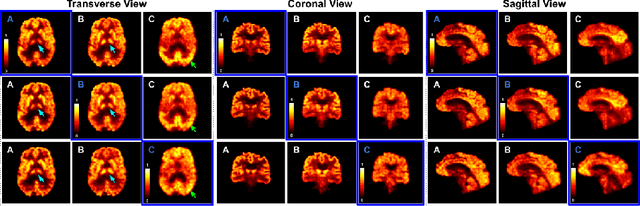

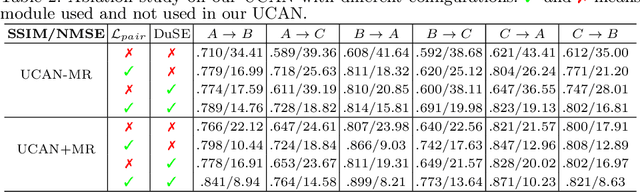

Abstract: Positron Emission Tomography (PET) is an important tool for studying Alzheimer's disease (AD). PET scans can be used as diagnostic tools and to provide molecular characterization of patients with cognitive disorders. However, multiple tracers are needed to measure glucose metabolism (18F-FDG), synaptic vesicle protein (11C-UCB-J), and $\beta$-amyloid (11C-PiB). Administering multiple tracers to a patient leads to a high radiation dose and cost. In addition, access to PET scans using new or less widely available tracers, which require sophisticated production methods and short-half-life isotopes, may be very limited. It is therefore desirable to develop an efficient multi-tracer PET synthesis model that can generate multi-tracer PET from single-tracer PET. Previous work on medical image synthesis focuses on one-to-one, fixed-domain translations and cannot simultaneously learn features from multiple tracer domains. Given three or more tracers, previous methods would also require a separate model for each translation direction, creating a heavy training burden. To tackle these issues, we propose a 3D unified anatomy-aware cyclic adversarial network (UCAN) that translates multi-tracer PET volumes with one unified generative model and incorporates MR images for anatomical information. Evaluations on a multi-tracer PET dataset demonstrate that UCAN can generate high-quality multi-tracer PET volumes, with NMSE below 15% for all PET tracers.
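Two quantities in this abstract can be made concrete with a short sketch: how the number of one-to-one translation models grows with the number of tracers, and the NMSE used to report synthesis quality. The sketch assumes one directional model per ordered tracer pair and the common definition NMSE = ||synthesized - target||^2 / ||target||^2; the paper may define NMSE differently (for example, within a brain mask), and the helper names are hypothetical.

```python
import numpy as np


def directional_models_needed(n_tracers):
    """One-to-one models for every ordered tracer pair (assumption).

    Cycle-consistent pair models that learn both directions at once would
    instead need n * (n - 1) / 2 models; a unified model needs just one.
    """
    return n_tracers * (n_tracers - 1)


def nmse(synth, target):
    """Normalised mean squared error: ||synth - target||^2 / ||target||^2."""
    synth = np.asarray(synth, dtype=float)
    target = np.asarray(target, dtype=float)
    return float(np.sum((synth - target) ** 2) / np.sum(target ** 2))


if __name__ == "__main__":
    # Three tracers (FDG, UCB-J, PiB): separate directional models vs one unified model.
    print(directional_models_needed(3), "separate models vs 1 unified model")

    # Toy volume standing in for a PET image; the synthetic copy is uniformly 10% too high.
    rng = np.random.default_rng(0)
    target = rng.random((16, 16, 16))
    print(f"NMSE = {100 * nmse(1.1 * target, target):.1f}%")
```

For three tracers this gives six directional models compared with a single unified generator, which is the training burden the abstract refers to.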

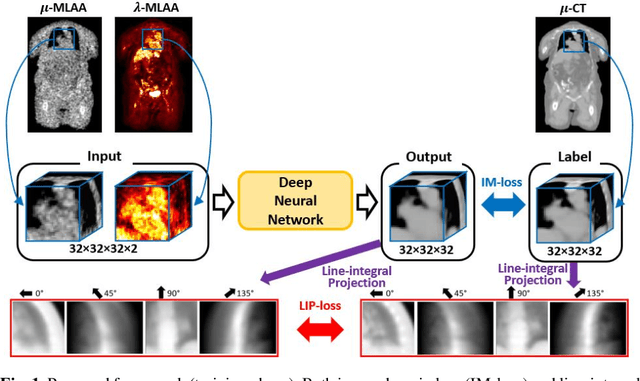

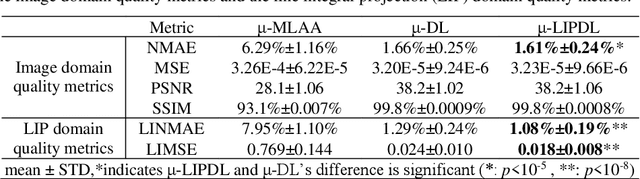

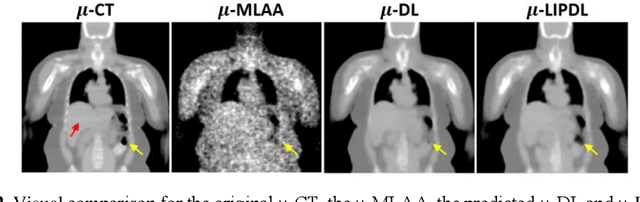

A Novel Loss Function Incorporating Imaging Acquisition Physics for PET Attenuation Map Generation using Deep Learning

Sep 03, 2019

Abstract: In PET/CT imaging, CT is used for PET attenuation correction (AC). Mismatch between CT and PET due to patient body motion results in AC artifacts. In addition, artifacts caused by metal, beam hardening, and count starvation in the CT itself also lead to inaccurate AC for PET. Maximum likelihood reconstruction of activity and attenuation (MLAA) was proposed to solve these issues by simultaneously reconstructing the tracer activity ($\lambda$-MLAA) and the attenuation map ($\mu$-MLAA) from the PET raw data only. However, $\mu$-MLAA suffers from high noise, and $\lambda$-MLAA suffers from large bias compared with the reconstruction using the CT-based attenuation map ($\mu$-CT). Recently, a convolutional neural network (CNN) was applied to predict the CT attenuation map ($\mu$-CNN) from $\lambda$-MLAA and $\mu$-MLAA, using an image-domain loss (IM-loss) function between $\mu$-CNN and the ground-truth $\mu$-CT. However, the IM-loss does not directly measure AC errors according to PET attenuation physics, in which AC uses the line-integral projection of the attenuation map ($\mu$) along the path of the two annihilation photons, not $\mu$ itself. Therefore, a network trained with the IM-loss may yield suboptimal performance in $\mu$ generation. Here, we propose a novel line-integral projection loss (LIP-loss) function that incorporates PET attenuation physics into $\mu$ generation. Eighty training and twenty testing datasets of whole-body 18F-FDG PET with paired ground-truth $\mu$-CT were used. Quantitative evaluations showed that the model trained with the additional LIP-loss significantly outperformed the model trained solely with the IM-loss function.
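The argument for the LIP-loss is that AC depends on line integrals of $\mu$, so two predicted maps with the same image-domain error can produce very different AC errors. The sketch below illustrates this on a 2D toy map. It is a minimal stand-in, not the paper's implementation: the forward projector is a crude parallel-beam nearest-neighbour rotation plus summation (a real system would use the scanner's geometry), both losses are assumed to be mean squared errors, and the attenuation correction factor is the standard $\exp(\int \mu \, dl)$. The names `line_integral_projections`, `lip_loss`, and `lip_weight` are hypothetical.

```python
import numpy as np


def line_integral_projections(mu, angles_deg, pixel_size_cm=1.0):
    """Crude parallel-beam line integrals of a 2D attenuation map (assumption).

    The map is resampled on a grid rotated by each angle (nearest neighbour)
    and summed along rows, giving one line integral per detector bin.
    """
    mu = np.asarray(mu, dtype=float)
    n_rows, n_cols = mu.shape
    cy, cx = (n_rows - 1) / 2.0, (n_cols - 1) / 2.0
    yy, xx = np.meshgrid(np.arange(n_rows) - cy, np.arange(n_cols) - cx, indexing="ij")
    projections = []
    for theta in np.deg2rad(np.asarray(angles_deg, dtype=float)):
        c, s = np.cos(theta), np.sin(theta)
        src_y = np.rint(c * yy - s * xx + cy).astype(int)
        src_x = np.rint(s * yy + c * xx + cx).astype(int)
        inside = (src_y >= 0) & (src_y < n_rows) & (src_x >= 0) & (src_x < n_cols)
        rotated = np.zeros_like(mu)
        rotated[inside] = mu[src_y[inside], src_x[inside]]
        projections.append(rotated.sum(axis=0) * pixel_size_cm)
    return np.stack(projections)  # shape: (n_angles, n_cols)


def attenuation_correction_factors(mu, angles_deg, pixel_size_cm=1.0):
    """AC factor per line of response: exp(line integral of mu)."""
    return np.exp(line_integral_projections(mu, angles_deg, pixel_size_cm))


def im_loss(mu_pred, mu_ct):
    """Image-domain MSE between predicted and CT-based attenuation maps."""
    return float(np.mean((np.asarray(mu_pred, float) - np.asarray(mu_ct, float)) ** 2))


def lip_loss(mu_pred, mu_ct, angles_deg):
    """MSE between line-integral projections of the two maps (assumed form)."""
    diff = line_integral_projections(mu_pred, angles_deg) - line_integral_projections(mu_ct, angles_deg)
    return float(np.mean(diff ** 2))


def total_loss(mu_pred, mu_ct, angles_deg, lip_weight=1.0):
    """IM-loss plus a weighted LIP-loss; the weight is a hypothetical hyperparameter."""
    return im_loss(mu_pred, mu_ct) + lip_weight * lip_loss(mu_pred, mu_ct, angles_deg)


if __name__ == "__main__":
    mu_ct = np.full((8, 8), 0.096)  # roughly water at 511 keV, in cm^-1
    mu_a = mu_ct.copy()
    mu_a[3, 3] += 0.01
    mu_a[4, 3] -= 0.01  # errors cancel along column 3
    mu_b = mu_ct.copy()
    mu_b[3, 3] += 0.01
    mu_b[4, 3] += 0.01  # errors add along column 3
    for name, mu in (("a", mu_a), ("b", mu_b)):
        print(name, im_loss(mu, mu_ct), lip_loss(mu, mu_ct, [0]))
```

In the toy example both predictions have the same IM-loss, but only prediction `b` changes the line integral along column 3, so only it is penalized by the LIP-loss at that angle. This mirrors the abstract's point that image-domain error alone does not capture AC error.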
