Ramchalam Kinattinkara Ramakrishnan

Edge-ASR: Towards Low-Bit Quantization of Automatic Speech Recognition Models

Jul 10, 2025

Abstract: Recent advances in Automatic Speech Recognition (ASR) have demonstrated remarkable accuracy and robustness in diverse audio applications, such as live transcription and voice command processing. However, deploying these models on resource-constrained edge devices (e.g., IoT devices, wearables) still presents substantial challenges due to strict limits on memory, compute, and power. Quantization, particularly Post-Training Quantization (PTQ), offers an effective way to reduce model size and inference cost without retraining. Despite its importance, the performance implications of various advanced quantization methods and bit-width configurations on ASR models remain unclear. In this work, we present a comprehensive benchmark of eight state-of-the-art (SOTA) PTQ methods applied to two leading edge-ASR model families, Whisper and Moonshine. We systematically evaluate model performance (i.e., accuracy, memory I/O, and bit operations) across seven diverse datasets from the open ASR leaderboard, analyzing the impact of quantization and various configurations on both weights and activations. Built on an extension of the LLM compression toolkit, our framework integrates edge-ASR models, diverse advanced quantization algorithms, a unified calibration and evaluation data pipeline, and detailed analysis tools. Our results characterize the trade-offs between efficiency and accuracy, demonstrating that even 3-bit quantization can succeed on high-capacity models when using advanced PTQ techniques. These findings provide valuable insights for optimizing ASR models on low-power, always-on edge devices.

OmniDraft: A Cross-vocabulary, Online Adaptive Drafter for On-device Speculative Decoding

Jul 03, 2025

Abstract: Speculative decoding generally requires a small, efficient draft model that is either pretrained or distilled offline for a particular target model series, for instance, Llama or Qwen models. However, in online deployment settings, there are two major challenges: 1) use of a target model that is incompatible with the draft model; 2) the expectation that latency improves with usage over time. In this work, we propose OmniDraft, a unified framework that enables a single draft model to operate with any target model and adapt dynamically to user data. We introduce an online n-gram cache with hybrid distillation fine-tuning to address the cross-vocabulary mismatch between draft and target models, and further improve decoding speed by leveraging adaptive drafting techniques. OmniDraft is particularly suitable for on-device LLM applications where model cost, efficiency, and user customization are the major points of contention. This further highlights the need to tackle the above challenges and motivates the "one drafter for all" paradigm. We showcase the proficiency of the OmniDraft framework by performing online learning on math reasoning, coding, and text generation tasks. Notably, OmniDraft enables a single Llama-68M model to pair with various target models, including Vicuna-7B, Qwen2-7B, and Llama3-8B, for speculative decoding, and additionally provides a speedup of up to 1.5-2x.
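
As a rough illustration of the draft-then-verify loop that speculative decoding relies on, the sketch below uses greedy decoding with toy next-token functions. It is not the OmniDraft method: it assumes the draft and target share one vocabulary, and it omits the n-gram cache, distillation, and adaptive drafting described in the abstract. The names `speculative_decode`, `target_next`, and `draft_next` are hypothetical.

```python
def speculative_decode(target_next, draft_next, prompt, max_new_tokens, k=4):
    """Greedy speculative decoding sketch (shared vocabulary assumed).

    target_next / draft_next map a token list to the next token.
    Returns the generated sequence and the number of verification rounds.
    """
    tokens = list(prompt)
    rounds = 0
    while len(tokens) - len(prompt) < max_new_tokens:
        # 1) The cheap draft model proposes k tokens autoregressively.
        proposal, ctx = [], list(tokens)
        for _ in range(k):
            t = draft_next(ctx)
            proposal.append(t)
            ctx.append(t)

        # 2) The target checks the proposal. A real system would score all
        #    k positions in one batched forward pass; here we loop.
        rounds += 1
        accepted, ctx = [], list(tokens)
        for t in proposal:
            expected = target_next(ctx)
            if expected != t:
                accepted.append(expected)  # replace first mismatch, then stop
                break
            accepted.append(t)
            ctx.append(t)
        else:
            # Every drafted token matched, so the target adds one more token.
            accepted.append(target_next(ctx))
        tokens.extend(accepted)
    return tokens[: len(prompt) + max_new_tokens], rounds
```

Because every accepted token is one the target itself would have chosen, the output matches plain greedy decoding with the target; the draft only affects how many verification rounds are needed.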

Stepping Forward on the Last Mile

Nov 06, 2024

Abstract: Continuously adapting pre-trained models to local data on resource-constrained edge devices is the *last mile* for model deployment. However, as models increase in size and depth, backpropagation requires a large amount of memory, which becomes prohibitive for edge devices. In addition, most existing low-power neural processing engines (e.g., NPUs, DSPs, MCUs) are designed as fixed-point inference accelerators, without training capabilities. Forward gradients, based solely on directional derivatives computed from two forward calls, have recently been used for model training, with substantial savings in computation and memory. However, the performance of quantized training with fixed-point forward gradients remains unclear. In this paper, we investigate the feasibility of on-device training using fixed-point forward gradients by conducting comprehensive experiments across a variety of deep learning benchmark tasks in both vision and audio domains. We propose a series of algorithm enhancements that further reduce the memory footprint and the accuracy gap compared to backpropagation. We further present an empirical study of how training with forward gradients navigates the loss landscape. Our results demonstrate that, on the last mile of model customization on edge devices, training with fixed-point forward gradients is a feasible and practical approach.
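
To make the "two forward calls" idea concrete, here is a minimal floating-point sketch of a forward-gradient estimate: the directional derivative along a random direction is approximated by a central difference, then multiplied by that direction. This is an illustration under stated assumptions, not the paper's fixed-point algorithm; `forward_gradient`, `quadratic_loss`, and `train` are hypothetical names, and the toy loss stands in for a real model.

```python
import random


def forward_gradient(loss, w, eps=1e-3, rng=random):
    """Estimate the gradient of `loss` at `w` using two forward calls."""
    # Random perturbation direction v ~ N(0, I).
    v = [rng.gauss(0.0, 1.0) for _ in w]
    w_plus = [wi + eps * vi for wi, vi in zip(w, v)]
    w_minus = [wi - eps * vi for wi, vi in zip(w, v)]
    # Central difference approximates the directional derivative along v.
    d = (loss(w_plus) - loss(w_minus)) / (2.0 * eps)
    # Scaling v by d gives an unbiased gradient estimate (up to O(eps^2)).
    return [d * vi for vi in v]


def quadratic_loss(w):
    # Toy objective with its minimum at w = (1, 1, ..., 1).
    return sum((wi - 1.0) ** 2 for wi in w)


def train(steps=2000, lr=0.01, seed=0):
    rng = random.Random(seed)
    w = [0.0, 0.0, 0.0]
    for _ in range(steps):
        g = forward_gradient(quadratic_loss, w, rng=rng)
        w = [wi - lr * gi for wi, gi in zip(w, g)]
    return w
```

No backward pass or stored activations are needed, which is the source of the memory savings the abstract mentions; the cost is a noisier gradient estimate than backpropagation.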

An Empirical Study of Low Precision Quantization for TinyML

Mar 10, 2022

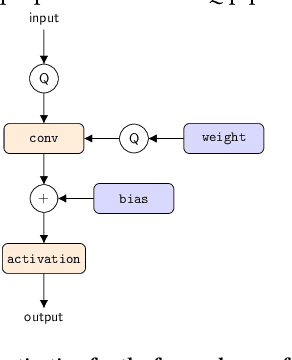

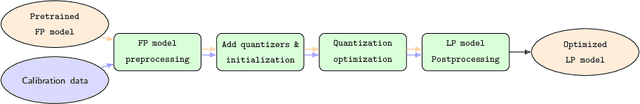

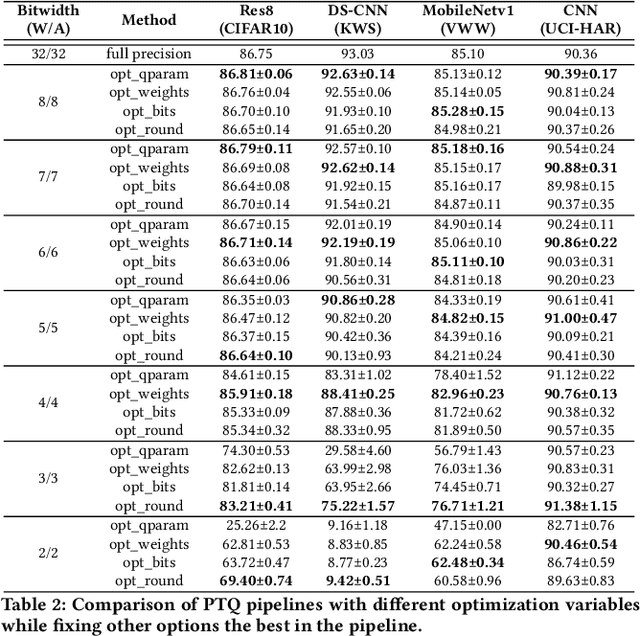

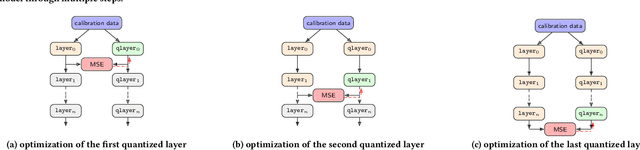

Abstract: Tiny machine learning (tinyML) has emerged during the past few years, aiming to deploy machine learning models to embedded AI processors with highly constrained memory and computation capacity. Low-precision quantization is an important model compression technique that can greatly reduce both the memory consumption and the computation cost of model inference. In this study, we focus on post-training quantization (PTQ) algorithms that quantize a model to low-bit (less than 8-bit) precision with only a small set of calibration data, and we benchmark them on different tinyML use cases. To achieve a fair comparison, we build a simulated quantization framework to investigate recent PTQ algorithms. Furthermore, we break down those algorithms into essential components and reassemble them into a generic PTQ pipeline. Through an ablation study on different alternatives for the components in the pipeline, we reveal key design choices when performing low-precision quantization. We hope this work can provide useful data points and shed light on future research in low-precision quantization.
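
Simulated (or "fake") quantization typically means rounding values onto a low-bit integer grid and mapping them straight back to floating point, so the quantization error can be measured without integer hardware. The sketch below shows the simplest variant, symmetric per-tensor uniform quantization with a max-abs scale. It is an assumption for illustration, not the framework or any specific PTQ algorithm from the study, and `fake_quantize` is a hypothetical name.

```python
def fake_quantize(values, num_bits=4):
    """Quantize then dequantize a list of floats (symmetric, per-tensor)."""
    qmax = 2 ** (num_bits - 1) - 1   # e.g. 7 for 4-bit signed integers
    qmin = -qmax - 1                 # e.g. -8
    max_abs = max(abs(v) for v in values)
    # Max-abs calibration: the largest magnitude maps to qmax.
    scale = max_abs / qmax if max_abs > 0 else 1.0
    out = []
    for v in values:
        q = round(v / scale)             # snap to the nearest integer level
        q = max(qmin, min(qmax, q))      # clamp to the representable range
        out.append(q * scale)            # dequantize back to float
    return out
```

With this scale choice no value is clipped, so each element's error is at most half a quantization step; more advanced PTQ methods trade some clipping for a finer step or adjust the rounding itself.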

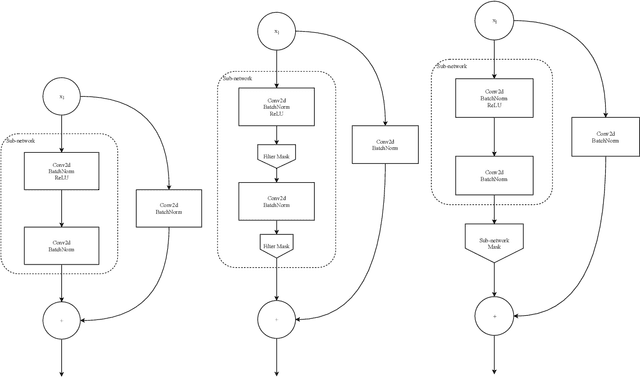

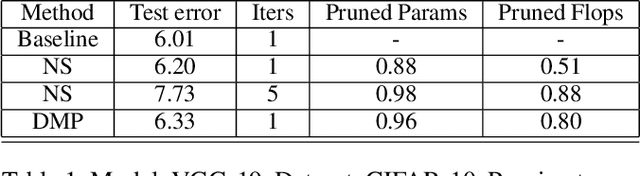

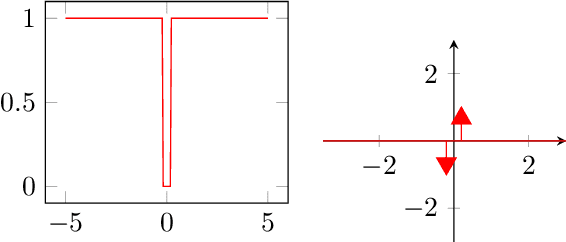

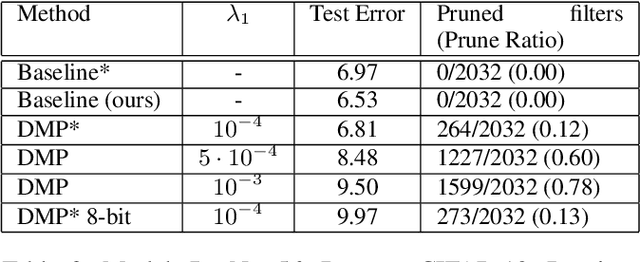

Differentiable Mask Pruning for Neural Networks

Sep 10, 2019

Abstract: Pruning of neural networks is one of the well-known and promising model simplification techniques. Most neural network models are large and require expensive computations to predict new instances. It is imperative to compress the network to deploy models on low-resource devices. Most compression techniques, especially pruning, have focused on computer vision and convolutional neural networks. Existing techniques are complex and require multi-stage optimization and fine-tuning to recover state-of-the-art accuracy. We introduce *Differentiable Mask Pruning* (DMP), which simplifies the network while training and can be used to induce sparsity on a weight, filter, node, or sub-network. Our method achieves competitive results on standard vision and NLP benchmarks and is easy to integrate into the deep learning toolbox. DMP bridges the gap between neural model compression and differentiable neural architecture search.

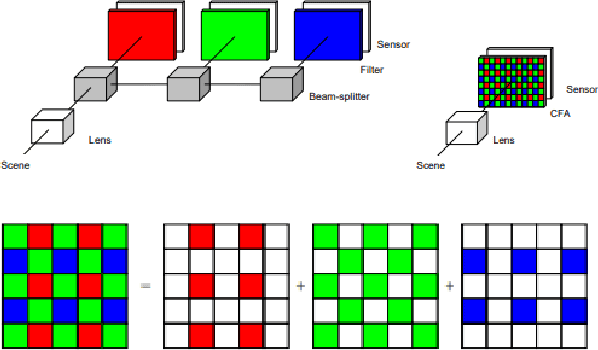

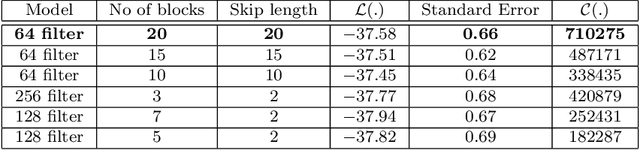

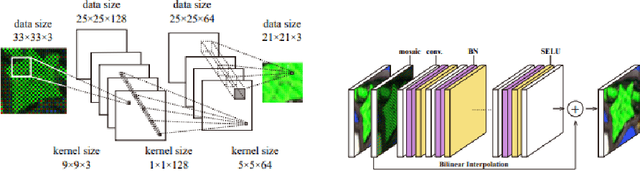

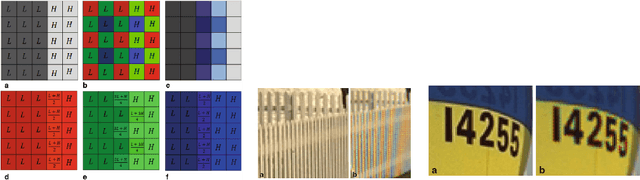

Deep Demosaicing for Edge Implementation

Apr 12, 2019

Abstract: Most digital cameras use sensors coated with a Color Filter Array (CFA) to capture channel components at every pixel location, resulting in a mosaic image that does not contain pixel values in all channels. Current research on reconstructing these missing channels, also known as demosaicing, introduces many artifacts, such as the zipper effect and false color. Many deep learning demosaicing techniques outperform classical techniques in reducing the impact of artifacts. However, most of these models tend to be over-parametrized. Consequently, implementing state-of-the-art deep learning-based demosaicing algorithms on low-end edge devices is a major challenge. We provide an exhaustive search of deep neural network architectures and obtain a Pareto front, with Color Peak Signal-to-Noise Ratio (CPSNR) as the performance criterion versus the number of parameters as the model complexity, that beats the state of the art. Architectures on the Pareto front can then be used to choose the best architecture for a variety of resource constraints. Simple architecture search methods such as exhaustive search and grid search require the loss function to satisfy certain conditions in order to converge to the optimum. We clarify these conditions in a brief theoretical study.
