Qingjian Lin

Online Target Speaker Voice Activity Detection for Speaker Diarization

Jul 13, 2022

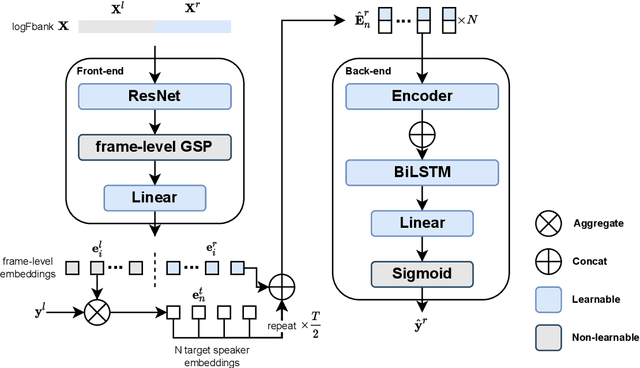

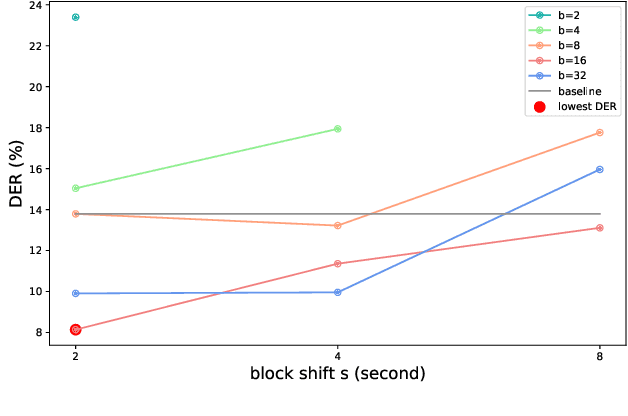

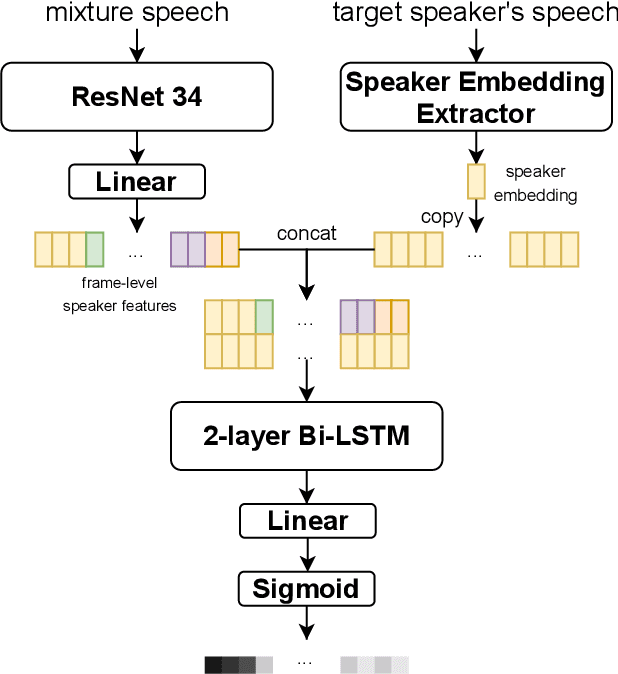

Abstract: This paper proposes an online target speaker voice activity detection system for speaker diarization tasks, which does not require a priori knowledge from the clustering-based diarization system to obtain the target speaker embeddings. First, we employ a ResNet-based front-end model to extract the frame-level speaker embeddings for each incoming block of a signal. Next, we predict the detection state of each speaker based on these frame-level speaker embeddings and the previously estimated target speaker embeddings. Then, the target speaker embeddings are updated by aggregating these frame-level speaker embeddings according to the predictions in the current block. We iteratively extract the results for each block and update the target speaker embeddings until reaching the end of the signal. Experimental results show that the proposed method outperforms the offline clustering-based diarization system on the AliMeeting dataset.
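The block-wise predict-then-update loop described above can be sketched as a toy example. This is not the paper's model. The learned TS-VAD detector is replaced by a hypothetical cosine-similarity threshold, and each target embedding is kept as a running mean of the frames predicted active for that speaker. A frame that matches no existing target opens a new speaker slot, which is a hypothetical bootstrap rule. The function name `online_tsvad` and the `threshold` value are illustrative assumptions.

```python
import numpy as np


def unit(x):
    """L2-normalize along the last axis."""
    return x / np.linalg.norm(x, axis=-1, keepdims=True)


def online_tsvad(blocks, threshold=0.6):
    """Toy online loop: predict per-speaker activity for each block, then update targets.

    blocks: iterable of (T, D) arrays of frame-level speaker embeddings.
    Returns one (T, S) boolean decision matrix per block (S may grow over time)
    and the final list of target speaker embeddings.
    """
    targets, counts, outputs = [], [], []
    for block in blocks:
        frames = unit(np.asarray(block, dtype=float))
        active = []
        for f in frames:
            # Predict: score the frame against every previously estimated target
            # (hypothetical cosine-threshold stand-in for the learned detector).
            scores = np.array([f @ unit(e) for e in targets])
            hits = np.flatnonzero(scores >= threshold).tolist()
            if not hits:
                # Hypothetical bootstrap: an unmatched frame opens a new speaker slot.
                targets.append(f.copy())
                counts.append(0)
                hits = [len(targets) - 1]
            active.append(hits)
        decisions = np.zeros((len(frames), len(targets)), dtype=bool)
        for t, hits in enumerate(active):
            decisions[t, hits] = True
        # Update: fold this block's active frames into each target's running mean.
        for k in range(len(targets)):
            sel = frames[decisions[:, k]]
            if len(sel):
                n = counts[k]
                targets[k] = (targets[k] * n + sel.sum(axis=0)) / (n + len(sel))
                counts[k] = n + len(sel)
        outputs.append(decisions)
    return outputs, targets
```

Each speaker is scored independently, so a frame may be marked active for several targets, loosely mirroring how TS-VAD handles overlapped speech. The fixed similarity threshold is the main simplification.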

Towards Lightweight Applications: Asymmetric Enroll-Verify Structure for Speaker Verification

Oct 09, 2021

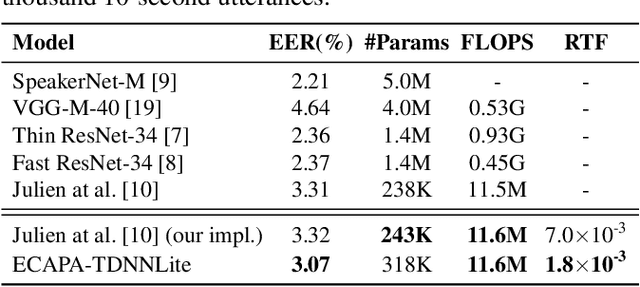

Abstract: With the development of deep learning, automatic speaker verification has made considerable progress over the past few years. However, designing a lightweight and robust system with limited computational resources remains a challenging problem. Traditionally, a speaker verification system is symmetrical, meaning that the same embedding extraction model is applied for both enrollment and verification at inference time. In this paper, we propose an asymmetric structure that uses the large-scale ECAPA-TDNN model for enrollment and the small-scale ECAPA-TDNNLite model for verification. As a symmetrical system, our proposed ECAPA-TDNNLite model achieves an EER of 3.07% on the VoxCeleb1 original test set with only 11.6M FLOPs. Moreover, the asymmetric structure further reduces the EER to 2.31% without increasing the computational cost of verification.
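The EER figures above mark the operating point at which the false-rejection and false-acceptance rates are equal. A minimal sketch of computing it from trial scores follows. In the asymmetric setup, each score would compare an enrollment embedding from ECAPA-TDNN with a test embedding from ECAPA-TDNNLite, for example by cosine similarity (an assumption here). The helper `compute_eer` is illustrative: it sweeps a threshold over the sorted scores, does not interpolate between operating points, and does not treat tied scores specially.

```python
import numpy as np


def compute_eer(scores, labels):
    """Equal error rate; scores: higher = more likely same speaker, labels: 1 target, 0 non-target."""
    scores = np.asarray(scores, dtype=float)
    labels = np.asarray(labels, dtype=int)
    labels = labels[np.argsort(-scores)]  # sweep the threshold from high to low
    n_target = labels.sum()
    n_nontarget = len(labels) - n_target
    # After accepting the top-i trials: true accepts and false accepts so far.
    true_acc = np.concatenate([[0], np.cumsum(labels)])
    false_acc = np.concatenate([[0], np.cumsum(1 - labels)])
    frr = 1.0 - true_acc / n_target   # targets still rejected
    far = false_acc / n_nontarget     # non-targets wrongly accepted
    i = np.argmin(np.abs(frr - far))  # operating point where the two rates meet
    return (frr[i] + far[i]) / 2
```

For example, `compute_eer([0.9, 0.8, 0.7, 0.3, 0.2, 0.1], [1, 1, 0, 1, 0, 0])` gives one third, because one target and one non-target trial sit on the wrong side of the best threshold.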

The DKU-DukeECE-Lenovo System for the Diarization Task of the 2021 VoxCeleb Speaker Recognition Challenge

Sep 07, 2021

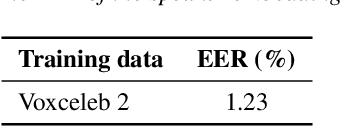

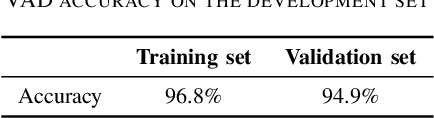

Abstract: This report describes the submission of the DKU-DukeECE-Lenovo team to track 4 of the VoxCeleb Speaker Recognition Challenge (VoxSRC) 2021. Our system includes a voice activity detection (VAD) model, a speaker embedding model, two clustering-based speaker diarization systems with different similarity measurements, two different overlapped speech detection (OSD) models, and a target-speaker voice activity detection (TS-VAD) model. Our final submission, consisting of 5 independent systems, achieves a DER of 5.07% on the challenge test set.
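The DER reported above is the sum of missed speech, false-alarm speech, and speaker confusion, divided by the total reference speech time. The sketch below computes a simplified frame-level version under several assumptions: at most one speaker per frame, no forgiveness collar, and a brute-force search for the best one-to-one mapping between hypothesis and reference speakers. Official scoring tools and the challenge's own collar and overlap settings differ, so this is illustrative only. `frame_der` is a hypothetical helper.

```python
import itertools

import numpy as np


def frame_der(ref, hyp):
    """Frame-level DER; ref/hyp hold one speaker id per frame, -1 for non-speech."""
    ref, hyp = np.asarray(ref), np.asarray(hyp)
    speech = ref >= 0
    total = speech.sum()
    miss = np.sum(speech & (hyp < 0))
    false_alarm = np.sum(~speech & (hyp >= 0))
    scored = speech & (hyp >= 0)  # frames where both sides claim a speaker
    ref_ids = sorted(set(ref[scored].tolist()))
    hyp_ids = sorted(set(hyp[scored].tolist()))
    # Brute-force the hyp->ref mapping that maximizes correctly attributed frames;
    # None leaves a hypothesis speaker unmapped. Only practical for a few speakers.
    candidates = ref_ids + [None] * len(hyp_ids)
    best = 0
    for perm in itertools.permutations(candidates, len(hyp_ids)):
        correct = sum(
            np.sum(scored & (hyp == h) & (ref == r))
            for h, r in zip(hyp_ids, perm)
            if r is not None
        )
        best = max(best, correct)
    confusion = scored.sum() - best
    return (miss + false_alarm + confusion) / total
```

With `ref = [0, 0, 0, 1, 1, -1, -1]` and `hyp = [5, 5, 7, 7, 7, 5, -1]`, the best mapping is 5→0 and 7→1. That leaves one confused frame and one false-alarm frame out of five speech frames, so the DER is 0.4.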

Sparsely Overlapped Speech Training in the Time Domain: Joint Learning of Target Speech Separation and Personal VAD Benefits

Jun 28, 2021

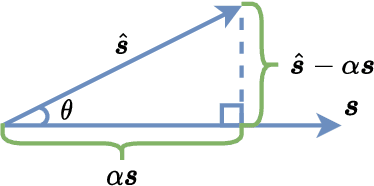

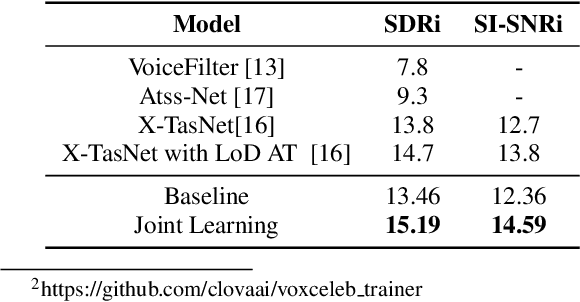

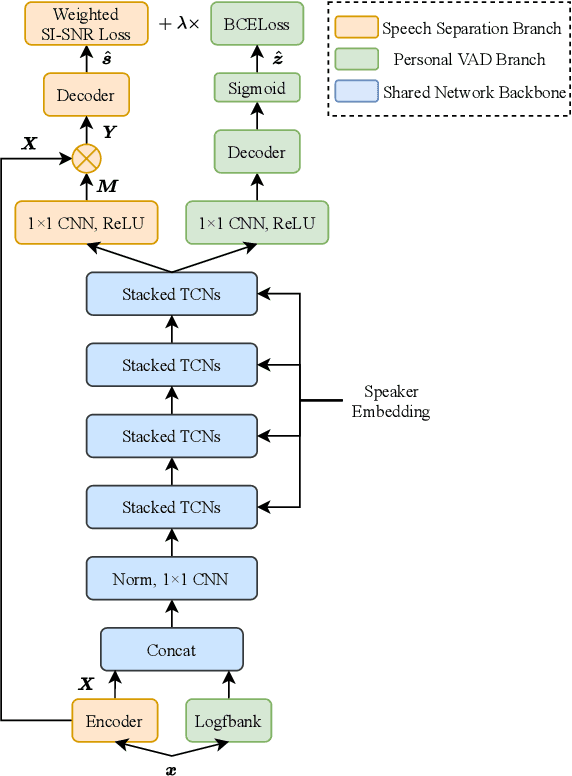

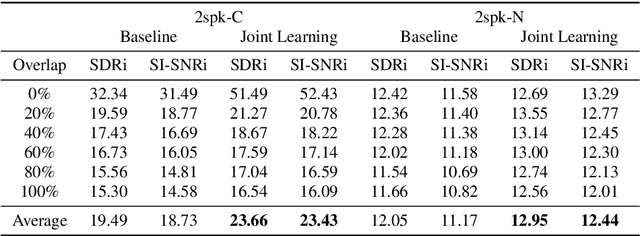

Abstract: Target speech separation is the process of filtering a certain speaker's voice out of speech mixtures according to additional speaker identity information. Recent works have made considerable improvements by processing signals directly in the time domain. Most of them train on fully overlapped speech mixtures. However, since most real-life conversations occur randomly and are sparsely overlapped, we argue that training on data with varying overlap ratios is beneficial. Doing so raises an unavoidable problem: the widely used SI-SNR loss is undefined for silent sources. This paper proposes the weighted SI-SNR loss, together with the joint learning of target speech separation and personal VAD. The weighted SI-SNR loss imposes a weight factor that is proportional to the target speaker's duration and returns zero when the target speaker is absent. Meanwhile, the personal VAD generates masks and sets non-target speech to silence. Experiments show that our proposed method outperforms the baseline by 1.73 dB in terms of SDR on fully overlapped speech, as well as by 4.17 dB and 0.9 dB on sparsely overlapped speech under clean and noisy conditions, respectively. In addition, at the cost of a slight performance degradation, our model can reduce inference time.
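The weighted SI-SNR loss can be sketched as follows. Only two properties come from the abstract: the weight grows with the target speaker's duration, and the loss is zero when the target is absent. The rest is assumed for illustration, and the paper's exact normalization may differ. The assumptions are the fraction of active samples as the weight, SI-SNR computed over the whole signal, and the names `si_snr` and `weighted_si_snr_loss`.

```python
import numpy as np


def si_snr(est, ref, eps=1e-8):
    """Scale-invariant SNR in dB between an estimate and a reference signal."""
    est = est - est.mean()
    ref = ref - ref.mean()
    # Project the estimate onto the reference to get the scaled target component.
    s_target = (est @ ref) / (ref @ ref + eps) * ref
    noise = est - s_target
    return 10 * np.log10((s_target @ s_target + eps) / (noise @ noise + eps))


def weighted_si_snr_loss(est, ref, target_active):
    """Negative SI-SNR scaled by the target's active fraction (assumed weighting).

    target_active: boolean mask marking samples where the target speaker talks.
    """
    weight = float(np.mean(target_active))
    if weight == 0.0:
        return 0.0  # target absent: SI-SNR is undefined, so contribute no loss
    return -weight * si_snr(np.asarray(est, float), np.asarray(ref, float))
```

The early return is the key design point. A silent reference makes the SI-SNR projection degenerate, so the loss is defined as zero there instead of letting a meaningless value reach the gradient.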

The DKU-Duke-Lenovo System Description for the Third DIHARD Speech Diarization Challenge

Feb 06, 2021

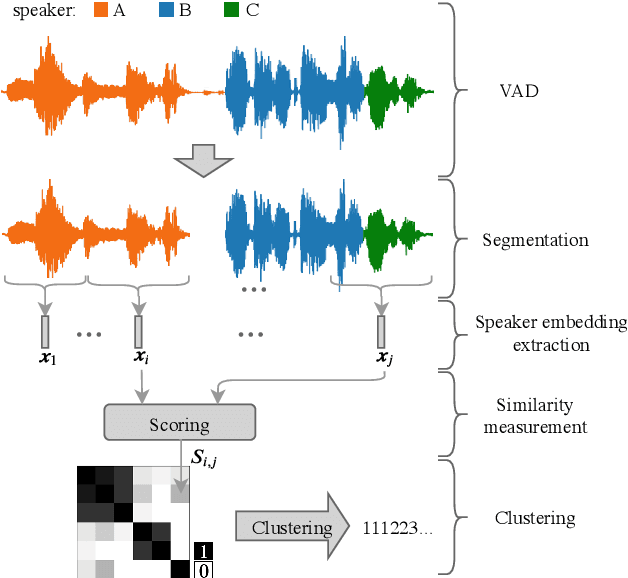

Abstract: In this paper, we present the system submitted by the DKU-Duke-Lenovo team to the third DIHARD Speech Diarization Challenge. Our system consists of several modules: voice activity detection (VAD), segmentation, speaker embedding extraction, attentive similarity scoring, and agglomerative hierarchical clustering. In addition, target speaker VAD (TSVAD) is applied to the phone call data to further improve performance. Our final submitted system achieves DERs of 15.43% on the core evaluation set and 13.39% on the full evaluation set for task 1, and DERs of 21.63% on the core evaluation set and 18.90% on the full evaluation set for task 2.
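The clustering stage can be illustrated with a minimal average-linkage agglomerative hierarchical clustering (AHC) over a precomputed segment similarity matrix. In the submitted system, the scores come from attentive similarity scoring. Here the matrix is simply given. The average-linkage criterion, the stopping `threshold`, and the name `ahc` are assumptions.

```python
import numpy as np


def ahc(sim, threshold):
    """Average-linkage AHC on a symmetric similarity matrix.

    Repeatedly merges the most similar pair of clusters and stops once the best
    average similarity falls below `threshold`. Returns one label per segment.
    """
    sim = np.asarray(sim, dtype=float)
    clusters = [[i] for i in range(len(sim))]
    while len(clusters) > 1:
        best, pair = -np.inf, None
        for a in range(len(clusters)):
            for b in range(a + 1, len(clusters)):
                # Average linkage: mean similarity over all cross-cluster pairs.
                s = sim[np.ix_(clusters[a], clusters[b])].mean()
                if s > best:
                    best, pair = s, (a, b)
        if best < threshold:
            break
        a, b = pair  # a < b, so deleting b keeps index a valid
        clusters[a] = clusters[a] + clusters[b]
        del clusters[b]
    labels = np.empty(len(sim), dtype=int)
    for k, members in enumerate(clusters):
        labels[members] = k
    return labels
```

The stopping threshold implicitly sets the number of speakers. Raising it yields more, smaller clusters.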

DIHARD II is Still Hard: Experimental Results and Discussions from the DKU-LENOVO Team

Feb 23, 2020

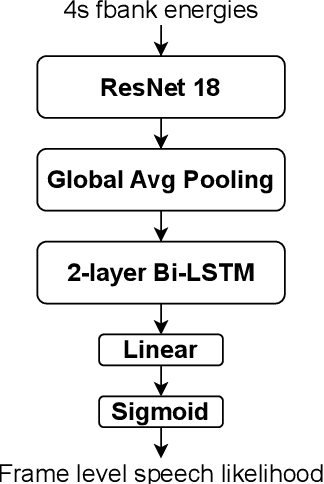

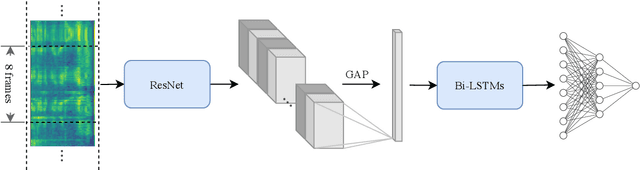

Abstract: In this paper, we present the system submitted by the DKU-LENOVO team to the second DIHARD Speech Diarization Challenge. Our diarization system includes multiple modules, namely voice activity detection (VAD), segmentation, speaker embedding extraction, similarity scoring, clustering, resegmentation, and overlap detection. For each module, we explore different techniques to enhance performance. Our final submission employs a ResNet-LSTM based VAD, a deep ResNet based speaker embedding, LSTM based similarity scoring, and spectral clustering. Variational Bayes (VB) diarization is applied in the resegmentation stage, and overlap detection also brings a slight improvement. Our proposed system achieves DERs of 18.84% in Track 1 and 27.90% in Track 2. Although our systems reduce the DERs by 27.5% and 31.7% relative to the official baselines, we believe that the diarization task is still very difficult.
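The relative reductions quoted above relate the system DER to the baseline DER as shown below. Rearranging gives the baseline values they imply. These are approximate, since the published percentages are rounded.

```latex
r = \frac{\mathrm{DER}_{\text{base}} - \mathrm{DER}_{\text{sys}}}{\mathrm{DER}_{\text{base}}}
\quad\Longrightarrow\quad
\mathrm{DER}_{\text{base}} = \frac{\mathrm{DER}_{\text{sys}}}{1 - r}

\text{Track 1: } \frac{18.84\%}{1 - 0.275} \approx 25.99\%, \qquad
\text{Track 2: } \frac{27.90\%}{1 - 0.317} \approx 40.85\%
```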

LSTM based Similarity Measurement with Spectral Clustering for Speaker Diarization

Jul 23, 2019

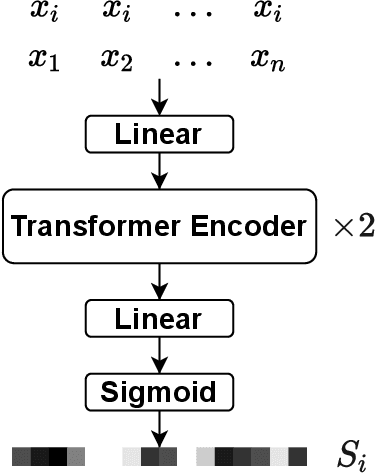

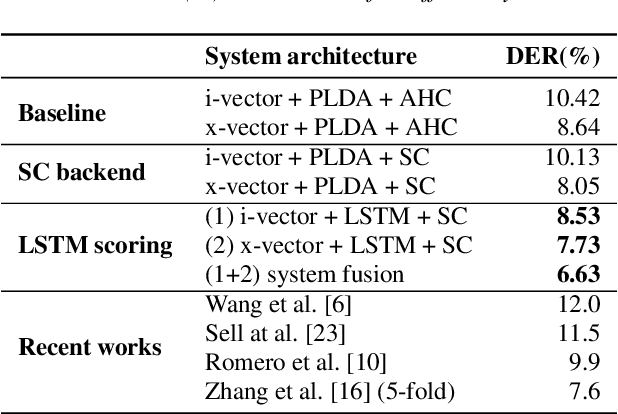

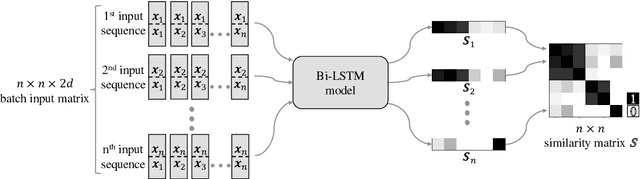

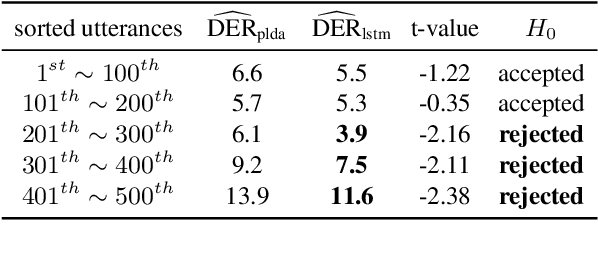

Abstract: More and more neural network approaches have achieved considerable improvements on submodules of speaker diarization systems, including speaker change detection and segment-wise speaker embedding extraction. Still, in the clustering stage, traditional algorithms such as probabilistic linear discriminant analysis (PLDA) are widely used to score the similarity between two speech segments. In this paper, we propose a supervised method that measures the similarity matrix between all segments of an audio recording with sequential bidirectional long short-term memory networks (Bi-LSTM). Spectral clustering is applied on top of the similarity matrix to further improve performance. Experimental results show that our system significantly outperforms state-of-the-art methods and achieves a diarization error rate of 6.63% on the NIST SRE 2000 CALLHOME database.
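Spectral clustering on a segment similarity matrix can be sketched as follows. The Bi-LSTM scoring is not reproduced, and the matrix is taken as given. The variant shown is a common textbook recipe and an assumption here, not necessarily the paper's exact procedure. It uses a symmetric normalized Laplacian, an eigengap estimate of the speaker count, row-normalized eigenvectors, and k-means with deterministic farthest-point initialization. `spectral_clustering` and `max_speakers` are illustrative names.

```python
import numpy as np


def spectral_clustering(sim, n_clusters=None, max_speakers=8, n_iter=50):
    """Cluster segments from a symmetric similarity matrix; returns one label per segment."""
    A = np.array(sim, dtype=float)  # copy so the caller's matrix is not modified
    np.fill_diagonal(A, 0.0)  # ignore self-similarity
    d_inv_sqrt = 1.0 / np.sqrt(np.maximum(A.sum(axis=1), 1e-10))
    # Symmetric normalized Laplacian: L = I - D^{-1/2} A D^{-1/2}
    lap = np.eye(len(A)) - d_inv_sqrt[:, None] * A * d_inv_sqrt[None, :]
    eigvals, eigvecs = np.linalg.eigh(lap)  # eigenvalues in ascending order
    if n_clusters is None:
        # Eigengap heuristic: largest jump between consecutive small eigenvalues.
        n_clusters = int(np.argmax(np.diff(eigvals[: max_speakers + 1]))) + 1
    X = eigvecs[:, :n_clusters]
    X = X / np.maximum(np.linalg.norm(X, axis=1, keepdims=True), 1e-10)
    # k-means with deterministic farthest-point initialization.
    centers = [X[0]]
    for _ in range(1, n_clusters):
        dist = np.min([np.sum((X - c) ** 2, axis=1) for c in centers], axis=0)
        centers.append(X[np.argmax(dist)])
    centers = np.array(centers)
    for _ in range(n_iter):
        labels = np.argmin(((X[:, None, :] - centers[None]) ** 2).sum(-1), axis=1)
        new = np.array([X[labels == k].mean(axis=0) if np.any(labels == k) else centers[k]
                        for k in range(n_clusters)])
        if np.allclose(new, centers):
            break
        centers = new
    return labels
```

Estimating the speaker count from the eigengap lets the same routine handle recordings with different numbers of speakers without a hand-tuned stopping threshold. Passing `n_clusters` explicitly overrides it.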
