Philipp Seeböck

Department of Biomedical Imaging and Image-guided Therapy, Computational Imaging Research Lab, Medical University Vienna, Austria, Christian Doppler Laboratory for Ophthalmic Image Analysis, Department of Ophthalmology and Optometry, Medical University Vienna, Austria

AREPAS: Anomaly Detection in Fine-Grained Anatomy with Reconstruction-Based Semantic Patch-Scoring

Sep 16, 2025

Abstract:Early detection of newly emerging diseases, lesion severity assessment, differentiation of medical conditions and automated screening are examples for the wide applicability and importance of anomaly detection (AD) and unsupervised segmentation in medicine. Normal fine-grained tissue variability such as present in pulmonary anatomy is a major challenge for existing generative AD methods. Here, we propose a novel generative AD approach addressing this issue. It consists of an image-to-image translation for anomaly-free reconstruction and a subsequent patch similarity scoring between observed and generated image-pairs for precise anomaly localization. We validate the new method on chest computed tomography (CT) scans for the detection and segmentation of infectious disease lesions. To assess generalizability, we evaluate the method on an ischemic stroke lesion segmentation task in T1-weighted brain MRI. Results show improved pixel-level anomaly segmentation in both chest CTs and brain MRIs, with relative DICE score improvements of +1.9% and +4.4%, respectively, compared to other state-of-the-art reconstruction-based methods.

Detection of Emerging Infectious Diseases in Lung CT based on Spatial Anomaly Patterns

Oct 25, 2024Abstract:Fast detection of emerging diseases is important for containing their spread and treating patients effectively. Local anomalies are relevant, but often novel diseases involve familiar disease patterns in new spatial distributions. Therefore, established local anomaly detection approaches may fail to identify them as new. Here, we present a novel approach to detect the emergence of new disease phenotypes exhibiting distinct patterns of the spatial distribution of lesions. We first identify anomalies in lung CT data, and then compare their distribution in a continually acquired new patient cohorts with historic patient population observed over a long prior period. We evaluate how accumulated evidence collected in the stream of patients is able to detect the onset of an emerging disease. In a gram-matrix based representation derived from the intermediate layers of a three-dimensional convolutional neural network, newly emerging clusters indicate emerging diseases.

Rigid Single-Slice-in-Volume registration via rotation-equivariant 2D/3D feature matching

Oct 24, 2024Abstract:2D to 3D registration is essential in tasks such as diagnosis, surgical navigation, environmental understanding, navigation in robotics, autonomous systems, or augmented reality. In medical imaging, the aim is often to place a 2D image in a 3D volumetric observation to w. Current approaches for rigid single slice in volume registration are limited by requirements such as pose initialization, stacks of adjacent slices, or reliable anatomical landmarks. Here, we propose a self-supervised 2D/3D registration approach to match a single 2D slice to the corresponding 3D volume. The method works in data without anatomical priors such as images of tumors. It addresses the dimensionality disparity and establishes correspondences between 2D in-plane and 3D out-of-plane rotation-equivariant features by using group equivariant CNNs. These rotation-equivariant features are extracted from the 2D query slice and aligned with their 3D counterparts. Results demonstrate the robustness of the proposed slice-in-volume registration on the NSCLC-Radiomics CT and KIRBY21 MRI datasets, attaining an absolute median angle error of less than 2 degrees and a mean-matching feature accuracy of 89% at a tolerance of 3 pixels.

Exploiting Epistemic Uncertainty of Anatomy Segmentation for Anomaly Detection in Retinal OCT

May 29, 2019

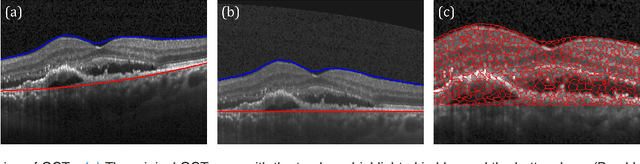

Abstract:Diagnosis and treatment guidance are aided by detecting relevant biomarkers in medical images. Although supervised deep learning can perform accurate segmentation of pathological areas, it is limited by requiring a-priori definitions of these regions, large-scale annotations, and a representative patient cohort in the training set. In contrast, anomaly detection is not limited to specific definitions of pathologies and allows for training on healthy samples without annotation. Anomalous regions can then serve as candidates for biomarker discovery. Knowledge about normal anatomical structure brings implicit information for detecting anomalies. We propose to take advantage of this property using bayesian deep learning, based on the assumption that epistemic uncertainties will correlate with anatomical deviations from a normal training set. A Bayesian U-Net is trained on a well-defined healthy environment using weak labels of healthy anatomy produced by existing methods. At test time, we capture epistemic uncertainty estimates of our model using Monte Carlo dropout. A novel post-processing technique is then applied to exploit these estimates and transfer their layered appearance to smooth blob-shaped segmentations of the anomalies. We experimentally validated this approach in retinal optical coherence tomography (OCT) images, using weak labels of retinal layers. Our method achieved a Dice index of 0.789 in an independent anomaly test set of age-related macular degeneration (AMD) cases. The resulting segmentations allowed very high accuracy for separating healthy and diseased cases with late wet AMD, dry geographic atrophy (GA), diabetic macular edema (DME) and retinal vein occlusion (RVO). Finally, we qualitatively observed that our approach can also detect other deviations in normal scans such as cut edge artifacts.

Using CycleGANs for effectively reducing image variability across OCT devices and improving retinal fluid segmentation

Jan 25, 2019

Abstract:Optical coherence tomography (OCT) has become the most important imaging modality in ophthalmology. A substantial amount of research has recently been devoted to the development of machine learning (ML) models for the identification and quantification of pathological features in OCT images. Among the several sources of variability the ML models have to deal with, a major factor is the acquisition device, which can limit the ML model's generalizability. In this paper, we propose to reduce the image variability across different OCT devices (Spectralis and Cirrus) by using CycleGAN, an unsupervised unpaired image transformation algorithm. The usefulness of this approach is evaluated in the setting of retinal fluid segmentation, namely intraretinal cystoid fluid (IRC) and subretinal fluid (SRF). First, we train a segmentation model on images acquired with a source OCT device. Then we evaluate the model on (1) source, (2) target and (3) transformed versions of the target OCT images. The presented transformation strategy shows an F1 score of 0.4 (0.51) for IRC (SRF) segmentations. Compared with traditional transformation approaches, this means an F1 score gain of 0.2 (0.12).

U2-Net: A Bayesian U-Net model with epistemic uncertainty feedback for photoreceptor layer segmentation in pathological OCT scans

Jan 23, 2019

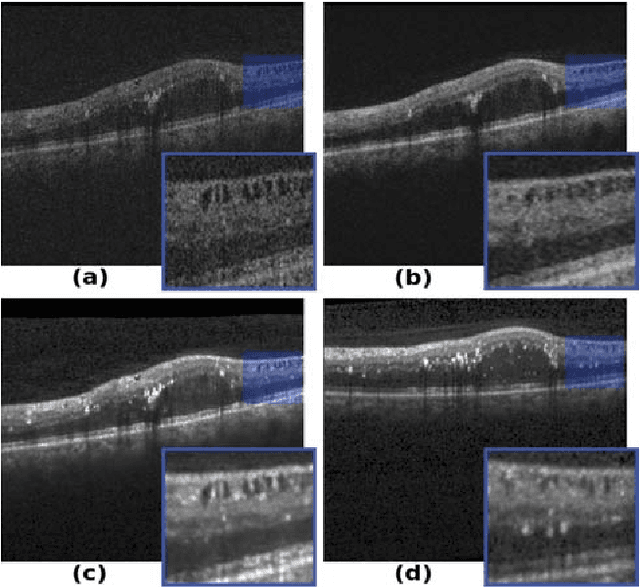

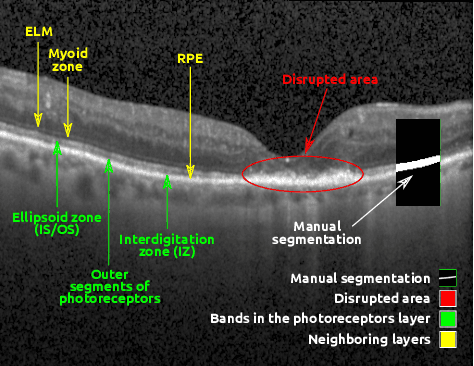

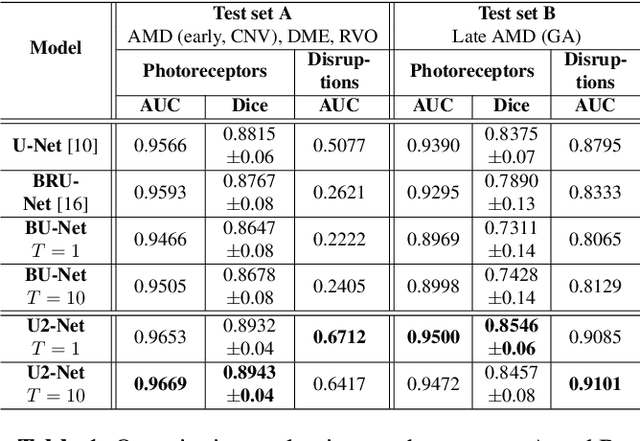

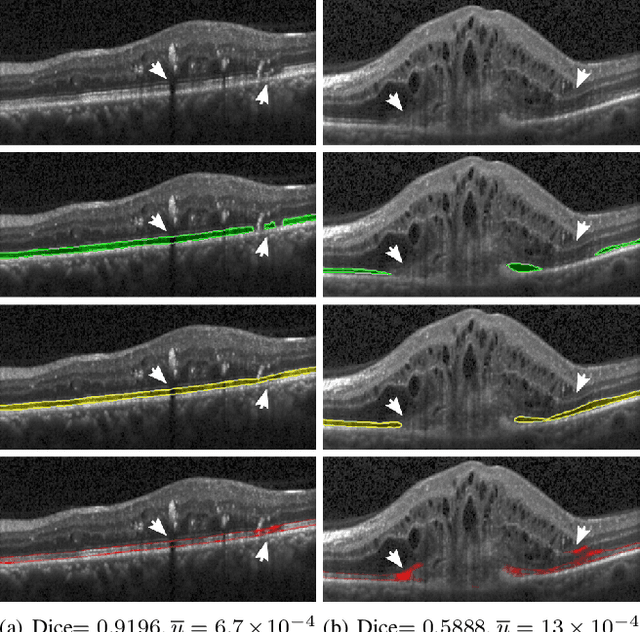

Abstract:In this paper, we introduce a Bayesian deep learning based model for segmenting the photoreceptor layer in pathological OCT scans. Our architecture provides accurate segmentations of the photoreceptor layer and produces pixel-wise epistemic uncertainty maps that highlight potential areas of pathologies or segmentation errors. We empirically evaluated this approach in two sets of pathological OCT scans of patients with age-related macular degeneration, retinal vein oclussion and diabetic macular edema, improving the performance of the baseline U-Net both in terms of the Dice index and the area under the precision/recall curve. We also observed that the uncertainty estimates were inversely correlated with the model performance, underlying its utility for highlighting areas where manual inspection/correction might be needed.

* Accepted for publication at IEEE International Symposium on Biomedical Imaging (ISBI) 2019

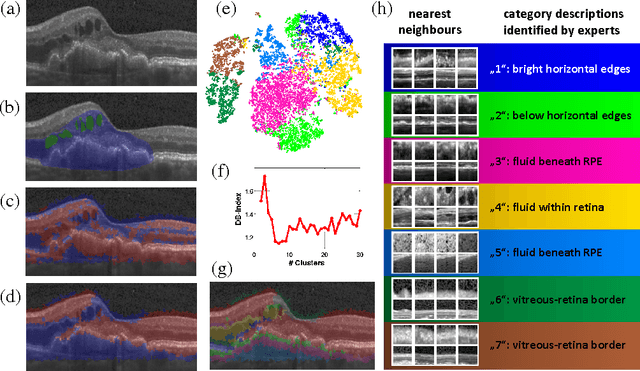

Unsupervised Identification of Disease Marker Candidates in Retinal OCT Imaging Data

Oct 31, 2018

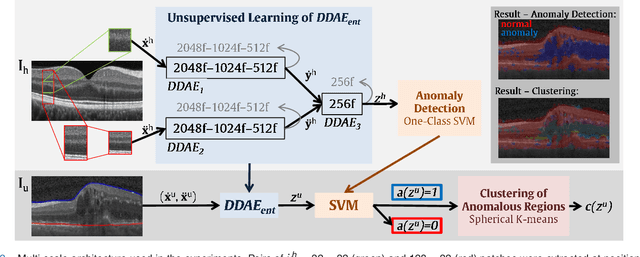

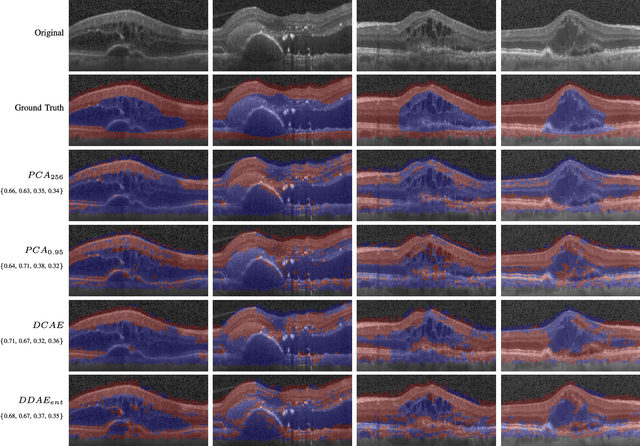

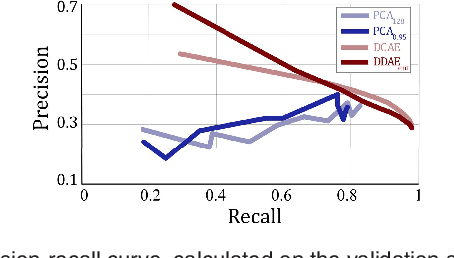

Abstract:The identification and quantification of markers in medical images is critical for diagnosis, prognosis, and disease management. Supervised machine learning enables the detection and exploitation of findings that are known a priori after annotation of training examples by experts. However, supervision does not scale well, due to the amount of necessary training examples, and the limitation of the marker vocabulary to known entities. In this proof-of-concept study, we propose unsupervised identification of anomalies as candidates for markers in retinal Optical Coherence Tomography (OCT) imaging data without a constraint to a priori definitions. We identify and categorize marker candidates occurring frequently in the data, and demonstrate that these markers show predictive value in the task of detecting disease. A careful qualitative analysis of the identified data driven markers reveals how their quantifiable occurrence aligns with our current understanding of disease course, in early- and late age-related macular degeneration (AMD) patients. A multi-scale deep denoising autoencoder is trained on healthy images, and a one-class support vector machine identifies anomalies in new data. Clustering in the anomalies identifies stable categories. Using these markers to classify healthy-, early AMD- and late AMD cases yields an accuracy of 81.40%. In a second binary classification experiment on a publicly available data set (healthy vs. intermediate AMD) the model achieves an area under the ROC curve of 0.944.

Fully Automated Segmentation of Hyperreflective Foci in Optical Coherence Tomography Images

May 08, 2018

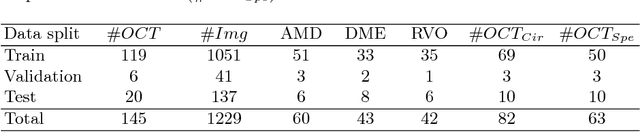

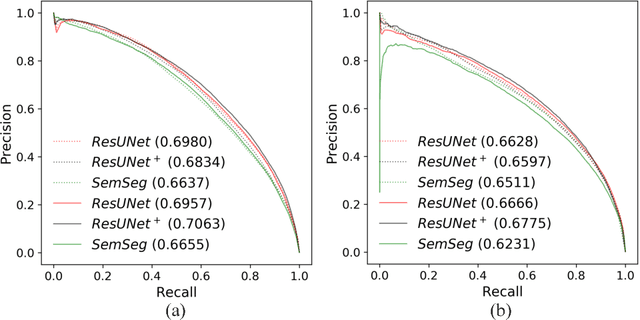

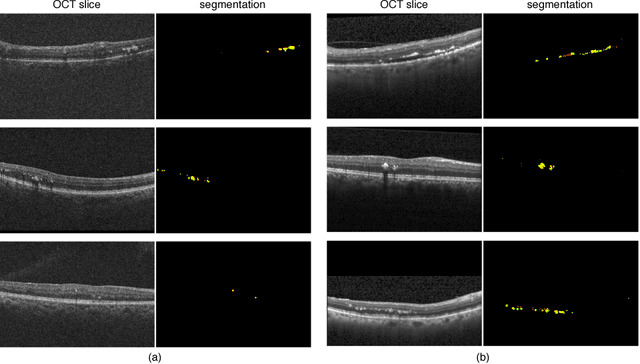

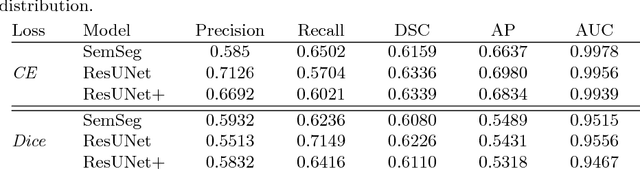

Abstract:The automatic detection of disease related entities in retinal imaging data is relevant for disease- and treatment monitoring. It enables the quantitative assessment of large amounts of data and the corresponding study of disease characteristics. The presence of hyperreflective foci (HRF) is related to disease progression in various retinal diseases. Manual identification of HRF in spectral-domain optical coherence tomography (SD-OCT) scans is error-prone and tedious. We present a fully automated machine learning approach for segmenting HRF in SD-OCT scans. Evaluation on annotated OCT images of the retina demonstrates that a residual U-Net allows to segment HRF with high accuracy. As our dataset comprised data from different retinal diseases including age-related macular degeneration, diabetic macular edema and retinal vein occlusion, the algorithm can safely be applied in all of them though different pathophysiological origins are known.

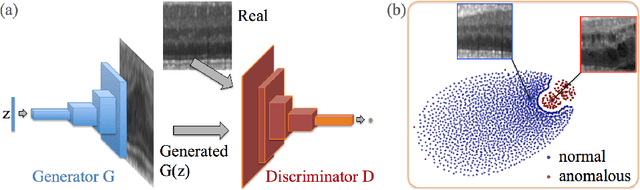

Unsupervised Anomaly Detection with Generative Adversarial Networks to Guide Marker Discovery

Mar 17, 2017

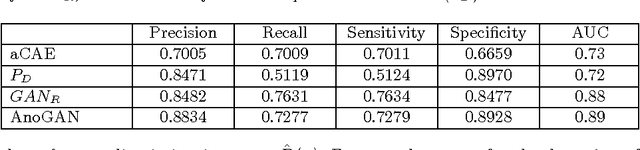

Abstract:Obtaining models that capture imaging markers relevant for disease progression and treatment monitoring is challenging. Models are typically based on large amounts of data with annotated examples of known markers aiming at automating detection. High annotation effort and the limitation to a vocabulary of known markers limit the power of such approaches. Here, we perform unsupervised learning to identify anomalies in imaging data as candidates for markers. We propose AnoGAN, a deep convolutional generative adversarial network to learn a manifold of normal anatomical variability, accompanying a novel anomaly scoring scheme based on the mapping from image space to a latent space. Applied to new data, the model labels anomalies, and scores image patches indicating their fit into the learned distribution. Results on optical coherence tomography images of the retina demonstrate that the approach correctly identifies anomalous images, such as images containing retinal fluid or hyperreflective foci.

Identifying and Categorizing Anomalies in Retinal Imaging Data

Dec 02, 2016

Abstract:The identification and quantification of markers in medical images is critical for diagnosis, prognosis and management of patients in clinical practice. Supervised- or weakly supervised training enables the detection of findings that are known a priori. It does not scale well, and a priori definition limits the vocabulary of markers to known entities reducing the accuracy of diagnosis and prognosis. Here, we propose the identification of anomalies in large-scale medical imaging data using healthy examples as a reference. We detect and categorize candidates for anomaly findings untypical for the observed data. A deep convolutional autoencoder is trained on healthy retinal images. The learned model generates a new feature representation, and the distribution of healthy retinal patches is estimated by a one-class support vector machine. Results demonstrate that we can identify pathologic regions in images without using expert annotations. A subsequent clustering categorizes findings into clinically meaningful classes. In addition the learned features outperform standard embedding approaches in a classification task.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge