Pat Pataranutaporn

Cassandra

Human-AI Interaction Alignment: Designing, Evaluating, and Evolving Value-Centered AI For Reciprocal Human-AI Futures

Dec 25, 2025Abstract:The rapid integration of generative AI into everyday life underscores the need to move beyond unidirectional alignment models that only adapt AI to human values. This workshop focuses on bidirectional human-AI alignment, a dynamic, reciprocal process where humans and AI co-adapt through interaction, evaluation, and value-centered design. Building on our past CHI 2025 BiAlign SIG and ICLR 2025 Workshop, this workshop will bring together interdisciplinary researchers from HCI, AI, social sciences and more domains to advance value-centered AI and reciprocal human-AI collaboration. We focus on embedding human and societal values into alignment research, emphasizing not only steering AI toward human values but also enabling humans to critically engage with and evolve alongside AI systems. Through talks, interdisciplinary discussions, and collaborative activities, participants will explore methods for interactive alignment, frameworks for societal impact evaluation, and strategies for alignment in dynamic contexts. This workshop aims to bridge the disciplines' gaps and establish a shared agenda for responsible, reciprocal human-AI futures.

Investigating Affective Use and Emotional Well-being on ChatGPT

Apr 04, 2025Abstract:As AI chatbots see increased adoption and integration into everyday life, questions have been raised about the potential impact of human-like or anthropomorphic AI on users. In this work, we investigate the extent to which interactions with ChatGPT (with a focus on Advanced Voice Mode) may impact users' emotional well-being, behaviors and experiences through two parallel studies. To study the affective use of AI chatbots, we perform large-scale automated analysis of ChatGPT platform usage in a privacy-preserving manner, analyzing over 3 million conversations for affective cues and surveying over 4,000 users on their perceptions of ChatGPT. To investigate whether there is a relationship between model usage and emotional well-being, we conduct an Institutional Review Board (IRB)-approved randomized controlled trial (RCT) on close to 1,000 participants over 28 days, examining changes in their emotional well-being as they interact with ChatGPT under different experimental settings. In both on-platform data analysis and the RCT, we observe that very high usage correlates with increased self-reported indicators of dependence. From our RCT, we find that the impact of voice-based interactions on emotional well-being to be highly nuanced, and influenced by factors such as the user's initial emotional state and total usage duration. Overall, our analysis reveals that a small number of users are responsible for a disproportionate share of the most affective cues.

AI persuading AI vs AI persuading Humans: LLMs' Differential Effectiveness in Promoting Pro-Environmental Behavior

Mar 03, 2025

Abstract:Pro-environmental behavior (PEB) is vital to combat climate change, yet turning awareness into intention and action remains elusive. We explore large language models (LLMs) as tools to promote PEB, comparing their impact across 3,200 participants: real humans (n=1,200), simulated humans based on actual participant data (n=1,200), and fully synthetic personas (n=1,200). All three participant groups faced personalized or standard chatbots, or static statements, employing four persuasion strategies (moral foundations, future self-continuity, action orientation, or "freestyle" chosen by the LLM). Results reveal a "synthetic persuasion paradox": synthetic and simulated agents significantly affect their post-intervention PEB stance, while human responses barely shift. Simulated participants better approximate human trends but still overestimate effects. This disconnect underscores LLM's potential for pre-evaluating PEB interventions but warns of its limits in predicting real-world behavior. We call for refined synthetic modeling and sustained and extended human trials to align conversational AI's promise with tangible sustainability outcomes.

OceanChat: The Effect of Virtual Conversational AI Agents on Sustainable Attitude and Behavior Change

Feb 05, 2025

Abstract:Marine ecosystems face unprecedented threats from climate change and plastic pollution, yet traditional environmental education often struggles to translate awareness into sustained behavioral change. This paper presents OceanChat, an interactive system leveraging large language models to create conversational AI agents represented as animated marine creatures -- specifically a beluga whale, a jellyfish, and a seahorse -- designed to promote environmental behavior (PEB) and foster awareness through personalized dialogue. Through a between-subjects experiment (N=900), we compared three conditions: (1) Static Scientific Information, providing conventional environmental education through text and images; (2) Static Character Narrative, featuring first-person storytelling from 3D-rendered marine creatures; and (3) Conversational Character Narrative, enabling real-time dialogue with AI-powered marine characters. Our analysis revealed that the Conversational Character Narrative condition significantly increased behavioral intentions and sustainable choice preferences compared to static approaches. The beluga whale character demonstrated consistently stronger emotional engagement across multiple measures, including perceived anthropomorphism and empathy. However, impacts on deeper measures like climate policy support and psychological distance were limited, highlighting the complexity of shifting entrenched beliefs. Our work extends research on sustainability interfaces facilitating PEB and offers design principles for creating emotionally resonant, context-aware AI characters. By balancing anthropomorphism with species authenticity, OceanChat demonstrates how interactive narratives can bridge the gap between environmental knowledge and real-world behavior change.

Synthetic Human Memories: AI-Edited Images and Videos Can Implant False Memories and Distort Recollection

Sep 13, 2024

Abstract:AI is increasingly used to enhance images and videos, both intentionally and unintentionally. As AI editing tools become more integrated into smartphones, users can modify or animate photos into realistic videos. This study examines the impact of AI-altered visuals on false memories--recollections of events that didn't occur or deviate from reality. In a pre-registered study, 200 participants were divided into four conditions of 50 each. Participants viewed original images, completed a filler task, then saw stimuli corresponding to their assigned condition: unedited images, AI-edited images, AI-generated videos, or AI-generated videos of AI-edited images. AI-edited visuals significantly increased false recollections, with AI-generated videos of AI-edited images having the strongest effect (2.05x compared to control). Confidence in false memories was also highest for this condition (1.19x compared to control). We discuss potential applications in HCI, such as therapeutic memory reframing, and challenges in ethical, legal, political, and societal domains.

Super-intelligence or Superstition? Exploring Psychological Factors Underlying Unwarranted Belief in AI Predictions

Aug 13, 2024

Abstract:This study investigates psychological factors influencing belief in AI predictions about personal behavior, comparing it to belief in astrology and personality-based predictions. Through an experiment with 238 participants, we examined how cognitive style, paranormal beliefs, AI attitudes, personality traits, and other factors affect perceived validity, reliability, usefulness, and personalization of predictions from different sources. Our findings reveal that belief in AI predictions is positively correlated with belief in predictions based on astrology and personality psychology. Notably, paranormal beliefs and positive AI attitudes significantly increased perceived validity, reliability, usefulness, and personalization of AI predictions. Conscientiousness was negatively correlated with belief in predictions across all sources, and interest in the prediction topic increased believability across predictions. Surprisingly, cognitive style did not significantly influence belief in predictions. These results highlight the "rational superstition" phenomenon in AI, where belief is driven more by mental heuristics and intuition than critical evaluation. We discuss implications for designing AI systems and communication strategies that foster appropriate trust and skepticism. This research contributes to our understanding of the psychology of human-AI interaction and offers insights for the design and deployment of AI systems.

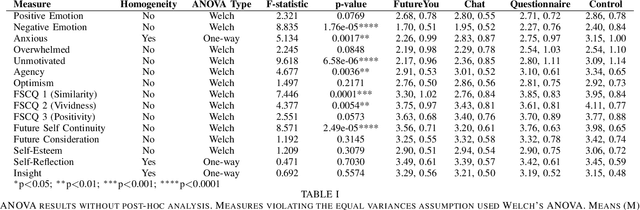

Conversational AI Powered by Large Language Models Amplifies False Memories in Witness Interviews

Aug 08, 2024Abstract:This study examines the impact of AI on human false memories -- recollections of events that did not occur or deviate from actual occurrences. It explores false memory induction through suggestive questioning in Human-AI interactions, simulating crime witness interviews. Four conditions were tested: control, survey-based, pre-scripted chatbot, and generative chatbot using a large language model (LLM). Participants (N=200) watched a crime video, then interacted with their assigned AI interviewer or survey, answering questions including five misleading ones. False memories were assessed immediately and after one week. Results show the generative chatbot condition significantly increased false memory formation, inducing over 3 times more immediate false memories than the control and 1.7 times more than the survey method. 36.4% of users' responses to the generative chatbot were misled through the interaction. After one week, the number of false memories induced by generative chatbots remained constant. However, confidence in these false memories remained higher than the control after one week. Moderating factors were explored: users who were less familiar with chatbots but more familiar with AI technology, and more interested in crime investigations, were more susceptible to false memories. These findings highlight the potential risks of using advanced AI in sensitive contexts, like police interviews, emphasizing the need for ethical considerations.

Deceptive AI systems that give explanations are more convincing than honest AI systems and can amplify belief in misinformation

Jul 31, 2024Abstract:Advanced Artificial Intelligence (AI) systems, specifically large language models (LLMs), have the capability to generate not just misinformation, but also deceptive explanations that can justify and propagate false information and erode trust in the truth. We examined the impact of deceptive AI generated explanations on individuals' beliefs in a pre-registered online experiment with 23,840 observations from 1,192 participants. We found that in addition to being more persuasive than accurate and honest explanations, AI-generated deceptive explanations can significantly amplify belief in false news headlines and undermine true ones as compared to AI systems that simply classify the headline incorrectly as being true/false. Moreover, our results show that personal factors such as cognitive reflection and trust in AI do not necessarily protect individuals from these effects caused by deceptive AI generated explanations. Instead, our results show that the logical validity of AI generated deceptive explanations, that is whether the explanation has a causal effect on the truthfulness of the AI's classification, plays a critical role in countering their persuasiveness - with logically invalid explanations being deemed less credible. This underscores the importance of teaching logical reasoning and critical thinking skills to identify logically invalid arguments, fostering greater resilience against advanced AI-driven misinformation.

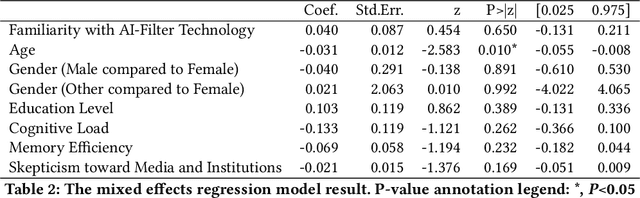

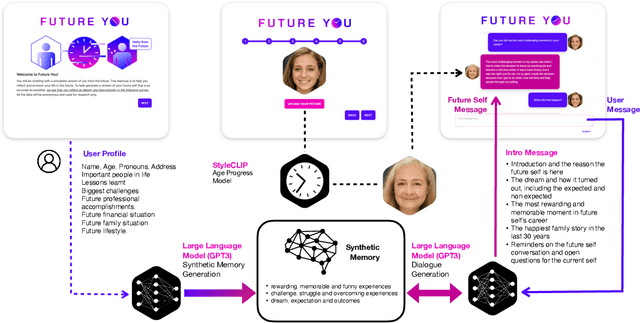

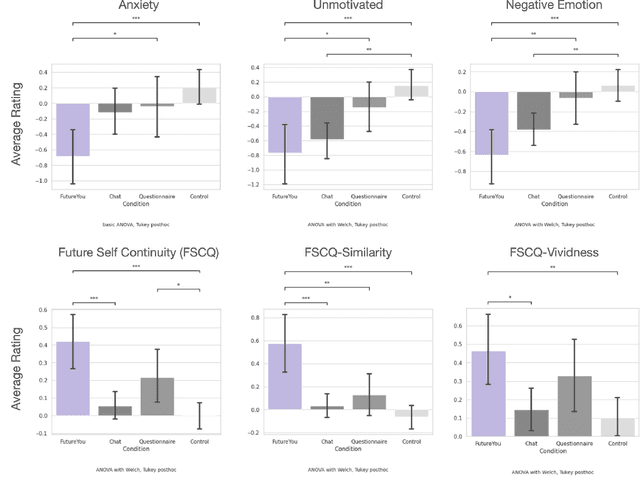

Future You: A Conversation with an AI-Generated Future Self Reduces Anxiety, Negative Emotions, and Increases Future Self-Continuity

May 21, 2024

Abstract:We introduce "Future You," an interactive, brief, single-session, digital chat intervention designed to improve future self-continuity--the degree of connection an individual feels with a temporally distant future self--a characteristic that is positively related to mental health and wellbeing. Our system allows users to chat with a relatable yet AI-powered virtual version of their future selves that is tuned to their future goals and personal qualities. To make the conversation realistic, the system generates a "synthetic memory"--a unique backstory for each user--that creates a throughline between the user's present age (between 18-30) and their life at age 60. The "Future You" character also adopts the persona of an age-progressed image of the user's present self. After a brief interaction with the "Future You" character, users reported decreased anxiety, and increased future self-continuity. This is the first study successfully demonstrating the use of personalized AI-generated characters to improve users' future self-continuity and wellbeing.

Txt2Vid: Ultra-Low Bitrate Compression of Talking-Head Videos via Text

Jun 26, 2021

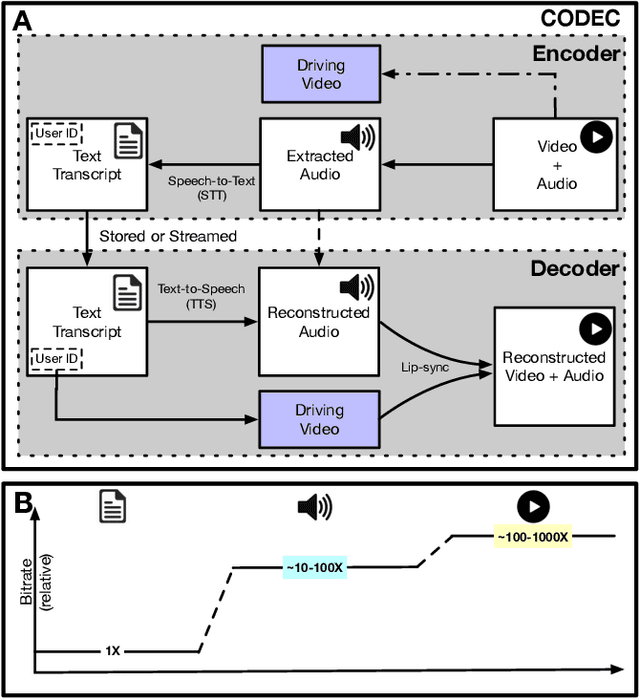

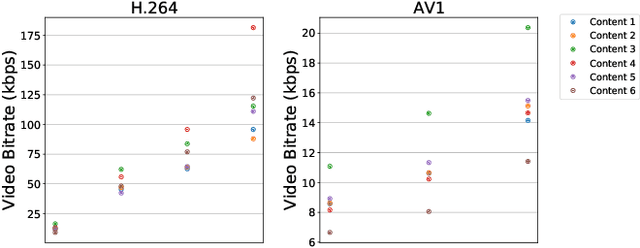

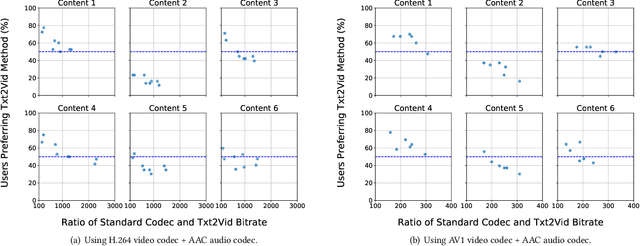

Abstract:Video represents the majority of internet traffic today leading to a continuous technological arms race between generating higher quality content, transmitting larger file sizes and supporting network infrastructure. Adding to this is the recent COVID-19 pandemic fueled surge in the use of video conferencing tools. Since videos take up substantial bandwidth (~100 Kbps to few Mbps), improved video compression can have a substantial impact on network performance for live and pre-recorded content, providing broader access to multimedia content worldwide. In this work, we present a novel video compression pipeline, called Txt2Vid, which substantially reduces data transmission rates by compressing webcam videos ("talking-head videos") to a text transcript. The text is transmitted and decoded into a realistic reconstruction of the original video using recent advances in deep learning based voice cloning and lip syncing models. Our generative pipeline achieves two to three orders of magnitude reduction in the bitrate as compared to the standard audio-video codecs (encoders-decoders), while maintaining equivalent Quality-of-Experience based on a subjective evaluation by users (n=242) in an online study. The code for this work is available at https://github.com/tpulkit/txt2vid.git.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge