Nils Purschke

DepthVision: Robust Vision-Language Understanding through GAN-Based LiDAR-to-RGB Synthesis

Sep 09, 2025

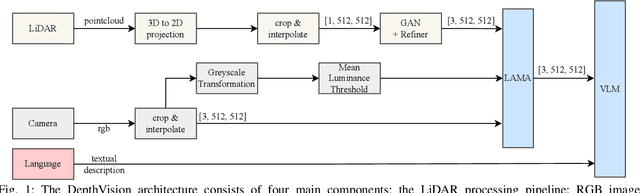

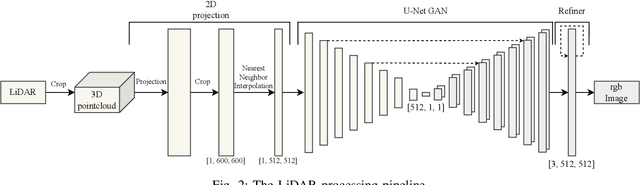

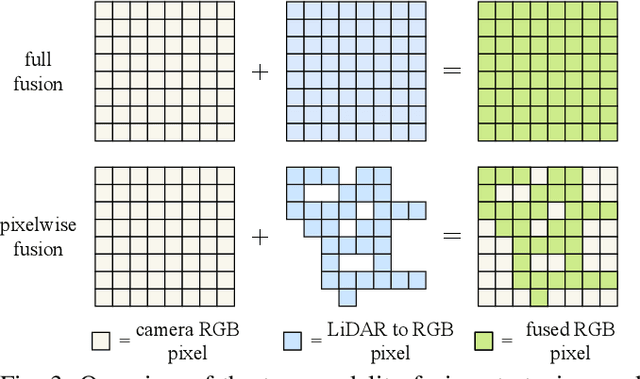

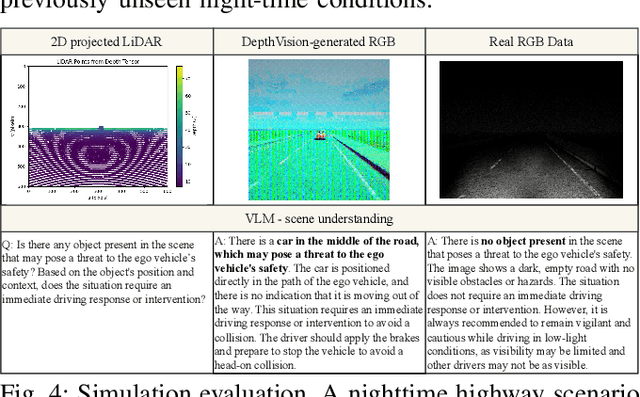

Abstract: Ensuring reliable robot operation when visual input is degraded or insufficient remains a central challenge in robotics. This letter introduces DepthVision, a framework for multimodal scene understanding designed to address this problem. Unlike existing Vision-Language Models (VLMs), which use only camera-based visual input alongside language, DepthVision synthesizes RGB images from sparse LiDAR point clouds using a conditional generative adversarial network (GAN) with an integrated refiner network. These synthetic views are then combined with real RGB data using Luminance-Aware Modality Adaptation (LAMA), which blends the two types of data dynamically based on ambient lighting conditions. This approach compensates for sensor degradation, such as darkness or motion blur, without requiring any fine-tuning of downstream vision-language models. We evaluate DepthVision on real and simulated datasets across various models and tasks, with particular attention to safety-critical tasks. The results demonstrate that our approach improves performance in low-light conditions, achieving substantial gains over RGB-only baselines while preserving compatibility with frozen VLMs. This work highlights the potential of LiDAR-guided RGB synthesis for achieving robust robot operation in real-world environments.
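The abstract states only that LAMA blends real and LiDAR-synthesized RGB images according to ambient lighting; it does not give the weighting rule. The following is a hypothetical sketch, not the authors' LAMA: it estimates scene brightness as the mean BT.709 luma of the real frame, maps it through a logistic curve (the `midpoint` and `steepness` values are arbitrary assumptions), and takes a per-pixel convex combination of the two images. Images are plain lists of `(r, g, b)` tuples in [0, 1] to keep the sketch dependency-free.

```python
import math
from typing import List, Tuple

Pixel = Tuple[float, float, float]  # (r, g, b), each in [0, 1]


def mean_luma(pixels: List[Pixel]) -> float:
    """Mean luma of an image using ITU-R BT.709 coefficients."""
    total = 0.0
    for r, g, b in pixels:
        total += 0.2126 * r + 0.7152 * g + 0.0722 * b
    return total / len(pixels)


def rgb_weight(luma: float, midpoint: float = 0.25, steepness: float = 20.0) -> float:
    """Hypothetical mapping from scene brightness to the weight on the real RGB frame.

    Bright scenes give a weight near 1 (trust the camera); dark scenes give a
    weight near 0 (trust the LiDAR-synthesized view).
    """
    return 1.0 / (1.0 + math.exp(-steepness * (luma - midpoint)))


def blend(real: List[Pixel], synthetic: List[Pixel],
          midpoint: float = 0.25, steepness: float = 20.0) -> List[Pixel]:
    """Per-pixel convex combination of real and synthetic RGB images."""
    if len(real) != len(synthetic):
        raise ValueError("images must have the same number of pixels")
    w = rgb_weight(mean_luma(real), midpoint, steepness)
    return [
        tuple(w * a + (1.0 - w) * b for a, b in zip(p_real, p_syn))
        for p_real, p_syn in zip(real, synthetic)
    ]
```

Because the weight is computed from the real frame alone, a nearly black camera image is replaced almost entirely by the synthetic view, while a well-lit one passes through nearly unchanged; the blended image could then be handed to a frozen VLM as an ordinary RGB input.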

Autonomous Vehicle Lateral Control Using Deep Reinforcement Learning with MPC-PID Demonstration

Jun 04, 2025

Abstract: The controller is one of the most important modules in the autonomous driving pipeline, ensuring that the vehicle reaches its desired position. In this work, we present a reinforcement-learning-based lateral control approach designed to cope with imperfections in the vehicle models caused by measurement errors and simplifications. Our approach ensures comfortable, efficient, and robust control performance, taking into account the interface between the controller and other modules. The controller consists of a conventional Model Predictive Control (MPC)-PID part, which serves as both the basis and the demonstrator, and a Deep Reinforcement Learning (DRL) part that leverages online information from the MPC-PID part. The controller's performance is evaluated in CARLA using ground-truth waypoints as inputs. Experimental results demonstrate the effectiveness of the controller when vehicle information is incomplete, and show that DRL training can be stabilized by the demonstration part. These findings highlight the potential to reduce development and integration efforts for autonomous driving pipelines in the future.
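The abstract does not say how the MPC-PID and DRL outputs are combined. One common pattern, assumed here, is residual control: the classical controller produces a baseline steering command, and the learned policy, which also observes that command, adds a correction before saturation. This sketch omits the MPC stage entirely, uses a textbook PID on the cross-track error, and replaces the trained actor with a hypothetical `zero_residual_policy` stub; all names and gains are illustrative.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass
class PID:
    """Textbook PID controller acting on the lateral (cross-track) error."""
    kp: float
    ki: float
    kd: float
    integral: float = 0.0
    prev_error: float = 0.0

    def step(self, error: float, dt: float) -> float:
        self.integral += error * dt
        # On the first call prev_error is 0, so the derivative term can spike.
        derivative = (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def zero_residual_policy(observation: Sequence[float]) -> float:
    """Hypothetical placeholder for a trained DRL actor; always returns 0."""
    return 0.0


def lateral_control_step(pid: PID, cross_track_error: float,
                         observation: Sequence[float], dt: float,
                         policy: Callable[[Sequence[float]], float] = zero_residual_policy,
                         max_steer: float = 1.0) -> float:
    """One control tick: baseline PID command plus a learned residual, saturated."""
    base = pid.step(cross_track_error, dt)       # baseline / demonstrator command
    residual = policy([*observation, base])      # the policy also sees the baseline output
    return max(-max_steer, min(max_steer, base + residual))
```

Passing `policy=lambda obs: obs[-1]` shows that the policy receives the baseline command as its last input, which is one way to give a DRL component access to online information from the classical controller; the final clamp keeps any learned correction within the actuator range.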

Synergy of Large Language Model and Model Driven Engineering for Automated Development of Centralized Vehicular Systems

Apr 08, 2024

Abstract: We present a prototype tool that leverages the synergy of model-driven engineering (MDE) and Large Language Models (LLMs) to automate the software development process in the automotive industry. In this approach, the user provides free-form textual requirements, which an LLM first translates into an Ecore model instance. This model instance is then checked for consistency using Object Constraint Language (OCL) rules. After a successful consistency check, the model instance is fed to another LLM for code generation. The generated code is evaluated in a simulated environment, using the CARLA simulator connected to an example centralized vehicle architecture, in an emergency braking scenario.
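The toolchain described above can be sketched as a three-stage pipeline: text to model, consistency check, model to code. This is an illustrative sketch with hypothetical names: the two LLM stages are injected as plain callables, the Ecore model instance is stood in for by Python dataclasses, and the OCL rules are rewritten as Python checks (for example, an OCL invariant such as `self.components->isUnique(name)` becomes a uniqueness check). A real implementation would instead evaluate the OCL constraints against the Ecore model with an OCL engine.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Component:
    name: str


@dataclass
class Connection:
    source: str  # name of the sending component
    target: str  # name of the receiving component


@dataclass
class ModelInstance:
    """Hypothetical stand-in for an Ecore model instance."""
    components: List[Component] = field(default_factory=list)
    connections: List[Connection] = field(default_factory=list)


def check_consistency(model: ModelInstance) -> List[str]:
    """OCL-style invariants expressed as Python checks (hypothetical rules)."""
    errors = []
    names = [c.name for c in model.components]
    if len(names) != len(set(names)):
        errors.append("component names must be unique")
    known = set(names)
    for conn in model.connections:
        for end in (conn.source, conn.target):
            if end not in known:
                errors.append(f"connection references unknown component '{end}'")
    return errors


def run_pipeline(requirements: str,
                 text_to_model: Callable[[str], ModelInstance],
                 model_to_code: Callable[[ModelInstance], str]) -> str:
    """Requirements -> model (LLM 1) -> consistency check -> code (LLM 2)."""
    model = text_to_model(requirements)
    errors = check_consistency(model)
    if errors:
        # Only consistent models are passed on to code generation.
        raise ValueError("; ".join(errors))
    return model_to_code(model)
```

Gating code generation on the consistency check means that an inconsistent model produced by the first LLM is rejected before the second LLM ever sees it, which is the role the OCL rules play in the described workflow.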

TUMTraf Event: Calibration and Fusion Resulting in a Dataset for Roadside Event-Based and RGB Cameras

Jan 16, 2024

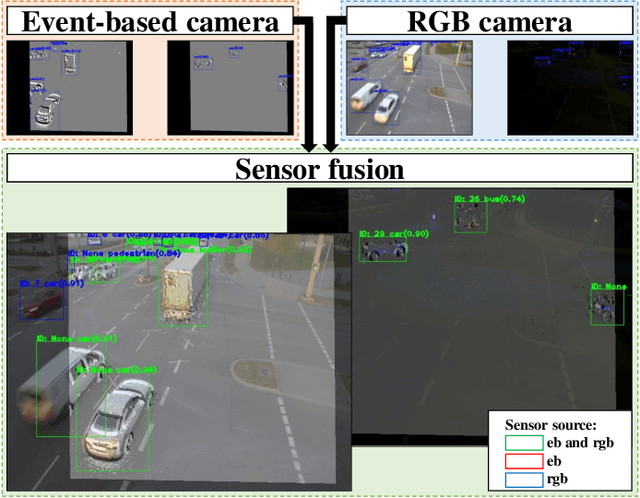

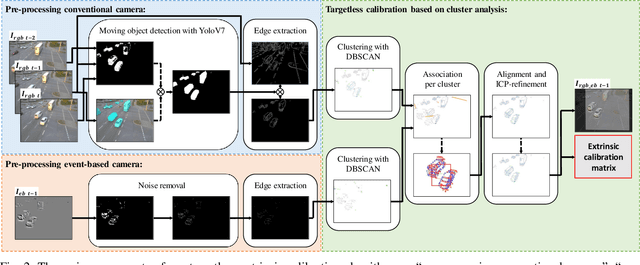

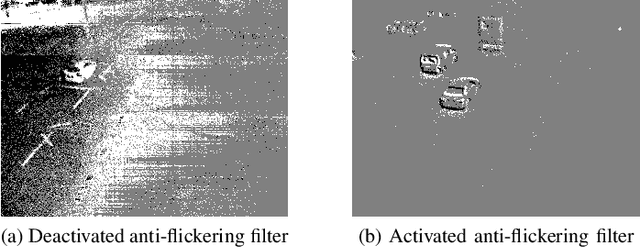

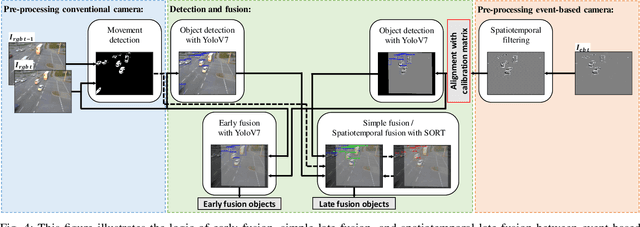

Abstract: Event-based cameras are well suited to Intelligent Transportation Systems (ITS). They provide very high temporal resolution and dynamic range, which can eliminate motion blur and make objects easier to recognize at night. However, event-based images lack the color and texture of images from a conventional RGB camera. Data fusion between event-based and conventional cameras can therefore combine the strengths of both modalities, which requires extrinsic calibration. To the best of our knowledge, no existing targetless calibration between event-based and RGB cameras can handle multiple moving objects, no data fusion method is optimized for the roadside ITS domain, and no synchronized event-based and RGB camera datasets for ITS are known. To fill these research gaps, we extend the targetless calibration approach from our previous work with clustering methods to handle multiple moving objects. Furthermore, we develop an early fusion method, a simple late fusion method, and a novel spatiotemporal late fusion method. Lastly, we publish the TUMTraf Event Dataset, which contains more than 4k synchronized event-based and RGB images with 21.9k labeled 2D boxes. In extensive experiments, we verified the effectiveness of our calibration method with multiple moving objects. Furthermore, compared to a single RGB camera, our event-based sensor fusion methods increased detection performance by up to +16% mAP during the day and up to +12% mAP in challenging night conditions. The TUMTraf Event Dataset is available at https://innovation-mobility.com/tumtraf-dataset.
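The abstract does not detail its fusion methods. For orientation, a generic late fusion of two 2D detectors can be as simple as pooling their boxes and applying greedy, class-aware non-maximum suppression (NMS). This is a baseline sketch, not the paper's simple or spatiotemporal late fusion; it assumes both detectors' boxes are already expressed in the same image frame, which the extrinsic calibration is meant to enable, and the 0.5 IoU threshold is an arbitrary choice.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels
Detection = Tuple[Box, float, str]       # (box, confidence score, class label)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def late_fuse(rgb_dets: List[Detection], event_dets: List[Detection],
              iou_threshold: float = 0.5) -> List[Detection]:
    """Pool detections from both cameras, then apply greedy class-aware NMS."""
    candidates = sorted(rgb_dets + event_dets, key=lambda d: d[1], reverse=True)
    kept: List[Detection] = []
    for det in candidates:
        # Keep a box unless a higher-scoring box of the same class overlaps it.
        if all(det[2] != k[2] or iou(det[0], k[0]) < iou_threshold for k in kept):
            kept.append(det)
    return kept
```

With this rule, an object seen only by the event camera (for example, a pedestrian at night) survives fusion, while overlapping boxes of the same class from both cameras collapse to the higher-scoring one.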
