Mingxing Peng

Frank

Coordinated Pandemic Control with Large Language Model Agents as Policymaking Assistants

Jan 14, 2026Abstract:Effective pandemic control requires timely and coordinated policymaking across administrative regions that are intrinsically interdependent. However, human-driven responses are often fragmented and reactive, with policies formulated in isolation and adjusted only after outbreaks escalate, undermining proactive intervention and global pandemic mitigation. To address this challenge, here we propose a large language model (LLM) multi-agent policymaking framework that supports coordinated and proactive pandemic control across regions. Within our framework, each administrative region is assigned an LLM agent as an AI policymaking assistant. The agent reasons over region-specific epidemiological dynamics while communicating with other agents to account for cross-regional interdependencies. By integrating real-world data, a pandemic evolution simulator, and structured inter-agent communication, our framework enables agents to jointly explore counterfactual intervention scenarios and synthesize coordinated policy decisions through a closed-loop simulation process. We validate the proposed framework using state-level COVID-19 data from the United States between April and December 2020, together with real-world mobility records and observed policy interventions. Compared with real-world pandemic outcomes, our approach reduces cumulative infections and deaths by up to 63.7% and 40.1%, respectively, at the individual state level, and by 39.0% and 27.0%, respectively, when aggregated across states. These results demonstrate that LLM multi-agent systems can enable more effective pandemic control with coordinated policymaking...

nuPlan-R: A Closed-Loop Planning Benchmark for Autonomous Driving via Reactive Multi-Agent Simulation

Nov 13, 2025Abstract:Recent advances in closed-loop planning benchmarks have significantly improved the evaluation of autonomous vehicles. However, existing benchmarks still rely on rule-based reactive agents such as the Intelligent Driver Model (IDM), which lack behavioral diversity and fail to capture realistic human interactions, leading to oversimplified traffic dynamics. To address these limitations, we present nuPlan-R, a new reactive closed-loop planning benchmark that integrates learning-based reactive multi-agent simulation into the nuPlan framework. Our benchmark replaces the rule-based IDM agents with noise-decoupled diffusion-based reactive agents and introduces an interaction-aware agent selection mechanism to ensure both realism and computational efficiency. Furthermore, we extend the benchmark with two additional metrics to enable a more comprehensive assessment of planning performance. Extensive experiments demonstrate that our reactive agent model produces more realistic, diverse, and human-like traffic behaviors, leading to a benchmark environment that better reflects real-world interactive driving. We further reimplement a collection of rule-based, learning-based, and hybrid planning approaches within our nuPlan-R benchmark, providing a clearer reflection of planner performance in complex interactive scenarios and better highlighting the advantages of learning-based planners in handling complex and dynamic scenarios. These results establish nuPlan-R as a new standard for fair, reactive, and realistic closed-loop planning evaluation. We will open-source the code for the new benchmark.

LD-Scene: LLM-Guided Diffusion for Controllable Generation of Adversarial Safety-Critical Driving Scenarios

May 16, 2025

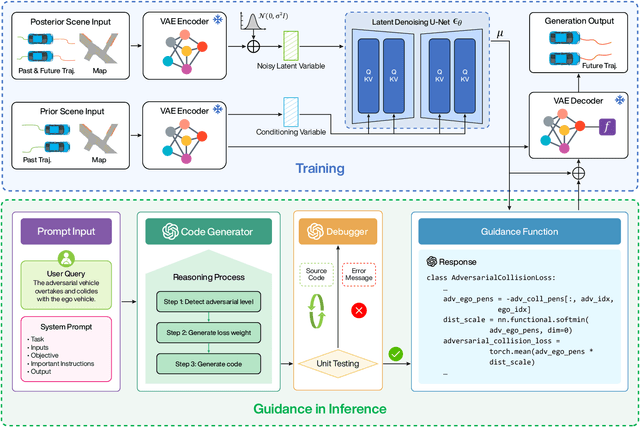

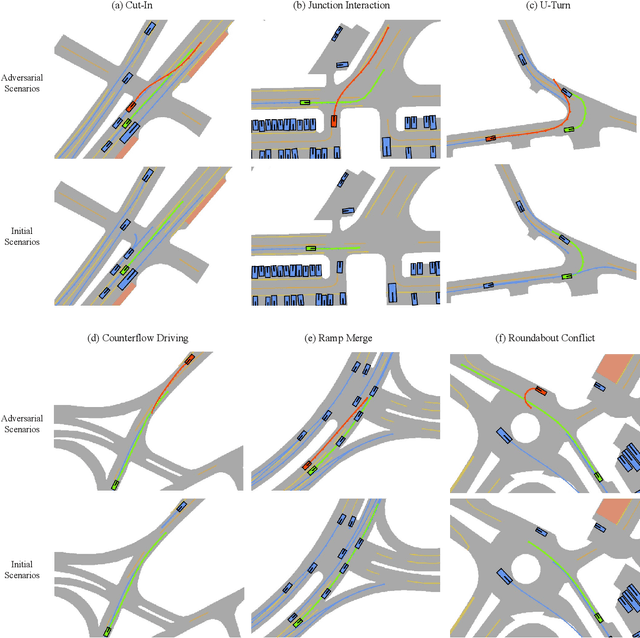

Abstract:Ensuring the safety and robustness of autonomous driving systems necessitates a comprehensive evaluation in safety-critical scenarios. However, these safety-critical scenarios are rare and difficult to collect from real-world driving data, posing significant challenges to effectively assessing the performance of autonomous vehicles. Typical existing methods often suffer from limited controllability and lack user-friendliness, as extensive expert knowledge is essentially required. To address these challenges, we propose LD-Scene, a novel framework that integrates Large Language Models (LLMs) with Latent Diffusion Models (LDMs) for user-controllable adversarial scenario generation through natural language. Our approach comprises an LDM that captures realistic driving trajectory distributions and an LLM-based guidance module that translates user queries into adversarial loss functions, facilitating the generation of scenarios aligned with user queries. The guidance module integrates an LLM-based Chain-of-Thought (CoT) code generator and an LLM-based code debugger, enhancing the controllability and robustness in generating guidance functions. Extensive experiments conducted on the nuScenes dataset demonstrate that LD-Scene achieves state-of-the-art performance in generating realistic, diverse, and effective adversarial scenarios. Furthermore, our framework provides fine-grained control over adversarial behaviors, thereby facilitating more effective testing tailored to specific driving scenarios.

Automating Traffic Model Enhancement with AI Research Agent

Sep 25, 2024

Abstract:Developing efficient traffic models is essential for optimizing transportation systems, yet current approaches remain time-intensive and susceptible to human errors due to their reliance on manual processes. Traditional workflows involve exhaustive literature reviews, formula optimization, and iterative testing, leading to inefficiencies in research. In response, we introduce the Traffic Research Agent (TR-Agent), an AI-driven system designed to autonomously develop and refine traffic models through an iterative, closed-loop process. Specifically, we divide the research pipeline into four key stages: idea generation, theory formulation, theory evaluation, and iterative optimization; and construct TR-Agent with four corresponding modules: Idea Generator, Code Generator, Evaluator, and Analyzer. Working in synergy, these modules retrieve knowledge from external resources, generate novel ideas, implement and debug models, and finally assess them on the evaluation datasets. Furthermore, the system continuously refines these models based on iterative feedback, enhancing research efficiency and model performance. Experimental results demonstrate that TR-Agent achieves significant performance improvements across multiple traffic models, including the Intelligent Driver Model (IDM) for car following, the MOBIL lane-changing model, and the Lighthill-Whitham-Richards (LWR) traffic flow model. Additionally, TR-Agent provides detailed explanations for its optimizations, allowing researchers to verify and build upon its improvements easily. This flexibility makes the framework a powerful tool for researchers in transportation and beyond. To further support research and collaboration, we have open-sourced both the code and data used in our experiments, facilitating broader access and enabling continued advancements in the field.

GenFollower: Enhancing Car-Following Prediction with Large Language Models

Jul 08, 2024

Abstract:Accurate modeling of car-following behaviors is essential for various applications in traffic management and autonomous driving systems. However, current approaches often suffer from limitations like high sensitivity to data quality and lack of interpretability. In this study, we propose GenFollower, a novel zero-shot prompting approach that leverages large language models (LLMs) to address these challenges. We reframe car-following behavior as a language modeling problem and integrate heterogeneous inputs into structured prompts for LLMs. This approach achieves improved prediction performance and interpretability compared to traditional baseline models. Experiments on the Waymo Open datasets demonstrate GenFollower's superior performance and ability to provide interpretable insights into factors influencing car-following behavior. This work contributes to advancing the understanding and prediction of car-following behaviors, paving the way for enhanced traffic management and autonomous driving systems.

Explainable Traffic Flow Prediction with Large Language Models

Apr 13, 2024

Abstract:Traffic flow prediction is crucial for intelligent transportation systems. It has experienced significant advancements thanks to the power of deep learning in capturing latent patterns of traffic data. However, recent deep-learning architectures require intricate model designs and lack an intuitive understanding of the mapping from input data to predicted results. Achieving both accuracy and interpretability in traffic prediction models remains to be a challenge due to the complexity of traffic data and the inherent opacity of deep learning models. To tackle these challenges, we propose a novel approach, Traffic Flow Prediction LLM (TF-LLM), which leverages large language models (LLMs) to generate interpretable traffic flow predictions. By transferring multi-modal traffic data into natural language descriptions, TF-LLM captures complex spatial-temporal patterns and external factors from comprehensive traffic data. The LLM framework is fine-tuned using language-based instructions to align with spatial-temporal traffic flow data. Empirically, TF-LLM shows competitive accuracy compared with deep learning baselines, while providing intuitive and interpretable predictions. We discuss the spatial-temporal and input dependencies for explainable future flow forecasting, showcasing TF-LLM's potential for diverse city prediction tasks. This paper contributes to advancing explainable traffic prediction models and lays a foundation for future exploration of LLM applications in transportation. To the best of our knowledge, this is the first study to use LLM for interpretable prediction of traffic flow.

LC-LLM: Explainable Lane-Change Intention and Trajectory Predictions with Large Language Models

Mar 27, 2024

Abstract:To ensure safe driving in dynamic environments, autonomous vehicles should possess the capability to accurately predict the lane change intentions of surrounding vehicles in advance and forecast their future trajectories. Existing motion prediction approaches have ample room for improvement, particularly in terms of long-term prediction accuracy and interpretability. In this paper, we address these challenges by proposing LC-LLM, an explainable lane change prediction model that leverages the strong reasoning capabilities and self-explanation abilities of Large Language Models (LLMs). Essentially, we reformulate the lane change prediction task as a language modeling problem, processing heterogeneous driving scenario information in natural language as prompts for input into the LLM and employing a supervised fine-tuning technique to tailor the LLM specifically for our lane change prediction task. This allows us to utilize the LLM's powerful common sense reasoning abilities to understand complex interactive information, thereby improving the accuracy of long-term predictions. Furthermore, we incorporate explanatory requirements into the prompts in the inference stage. Therefore, our LC-LLM model not only can predict lane change intentions and trajectories but also provides explanations for its predictions, enhancing the interpretability. Extensive experiments on the large-scale highD dataset demonstrate the superior performance and interpretability of our LC-LLM in lane change prediction task. To the best of our knowledge, this is the first attempt to utilize LLMs for predicting lane change behavior. Our study shows that LLMs can encode comprehensive interaction information for driving behavior understanding.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge