Michael Spannowsky

From Reachability to Learnability: Geometric Design Principles for Quantum Neural Networks

Mar 03, 2026

Abstract:Classical deep networks are effective because depth enables adaptive geometric deformation of data representations. In quantum neural networks (QNNs), however, depth or state reachability alone does not guarantee this feature-learning capability. We study this question in the pure-state setting by viewing encoded data as an embedded manifold in $\mathbb{C}P^{2^n-1}$ and analysing infinitesimal unitary actions through Lie-algebra directions. We introduce Classical-to-Lie-algebra (CLA) maps and the criterion of almost Complete Local Selectivity (aCLS), which combines directional completeness with data-dependent local selectivity. Within this framework, we show that data-independent trainable unitaries are complete but non-selective, i.e. learnable rigid reorientations, whereas pure data encodings are selective but non-tunable, i.e. fixed deformations. Hence, geometric flexibility requires a non-trivial joint dependence on data and trainable weights. We further show that accessing high-dimensional deformations of many-qubit state manifolds requires parametrised entangling directions; fixed entanglers such as CNOT alone do not provide adaptive geometric control. Numerical examples validate that CLS-satisfying data re-uploading models outperform non-tunable schemes while requiring only a quarter of the gate operations. Thus, the resulting picture reframes QNN design from state reachability to controllable geometry of hidden quantum representations.

Machine Learning on Heterogeneous, Edge, and Quantum Hardware for Particle Physics (ML-HEQUPP)

Feb 24, 2026

Abstract:The next generation of particle physics experiments will face a new era of challenges in data acquisition, due to unprecedented data rates and volumes along with extreme environments and operational constraints. Harnessing this data for scientific discovery demands real-time inference and decision-making, intelligent data reduction, and efficient processing architectures beyond current capabilities. Crucial to the success of this experimental paradigm are several emerging technologies, such as artificial intelligence and machine learning (AI/ML) and silicon microelectronics, and the advent of quantum algorithms and processing. Their intersection includes areas of research such as low-power and low-latency devices for edge computing, heterogeneous accelerator systems, reconfigurable hardware, novel codesign and synthesis strategies, readout for cryogenic or high-radiation environments, and analog computing. This white paper presents a community-driven vision to identify and prioritize research and development opportunities in hardware-based ML systems and corresponding physics applications, contributing towards a successful transition to the new data frontier of fundamental science.

Another Fit Bites the Dust: Conformal Prediction as a Calibration Standard for Machine Learning in High-Energy Physics

Dec 18, 2025

Abstract:Machine-learning techniques are essential in modern collider research, yet their probabilistic outputs often lack calibrated uncertainty estimates and finite-sample guarantees, limiting their direct use in statistical inference and decision-making. Conformal prediction (CP) provides a simple, distribution-free framework for calibrating arbitrary predictive models without retraining, yielding rigorous uncertainty quantification with finite-sample coverage guarantees under minimal exchangeability assumptions, without reliance on asymptotics, limit theorems, or Gaussian approximations. In this work, we investigate CP as a unifying calibration layer for machine-learning applications in high-energy physics. Using publicly available collider datasets and a diverse set of models, we show that a single conformal formalism can be applied across regression, binary and multi-class classification, anomaly detection, and generative modelling, converting raw model outputs into statistically valid prediction sets, typicality regions, and p-values with controlled false-positive rates. While conformal prediction does not improve raw model performance, it enforces honest uncertainty quantification and transparent error control. We argue that conformal calibration should be adopted as a standard component of machine-learning pipelines in collider physics, enabling reliable interpretation, robust comparisons, and principled statistical decisions in experimental and phenomenological analyses.
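As an illustration of the calibration idea described in this abstract, the following is a minimal sketch of split conformal prediction for regression. It is not taken from the paper: the absolute-residual score, the helper names `conformal_quantile` and `split_conformal_interval`, and the toy linear model are all assumptions chosen for brevity.

```python
import numpy as np

def conformal_quantile(scores, alpha):
    """Finite-sample-corrected (1 - alpha) quantile of calibration scores."""
    n = len(scores)
    # Rank ceil((n + 1) * (1 - alpha)) among the sorted calibration scores.
    k = int(np.ceil((n + 1) * (1 - alpha)))
    if k > n:
        # Too few calibration points for this alpha: the set is unbounded.
        return np.inf
    return np.sort(scores)[k - 1]

def split_conformal_interval(predict, x_cal, y_cal, x_new, alpha=0.1):
    """Prediction intervals around predict(x_new) using held-out residuals."""
    # Nonconformity score (assumed here): absolute residual on calibration data.
    scores = np.abs(y_cal - predict(x_cal))
    q = conformal_quantile(scores, alpha)
    centre = predict(x_new)
    return centre - q, centre + q

def toy_model(x):
    """Hypothetical stand-in for an already trained model; never retrained."""
    return 2.0 * x

# Synthetic calibration and test data (illustrative only).
rng = np.random.default_rng(0)
x_cal = rng.uniform(0.0, 1.0, 500)
y_cal = 2.0 * x_cal + rng.normal(0.0, 0.1, 500)
x_test = rng.uniform(0.0, 1.0, 2000)
y_test = 2.0 * x_test + rng.normal(0.0, 0.1, 2000)

lo, hi = split_conformal_interval(toy_model, x_cal, y_cal, x_test, alpha=0.1)
coverage = np.mean((y_test >= lo) & (y_test <= hi))
print(f"empirical coverage: {coverage:.3f}")
```

Under exchangeability of calibration and test points, intervals built this way cover the true value with probability at least 1 - alpha on average, regardless of how good `toy_model` is; a poorer model simply yields wider intervals.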

Improved Ground State Estimation in Quantum Field Theories via Normalising Flow-Assisted Neural Quantum States

Jun 13, 2025

Abstract:We propose a hybrid variational framework that enhances Neural Quantum States (NQS) with a Normalising Flow-based sampler to improve the expressivity and trainability of quantum many-body wavefunctions. Our approach decouples the sampling task from the variational ansatz by learning a continuous flow model that targets a discretised, amplitude-supported subspace of the Hilbert space. This overcomes limitations of Markov Chain Monte Carlo (MCMC) and autoregressive methods, especially in regimes with long-range correlations and volume-law entanglement. Applied to the transverse-field Ising model with both short- and long-range interactions, our method achieves ground-state energy errors comparable to those of state-of-the-art matrix product states and lower energies than autoregressive NQS. For systems up to 50 spins, we demonstrate high accuracy and robust convergence across a wide range of coupling strengths, including regimes where competing methods fail. Our results showcase the utility of flow-assisted sampling as a scalable tool for quantum simulation and offer a new approach toward learning expressive quantum states in high-dimensional Hilbert spaces.

Communicating Likelihoods with Normalising Flows

Feb 13, 2025

Abstract:We present a machine-learning-based workflow to model an unbinned likelihood from its samples. A key advancement over existing approaches is the validation of the learned likelihood using rigorous statistical tests of the joint distribution, such as the Kolmogorov-Smirnov test. Our method enables the reliable communication of experimental and phenomenological likelihoods for subsequent analyses. We demonstrate its effectiveness through three case studies in high-energy physics. To support broader adoption, we provide an open-source reference implementation, nabu.

Optimal Equivariant Architectures from the Symmetries of Matrix-Element Likelihoods

Oct 24, 2024

Abstract:The Matrix-Element Method (MEM) has long been a cornerstone of data analysis in high-energy physics. It leverages theoretical knowledge of parton-level processes and symmetries to evaluate the likelihood of observed events. In parallel, the advent of geometric deep learning has enabled neural network architectures that incorporate known symmetries directly into their design, leading to more efficient learning. This paper presents a novel approach that combines MEM-inspired symmetry considerations with equivariant neural network design for particle physics analysis. Even though Lorentz invariance and permutation invariance over all reconstructed objects are the largest and most natural symmetries in the input domain, we find that they are sub-optimal in most practical search scenarios. We propose a longitudinal boost-equivariant message-passing neural network architecture that preserves relevant discrete symmetries. We present numerical studies demonstrating that MEM-inspired architectures achieve new state-of-the-art performance in distinguishing di-Higgs decays to four bottom quarks from the QCD background, with enhanced sample and parameter efficiencies. This synergy between MEM and equivariant deep learning opens new directions for physics-informed architecture design, promising more powerful tools for probing physics beyond the Standard Model.

Collective variables of neural networks: empirical time evolution and scaling laws

Oct 09, 2024

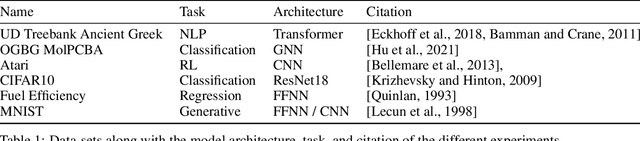

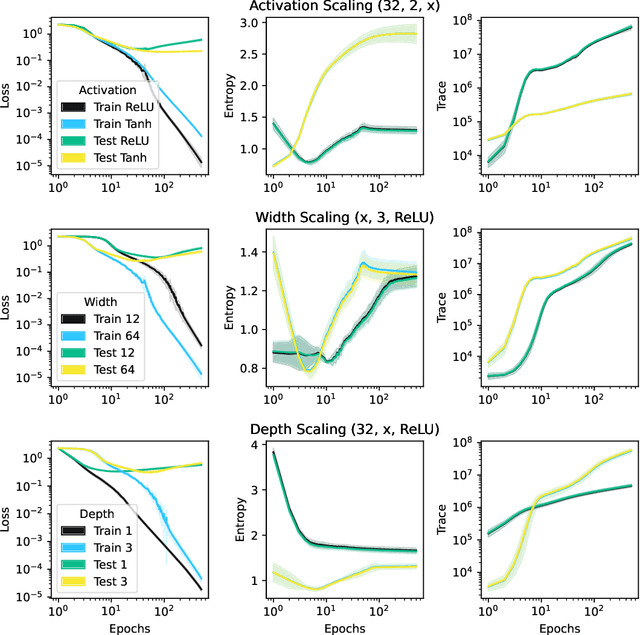

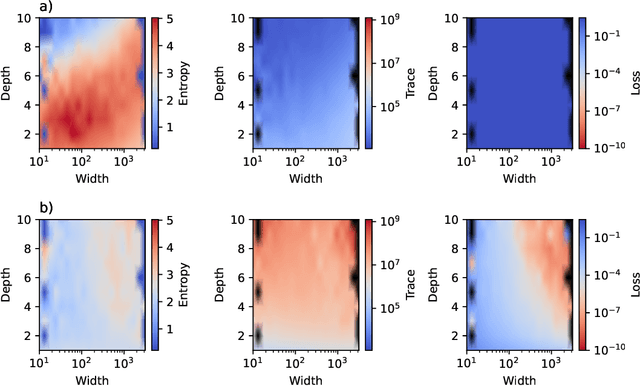

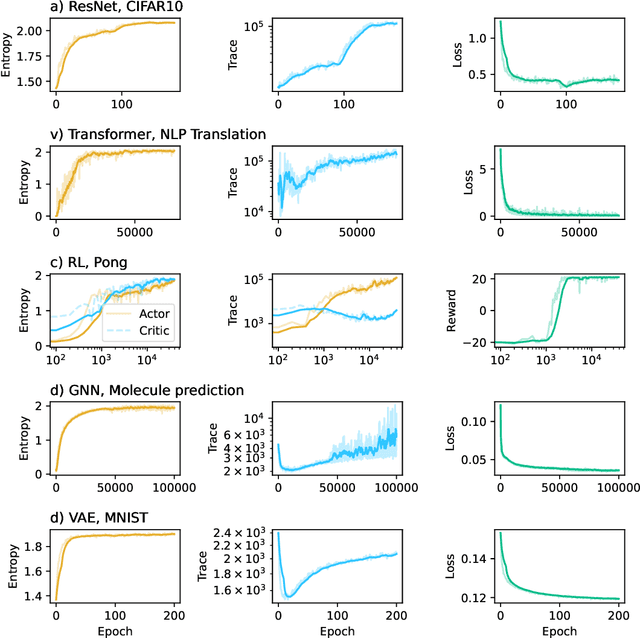

Abstract:This work presents a novel means for understanding learning dynamics and scaling relations in neural networks. We show that certain measures on the spectrum of the empirical neural tangent kernel, specifically entropy and trace, yield insight into the representations learned by a neural network and how these can be improved through architecture scaling. These results are demonstrated first on test cases before being shown on more complex networks, including transformers, auto-encoders, graph neural networks, and reinforcement learning studies. In testing on a wide range of architectures, we highlight the universal nature of training dynamics and further discuss how it can be used to understand the mechanisms behind learning in neural networks. We identify two such dominant mechanisms present throughout machine learning training. The first, information compression, is seen through a reduction in the entropy of the NTK spectrum during training, and occurs predominantly in small neural networks. The second, coined structure formation, is seen through an increasing entropy and thus, the creation of structure in the neural network representations beyond the prior established by the network at initialization. Due to the ubiquity of the latter in deep neural network architectures and its flexibility in the creation of feature-rich representations, we argue that this form of evolution of the network's entropy be considered the onset of a deep learning regime.
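To make the two spectral measures named in this abstract concrete, here is a minimal sketch that builds an empirical neural tangent kernel for a tiny network and evaluates its trace and spectral entropy. The one-hidden-layer tanh model, the analytic Jacobian, and the choice of a Shannon entropy over trace-normalised eigenvalues are assumptions for illustration; the paper's exact definitions and normalisation may differ.

```python
import numpy as np

def empirical_ntk(x, W, b, v):
    """Gram matrix K = J J^T for the scalar model f(x) = v . tanh(W x + b)."""
    h = np.tanh(x @ W.T + b)                # hidden activations, shape (n, hidden)
    dh = (1.0 - h**2) * v                   # df/d(pre-activation), shape (n, hidden)
    J_W = dh[:, :, None] * x[:, None, :]    # df/dW, shape (n, hidden, d)
    J_b = dh                                # df/db, shape (n, hidden)
    J_v = h                                 # df/dv, shape (n, hidden)
    # Stack all parameter gradients into one Jacobian of shape (n, n_params).
    J = np.concatenate([J_W.reshape(len(x), -1), J_b, J_v], axis=1)
    return J @ J.T

def ntk_trace_and_entropy(K):
    """Trace of K and Shannon entropy of its trace-normalised eigenvalues."""
    # K is symmetric positive semi-definite; clip tiny negative round-off.
    eig = np.clip(np.linalg.eigvalsh(K), 0.0, None)
    p = eig / eig.sum()
    p = p[p > 0]                            # treat 0 * log(0) as 0
    return float(np.trace(K)), float(-np.sum(p * np.log(p)))

# Randomly initialised toy network and inputs (illustrative only).
rng = np.random.default_rng(0)
x = rng.normal(size=(8, 3))
W = rng.normal(size=(5, 3))
b = rng.normal(size=5)
v = rng.normal(size=5)

K = empirical_ntk(x, W, b, v)
trace, entropy = ntk_trace_and_entropy(K)
print(f"NTK trace: {trace:.3f}, spectral entropy: {entropy:.3f}")
```

Recomputing these two numbers on a fixed batch after each training step would give the kind of time series the abstract describes: a falling entropy would correspond to compression, a rising one to structure formation.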

The role of data embedding in quantum autoencoders for improved anomaly detection

Sep 06, 2024

Abstract:The performance of Quantum Autoencoders (QAEs) in anomaly detection tasks is critically dependent on the choice of data embedding and ansatz design. This study explores the effects of three data embedding techniques, data re-uploading, parallel embedding, and alternate embedding, on the representability and effectiveness of QAEs in detecting anomalies. Our findings reveal that even with relatively simple variational circuits, enhanced data embedding strategies can substantially improve anomaly detection accuracy and the representability of underlying data across different datasets. Starting with toy examples featuring low-dimensional data, we visually demonstrate the effect of different embedding techniques on the representability of the model. We then extend our analysis to complex, higher-dimensional datasets, highlighting the significant impact of embedding methods on QAE performance.

Optimal Symmetries in Binary Classification

Aug 16, 2024

Abstract:We explore the role of group symmetries in binary classification tasks, presenting a novel framework that leverages the principles of Neyman-Pearson optimality. Contrary to the common intuition that larger symmetry groups lead to improved classification performance, our findings show that selecting the appropriate group symmetries is crucial for optimising generalisation and sample efficiency. We develop a theoretical foundation for designing group equivariant neural networks that align the choice of symmetries with the underlying probability distributions of the data. Our approach provides a unified methodology for improving classification accuracy across a broad range of applications by carefully tailoring the symmetry group to the specific characteristics of the problem. Theoretical analysis and experimental results demonstrate that optimal classification performance is not always associated with the largest equivariant groups possible in the domain, even when the likelihood ratio is invariant under one of its proper subgroups, but rather with those subgroups themselves. This work offers insights and practical guidelines for constructing more effective group equivariant architectures in diverse machine-learning contexts.

Training Neural Networks with Universal Adiabatic Quantum Computing

Aug 24, 2023

Abstract:The training of neural networks (NNs) is a computationally intensive task requiring significant time and resources. This paper presents a novel approach to NN training using Adiabatic Quantum Computing (AQC), a paradigm that leverages the principles of adiabatic evolution to solve optimisation problems. We propose a universal AQC method that can be implemented on gate quantum computers, allowing for a broad range of Hamiltonians and thus enabling the training of expressive neural networks. We apply this approach to various neural networks with continuous, discrete, and binary weights. Our results indicate that AQC can very efficiently find the global minimum of the loss function, offering a promising alternative to classical training methods.
