Marko Thiel

UADA3D: Unsupervised Adversarial Domain Adaptation for 3D Object Detection with Sparse LiDAR and Large Domain Gaps

Mar 28, 2024Abstract:In this study, we address a gap in existing unsupervised domain adaptation approaches on LiDAR-based 3D object detection, which have predominantly concentrated on adapting between established, high-density autonomous driving datasets. We focus on sparser point clouds, capturing scenarios from different perspectives: not just from vehicles on the road but also from mobile robots on sidewalks, which encounter significantly different environmental conditions and sensor configurations. We introduce Unsupervised Adversarial Domain Adaptation for 3D Object Detection (UADA3D). UADA3D does not depend on pre-trained source models or teacher-student architectures. Instead, it uses an adversarial approach to directly learn domain-invariant features. We demonstrate its efficacy in various adaptation scenarios, showing significant improvements in both self-driving car and mobile robot domains. Our code is open-source and will be available soon.

MCD: Diverse Large-Scale Multi-Campus Dataset for Robot Perception

Mar 18, 2024Abstract:Perception plays a crucial role in various robot applications. However, existing well-annotated datasets are biased towards autonomous driving scenarios, while unlabelled SLAM datasets are quickly over-fitted, and often lack environment and domain variations. To expand the frontier of these fields, we introduce a comprehensive dataset named MCD (Multi-Campus Dataset), featuring a wide range of sensing modalities, high-accuracy ground truth, and diverse challenging environments across three Eurasian university campuses. MCD comprises both CCS (Classical Cylindrical Spinning) and NRE (Non-Repetitive Epicyclic) lidars, high-quality IMUs (Inertial Measurement Units), cameras, and UWB (Ultra-WideBand) sensors. Furthermore, in a pioneering effort, we introduce semantic annotations of 29 classes over 59k sparse NRE lidar scans across three domains, thus providing a novel challenge to existing semantic segmentation research upon this largely unexplored lidar modality. Finally, we propose, for the first time to the best of our knowledge, continuous-time ground truth based on optimization-based registration of lidar-inertial data on large survey-grade prior maps, which are also publicly released, each several times the size of existing ones. We conduct a rigorous evaluation of numerous state-of-the-art algorithms on MCD, report their performance, and highlight the challenges awaiting solutions from the research community.

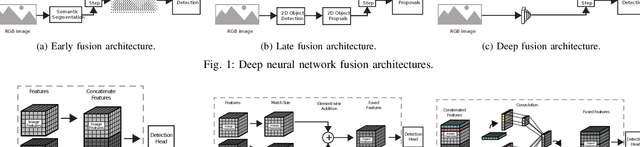

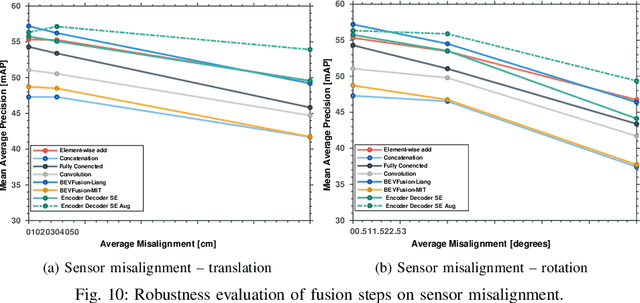

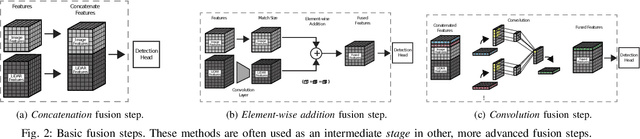

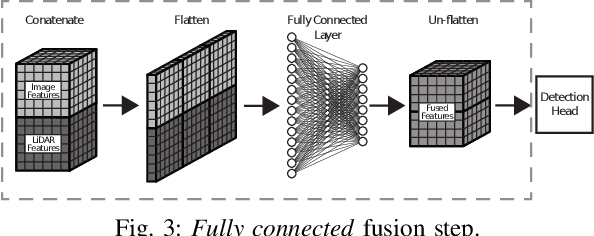

Towards a Robust Sensor Fusion Step for 3D Object Detection on Corrupted Data

Jun 12, 2023

Abstract:Multimodal sensor fusion methods for 3D object detection have been revolutionizing the autonomous driving research field. Nevertheless, most of these methods heavily rely on dense LiDAR data and accurately calibrated sensors which is often not the case in real-world scenarios. Data from LiDAR and cameras often come misaligned due to the miscalibration, decalibration, or different frequencies of the sensors. Additionally, some parts of the LiDAR data may be occluded and parts of the data may be missing due to hardware malfunction or weather conditions. This work presents a novel fusion step that addresses data corruptions and makes sensor fusion for 3D object detection more robust. Through extensive experiments, we demonstrate that our method performs on par with state-of-the-art approaches on normal data and outperforms them on misaligned data.

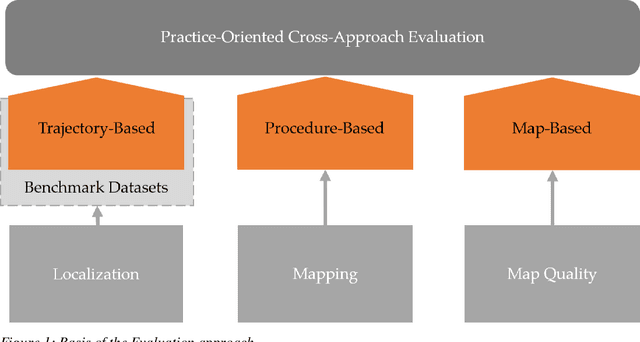

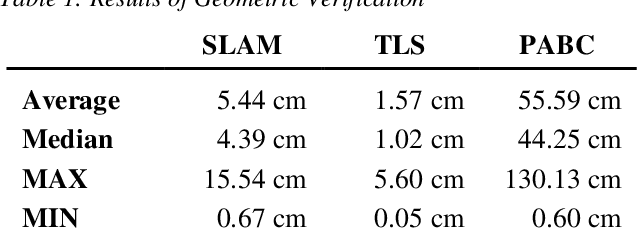

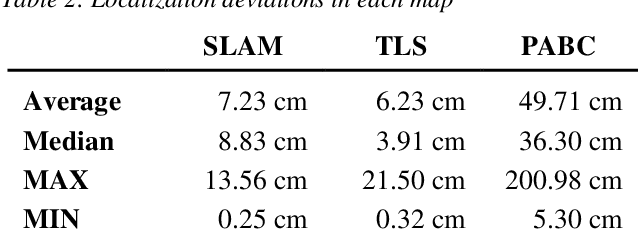

Comparison of Varied 2D Mapping Approaches by Using Practice-Oriented Evaluation Criteria

Oct 19, 2022

Abstract:A key aspect of the precision of a mobile robots localization is the quality and aptness of the map it is using. A variety of mapping approaches are available that can be employed to create such maps with varying degrees of effort, hardware requirements and quality of the resulting maps. To create a better understanding of the applicability of these different approaches to specific applications, this paper evaluates and compares three different mapping approaches based on simultaneous localization and mapping, terrestrial laser scanning as well as publicly accessible building contours.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge