Marissa Radensky

University of Washington

Scideator: Human-LLM Scientific Idea Generation Grounded in Research-Paper Facet Recombination

Sep 23, 2024

Abstract: The scientific ideation process often involves blending salient aspects of existing papers to create new ideas. To see if large language models (LLMs) can assist this process, we contribute Scideator, a novel mixed-initiative tool for scientific ideation. Starting from a user-provided set of papers, Scideator extracts key facets (purposes, mechanisms, and evaluations) from these and relevant papers, allowing users to explore the idea space by interactively recombining facets to synthesize inventive ideas. Scideator also helps users to gauge idea novelty by searching the literature for potential overlaps and showing automated novelty assessments and explanations. To support these tasks, Scideator introduces four LLM-powered retrieval-augmented generation (RAG) modules: Analogous Paper Facet Finder, Faceted Idea Generator, Idea Novelty Checker, and Idea Novelty Iterator. In a within-subjects user study, 19 computer-science researchers identified significantly more interesting ideas using Scideator compared to a strong baseline combining a scientific search engine with LLM interaction.

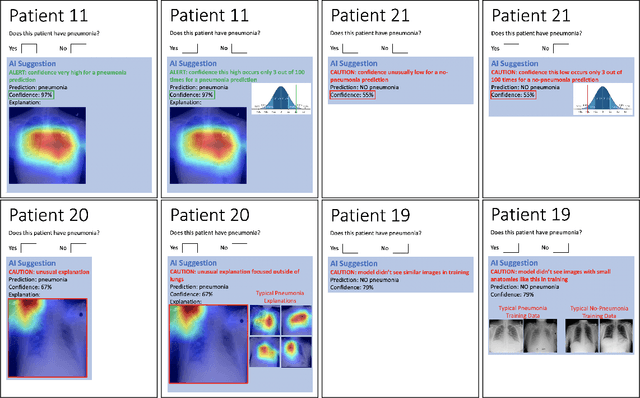

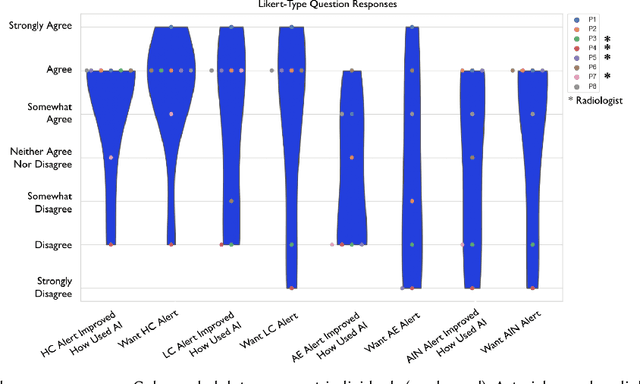

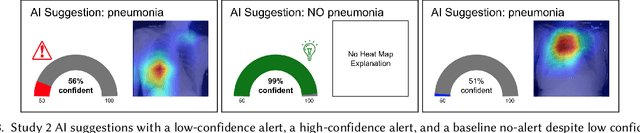

Exploring How Anomalous Model Input and Output Alerts Affect Decision-Making in Healthcare

Apr 27, 2022

Abstract: An important goal in the field of human-AI interaction is to help users more appropriately trust AI systems' decisions. A situation in which the user may particularly benefit from more appropriate trust is when the AI receives anomalous input or provides anomalous output. To the best of our knowledge, this is the first work towards understanding how anomaly alerts may contribute to appropriate trust of AI. In a formative mixed-methods study with 4 radiologists and 4 other physicians, we explore how AI alerts for anomalous input, very high and low confidence, and anomalous saliency-map explanations affect users' experience with mockups of an AI clinical decision support system (CDSS) for evaluating chest x-rays for pneumonia. We find evidence suggesting that the four anomaly alerts are desired by non-radiologists, and the high-confidence alerts are desired by both radiologists and non-radiologists. In a follow-up user study, we investigate how high- and low-confidence alerts affect the accuracy and thus appropriate trust of 33 radiologists working with AI CDSS mockups. We observe that these alerts do not improve users' accuracy or experience and discuss potential reasons why.

Exploring The Role of Local and Global Explanations in Recommender Systems

Sep 27, 2021

Abstract: Explanations are well known to improve recommender systems' transparency. These explanations may be local, explaining an individual recommendation, or global, explaining the recommender model in general. Despite their widespread use, there has been little investigation into the relative benefits of these two approaches. Do they provide the same benefits to users, or do they serve different purposes? We conducted a 30-participant exploratory study and a 30-participant controlled user study with a research-paper recommender system to analyze how providing participants local, global, or both explanations influences user understanding of system behavior. Our results provide evidence suggesting that both explanations are more helpful than either alone for explaining how to improve recommendations, yet both appeared less helpful than global alone for efficiency in identifying false positives and negatives. However, we note that the two explanation approaches may be better compared in the context of a higher-stakes or more opaque domain.

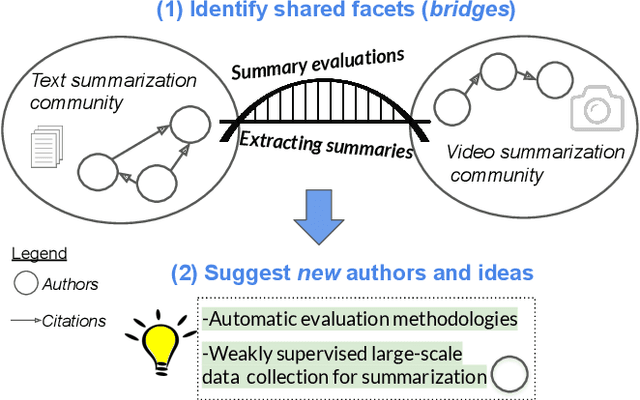

Bursting Scientific Filter Bubbles: Boosting Innovation via Novel Author Discovery

Sep 10, 2021

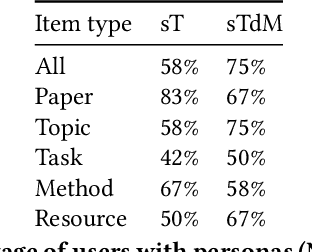

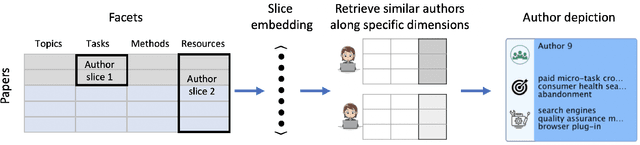

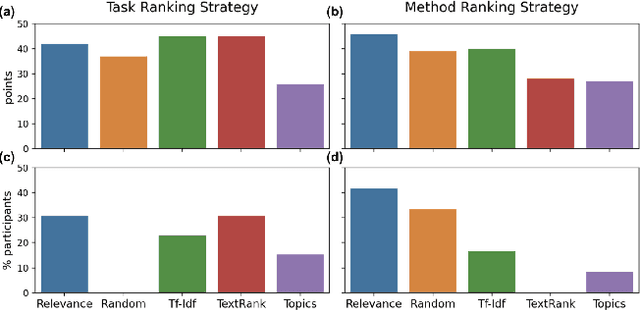

Abstract: Isolated silos of scientific research and the growing challenge of information overload limit awareness across the literature and hinder innovation. Algorithmic curation and recommendation, which often prioritize relevance, can further reinforce these informational "filter bubbles." In response, we describe Bridger, a system for facilitating discovery of scholars and their work, to explore design tradeoffs between relevant and novel recommendations. We construct a faceted representation of authors with information gleaned from their papers and inferred author personas, and use it to develop an approach that locates commonalities ("bridges") and contrasts between scientists -- retrieving partially similar authors rather than aiming for strict similarity. In studies with computer science researchers, this approach helps users discover authors considered useful for generating novel research directions, outperforming a state-of-the-art neural model. In addition to recommending new content, we also demonstrate an approach for displaying it in a manner that boosts researchers' ability to understand the work of authors with whom they are unfamiliar. Finally, our analysis reveals that Bridger connects authors who have different citation profiles, publish in different venues, and are more distant in social co-authorship networks, raising the prospect of bridging diverse communities and facilitating discovery.
