Lukas Zingg

Acquiring Submillimeter-Accurate Multi-Task Vision Datasets for Computer-Assisted Orthopedic Surgery

Jan 26, 2025Abstract:Advances in computer vision, particularly in optical image-based 3D reconstruction and feature matching, enable applications like marker-less surgical navigation and digitization of surgery. However, their development is hindered by a lack of suitable datasets with 3D ground truth. This work explores an approach to generating realistic and accurate ex vivo datasets tailored for 3D reconstruction and feature matching in open orthopedic surgery. A set of posed images and an accurately registered ground truth surface mesh of the scene are required to develop vision-based 3D reconstruction and matching methods suitable for surgery. We propose a framework consisting of three core steps and compare different methods for each step: 3D scanning, calibration of viewpoints for a set of high-resolution RGB images, and an optical-based method for scene registration. We evaluate each step of this framework on an ex vivo scoliosis surgery using a pig spine, conducted under real operating room conditions. A mean 3D Euclidean error of 0.35 mm is achieved with respect to the 3D ground truth. The proposed method results in submillimeter accurate 3D ground truths and surgical images with a spatial resolution of 0.1 mm. This opens the door to acquiring future surgical datasets for high-precision applications.

Domain adaptation strategies for 3D reconstruction of the lumbar spine using real fluoroscopy data

Jan 29, 2024

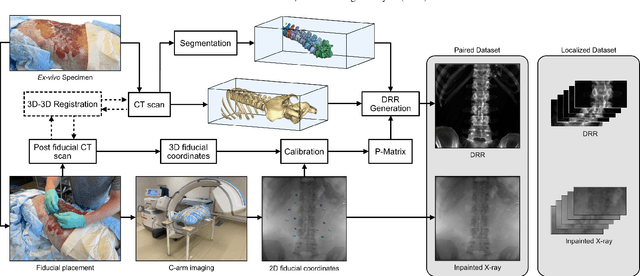

Abstract:This study tackles key obstacles in adopting surgical navigation in orthopedic surgeries, including time, cost, radiation, and workflow integration challenges. Recently, our work X23D showed an approach for generating 3D anatomical models of the spine from only a few intraoperative fluoroscopic images. This negates the need for conventional registration-based surgical navigation by creating a direct intraoperative 3D reconstruction of the anatomy. Despite these strides, the practical application of X23D has been limited by a domain gap between synthetic training data and real intraoperative images. In response, we devised a novel data collection protocol for a paired dataset consisting of synthetic and real fluoroscopic images from the same perspectives. Utilizing this dataset, we refined our deep learning model via transfer learning, effectively bridging the domain gap between synthetic and real X-ray data. A novel style transfer mechanism also allows us to convert real X-rays to mirror the synthetic domain, enabling our in-silico-trained X23D model to achieve high accuracy in real-world settings. Our results demonstrated that the refined model can rapidly generate accurate 3D reconstructions of the entire lumbar spine from as few as three intraoperative fluoroscopic shots. It achieved an 84% F1 score, matching the accuracy of our previous synthetic data-based research. Additionally, with a computational time of only 81.1 ms, our approach provides real-time capabilities essential for surgery integration. Through examining ideal imaging setups and view angle dependencies, we've further confirmed our system's practicality and dependability in clinical settings. Our research marks a significant step forward in intraoperative 3D reconstruction, offering enhancements to surgical planning, navigation, and robotics.

Automatic registration with continuous pose updates for marker-less surgical navigation in spine surgery

Aug 05, 2023Abstract:Established surgical navigation systems for pedicle screw placement have been proven to be accurate, but still reveal limitations in registration or surgical guidance. Registration of preoperative data to the intraoperative anatomy remains a time-consuming, error-prone task that includes exposure to harmful radiation. Surgical guidance through conventional displays has well-known drawbacks, as information cannot be presented in-situ and from the surgeon's perspective. Consequently, radiation-free and more automatic registration methods with subsequent surgeon-centric navigation feedback are desirable. In this work, we present an approach that automatically solves the registration problem for lumbar spinal fusion surgery in a radiation-free manner. A deep neural network was trained to segment the lumbar spine and simultaneously predict its orientation, yielding an initial pose for preoperative models, which then is refined for each vertebra individually and updated in real-time with GPU acceleration while handling surgeon occlusions. An intuitive surgical guidance is provided thanks to the integration into an augmented reality based navigation system. The registration method was verified on a public dataset with a mean of 96\% successful registrations, a target registration error of 2.73 mm, a screw trajectory error of 1.79{\deg} and a screw entry point error of 2.43 mm. Additionally, the whole pipeline was validated in an ex-vivo surgery, yielding a 100\% screw accuracy and a registration accuracy of 1.20 mm. Our results meet clinical demands and emphasize the potential of RGB-D data for fully automatic registration approaches in combination with augmented reality guidance.

Next-generation Surgical Navigation: Multi-view Marker-less 6DoF Pose Estimation of Surgical Instruments

May 05, 2023

Abstract:State-of-the-art research of traditional computer vision is increasingly leveraged in the surgical domain. A particular focus in computer-assisted surgery is to replace marker-based tracking systems for instrument localization with pure image-based 6DoF pose estimation. However, the state of the art has not yet met the accuracy required for surgical navigation. In this context, we propose a high-fidelity marker-less optical tracking system for surgical instrument localization. We developed a multi-view camera setup consisting of static and mobile cameras and collected a large-scale RGB-D video dataset with dedicated synchronization and data fusions methods. Different state-of-the-art pose estimation methods were integrated into a deep learning pipeline and evaluated on multiple camera configurations. Furthermore, the performance impacts of different input modalities and camera positions, as well as training on purely synthetic data, were compared. The best model achieved an average position and orientation error of 1.3 mm and 1.0{\deg} for a surgical drill as well as 3.8 mm and 5.2{\deg} for a screwdriver. These results significantly outperform related methods in the literature and are close to clinical-grade accuracy, demonstrating that marker-less tracking of surgical instruments is becoming a feasible alternative to existing marker-based systems.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge