Kamil Kowol

Perception Datasets for Anomaly Detection in Autonomous Driving: A Survey

Feb 06, 2023

Abstract: Deep neural networks (DNNs), which are employed in perception systems for autonomous driving, require a huge amount of training data, as they must reliably achieve high performance in all kinds of situations. However, these DNNs are usually restricted to the closed set of semantic classes available in their training data and are therefore unreliable when confronted with previously unseen instances. Thus, multiple perception datasets have been created for the evaluation of anomaly detection methods. These datasets can be categorized into three groups: real anomalies in real-world scenes, synthetic anomalies augmented into real-world scenes, and completely synthetic scenes. This survey provides a structured and, to the best of our knowledge, complete overview and comparison of perception datasets for anomaly detection in autonomous driving. Each chapter provides information about tasks and ground truth, context information, and licenses. Additionally, we discuss current weaknesses and gaps in existing datasets to underline the importance of developing further data.

Space, Time, and Interaction: A Taxonomy of Corner Cases in Trajectory Datasets for Automated Driving

Oct 17, 2022

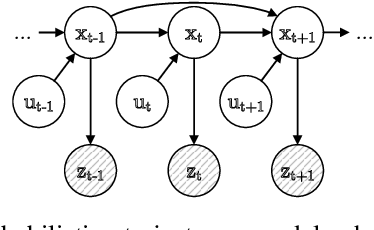

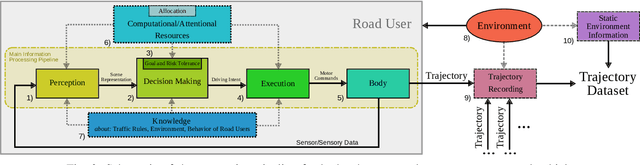

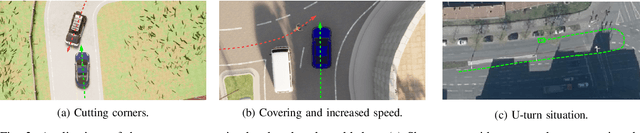

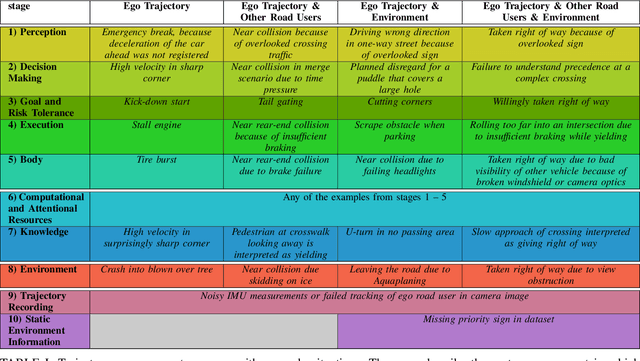

Abstract: Trajectory data analysis is an essential component of highly automated driving. Complex models developed with these data predict other road users' movement and behavior patterns. The highly automated vehicle (HAV) must be able to perform its assigned task reliably and safely, e.g., moving from point A to B. It does so based on these predictions and on additional contextual information such as the course of the road, (traffic) rules, and interaction with other road users. Ideally, the HAV moves safely through its environment, just as we would expect a human driver to do. However, when unusual trajectories occur, so-called trajectory corner cases, a human driver can usually cope well, whereas an HAV can quickly get into trouble. In our definition of trajectory corner cases, we consider the relevance of unusual trajectories with respect to the task at hand. Based on this definition, we present a taxonomy of different trajectory corner cases. Corner cases are categorized by cause and by required data sources, and the categorization is illustrated with examples. To illustrate the complex relationship between the machine learning (ML) model and the cause of a corner case, we present a general processing chain underlying the taxonomy.

Two Video Data Sets for Tracking and Retrieval of Out of Distribution Objects

Oct 05, 2022

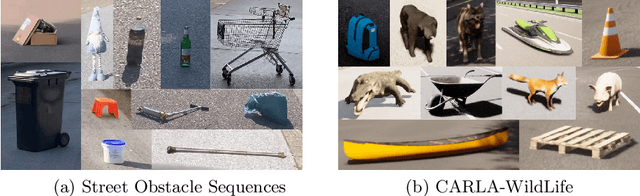

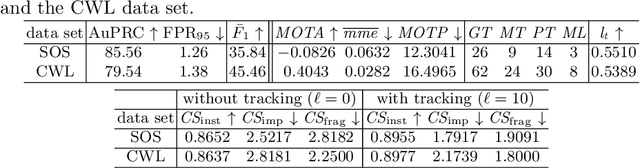

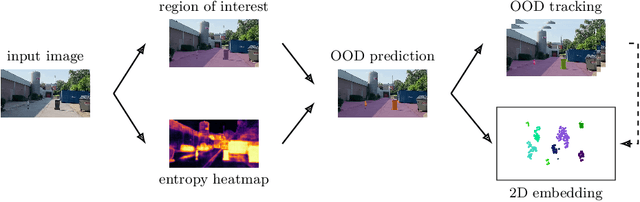

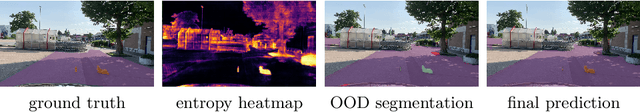

Abstract: In this work, we present two video test data sets for the novel computer vision (CV) task of out-of-distribution tracking (OOD tracking). Here, OOD objects are understood as objects with a semantic class outside the semantic space of an underlying image segmentation algorithm. They may also be instances within the semantic space that nevertheless look decisively different from the instances contained in the training data. OOD objects occurring in video sequences should be detected in single frames as early as possible and tracked for as long as they appear. During their time of appearance, they should be segmented as precisely as possible. We present the SOS data set, which contains 20 video sequences of street scenes and more than 1000 labeled frames with up to two OOD objects. We furthermore publish the synthetic CARLA-WildLife data set, which consists of 26 video sequences containing up to four OOD objects in a single frame. We propose metrics to measure the success of OOD tracking and develop a baseline algorithm that efficiently tracks the OOD objects. As an application that benefits from OOD tracking, we retrieve OOD sequences from unlabeled videos of street scenes containing OOD objects.
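The abstract does not describe how the baseline tracker works. The sketch below is a hypothetical illustration only, not the SOS baseline. It assumes that each frame's OOD segmentation has already been split into connected segments, each stored as a set of (row, col) pixels. It then links segments across consecutive frames by greedy intersection-over-union (IoU) matching. The names `iou`, `Track`, `track_ood_segments` and the `iou_threshold` value are assumptions.

```python
from dataclasses import dataclass, field


def iou(a: frozenset, b: frozenset) -> float:
    """Intersection over union of two pixel sets."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


@dataclass
class Track:
    track_id: int
    last_mask: frozenset  # most recent segment of this object
    frames: list = field(default_factory=list)  # frame indices where it was seen


def track_ood_segments(frames, iou_threshold=0.3):
    """Greedily link OOD segments across consecutive frames (hypothetical sketch).

    `frames` has one entry per video frame; each entry is a list of segments,
    and each segment is a frozenset of (row, col) pixel coordinates.
    """
    tracks, active, next_id = [], [], 0
    for t, masks in enumerate(frames):
        unmatched = list(range(len(masks)))
        still_active = []
        for track in active:
            # Pick the unmatched segment with the highest IoU at or above the threshold.
            best, best_iou = None, iou_threshold
            for i in unmatched:
                score = iou(track.last_mask, masks[i])
                if score >= best_iou:
                    best, best_iou = i, score
            if best is not None:
                unmatched.remove(best)
                track.last_mask = masks[best]
                track.frames.append(t)
                still_active.append(track)
        # Segments that matched no existing track start new tracks.
        for i in unmatched:
            track = Track(next_id, masks[i], [t])
            next_id += 1
            tracks.append(track)
            still_active.append(track)
        active = still_active
    return tracks
```

In this simplified version, a track ends as soon as it goes unmatched for a single frame. Tolerating missed detections or short occlusions would require keeping unmatched tracks alive for a few frames.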

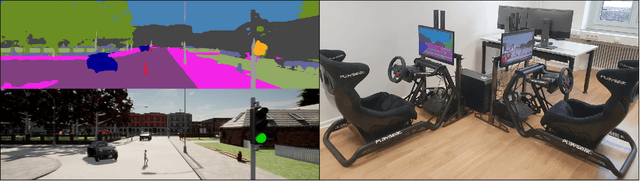

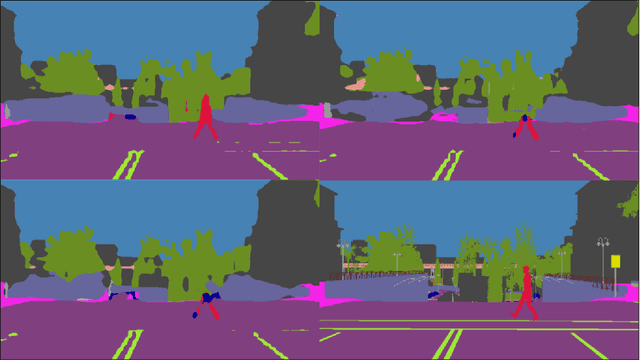

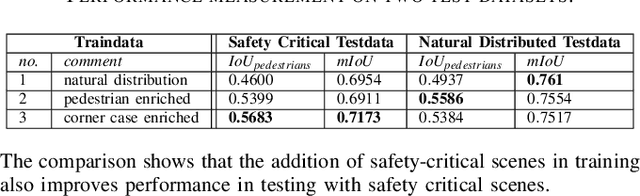

A-Eye: Driving with the Eyes of AI for Corner Case Generation

Feb 22, 2022

Abstract: The overall goal of this work is to enrich training data for automated driving with so-called corner cases. In road traffic, corner cases are critical, rare, and unusual situations that challenge perception by AI algorithms. For this purpose, we present the design of a test rig that generates synthetic corner cases using a human-in-the-loop approach. For the test rig, a real-time semantic segmentation network is trained and integrated into the driving simulation software CARLA so that a human can drive based on the network's prediction. In addition, a second person sees the same scene from the original CARLA output. This person is supposed to intervene with a second control unit as soon as the semantic driver shows dangerous driving behavior. An intervention potentially indicates that the segmentation network has poorly recognized a critical scene, and such a scene then represents a corner case. In our experiments, we show that targeted enrichment of training data with corner cases improves pedestrian detection in safety-relevant episodes in road traffic.
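The intervention mechanism can be made concrete with a small sketch. Assume, hypothetically, that the test rig logs one boolean per simulation frame indicating whether the second person is intervening. Each contiguous run of interventions can then be turned into a candidate corner-case segment for later labeling. The function name and the `lead_frames` parameter are assumptions not described in the abstract. `lead_frames` extends each segment backwards, because the misperception presumably starts before the intervention.

```python
def intervention_segments(intervened: list[bool], lead_frames: int = 0) -> list[tuple[int, int]]:
    """Turn per-frame intervention flags into (start, end) frame ranges, end exclusive.

    Hypothetical sketch: each contiguous run of True flags becomes one segment,
    extended backwards by `lead_frames` (clamped at frame 0); segments that
    overlap or touch after this extension are merged.
    """
    segments = []
    start = None
    for t, flag in enumerate(intervened):
        if flag and start is None:
            start = t  # an intervention run begins
        elif not flag and start is not None:
            segments.append((max(0, start - lead_frames), t))
            start = None
    if start is not None:  # run lasts until the end of the log
        segments.append((max(0, start - lead_frames), len(intervened)))

    merged = []
    for s, e in segments:
        if merged and s <= merged[-1][1]:
            merged[-1] = (merged[-1][0], max(merged[-1][1], e))
        else:
            merged.append((s, e))
    return merged
```

For example, the flags `[False, False, True, True, False, False, False, True, False]` yield the segments `(2, 4)` and `(7, 8)`. With `lead_frames=3`, the extended segments touch and are merged into a single range.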

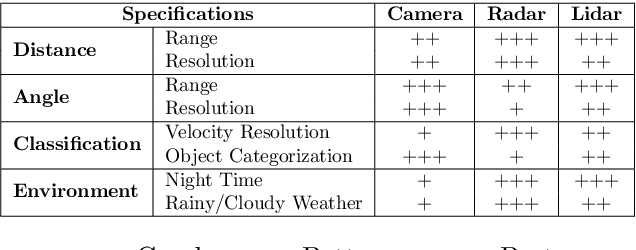

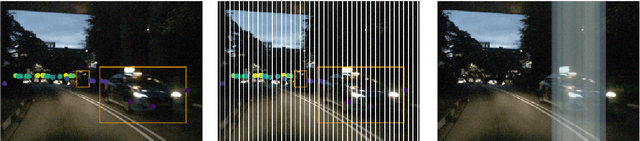

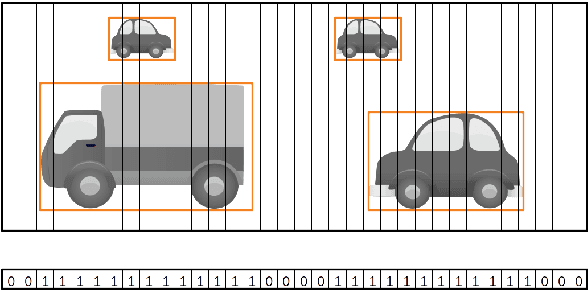

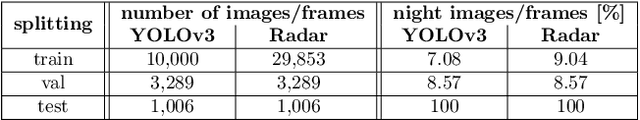

YOdar: Uncertainty-based Sensor Fusion for Vehicle Detection with Camera and Radar Sensors

Oct 07, 2020

Abstract: In this work, we present an uncertainty-based method for sensor fusion with camera and radar data. The outputs of two neural networks, one processing camera data and the other radar data, are combined in an uncertainty-aware manner. To this end, we gather the outputs and corresponding meta information for both networks. For each predicted object, the gathered information is post-processed by a gradient boosting method to produce a joint prediction of both networks. In our experiments, we combine the YOLOv3 object detection network with a customized 1D radar segmentation network and evaluate our method on the nuScenes dataset. In particular, we focus on night scenes, where the capability of camera-based object detection networks is potentially handicapped. Our experiments show that this uncertainty-aware fusion approach, which is also highly modular, significantly improves performance over single-sensor baselines and performs in the range of specifically tailored deep-learning-based fusion approaches.
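The abstract names gradient boosting as the fusion step but specifies neither the features nor the boosting library. The following standard-library sketch is a minimal gradient-boosted ensemble of decision stumps with squared loss. It is applied to hypothetical per-object features: a camera detection confidence and an aggregated radar score. The feature choice, toy data, function names, and hyperparameters are all assumptions for illustration, not the YOdar implementation.

```python
from statistics import mean

# A stump is (feature_index, threshold, left_value, right_value).


def fit_stump(X, residuals):
    """Return the stump minimizing squared error on the residuals, or None."""
    best = None
    for j in range(len(X[0])):
        for thr in sorted({x[j] for x in X}):
            left = [r for x, r in zip(X, residuals) if x[j] <= thr]
            right = [r for x, r in zip(X, residuals) if x[j] > thr]
            if not left or not right:
                continue  # a split must leave samples on both sides
            lv, rv = mean(left), mean(right)
            err = sum((r - lv) ** 2 for r in left) + sum((r - rv) ** 2 for r in right)
            if best is None or err < best[0]:
                best = (err, j, thr, lv, rv)
    return None if best is None else best[1:]


def fit_boosting(X, y, n_rounds=50, learning_rate=0.1):
    """Gradient boosting with squared loss: each stump fits the current residuals,
    which are the negative gradient of 0.5 * (y - f)^2 with respect to f."""
    base = mean(y)
    pred = [base] * len(y)
    stumps = []
    for _ in range(n_rounds):
        residuals = [yi - pi for yi, pi in zip(y, pred)]
        stump = fit_stump(X, residuals)
        if stump is None:
            break
        j, thr, lv, rv = stump
        stumps.append(stump)
        pred = [p + learning_rate * (lv if x[j] <= thr else rv) for p, x in zip(pred, X)]
    return base, learning_rate, stumps


def predict(model, x):
    """Joint score for one object; higher means more likely a true vehicle."""
    base, learning_rate, stumps = model
    return base + learning_rate * sum(lv if x[j] <= thr else rv for j, thr, lv, rv in stumps)


if __name__ == "__main__":
    # Hypothetical per-object features: [camera_confidence, radar_score].
    # Label 1 = true vehicle, 0 = false positive (toy data, not nuScenes).
    X = [[0.3, 0.9], [0.4, 0.8], [0.9, 0.7], [0.35, 0.1], [0.8, 0.2], [0.45, 0.15]]
    y = [1, 1, 1, 0, 0, 0]
    model = fit_boosting(X, y)
    print(round(predict(model, [0.2, 0.85]), 3))
```

On this toy data, the camera confidence alone does not separate true from false detections, while the radar score does, so the boosted model relies on the radar feature. This loosely mirrors the night-scene motivation. A practical setup would more likely use an established library such as scikit-learn or XGBoost and a classification loss rather than squared loss.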
