YOdar: Uncertainty-based Sensor Fusion for Vehicle Detection with Camera and Radar Sensors

Paper and Code

Oct 07, 2020

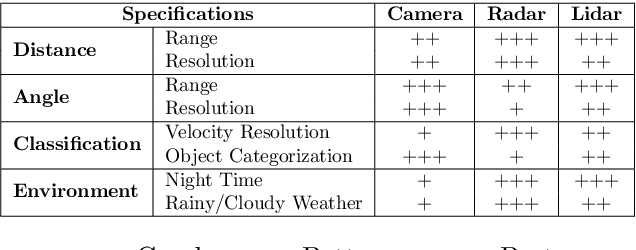

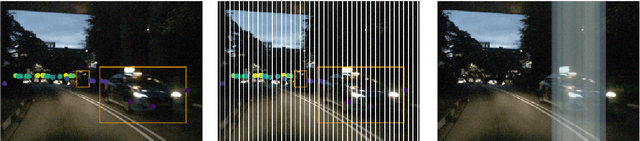

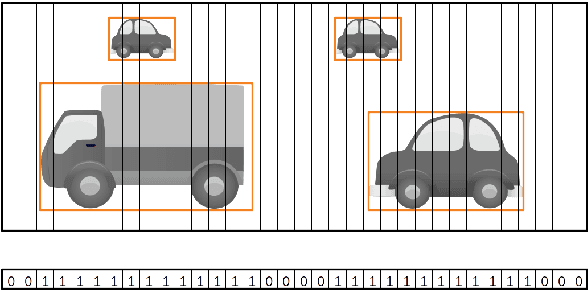

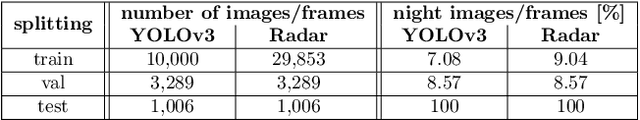

In this work, we present an uncertainty-based method for sensor fusion with camera and radar data. The outputs of two neural networks, one processing camera and the other one radar data, are combined in an uncertainty aware manner. To this end, we gather the outputs and corresponding meta information for both networks. For each predicted object, the gathered information is post-processed by a gradient boosting method to produce a joint prediction of both networks. In our experiments we combine the YOLOv3 object detection network with a customized $1D$ radar segmentation network and evaluate our method on the nuScenes dataset. In particular we focus on night scenes, where the capability of object detection networks based on camera data is potentially handicapped. Our experiments show, that this approach of uncertainty aware fusion, which is also of very modular nature, significantly gains performance compared to single sensor baselines and is in range of specifically tailored deep learning based fusion approaches.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge