Junmei Yang

Don't Forget Its Variance! The Minimum Path Variance Principle for Accurate and Stable Score-Based Density Ratio Estimation

Jan 31, 2026Abstract:Score-based methods have emerged as a powerful framework for density ratio estimation (DRE), but they face an important paradox in that, while theoretically path-independent, their practical performance depends critically on the chosen path schedule. We resolve this issue by proving that tractable training objectives differ from the ideal, ground-truth objective by a crucial, overlooked term: the path variance of the time score. To address this, we propose MinPV (\textbf{Min}imum \textbf{P}ath \textbf{V}ariance) Principle, which introduces a principled heuristic to minimize the overlooked path variance. Our key contribution is the derivation of a closed-form expression for the variance, turning an intractable problem into a tractable optimization. By parameterizing the path with a flexible Kumaraswamy Mixture Model, our method learns a data-adaptive, low-variance path without heuristic selection. This principled optimization of the complete objective yields more accurate and stable estimators, establishing new state-of-the-art results on challenging benchmarks.

Any-Step Density Ratio Estimation via Interval-Annealed Secant Alignment

Sep 05, 2025

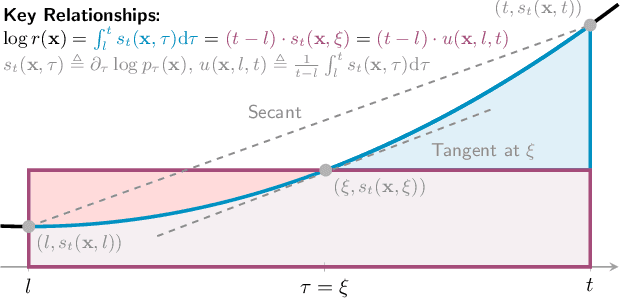

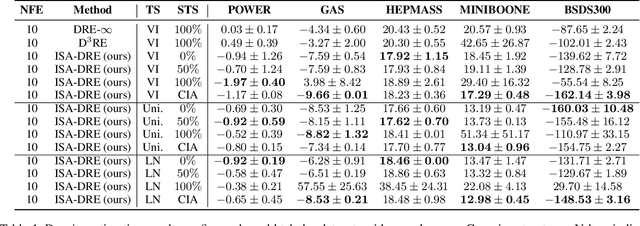

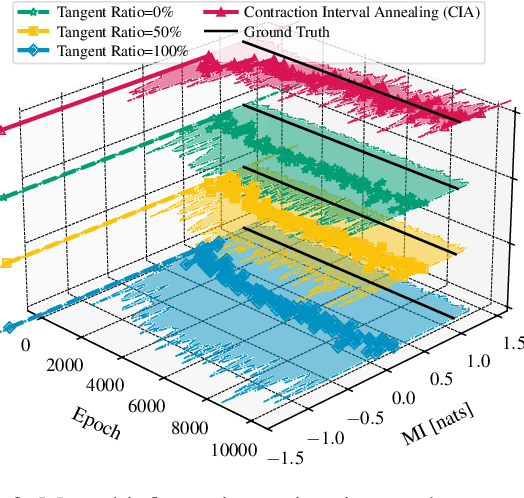

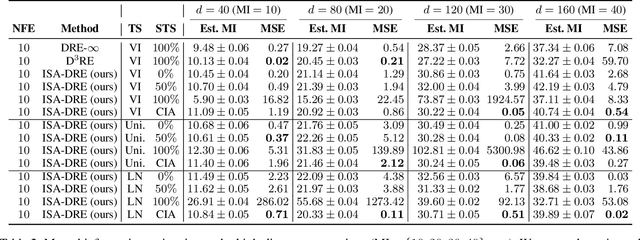

Abstract:Estimating density ratios is a fundamental problem in machine learning, but existing methods often trade off accuracy for efficiency. We propose \textit{Interval-annealed Secant Alignment Density Ratio Estimation (ISA-DRE)}, a framework that enables accurate, any-step estimation without numerical integration. Instead of modeling infinitesimal tangents as in prior methods, ISA-DRE learns a global secant function, defined as the expectation of all tangents over an interval, with provably lower variance, making it more suitable for neural approximation. This is made possible by the \emph{Secant Alignment Identity}, a self-consistency condition that formally connects the secant with its underlying tangent representations. To mitigate instability during early training, we introduce \emph{Contraction Interval Annealing}, a curriculum strategy that gradually expands the alignment interval during training. This process induces a contraction mapping, which improves convergence and training stability. Empirically, ISA-DRE achieves competitive accuracy with significantly fewer function evaluations compared to prior methods, resulting in much faster inference and making it well suited for real-time and interactive applications.

Dequantified Diffusion Schrödinger Bridge for Density Ratio Estimation

May 08, 2025Abstract:Density ratio estimation is fundamental to tasks involving $f$-divergences, yet existing methods often fail under significantly different distributions or inadequately overlap supports, suffering from the \textit{density-chasm} and the \textit{support-chasm} problems. Additionally, prior approaches yield divergent time scores near boundaries, leading to instability. We propose $\text{D}^3\text{RE}$, a unified framework for robust and efficient density ratio estimation. It introduces the Dequantified Diffusion-Bridge Interpolant (DDBI), which expands support coverage and stabilizes time scores via diffusion bridges and Gaussian dequantization. Building on DDBI, the Dequantified Schr\"odinger-Bridge Interpolant (DSBI) incorporates optimal transport to solve the Schr\"odinger bridge problem, enhancing accuracy and efficiency. Our method offers uniform approximation and bounded time scores in theory, and outperforms baselines empirically in mutual information and density estimation tasks.

Variational Learning of Gaussian Process Latent Variable Models through Stochastic Gradient Annealed Importance Sampling

Aug 13, 2024

Abstract:Gaussian Process Latent Variable Models (GPLVMs) have become increasingly popular for unsupervised tasks such as dimensionality reduction and missing data recovery due to their flexibility and non-linear nature. An importance-weighted version of the Bayesian GPLVMs has been proposed to obtain a tighter variational bound. However, this version of the approach is primarily limited to analyzing simple data structures, as the generation of an effective proposal distribution can become quite challenging in high-dimensional spaces or with complex data sets. In this work, we propose an Annealed Importance Sampling (AIS) approach to address these issues. By transforming the posterior into a sequence of intermediate distributions using annealing, we combine the strengths of Sequential Monte Carlo samplers and VI to explore a wider range of posterior distributions and gradually approach the target distribution. We further propose an efficient algorithm by reparameterizing all variables in the evidence lower bound (ELBO). Experimental results on both toy and image datasets demonstrate that our method outperforms state-of-the-art methods in terms of tighter variational bounds, higher log-likelihoods, and more robust convergence.

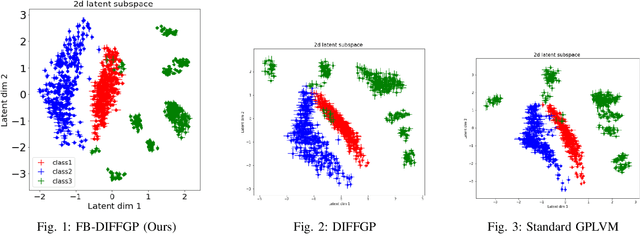

Fully Bayesian Differential Gaussian Processes through Stochastic Differential Equations

Aug 12, 2024

Abstract:Traditional deep Gaussian processes model the data evolution using a discrete hierarchy, whereas differential Gaussian processes (DIFFGPs) represent the evolution as an infinitely deep Gaussian process. However, prior DIFFGP methods often overlook the uncertainty of kernel hyperparameters and assume them to be fixed and time-invariant, failing to leverage the unique synergy between continuous-time models and approximate inference. In this work, we propose a fully Bayesian approach that treats the kernel hyperparameters as random variables and constructs coupled stochastic differential equations (SDEs) to learn their posterior distribution and that of inducing points. By incorporating estimation uncertainty on hyperparameters, our method enhances the model's flexibility and adaptability to complex dynamics. Additionally, our approach provides a time-varying, comprehensive, and realistic posterior approximation through coupling variables using SDE methods. Experimental results demonstrate the advantages of our method over traditional approaches, showcasing its superior performance in terms of flexibility, accuracy, and other metrics. Our work opens up exciting research avenues for advancing Bayesian inference and offers a powerful modeling tool for continuous-time Gaussian processes.

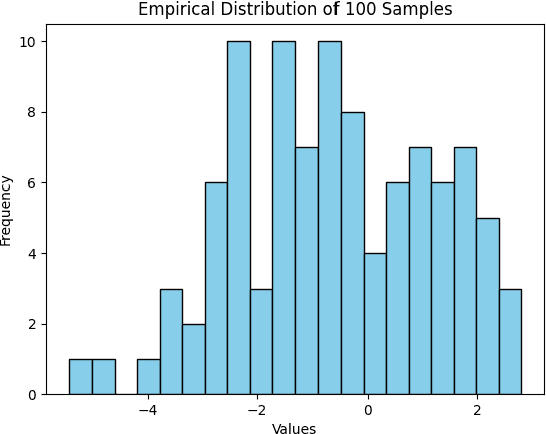

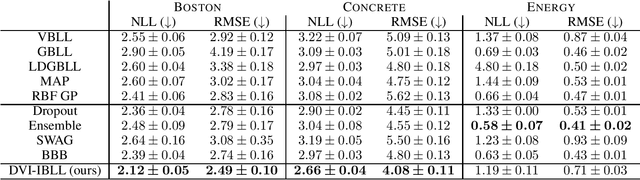

Flexible Bayesian Last Layer Models Using Implicit Priors and Diffusion Posterior Sampling

Aug 07, 2024

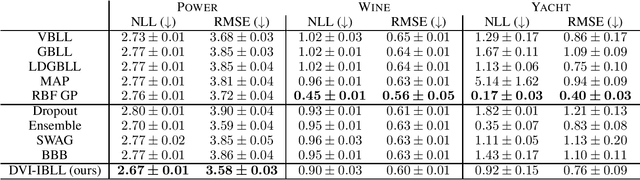

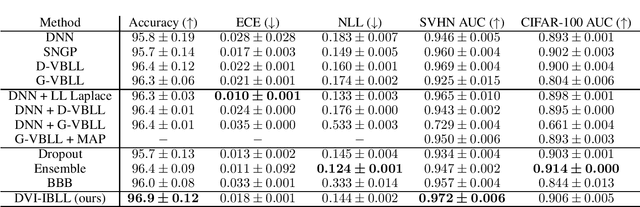

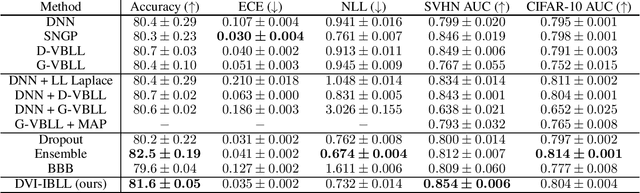

Abstract:Bayesian Last Layer (BLL) models focus solely on uncertainty in the output layer of neural networks, demonstrating comparable performance to more complex Bayesian models. However, the use of Gaussian priors for last layer weights in Bayesian Last Layer (BLL) models limits their expressive capacity when faced with non-Gaussian, outlier-rich, or high-dimensional datasets. To address this shortfall, we introduce a novel approach that combines diffusion techniques and implicit priors for variational learning of Bayesian last layer weights. This method leverages implicit distributions for modeling weight priors in BLL, coupled with diffusion samplers for approximating true posterior predictions, thereby establishing a comprehensive Bayesian prior and posterior estimation strategy. By delivering an explicit and computationally efficient variational lower bound, our method aims to augment the expressive abilities of BLL models, enhancing model accuracy, calibration, and out-of-distribution detection proficiency. Through detailed exploration and experimental validation, We showcase the method's potential for improving predictive accuracy and uncertainty quantification while ensuring computational efficiency.

Neural Operator Variational Inference based on Regularized Stein Discrepancy for Deep Gaussian Processes

Sep 22, 2023

Abstract:Deep Gaussian Process (DGP) models offer a powerful nonparametric approach for Bayesian inference, but exact inference is typically intractable, motivating the use of various approximations. However, existing approaches, such as mean-field Gaussian assumptions, limit the expressiveness and efficacy of DGP models, while stochastic approximation can be computationally expensive. To tackle these challenges, we introduce Neural Operator Variational Inference (NOVI) for Deep Gaussian Processes. NOVI uses a neural generator to obtain a sampler and minimizes the Regularized Stein Discrepancy in L2 space between the generated distribution and true posterior. We solve the minimax problem using Monte Carlo estimation and subsampling stochastic optimization techniques. We demonstrate that the bias introduced by our method can be controlled by multiplying the Fisher divergence with a constant, which leads to robust error control and ensures the stability and precision of the algorithm. Our experiments on datasets ranging from hundreds to tens of thousands demonstrate the effectiveness and the faster convergence rate of the proposed method. We achieve a classification accuracy of 93.56 on the CIFAR10 dataset, outperforming SOTA Gaussian process methods. Furthermore, our method guarantees theoretically controlled prediction error for DGP models and demonstrates remarkable performance on various datasets. We are optimistic that NOVI has the potential to enhance the performance of deep Bayesian nonparametric models and could have significant implications for various practical applications

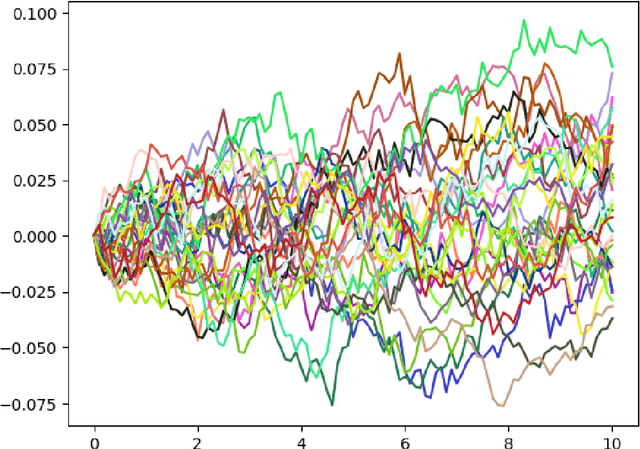

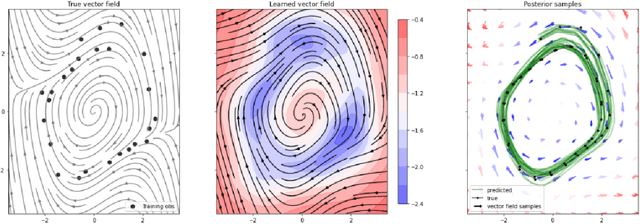

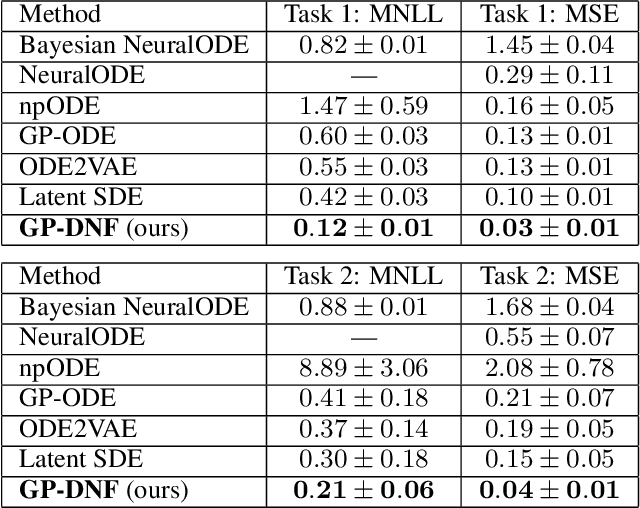

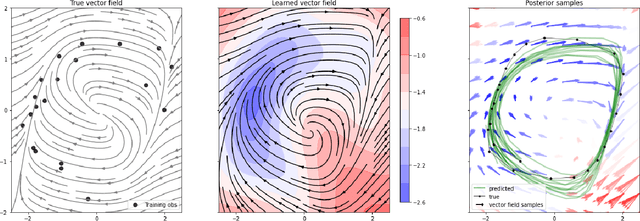

Double Normalizing Flows: Flexible Bayesian Gaussian Process ODEs Learning

Sep 17, 2023

Abstract:Recently, Gaussian processes have been utilized to model the vector field of continuous dynamical systems. Bayesian inference for such models \cite{hegde2022variational} has been extensively studied and has been applied in tasks such as time series prediction, providing uncertain estimates. However, previous Gaussian Process Ordinary Differential Equation (ODE) models may underperform on datasets with non-Gaussian process priors, as their constrained priors and mean-field posteriors may lack flexibility. To address this limitation, we incorporate normalizing flows to reparameterize the vector field of ODEs, resulting in a more flexible and expressive prior distribution. Additionally, due to the analytically tractable probability density functions of normalizing flows, we apply them to the posterior inference of GP ODEs, generating a non-Gaussian posterior. Through these dual applications of normalizing flows, our model improves accuracy and uncertainty estimates for Bayesian Gaussian Process ODEs. The effectiveness of our approach is demonstrated on simulated dynamical systems and real-world human motion data, including tasks such as time series prediction and missing data recovery. Experimental results indicate that our proposed method effectively captures model uncertainty while improving accuracy.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge