Juan Andrade-Cetto

SDformerFlow: Spatiotemporal swin spikeformer for event-based optical flow estimation

Sep 06, 2024

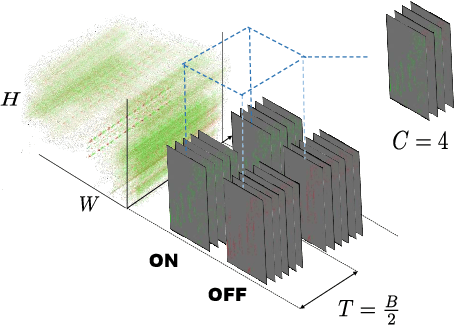

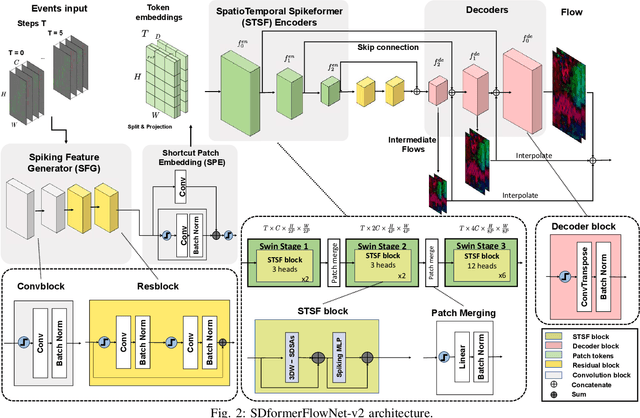

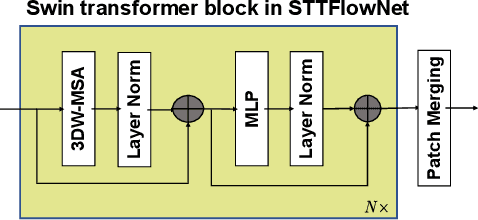

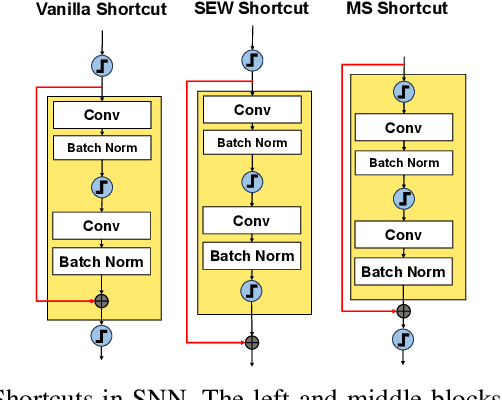

Abstract: Event cameras generate asynchronous and sparse event streams capturing changes in light intensity. They offer significant advantages over conventional frame-based cameras, such as a higher dynamic range and a much higher data rate, making them particularly useful in scenarios involving fast motion or challenging lighting conditions. Spiking neural networks (SNNs) share similar asynchronous and sparse characteristics and are well-suited for processing data from event cameras. Inspired by the potential of transformers and spike-driven transformers (spikeformers) in other computer vision tasks, we propose two solutions for fast and robust optical flow estimation for event cameras: STTFlowNet and SDformerFlow. STTFlowNet adopts a U-shaped artificial neural network (ANN) architecture with spatiotemporal shifted window self-attention (swin) transformer encoders, while SDformerFlow presents its fully spiking counterpart, incorporating swin spikeformer encoders. Furthermore, we present two variants of the spiking version with different neuron models. Our work is the first to make use of spikeformers for dense optical flow estimation. We conduct end-to-end training for all models using supervised learning. Our results yield state-of-the-art performance among SNN-based event optical flow methods on both the DSEC and MVSEC datasets, and show a significant reduction in power consumption compared to the equivalent ANNs.
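As a rough illustration of the sparse, event-like behaviour that spiking neurons share with event cameras, the sketch below simulates a single leaky integrate-and-fire (LIF) neuron. The abstract does not name the neuron models used in SDformerFlow, so the LIF dynamics, the function name `lif_simulate`, and the parameters `tau`, `v_threshold`, and `v_reset` are assumptions chosen for illustration only.

```python
def lif_simulate(inputs, tau=2.0, v_threshold=1.0, v_reset=0.0):
    """Simulate one leaky integrate-and-fire neuron over a sequence of inputs.

    Hypothetical helper for illustration; not taken from the paper.
    Returns a list of 0/1 spikes, one per input sample.
    """
    v = v_reset  # membrane potential
    spikes = []
    for x in inputs:
        # Leaky integration: the potential moves toward the input
        # with time constant tau.
        v = v + (x - (v - v_reset)) / tau
        if v >= v_threshold:
            spikes.append(1)  # emit a spike
            v = v_reset       # hard reset after firing
        else:
            spikes.append(0)  # stay silent
    return spikes


if __name__ == "__main__":
    # No input produces no spikes; a constant input produces periodic spikes.
    print(lif_simulate([0.0] * 4))
    print(lif_simulate([1.5] * 4))
```

The neuron only produces output when its potential crosses the threshold, so a silent input costs no spikes; this sparsity is the usual argument for the lower power consumption of SNNs on suitable hardware.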

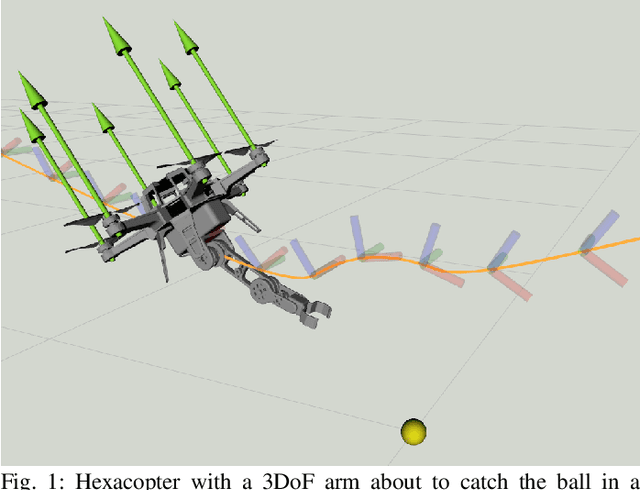

Borinot: an open thrust-torque-controlled robot for research on agile aerial-contact motion

Jul 27, 2023

Abstract: This paper introduces Borinot, an open-source aerial robotic platform designed to conduct research on hybrid agile locomotion and manipulation using flight and contacts. This platform features an agile and powerful hexarotor that can be outfitted with torque-actuated limbs of diverse architecture, allowing for whole-body dynamic control. As a result, Borinot can perform agile tasks such as aggressive or acrobatic maneuvers with the participation of the whole-body dynamics. The limbs attached to Borinot can be utilized in various ways; during contact, they can be used as legs to create contact-based locomotion, or as arms to manipulate objects. In free flight, they can be used as tails to contribute to dynamics, mimicking the movements of many animals. This allows for any hybridization of these dynamic modes, making Borinot an ideal open-source platform for research on hybrid aerial-contact agile motion. To demonstrate the key capabilities of Borinot in terms of agility with hybrid motion modes, we have fitted a planar 2DoF limb and implemented whole-body torque-level model predictive control. The result is a capable and adaptable platform that, we believe, opens up new avenues of research in the field of agile robotics. Documentation: www.iri.upc.edu/borinot. Video: https://youtu.be/Ob7IIVB6P_A.

WOLF: A modular estimation framework for robotics based on factor graphs

Oct 25, 2021

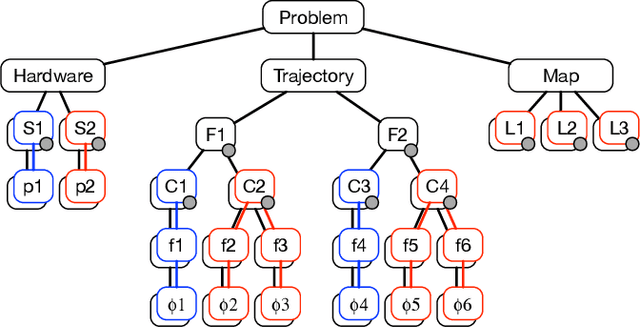

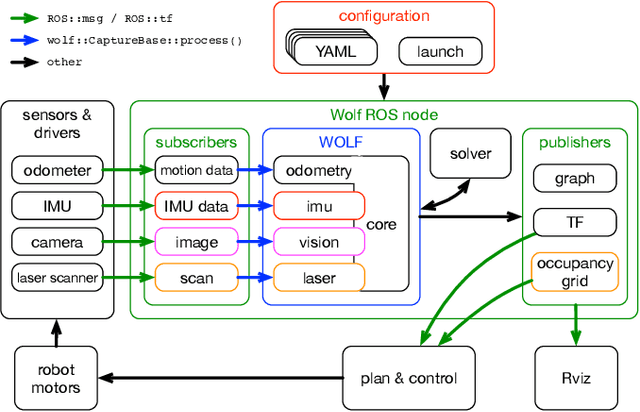

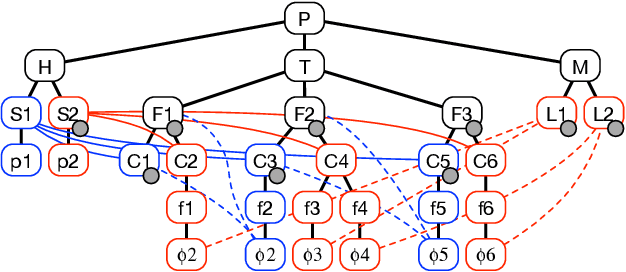

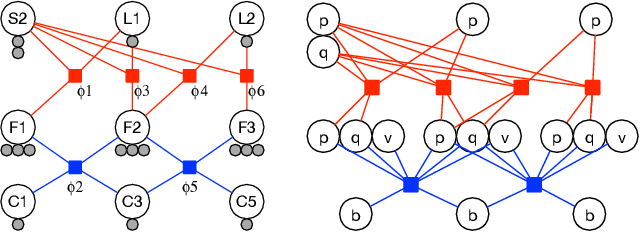

Abstract: This paper introduces WOLF, a C++ estimation framework based on factor graphs and targeted at mobile robotics. WOLF extends the applications of factor graphs from the typical problems of SLAM and odometry to a general estimation framework able to handle self-calibration, model identification, or the observation of dynamic quantities other than localization. WOLF produces high-throughput estimates at sensor rates up to the kHz range, which can be used for feedback control of highly dynamic robots such as humanoids, quadrupeds or aerial manipulators. Starting from the factor graph paradigm, the architecture of WOLF allows for a modular yet tightly-coupled estimator. Modularity is based on plugins that are loaded at runtime. Integration is then achieved simply through YAML files, allowing users to configure a wide range of applications without the need to write or compile code. Synchronization of incoming data and their processing into a unique factor graph is achieved through a decentralized strategy of frame creation and joining. Most algorithmic assets are coded as abstract algorithms in base classes with varying levels of specialization. Overall, these assets allow for coherent processing and favor code reusability and scalability. WOLF can be interfaced with different solvers, and we provide a wrapper to Google Ceres. Likewise, we offer ROS integration, providing a generic ROS node and specialized packages with subscribers and publishers. WOLF is made publicly available and open to collaboration.
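To make the factor graph idea concrete, here is a minimal sketch of a one-dimensional, linear factor graph solved by least squares. It does not use WOLF or Ceres: the function `solve_linear_factor_graph`, the factor encoding, and the toy measurements are all assumptions made for illustration. Each factor constrains a weighted sum of state variables to a measured value, and the estimate minimizes the sum of squared residuals.

```python
def solve_linear_factor_graph(num_vars, factors):
    """Least-squares solution of a linear factor graph (illustrative only).

    factors: list of (coeffs, z), where coeffs maps a variable index to its
    coefficient, and the factor residual is sum(coeff * x[index]) - z.
    """
    # Accumulate the normal equations H x = b, with H = J^T J and b = J^T z.
    H = [[0.0] * num_vars for _ in range(num_vars)]
    b = [0.0] * num_vars
    for coeffs, z in factors:
        for i, ci in coeffs.items():
            b[i] += ci * z
            for j, cj in coeffs.items():
                H[i][j] += ci * cj

    # Gaussian elimination with partial pivoting on the augmented matrix.
    A = [row[:] + [rhs] for row, rhs in zip(H, b)]
    n = num_vars
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(A[r][col]))
        if abs(A[pivot][col]) < 1e-12:
            raise ValueError("graph is under-constrained")
        A[col], A[pivot] = A[pivot], A[col]
        for r in range(col + 1, n):
            f = A[r][col] / A[col][col]
            for c in range(col, n + 1):
                A[r][c] -= f * A[col][c]

    # Back substitution.
    x = [0.0] * n
    for i in reversed(range(n)):
        s = A[i][n] - sum(A[i][j] * x[j] for j in range(i + 1, n))
        x[i] = s / A[i][i]
    return x


if __name__ == "__main__":
    factors = [
        ({0: 1.0}, 0.0),           # prior: x0 = 0
        ({0: -1.0, 1: 1.0}, 1.0),  # odometry: x1 - x0 = 1
        ({1: -1.0, 2: 1.0}, 1.0),  # odometry: x2 - x1 = 1
        ({2: 1.0}, 2.2),           # absolute measurement: x2 = 2.2
    ]
    print(solve_linear_factor_graph(3, factors))
```

In this toy problem the absolute measurement disagrees slightly with the accumulated odometry, and the solver spreads that discrepancy across all three variables. Real frameworks handle nonlinear factors, measurement covariances, and sparsity, which this sketch deliberately omits.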

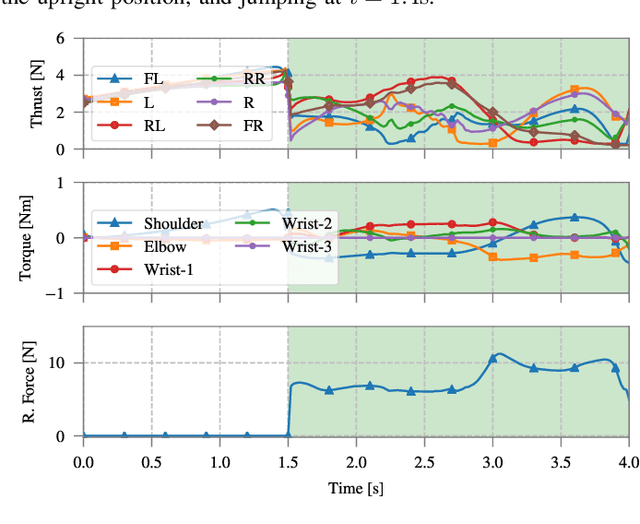

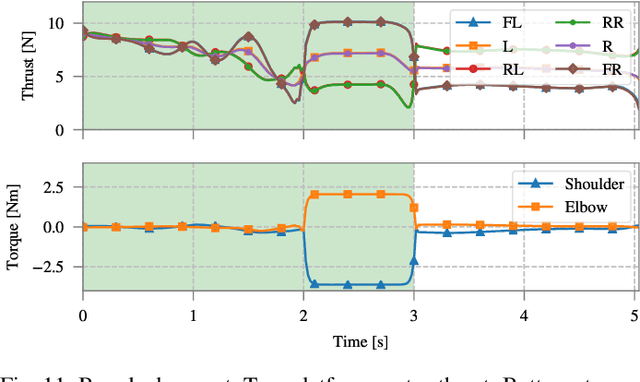

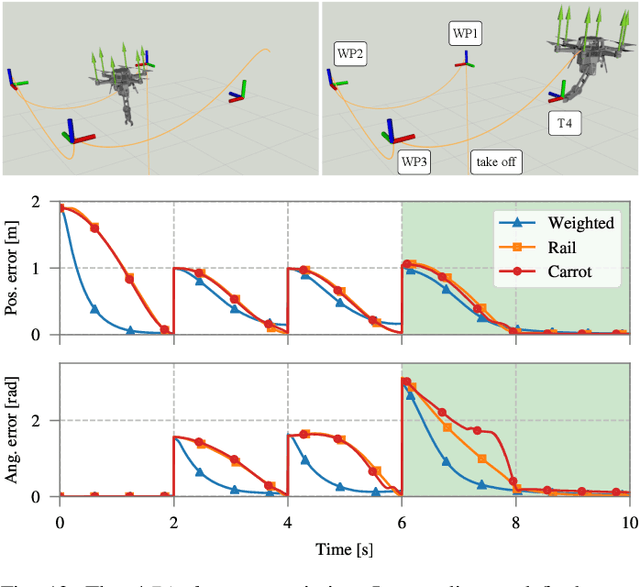

Full-Body Torque-Level Non-linear Model Predictive Control for Aerial Manipulation

Jul 08, 2021

Abstract: Non-linear model predictive control (nMPC) is a powerful approach to control complex robots (such as humanoids, quadrupeds, or unmanned aerial manipulators (UAMs)) as it brings important advantages over other existing techniques. The full-body dynamics, along with the prediction capability of the optimal control problem (OCP) solved at the core of the controller, allow the robot to be actuated in line with its dynamics. This enhances the robot's capabilities and allows it, e.g., to perform intricate maneuvers at high dynamics while optimizing the amount of energy used. Despite the many similarities between humanoids or quadrupeds and UAMs, full-body torque-level nMPC has rarely been applied to UAMs. This paper provides a thorough description of how to use such techniques in the field of aerial manipulation. We give a detailed explanation of the different parts involved in the OCP, from the UAM dynamical model to the residuals in the cost function. We develop and compare three different nMPC controllers: Weighted MPC, Rail MPC, and Carrot MPC, which differ in the structure of their OCPs and in how these are updated at every time step. To validate the proposed framework, we present a wide variety of simulated case studies. First, we evaluate the trajectory generation problem, i.e., optimal control problems solved offline, involving different kinds of motions (e.g., aggressive maneuvers or contact locomotion) for different types of UAMs. Then, we assess the performance of the three nMPC controllers, i.e., closed-loop controllers solved online, through a variety of realistic simulations. For the benefit of the community, we have made available the source code related to this work.

High Speed Event Camera TRacking

Oct 13, 2020

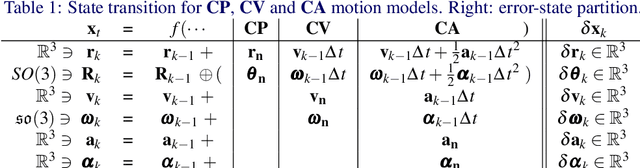

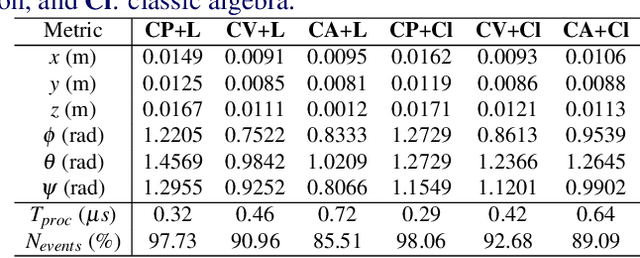

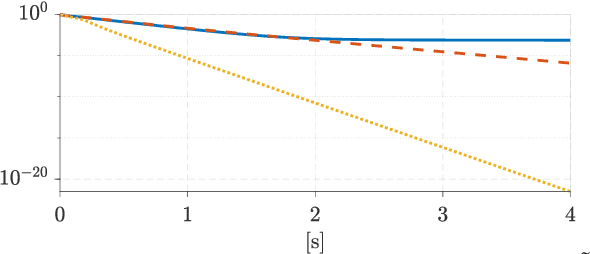

Abstract: Event cameras are bioinspired sensors with reaction times on the order of microseconds. This property makes them appealing for use in highly dynamic computer vision applications. In this work, we explore the limits of this sensing technology and present an ultra-fast tracking algorithm able to estimate six-degree-of-freedom motion with dynamics over 25.8 g, at a throughput of 10 kHz, processing over a million events per second. Our method is capable of tracking either camera motion or the motion of an object in front of it, using an error-state Kalman filter formulated in a Lie-theoretic sense. The method includes a robust mechanism for the matching of events with projected line segments with very fast outlier rejection. Meticulous treatment of sparse matrices is applied to achieve real-time performance. Different motion models of varying complexity are considered for the sake of comparison and performance analysis.

Multi-task closed-loop inverse kinematics stability through semidefinite programming

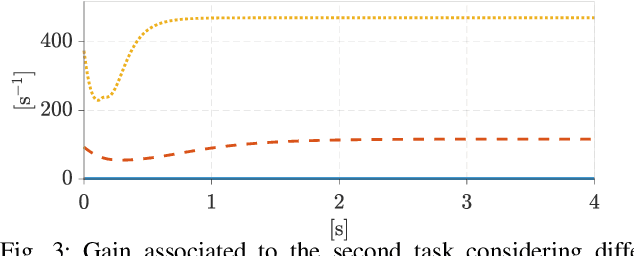

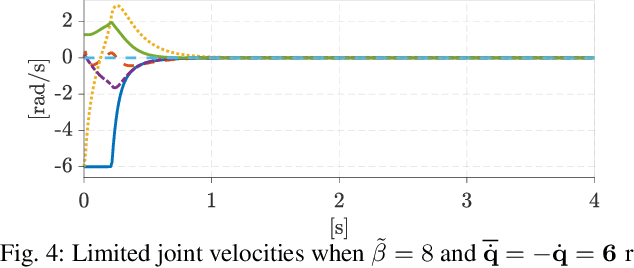

Apr 23, 2020

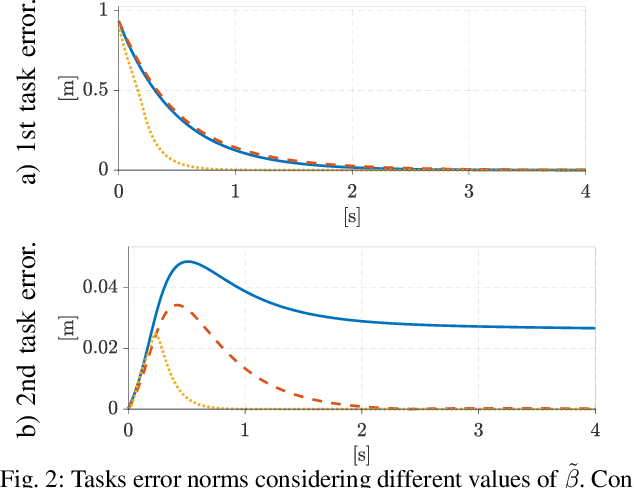

Abstract: Today's complex robotic designs comprise in some cases a large number of degrees of freedom, enabling multi-objective task resolution (e.g., humanoid robots or aerial manipulators). This paper tackles the stability problem of a hierarchical closed-loop inverse kinematics algorithm for such highly redundant robots. We present a method to guarantee system stability by performing an online tuning of the closed-loop control gains. We define a semidefinite programming (SDP) problem with these gains as decision variables and a discrete-time Lyapunov stability condition as a linear matrix inequality, constraining the SDP optimization problem and guaranteeing the stability of the prioritized tasks. To the best of the authors' knowledge, this work represents the first mathematical development of an SDP formulation that introduces stability conditions for a multi-objective closed-loop inverse kinematics problem for highly redundant robots. The validity of the proposed approach is demonstrated through simulation case studies, including didactic examples and a Matlab toolbox for the benefit of the community.

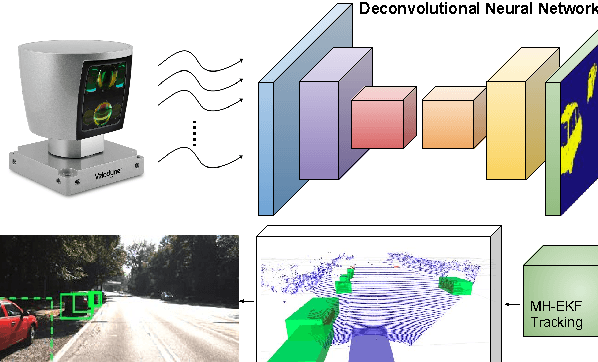

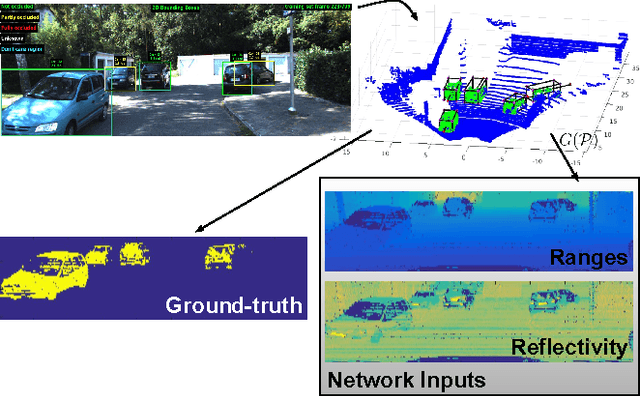

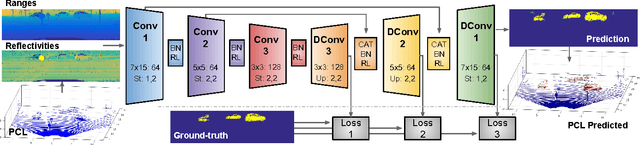

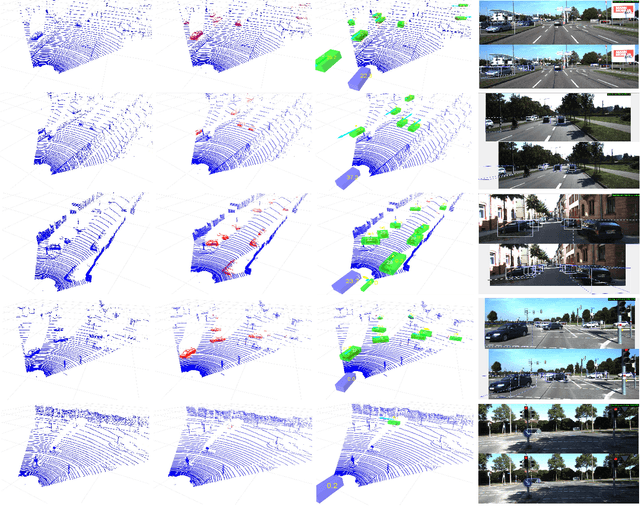

Deconvolutional Networks for Point-Cloud Vehicle Detection and Tracking in Driving Scenarios

Aug 23, 2018

Abstract: Vehicle detection and tracking is a core ingredient for developing autonomous driving applications in urban scenarios. Recent image-based Deep Learning (DL) techniques are obtaining breakthrough results in these perception tasks. However, DL research has not yet advanced much towards processing 3D point clouds from lidar range-finders. These sensors are very common in autonomous vehicles since, despite not providing as semantically rich information as images, their performance is more robust under harsh weather conditions than vision sensors. In this paper we present a full vehicle detection and tracking system that works with 3D lidar information only. Our detection step uses a Convolutional Neural Network (CNN) that receives as input a featured representation of the 3D information provided by a Velodyne HDL-64 sensor and returns a per-point classification of whether it belongs to a vehicle or not. The classified point cloud is then geometrically processed to generate observations for a multi-object tracking system implemented via a number of Multi-Hypothesis Extended Kalman Filters (MH-EKF) that estimate the position and velocity of the surrounding vehicles. The system is thoroughly evaluated on the KITTI tracking dataset, and we show the performance boost provided by our CNN-based vehicle detector over a standard geometric approach. Our lidar-based approach uses about 4% of the data needed for an image-based detector with similarly competitive results.
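The tracking stage estimates position and velocity from position-like observations. As a much simplified stand-in for the MH-EKF named in the abstract, the sketch below runs a single-hypothesis, linear Kalman filter with a one-dimensional constant-velocity model. The function names, the noise parameters `q_pos`, `q_vel`, and `r`, and the initial covariance are assumptions for illustration and are not taken from the paper.

```python
def kf_predict(state, cov, dt, q_pos=1e-4, q_vel=1e-4):
    """Constant-velocity prediction for state (position, velocity)."""
    p, v = state
    (p00, p01), (p10, p11) = cov
    # Covariance propagation F P F^T + Q with F = [[1, dt], [0, 1]].
    n00 = p00 + dt * (p01 + p10) + dt * dt * p11 + q_pos
    n01 = p01 + dt * p11
    n10 = p10 + dt * p11
    n11 = p11 + q_vel
    return (p + v * dt, v), ((n00, n01), (n10, n11))


def kf_update(state, cov, z, r=0.01):
    """Correct the state with a position measurement z (H = [1, 0])."""
    p, v = state
    (p00, p01), (p10, p11) = cov
    s = p00 + r                # innovation variance
    k0, k1 = p00 / s, p10 / s  # Kalman gain
    y = z - p                  # innovation
    new_state = (p + k0 * y, v + k1 * y)
    new_cov = (((1 - k0) * p00, (1 - k0) * p01),
               (p10 - k1 * p00, p11 - k1 * p01))
    return new_state, new_cov


def track(measurements, dt):
    """Run the filter over position measurements taken every dt seconds."""
    state = (0.0, 0.0)                       # unknown start: zero guess
    cov = ((100.0, 0.0), (0.0, 100.0))       # large initial uncertainty
    for z in measurements:
        state, cov = kf_predict(state, cov, dt)
        state, cov = kf_update(state, cov, z)
    return state


if __name__ == "__main__":
    dt = 0.1
    # Noise-free positions of a target moving at 2 m/s.
    zs = [2.0 * dt * k for k in range(1, 101)]
    print(track(zs, dt))
```

Although only position is observed, the velocity estimate is recovered through the cross-covariance built up in the prediction step. A multi-hypothesis EKF would additionally maintain several competing filters per target and linearize a nonlinear motion or measurement model.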
