Joseph O. Deasy

Tumor aware recurrent inter-patient deformable image registration of computed tomography scans with lung cancer

Sep 18, 2024

Abstract: Background: Voxel-based analysis (VBA) for population-level radiotherapy (RT) outcomes modeling requires topology-preserving inter-patient deformable image registration (DIR) that preserves tumors on moving images while avoiding unrealistic deformations due to tumors occurring on fixed images. Purpose: We developed a tumor-aware recurrent registration (TRACER) deep learning (DL) method and evaluated its suitability for VBA. Methods: TRACER consists of encoder layers implemented with a stacked 3D convolutional long short-term memory network (3D-CLSTM), followed by decoder and spatial transform layers that compute a dense deformation vector field (DVF). Multiple CLSTM steps are used to compute a progressive sequence of deformations. Input conditioning was applied by including tumor segmentations with the 3D image pairs as input channels. Bidirectional tumor rigidity, image similarity, and deformation smoothness losses were used to optimize the network in an unsupervised manner. TRACER and multiple DL methods were trained with 204 3D CT image pairs from patients with lung cancer (LC) and evaluated using (a) Dataset I (N = 308 pairs) with DL-segmented LCs, (b) Dataset II (N = 765 pairs) with manually delineated LCs, and (c) Dataset III with 42 LC patients treated with RT. Results: TRACER accurately aligned normal tissues. It best preserved tumors, as indicated by the smallest tumor volume differences (0.24%, 0.40%, and 0.13%) and mean squared errors in CT intensities (0.005, 0.005, and 0.004), computed between original and resampled moving image tumors, for Datasets I, II, and III, respectively. It also produced the smallest planned RT tumor dose difference between original and resampled moving images: 0.01 Gy and 0.013 Gy when using a female and a male reference, respectively.
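To make the three unsupervised loss terms concrete, here is a minimal NumPy sketch of how similarity, smoothness, and tumor rigidity penalties could be combined. It is not TRACER's implementation: the function name `tracer_style_loss`, the weights `lam_smooth` and `lam_rigid`, the use of mean squared error for similarity, and the "zero displacement gradient inside the tumor" form of rigidity (which tolerates translation but not rotation) are all assumptions, and only one tumor mask is used rather than the bidirectional version described above. The warped image is taken as an input, so no spatial transformer is included.

```python
import numpy as np


def tracer_style_loss(warped, fixed, dvf, tumor_mask, lam_smooth=1.0, lam_rigid=10.0):
    """Hypothetical unsupervised DIR loss: similarity + smoothness + tumor rigidity.

    warped, fixed : (D, H, W) arrays; warped is the moving image resampled by dvf
    dvf           : (3, D, H, W) displacement field in voxels
    tumor_mask    : (D, H, W) boolean tumor segmentation
    """
    # Image similarity: mean squared intensity difference (assumed metric).
    similarity = np.mean((warped - fixed) ** 2)
    # Squared spatial derivatives of every displacement component, summed per voxel.
    grad_sq = sum(g ** 2 for comp in dvf for g in np.gradient(comp))
    # Smoothness: penalize spatially varying displacements everywhere.
    smooth = grad_sq.mean()
    # Simplified rigidity: inside the tumor only a constant shift is free of cost.
    rigid = grad_sq[tumor_mask].mean() if tumor_mask.any() else 0.0
    return float(similarity + lam_smooth * smooth + lam_rigid * rigid)
```

A uniform translation gives zero smoothness and rigidity penalties, while any stretching or shearing of the tumor region is penalized more heavily than elsewhere because `lam_rigid` exceeds `lam_smooth`.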

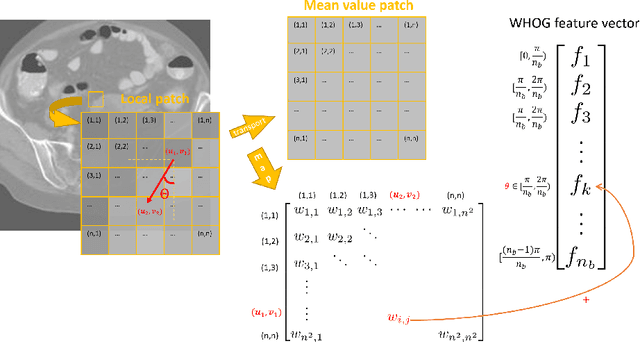

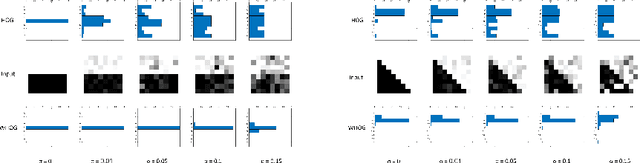

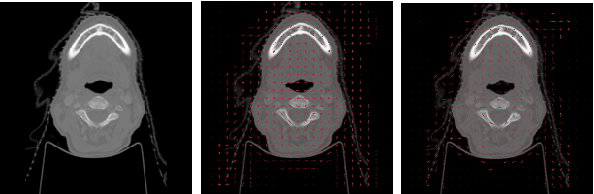

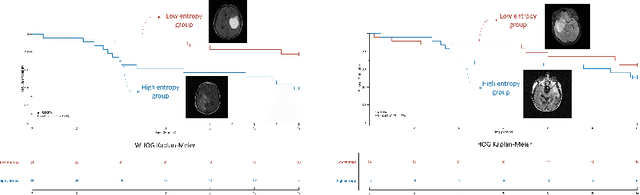

Wasserstein Image Local Analysis: Histogram of Orientations, Smoothing and Edge Detection

May 11, 2022

Abstract: The histogram of oriented gradients (HOG) is a widely used image feature that describes local image directionality based on numerical differentiation. Because numerical differentiation is ill-posed, small amounts of noise may lead to large errors, so conventional HOG may fail to produce meaningful directionality results in the presence of noise, which is common in medical radiographic imaging. We approach the directionality problem from a novel perspective: we use the optimal transport map from a local image patch to a uni-color patch of its mean, and decompose the transport map into sub-work costs in different directions. We evaluated the ability of optimal transport to quantify tumor heterogeneity from brain MRI images of patients with glioblastoma multiforme from The Cancer Imaging Archive (TCIA). By considering the entropy difference of the extracted local directionality within tumor regions, we found that patients whose images had higher entropy had significantly worse overall survival (p $=0.008$). This indicates that tumors exhibiting flows in many directions may be more malignant, perhaps reflecting high histologic grade, i.e., histologic disorganization. We also explored solving classical image processing problems such as smoothing and edge detection via optimal transport. By finding the 2-color patch with minimum transport distance to a local patch, we derive a nonlinear shock filter that preserves edges. Moreover, we found that the color difference of the computed 2-color patch indicates whether there is a large change in color, i.e., an edge, in the given patch. In summary, we expand the usefulness of optimal transport as a local image analysis tool, both to extract robust measures of imaging tumor heterogeneity for outcomes prediction and for image pre-processing. Because of its robustness, we find it offers several advantages over classical approaches.
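The directional decomposition can be sketched on a tiny patch: treat normalized intensities as mass, transport them to the uni-color patch of the mean, then accumulate the work of each pixel pair into orientation bins and take the entropy. This is an illustrative sketch, not the authors' method: it swaps in entropy-regularized (Sinkhorn) transport instead of the exact optimal transport map, and the names `sinkhorn_plan`, `directional_work`, `directional_entropy`, and the parameters `eps`, `n_iter`, and `n_bins` are hypothetical choices.

```python
import numpy as np


def sinkhorn_plan(a, b, cost, eps=0.2, n_iter=2000):
    """Entropy-regularized transport plan between mass vectors a and b (Sinkhorn)."""
    K = np.exp(-cost / eps)
    u = np.ones_like(a)
    v = np.ones_like(b)
    for _ in range(n_iter):
        u = a / (K @ v)
        v = b / (K.T @ u)
    return u[:, None] * K * v[None, :]


def directional_work(patch, n_bins=4, eps=0.2):
    """Transport work from a patch to its uni-color mean patch, binned by orientation."""
    h, w = patch.shape
    rows, cols = np.mgrid[0:h, 0:w]
    coords = np.stack([rows.ravel(), cols.ravel()], axis=1).astype(float)
    a = patch.ravel().astype(float)
    a = a / a.sum()                              # patch intensities as a mass distribution
    b = np.full_like(a, 1.0 / a.size)            # uni-color patch of the mean, same total mass
    disp = coords[None, :, :] - coords[:, None, :]   # disp[i, j] = x_j - x_i
    cost = (disp ** 2).sum(axis=2)                   # squared Euclidean ground cost
    work = sinkhorn_plan(a, b, cost, eps) * cost     # work done by each pixel pair
    # Orientation folded into [0, pi); bin 0 is centered on the horizontal direction.
    theta = np.mod(np.arctan2(disp[..., 0], disp[..., 1]), np.pi)
    width = np.pi / n_bins
    bins = np.floor((theta + width / 2) / width).astype(int) % n_bins
    return np.bincount(bins.ravel(), weights=work.ravel(), minlength=n_bins)


def directional_entropy(hist):
    """Shannon entropy (nats) of the normalized directional work histogram."""
    p = hist / hist.sum()
    p = p[p > 0]
    return float(-(p * np.log(p)).sum())
```

A patch with a horizontal intensity ramp moves mass almost purely horizontally (low entropy), while a bright central spot spreads mass in every direction (higher entropy), which mirrors the intuition that multidirectional flow signals disorganization.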

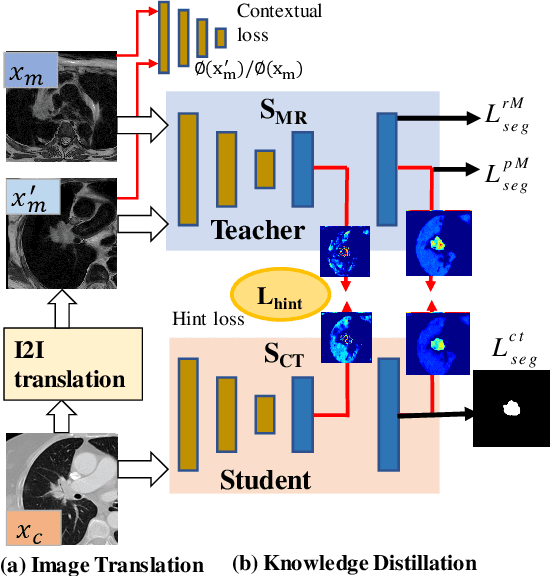

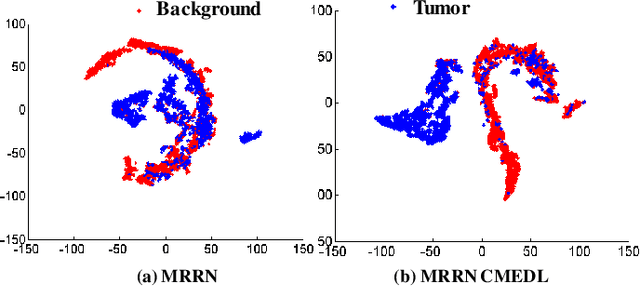

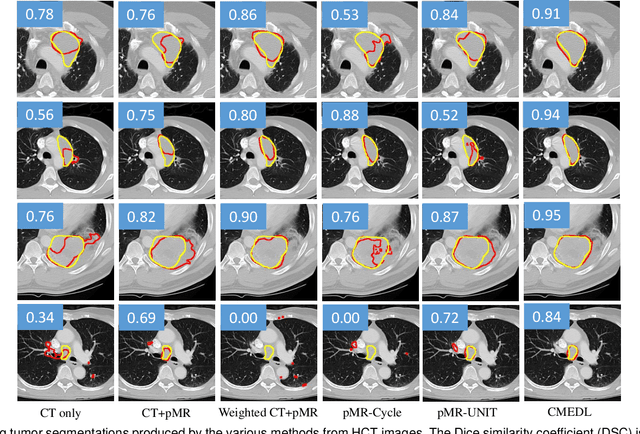

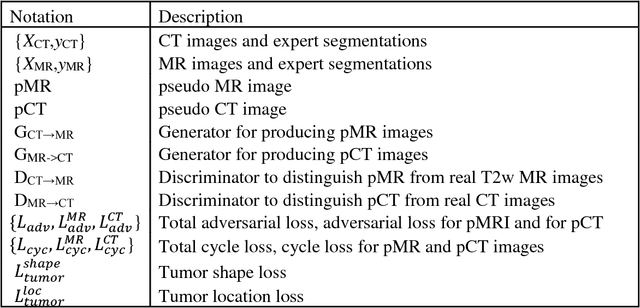

Unpaired cross-modality educed distillation (CMEDL) applied to CT lung tumor segmentation

Jul 16, 2021

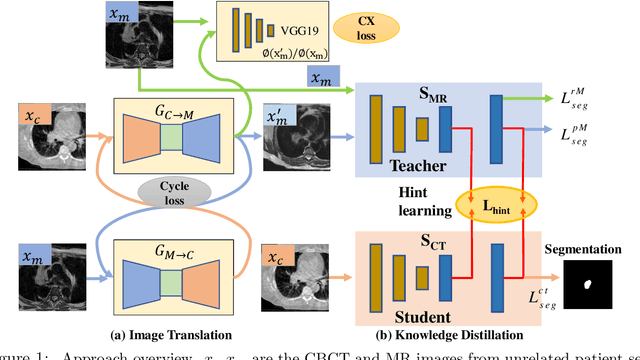

Abstract: Accurate and robust segmentation of lung cancers from CT is needed to more accurately plan and deliver radiotherapy and to measure treatment response. This is particularly difficult for tumors located close to the mediastinum, due to low soft-tissue contrast. Therefore, we developed a new cross-modality educed distillation (CMEDL) approach, using unpaired CT and MRI scans, whereby a teacher MRI network guides a student CT network to extract features that signal the difference between foreground and background. Our contribution eliminates two requirements of distillation methods: (i) paired image sets, by using image-to-image (I2I) translation, and (ii) pre-training of the teacher network with a large training set, by concurrently training all networks. Our framework uses end-to-end trained unpaired I2I translation, teacher, and student segmentation networks, and can be combined with any I2I and segmentation network. We demonstrate our framework's feasibility using 3 segmentation and 2 I2I methods. All networks were trained with 377 CT and 82 T2w MRI scans from different sets of patients. Ablation tests and different strategies for incorporating MRI information into CT were performed. Accuracy was measured using the Dice similarity coefficient (DSC), surface Dice (sDSC), and the 95th percentile Hausdorff distance (HD95). The CMEDL approach was significantly ($p < 0.001$) more accurate than non-CMEDL methods, quantitatively and visually. It produced the highest segmentation accuracy (sDSC of 0.83 $\pm$ 0.16 and HD95 of 5.20 $\pm$ 6.86 mm). CMEDL was also more accurate than using either pseudo MRI (pMRI) alone or the combination of CT with pMRI for segmentation.
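Two of the reported metrics can be sketched for 2D binary masks as follows. This is an illustrative sketch only: the helper names `dice`, `boundary`, and `hd95` are hypothetical, boundaries use 4-connectivity, distances are brute-force, and HD95 is taken as the 95th percentile of pooled boundary-to-boundary distances in both directions, which is one of several definitions in use. Surface Dice is not shown.

```python
import numpy as np


def dice(a, b):
    """Dice similarity coefficient between two boolean masks."""
    a, b = a.astype(bool), b.astype(bool)
    denom = a.sum() + b.sum()
    return 1.0 if denom == 0 else 2.0 * np.logical_and(a, b).sum() / denom


def boundary(mask):
    """Mask pixels that have at least one 4-neighbor outside the mask."""
    m = np.pad(mask.astype(bool), 1, constant_values=False)
    interior = (m[1:-1, 1:-1] & m[:-2, 1:-1] & m[2:, 1:-1]
                & m[1:-1, :-2] & m[1:-1, 2:])
    return mask.astype(bool) & ~interior


def hd95(a, b, spacing=(1.0, 1.0)):
    """95th percentile of pooled boundary-to-boundary distances (one common HD95 variant)."""
    pa = np.argwhere(boundary(a)) * np.asarray(spacing)
    pb = np.argwhere(boundary(b)) * np.asarray(spacing)
    if len(pa) == 0 or len(pb) == 0:
        return float("inf")
    d = np.sqrt(((pa[:, None, :] - pb[None, :, :]) ** 2).sum(axis=2))
    pooled = np.concatenate([d.min(axis=1), d.min(axis=0)])  # A->B and B->A distances
    return float(np.percentile(pooled, 95))
```

For example, two 4x4 squares offset by two pixels overlap in half their area, giving a Dice of 0.5 and an HD95 of 2 pixels; `spacing` converts pixel distances to millimeters.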

Deep cross-modality (MR-CT) educed distillation learning for cone beam CT lung tumor segmentation

Feb 26, 2021

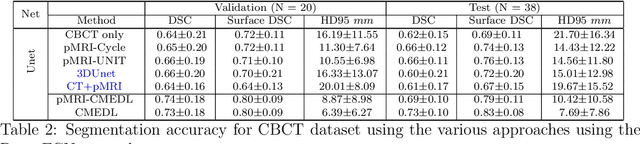

Abstract: Despite the widespread availability of in-treatment-room cone beam computed tomography (CBCT) imaging, CBCT is only used for gross setup corrections in lung radiotherapy because reliable segmentation methods are lacking. Accurate and reliable auto-segmentation tools could enable volumetric response assessment and geometry-guided adaptive radiation therapy. Therefore, we developed a new deep learning CBCT lung tumor segmentation method. Methods: The key idea of our approach, called cross-modality educed distillation (CMEDL), is to use magnetic resonance imaging (MRI) to guide a CBCT segmentation network to extract more informative features during training. We accomplish this by training an end-to-end network composed of unpaired domain adaptation (UDA) and cross-domain segmentation distillation networks (SDN), using unpaired CBCT and MRI datasets. Feature distillation regularizes the student network to extract CBCT features that match the statistical distribution of the MRI features extracted by the teacher network, yielding better differentiation of tumor from background. We also compared against an alternative framework that used UDA with an MR segmentation network, whereby segmentation was done on the synthesized pseudo MRI representation. All networks were trained with 216 weekly CBCTs and 82 T2-weighted turbo spin echo MRI scans acquired from different patient cohorts. Validation was done on 20 weekly CBCTs from patients not used in training. Independent testing was done on 38 weekly CBCTs from patients used in neither training nor validation. Segmentation accuracy was measured using the surface Dice similarity coefficient (SDSC) and the 95th percentile Hausdorff distance (HD95).
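One simple way to express "match the statistical distribution of the teacher's features" is to match per-channel first and second moments. The sketch below assumes that form; the actual CMEDL distillation loss may differ, and `moment_matching_distillation`, `student_total_loss`, and the weight `lam` are hypothetical names. NumPy has no automatic differentiation, so the teacher being a fixed target during the student update is only noted in the docstring.

```python
import numpy as np


def moment_matching_distillation(student, teacher):
    """Hypothetical feature-distillation loss: match per-channel mean and std.

    student, teacher : (C, N) feature maps (C channels, N flattened voxels).
    In training, the teacher would act as a fixed target for the student update.
    """
    mu_s, mu_t = student.mean(axis=1), teacher.mean(axis=1)
    sd_s, sd_t = student.std(axis=1), teacher.std(axis=1)
    return float(np.mean((mu_s - mu_t) ** 2) + np.mean((sd_s - sd_t) ** 2))


def student_total_loss(seg_loss, student, teacher, lam=0.5):
    """Student objective: its own segmentation loss plus weighted distillation."""
    return seg_loss + lam * moment_matching_distillation(student, teacher)
```

Matching moments rather than individual voxels fits the unpaired setting: CBCT and MRI come from different patients, so there is no voxel-to-voxel correspondence to enforce.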

Nested-block self-attention for robust radiotherapy planning segmentation

Feb 26, 2021

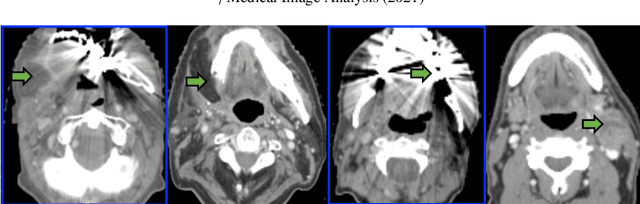

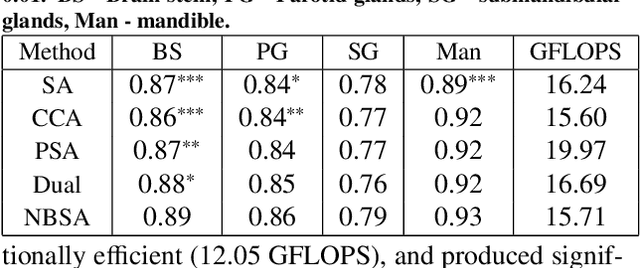

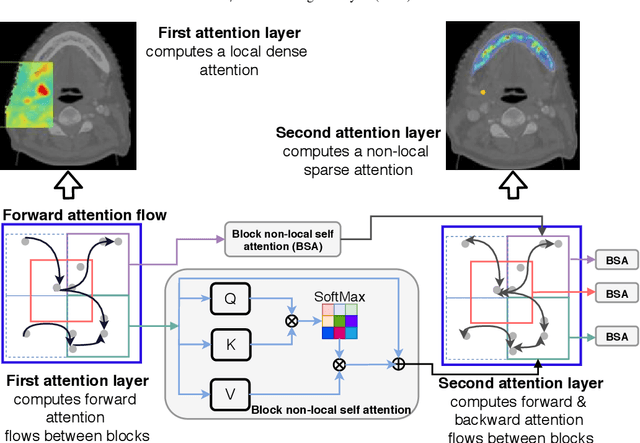

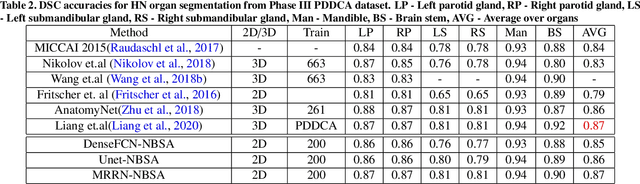

Abstract: Although deep convolutional networks have been widely studied for head and neck (HN) organs at risk (OAR) segmentation, their use for routine clinical treatment planning is limited by a lack of robustness to imaging artifacts, low soft-tissue contrast on CT, and the presence of abnormal anatomy. To address these challenges, we developed a computationally efficient nested block self-attention (NBSA) method that can be combined with any convolutional network. Our method achieves computational efficiency by performing non-local calculations within memory blocks of fixed spatial extent. Contextual dependencies are captured by passing information between blocks in raster-scan order, as well as through a second attention layer that produces bi-directional attention flow. We implemented our approach on three different networks to demonstrate feasibility. After training on 200 cases, we performed comprehensive evaluations using conventional and clinical metrics on a separate set of 172 test scans sourced from external and internal institutional datasets, without any exclusion criteria. NBSA required a similar number of computations (15.7 GFLOPs) as the most efficient method, criss-cross attention (CCA), and generated significantly more accurate segmentations than CCA for the brain stem (Dice of 0.89 vs. 0.86) and parotid glands (0.86 vs. 0.84). NBSA's segmentations were less variable than those of multiple 3D methods, including for small organs with low soft-tissue contrast such as the submandibular glands (surface Dice of 0.90).
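The efficiency idea of restricting non-local attention to fixed-size memory blocks can be sketched in a few lines. This covers only the within-block step: the raster-scan passing of information between blocks and the second attention layer are not implemented, queries, keys, and values are the raw features with no learned projections, and `block_self_attention` is a hypothetical name.

```python
import numpy as np


def block_self_attention(x, block):
    """Self-attention restricted to fixed-size, non-overlapping spatial blocks.

    x     : (H, W, C) feature map; H and W must be divisible by `block`
    block : side length of each square memory block
    Queries, keys, and values are the raw features (no learned projections).
    """
    h, w, c = x.shape
    if h % block or w % block:
        raise ValueError("H and W must be divisible by block")
    out = np.empty_like(x, dtype=float)
    for i in range(0, h, block):
        for j in range(0, w, block):
            tokens = x[i:i + block, j:j + block].reshape(-1, c)
            scores = tokens @ tokens.T / np.sqrt(c)        # pairwise similarities in the block
            scores -= scores.max(axis=1, keepdims=True)    # numerically stable softmax
            attn = np.exp(scores)
            attn /= attn.sum(axis=1, keepdims=True)
            out[i:i + block, j:j + block] = (attn @ tokens).reshape(block, block, c)
    return out
```

For an H x W map with blocks of side b, this computes H·W·b² attention scores instead of (H·W)² for full non-local attention; an 8x8 map with b = 4 needs 1,024 scores rather than 4,096.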

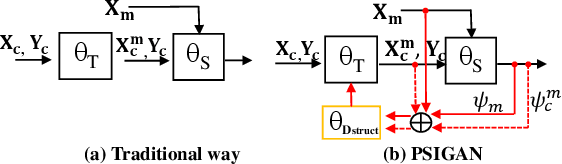

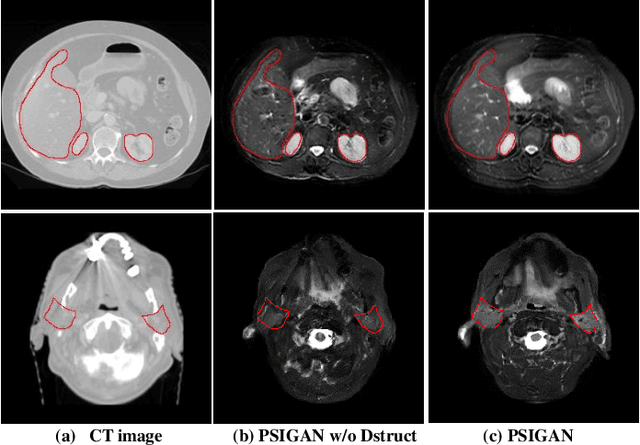

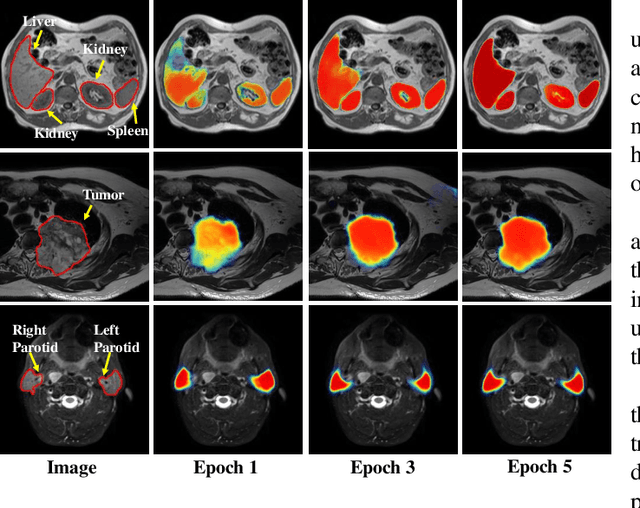

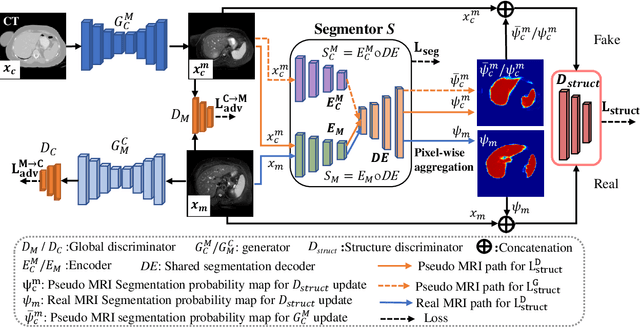

PSIGAN: Joint probabilistic segmentation and image distribution matching for unpaired cross-modality adaptation based MRI segmentation

Jul 18, 2020

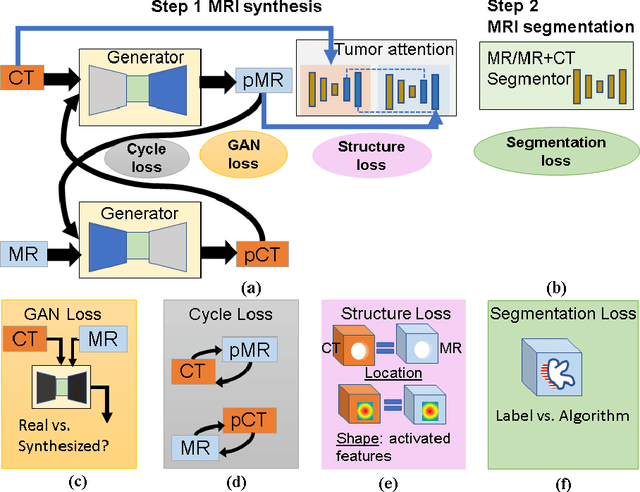

Abstract: We developed a new joint probabilistic segmentation and image distribution matching generative adversarial network (PSIGAN) for unsupervised domain adaptation (UDA) and multi-organ segmentation from magnetic resonance imaging (MRI). Our UDA approach models the co-dependency between images and their segmentations as a joint probability distribution using a new structure discriminator. The structure discriminator computes a structure-of-interest-focused adversarial loss by combining the generated pseudo MRI with probabilistic segmentations produced by a simultaneously trained segmentation sub-network. The segmentation sub-network is trained using the pseudo MRI produced by the generator sub-network. This leads to a cyclical optimization of the generator and segmentation sub-networks, which are jointly trained as part of an end-to-end network. Extensive experiments and comparisons against multiple state-of-the-art methods were done on four different MRI sequences totalling 257 scans for generating multi-organ and tumor segmentation. The experiments included (a) 20 T1-weighted (T1w) in-phase mDixon and (b) 20 T2-weighted (T2w) abdominal MRI for segmenting the liver, spleen, and left and right kidneys, (c) 162 T2-weighted fat-suppressed head and neck MRI (T2wFS) for parotid gland segmentation, and (d) 75 T2w MRI for lung tumor segmentation. Our method achieved an overall average Dice similarity coefficient (DSC) of 0.87 on T1w and 0.90 on T2w for the abdominal organs, 0.82 on T2wFS for the parotid glands, and 0.77 on T2w MRI for lung tumors.

* This paper has been accepted by IEEE Transactions on Medical Imaging
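A structure discriminator needs an input that couples the pseudo MRI with the segmentation probabilities. The sketch below assumes an element-wise product of the image with each class probability map, so each channel emphasizes one structure; this is one plausible combination, not necessarily the one used in PSIGAN, and `structure_discriminator_input` is a hypothetical name.

```python
import numpy as np


def softmax(logits, axis=0):
    """Numerically stable softmax along `axis`."""
    z = logits - logits.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)


def structure_discriminator_input(pseudo_mri, seg_logits):
    """Hypothetical structure-discriminator input: image weighted by each class probability.

    pseudo_mri : (H, W) generated pseudo MRI
    seg_logits : (K, H, W) raw outputs of the segmentation sub-network
    returns    : (K, H, W) one channel per structure, background included
    """
    probs = softmax(seg_logits, axis=0)
    return probs * pseudo_mri[None, :, :]
```

Because the class probabilities sum to one at every pixel, the channels sum back to the original image; the discriminator therefore sees the same intensities, only partitioned by predicted structure, which is what lets image and segmentation errors be penalized jointly.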

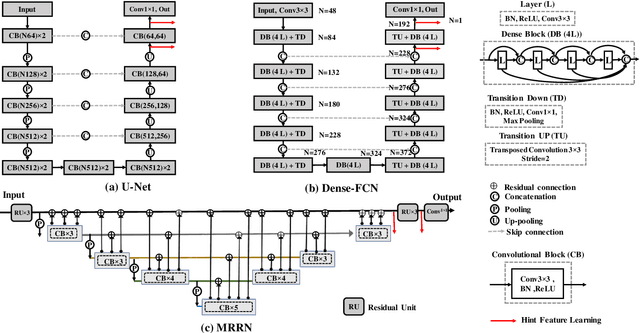

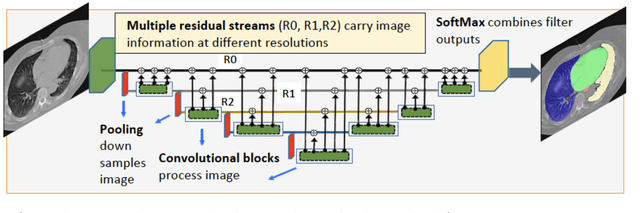

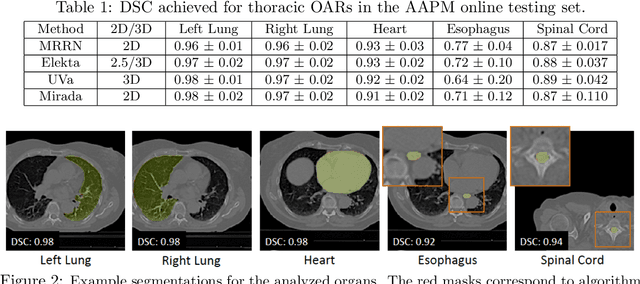

Multiple resolution residual network for automatic thoracic organs-at-risk segmentation from CT

May 31, 2020

Abstract:We implemented and evaluated a multiple resolution residual network (MRRN) for multiple normal organs-at-risk (OAR) segmentation from computed tomography (CT) images for thoracic radiotherapy treatment (RT) planning. Our approach simultaneously combines feature streams computed at multiple image resolutions and feature levels through residual connections. The feature streams at each level are updated as the images are passed through various feature levels. We trained our approach using 206 thoracic CT scans of lung cancer patients with 35 scans held out for validation to segment the left and right lungs, heart, esophagus, and spinal cord. This approach was tested on 60 CT scans from the open-source AAPM Thoracic Auto-Segmentation Challenge dataset. Performance was measured using the Dice Similarity Coefficient (DSC). Our approach outperformed the best-performing method in the grand challenge for hard-to-segment structures like the esophagus and achieved comparable results for all other structures. Median DSC using our method was 0.97 (interquartile range [IQR]: 0.97-0.98) for the left and right lungs, 0.93 (IQR: 0.93-0.95) for the heart, 0.78 (IQR: 0.76-0.80) for the esophagus, and 0.88 (IQR: 0.86-0.89) for the spinal cord.
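The combination of streams at multiple resolutions through residual connections can be sketched as one update step: pool the full-resolution residual stream down to the current level, fuse it with that level's features, and add the upsampled result back to the stream. This is a loose illustration under stated assumptions: average pooling, nearest-neighbor upsampling, and the `transform` callable (standing in for learned convolutions) are placeholders, and `residual_stream_update` is a hypothetical name.

```python
import numpy as np


def avg_pool(x, f):
    """Average-pool an (H, W, C) map by an integer factor f (H and W divisible by f)."""
    h, w, c = x.shape
    return x.reshape(h // f, f, w // f, f, c).mean(axis=(1, 3))


def upsample(x, f):
    """Nearest-neighbor upsampling of an (H, W, C) map by an integer factor f."""
    return x.repeat(f, axis=0).repeat(f, axis=1)


def residual_stream_update(stream, feature, f, transform):
    """Fuse a full-resolution residual stream with a lower-resolution feature map.

    stream    : (H, W, C) full-resolution residual stream
    feature   : (H/f, W/f, C) feature map at the current (coarser) level
    transform : placeholder for learned layers mapping (H/f, W/f, 2C) -> (H/f, W/f, C)
    Returns the updated stream and the updated coarse feature map.
    """
    pooled = avg_pool(stream, f)                                   # stream at this level
    fused = transform(np.concatenate([pooled, feature], axis=-1))  # mix both streams
    return stream + upsample(fused, f), fused                      # residual update
```

A usage example with a random channel-mixing matrix as `transform`: `residual_stream_update(stream, feat, 2, lambda z: np.maximum(z @ W, 0.0))`, where `W` has shape (2C, C). If `transform` returns zeros, the stream passes through unchanged, which is the identity behavior that residual connections are meant to preserve.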

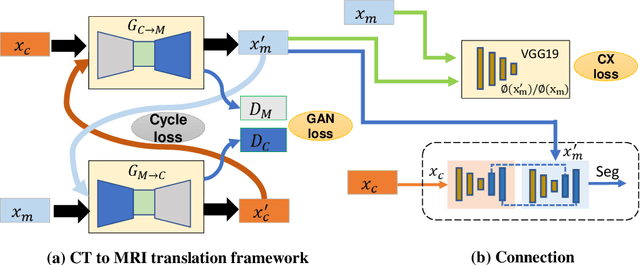

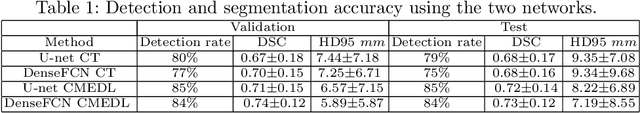

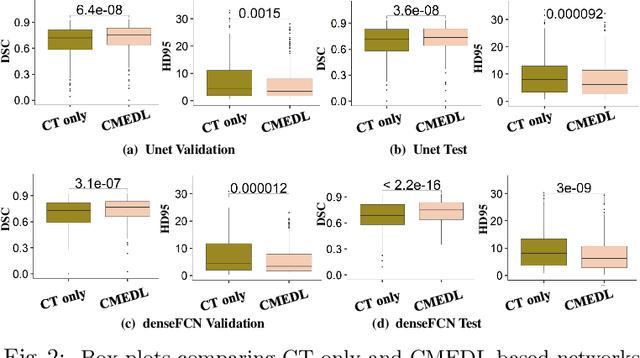

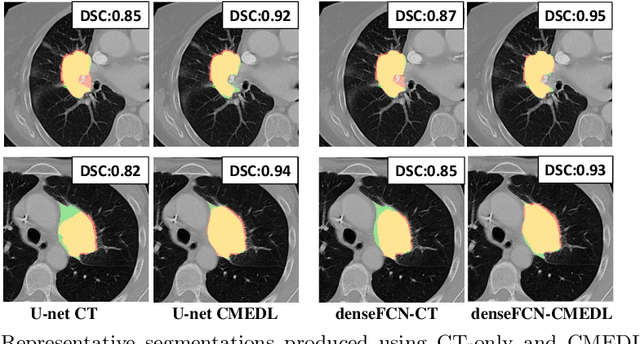

Integrating cross-modality hallucinated MRI with CT to aid mediastinal lung tumor segmentation

Sep 10, 2019

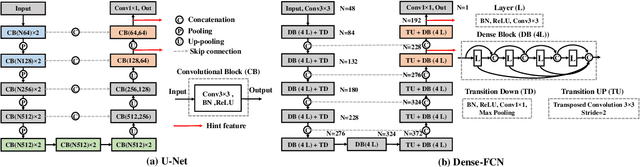

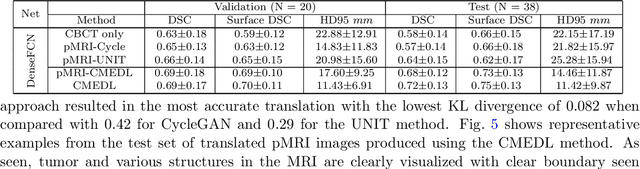

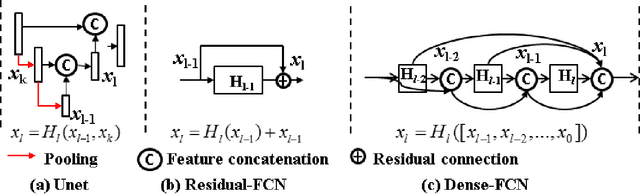

Abstract: Lung tumors, especially those located close to or surrounded by soft tissues like the mediastinum, are difficult to segment due to the low soft-tissue contrast of computed tomography (CT) images. Magnetic resonance (MR) images contain superior soft-tissue contrast information that can be leveraged if both modalities are available for training. Therefore, we developed a cross-modality educed learning approach in which MR information educed from CT is used to hallucinate MRI and improve CT segmentation. Our approach, called cross-modality educed deep learning segmentation (CMEDL), combines CT and pseudo MR produced from CT by aligning their features to obtain segmentation on CT. Features computed in the last two layers of CT and MR segmentation networks trained in parallel are aligned. We implemented this approach on U-net and dense fully convolutional networks (dense-FCN). Our networks were trained on unrelated cohorts of open-source CT images from The Cancer Imaging Archive (N=377) and internal-archive T2-weighted MR images (N=81), and evaluated using separate validation (N=304) and testing (N=333) sets of CT-delineated tumors. With both networks, our approach was significantly more accurate (U-net $P <0.001$; denseFCN $P <0.001$) than CT-only networks and achieved an accuracy (Dice similarity coefficient) of 0.71$\pm$0.15 (U-net) and 0.74$\pm$0.12 (denseFCN) on validation and 0.72$\pm$0.14 (U-net) and 0.73$\pm$0.12 (denseFCN) on the testing sets. Our novel approach demonstrated that educing cross-modality information through learned priors enhances CT segmentation performance.
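Aligning the features of the last two layers of the CT and pseudo-MR networks, alongside each network's own segmentation loss, can be sketched as a joint objective. The per-layer mean squared difference, the soft Dice loss, and the names `soft_dice_loss`, `feature_alignment_loss`, `cmedl_style_loss`, `weights`, and `lam` are assumptions for illustration; the paper's exact loss terms may differ.

```python
import numpy as np


def soft_dice_loss(prob, target, eps=1e-6):
    """1 - soft Dice between a foreground probability map and a binary target."""
    inter = np.sum(prob * target)
    return 1.0 - (2.0 * inter + eps) / (np.sum(prob) + np.sum(target) + eps)


def feature_alignment_loss(ct_feats, mr_feats, weights=(1.0, 1.0)):
    """Weighted mean squared difference between paired CT and MR features, per layer."""
    return sum(w * np.mean((c - m) ** 2) for w, c, m in zip(weights, ct_feats, mr_feats))


def cmedl_style_loss(ct_prob, mr_prob, target, ct_feats, mr_feats, lam=1.0):
    """Hypothetical joint objective: both segmentation losses plus feature alignment.

    ct_feats, mr_feats : lists holding the last two layers' feature maps of each network
    """
    return (soft_dice_loss(ct_prob, target) + soft_dice_loss(mr_prob, target)
            + lam * feature_alignment_loss(ct_feats, mr_feats))
```

Because the pseudo MR is produced from the same CT scan, the two networks see spatially corresponding inputs, so a voxel-wise feature difference is meaningful here in a way it would not be for unpaired scans.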

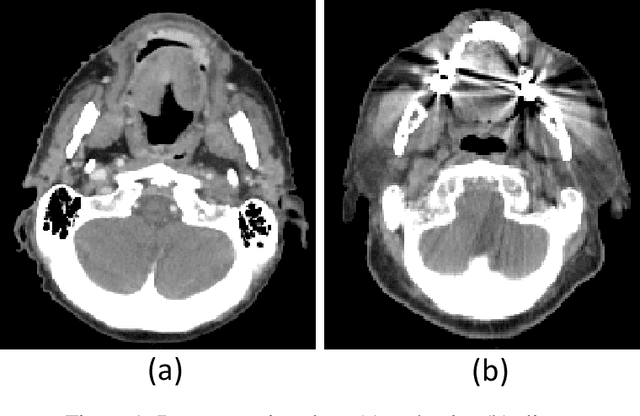

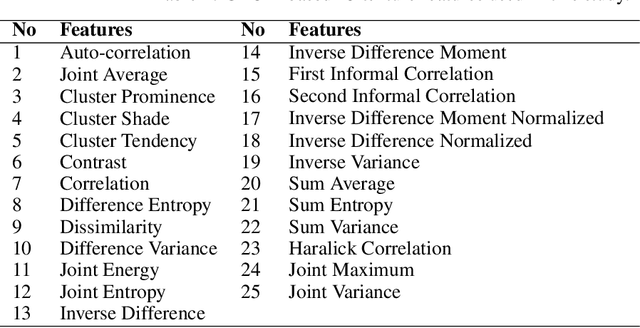

Kernel Wasserstein Distance

May 22, 2019

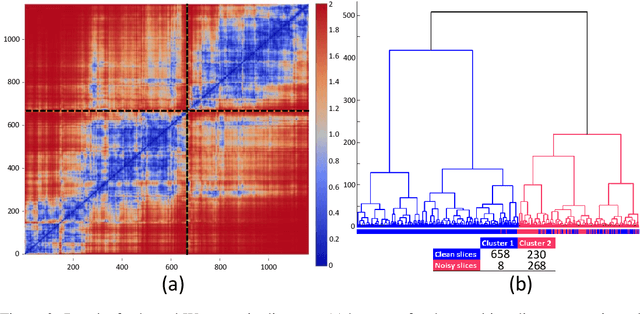

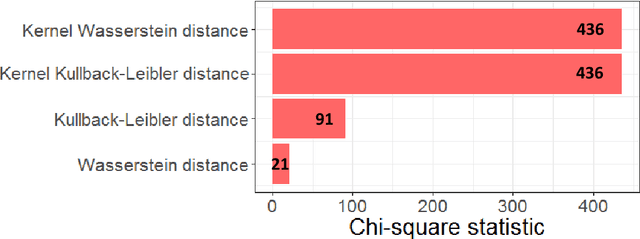

Abstract: The Wasserstein distance is a powerful metric based on the theory of optimal transport. It gives a natural measure of the distance between two distributions, with a wide range of applications. Unlike a number of common divergences on distributions, such as Kullback-Leibler or Jensen-Shannon, it is (weakly) continuous, and thus ideal for analyzing corrupted data. To date, however, no kernel methods for dealing with nonlinear data have been proposed via the Wasserstein distance. In this work, we develop a novel method to compute the L2-Wasserstein distance in a kernel space, implemented using the kernel trick, a general machine learning technique for handling data in a nonlinear manner. We evaluate the proposed approach in identifying computed tomography (CT) slices with dental artifacts in head and neck cancer, performing unsupervised hierarchical clustering on the resulting Wasserstein distance matrix, which is computed on imaging texture features extracted from each CT slice. Our experiments show that the kernel approach outperforms classical non-kernel approaches in identifying CT slices with artifacts.
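A minimal sketch of an L2-Wasserstein distance computed through the kernel trick, assuming each sample set is summarized by a Gaussian (mean and covariance) in the kernel feature space; this modeling choice, the RBF bandwidth `gamma`, and the names `kernel_w2`, `rbf_kernel`, and `linear_kernel` are assumptions, and the paper's formulation may differ. The Gaussian closed form is

$$W_2^2 = \lVert \mu_X - \mu_Y \rVert^2 + \operatorname{tr} S_X + \operatorname{tr} S_Y - 2\operatorname{tr}\big(S_Y^{1/2} S_X S_Y^{1/2}\big)^{1/2},$$

and every term can be written with Gram matrices: with centering matrices $H_X, H_Y$, the cross term equals the nuclear norm of $H_X K_{XY} H_Y / \sqrt{nm}$.

```python
import numpy as np


def linear_kernel(X, Y):
    return X @ Y.T


def rbf_kernel(X, Y, gamma=1.0):
    sq = (X ** 2).sum(1)[:, None] + (Y ** 2).sum(1)[None, :] - 2.0 * X @ Y.T
    return np.exp(-gamma * np.maximum(sq, 0.0))


def kernel_w2(X, Y, kernel=rbf_kernel):
    """W2 between Gaussian summaries of X (n, d) and Y (m, d) in kernel feature space."""
    n, m = len(X), len(Y)
    Kxx, Kyy, Kxy = kernel(X, X), kernel(Y, Y), kernel(X, Y)
    mean_term = Kxx.mean() + Kyy.mean() - 2.0 * Kxy.mean()   # ||mu_X - mu_Y||^2
    tr_sx = np.trace(Kxx) / n - Kxx.mean()                    # tr S_X (biased covariance)
    tr_sy = np.trace(Kyy) / m - Kyy.mean()                    # tr S_Y
    Hx = np.eye(n) - np.full((n, n), 1.0 / n)                 # centering matrices
    Hy = np.eye(m) - np.full((m, m), 1.0 / m)
    G = Hx @ Kxy @ Hy / np.sqrt(n * m)
    cross = np.linalg.norm(G, "nuc")                          # tr (S_Y^1/2 S_X S_Y^1/2)^1/2
    return float(np.sqrt(max(mean_term + tr_sx + tr_sy - 2.0 * cross, 0.0)))
```

With `linear_kernel`, the result reduces to the ordinary closed-form W2 between the two sample Gaussians in input space, which is a useful sanity check; a nonlinear kernel such as `rbf_kernel` compares the sets in a richer feature space. The resulting pairwise distance matrix could then feed a hierarchical clustering routine.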

Cross-modality (CT-MRI) prior augmented deep learning for robust lung tumor segmentation from small MR datasets

Feb 27, 2019

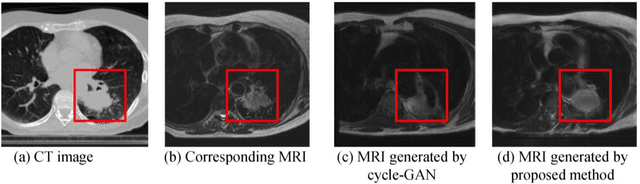

Abstract: The lack of large expert-annotated MR datasets makes training deep learning models difficult. Therefore, a cross-modality (MR-CT) deep learning segmentation approach was developed that augments training data using pseudo MR images produced by transforming expert-segmented CT images. Eighty-one T2-weighted MRI scans from 28 patients with non-small cell lung cancers were analyzed. A cross-modality prior encoding the transformation of CT to pseudo MR images resembling T2w MRI was learned as a generative adversarial deep learning model. This model augmented training data arising from 6 expert-segmented T2w MR patient scans with 377 pseudo MRI from non-small cell lung cancer CT patient scans obtained from The Cancer Imaging Archive. A two-dimensional Unet implemented with batch normalization was trained to segment the tumors from T2w MRI. This method was benchmarked against (a) standard data augmentation and two state-of-the-art cross-modality pseudo MR-based augmentation methods and (b) two segmentation networks. Segmentation accuracy was computed using the Dice similarity coefficient (DSC), Hausdorff distance metrics, and volume ratio. The proposed approach produced the smallest statistical difference between the intensity distributions of pseudo and T2w MR images, with a Kullback-Leibler divergence of 0.069. This method produced the highest segmentation accuracy, with a DSC of 0.75, and the lowest Hausdorff distance on the test dataset. It also produced tumor growth estimates highly similar to those of an expert (P = 0.37). A novel deep learning MR segmentation method was developed that overcomes the limitation of learning robust models from small datasets by leveraging learned cross-modality priors to augment training. The results show the feasibility of the approach and its improvement over state-of-the-art methods.
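The Kullback-Leibler comparison of intensity distributions can be sketched by histogramming both images on shared bins. The bin count, the intensity range (which assumes intensities normalized to [0, 1]), the smoothing constant `eps` that avoids empty-bin divisions, and the name `intensity_kl` are assumptions; the direction of the divergence (pseudo relative to real, or the reverse) is not stated above and is chosen arbitrarily here.

```python
import numpy as np


def intensity_kl(p_samples, q_samples, bins=64, value_range=(0.0, 1.0), eps=1e-10):
    """KL(P || Q) between intensity histograms of two images on shared bins."""
    p, _ = np.histogram(p_samples, bins=bins, range=value_range)
    q, _ = np.histogram(q_samples, bins=bins, range=value_range)
    p = p.astype(float) + eps            # smooth empty bins to keep the log finite
    q = q.astype(float) + eps
    p /= p.sum()
    q /= q.sum()
    return float(np.sum(p * np.log(p / q)))
```

Because the value depends on binning and normalization, divergences like the 0.069 reported above are only comparable across methods evaluated with the same histogram settings.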
