Joseph Lemley

Contactless Cardiac Pulse Monitoring Using Event Cameras

May 14, 2025

Abstract:Time event cameras are a novel technology for recording scene information at extremely low latency and with low power consumption. Event cameras output a stream of events that encapsulate pixel-level light intensity changes within the scene, capturing information with a higher dynamic range and temporal resolution than traditional cameras. This study investigates the contact-free reconstruction of an individual's cardiac pulse signal from time event recording of their face using a supervised convolutional neural network (CNN) model. An end-to-end model is trained to extract the cardiac signal from a two-dimensional representation of the event stream, with model performance evaluated based on the accuracy of the calculated heart rate. The experimental results confirm that physiological cardiac information in the facial region is effectively preserved within the event stream, showcasing the potential of this novel sensor for remote heart rate monitoring. The model trained on event frames achieves a root mean square error (RMSE) of 3.32 beats per minute (bpm) compared to the RMSE of 2.92 bpm achieved by the baseline model trained on standard camera frames. Furthermore, models trained on event frames generated at 60 and 120 FPS outperformed the 30 FPS standard camera results, achieving an RMSE of 2.54 and 2.13 bpm, respectively.
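
The abstract does not say how the two-dimensional representation is built. One common choice, shown here only as a minimal sketch, is to bin events into fixed-duration windows (1/FPS seconds) and sum polarities per pixel. The `(timestamp, x, y, polarity)` tuple layout and the function name `events_to_frames` are assumptions for illustration, not the paper's format.

```python
from typing import List, Tuple

# Assumed event layout: (timestamp in seconds, x, y, polarity in {-1, +1}).
Event = Tuple[float, int, int, int]


def events_to_frames(events: List[Event], width: int, height: int,
                     fps: float) -> List[List[List[int]]]:
    """Bin time-sorted events into 1/fps windows, summing polarity per pixel."""
    if not events:
        return []
    window = 1.0 / fps
    t0 = events[0][0]
    # Number of windows needed to cover the last event.
    n_frames = int((events[-1][0] - t0) / window) + 1
    frames = [[[0] * width for _ in range(height)] for _ in range(n_frames)]
    for t, x, y, polarity in events:
        frames[int((t - t0) / window)][y][x] += polarity
    return frames


# Three events on a 2x2 sensor, framed at 60 FPS.
demo = [(0.0, 0, 0, 1), (0.005, 0, 0, 1), (0.02, 1, 1, -1)]
frames = events_to_frames(demo, width=2, height=2, fps=60)
```

Raising `fps` shortens each window, which is how a single event recording can yield 60 or 120 FPS frame sequences.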

Autobiasing Event Cameras

Nov 01, 2024

Abstract:This paper presents an autonomous method to address challenges arising from severe lighting conditions in machine vision applications that use event cameras. To manage these conditions, the research explores the built-in capability of these cameras to adjust pixel functionality through parameters known as bias settings. As cars are driven at various times and locations, shifts in lighting conditions are unavoidable. Consequently, this paper uses the neuromorphic YOLO-based face tracking module of a driver monitoring system as the event-based application to study. The proposed method uses numerical metrics to continuously monitor the performance of the event-based application in real time. When the application malfunctions, the system detects this through a drop in the metrics and automatically adjusts the event camera's bias values. The Nelder-Mead simplex algorithm is employed to optimize this adjustment, with fine-tuning continuing until performance returns to a satisfactory level. The advantage of bias optimization lies in its ability to handle conditions such as flickering or darkness without requiring additional hardware or software. To demonstrate the capabilities of the proposed system, it was tested under conditions where detecting human faces with default bias values was impossible. These severe conditions were simulated using dim ambient light and various flickering frequencies. Following the automatic and dynamic process of bias modification, the metrics for face detection significantly improved under all conditions. Autobiasing resulted in an increase in the YOLO confidence indicators by more than 33 percent for object detection and 37 percent for face detection, highlighting the effectiveness of the proposed method.
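
To illustrate the optimization step, the following is a simplified, pure-Python Nelder-Mead sketch (reflection, expansion, inside contraction, shrink). It is not the authors' implementation: `simulated_cost` is a hypothetical stand-in for a negated detection metric measured at a given pair of bias values, and the two-parameter bias vector is an assumption.

```python
from typing import Callable, List, Sequence


def nelder_mead(cost: Callable[[Sequence[float]], float], x0: Sequence[float],
                step: float = 1.0, max_iter: int = 500,
                tol: float = 1e-8) -> List[float]:
    """Minimize `cost` with a simplified Nelder-Mead simplex search."""
    n = len(x0)
    # Initial simplex: the start point plus one offset vertex per dimension.
    simplex = [list(x0)]
    for i in range(n):
        vertex = list(x0)
        vertex[i] += step
        simplex.append(vertex)

    for _ in range(max_iter):
        simplex.sort(key=cost)
        best, worst = simplex[0], simplex[-1]
        if abs(cost(worst) - cost(best)) < tol:
            break
        # Centroid of every vertex except the worst one.
        centroid = [sum(v[i] for v in simplex[:-1]) / n for i in range(n)]

        def along(t: float) -> List[float]:
            # Point on the line from the worst vertex through the centroid.
            return [c + t * (c - w) for c, w in zip(centroid, worst)]

        reflected = along(1.0)
        if cost(reflected) < cost(best):
            expanded = along(2.0)
            simplex[-1] = expanded if cost(expanded) < cost(reflected) else reflected
        elif cost(reflected) < cost(simplex[-2]):
            simplex[-1] = reflected
        else:
            contracted = along(-0.5)
            if cost(contracted) < cost(worst):
                simplex[-1] = contracted
            else:
                # Shrink every vertex halfway toward the best one.
                simplex = [best] + [
                    [b + 0.5 * (x - b) for b, x in zip(best, v)]
                    for v in simplex[1:]
                ]
    return min(simplex, key=cost)


def simulated_cost(biases: Sequence[float]) -> float:
    """Hypothetical cost surface; lowest at bias values (3.0, -1.0)."""
    a, b = biases
    return (a - 3.0) ** 2 + (b + 1.0) ** 2


best_biases = nelder_mead(simulated_cost, [0.0, 0.0])
```

In a real system each cost evaluation would mean applying the bias values and measuring the application metric, so a practical version would cache evaluations rather than recompute them during sorting.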

Evaluating Image-Based Face and Eye Tracking with Event Cameras

Aug 19, 2024

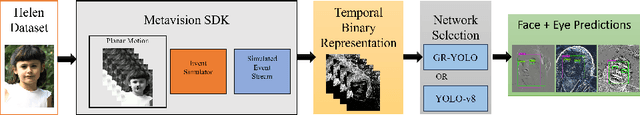

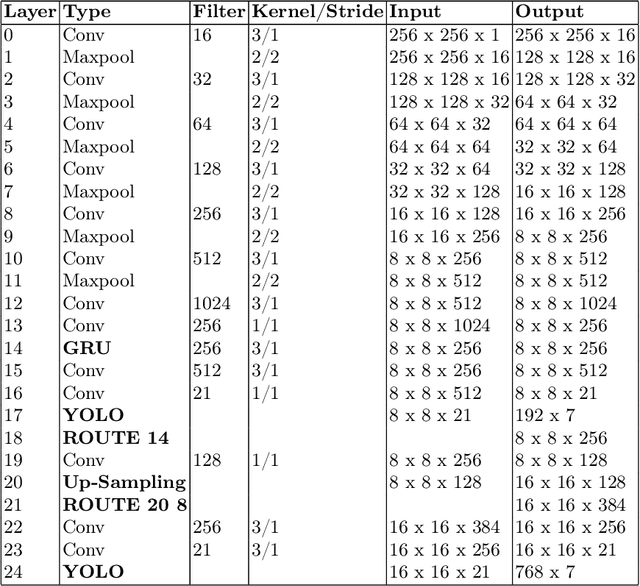

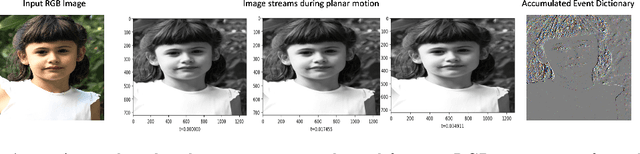

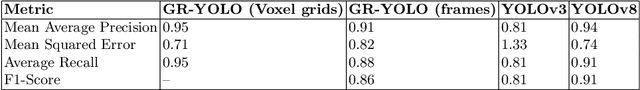

Abstract:Event cameras, also known as neuromorphic sensors, capture changes in local light intensity at the pixel level, producing asynchronously generated data termed "events". This distinct data format mitigates common issues observed in conventional cameras, such as under-sampling when capturing fast-moving objects, thereby preserving critical information that might otherwise be lost. However, leveraging this data often necessitates the development of specialized, handcrafted event representations that can integrate seamlessly with conventional Convolutional Neural Networks (CNNs), considering the unique attributes of event data. In this study, we evaluate event-based face and eye tracking. The core objective of our study is to showcase the viability of integrating conventional algorithms with event-based data, transformed into a frame format while preserving the unique benefits of event cameras. To validate our approach, we constructed a frame-based event dataset by simulating events between RGB frames derived from the publicly accessible Helen Dataset. We assess its utility for face and eye detection tasks through the application of GR-YOLO, a pioneering technique derived from YOLOv3. This evaluation includes a comparative analysis with results derived from training on the dataset with YOLOv8. Subsequently, the trained models were tested on real event streams from various iterations of Prophesee's event cameras and further evaluated on the Faces in Event Stream (FES) benchmark dataset. The models trained on our dataset show good prediction performance across all the datasets obtained for validation, with a best mean Average Precision score of 0.91. Additionally, the trained models demonstrated robust performance on real event camera data under varying light conditions.
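
The abstract does not name the simulator used. A minimal sketch of the usual idea behind simulating events between two frames is given below: a pixel emits an event when its log-intensity change exceeds a contrast threshold. The function name, the threshold of 0.2, and the `eps` offset are assumptions, and real simulators also interpolate event timestamps between frames.

```python
import math
from typing import List, Tuple


def simulate_events(prev_frame: List[List[float]], next_frame: List[List[float]],
                    threshold: float = 0.2,
                    eps: float = 1.0) -> List[Tuple[int, int, int]]:
    """Return (x, y, polarity) for pixels whose log intensity changed enough."""
    events = []
    for y, (row0, row1) in enumerate(zip(prev_frame, next_frame)):
        for x, (i0, i1) in enumerate(zip(row0, row1)):
            # eps avoids log(0) for black pixels.
            delta = math.log(i1 + eps) - math.log(i0 + eps)
            if abs(delta) >= threshold:
                events.append((x, y, 1 if delta > 0 else -1))
    return events


# One pixel brightens, one darkens, two stay the same.
before = [[100, 100], [100, 100]]
after = [[100, 200], [50, 100]]
demo_events = simulate_events(before, after)
```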

Spiking-DD: Neuromorphic Event Camera based Driver Distraction Detection with Spiking Neural Network

Jul 30, 2024

Abstract:Event camera-based driver monitoring is emerging as a pivotal area of research, driven by its significant advantages such as rapid response, low latency, power efficiency, enhanced privacy, and prevention of undersampling. Effective detection of driver distraction is crucial in driver monitoring systems to enhance road safety and reduce accident rates. The integration of an optimized sensor such as an event camera with an optimized network is essential for maximizing these benefits. This paper introduces the innovative concept of sensing without seeing to detect driver distraction, leveraging computationally efficient spiking neural networks (SNNs). To the best of our knowledge, this study is the first to utilize event camera data with spiking neural networks for driver distraction detection. The proposed Spiking-DD network not only achieves state-of-the-art performance but also has fewer parameters and provides greater accuracy than current event-based methodologies.

Synthetic Face Ageing: Evaluation, Analysis and Facilitation of Age-Robust Facial Recognition Algorithms

Jun 10, 2024

Abstract:The ability to accurately recognize an individual's face with respect to the human ageing factor holds significant importance for various private and government sectors, such as customs and public security bureaus, passport offices, and national database systems. Therefore, developing a robust age-invariant face recognition system is of crucial importance to address the challenges posed by ageing and maintain the reliability and accuracy of facial recognition technology. In this research work, the focus is to explore the feasibility of utilizing synthetic ageing data to improve the robustness of face recognition models that can eventually help in recognizing people at broader age intervals. To achieve this, we first design a set of experiments to evaluate state-of-the-art synthetic ageing methods. In the next stage, we explore the effect of age intervals on a current deep learning-based face recognition algorithm by using synthetic ageing data as well as real ageing data to perform rigorous training and validation. Moreover, these synthetic age data have been used in facilitating face recognition algorithms. Experimental results show that the recognition rate of the model trained on synthetic ageing images is 3.33% higher than that of the baseline model when tested on images with an age gap of 40 years, which demonstrates and quantifies the potential of synthetic age data to enhance the performance of age-invariant face recognition systems.

Non-Contact NIR PPG Sensing through Large Sequence Signal Regression

Nov 20, 2023

Abstract:Non-contact sensing is an emerging technology with applications across many industries, from driver monitoring in vehicles to patient monitoring in healthcare. Current state-of-the-art implementations focus on RGB video, but this struggles in varying or noisy light conditions and is almost completely unfeasible in the dark. Near Infra-Red (NIR) video, however, does not suffer from these constraints. This paper aims to demonstrate the effectiveness of an alternative Convolution Attention Network (CAN) architecture to regress a photoplethysmography (PPG) signal from a sequence of NIR frames. A combination of two publicly available datasets, which is split into train and test sets, is used for training the CAN. This combined dataset is augmented to reduce overfitting to the 'normal' 60-80 bpm heart rate range by providing the full range of heart rates along with corresponding videos for each subject. This CAN, when implemented over video cropped to the subject's head, achieved a Mean Absolute Error (MAE) of just 0.99 bpm, proving its effectiveness on NIR video and the architecture's feasibility to regress an accurate signal output.

* 4 pages, 3 figures, 3 tables, Irish Machine Vision and Image Processing Conference 2023

Non-Contact Breathing Rate Detection Using Optical Flow

Nov 13, 2023

Abstract:Breathing rate is a vital health metric that is an invaluable indicator of the overall health of a person. In recent years, the non-contact measurement of health signals such as breathing rate has been a huge area of development, with a wide range of applications from telemedicine to driver monitoring systems. This paper presents an investigation into a method of non-contact breathing rate detection using a motion detection algorithm, optical flow. Optical flow is used to successfully measure breathing rate by tracking the motion of specific points on the body. In this study, the success of optical flow when using different sets of points is evaluated. Testing shows that both chest and facial movement can be used to determine breathing rate but to different degrees of success. The chest generates very accurate signals, with an RMSE of 0.63 on the tested videos. Facial points can also generate reliable signals when there is minimal head movement but are much more vulnerable to noise caused by head/body movements. These findings highlight the potential of optical flow as a non-invasive method for breathing rate detection and emphasize the importance of selecting appropriate points to optimize accuracy.
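
The abstract does not state how a rate is derived from the tracked point motion. As a rough sketch under that caveat, one simple estimator takes the vertical displacement of a tracked point over time (assumed here to come from an optical flow tracker), removes its mean, and counts upward zero crossings as breaths. The function name and the synthetic test signal are illustrative assumptions.

```python
import math
from typing import List


def breathing_rate_bpm(displacement: List[float], fps: float) -> float:
    """Estimate breaths per minute from upward zero crossings of the signal."""
    mean = sum(displacement) / len(displacement)
    centered = [d - mean for d in displacement]
    # Each negative-to-non-negative transition marks the start of one cycle.
    breaths = sum(1 for a, b in zip(centered, centered[1:]) if a < 0 <= b)
    duration_min = len(displacement) / fps / 60.0
    return breaths / duration_min


# Synthetic chest motion: 0.25 Hz (15 breaths per minute), 60 s at 30 FPS.
fps = 30.0
signal = [math.sin(2 * math.pi * 0.25 * (i / fps) + 1.0) for i in range(1800)]
rate = breathing_rate_bpm(signal, fps)
```

Zero-crossing counts are sensitive to noise, which is consistent with the abstract's observation that facial points degrade under head movement; a practical version would band-pass filter the signal first.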

A Comparative Study of Image-to-Image Translation Using GANs for Synthetic Child Race Data

Aug 08, 2023

Abstract:The lack of ethnic diversity in data has been a limiting factor of face recognition techniques in the literature. This is particularly the case for children, where data samples are scarce, which presents a challenge when seeking to adapt machine vision algorithms trained on adult data to work on children. This work proposes the use of image-to-image transformation to synthesize data of different races and thus adjust the ethnicity of children's face data. We consider ethnicity as a style and compare three image-to-image neural network-based methods, specifically pix2pix, CycleGAN, and CUT networks, to implement conversion between Caucasian child data and Asian child data. Experimental validation results on synthetic data demonstrate the feasibility of using image-to-image transformation methods to generate various synthetic child data samples with broader ethnic diversity.

Will your Doorbell Camera still recognize you as you grow old

Aug 08, 2023

Abstract:Robust authentication for low-power consumer devices such as doorbell cameras poses a valuable and unique challenge. This work explores the effect of age and aging on the performance of facial authentication methods. Two public age datasets, AgeDB and Morph-II have been used as baselines in this work. A photo-realistic age transformation method has been employed to augment a set of high-quality facial images with various age effects. Then the effect of these synthetic aging data on the high-performance deep-learning-based face recognition model is quantified by using various metrics including Receiver Operating Characteristic (ROC) curves and match score distributions. Experimental results demonstrate that long-term age effects are still a significant challenge for the state-of-the-art facial authentication method.

Dataset Creation Pipeline for Camera-Based Heart Rate Estimation

Mar 02, 2023

Abstract:Heart rate is one of the most vital health metrics which can be utilized to investigate and gain intuitions into various human physiological and psychological information. Estimating heart rate without the constraints of contact-based sensors thus presents itself as a very attractive field of research as it enables well-being monitoring in a wider variety of scenarios. Consequently, various techniques for camera-based heart rate estimation have been developed ranging from classical image processing to convoluted deep learning models and architectures. At the heart of such research efforts lies health and visual data acquisition, cleaning, transformation, and annotation. In this paper, we discuss how to prepare data for the task of developing or testing an algorithm or machine learning model for heart rate estimation from images of facial regions. The data prepared is to include camera frames as well as sensor readings from an electrocardiograph sensor. The proposed pipeline is divided into four main steps, namely removal of faulty data, frame and electrocardiograph timestamp de-jittering, signal denoising and filtering, and frame annotation creation. Our main contributions are a novel technique of eliminating jitter from health sensor and camera timestamps and a method to accurately time align both visual frame and electrocardiogram sensor data which is also applicable to other sensor types.
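
The abstract does not describe the de-jittering technique itself. Purely as a generic illustration, and not the paper's method, timestamps from a nominally constant-rate device can be smoothed by fitting a least-squares line of timestamp against sample index and replacing each timestamp with the fitted value. The function name `dejitter` and the sample values are assumptions.

```python
from typing import List


def dejitter(timestamps: List[float]) -> List[float]:
    """Replace jittered timestamps with a least-squares linear fit over index."""
    n = len(timestamps)
    if n < 2:
        return list(timestamps)
    idx_mean = (n - 1) / 2.0
    t_mean = sum(timestamps) / n
    cov = sum((i - idx_mean) * (t - t_mean) for i, t in enumerate(timestamps))
    var = sum((i - idx_mean) ** 2 for i in range(n))
    slope = cov / var                      # estimated sampling period
    intercept = t_mean - slope * idx_mean  # estimated time of sample 0
    return [intercept + slope * i for i in range(n)]


# Roughly 30 FPS frame timestamps (seconds) with a few milliseconds of jitter.
jittered = [0.000, 0.035, 0.065, 0.101, 0.133]
cleaned = dejitter(jittered)
```

This assumes a constant true sampling rate with zero-mean jitter; dropped frames or clock drift would need to be handled before such a fit.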
