Joël Mathys

Synthetic Data for Blood Vessel Network Extraction

Apr 16, 2025

Abstract:Blood vessel networks in the brain play a crucial role in stroke research, where understanding their topology is essential for analyzing blood flow dynamics. However, extracting detailed topological vessel network information from microscopy data remains a significant challenge, mainly due to the scarcity of labeled training data and the need for high topological accuracy. This work combines synthetic data generation with deep learning to automatically extract vessel networks as graphs from volumetric microscopy data. To combat data scarcity, we introduce a comprehensive pipeline for generating large-scale synthetic datasets that mirror the characteristics of real vessel networks. Our three-stage approach progresses from abstract graph generation through vessel mask creation to realistic medical image synthesis, incorporating biological constraints and imaging artifacts at each stage. Using this synthetic data, we develop a two-stage deep learning pipeline of 3D U-Net-based models for node detection and edge prediction. Fine-tuning on real microscopy data shows promising adaptation, improving edge prediction F1 scores from 0.496 to 0.626 by training on merely 5 manually labeled samples. These results suggest that automated vessel network extraction is becoming practically feasible, opening new possibilities for large-scale vascular analysis in stroke research.

Beyond Interpolation: Extrapolative Reasoning with Reinforcement Learning and Graph Neural Networks

Feb 06, 2025

Abstract:Despite incredible progress, many neural architectures fail to properly generalize beyond their training distribution. As such, learning to reason in a correct and generalizable way is one of the current fundamental challenges in machine learning. In this respect, logic puzzles provide a great testbed, as we can fully understand and control the learning environment. Thus, they allow us to evaluate performance on previously unseen, larger and more difficult puzzles that follow the same underlying rules. Since traditional approaches often struggle to represent such scalable logical structures, we propose to model these puzzles using a graph-based approach. Then, we investigate the key factors enabling the proposed models to learn generalizable solutions in a reinforcement learning setting. Our study focuses on the impact of the inductive bias of the architecture, different reward systems and the role of recurrent modeling in enabling sequential reasoning. Through extensive experiments, we demonstrate how these elements contribute to successful extrapolation on increasingly complex puzzles. These insights and frameworks offer a systematic way to design learning-based systems capable of generalizable reasoning beyond interpolation.

GraphFSA: A Finite State Automaton Framework for Algorithmic Learning on Graphs

Aug 20, 2024

Abstract:Many graph algorithms can be viewed as sets of rules that are iteratively applied, with the number of iterations dependent on the size and complexity of the input graph. Existing machine learning architectures often struggle to represent these algorithmic decisions as discrete state transitions. Therefore, we propose a novel framework: GraphFSA (Graph Finite State Automaton). GraphFSA is designed to learn a finite state automaton that runs on each node of a given graph. We test GraphFSA on cellular automata problems, showcasing its abilities in a straightforward algorithmic setting. For a comprehensive empirical evaluation of our framework, we create a diverse range of synthetic problems. As our main application, we then focus on learning more elaborate graph algorithms. Our findings suggest that GraphFSA exhibits strong generalization and extrapolation abilities, presenting an alternative approach to represent these algorithms.
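To make the idea of an automaton running on every node concrete, here is a minimal sketch. It is not the GraphFSA implementation: the transition rule is written by hand rather than learned, and the choice to let each node see only the set of states present among its neighbours is an assumption made for illustration. The names `run_node_automaton` and `reachability_rule` are hypothetical.

```python
def run_node_automaton(adj, states, transition, max_steps=100):
    """Synchronously apply the same finite-state transition on every node.

    adj:        dict mapping node -> list of neighbouring nodes
    states:     dict mapping node -> current discrete state
    transition: function (own_state, frozenset_of_neighbour_states) -> new state
    Stops at a fixed point or after max_steps rounds.
    """
    for _ in range(max_steps):
        new_states = {
            node: transition(states[node], frozenset(states[n] for n in adj[node]))
            for node in adj
        }
        if new_states == states:  # no node changed state: fixed point reached
            break
        states = new_states
    return states


def reachability_rule(own, neighbour_states):
    """Hand-written two-state rule (assumption): a node becomes 'reached'
    once it or any neighbour is 'reached'."""
    if own == "reached" or "reached" in neighbour_states:
        return "reached"
    return "idle"


if __name__ == "__main__":
    # Path 0-1-2 plus an isolated node 3; only node 0 starts as 'reached'.
    adj = {0: [1], 1: [0, 2], 2: [1], 3: []}
    start = {0: "reached", 1: "idle", 2: "idle", 3: "idle"}
    print(run_node_automaton(adj, start, reachability_rule))
```

The number of rounds needed grows with the graph (here, with the distance from the start node), which mirrors the abstract's point that the iteration count depends on the input while the rule set stays fixed.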

CoRe-GD: A Hierarchical Framework for Scalable Graph Visualization with GNNs

Feb 09, 2024

Abstract:Graph Visualization, also known as Graph Drawing, aims to find geometric embeddings of graphs that optimize certain criteria. Stress is a widely used metric; stress is minimized when every pair of nodes is positioned at their shortest path distance. However, stress optimization presents computational challenges due to its inherent complexity and is usually solved using heuristics in practice. We introduce a scalable Graph Neural Network (GNN) based Graph Drawing framework with sub-quadratic runtime that can learn to optimize stress. Inspired by classical stress optimization techniques and force-directed layout algorithms, we create a coarsening hierarchy for the input graph. Beginning at the coarsest level, we iteratively refine and un-coarsen the layout, until we generate an embedding for the original graph. To enhance information propagation within the network, we propose a novel positional rewiring technique based on intermediate node positions. Our empirical evaluation demonstrates that the framework achieves state-of-the-art performance while remaining scalable.
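As a rough illustration of the stress metric described above, the sketch below computes it for a small unweighted graph. The weighting by the inverse squared graph distance is a common convention and is assumed here; the abstract does not state which normalization CoRe-GD uses. The function names are hypothetical and the graph is assumed to be connected.

```python
from collections import deque
from itertools import combinations
import math


def shortest_path_lengths(adj):
    """All-pairs hop distances via BFS; adj maps node -> list of neighbours."""
    dist = {}
    for source in adj:
        seen = {source: 0}
        queue = deque([source])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if v not in seen:
                    seen[v] = seen[u] + 1
                    queue.append(v)
        dist[source] = seen
    return dist


def stress(adj, pos):
    """Sum over node pairs of (layout distance - graph distance)^2 / graph distance^2.

    pos maps node -> coordinate tuple. Assumes a connected graph, so every
    pair has a finite, non-zero shortest-path distance.
    """
    dist = shortest_path_lengths(adj)
    total = 0.0
    for u, v in combinations(adj, 2):
        d = dist[u][v]
        layout_d = math.dist(pos[u], pos[v])
        total += (layout_d - d) ** 2 / d ** 2
    return total


if __name__ == "__main__":
    path = {0: [1], 1: [0, 2], 2: [1]}
    straight = {0: (0.0, 0.0), 1: (1.0, 0.0), 2: (2.0, 0.0)}
    folded = {0: (0.0, 0.0), 1: (1.0, 0.0), 2: (0.0, 0.0)}
    print(stress(path, straight), stress(path, folded))
```

Evaluating every pair is quadratic in the number of nodes, which is one reason a sub-quadratic learned approach is attractive for large graphs.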

SURF: A Generalization Benchmark for GNNs Predicting Fluid Dynamics

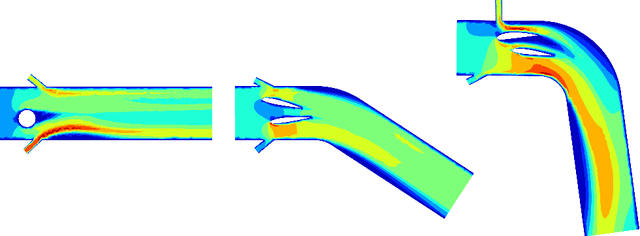

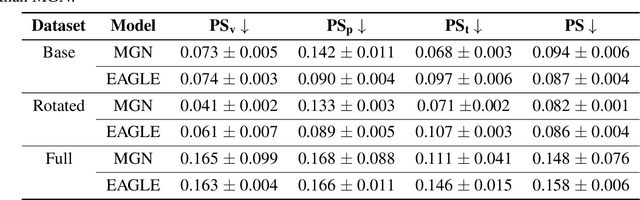

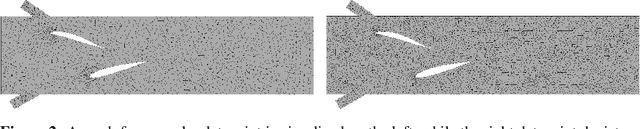

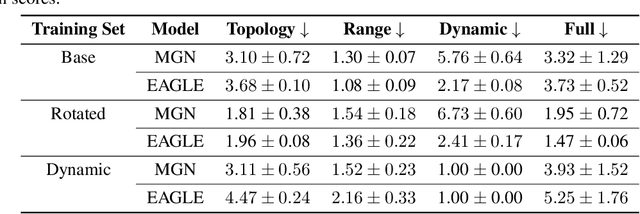

Nov 20, 2023

Abstract:Simulating fluid dynamics is crucial for the design and development process, ranging from simple valves to complex turbomachinery. Accurately solving the underlying physical equations is computationally expensive. Therefore, learning-based solvers that model interactions on meshes have gained interest due to their promising speed-ups. However, it is unknown to what extent these models truly understand the underlying physical principles and can generalize rather than interpolate. Generalization is a key requirement for a general-purpose fluid simulator, which should adapt to different topologies, resolutions, or thermodynamic ranges. We propose SURF, a benchmark designed to test the generalization of learned graph-based fluid simulators. SURF comprises individual datasets and provides specific performance and generalization metrics for evaluating and comparing different models. We empirically demonstrate the applicability of SURF by thoroughly investigating the two state-of-the-art graph-based models, yielding new insights into their generalization.

Flood and Echo: Algorithmic Alignment of GNNs with Distributed Computing

Oct 12, 2023

Abstract:Graph Neural Networks are a natural fit for learning algorithms. They can directly represent tasks through an abstract but versatile graph structure and handle inputs of different sizes. This opens up the possibility for scaling and extrapolation to larger graphs, one of the most important advantages of an algorithm. However, this raises two core questions: i) how can we enable nodes to gather the required information in a given graph (information exchange), even if it is far away, and ii) how can we design an execution framework that enables this information exchange for extrapolation to larger graph sizes (algorithmic alignment for extrapolation)? We propose a new execution framework that is inspired by the design principles of distributed algorithms: Flood and Echo Net. It propagates messages through the entire graph in a wave-like activation pattern, which naturally generalizes to larger instances. Through its sparse but parallel activations, it is provably more efficient in terms of message complexity. We study the proposed model and provide both empirical evidence and theoretical insights in terms of its expressiveness, efficiency, information exchange and ability to extrapolate.
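The wave-like activation pattern can be pictured with a small sketch. This only illustrates the order in which nodes become active, under the assumption that the wave follows breadth-first distance from a chosen origin node: outward during the flood, then back inward during the echo. It contains no learned message functions and is not the Flood and Echo Net implementation; `bfs_layers` and `flood_and_echo_schedule` are hypothetical names.

```python
from collections import deque


def bfs_layers(adj, origin):
    """Group the nodes reachable from origin by hop distance."""
    dist = {origin: 0}
    queue = deque([origin])
    while queue:
        u = queue.popleft()
        for v in adj[u]:
            if v not in dist:
                dist[v] = dist[u] + 1
                queue.append(v)
    layers = [[] for _ in range(max(dist.values()) + 1)]
    for node, d in dist.items():
        layers[d].append(node)
    return [sorted(layer) for layer in layers]


def flood_and_echo_schedule(adj, origin):
    """Return the list of node groups that are active at each step.

    Flood: layers are activated outward from the origin.
    Echo:  the same layers are activated in reverse, back to the origin.
    Only one layer is active per step, so activations are sparse, yet all
    nodes within a layer act in parallel.
    """
    layers = bfs_layers(adj, origin)
    echo = layers[-2::-1]  # every layer except the outermost, in reverse order
    return layers + echo


if __name__ == "__main__":
    # A 4-cycle: 0 is adjacent to 1 and 2, which are both adjacent to 3.
    cycle = {0: [1, 2], 1: [0, 3], 2: [0, 3], 3: [1, 2]}
    print(flood_and_echo_schedule(cycle, 0))
```

The schedule length grows with the distance from the origin to the farthest node, so the same procedure adapts to larger graphs without changing any per-node rule.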

SALSA-CLRS: A Sparse and Scalable Benchmark for Algorithmic Reasoning

Sep 21, 2023

Abstract:We introduce an extension to the CLRS algorithmic learning benchmark, prioritizing scalability and the utilization of sparse representations. Many algorithms in CLRS require global memory or information exchange, mirrored in its execution model, which constructs fully connected (not sparse) graphs based on the underlying problem. Despite CLRS's aim of assessing how effectively learned algorithms can generalize to larger instances, the existing execution model becomes a significant constraint due to its demanding memory requirements and runtime (hard to scale). However, many important algorithms do not demand a fully connected graph; these algorithms, primarily distributed in nature, align closely with the message-passing paradigm employed by Graph Neural Networks. Hence, we propose SALSA-CLRS, an extension of the current CLRS benchmark specifically with scalability and sparseness in mind. Our approach includes adapted algorithms from the original CLRS benchmark and introduces new problems from distributed and randomized algorithms. Moreover, we perform a thorough empirical evaluation of our benchmark. Code is publicly available at https://github.com/jkminder/SALSA-CLRS.

Traffic4cast at NeurIPS 2022 -- Predict Dynamics along Graph Edges from Sparse Node Data: Whole City Traffic and ETA from Stationary Vehicle Detectors

Mar 14, 2023

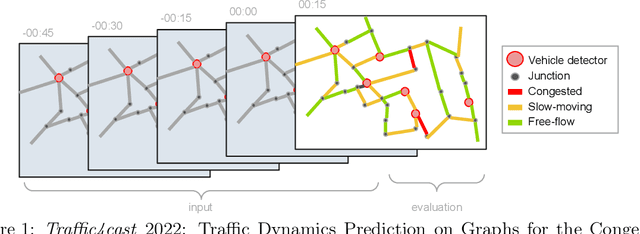

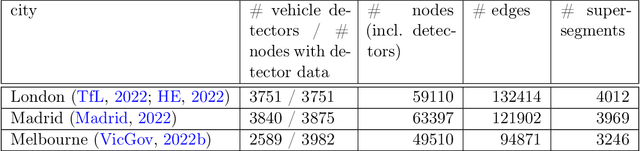

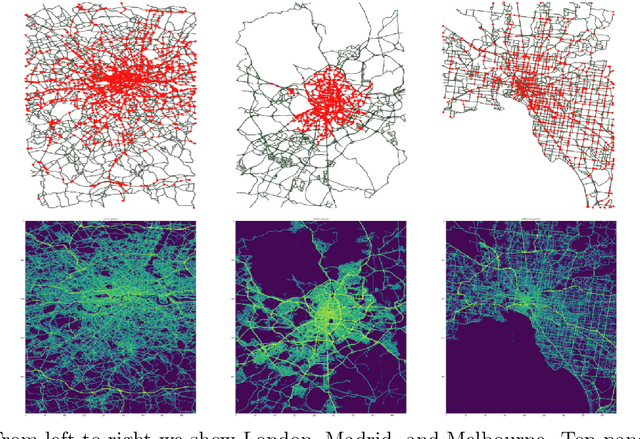

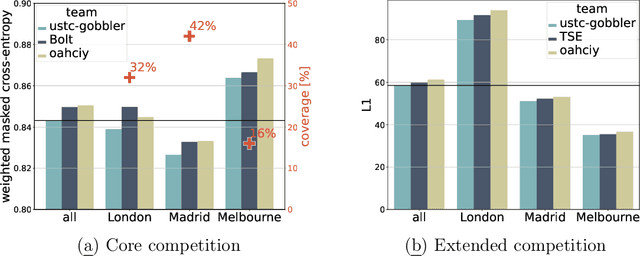

Abstract:The global trends of urbanization and increased personal mobility force us to rethink the way we live and use urban space. The Traffic4cast competition series tackles this problem in a data-driven way, advancing the latest methods in machine learning for modeling complex spatial systems over time. In this edition, our dynamic road graph data combine information from road maps, $10^{12}$ probe data points, and stationary vehicle detectors in three cities over the span of two years. While stationary vehicle detectors are the most accurate way to capture traffic volume, they are only available in few locations. Traffic4cast 2022 explores models that have the ability to generalize loosely related temporal vertex data on just a few nodes to predict dynamic future traffic states on the edges of the entire road graph. In the core challenge, participants are invited to predict the likelihoods of three congestion classes derived from the speed levels in the GPS data for the entire road graph in three cities 15 min into the future. We only provide vehicle count data from spatially sparse stationary vehicle detectors in these three cities as model input for this task. The data are aggregated in 15 min time bins for one hour prior to the prediction time. For the extended challenge, participants are tasked to predict the average travel times on super-segments 15 min into the future - super-segments are longer sequences of road segments in the graph. The competition results provide an important advance in the prediction of complex city-wide traffic states just from publicly available sparse vehicle data and without the need for large amounts of real-time floating vehicle data.

Visual Abstraction and Reasoning through Language

Mar 07, 2023

Abstract:While Artificial Intelligence (AI) models have achieved human or even superhuman performance in narrowly defined applications, they still struggle to show signs of broader and more flexible intelligence. The Abstraction and Reasoning Corpus (ARC), introduced by François Chollet, aims to assess how close AI systems are to human-like cognitive abilities. Most current approaches rely on carefully handcrafted domain-specific languages (DSLs), which are used to brute-force solutions to the tasks present in ARC. In this work, we propose a general framework for solving ARC based on natural language descriptions of the tasks. While not yet beating state-of-the-art DSL models on ARC, we demonstrate the immense potential of our approach hinted at by the ability to solve previously unsolved tasks.

Learning Graph Algorithms With Recurrent Graph Neural Networks

Dec 09, 2022

Abstract:Classical graph algorithms work well for combinatorial problems that can be thoroughly formalized and abstracted. Once the algorithm is derived, it generalizes to instances of any size. However, developing an algorithm that handles complex structures and interactions in the real world can be challenging. Rather than specifying the algorithm, we can try to learn it from the graph-structured data. Graph Neural Networks (GNNs) are inherently capable of working on graph structures; however, they struggle to generalize well, and learning on larger instances is challenging. In order to scale, we focus on a recurrent architecture design that can learn simple graph problems end to end on smaller graphs and then extrapolate to larger instances. As our main contribution, we identify three essential techniques for recurrent GNNs to scale. By using (i) skip connections, (ii) state regularization, and (iii) edge convolutions, we can guide GNNs toward extrapolation. This allows us to train on small graphs and apply the same model to much larger graphs during inference. Moreover, we empirically validate the extrapolation capabilities of our GNNs on algorithmic datasets.
