Jingxin Zhang

Cross-Modal Learning for Anomaly Detection in Fused Magnesium Smelting Process: Methodology and Benchmark

Jun 13, 2024

Abstract:The Fused Magnesium Furnace (FMF) is a crucial piece of industrial equipment in the production of magnesia, and anomaly detection plays a pivotal role in ensuring its efficient, stable, and secure operation. Existing anomaly detection methods primarily focus on analyzing dominant anomalies using the process variables (such as arc current) or constructing neural networks based on abnormal visual features, while overlooking the intrinsic correlation of cross-modal information. This paper proposes a cross-modal Transformer (dubbed FmFormer), designed to facilitate anomaly detection in fused magnesium smelting processes by exploring the correlation between visual features (video) and process variables (current). Our approach introduces a novel tokenization paradigm to effectively bridge the substantial dimensionality gap between the 3D video modality and the 1D current modality in a multiscale manner, enabling a hierarchical reconstruction of pixel-level anomaly detection. Subsequently, the FmFormer leverages self-attention to learn internal features within each modality and bidirectional cross-attention to capture correlations across modalities. To validate the effectiveness of the proposed method, we also present a pioneering cross-modal benchmark of the fused magnesium smelting process, featuring synchronously acquired video and current data for over 2.2 million samples. Leveraging cross-modal learning, the proposed FmFormer achieves state-of-the-art performance in detecting anomalies, particularly under extreme interference such as current fluctuations and visual occlusion caused by heavy water mist. The presented methodology and benchmark may be applicable to other industrial applications with some amendments. The benchmark will be released at https://github.com/GaochangWu/FMF-Benchmark.
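
The bidirectional cross-attention mentioned in the abstract can be illustrated with a minimal single-head sketch in plain Python. This is not the FmFormer implementation: the token counts, the embedding size `dim`, the random projection matrices, and all function names are assumptions made for illustration, and the multiscale tokenization is omitted.

```python
import math
import random

def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def transpose(a):
    return [list(col) for col in zip(*a)]

def softmax(row):
    """Numerically stable softmax over one row of scores."""
    peak = max(row)
    exps = [math.exp(v - peak) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def cross_attention(queries, context, w_q, w_k, w_v):
    """Tokens in `queries` attend to tokens in `context` (single head)."""
    q = matmul(queries, w_q)
    k = matmul(context, w_k)
    v = matmul(context, w_v)
    scale = math.sqrt(len(q[0]))
    scores = matmul(q, transpose(k))
    weights = [softmax([s / scale for s in row]) for row in scores]
    return matmul(weights, v), weights

def random_matrix(rows, cols, rng):
    return [[rng.gauss(0.0, 1.0) for _ in range(cols)] for _ in range(rows)]

rng = random.Random(0)
dim = 4                                      # assumed embedding size
video_tokens = random_matrix(6, dim, rng)    # hypothetical: 6 video tokens
current_tokens = random_matrix(3, dim, rng)  # hypothetical: 3 current tokens

# Each direction gets its own (here random) query/key/value projections.
video_from_current = [random_matrix(dim, dim, rng) for _ in range(3)]
current_from_video = [random_matrix(dim, dim, rng) for _ in range(3)]

# Direction 1: video tokens query the current tokens.
video_out, video_attn = cross_attention(video_tokens, current_tokens, *video_from_current)
# Direction 2: current tokens query the video tokens.
current_out, current_attn = cross_attention(current_tokens, video_tokens, *current_from_video)
```

Each output keeps the token count of its query modality, so the two streams can be updated in parallel; `video_attn` has one row per video token and one column per current token, and the reverse holds for `current_attn`.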

Experiment-based deep learning approach for power allocation with a programmable metasurface

Jul 26, 2023

Abstract:Deep learning, as a highly efficient method for metasurface inverse design, commonly uses simulation data to train deep neural networks (DNNs) that can map desired functionalities to proper metasurface designs. However, the assumptions and simplifications made in the simulation model may not reflect the actual behavior of a complex system, leading to suboptimal performance of the DNNs in practical scenarios. To address this issue, we propose an experiment-based deep learning approach for metasurface inverse design and demonstrate its effectiveness for power allocation in complex environments with obstacles. Enabled by the tunability of a programmable metasurface, large sets of experimental data in various configurations can be collected for DNN training. The DNN trained on experimental data can inherently incorporate complex factors and can adapt to changed environments through its on-site data-collecting and fast-retraining capability. The proposed experiment-based DNN holds the potential for intelligent and energy-efficient wireless communication in complex indoor environments.

SCCAM: Supervised Contrastive Convolutional Attention Mechanism for Ante-hoc Interpretable Fault Diagnosis with Limited Fault Samples

Feb 17, 2023

Abstract:In real industrial processes, fault diagnosis methods are required to learn from limited fault samples, since processes mainly run under normal conditions and faults rarely occur. Although attention mechanisms have become popular in the field of fault diagnosis, the existing attention-based methods are still unsatisfactory for these practical applications. First, pure attention-based architectures like transformers need a large number of fault samples to offset the lack of inductive biases and thus perform poorly under limited fault samples. Moreover, poor fault classification further leads to the failure of the existing attention-based methods to identify the root causes. To address the aforementioned issues, we propose a supervised contrastive convolutional attention mechanism (SCCAM) with ante-hoc interpretability, which solves the root cause analysis problem under limited fault samples for the first time. The proposed SCCAM method is tested on a continuous stirred tank heater and the Tennessee Eastman industrial process benchmark. Three common fault diagnosis scenarios are covered, including a balanced scenario for additional verification and two scenarios with limited fault samples (i.e., imbalanced scenario and long-tail scenario). The comprehensive results demonstrate that the proposed SCCAM method can achieve better performance compared with the state-of-the-art methods on fault classification and root cause analysis.

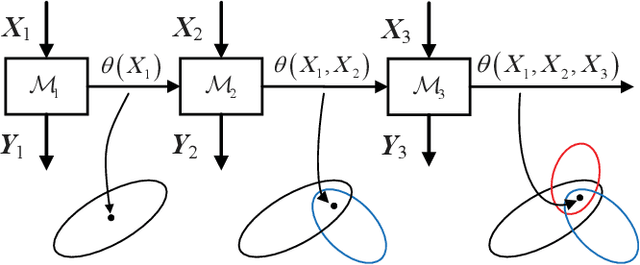

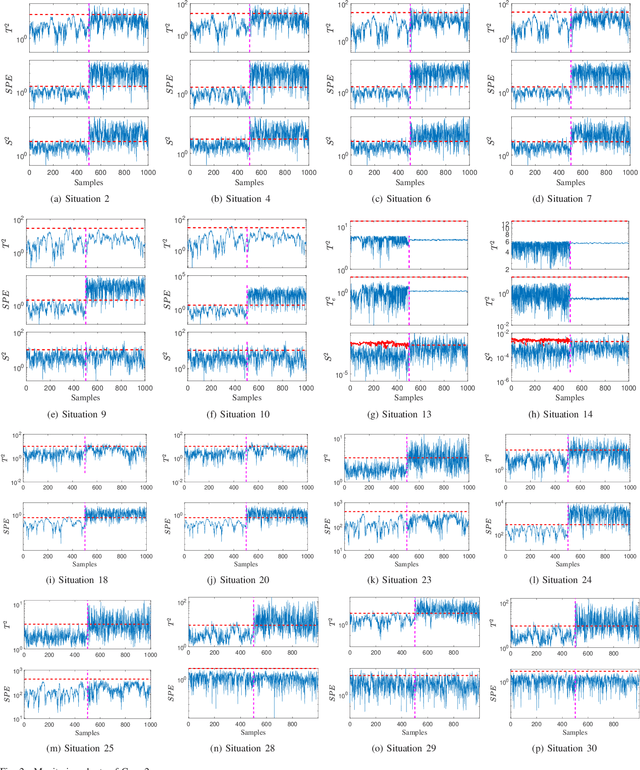

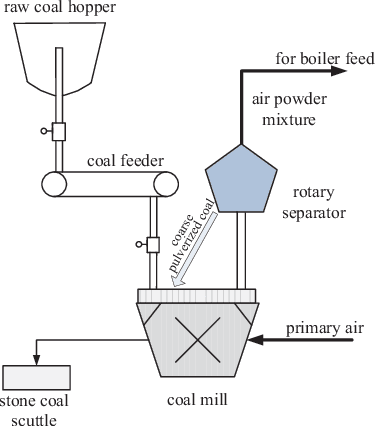

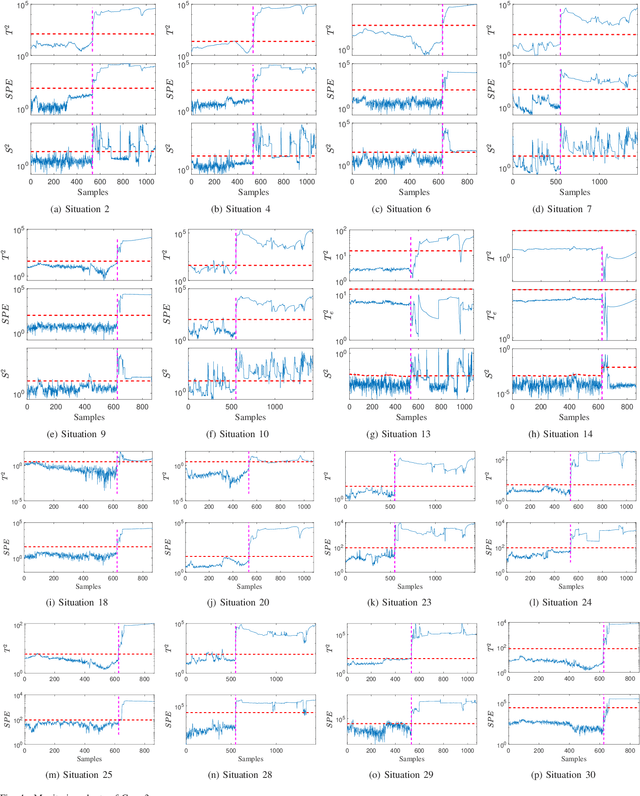

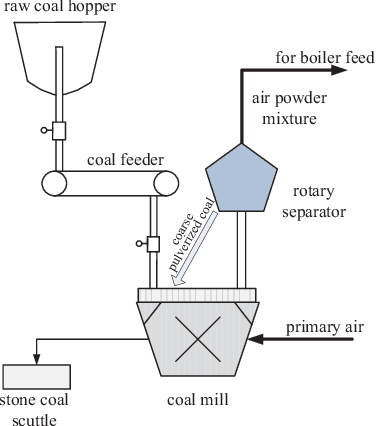

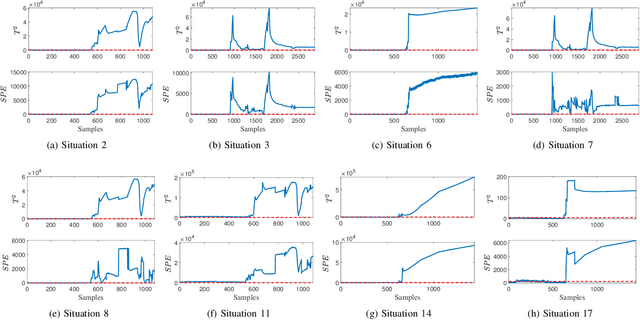

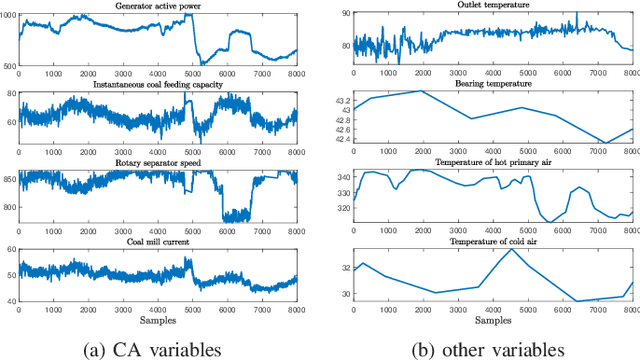

Continual learning-based probabilistic slow feature analysis for multimode dynamic process monitoring

Feb 23, 2022

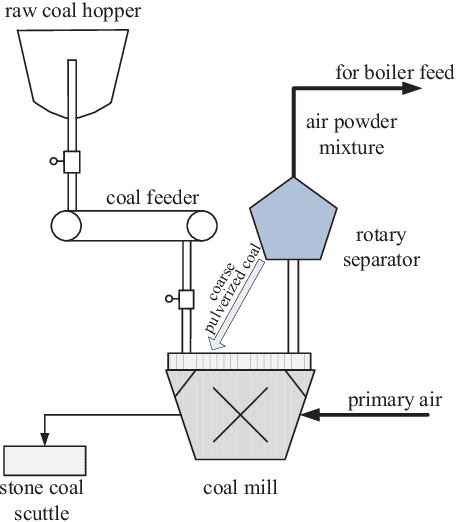

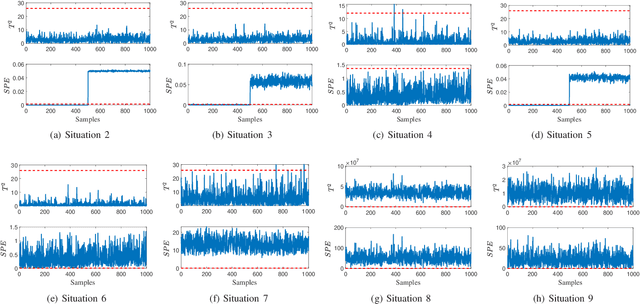

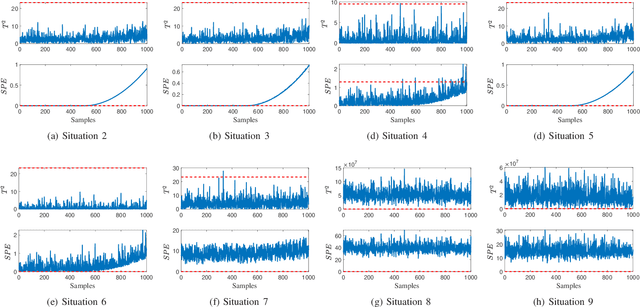

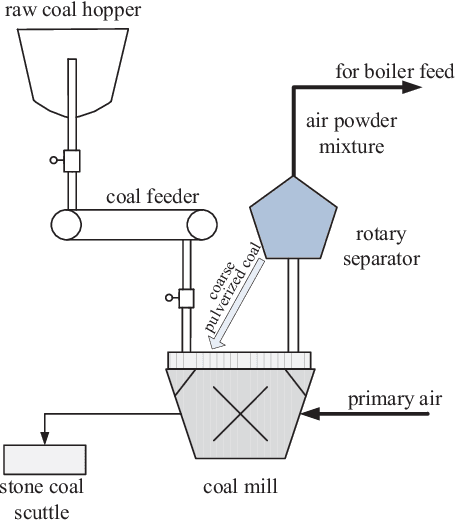

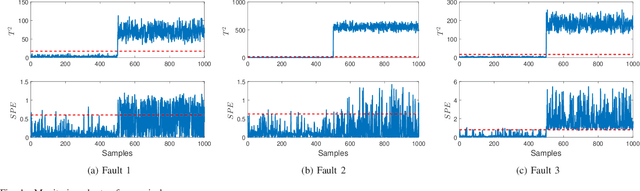

Abstract:In this paper, a novel multimode dynamic process monitoring approach is proposed by extending elastic weight consolidation (EWC) to probabilistic slow feature analysis (PSFA) in order to extract multimode slow features for online monitoring. EWC was originally introduced in the setting of machine learning of sequential multi-tasks with the aim of avoiding the catastrophic forgetting issue, which equally poses a major challenge in multimode dynamic process monitoring. When a new mode arrives, a set of data should be collected so that this mode can be identified by PSFA and prior knowledge. Then, a regularization term is introduced to prevent new data from significantly interfering with the learned knowledge, where the parameter importance measures are estimated. The proposed method is denoted as PSFA-EWC, which is updated continually and capable of achieving excellent performance for successive modes. Different from traditional multimode monitoring algorithms, PSFA-EWC furnishes backward and forward transfer ability. The significant features of previous modes are retained while consolidating new information, which may contribute to learning new relevant modes. Compared with several known methods, the effectiveness of the proposed method is demonstrated via a continuous stirred tank heater and a practical coal pulverizing system.
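
For orientation, the regularization idea described above can be written in the generic form in which EWC is usually stated. The symbols below are assumptions for illustration and are not taken from the paper, whose PSFA-specific objective is not given in the abstract: $J_{\rm new}$ is the objective on data from the new mode, $\theta^{*}$ the parameters learned on previous modes, $F_i$ the estimated importance of parameter $\theta_i$ (typically a diagonal Fisher information entry), and $\lambda$ a trade-off weight.

$$
J(\theta) = J_{\rm new}(\theta) + \frac{\lambda}{2} \sum_{i} F_i \left(\theta_i - \theta_i^{*}\right)^2
$$

Parameters with large $F_i$ are held close to their previous values, while unimportant ones remain free to adapt to the new mode.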

Structure Parameter Optimized Kernel Based Online Prediction with a Generalized Optimization Strategy for Nonstationary Time Series

Aug 18, 2021

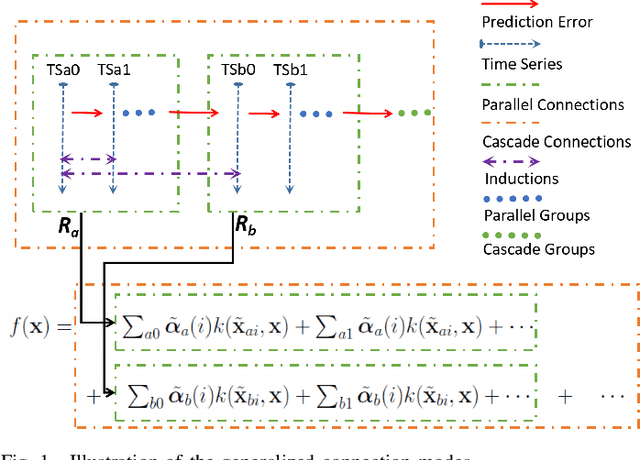

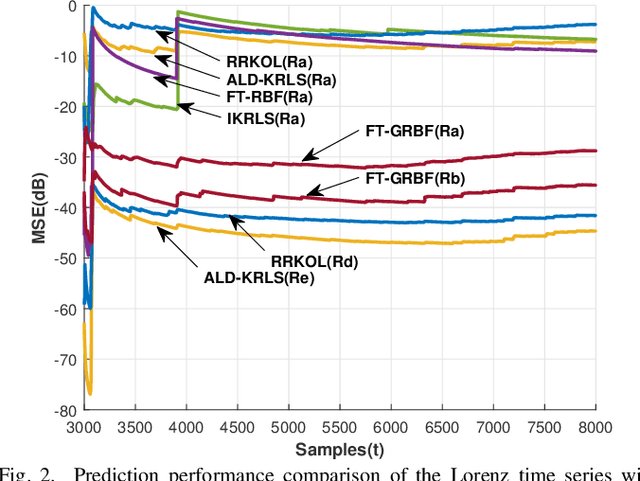

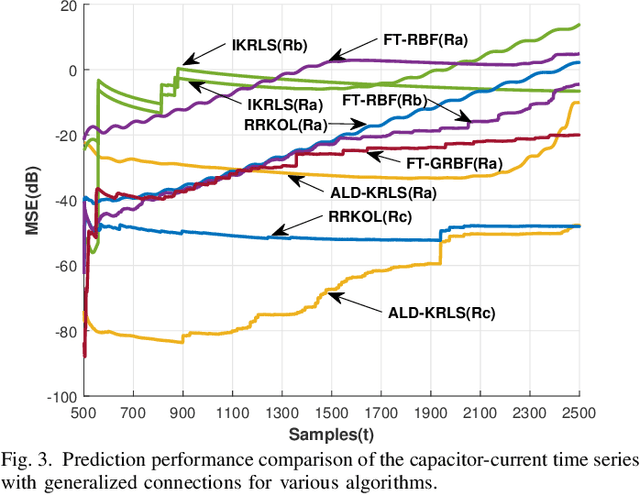

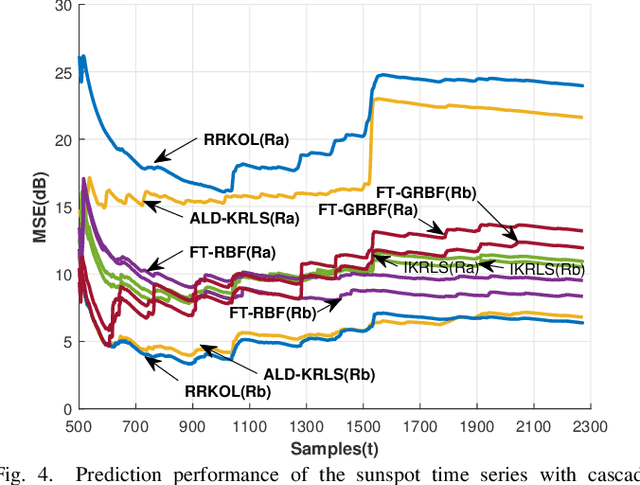

Abstract:In this paper, sparsification-aided online prediction algorithms in a reproducing kernel Hilbert space are studied for nonstationary time series. Such online prediction algorithms typically consist of the selection of kernel structure parameters and the updating of the kernel weight vector. For structure parameters, the kernel dictionary is selected by sparsification techniques with online selective modeling criteria, and the kernel covariance matrix is intermittently optimized by the covariance matrix adaptation evolution strategy (CMA-ES). Optimizing the real symmetric covariance matrix can not only improve the kernel structure's flexibility through the cross relatedness of the input variables, but also partly alleviate the prediction uncertainty caused by the kernel dictionary selection for nonstationary time series. In order to sufficiently capture the underlying dynamic characteristics in prediction-error time series, a generalized optimization strategy is designed to construct the kernel dictionary sequentially in multiple kernel connection modes. The generalized optimization strategy provides a more self-contained way to construct the entire kernel connections, which enhances the ability to adaptively track the changing dynamic characteristics. Numerical simulations have demonstrated that the proposed approach has superior prediction performance for nonstationary time series.

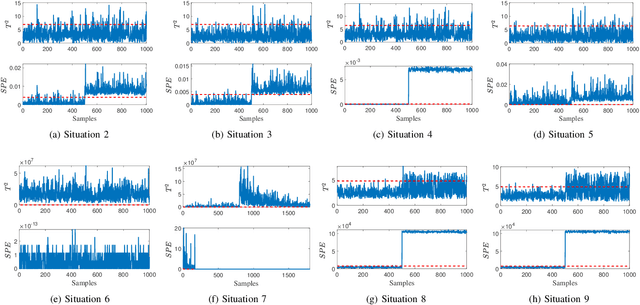

Self-learning sparse PCA for multimode process monitoring

Aug 07, 2021

Abstract:This paper proposes a novel sparse principal component analysis algorithm with self-learning ability for successive modes, where synaptic intelligence is employed to measure the importance of variables and a regularization term is added to preserve the learned knowledge of previous modes. Different from traditional multimode monitoring methods, the monitoring model is updated based on the current model and new data when a new mode arrives, thus delivering prominent performance for sequential modes. Besides, the computation and storage resources are saved in the long run, because it is not necessary to retrain the model from scratch frequently and store data from previous modes. More importantly, the model furnishes excellent interpretability owing to the sparsity of parameters. Finally, a numerical case and a practical pulverizing system are adopted to illustrate the effectiveness of the proposed algorithm.

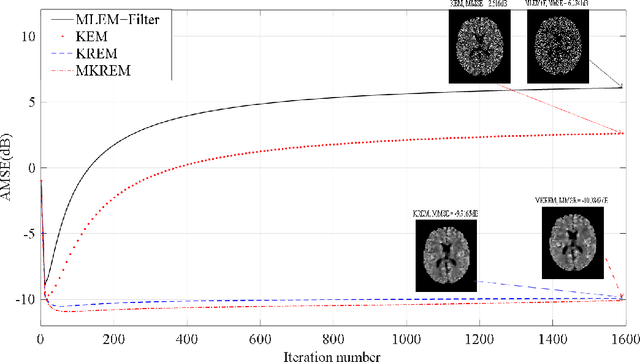

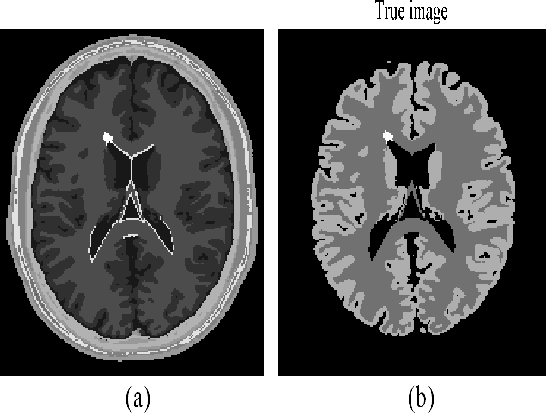

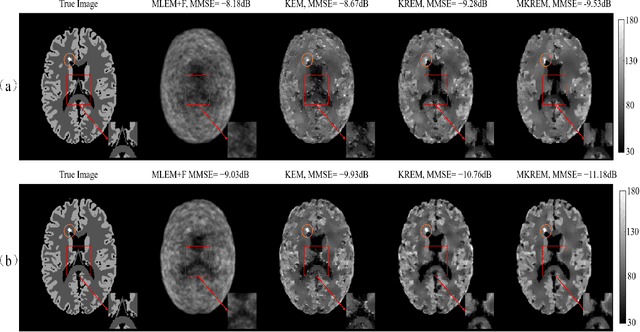

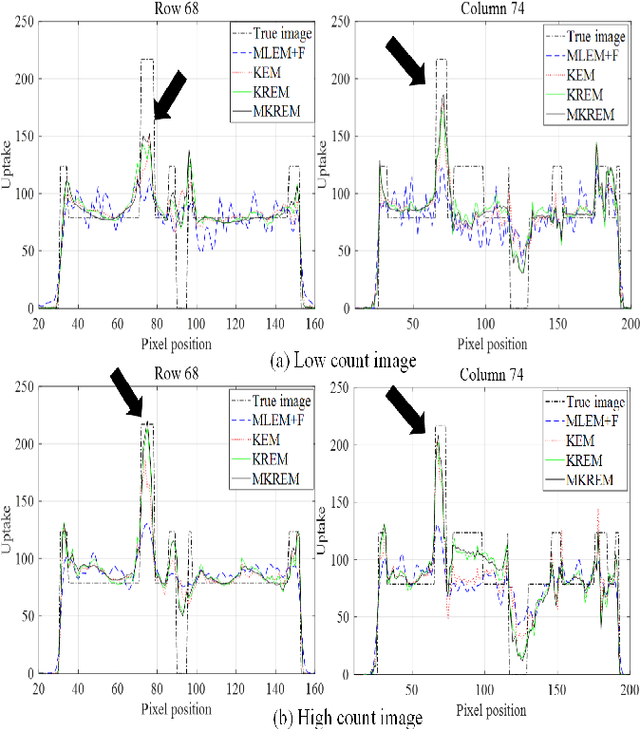

PET Image Reconstruction with Multiple Kernels and Multiple Kernel Space Regularizers

Mar 04, 2021

Abstract:Kernelized maximum-likelihood (ML) expectation maximization (EM) methods have recently gained prominence in PET image reconstruction, outperforming many previous state-of-the-art methods. But they are not immune to the problems of non-kernelized MLEM methods, namely potentially large reconstruction error and high sensitivity to iteration number. This paper demonstrates these problems by theoretical reasoning and experimental results, and provides a novel solution to them. The solution is a regularized kernelized MLEM with multiple kernel matrices and multiple kernel space regularizers that can be tailored for different applications. To reduce the reconstruction error and the sensitivity to iteration number, we present a general class of multi-kernel matrices and two regularizers consisting of kernel image dictionary and kernel image Laplacian quadratic, and use them to derive the single-kernel regularized EM and multi-kernel regularized EM algorithms for PET image reconstruction. These new algorithms are derived using the technical tools of multi-kernel combination in machine learning, image dictionary learning in sparse coding, and graph Laplacian quadratic in graph signal processing. Extensive tests and comparisons on the simulated and in vivo data are presented to validate and evaluate the new algorithms, and demonstrate their superior performance and advantages over the kernelized MLEM and other conventional methods.
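
As background for the baseline the abstract refers to, the following is a minimal sketch of a plain (unregularized, single-kernel) kernelized MLEM iteration, assuming the commonly used formulation in which the image is written as `kernel @ alpha` and the EM update is applied to the coefficients `alpha`. It is not the regularized multi-kernel algorithm proposed in the paper; the toy system matrix, kernel matrix, counts, and all names are hypothetical.

```python
import math

def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def matvec(m, v):
    return [sum(x * y for x, y in zip(row, v)) for row in m]

def transpose(m):
    return [list(col) for col in zip(*m)]

def poisson_loglik(counts, expected):
    """Poisson log-likelihood up to a constant that does not depend on the image."""
    return sum(y * math.log(e) - e for y, e in zip(counts, expected))

def kernel_mlem(system, kernel, counts, iterations):
    """EM updates on kernel coefficients alpha, with image = kernel @ alpha."""
    forward = matmul(system, kernel)          # combined forward model P K
    backward = transpose(forward)
    sensitivity = matvec(backward, [1.0] * len(counts))
    alpha = [1.0] * len(kernel[0])            # positive starting point
    history = []
    for _ in range(iterations):
        expected = matvec(forward, alpha)
        ratio = [y / e for y, e in zip(counts, expected)]
        correction = matvec(backward, ratio)
        alpha = [a * c / s for a, c, s in zip(alpha, correction, sensitivity)]
        history.append(poisson_loglik(counts, matvec(forward, alpha)))
    return matvec(kernel, alpha), history

# Hypothetical toy problem: 4 detector bins, 3 image pixels.
system = [[1.0, 0.5, 0.0],
          [0.0, 1.0, 0.5],
          [0.5, 0.0, 1.0],
          [1.0, 1.0, 1.0]]
kernel = [[0.8, 0.2, 0.0],
          [0.1, 0.8, 0.1],
          [0.0, 0.2, 0.8]]
counts = [12.0, 7.0, 9.0, 20.0]

image, history = kernel_mlem(system, kernel, counts, iterations=50)
```

The multiplicative update keeps the coefficients nonnegative and never decreases the Poisson log-likelihood recorded in `history`. Running more iterations fits the noisy counts ever more closely, which is the iteration-number sensitivity that the paper's regularizers are meant to reduce.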

Monitoring nonstationary processes based on recursive cointegration analysis and elastic weight consolidation

Jan 21, 2021

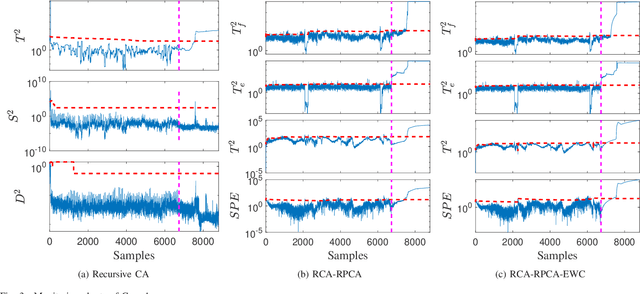

Abstract:This paper considers the problem of nonstationary process monitoring under frequently varying operating conditions. Traditional approaches generally misidentify the normal dynamic deviations as faults and thus lead to high false alarms. Besides, they generally consider a single relatively steady operating condition and suffer from the catastrophic forgetting issue when learning successive operating conditions. In this paper, recursive cointegration analysis (RCA) is first proposed to distinguish the real faults from normal system changes, where the model is updated once a new normal sample arrives and can adapt to slow changes in the cointegration relationship. Based on the long-term equilibrium information extracted by RCA, the remaining short-term dynamic information is monitored by recursive principal component analysis (RPCA). Thus a comprehensive monitoring framework is built. When the system enters a new operating condition, the RCA-RPCA model is rebuilt to deal with the new condition. Meanwhile, elastic weight consolidation (EWC) is employed to settle the 'catastrophic forgetting' issue inherent in RPCA, where significant information of influential parameters is enhanced to avoid abrupt performance degradation for similar modes. The effectiveness of the proposed method is illustrated by a practical industrial system.

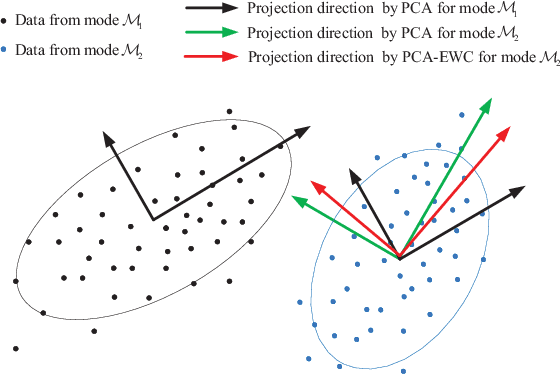

Monitoring multimode processes: a modified PCA algorithm with continual learning ability

Dec 28, 2020

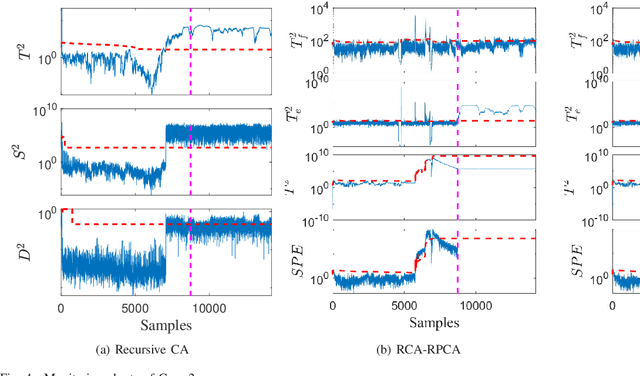

Abstract:For multimode processes, one has to establish local monitoring models corresponding to local modes. However, the significant features of previous modes may be catastrophically forgotten when a monitoring model for the current mode is built, which results in an abrupt performance decrease. Is it possible to make a local monitoring model remember the features of previous modes? Choosing principal component analysis (PCA) as the basic monitoring model, we try to resolve this problem. A modified PCA algorithm is built with continual learning ability for monitoring multimode processes, which adopts elastic weight consolidation (EWC) to overcome catastrophic forgetting of PCA for successive modes. It is called PCA-EWC, where the significant features of previous modes are preserved when a PCA model is established for the current mode. The computational complexity and key parameters are discussed to further understand the relationship between PCA and the proposed algorithm. A numerical case study and a practical industrial system in China are employed to illustrate the effectiveness of the proposed algorithm.

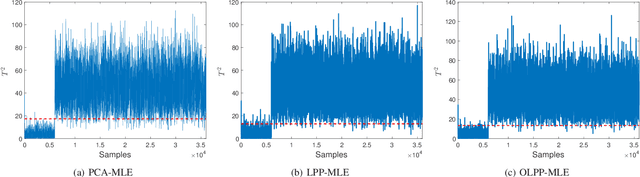

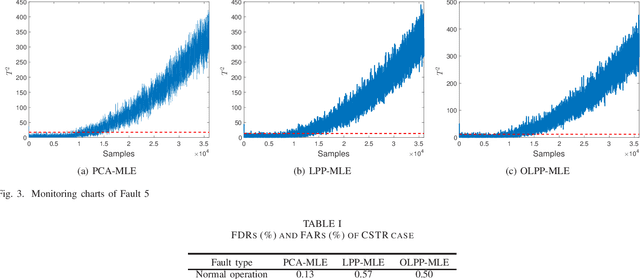

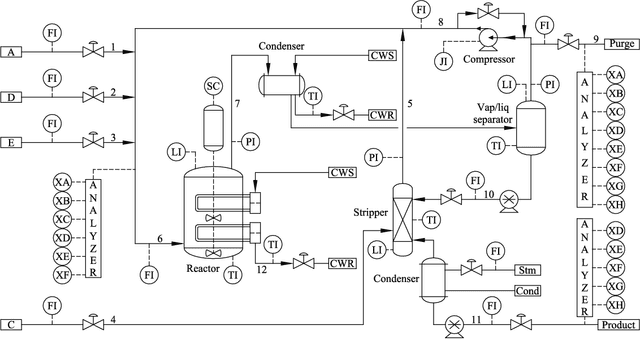

Process monitoring based on orthogonal locality preserving projection with maximum likelihood estimation

Dec 13, 2020

Abstract:By integrating two powerful methods of dimensionality reduction and intrinsic dimensionality estimation, a new data-driven method, referred to as OLPP-MLE (orthogonal locality preserving projection-maximum likelihood estimation), is introduced for process monitoring. OLPP is utilized for dimensionality reduction, which provides better locality preserving power than locality preserving projection. Then, the MLE is adopted to estimate the intrinsic dimensionality of OLPP. Within the proposed OLPP-MLE, two new static measures for fault detection $T_{\scriptscriptstyle {OLPP}}^2$ and ${\rm SPE}_{\scriptscriptstyle {OLPP}}$ are defined. In order to reduce algorithm complexity and ignore data distribution, kernel density estimation is employed to compute thresholds for fault diagnosis. The effectiveness of the proposed method is demonstrated by three case studies.
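
The thresholding step can be sketched as follows: given values of a monitoring statistic collected under normal operation, fit a Gaussian kernel density estimate and take the point where its cumulative distribution reaches a chosen confidence level. This is an illustrative sketch only. The statistic values are simulated rather than computed from OLPP, and the bandwidth rule, the 0.99 confidence level, and all function names are assumptions not taken from the paper.

```python
import math
import random

def rule_of_thumb_bandwidth(samples):
    """Normal-reference bandwidth: 1.06 * std * n^(-1/5)."""
    n = len(samples)
    mean = sum(samples) / n
    std = math.sqrt(sum((s - mean) ** 2 for s in samples) / (n - 1))
    return 1.06 * std * n ** (-0.2)

def kde_cdf(x, samples, bandwidth):
    """CDF of a Gaussian kernel density estimate, evaluated at x."""
    scale = bandwidth * math.sqrt(2.0)
    return sum(0.5 * (1.0 + math.erf((x - s) / scale)) for s in samples) / len(samples)

def kde_threshold(samples, confidence=0.99):
    """Smallest value whose KDE-based CDF reaches `confidence`, found by bisection."""
    h = rule_of_thumb_bandwidth(samples)
    lo, hi = min(samples) - 10.0 * h, max(samples) + 10.0 * h
    for _ in range(100):
        mid = 0.5 * (lo + hi)
        if kde_cdf(mid, samples, h) < confidence:
            lo = mid
        else:
            hi = mid
    return hi

# Simulated stand-in for a monitoring statistic under normal operation.
rng = random.Random(0)
normal_stats = [sum(rng.gauss(0.0, 1.0) ** 2 for _ in range(3)) for _ in range(500)]

threshold = kde_threshold(normal_stats, confidence=0.99)
alarm_rate = sum(s > threshold for s in normal_stats) / len(normal_stats)
```

A new sample would be flagged as faulty when its statistic exceeds `threshold`. Because the threshold comes from the estimated density, no parametric assumption (for example, a chi-square or F distribution) is placed on the statistic.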
