Jianmin Yang

Dynamic Programming-Based Offline Redundancy Resolution of Redundant Manipulators Along Prescribed Paths with Real-Time Adjustment

Nov 26, 2024

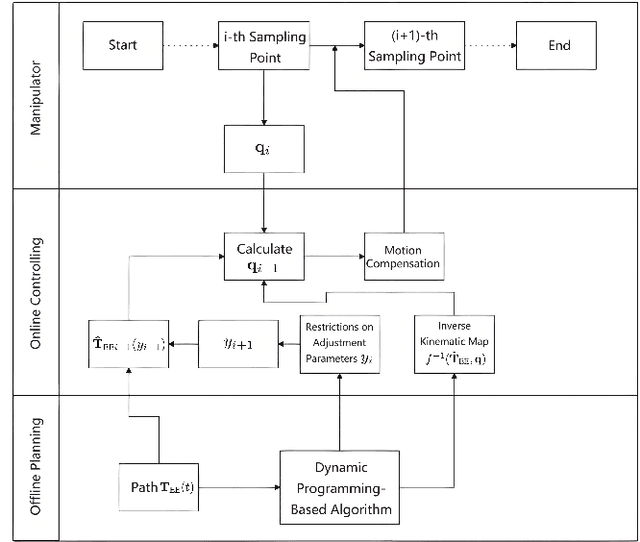

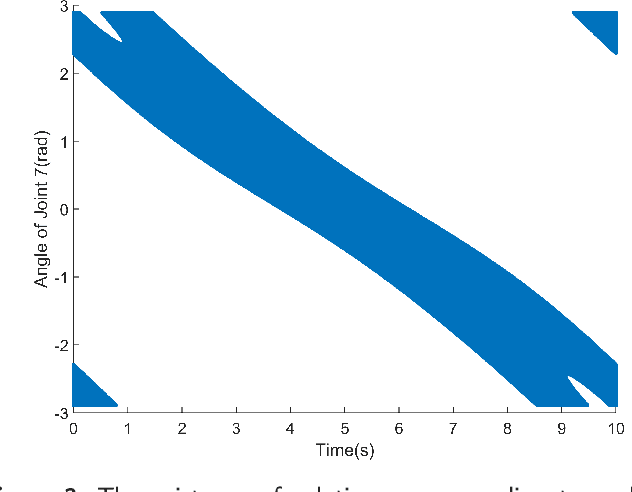

Abstract: Traditional offline redundancy resolution for redundant manipulators computes inverse kinematic solutions for a Cartesian-space path, which constrains the manipulator to a fixed path with no real-time adjustment. Online redundancy resolution can adjust the path in real time, but it cannot consider subsequent path points, so the manipulator may be forced to stop mid-motion because of joint constraints. To address this, this paper introduces a dynamic programming-based offline redundancy resolution for redundant manipulators along prescribed paths with real-time adjustment. The proposed method allows the manipulator to move along a prescribed path while implementing real-time adjustment along the normal to the path. Using dynamic programming, the proposed approach computes a global maximum for the variation of adjustment coefficients. As long as the coefficient variation between adjacent sampled path points does not exceed this limit, the algorithm provides the next path point's joint angles based on the current joint angles, enabling the end-effector to achieve the adjusted Cartesian pose. The main innovation of this paper lies in augmenting traditional offline optimal planning with real-time adjustment capabilities, achieving a fusion of offline planning and online planning.

Dynamic Programming-Based Redundancy Resolution for Path Planning of Redundant Manipulators Considering Breakpoints

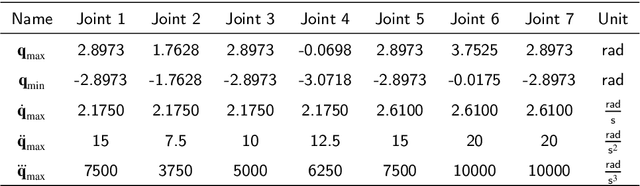

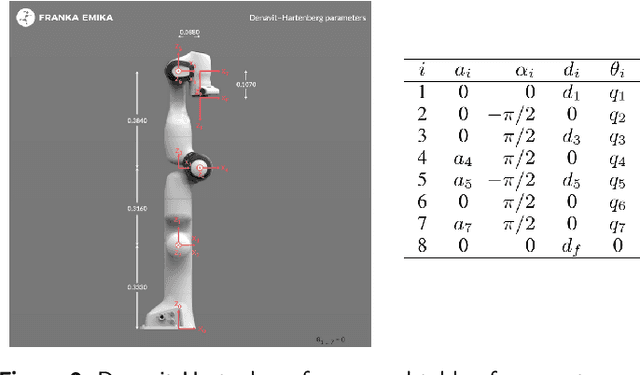

Nov 26, 2024

Abstract: This paper proposes a redundancy resolution algorithm for a redundant manipulator based on dynamic programming. The algorithm computes the desired joint angles at each point on a pre-planned discrete path in Cartesian space, while ensuring that the angles, velocities, and accelerations of each joint do not exceed the manipulator's constraints. We obtain the analytical solution to the inverse kinematics problem of the manipulator using a parameterization method, transforming the redundancy resolution problem into an optimization problem of determining the parameters at each path point. The limits on joint velocity and acceleration serve as constraints for the optimization problem. All feasible inverse kinematic solutions for each pose under the joint angle constraints of the manipulator are then obtained through the parameterization method, and the globally optimal solution is obtained through the dynamic programming algorithm. If no feasible joint-space path satisfying the constraints exists, the proposed algorithm can compute the minimum number of breakpoints required for the path and partition the path with as few breakpoints as possible to facilitate the manipulator's operation along the path. The algorithm can also determine the optimal selection of breakpoints to minimize the global cost function, rather than simply interrupting when the manipulator is unable to continue operating. The proposed algorithm is tested using a manipulator produced by a certain manufacturer, demonstrating the effectiveness of the algorithm.
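The dynamic programming step described in this abstract can be illustrated with a minimal sketch. It is not the paper's implementation: the function name `resolve_redundancy`, the squared joint-displacement cost, and the single per-step joint limit `max_step` (a stand-in for a velocity constraint) are all assumptions made for illustration. Acceleration constraints and breakpoint handling are left out; handling acceleration would require a state that also remembers the previous step.

```python
import math

def resolve_redundancy(candidates, max_step):
    """Pick one joint vector per path point by dynamic programming.

    candidates[i] is a list of feasible joint vectors (tuples) at path
    point i, e.g. one per sampled value of a redundancy parameter.
    max_step is an assumed limit on the change of any joint between
    adjacent path points. Returns (total_cost, indices) or None when
    no sequence satisfies the limit.
    """
    cost = [0.0] * len(candidates[0])   # best cost to reach each candidate
    back = []                           # back-pointers, one list per stage
    for i in range(1, len(candidates)):
        new_cost = [math.inf] * len(candidates[i])
        pointers = [-1] * len(candidates[i])
        for k, q in enumerate(candidates[i]):
            for j, p in enumerate(candidates[i - 1]):
                if cost[j] == math.inf:
                    continue            # predecessor is unreachable
                if max(abs(a - b) for a, b in zip(p, q)) > max_step:
                    continue            # step violates the joint limit
                c = cost[j] + sum((a - b) ** 2 for a, b in zip(p, q))
                if c < new_cost[k]:
                    new_cost[k], pointers[k] = c, j
        cost = new_cost
        back.append(pointers)
    best = min(range(len(cost)), key=cost.__getitem__)
    if cost[best] == math.inf:
        return None                     # no feasible joint-space path
    path = [best]
    for pointers in reversed(back):     # walk the back-pointers to the start
        path.append(pointers[path[-1]])
    path.reverse()
    return cost[best], path

# Toy one-joint example with two candidate branches per path point.
toy = [[(0.0,), (1.0,)], [(0.1,), (1.1,)], [(0.2,), (2.0,)]]
print(resolve_redundancy(toy, max_step=0.5))
```

A `None` result corresponds to the case the abstract handles with breakpoints: the path cannot be traversed in one piece under the given limits.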

LSM-YOLO: A Compact and Effective ROI Detector for Medical Detection

Aug 26, 2024

Abstract: Existing medical Region of Interest (ROI) detection lacks an algorithm that delivers both real-time performance and accuracy, so it fails to meet the growing demand for automatic detection in medicine. Although the basic YOLO framework ensures real-time detection due to its fast speed, it still faces challenges in maintaining precision at the same time. To alleviate these problems, we propose a novel model named Lightweight Shunt Matching-YOLO (LSM-YOLO), with Lightweight Adaptive Extraction (LAE) and Multipath Shunt Feature Matching (MSFM). First, by using LAE to refine feature extraction, the model can obtain more contextual information and high-resolution details from multiscale feature maps, thereby extracting detailed features of ROI in medical images while reducing the influence of noise. Second, MSFM is used to further refine the fusion of high-level semantic features and low-level visual features, enabling better fusion between ROI features and neighboring features, thereby improving the detection rate for better diagnostic assistance. Experimental results demonstrate that LSM-YOLO achieves 48.6% AP on a private dataset of pancreatic tumors, 65.1% AP on the BCCD blood cell detection public dataset, and 73.0% AP on the Br35h brain tumor detection public dataset. Our model achieves state-of-the-art performance with minimal parameter cost on the above three datasets. The source code is available at: https://github.com/VincentYuuuuuu/LSM-YOLO.

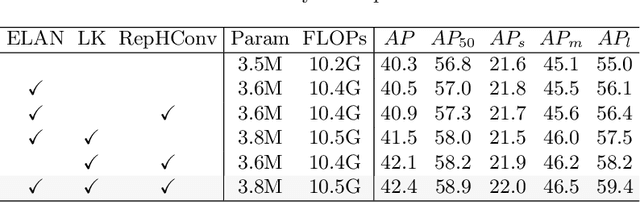

Multi-Branch Auxiliary Fusion YOLO with Re-parameterization Heterogeneous Convolutional for accurate object detection

Jul 05, 2024

Abstract: Due to the effective performance of multi-scale feature fusion, Path Aggregation FPN (PAFPN) is widely employed in YOLO detectors. However, it cannot efficiently and adaptively integrate high-level semantic information with low-level spatial information simultaneously. We propose a new model named MAF-YOLO in this paper, which is a novel object detection framework with a versatile neck named Multi-Branch Auxiliary FPN (MAFPN). Within MAFPN, the Superficial Assisted Fusion (SAF) module is designed to combine the output of the backbone with the neck, preserving an optimal level of shallow information to facilitate subsequent learning. Meanwhile, the Advanced Assisted Fusion (AAF) module, deeply embedded within the neck, conveys a more diverse range of gradient information to the output layer. Furthermore, our proposed Re-parameterized Heterogeneous Efficient Layer Aggregation Network (RepHELAN) module ensures that both the overall model architecture and the convolutional design make use of heterogeneous large convolution kernels. This preserves information related to small targets while simultaneously achieving a multi-scale receptive field. Finally, taking the nano version of MAF-YOLO as an example, it achieves 42.4% AP on COCO with only 3.76M learnable parameters and 10.51G FLOPs, outperforming YOLOv8n by about 5.1%. The source code of this work is available at: https://github.com/yang-0201/MAF-YOLO.

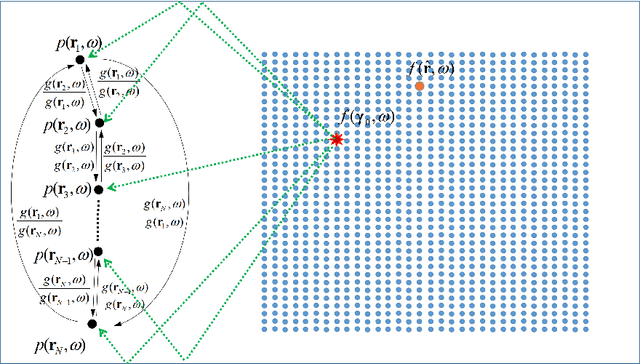

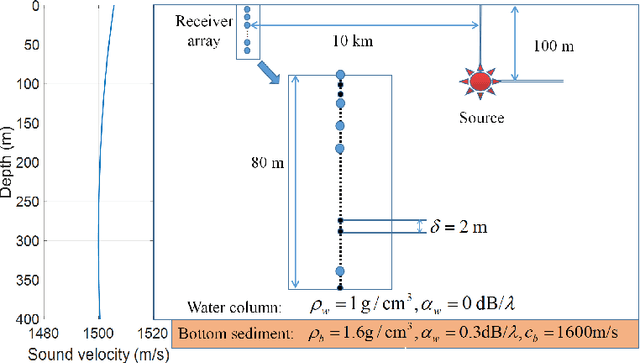

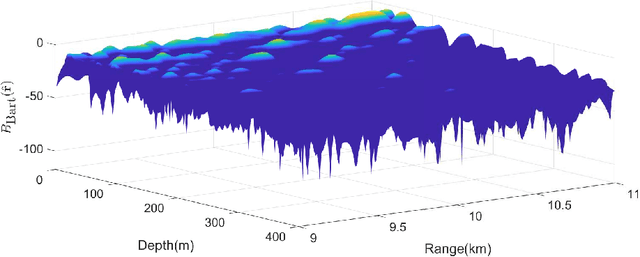

Graph-based Matched Field Localization for an Underwater Source

Jan 18, 2021

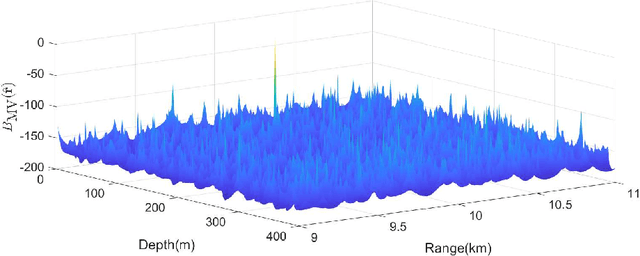

Abstract: Matched Field Processing (MFP) locates underwater sources by matching the received data with replica vectors, and can be regarded as a generalized beamformer. In this paper, the MFP method is combined with a recently developed framework, Graph Signal Processing (GSP). Following the paradigm of GSP, a spatial adjacency matrix is constructed for the arbitrarily distributed sensors based on the Green's function, and the source is then located by utilizing the graph Fourier transform. The simulation results illustrate that the graph-based MFP outperforms the conventional MFP processors (the Bartlett processor and the Minimum Variance processor) in accuracy and robustness.
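For orientation, the conventional Bartlett processor that this abstract uses as a baseline can be sketched as follows. This is only the baseline, not the graph-based method the paper proposes. The free-space Green's function `exp(-jkr)/r`, the single noise-free snapshot, and the helper names `replica`, `bartlett_power`, and `locate` are assumptions for illustration; practical MFP would use a waveguide propagation model.

```python
import cmath
import math

def replica(sensors, source, k):
    """Unit-norm replica vector from an assumed free-space Green's function."""
    g = []
    for s in sensors:
        r = math.dist(s, source)            # sensor-to-source distance
        g.append(cmath.exp(-1j * k * r) / r)
    norm = math.sqrt(sum(abs(x) ** 2 for x in g))
    return [x / norm for x in g]

def bartlett_power(data, w):
    """Bartlett output |w^H d|^2 for a single snapshot d."""
    return abs(sum(wi.conjugate() * di for wi, di in zip(w, data))) ** 2

def locate(sensors, data, grid, k):
    """Return the grid point whose replica best matches the data."""
    return max(grid, key=lambda p: bartlett_power(data, replica(sensors, p, k)))

# Hypothetical geometry: vertical array at range 0, (range, depth) in metres.
sensors = [(0.0, float(z)) for z in range(10, 90, 10)]
k = 2 * math.pi * 100.0 / 1500.0            # wavenumber for 100 Hz, c = 1500 m/s
grid = [(r, z) for r in (500.0, 1000.0, 1500.0) for z in (20.0, 50.0, 80.0)]
data = replica(sensors, (1000.0, 50.0), k)  # noise-free field from the true source
print(locate(sensors, data, grid, k))
```

Because each replica has unit norm, the Cauchy-Schwarz inequality bounds the output by the data energy, and the bound is reached only when the replica is parallel to the data, which is why the peak marks the matching location in the noise-free case.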
