Idan Refaeli

Proof Minimization in Neural Network Verification

Nov 11, 2025

Abstract:The widespread adoption of deep neural networks (DNNs) requires efficient techniques for verifying their safety. DNN verifiers are complex tools, which might contain bugs that could compromise their soundness and undermine the reliability of the verification process. This concern can be mitigated using proofs: artifacts that are checkable by an external and reliable proof checker, and which attest to the correctness of the verification process. However, such proofs tend to be extremely large, limiting their use in many scenarios. In this work, we address this problem by minimizing proofs of unsatisfiability produced by DNN verifiers. We present algorithms that remove facts which were learned during the verification process, but which are unnecessary for the proof itself. Conceptually, our method analyzes the dependencies among facts used to deduce UNSAT, and removes facts that did not contribute. We then further minimize the proof by eliminating remaining unnecessary dependencies, using two alternative procedures. We implemented our algorithms on top of a proof producing DNN verifier, and evaluated them across several benchmarks. Our results show that our best-performing algorithm reduces proof size by 37%-82% and proof checking time by 30%-88%, while introducing a runtime overhead of 7%-20% to the verification process itself.
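The dependency analysis described in this abstract can be pictured as a backward reachability pass over a graph of learned facts. The sketch below is only an illustration under assumed names (`prune_unused_facts`, the fact labels, and the dictionary encoding are all hypothetical); it is not the paper's algorithm or any verifier's actual data structure.

```python
from collections import deque


def prune_unused_facts(dependencies, conflict):
    """Return the set of facts that the derivation of `conflict` relies on.

    `dependencies` maps each learned fact to the facts it was derived from
    (a hypothetical encoding chosen for this sketch). Facts absent from the
    mapping are treated as axioms with no dependencies.
    """
    needed = set()
    queue = deque([conflict])
    while queue:
        fact = queue.popleft()
        if fact in needed:
            continue
        needed.add(fact)
        # Walk backwards to everything this fact was derived from.
        queue.extend(dependencies.get(fact, ()))
    return needed


# Toy proof: "unsat" follows from f3 and f4; f5 and f6 were learned but unused.
deps = {
    "unsat": ["f3", "f4"],
    "f3": ["f1"],
    "f4": ["f1", "f2"],
    "f5": ["f2"],
    "f6": ["f5"],
}
kept = prune_unused_facts(deps, "unsat")
removed = set(deps) - kept
```

In this toy instance the facts `f5` and `f6` never feed into the derivation of `unsat`, so they are the ones a minimizer of this kind could drop.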

Proof-Driven Clause Learning in Neural Network Verification

Mar 15, 2025

Abstract:The widespread adoption of deep neural networks (DNNs) requires efficient techniques for safety verification. Existing methods struggle to scale to real-world DNNs, and tremendous efforts are being put into improving their scalability. In this work, we propose an approach for improving the scalability of DNN verifiers using Conflict-Driven Clause Learning (CDCL) -- an approach that has proven highly successful in SAT and SMT solving. We present a novel algorithm for deriving conflict clauses using UNSAT proofs, and propose several optimizations for expediting it. Our approach allows a modular integration of SAT solvers and DNN verifiers, and we implement it on top of an interface designed for this purpose. The evaluation of our implementation over several benchmarks suggests a 2X--3X improvement over a similar approach, with specific cases outperforming the state of the art.

Marabou 2.0: A Versatile Formal Analyzer of Neural Networks

Jan 25, 2024

Abstract:This paper serves as a comprehensive system description of version 2.0 of the Marabou framework for formal analysis of neural networks. We discuss the tool's architectural design and highlight the major features and components introduced since its initial release.

DEM: A Method for Certifying Deep Neural Network Classifier Outputs in Aerospace

Jan 04, 2024

Abstract:Software development in the aerospace domain requires adhering to strict, high-quality standards. While there exist regulatory guidelines for commercial software in this domain (e.g., ARP-4754 and DO-178), these do not apply to software with deep neural network (DNN) components. Consequently, it is unclear how to allow aerospace systems to benefit from the deep learning revolution. Our work here seeks to address this challenge with a novel, output-centric approach for DNN certification. Our method employs statistical verification techniques, and has the key advantage of being able to flag specific inputs for which the DNN's output may be unreliable - so that they may be later inspected by a human expert. To achieve this, our method conducts a statistical analysis of the DNN's predictions for other, nearby inputs, in order to detect inconsistencies. This is in contrast to existing techniques, which typically attempt to certify the entire DNN, as opposed to individual outputs. Our method uses the DNN as a black box, and makes no assumptions about its topology. We hope that this work constitutes another step towards integrating DNNs in safety-critical applications - especially in the aerospace domain, where high standards of quality and reliability are crucial.
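As a rough illustration of the output-centric idea, the sketch below samples inputs near a given point, queries a black-box classifier on each, and flags the point when too few neighbors agree with its label. The function names, the uniform perturbation model, the radius, and the 0.95 threshold are all assumptions made for this example; the abstract does not specify the statistical test that DEM actually uses.

```python
import random


def agreement_rate(classify, x, radius=0.05, samples=200, seed=0):
    """Fraction of randomly perturbed neighbors that receive the same label as x.

    `classify` is treated as a black box; the uniform perturbation within
    `radius` is an assumption of this sketch.
    """
    rng = random.Random(seed)
    label = classify(x)
    agree = 0
    for _ in range(samples):
        neighbor = [xi + rng.uniform(-radius, radius) for xi in x]
        if classify(neighbor) == label:
            agree += 1
    return agree / samples


def flag_unreliable(classify, x, threshold=0.95, **kwargs):
    """Flag x for human inspection when neighbor agreement falls below threshold."""
    return agreement_rate(classify, x, **kwargs) < threshold


def toy_classifier(x):
    # Hypothetical stand-in for a DNN: a linear decision boundary at x0 + x1 = 1.
    return int(x[0] + x[1] > 1.0)


far_from_boundary = [0.1, 0.1]
on_boundary = [0.5, 0.5]
```

With this toy classifier, a point far from the decision boundary has full neighbor agreement, while a point sitting on the boundary does not and would be flagged for inspection.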

Formally Explaining Neural Networks within Reactive Systems

Aug 06, 2023

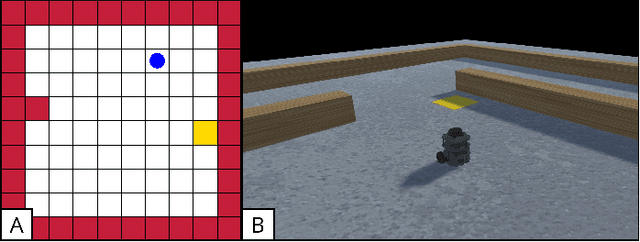

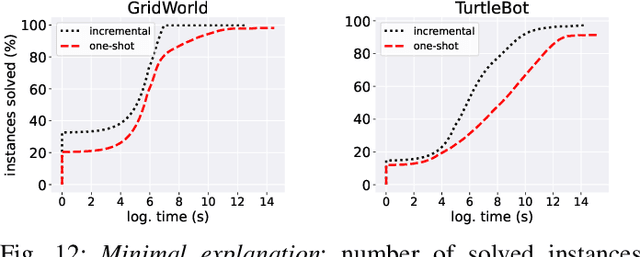

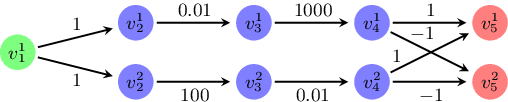

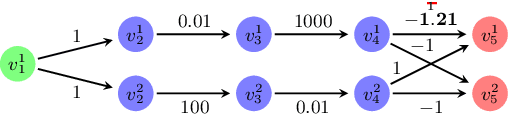

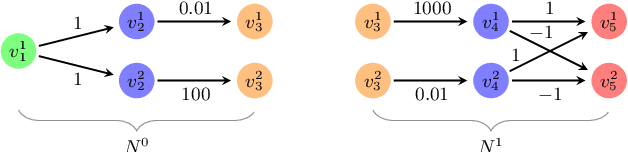

Abstract:Deep neural networks (DNNs) are increasingly being used as controllers in reactive systems. However, DNNs are highly opaque, which renders it difficult to explain and justify their actions. To mitigate this issue, there has been a surge of interest in explainable AI (XAI) techniques, capable of pinpointing the input features that caused the DNN to act as it did. Existing XAI techniques typically face two limitations: (i) they are heuristic, and do not provide formal guarantees that the explanations are correct; and (ii) they often apply to "one-shot" systems, where the DNN is invoked independently of past invocations, as opposed to reactive systems. Here, we begin bridging this gap, and propose a formal DNN-verification-based XAI technique for reasoning about multi-step, reactive systems. We suggest methods for efficiently calculating succinct explanations, by exploiting the system's transition constraints in order to curtail the search space explored by the underlying verifier. We evaluate our approach on two popular benchmarks from the domain of automated navigation, and observe that our methods allow the efficient computation of minimal and minimum explanations, significantly outperforming the state of the art. We also demonstrate that our methods produce formal explanations that are more reliable than competing, non-verification-based XAI techniques.

veriFIRE: Verifying an Industrial, Learning-Based Wildfire Detection System

Dec 06, 2022

Abstract:In this short paper, we present our ongoing work on the veriFIRE project -- a collaboration between industry and academia, aimed at using verification for increasing the reliability of a real-world, safety-critical system. The system we target is an airborne platform for wildfire detection, which incorporates two deep neural networks. We describe the system and its properties of interest, and discuss our attempts to verify the system's consistency, i.e., its ability to continue to correctly classify a given input, even if the wildfire it describes increases in intensity. We regard this work as a step towards the incorporation of academic-oriented verification tools into real-world systems of interest.

Minimal Multi-Layer Modifications of Deep Neural Networks

Oct 20, 2021

Abstract:Deep neural networks (DNNs) have become increasingly popular in recent years. However, despite their many successes, DNNs may also err and produce incorrect and potentially fatal outputs in safety-critical settings, such as autonomous driving, medical diagnosis, and airborne collision avoidance systems. Much work has been put into detecting such erroneous behavior in DNNs, e.g., via testing or verification, but removing these errors after their detection has received less attention. We present here a new tool, called 3M-DNN, for repairing a given DNN, which is known to err on some set of inputs. The novel repair procedure implemented in 3M-DNN computes a modification to the network's weights that corrects its behavior, and attempts to minimize this change via a sequence of calls to a backend, black-box DNN verification engine. To the best of our knowledge, our method is the first one that allows repairing the network by simultaneously modifying multiple layers. This is achieved by splitting the network into sub-networks, and applying a single-layer repairing technique to each component. We evaluated the 3M-DNN tool on an extensive set of benchmarks, obtaining promising results.
